Branded drugs generating $356 billion in worldwide sales face patent expiration between 2023 and 2028. Every one of those assets has a Loss of Exclusivity date that someone — a generic manufacturer, a biosimilar developer, an activist investor — is tracking more precisely than the brand holder thinks. The question is whether that brand holder is using the same quality of intelligence to defend those assets.

The honest answer, for most organizations still running keyword searches on USPTO’s public portal or relying on annual FTO audits, is no.

This guide covers the full architecture of AI-driven pharmaceutical patent intelligence: why the traditional approach fails on a structural level, exactly how machine learning, NLP, and generative AI address each failure point, and what a genuinely predictive IP function looks like when the technology is correctly integrated. It is written for IP teams, portfolio managers, R&D leads, and the institutional investors who care about what happens to blockbuster revenue when exclusivity ends.

Part I: The Economics of Pharmaceutical IP — Why Patent Analysis Is a Board-Level Function

The 20-Year Illusion: Effective Patent Life vs. Statutory Term

A pharmaceutical patent has a statutory life of 20 years from the filing date. That number is almost meaningless as a proxy for commercial exclusivity.

By the time a drug reaches market, the clock has already consumed the majority of that term. Discovery and preclinical work absorbs 4 to 7 years. Phase I through Phase III clinical trials consume another 6 to 8 years. FDA review under a standard NDA pathway adds 10 to 12 months, sometimes longer. Companies file composition-of-matter patents on novel molecular entities early — frequently at the candidate selection stage, before a single human has received the compound. The math is straightforward and brutal: a typical small-molecule drug enters the market with 7 to 12 years of effective patent life remaining. Gene therapy programs, which have longer development timelines, sometimes arrive with even less.

The mechanism for recovering some of that lost time is the patent term extension (PTE) under 35 U.S.C. § 156, which restores part of the term consumed by clinical testing and FDA review (half of the testing phase plus all of the approval phase), capped at five years and subject to a limit of 14 years of remaining patent term after approval. Even with a maximum PTE applied, the recoupment window remains compressed. A drug that cost $2 billion and took 12 years to develop might have 9 years to generate returns before generic entry.
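The § 156 arithmetic can be sketched in a few lines. This is a simplified illustration, assuming every period is measured in years, the whole regulatory review period falls after the patent issued, and no due-diligence reductions apply; the function name and the example inputs are hypothetical.

```python
def patent_term_extension(testing_years, approval_years, years_left_at_approval):
    """Return (pte_years, effective_years_after_approval) under simplified § 156 rules."""
    # Half of the testing phase (IND to NDA submission) plus all of the
    # approval phase (NDA submission to approval) is eligible for restoration.
    raw = 0.5 * testing_years + approval_years
    # The extension itself is capped at five years.
    pte = min(raw, 5.0)
    # The extended term may not run more than 14 years past approval.
    pte = max(0.0, min(pte, 14.0 - years_left_at_approval))
    return pte, years_left_at_approval + pte

# Hypothetical drug: 7 years of testing, 1 year of FDA review,
# 8 years of patent term left on the approval date.
pte, effective = patent_term_extension(7.0, 1.0, 8.0)
print(f"PTE: {pte:.1f} years; effective post-approval term: {effective:.1f} years")
```

In this example the raw extension (4.5 years) falls under both caps; with 12 or more years of term left at approval, the 14-year limit, not the five-year cap, is what binds.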

That 9-year window defines everything downstream: pricing strategy, rebate structure with PBMs, clinical publication timing, label expansion efforts, and the aggressiveness of the lifecycle management program.

IP Valuation as a Core Asset: Quantifying What the Patent Fortress Is Actually Worth

For portfolio managers and investment analysts, the patent estate of a pharmaceutical company is not a legal footnote; it is the primary determinant of revenue visibility. A composition-of-matter patent on a $5 billion-per-year drug, with eight years of exclusivity remaining and no credible Paragraph IV filer in the queue, has a calculable net present value that belongs in the company’s valuation alongside pipeline assets.

Standard IP valuation methodologies in pharma use income-based approaches: the relief-from-royalty method (estimating what the company would pay to license the IP if it did not own it) and the multi-period excess earnings method (isolating the cash flows attributable specifically to the patented technology). In both cases, the critical inputs are exclusivity duration, probability of litigation success, probability of FDA approval for any in-development lifecycle extension, and the price erosion curve upon generic entry.
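A minimal relief-from-royalty sketch makes the income approach concrete: the IP is worth the after-tax royalties the owner avoids paying over the remaining exclusivity period, discounted to today. The royalty rate, tax rate, discount rate, and revenue projection below are hypothetical placeholders, not market benchmarks.

```python
def relief_from_royalty(revenues, royalty_rate, tax_rate, discount_rate):
    """revenues: projected revenue for each remaining year of exclusivity (year 1 first)."""
    value = 0.0
    for year, revenue in enumerate(revenues, start=1):
        # Royalty the owner would otherwise pay, net of the tax deduction it would earn.
        after_tax_royalty = revenue * royalty_rate * (1 - tax_rate)
        value += after_tax_royalty / (1 + discount_rate) ** year
    return value

# Hypothetical: $5B/year for 8 remaining years, 10% royalty, 21% tax, 9% discount rate.
value = relief_from_royalty([5e9] * 8, 0.10, 0.21, 0.09)
print(f"Relief-from-royalty value: ${value / 1e9:.2f}B")
```

Shortening the revenue list (an earlier LOE) or eroding its later entries is how exclusivity duration and litigation risk flow directly into this number.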

Generic entry dynamics are well documented. In highly competitive markets, brand revenue falls 80 to 90 percent within 12 months of first generic launch. Where only one or two generic manufacturers enter, often because the API manufacturing process is complex, because the first Paragraph IV filer holds 180-day exclusivity, or because the challenge was settled under a reverse payment agreement, erosion is slower. An AI system that can forecast which of these scenarios applies to a given drug, and with what probability distribution, produces IP valuations that are materially more accurate than ones built on static assumptions.

Key Takeaways: Part I

The effective patent life for most drugs is 7 to 12 years, not 20. IP valuation built on statutory terms without adjustments for PTE, regulatory exclusivity, and litigation probability systematically misstates asset value. AI-driven forecasting of LOE dates and litigation outcomes directly improves the accuracy of those valuations.

Investment Strategy Note

Analysts valuing pharma companies should treat the AI-derived LOE probability distribution, not just the nominal expiry date, as the input for DCF modeling. Consider a drug whose primary patent expires in 2028 but whose secondary patents, if upheld, extend exclusivity to 2031. If those patents have a 70% probability of surviving a Paragraph IV challenge, the drug has a materially different NPV than if that probability is 30%. Platforms that output probabilistic LOE scenarios, rather than single-date estimates, give institutional investors a structurally better model.
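The 70% versus 30% comparison can be sketched as an expected NPV over a two-point LOE distribution. Everything numeric here is a hypothetical assumption: flat $5 billion annual revenue, a 2025 to 2035 valuation window, an 85% step-down in the first year of generic entry (mid-range of the erosion figures cited earlier), and a 10% discount rate.

```python
def npv(cash_flows, rate):
    """Discount end-of-year cash flows; the first element is year 1."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows, start=1))

def revenue_path(loe_year, start_year=2025, end_year=2035, peak=5e9, erosion=0.85):
    """Flat brand revenue until LOE, then a single step down (a simplification)."""
    return [peak if year < loe_year else peak * (1 - erosion)
            for year in range(start_year, end_year + 1)]

def expected_npv(loe_distribution, rate=0.10):
    """loe_distribution maps LOE year -> probability; probabilities must sum to 1."""
    if abs(sum(loe_distribution.values()) - 1.0) > 1e-9:
        raise ValueError("LOE probabilities must sum to 1")
    return sum(p * npv(revenue_path(year), rate) for year, p in loe_distribution.items())

# Secondary patents hold to 2031 with probability p; otherwise generics enter in 2028.
strong = expected_npv({2031: 0.70, 2028: 0.30})
weak = expected_npv({2031: 0.30, 2028: 0.70})
print(f"70% survival: ${strong / 1e9:.1f}B vs 30% survival: ${weak / 1e9:.1f}B")
```

Both drugs share the same nominal 2028 expiry; only the probability weighting separates their values, which is the point of feeding the distribution rather than a single date into the DCF.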

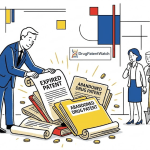

Part II: Lifecycle Management — The Evergreening Toolkit, Annotated

Composition-of-Matter Patents and the Primary IP Anchor

The composition-of-matter patent, protecting the active pharmaceutical ingredient itself, is the highest-value asset in any pharma company’s portfolio. When Pfizer’s atorvastatin (Lipitor) patents expired in November 2011, brand revenue fell from roughly $9.6 billion annually to a fraction of that figure within 18 months. Those are the stakes of a composition-of-matter expiration in a high-volume therapeutic area.

The entire lifecycle management apparatus exists to extend commercial exclusivity beyond that anchor patent. Each strategy generates its own IP, with its own valuation and its own vulnerability to challenge.

Formulation Patents: IP Valuation and Litigation Track Record

Extended-release formulations represent one of the most frequently deployed evergreening mechanisms. The IP claim rests on a specific release profile, polymeric matrix, or coating technology that produces a clinical benefit — longer duration of action, reduced peak-trough variability, or an improved side-effect profile. The patent must claim more than a trivial reformulation of the known compound; it must demonstrate unexpected results or a non-obvious manufacturing innovation.

Eli Lilly’s once-weekly delayed-release fluoxetine (Prozac Weekly) is a textbook case. When Prozac’s composition-of-matter patents expired in 2001, Lilly had already secured patents on the weekly formulation. The IP value of those formulation patents was limited, since generic manufacturers challenged and worked around them quickly, but they provided a bridging period during which Lilly could shift patients willing to accept the once-weekly regimen. Bristol-Myers Squibb executed a similar play with Glucophage XR (extended-release metformin), though the commercial impact was moderated by the low cost of manufacturing immediate-release generic metformin.

The IP valuation lesson is that formulation patents have lower expected litigation survival rates than composition-of-matter patents. They are more vulnerable to anticipation arguments (the formulation technology was known), obviousness challenges (a PHOSITA would have tried this approach), and enablement challenges (the specification does not teach how to achieve the claimed release profile across the full range of doses). An AI litigation prediction model trained on ANDA challenge outcomes — where formulation patents are contested in Paragraph IV proceedings far more often than composition-of-matter patents — can quantify this valuation discount.
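The base-rate layer of such a model can be sketched as per-category survival rates with a Beta(1, 1) prior, so that sparsely litigated categories are not reported as 0% or 100%. The outcome counts below are hypothetical placeholders, not real Paragraph IV statistics.

```python
def smoothed_survival(upheld, challenged, alpha=1.0, beta=1.0):
    """Posterior mean of a Beta-Binomial model of patent survival under challenge."""
    return (upheld + alpha) / (challenged + alpha + beta)

hypothetical_outcomes = {          # patent type: (upheld, challenged)
    "composition_of_matter": (18, 24),
    "formulation": (20, 60),
    "polymorph": (3, 10),
}

com_rate = smoothed_survival(*hypothetical_outcomes["composition_of_matter"])
for kind, (upheld, challenged) in hypothetical_outcomes.items():
    rate = smoothed_survival(upheld, challenged)
    # Valuation discount relative to a composition-of-matter patent.
    print(f"{kind:22s} survival {rate:.2f}  discount vs CoM {1 - rate / com_rate:.0%}")
```

A production model would condition on far more than patent type, but even this crude prior turns "formulation patents are weaker" into a number a valuation can use.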

Method-of-Use Patents: New Indications as IP Extension

Merck’s finasteride program demonstrates the method-of-use strategy at its most commercially elegant. Finasteride was first approved at 5 mg for benign prostatic hyperplasia as Proscar (1992). Merck identified that the same molecule at 1 mg suppressed scalp dihydrotestosterone levels sufficiently to treat androgenetic alopecia, filed new method-of-use patents on that specific indication and dose, and launched Propecia (1997). The IP covering the hair-loss use was separate from, and expired later than, the BPH patents — providing years of additional exclusivity for what was, chemically, the same molecule at a lower dose.

The IP valuation of method-of-use patents depends heavily on the carve-out strategy available to generic manufacturers. Under FDA regulations, a generic can file a section viii statement and seek approval for only the non-patented indications on the brand’s label, a practice called skinny labeling. If the new indication drives the majority of the drug’s prescriptions, skinny labeling limits generic manufacturers’ market opportunity and preserves brand revenue. If the new indication accounts for a small share of use, a generic with a skinny label captures most of the market anyway. AI platforms that integrate prescription data with patent and regulatory status can model this dynamic and produce indication-level revenue forecasts.
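The indication-level logic can be sketched as a weighted sum over prescription shares. The assumptions are loud and hypothetical: prescriptions are cleanly attributable to indications, pharmacy-level generic substitution for carved-out uses is ignored (in practice it erodes this protection), and the generic captures a fixed 85% of any indication it may label.

```python
def brand_retained_share(indication_share, patented, generic_capture=0.85):
    """indication_share: {indication: fraction of prescriptions}, summing to 1."""
    retained = 0.0
    for indication, share in indication_share.items():
        if indication in patented:
            retained += share                          # carved out: brand keeps it
        else:
            retained += share * (1 - generic_capture)  # open to skinny-label generics
    return retained

# New patented indication drives most use vs. only a small share of use.
print(brand_retained_share({"new": 0.7, "old": 0.3}, {"new"}))
print(brand_retained_share({"new": 0.1, "old": 0.9}, {"new"}))
```

The same patent protects roughly three-quarters of volume in the first case and under a quarter in the second, which is why method-of-use IP has to be valued indication by indication.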

Polymorphs and Chiral Switches: The Crystal Form Gambit

A pharmaceutical compound can exist in multiple crystalline arrangements — polymorphs — each with potentially different solubility, bioavailability, and stability characteristics. Polymorph patents protect the specific crystal form used in the commercial product, extending IP coverage beyond the original compound patent.

AstraZeneca’s transition from omeprazole (Prilosec) to esomeprazole (Nexium) is the most cited example of the chiral switch strategy. Omeprazole is a racemic mixture of R- and S-enantiomers; esomeprazole is the pure S-enantiomer. AstraZeneca filed composition-of-matter patents on esomeprazole, arguing it had superior pharmacokinetics. The strategy was commercially successful — Nexium became one of the highest-grossing drugs in history — but the IP was aggressively challenged. The Federal Circuit ultimately found some of the Nexium-related patents valid after protracted litigation. The IP valuation at the time of launch was contested precisely because the novelty and non-obviousness of isolating a known enantiomer was legally uncertain.

For R&D teams evaluating chiral switch opportunities, the analytical question is whether the single enantiomer demonstrates a clinically meaningful difference from the racemate — in PK profile, efficacy, or tolerability. Patents claiming a chiral switch without compelling clinical differentiation carry elevated invalidity risk. AI models trained on polymorph and enantiomer patent prosecution histories and challenge outcomes can score that risk before the program advances.

Patent Thickets: Building the Fortress, Layer by Layer

The patent thicket strategy produces the most complex IP valuation scenarios. A single blockbuster drug may have dozens or hundreds of patents listed in the FDA’s Orange Book (for biologics, the Purple Book) or maintained in the company’s portfolio, covering formulations, dosing regimens, manufacturing processes, packaging, and metabolite forms. AbbVie’s adalimumab (Humira) program is the most documented modern example: at peak, Humira was protected by more than 130 U.S. patents, many of which were secondary patents filed years after the original biologic application.

The IP valuation of a thicket is not additive. A portfolio manager cannot sum the expected exclusivity value of 130 individual patents. The relevant question is which patents represent genuine barriers to market entry and which are litigation deterrents whose primary function is cost and delay. Generic and biosimilar manufacturers have become sophisticated at identifying which claims in a thicket are most vulnerable to inter partes review (IPR) at the PTAB or Paragraph IV challenge in district court. AI platforms that have indexed PTAB institution rates, claim-type survival rates, and the litigation history of specific law firms and examiners can disaggregate a thicket and assign each patent a probability of surviving challenge. Combined with each patent’s expiry date, those probabilities give the likelihood that exclusivity persists in each future year; the revenue that likelihood supports, discounted to present value, is the actual IP asset value.
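A minimal sketch of that disaggregation, under two simplifying assumptions: each patent is an independent, genuine barrier with the stated survival probability, and exclusivity lasts while at least one surviving patent is still in force. The thicket below is hypothetical.

```python
def exclusivity_probability(patents, year):
    """patents: list of (expiry_year, survival_probability).

    A patent is treated as in force through the year before its expiry year.
    Exclusivity holds in `year` unless every in-force patent is invalidated.
    """
    p_all_fail = 1.0
    for expiry, p_survive in patents:
        if expiry > year:
            p_all_fail *= (1 - p_survive)
    return 1 - p_all_fail

def expected_exclusive_years(patents, start_year, horizon):
    """Sum of yearly exclusivity probabilities over the horizon."""
    return sum(exclusivity_probability(patents, y)
               for y in range(start_year, start_year + horizon))

# Hypothetical thicket: a strong core patent plus weaker secondary patents.
thicket = [(2027, 0.90), (2030, 0.40), (2032, 0.25), (2034, 0.15)]
for year in (2026, 2028, 2031, 2033):
    print(year, round(exclusivity_probability(thicket, year), 3))
print("expected exclusive years:", round(expected_exclusive_years(thicket, 2025, 12), 2))
```

Multiplying each year's probability by projected brand revenue and discounting gives the probability-weighted asset value; the independence assumption is the first thing a real model would relax, since challenges to related patents often succeed or fail together.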

Regulatory Exclusivity: The Parallel Track

Patent protection and regulatory exclusivity operate independently and must be analyzed together. Under the Hatch-Waxman framework, a New Chemical Entity (NCE) receives five years of regulatory exclusivity during which FDA cannot accept an ANDA (four years if the ANDA contains a Paragraph IV certification). A Biologics License Application for a novel biologic receives 12 years of reference product exclusivity under the BPCIA, during the first four of which FDA cannot accept a biosimilar application. Orphan drug designation can add seven years of orphan drug exclusivity (ODE) for the approved orphan indication. Pediatric exclusivity, triggered by completing FDA-requested pediatric studies, adds six months to the exclusivity periods and, for small-molecule drugs, the listed patent terms in force at the time.

The failure to integrate these tracks is one of the most consequential analytical errors in traditional patent searching. An analyst who identifies that a drug’s composition-of-matter patent expires in 2026 without also noting that its NCE exclusivity runs through 2027 will produce an incorrect LOE forecast. Conversely, an analyst relying only on regulatory exclusivity who fails to identify that a competitor has filed a Paragraph IV certification on a secondary patent, with a court date set for 2025, will overestimate the time remaining on the exclusivity clock.
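Integrating the two tracks can be sketched as taking the latest blocking date across both. This is a small-molecule sketch with hypothetical dates; it assumes no Paragraph IV challenge succeeds and ignores the 30-month stay, 180-day exclusivity, and FDA review time after exclusivity lapses.

```python
import calendar
from datetime import date

def add_months(d, months):
    """Shift a date by whole months, clamping the day for shorter months."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    return date(year, month, min(d.day, calendar.monthrange(year, month)[1]))

def projected_loe(patent_expiries, exclusivity_expiries, pediatric=False):
    """Generic entry is blocked until the last patent AND the last exclusivity lapse."""
    loe = max(list(patent_expiries) + list(exclusivity_expiries))
    # Pediatric exclusivity adds six months to each period; since it shifts
    # every date by the same amount, it shifts the latest one.
    return add_months(loe, 6) if pediatric else loe

# The analyst error described above: patent ends in 2026, NCE exclusivity in 2027.
print(projected_loe([date(2026, 3, 1)], [date(2027, 6, 30)]))
print(projected_loe([date(2026, 3, 1)], [date(2027, 6, 30)], pediatric=True))
```

For biologics the shift rule differs, because pediatric exclusivity attaches to the BPCIA periods but not to patents, so the two lists would need separate handling.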

Key Takeaways: Part II

Each evergreening mechanism generates IP with distinct valuation characteristics and distinct litigation vulnerability profiles. Formulation and polymorph patents carry higher invalidity risk than composition-of-matter patents. Patent thickets require probabilistic disaggregation, not simple counting. Regulatory exclusivity must be integrated with patent data to produce an accurate LOE forecast.

Investment Strategy Note

Analysts assessing evergreening defensibility should model at least three scenarios: base (all Orange Book patents survive challenge), bear (only composition-of-matter and NCE exclusivity hold), and stress (one or more IPRs invalidate secondary patents in the thicket). The probability-weighted average of those revenue curves is more informative than any single LOE date. AI platforms that output these scenarios with empirically derived probabilities are a competitive advantage in this work.
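Probability-weighting the three scenarios into one revenue curve is a few lines of arithmetic. The curves and scenario probabilities below are hypothetical placeholders; in practice they would come from the survival model.

```python
scenarios = {
    # name: (probability, brand revenue in $B for years 1..6)
    "base":   (0.50, [5.0, 5.0, 5.0, 5.0, 5.0, 1.0]),
    "stress": (0.30, [5.0, 5.0, 5.0, 1.0, 0.8, 0.7]),
    "bear":   (0.20, [5.0, 5.0, 1.0, 0.8, 0.7, 0.6]),
}

def weighted_curve(scenarios):
    """Year-by-year expected revenue across mutually exclusive scenarios."""
    total_p = sum(p for p, _ in scenarios.values())
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    n_years = len(next(iter(scenarios.values()))[1])
    return [sum(p * curve[t] for p, curve in scenarios.values()) for t in range(n_years)]

print([round(x, 2) for x in weighted_curve(scenarios)])
```

The weighted curve declines gradually from year 3 rather than falling off a cliff on a single date, which is the shape a DCF should see when LOE is uncertain.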

Part III: Why Traditional Patent Searches Fail — The Structural Failures

The Volume Problem Is Not What You Think

Patent database scale is real — over 100 million documents across more than 100 national and regional patent offices — but raw volume is not the hardest problem. The harder problem is that pharmaceutical patent documents are structurally unlike other text data. Claims language is drafted to define legal boundaries, not to communicate in ordinary English. A single independent claim can span three pages. A Markush structure in a chemical patent claim can theoretically encompass billions of compounds without naming a single one explicitly.

Traditional search tools respond to volume with classification systems: the Cooperative Patent Classification (CPC) and International Patent Classification (IPC) schemes. Both are useful for coarse filtering, but both fail at the margin. The CPC is updated continuously as new technology areas emerge, meaning a search conducted using 2020 classification codes may miss patents filed in a reclassified subcategory. Databases are inconsistently indexed: documents are misfiled, missing, or assigned incorrect classifications at a rate that makes any single classification-based query structurally incomplete.

The Semantic Trap: Where Keyword Search Breaks Down

The foundational failure of keyword search in pharmaceutical patent analysis is the precision-recall trade-off. A broad keyword search on ‘cancer therapy’ retrieves an unmanageable volume of irrelevant documents. A specific keyword search on a particular mechanism or compound name misses all documents that describe the same concept using alternative terminology.

This failure is not a small inconvenience. It is a systematic risk. Consider the synonymy problem: atorvastatin can appear in patents as ‘Lipitor,’ as ‘(3R,5R)-7-[2-(4-fluorophenyl)-3-phenyl-4-(phenylcarbamoyl)-5-propan-2-ylpyrrol-1-yl]-3,5-dihydroxyheptanoic acid’ (the IUPAC name), as the compound’s CAS registry number, or as an internal company project code. A keyword search optimized for any one identifier fails to retrieve patents that use only the others.
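The synonymy problem can be made measurable with precision and recall on a toy corpus. The documents and relevance labels are invented for illustration, and "pd-xxxxx" is a hypothetical stand-in for an internal project code; a fuller identifier table would also carry the CAS registry number.

```python
IUPAC = ("(3R,5R)-7-[2-(4-fluorophenyl)-3-phenyl-4-(phenylcarbamoyl)"
         "-5-propan-2-ylpyrrol-1-yl]-3,5-dihydroxyheptanoic acid")

# Identifier table for one compound (project code is a hypothetical placeholder).
ATORVASTATIN_IDS = {"atorvastatin", "lipitor", IUPAC.lower(), "pd-xxxxx"}

docs = {
    "D1": "A tablet comprising Lipitor and a stabilizer",
    "D2": "Crystalline forms of atorvastatin calcium",
    "D3": f"Salts of {IUPAC}",
    "D4": "Compound PD-XXXXX for lowering LDL cholesterol",
    "D5": "Rosuvastatin calcium tablets",
}
relevant = {"D1", "D2", "D3", "D4"}

def search(terms):
    """Case-insensitive substring match on any of the query terms."""
    return {doc_id for doc_id, text in docs.items()
            if any(term in text.lower() for term in terms)}

def precision_recall(retrieved, relevant):
    hits = len(retrieved & relevant)
    precision = hits / len(retrieved) if retrieved else 0.0
    return precision, hits / len(relevant)

print(precision_recall(search({"atorvastatin"}), relevant))   # single identifier
print(precision_recall(search(ATORVASTATIN_IDS), relevant))   # synonym-expanded
```

The single-term query retrieves only one of four relevant documents. Synonym expansion fixes recall here only because the table is complete; the NLP methods described later aim to remove the dependency on hand-built tables.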

For biologics, the problem is worse. Protein and nucleic acid sequences, the actual inventive content of a biologic patent, are frequently disclosed in patent specifications as amino acid strings, gene sequences, or tables, and, especially in older documents, they are not reliably captured in the standardized sequence listings that databases index separately. A keyword search cannot establish whether a patent covers a specific anti-PD-L1 monoclonal antibody if the sequence is embedded in a figure and the claims use a functional definition rather than the sequence itself.

The Markush structure problem is its own category. Patent claims on chemical compounds routinely use Markush language to claim a genus of compounds defined by a core scaffold and variable substituent groups. A single Markush claim may encompass 10 million distinct molecules. No keyword search identifies whether a specific candidate compound falls within a Markush structure — that requires chemical structure search capabilities operating on machine-readable structural data.
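The size of a Markush genus is the product of the options at each variable position, which is how a single claim reaches the 10-million range. The option counts below are hypothetical.

```python
from math import prod

# Hypothetical number of alternatives the claim allows at each variable position.
r_group_options = {"R1": 40, "R2": 25, "R3": 50, "R4": 10, "X": 20}

genus_size = prod(r_group_options.values())
print(f"{genus_size:,} distinct compounds from one claim")
```

None of these compounds needs to be named in the text, so deciding whether a given candidate sits inside the genus requires matching its structure against each position, not searching for its name.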

The Cost Reality of Manual FTO Analysis

A basic patentability search from a professional firm costs $500 to $2,000. A thorough FTO search for a late-stage pharmaceutical product, covering all major markets and all relevant patent families, runs $3,000 to $10,000 or more. The total cost to prosecute a single U.S. utility patent — including drafting, filing fees, office action responses, and appeals — routinely exceeds $50,000. For a company with a deep pipeline across multiple therapeutic areas, these per-search costs compound into a significant drag.

The cost of relying on free public databases such as Google Patents or the USPTO’s public search system is not measured by the subscription fee avoided. It is the unquantified risk that the search misses critical prior art or an in-force blocking patent. A blocking patent discovered only after launch triggers litigation exposure that can run into hundreds of millions of dollars. The cost-benefit case for professional search quality is straightforward; the problem is that the cost of a missed patent is probabilistic and therefore systematically underweighted in budget discussions.
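Putting the probabilistic term into the budget comparison is simple arithmetic. Every input below (exposure, miss rates, fee) is a hypothetical placeholder chosen only to show the structure of the calculation.

```python
def expected_cost(search_fee, p_miss, exposure):
    """Search fee plus the probability-weighted cost of missing a blocking patent."""
    return search_fee + p_miss * exposure

# Hypothetical inputs: $300M litigation exposure; a free-database search misses
# the blocking patent 5% of the time, a professional search 1% of the time.
free = expected_cost(search_fee=0, p_miss=0.05, exposure=300e6)
professional = expected_cost(search_fee=10_000, p_miss=0.01, exposure=300e6)
print(f"free database: ${free:,.0f}   professional search: ${professional:,.0f}")
```

Under these assumptions the search fee is a rounding error next to the expected-loss term; the hard, and usually skipped, step is estimating the miss rates.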

Key Takeaways: Part III

Keyword and classification-based searches have a structural ceiling on recall. They cannot resolve synonymy across compound identifiers, cannot search Markush structures, and cannot extract sequence data embedded in patent specifications. The economic cost of a missed blocking patent vastly exceeds the cost of the search. Traditional methods are not adequate for high-stakes pharmaceutical IP analysis.

Part IV: The AI Toolkit — NLP, ML, and Generative AI as Patent Intelligence Infrastructure

Natural Language Processing: Breaking the Semantic Trap

Natural Language Processing gives machines the ability to parse, interpret, and act on language with contextual awareness rather than string-matching. In pharmaceutical patent analysis, NLP addresses the semantic trap directly: instead of searching for words, NLP-powered systems search for meaning.

The core mechanism is semantic vector embedding. An NLP model trained on large corpora of patent text, scientific literature, and regulatory documents learns to represent words, phrases, and documents as high-dimensional vectors. Documents that describe the same conceptual territory, regardless of the specific terminology they use, will have similar vector representations. A semantic search query converts the user’s concept into a vector and retrieves documents with the nearest vector neighbors. The goal is higher recall (finding relevant documents regardless of terminology) without the precision loss of broad keyword queries (documents that merely share keywords rank lower when their meaning differs).
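Nearest-neighbor retrieval over embeddings can be sketched with cosine similarity. The four-dimensional vectors and the "US-A"/"US-B"/"US-C" identifiers are hypothetical stand-ins; a trained model would produce vectors with hundreds or thousands of dimensions.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

index = {
    "US-A": [0.9, 0.8, 0.1, 0.0],   # injectable polymeric microspheres, long-acting protein
    "US-B": [0.8, 0.9, 0.2, 0.1],   # subcutaneous depot for sustained-release biologics
    "US-C": [0.1, 0.2, 0.9, 0.3],   # tablet coating for small molecules
}

def semantic_search(query_vec, index, k=2):
    """Rank documents by cosine similarity to the query vector; return the top k."""
    ranked = sorted(index, key=lambda doc: cosine(query_vec, index[doc]), reverse=True)
    return ranked[:k]

query = [0.85, 0.85, 0.15, 0.05]   # hypothetical embedding of the analyst's concept
print(semantic_search(query, index))
```

The two depot-formulation documents rank together even though, in their original wording, they share almost no keywords; that closeness is what the embedding model has to learn.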

Named Entity Recognition (NER) is the layer of NLP that structures patent text for downstream analysis. A pharmaceutical-specific NER model identifies and tags drug names (brand and generic), company names, diseases and indications, mechanisms of action, clinical trial identifiers, and legal terms like ‘Paragraph IV certification’ or ‘inter partes review.’ The output is a structured, queryable representation of documents that were previously unstructured text. Combined with relation extraction and patent metadata, a properly built NER pipeline on a corpus of 10 million pharma patents can populate an indexed knowledge graph that supports queries impossible in a keyword system, for example ‘find all patents assigned to Janssen covering PCSK9 inhibition filed in the two years after the FDA approved alirocumab.’
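A toy version of that pipeline: a dictionary (gazetteer) tagger stands in for a trained pharma NER model, and its tags are combined with assignee and filing-date metadata to answer the example query. The patent records are hypothetical; the alirocumab (Praluent) approval date is July 24, 2015.

```python
from datetime import date, timedelta

# Gazetteer standing in for a trained NER model: token -> (entity type, canonical name).
GAZETTEER = {
    "pcsk9": ("TARGET", "PCSK9"),
    "alirocumab": ("DRUG", "alirocumab"),
}

def tag(text):
    """Return the set of (type, name) entities found in the text."""
    tokens = text.lower().replace(",", " ").split()
    return {GAZETTEER[t] for t in tokens if t in GAZETTEER}

records = [  # hypothetical patent records
    {"id": "P1", "assignee": "Janssen", "filed": date(2016, 3, 1),
     "abstract": "Antibodies that inhibit PCSK9 for lowering LDL"},
    {"id": "P2", "assignee": "Janssen", "filed": date(2019, 1, 10),
     "abstract": "PCSK9 inhibitors, oral formulation"},
    {"id": "P3", "assignee": "OtherCo", "filed": date(2016, 5, 5),
     "abstract": "PCSK9 siRNA conjugates"},
]

ALIROCUMAB_APPROVAL = date(2015, 7, 24)
window_end = ALIROCUMAB_APPROVAL + timedelta(days=730)   # roughly two years

hits = [r["id"] for r in records
        if r["assignee"] == "Janssen"
        and ("TARGET", "PCSK9") in tag(r["abstract"])
        and ALIROCUMAB_APPROVAL < r["filed"] <= window_end]
print(hits)
```

The query is only possible because the free-text abstract has been turned into a typed entity that can be joined with structured fields.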

Document summarization using NLP allows analysts to process large review queues efficiently. A 40-page patent specification with 150 claims can be reduced to a structured summary covering the claimed compound class, key dependent claim limitations, and, when the file history is analyzed alongside it, issues flagged during prosecution. This reduces the per-document review time from hours to minutes, allowing a team to triage a landscape of 500 relevant patents in a fraction of the time a manual review would require.
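One mechanical step behind a structured summary is recovering the claim tree, so independent claims and their dependent limitations can be grouped. This sketch assumes claims arrive as "N. text" lines and that dependents cite a single parent as "claim N"; multiple-dependent forms such as "any one of claims 1-3" are not handled.

```python
import re

def claim_tree(claims_text):
    """Map each claim number to its parent claim number (None if independent)."""
    tree = {}
    for match in re.finditer(r"^(\d+)\.\s+(.*)$", claims_text, flags=re.MULTILINE):
        number, body = int(match.group(1)), match.group(2)
        parent = re.search(r"\bclaim (\d+)", body, flags=re.IGNORECASE)
        tree[number] = int(parent.group(1)) if parent else None
    return tree

claims = """1. A pharmaceutical composition comprising compound A and a polymer.
2. The composition of claim 1, wherein the polymer is HPMC.
3. The composition of claim 2, wherein the release is over 12 hours.
4. A method of treating hypertension comprising administering compound A."""

tree = claim_tree(claims)
independent = [n for n, parent in tree.items() if parent is None]
print(tree, independent)
```

With the tree in hand, a summarizer can report each independent claim together with the chain of limitations its dependents add, which is where litigation-relevant narrowing usually lives.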

Chemical Structure Search and the Markush Problem

Pharmaceutical-specific AI platforms have developed tooling to address Markush structures and chemical compound identification in ways that general-purpose patent databases cannot. Structure-aware AI systems convert SMILES (Simplified Molecular-Input Line-Entry System) notations and InChI identifiers into searchable representations. They can compare a candidate compound’s structure against the variable substituent space defined in Markush claims and determine whether the candidate falls within the scope of the claim.
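The scope check can be sketched in a deliberately simplified form. Real systems match chemical graphs derived from SMILES or InChI; here, as an assumption that sidesteps the hard cheminformatics, each candidate is pre-described as a scaffold identifier plus one substituent label per variable position. The claim definition is hypothetical.

```python
CLAIM = {
    "scaffold": "core-A",                  # hypothetical scaffold identifier
    "positions": {
        "R1": {"H", "CH3", "C2H5"},
        "R2": {"F", "Cl", "Br"},
        "R3": {"OH", "OCH3"},
    },
}

def within_markush(candidate, claim):
    """Return (inside_scope, positions_outside_scope)."""
    if candidate["scaffold"] != claim["scaffold"]:
        return False, ["scaffold"]
    outside = [pos for pos, allowed in claim["positions"].items()
               if candidate["substituents"].get(pos) not in allowed]
    return not outside, outside

inside = {"scaffold": "core-A", "substituents": {"R1": "CH3", "R2": "Cl", "R3": "OH"}}
outside = {"scaffold": "core-A", "substituents": {"R1": "CH3", "R2": "I", "R3": "OH"}}
print(within_markush(inside, CLAIM))
print(within_markush(outside, CLAIM))
```

Reporting which positions fall outside, not just a yes/no, is what makes the result useful for design decisions later in the workflow.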

This capability is not universal across AI patent platforms. Platforms built for general patent searching — even those with strong NLP capabilities — frequently lack the cheminformatics layer necessary for rigorous Markush analysis. For pharmaceutical FTO work specifically, this gap is a critical differentiator. A platform that can perform chemical substructure searches against a database of 50 million compounds represented in Markush claims is not simply a better version of a keyword search; it is a categorically different capability.

Machine Learning for Landscape Analysis and Pattern Recognition

Machine learning operates on a different level of the intelligence stack than NLP. Where NLP enables document-level understanding, ML enables portfolio-level and field-level pattern recognition from the resulting structured data.

Patent landscaping — mapping all patent activity in a technology domain to understand the competitive terrain — is the most resource-intensive analytical task in traditional IP work. A comprehensive landscape of mRNA delivery technology patents, for example, could involve reviewing 20,000 to 50,000 documents. Manual landscaping at this scale takes months and typically delivers a snapshot that is outdated by the time the report is published.

ML automates landscaping through classification. The analyst provides a ‘seed set’ of 100 to 200 patents that clearly define the technology boundary, and a comparable ‘anti-seed set’ of patents that are adjacent but outside scope. The ML model learns the discriminating features and can classify the full patent corpus — 50,000 documents — in hours, not months. The output is a continuously updatable landscape map rather than a static deliverable. When a competitor files a new patent family, it gets classified and appears in the landscape without manual intervention.
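The seed/anti-seed workflow can be sketched with a nearest-centroid classifier on bag-of-words vectors, a deliberately simple stand-in for the models production platforms use. The seed texts and candidates are hypothetical.

```python
import math
from collections import Counter

def vectorize(text):
    return Counter(text.lower().split())

def centroid(texts):
    """Average bag-of-words vector of a set of documents."""
    total = Counter()
    for t in texts:
        total.update(vectorize(t))
    return {w: c / len(texts) for w, c in total.items()}

def cosine(a, b):
    dot = sum(a[w] * b.get(w, 0.0) for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

seed = ["lipid nanoparticle delivery of mrna", "ionizable lipid for mrna encapsulation"]
anti_seed = ["lipid lowering statin tablet", "mrna sequencing assay kit"]
in_c, out_c = centroid(seed), centroid(anti_seed)

def in_scope(text):
    """Classify a document as in scope if it is closer to the seed centroid."""
    v = vectorize(text)
    return cosine(v, in_c) > cosine(v, out_c)

print(in_scope("novel ionizable lipid nanoparticle for mrna vaccines"))
print(in_scope("statin tablet with improved dissolution"))
```

The anti-seed set matters: it teaches the classifier that "lipid" or "mRNA" alone is not enough, which is exactly the adjacent-but-out-of-scope boundary the analyst is drawing.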

Clustering algorithms applied to this classified corpus identify sub-technology areas and reveal density patterns. High-density areas indicate crowded IP space where freedom to operate may be limited. Low-density areas — ‘white space’ — indicate territories where novel patents can be secured and R&D investment faces lower IP risk. For pipeline investment decisions, this white space analysis is directly actionable: it identifies where a company can innovate without entering a patent minefield.
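Once clustering has assigned each document a sub-technology label, density scoring is straightforward. The labels, counts, and the 30% and 5% thresholds below are hypothetical.

```python
from collections import Counter

# Hypothetical cluster assignments produced by an upstream clustering step.
cluster_labels = (["lipid nanoparticle"] * 420 + ["polymer conjugate"] * 150
                  + ["exosome"] * 35 + ["peptide carrier"] * 12)

def density_report(labels, crowded_share=0.30, white_space_share=0.05):
    """Classify each cluster by its share of the landscape."""
    counts = Counter(labels)
    total = sum(counts.values())
    report = {}
    for cluster, n in counts.items():
        share = n / total
        if share >= crowded_share:
            report[cluster] = "crowded"
        elif share <= white_space_share:
            report[cluster] = "white space"
        else:
            report[cluster] = "moderate"
    return report

print(density_report(cluster_labels))
```

Low density is a prompt for investigation, not a verdict: a sparse cluster can also signal a technically unattractive area.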

Predictive Analytics: Forecasting LOE and Litigation Outcomes

The highest-value output of ML in pharmaceutical patent intelligence is predictive. Historical data — patterns in patent prosecution, litigation outcomes, examiner decision rates, claim language characteristics, citation velocity — can be used to train models that forecast future events with quantified confidence intervals.

LOE forecasting is the clearest use case. Calculating the precise LOE date for a drug requires integrating the original composition-of-matter patent term, any PTEs granted, the scope of NCE or biologic exclusivity, pediatric exclusivity if applicable, the status of any Orange Book-listed patents, and the outcome of pending or potential Paragraph IV litigation. An AI model with access to this integrated dataset can produce probability distributions over LOE dates rather than single-point estimates. For a drug with a complex patent landscape and three active Paragraph IV certifications in different stages of litigation, the range of probable LOE dates might span three years; the model can quantify which scenarios have which probabilities and under which conditions.
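For the three-certification case, an exact LOE distribution can be enumerated over all litigation outcomes. Assumptions, all hypothetical: the cases are independent; a winning challenger launches in its decision year; a losing one waits for patent expiry; and LOE is the earliest launch across challengers. Settlements and 180-day exclusivity are ignored.

```python
from itertools import product

PATENT_EXPIRY = 2031
cases = [  # (expected decision year, probability the challenger wins)
    (2028, 0.30),
    (2029, 0.25),
    (2030, 0.20),
]

def loe_distribution(cases, expiry):
    """Probability mass over first-generic-entry years."""
    dist = {}
    for outcomes in product([True, False], repeat=len(cases)):
        p, entry = 1.0, expiry
        for (year, p_win), won in zip(cases, outcomes):
            p *= p_win if won else 1 - p_win
            if won:
                entry = min(entry, year)
        dist[entry] = dist.get(entry, 0.0) + p
    return dict(sorted(dist.items()))

for year, p in loe_distribution(cases, PATENT_EXPIRY).items():
    print(year, round(p, 3))
```

Here the nominal 2031 expiry is actually the single most likely LOE year, but it carries well under half the probability mass; the rest is spread across 2028 to 2030.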

Litigation outcome prediction is more complex. The relevant inputs span the characteristics of the patent itself (claim breadth, prosecution history, examiner allowance rate, citation history), the characteristics of the litigation (forum, judge assignment, law firms on each side), and broader market context (the revenue at stake, which shapes settlement incentives). Studies of Hatch-Waxman litigation have found that certain patent characteristics, such as overly broad claim scope, heavy reliance on clinical data rather than structural novelty, and arguments that were substantially narrowed during prosecution, correlate strongly with invalidity outcomes. An ML model trained on thousands of past Paragraph IV outcomes can score a new patent on these factors and output a probability of surviving challenge.
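The scoring step of such a model can be sketched as a logistic function over patent features. The weights below are hypothetical placeholders, not fitted coefficients; only their signs follow the correlations described above. A real model would learn them from labeled Paragraph IV outcomes.

```python
import math

HYPOTHETICAL_WEIGHTS = {
    "intercept": 0.8,
    "broad_claim_scope": -1.2,        # 1 if claims judged overly broad
    "relies_on_clinical_data": -0.7,  # 1 if novelty rests on clinical data
    "narrowed_in_prosecution": -0.9,  # 1 if key arguments were narrowed
}

def survival_probability(features, weights=HYPOTHETICAL_WEIGHTS):
    """Logistic score: probability the patent survives a Paragraph IV challenge."""
    z = weights["intercept"] + sum(weights[name] * value for name, value in features.items())
    return 1 / (1 + math.exp(-z))

clean = survival_probability({"broad_claim_scope": 0, "relies_on_clinical_data": 0,
                              "narrowed_in_prosecution": 0})
risky = survival_probability({"broad_claim_scope": 1, "relies_on_clinical_data": 1,
                              "narrowed_in_prosecution": 1})
print(f"clean: {clean:.2f}  risky: {risky:.2f}")
```

The output is a probability, not a verdict, which is what lets it feed directly into the thicket and LOE calculations.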

Generative AI as the Analysis Layer

Large Language Models add a synthesis and interaction layer on top of the NLP and ML infrastructure. They allow analysts to interrogate a structured knowledge base in natural language, receiving synthesized answers rather than ranked document lists.

The practical difference is significant. An analyst using a traditional platform to understand Novo Nordisk’s oral semaglutide IP strategy must run multiple searches, manually read the relevant documents, and synthesize the findings into a coherent analysis. An analyst using a GenAI-enabled platform can ask ‘Describe the formulation strategy differences between Novo Nordisk’s oral semaglutide patents and the patents filed by competing companies working on oral GLP-1 analogs, including key claim limitations and expiry timelines.’ The system queries the underlying structured data, identifies the relevant patent families, analyzes the claim language, and generates a synthesized response with tables and patent citations, in seconds.

LexisNexis’s PatentSight+ and TechDiscovery products, IPRally’s conversational review interface, and platforms built on top of enterprise LLMs are already delivering this capability for early adopters. The bottleneck is not the technology — it is the quality and completeness of the underlying structured patent database on which the GenAI operates. A GenAI layer on top of a poorly indexed or incomplete database produces confident-sounding but unreliable output. The data infrastructure matters as much as the model.
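One inexpensive guard against confident-sounding but unreliable output is to check that every patent number the model cites was actually among the documents retrieved for the query. The citation format (for example "US10123456B2") and the sample answer are hypothetical.

```python
import re

# Assumed publication-number format: country code, digits, kind code.
CITATION = re.compile(r"\b(?:US|EP|WO)\d{6,12}[A-Z]\d?\b")

def unsupported_citations(answer, retrieved_ids):
    """Return cited patent numbers that were not in the retrieved set."""
    cited = set(CITATION.findall(answer))
    return sorted(cited - set(retrieved_ids))

retrieved = {"US10123456B2", "WO2019123456A1"}   # hypothetical numbers
answer = ("The oral formulation claims in US10123456B2 recite an absorption "
          "enhancer; a competitor's WO2019123456A1 claims a different salt, "
          "and US11999999B1 covers the tablet process.")
print(unsupported_citations(answer, retrieved))
```

A citation outside the retrieved set is not necessarily wrong, but it was not grounded in the database the answer claims to rest on, so it should be flagged for review.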

Generative AI is also reshaping patent prosecution support. Platforms like Dolcera’s IP Author and Solve Intelligence can draft initial claim sets and specification language from an invention disclosure, identify claim dependencies, and flag potential 35 U.S.C. § 112 written description issues. They can analyze office actions from USPTO examiners, map the examiner’s objections against the claim language, and suggest response arguments grounded in the prosecution history and relevant case law. None of this replaces a registered patent attorney’s judgment — but it compresses the mechanical work in a prosecution cycle from weeks to days.
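As an example of the mechanical checks these tools automate, here is a heuristic antecedent-basis check, a § 112(b) definiteness issue rather than written description. It flags "the X" or "said X" with no earlier "a X" or "an X". It handles single-word terms only, while real claim terms are usually multi-word, so treat it as a toy.

```python
import re

def missing_antecedents(claim):
    """Return terms referenced with 'the'/'said' before being introduced."""
    introduced, problems = set(), []
    for article, term in re.findall(r"\b(a|an|the|said)\s+([a-z-]+)", claim.lower()):
        if article in ("a", "an"):
            introduced.add(term)
        elif term not in introduced:
            problems.append(term)
    return problems

print(missing_antecedents(
    "A tablet comprising a core and a coating, wherein the coating "
    "surrounds the core and said polymer is HPMC."))
```

The check flags "polymer", which the claim references without ever introducing; an examiner would likely raise the same issue.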

Multimodal AI: Reading Chemical Structures from Patent Images

Patent documents are not purely textual. They contain structural formula diagrams, synthesis routes, spectroscopic data, clinical trial graphs, and device schematics. Current-generation AI patent platforms are largely blind to this visual content; they index and analyze the text but treat the images as opaque.

Multimodal AI — models that process both text and image inputs — is the next major capability frontier. A multimodal model integrated into a patent platform can read a structural diagram from a PDF page, convert it to a SMILES notation, and cross-reference it against the compound database. It can extract a PK curve from a figure, read the axis labels and data points, and incorporate the quantitative data into its analysis. For biologics, multimodal extraction of amino acid sequence tables from legacy PDF patents addresses precisely the ‘hidden sequence’ problem that renders traditional text search ineffective on the oldest biologic IP.

Platforms that deploy multimodal search capabilities will offer a material advantage in the biologic and complex-molecule landscape, where the most strategically sensitive IP is often embedded in non-text content.

Key Takeaways: Part IV

NLP addresses the semantic trap through vector embedding and NER, enabling concept-based search and structured querying. ML enables automated landscaping, white-space identification, and probabilistic forecasting of LOE and litigation outcomes. Generative AI synthesizes the resulting intelligence into natural-language analysis. Multimodal AI is the next capability frontier, addressing patent content that text systems cannot access. The quality of the underlying data infrastructure determines the quality of all AI outputs built on top of it.

Part V: AI in Action — Core Applications Across the Pharmaceutical IP Lifecycle

Prior Art Search and Patentability Assessment

A patent that fails the novelty or non-obviousness requirement will be rejected during prosecution or, worse, granted and then invalidated in litigation. The cost of prosecuting an unpatentable claim through a full prosecution cycle — office actions, responses, appeals, potentially the Patent Trial and Appeal Board — is substantial. The cost of reaching an IPR invalidity ruling after the patent has been asserted against a generic manufacturer is catastrophic: the patent is gone, and the brand’s legal defense of its exclusivity strategy collapses.

AI-powered semantic prior art search reduces this risk by enabling concept-based retrieval that catches relevant references independent of terminology. A search for ‘subcutaneous depot formulation for sustained-release biologics’ using semantic vectors will retrieve patents that describe the same technology using different terminology — ‘injectable polymeric microspheres for long-acting protein delivery,’ for example — that a keyword search would miss. PQAI (Patent Quality AI), developed as an open-source initiative, is specifically designed to improve prior art search completeness using semantic methods.

Clustering of search results adds another dimension. Rather than presenting 500 results as a flat ranked list, AI platforms cluster documents into technology groups — polymer matrix systems, lipid nanoparticle delivery, hydrogel depots, osmotic pump mechanisms — and visualize the density of each cluster. The analyst can immediately identify the areas of heaviest patenting activity and focus detailed review on the most pertinent cluster, rather than working through the entire result set linearly.

Freedom-to-Operate Analysis: From Checklist to Risk-Scoring Engine

Freedom-to-Operate analysis is the highest-stakes application of pharmaceutical patent search. The output is a legal opinion on whether a product can be commercialized without infringing valid, in-force patents. A wrong answer fails in one of two ways: either the product enters the market and faces an injunction, or it is killed before launch because of a blocking patent that was actually invalid.

AI transforms FTO from a sequential, checklist-based process into a continuous, risk-scoring function. At the screening stage, AI tools apply automated claim parsing to compare product features against potentially relevant patent claims, flagging candidate blocking patents by degree of structural or functional overlap. This narrows the field from hundreds of potentially relevant patents to the twenty or thirty that warrant detailed attorney review, with each one accompanied by a preliminary risk score and the specific claim elements driving it.
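A screening-stage risk score can be sketched as element coverage: under the all-elements rule, literal infringement requires that the product contain every element of a claim, so the share of elements present is a natural first-pass signal. In this hypothetical Python sketch, claims are already parsed into element strings and matching is exact string comparison, standing in for the NLP matching described above; the 0.75 threshold is an arbitrary assumption, and the score is a triage aid, not a legal conclusion (the doctrine of equivalents is not modeled).

```python
from dataclasses import dataclass


@dataclass
class Claim:
    patent: str
    elements: list[str]  # claim elements, assumed already parsed from the claim text


def coverage(claim: Claim, product_features: set[str]) -> tuple[float, list[str]]:
    """Share of claim elements present in the product, plus the missing elements."""
    missing = [e for e in claim.elements if e not in product_features]
    present = len(claim.elements) - len(missing)
    return present / len(claim.elements), missing


def shortlist(claims, product_features, threshold=0.75):
    """Claims whose coverage meets the threshold, highest coverage first."""
    hits = []
    for claim in claims:
        score, missing = coverage(claim, product_features)
        if score >= threshold:
            hits.append((claim.patent, score, missing))
    return sorted(hits, key=lambda h: h[1], reverse=True)


# Hypothetical product and claims
product = {"compound X", "polymorph Form II", "extended-release matrix", "HPMC"}
claims = [
    Claim("PAT-1", ["compound X", "polymorph Form II"]),
    Claim("PAT-2", ["compound X", "extended-release matrix", "HPMC", "once-daily dosing"]),
    Claim("PAT-3", ["compound Y", "osmotic pump"]),
]
for patent, score, missing in shortlist(claims, product):
    print(patent, f"{score:.0%}", "missing:", missing)
```

The missing-element list is what makes the score useful to an attorney: it points directly at the claim limitation on which a non-infringement position would rest.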

Claim chart generation, traditionally one of the most time-consuming tasks in FTO analysis, is now partially automated on platforms like Clearstone FTO. The system takes a patent’s independent claims, identifies their element-by-element structure using NLP, and generates a draft chart comparing each element against the product feature set. Attorneys review and refine the chart rather than creating it from scratch, compressing a task that previously took days into hours.
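The parsing step behind a draft claim chart can be sketched as follows. This assumes the common ‘comprising: (a) ...; (b) ...; and (c) ...’ claim layout and uses plain regular expressions rather than any NLP model a commercial platform might use; the claim text and the `product_evidence` mapping are hypothetical. Elements with no matching evidence are flagged for attorney review rather than scored.

```python
import re


def parse_claim(claim_text: str) -> tuple[str, list[str]]:
    """Split an independent claim into its preamble and its elements.

    Assumes the 'A ... comprising: (a) ...; (b) ...; and (c) ...' layout."""
    preamble, _, body = claim_text.partition("comprising")
    preamble = re.sub(r"^\d+\.\s*", "", preamble.strip())  # drop the claim number
    elements = []
    for part in body.split(";"):
        part = part.strip(" :.\n")
        part = re.sub(r"^(?:and\s+)?(?:\([a-z]\)\s*)?", "", part)  # drop 'and' and '(a)' labels
        if part:
            elements.append(part)
    return preamble, elements


def draft_chart(elements, product_evidence):
    """One row per element: the first matching product evidence, or a review flag."""
    rows = []
    for element in elements:
        hits = [ev for feature, ev in product_evidence.items()
                if feature.lower() in element.lower()]
        rows.append((element, hits[0] if hits else "NO MATCH: attorney review"))
    return rows


claim = ("1. A pharmaceutical composition comprising: (a) compound X in crystalline Form II; "
         "(b) a hydroxypropyl methylcellulose matrix; and (c) a film coating.")
product_evidence = {
    "crystalline Form II": "XRPD report, batch 12 (hypothetical)",
    "hydroxypropyl methylcellulose": "formulation spec, section 3 (hypothetical)",
}
preamble, elements = parse_claim(claim)
for element, evidence in draft_chart(elements, product_evidence):
    print(f"{element:45} | {evidence}")
```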

The most strategically significant AI application in FTO is design-around generation. When a blocking patent is identified, the critical question is not just ‘does our product infringe?’ but ‘what modifications would avoid infringement while preserving commercial viability?’ AI platforms trained on claim structure and chemical or formulation design space can suggest specific modifications — alternative substituents, different polymorphic forms, modified release mechanisms — that would fall outside the scope of the blocking claim. This converts FTO from a pure risk-identification exercise into an R&D guidance tool.
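The core logic of design-around screening fits in a few lines. Because literal infringement requires every claim element, a modification that takes the product outside any single element avoids literal infringement of that claim. In this hypothetical sketch, each claim element is modeled as the set of values it covers, and the candidate modifications are supplied by R&D; it checks literal scope only and ignores the doctrine of equivalents, which a real analysis must address.

```python
def infringes(claim: dict[str, set[str]], product: dict[str, str]) -> bool:
    """Literal infringement check: every claim element must be met."""
    return all(product.get(attr) in covered for attr, covered in claim.items())


def design_arounds(claim, product, candidates):
    """Single-attribute modifications that take the product outside the claim."""
    options = []
    for attr, new_value in candidates:
        modified = {**product, attr: new_value}
        if not infringes(claim, modified):
            options.append((attr, new_value))
    return options


# Hypothetical claim: each element lists the values it literally covers
claim = {
    "compound": {"compound X"},
    "polymorph": {"Form II"},
    "release": {"HPMC matrix", "polymer matrix"},
}
product = {"compound": "compound X", "polymorph": "Form II", "release": "HPMC matrix"}
candidates = [("polymorph", "Form III"), ("release", "polymer matrix"), ("release", "osmotic pump")]

print(infringes(claim, product))                   # True
print(design_arounds(claim, product, candidates))  # 'polymer matrix' is still covered
```

Whether each surviving option preserves commercial viability (stability, bioequivalence, manufacturability) is the scientific judgment the text assigns to R&D.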

Continuous monitoring closes the loop. Products in development face an evolving IP landscape: new patents publish weekly, pending applications mature into grants, and post-grant challenges resolve. Platforms like senseIP provide real-time alert systems that monitor all relevant patent activity and notify the FTO team when a new filing creates a potential conflict. A threat identified 18 months before a product launch is a design problem; the same threat identified two weeks before launch is a crisis.
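The lead-time distinction in that last sentence can be encoded directly in alert triage. This is a hypothetical sketch: the overlap score is assumed to come from an upstream screening step, and the 0.5 overlap threshold and six-month crisis cutoff are illustrative values, not industry standards.

```python
from datetime import date


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months from `earlier` to `later` (day of month ignored)."""
    return (later.year - earlier.year) * 12 + (later.month - earlier.month)


def triage(alerts, launch: date, overlap_threshold=0.5, crisis_months=6):
    """Keep alerts at or above the overlap threshold and label them by lead time."""
    results = []
    for pub_no, detected_on, overlap in alerts:
        if overlap < overlap_threshold:
            continue
        lead = months_between(detected_on, launch)
        label = "crisis" if lead < crisis_months else "design problem"
        results.append((pub_no, lead, label))
    return results


launch = date(2027, 6, 1)
alerts = [  # hypothetical publication numbers, detection dates, and overlap scores
    ("WO-1", date(2025, 12, 1), 0.8),
    ("WO-2", date(2027, 5, 15), 0.9),
    ("WO-3", date(2026, 1, 1), 0.2),
]
print(triage(alerts, launch))
```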

Competitive Intelligence: Reading Competitor R&D Strategy From Patent Filings

Patent filings are the most detailed publicly available record of a company’s R&D pipeline. A new patent application is typically filed one to three years before a clinical trial begins, and because applications generally publish 18 months after their earliest priority date, the filing often becomes visible before any competitive intelligence from conference presentations or regulatory submissions. For pharma BD teams, IP counsel, and institutional investors, systematic analysis of competitor patent activity is a primary source of early-stage pipeline intelligence.

AI enables that analysis at a scale and depth that manual review cannot match. Applied to Merck’s patent filings over the past 10 years, for example, an ML model can test whether the company’s filing activity in KRAS inhibition chemistry has risen sharply, break any increase down by scaffold and target mutation (covalent inhibitors of KRAS G12C, inhibitors of KRAS G12D), and date its onset relative to the public disclosure of the corresponding clinical program. A filing surge that begins roughly 18 months before disclosure is actionable competitive intelligence for a company in the same space.
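One simple way to date a filing surge is to compare each year’s filing count in a technology class with a trailing baseline. The counts below are invented, and the window and ratio parameters are assumptions; in practice counts should be keyed to priority dates rather than publication dates, since publication lags filing by about 18 months. The function also assumes the input covers consecutive years.

```python
def surge_year(counts: dict[int, int], window: int = 3, ratio: float = 2.0):
    """First year whose filing count is at least `ratio` times the mean of the
    preceding `window` years. Assumes consecutive years with no gaps."""
    years = sorted(counts)
    for i in range(window, len(years)):
        baseline = sum(counts[y] for y in years[i - window:i]) / window
        if baseline > 0 and counts[years[i]] >= ratio * baseline:
            return years[i]
    return None


# Hypothetical yearly filing counts for one assignee in one technology class
filings = {2015: 2, 2016: 3, 2017: 2, 2018: 3, 2019: 9, 2020: 14}
print(surge_year(filings))  # 2019
```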

DrugPatentWatch is built specifically around this multi-dimensional intelligence model for pharmaceutical IP. It links patent data to Orange Book filings, FDA approval records, ANDA Paragraph IV certifications, court litigation records, and commercial sales data. The integration matters because patent data in isolation answers questions about IP scope and timing; patent data integrated with clinical and regulatory data answers questions about which assets are actually moving toward market and with what commercial trajectory. An AI model operating on this integrated data set can detect the early signal of a competitor’s follow-on program — new process patents filed simultaneously with an IND submission — that pure patent analysis would not flag.
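The cross-dataset signal described above reduces to a date join between patent filings and program milestones. In this hypothetical sketch, the record fields, the company and program names, and the 90-day window are all assumptions; because IND submissions are not always publicly disclosed, a real pipeline may need a public proxy such as a trial registration or an announced IND clearance.

```python
from datetime import date


def coincident_filings(patents, milestones, window_days=90):
    """Process patents whose priority date falls within `window_days` of a
    milestone for a program run by the same company."""
    hits = []
    for p in patents:
        if p["type"] != "process":
            continue
        for m in milestones:
            close = abs((p["priority"] - m["date"]).days) <= window_days
            if m["sponsor"] == p["assignee"] and close:
                hits.append((p["id"], m["program"]))
    return hits


patents = [  # hypothetical records
    {"id": "WO-101", "assignee": "CompetitorCo", "type": "process", "priority": date(2024, 3, 1)},
    {"id": "WO-102", "assignee": "CompetitorCo", "type": "composition", "priority": date(2024, 3, 5)},
    {"id": "WO-103", "assignee": "CompetitorCo", "type": "process", "priority": date(2022, 1, 10)},
]
milestones = [{"sponsor": "CompetitorCo", "program": "CC-17 IND", "date": date(2024, 2, 15)}]
print(coincident_filings(patents, milestones))
```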

White-space identification is the offensive complement to competitive threat monitoring. By classifying all patents in a therapeutic area into technology clusters and mapping their density, AI reveals where patent activity is sparse relative to scientific opportunity. In immuno-oncology, for example, checkpoint inhibitor IP is extremely dense around PD-1/PD-L1, TIM-3, and LAG-3, while other immunoregulatory pathways have seen relatively sparse patent filing despite growing mechanistic evidence. White-space analysis gives R&D portfolio managers a data-driven basis for directing investment toward areas where novel IP can be secured with less risk of entering a crowded field.
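White-space scoring needs some proxy for scientific opportunity to set patent density against. This sketch assumes publication counts as that proxy and flags clusters whose patents-per-publication ratio falls at or below a threshold; the cluster names, counts, and 0.1 threshold are hypothetical.

```python
def white_space(clusters: dict[str, tuple[int, int]], max_ratio: float = 0.1) -> list[str]:
    """Clusters with few patents per publication, sparsest first.

    `clusters` maps a cluster name to (patent_count, publication_count)."""
    ratios = {name: pats / pubs for name, (pats, pubs) in clusters.items() if pubs}
    sparse = [name for name, r in ratios.items() if r <= max_ratio]
    return sorted(sparse, key=ratios.get)


clusters = {  # hypothetical counts
    "cluster_A": (4000, 8000),
    "cluster_B": (600, 1500),
    "cluster_C": (40, 800),
    "cluster_D": (15, 200),
}
print(white_space(clusters))  # ['cluster_C', 'cluster_D']
```

A low ratio only marks a candidate; whether the sparse area reflects opportunity or a scientific dead end is a judgment call for the portfolio team.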

LOE Forecasting and Generic Entry Strategy

For brand manufacturers, accurate LOE forecasting is the foundation of commercial strategy for the five years leading up to patent expiration. The 18 to 24 months before LOE are typically when the brand accelerates its patient-switching program (moving patients to a next-generation product protected by a fresh patent estate) and adjusts its contracting with payers and PBMs to maximize market retention.

For generic manufacturers and biosimilar developers, LOE forecasting determines which products to target, when to file an ANDA or 351(k) application, and how aggressively to invest in a Paragraph IV challenge. The first applicant to submit a substantially complete ANDA containing a Paragraph IV certification is eligible for 180 days of generic marketing exclusivity, during which FDA cannot give final approval to later ANDA filers for the same drug (the brand’s own authorized generic can still launch). For a brand with $3 to $5 billion in annual revenue, that 180-day period is worth hundreds of millions of dollars in gross profit. An AI-driven LOE forecast that correctly identifies the ‘true’ LOE date, accounting for PTE, regulatory exclusivities, and expected litigation outcomes, lets a generic manufacturer optimize its filing timing accordingly, and that advantage can be quantified directly.
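A forecast of the ‘true’ LOE date is better expressed as a distribution over scenarios than as a single date. The sketch below assumes three hypothetical scenarios with invented probabilities and entry dates, then reports the probability of generic entry by a cutoff date and a probability-weighted expected entry date.

```python
import math
from datetime import date

# Hypothetical scenarios: (probability, generic entry date)
SCENARIOS = [
    (0.30, date(2027, 3, 1)),  # challenger prevails in Paragraph IV litigation
    (0.50, date(2029, 9, 1)),  # settlement with a licensed entry date
    (0.20, date(2031, 6, 1)),  # brand prevails; entry at final expiry including PTE
]


def validate(scenarios):
    if not math.isclose(sum(p for p, _ in scenarios), 1.0):
        raise ValueError("scenario probabilities must sum to 1")


def prob_entry_by(scenarios, cutoff: date) -> float:
    """Probability that generic entry has occurred on or before `cutoff`."""
    validate(scenarios)
    return sum(p for p, d in scenarios if d <= cutoff)


def expected_entry(scenarios) -> date:
    """Probability-weighted mean entry date."""
    validate(scenarios)
    return date.fromordinal(round(sum(p * d.toordinal() for p, d in scenarios)))


print(prob_entry_by(SCENARIOS, date(2029, 12, 31)))
print(expected_entry(SCENARIOS))
```

The weighted mean date can fall between scenarios and need not be a likely outcome itself, which is why the cumulative probability by date is usually the more decision-relevant output.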

Key Takeaways: Part V

AI applications in pharmaceutical patent intelligence span the full lifecycle from discovery-stage patentability assessment through commercial LOE strategy. Each application reduces a specific category of risk: invalidity risk for prosecution, infringement risk for FTO, competitive surprise risk for landscape monitoring, and timing uncertainty for LOE strategy. The applications are most powerful when deployed as an integrated, continuous function rather than as point-in-time searches.

Investment Strategy Note

For generic manufacturers evaluating Paragraph IV filing opportunities, an AI platform that outputs probability-weighted LOE dates and litigation survival rates enables a rigorous expected-value calculation on each potential challenge. The expected value of a Paragraph IV filing is a function of the probability of invalidating or designing around the blocking patent, the value of the 180-day exclusivity period, and the cost of litigation. The probability term is the most uncertain input in that calculation, and it is the one that AI-derived estimates most directly improve.
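The expected-value logic in that note can be written out explicitly. This is a deliberately simplified sketch: the 180-day value is modeled as 180 days of brand revenue times an assumed generic volume share, price ratio, and gross margin, and settlement outcomes are ignored. With these illustrative inputs for a $4 billion brand, the exclusivity value comes out in the hundreds of millions of dollars.

```python
def exclusivity_value(annual_brand_revenue, generic_share, price_ratio, gross_margin, days=180):
    """Gross profit to the first filer during its exclusivity window.
    Every input is an assumption supplied by the analyst."""
    return annual_brand_revenue * (days / 365) * generic_share * price_ratio * gross_margin


def piv_expected_value(p_success, value_if_success, litigation_cost):
    """Simplest expected-value form: win probability times payoff, minus litigation cost."""
    return p_success * value_if_success - litigation_cost


value = exclusivity_value(4e9, generic_share=0.8, price_ratio=0.5, gross_margin=0.6)
print(round(value / 1e6), "USD millions of exclusivity-period gross profit")
for p in (0.2, 0.4, 0.6):  # hypothetical AI-derived success probabilities
    print(p, round(piv_expected_value(p, value, litigation_cost=10e6) / 1e6), "USD millions")
```

Because expected value is linear in the success probability here, even a modest improvement in that estimate shifts the go/no-go decision directly.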

Part VI: The Legal Frontier — AI, Inventorship, Non-Obviousness, and the Disclosure Problem

Thaler v. Vidal and the Human Inventorship Requirement

The Federal Circuit’s 2022 ruling in Thaler v. Vidal settled one question and opened several others. The court held that ‘inventors’ under the Patent Act must be natural persons; an AI system cannot be listed as an inventor. That ruling was expected and consistent with the statute’s language and congressional intent as understood by practitioners for decades.

What the ruling did not address is the more commercially consequential question: what level of AI assistance in the inventive process disqualifies or complicates human inventorship? The USPTO provided its answer in February 2024, issuing Inventorship Guidance for AI-Assisted Inventions. The guidance confirms that AI-assisted inventions are patentable when at least one human has made a ‘significant contribution’ to the conception of each claimed invention.

The USPTO directs examiners to apply the Pannu factors, derived from Pannu v. Iolab Corp. (Fed. Cir. 1998): the human must (1) contribute in some significant manner to the conception or reduction to practice of the claimed invention, (2) make a contribution that is not insignificant in quality relative to the full invention, and (3) do more than explain well-known concepts to the actual inventors. The guidance then maps these factors to AI-assisted scenarios:

Merely running an AI platform and reviewing its output does not establish inventorship. Crafting a highly specific, creative prompt that steers an AI system toward a novel solution can constitute a significant contribution. Interpreting and substantially modifying an AI-generated compound candidate — identifying which structural features are responsible for the observed activity and using that insight to design a better candidate — likely qualifies. Designing and training an AI model for a specific discovery purpose, where the training architecture embodies novel scientific insight, can be a significant contribution to inventions the model generates.

The practical implication for pharmaceutical AI programs (Insilico Medicine’s generative chemistry platform, Recursion Pharmaceuticals’ phenomics-based compound identification system, Exscientia’s AI-designed molecule programs) is that meticulous contemporaneous documentation of human scientific judgment at each stage of the AI-assisted process is now a practical legal necessity rather than a best practice: without it, a company may be unable to show that a human made a significant contribution to each claimed invention.

IP Valuation Spotlight: Insilico Medicine and Recursion Pharmaceuticals

Insilico Medicine has moved AI-generated drug candidates into clinical trials, with INS018_055 (a small-molecule TNIK inhibitor for IPF) the most advanced. The company’s IP estate for AI-generated compounds depends entirely on demonstrating that human scientists made significant contributions to the molecular design process — selecting the biological target, defining the optimization objectives, interpreting the generative model’s output in the context of the broader SAR landscape, and directing subsequent medicinal chemistry work. The strength and defensibility of the composition-of-matter patents on AI-designed candidates will be a central diligence question in any financing or licensing transaction. Platforms that assess IP vulnerability on AI-assisted inventions are therefore directly relevant to the valuation of these companies.

Recursion Pharmaceuticals’ approach is based on large-scale biological imaging (phenomics) rather than molecular generation. The IP strategy here is different: the primary IP assets are not necessarily composition-of-matter patents on AI-designed molecules, but rather the platform technology itself (methods for biological perturbation, imaging, and phenotypic analysis), combined with composition-of-matter patents on specific compounds identified through the platform. The company’s partnerships with Bayer and Roche add complexity: who owns the IP generated through a collaborative AI platform program? The contractual IP ownership provisions in those partnerships are as important as the underlying patent portfolio.

Non-Obviousness in the Age of AI: The Rising PHOSITA

Patent non-obviousness is assessed against the standard of a ‘person having ordinary skill in the art’ (PHOSITA) — a hypothetical practitioner with the knowledge and capabilities typical of their field at the time of the invention. This hypothetical construct does not remain static. It reflects the actual state of technology available to practitioners.

As AI-driven compound design and property prediction become routine tools in pharmaceutical R&D, the capabilities of the PHOSITA will expand. Courts and examiners will be expected to ask: could a PHOSITA using standard AI tools available at the time have generated this compound or formulation? If the answer is yes, the invention may be obvious.

This is not a hypothetical future problem. The USPTO’s own guidance on AI tools notes that examiners should consider whether AI-generated results would have been obvious to a PHOSITA using standard AI tools. Patent practitioners at Marshall, Gerstein & Borun and other firms with active AI-inventorship practices are already advising clients that incremental molecular modifications of known scaffolds — precisely the work that AI-assisted generative chemistry excels at — face elevated obviousness risk under an AI-aware PHOSITA standard.

The strategic response is bifurcated. Companies can pursue truly novel biological targets and mechanisms of action where AI’s predictive accuracy is lowest — areas of high biological complexity and incomplete training data. Alternatively, they can protect AI-driven innovations as trade secrets rather than patents, accepting the trade-off that trade secret protection offers no defense against independent discovery.

For evergreening programs specifically, the implication is that formulation and polymorph improvements generated with AI assistance face compounded IP risk: they were already more vulnerable to obviousness challenges than composition-of-matter inventions, and the AI-aware PHOSITA standard raises the bar further.

The Confidentiality Risk in GenAI Patent Tools

Third-party generative AI platforms present a specific IP risk that many organizations’ current procurement and security frameworks do not adequately address. Submitting a detailed description of an unpublished invention to a cloud-based LLM, to draft claims or analyze prior art, could be treated as a public disclosure under 35 U.S.C. § 102. The one-year U.S. grace period for an inventor’s own disclosures may preserve U.S. rights, but in absolute-novelty jurisdictions such as Europe the same disclosure could destroy novelty and bar patent protection. Beyond the patent exposure, submitting unpublished technical data to a platform that uses it for model training risks forfeiting trade secret protection.

The mitigation strategies are available but require organizational commitment: on-premises AI deployment where models run on the company’s own infrastructure; enterprise contracts with AI providers that include zero data retention and training exclusions; or use of platforms specifically designed for IP sensitivity, with architectural guarantees of data isolation. Most organizations have not systematically evaluated which of their AI tooling relationships expose them to this risk.

The Enablement Problem for AI-Discovered Drugs

Patent law requires that an application’s specification enable a person skilled in the art to make and use the claimed invention without undue experimentation, and that it contain a written description showing that the inventor possessed what is claimed (35 U.S.C. § 112). For a single AI-discovered compound that the applicant has synthesized and tested, disclosing its structure, synthesis, and activity data will usually suffice; § 112 does not require an explanation of why the compound works. The risk arises when the applicant claims a broad genus, or defines the claimed compounds functionally, by activity rather than by structure.

If the AI model operates as a black box, producing a compound with excellent in vitro activity but no interpretable SAR rationale, the application may struggle to show which other structures share that activity, which is what enabling and describing the full scope of a broad claim requires. Examiners and courts have increasingly scrutinized broad functional claims in biotechnology under the enablement doctrine, most recently in the Supreme Court’s Amgen v. Sanofi decision (2023), which affirmed the invalidation of Amgen’s broad functional antibody claims. AI-generated compound claims that rely on functional characterization rather than structural specificity face analogous risk.

Key Takeaways: Part VI

Thaler confirmed that an AI system cannot be an inventor, and the USPTO’s 2024 guidance makes contemporaneous documentation of human contribution essential for AI-assisted inventions. The evolving PHOSITA standard raises the non-obviousness bar for AI-generated incremental modifications. Cloud-based GenAI platforms create IP confidentiality risk that many organizations are not managing. AI-generated compounds face potential enablement and written-description challenges if the AI process is not interpretable enough to support broad claims under 35 U.S.C. § 112.

Investment Strategy Note

Investors conducting IP diligence on AI-first drug discovery companies should assess four specific issues: (1) the completeness of contemporaneous documentation establishing human significant contribution under the Pannu factors; (2) whether the company’s AI platform agreements include data isolation and training exclusion provisions; (3) whether the composition-of-matter patent claims on AI-generated compounds are structurally specific enough to survive an Amgen-standard enablement challenge; and (4) how the company’s IP strategy would adapt if the evolving PHOSITA standard renders its AI-generated lead series candidates obvious.

Part VII: Building the Augmented IP Function — Strategy, Tooling, and Organizational Architecture

The Integrated Intelligence Stack

A pharmaceutical organization’s AI patent intelligence capability is only as good as its data infrastructure. The analytical outputs — LOE forecasts, litigation probability scores, competitive landscape maps, white-space analyses — are computed from underlying data, and the completeness, accuracy, and integration of that data determines the quality of those outputs.

The components of a complete pharmaceutical IP intelligence data stack include: global patent data from all major jurisdictions (USPTO, EPO, WIPO PCT, CNIPA, JPO, and regional offices), covering both granted patents and pending applications; Orange Book listings and ANDA Paragraph IV certification records; PTAB trial records and district court litigation dockets; FDA approval records, NDA supplements, and 505(b)(2) applications; clinical trial registrations from ClinicalTrials.gov and international equivalents; and where available, prescription and revenue data to map IP assets to commercial performance.

DrugPatentWatch is among the purpose-built platforms integrating these data streams for pharmaceutical-specific analysis. Its link between Orange Book patent listings, Paragraph IV certifications, and court outcomes — all connected to the specific drug products they cover — allows queries that are impossible on general patent databases: ‘Which Paragraph IV certifications have been filed against extended-release formulation patents in the SSRI class in the last five years, and what were the litigation outcomes?’ A question like that drives both brand defense strategy and generic portfolio planning.
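The value of linking these records shows in how simple the example question becomes once they share keys. The following sketch uses Python’s built-in `sqlite3` with a hypothetical three-table schema (not DrugPatentWatch’s actual data model) and invented rows; it returns Paragraph IV certifications filed in the five years before a given date against extended-release formulation patents on SSRI products, with their outcomes.

```python
import sqlite3

SCHEMA = """
CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, drug_class TEXT);
CREATE TABLE patents (number TEXT PRIMARY KEY,
                      product_id INTEGER REFERENCES products(id), claim_type TEXT);
CREATE TABLE piv_certifications (id INTEGER PRIMARY KEY,
                                 patent_number TEXT REFERENCES patents(number),
                                 filer TEXT, filed_on TEXT, outcome TEXT);
"""

QUERY = """
SELECT pr.name, pa.number, pc.filer, pc.filed_on, pc.outcome
FROM piv_certifications AS pc
JOIN patents AS pa ON pa.number = pc.patent_number
JOIN products AS pr ON pr.id = pa.product_id
WHERE pr.drug_class = 'SSRI'
  AND pa.claim_type = 'extended-release formulation'
  AND pc.filed_on >= date(?, '-5 years')  -- ISO dates compare correctly as text
ORDER BY pc.filed_on
"""


def build_demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO products VALUES (?, ?, ?)",
                     [(1, "Drug A ER", "SSRI"), (2, "Drug B", "statin")])
    conn.executemany("INSERT INTO patents VALUES (?, ?, ?)",
                     [("PAT-1", 1, "extended-release formulation"),
                      ("PAT-2", 1, "compound"),
                      ("PAT-3", 2, "extended-release formulation")])
    conn.executemany("INSERT INTO piv_certifications VALUES (?, ?, ?, ?, ?)",
                     [(1, "PAT-1", "Generic Co 1", "2022-03-01", "settled"),
                      (2, "PAT-1", "Generic Co 2", "2018-05-01", "brand prevailed"),
                      (3, "PAT-2", "Generic Co 1", "2023-01-01", "pending"),
                      (4, "PAT-3", "Generic Co 3", "2023-02-01", "generic prevailed")])
    return conn


conn = build_demo_db()
for row in conn.execute(QUERY, ("2025-06-30",)):
    print(row)
```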

IPRally’s differentiation is its graph-based AI approach. Rather than treating patents as documents to be retrieved, it models the patent corpus as a knowledge graph in which the relationships between concepts, citations, and technology areas carry as much information as the documents themselves. This architecture is particularly effective at identifying conceptually related patents that share no textual similarity — the ‘unknown unknowns’ of prior art that keyword and vector-similarity search both miss.
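The underlying idea, that relationships can connect documents with no textual overlap, can be illustrated with a plain breadth-first search over a small graph of patents, concepts, and citations. This is a generic sketch, not IPRally’s method, which the text does not describe in detail; the node names and edges are hypothetical.

```python
from collections import defaultdict, deque


def build_graph(edges):
    """Undirected adjacency sets from (node, node) pairs."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def related_patents(graph, start, max_hops):
    """Patent nodes ('P:' prefix) reachable from `start` within `max_hops` edges."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if depth[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for nxt in graph[node]:
            if nxt not in depth:
                depth[nxt] = depth[node] + 1
                queue.append(nxt)
    return {n for n in depth if n.startswith("P:") and n != start}


# Hypothetical graph: P: = patent, C: = concept node; a P-P edge is a citation
edges = [
    ("P:query", "C:sustained release"), ("P:doc1", "C:sustained release"),
    ("P:doc1", "C:microsphere"), ("P:doc2", "C:microsphere"),
    ("P:doc3", "P:query"), ("P:doc4", "C:osmotic pump"),
]
graph = build_graph(edges)
print(sorted(related_patents(graph, "P:query", 2)))
print(sorted(related_patents(graph, "P:query", 4)))
```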

LexisNexis PatentSight+ adds a corporate intelligence layer: enriched assignee data that maps patent ownership through complex acquisition and holding-company structures, normalized company identifiers that resolve ownership across jurisdictions, and technology category scores derived from ML classification of the patent corpus. Its GenAI-enabled TechDiscovery product turns a natural-language query about a technology domain into a patent landscape in minutes rather than months.
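Assignee normalization and ownership roll-up can be sketched in two steps: clean up raw assignee strings, then follow parent links to the ultimate owner. The suffix list and the `PARENT_OF` corporate map below are hypothetical; a production system would need a far larger, curated entity map.

```python
import re

# Hypothetical list of corporate suffixes to strip
SUFFIXES = re.compile(r"\b(?:inc|ltd|llc|gmbh|ag|corp|corporation|limited|co)\b")

# Hypothetical ownership map: normalized entity -> immediate parent
PARENT_OF = {"acme pharma": "bigco holdings", "bigco holdings": "megacorp"}


def normalize(raw: str) -> str:
    """Lowercase, drop periods and commas, strip corporate suffixes, collapse spaces."""
    name = re.sub(r"[.,]", "", raw.lower())
    name = SUFFIXES.sub("", name)
    return " ".join(name.split())


def ultimate_parent(entity: str, parent_of: dict[str, str]) -> str:
    """Follow parent links to the top of the ownership chain, rejecting cycles."""
    seen = set()
    while entity in parent_of:
        if entity in seen:
            raise ValueError(f"ownership cycle at {entity!r}")
        seen.add(entity)
        entity = parent_of[entity]
    return entity


def portfolio_owner(raw_assignee: str) -> str:
    return ultimate_parent(normalize(raw_assignee), PARENT_OF)


for raw in ("Acme Pharma, Inc.", "ACME PHARMA LTD", "Unrelated Bio GmbH"):
    print(raw, "->", portfolio_owner(raw))
```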

The Four-Stage Intelligence Cycle

An operationally mature AI patent intelligence function runs a continuous four-stage cycle rather than discrete project-based searches.

The monitoring stage establishes real-time tracking across all relevant activity: competitor patent applications publishing weekly, new ANDA Paragraph IV filings appearing in the FDA’s database, new IPR petitions filed at PTAB, and regulatory actions from FDA and EMA. Alert thresholds are set by asset priority, triggering escalation when a new filing crosses into the exclusivity zone of a key product.

The analysis stage converts monitoring signals into structured intelligence. When a competitor files a new patent application in a technology area overlapping with a company’s pipeline program, the AI platform classifies the new filing, assesses its overlap with existing FTO clearances, and flags specific claims that may require updated analysis. The output is a prioritized action queue for the IP team, not a raw list of new filings.
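The priority-based alert thresholds and the prioritized action queue described in the monitoring and analysis stages can be sketched with Python’s `heapq`. The tier weights, per-tier escalation thresholds, and overlap scores are hypothetical; the overlap score is assumed to come from an upstream classification step.

```python
import heapq

ASSET_WEIGHT = {"key": 3.0, "standard": 1.0}  # hypothetical priority tiers
ESCALATE_AT = {"key": 0.3, "standard": 0.6}   # hypothetical overlap thresholds per tier


def action_queue(signals):
    """Return signal IDs worth escalating, most urgent first.

    Each signal is (signal_id, asset_tier, overlap_score in [0, 1])."""
    heap = []
    for signal_id, tier, overlap in signals:
        if overlap >= ESCALATE_AT[tier]:
            # heapq is a min-heap, so push the negated urgency
            heapq.heappush(heap, (-ASSET_WEIGHT[tier] * overlap, signal_id))
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]


signals = [
    ("WO-1", "standard", 0.7),
    ("WO-2", "key", 0.4),
    ("WO-3", "standard", 0.5),  # below the standard-tier threshold: not escalated
    ("WO-4", "key", 0.9),
]
print(action_queue(signals))  # ['WO-4', 'WO-2', 'WO-1']
```

The lower threshold for key assets is the design choice that matters: a modest overlap with a blockbuster’s exclusivity zone outranks a stronger overlap on a minor product.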

The prediction stage applies ML models to the current intelligence picture. Updated LOE probability distributions are generated as new Paragraph IV certifications are filed and litigation milestones pass. Litigation outcome probability scores are updated as new case data becomes available. Competitive threat scores for pipeline programs are recalculated as competitor activity patterns shift.
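One standard way to update an outcome probability as new cases resolve is a Beta-Binomial model, sketched below. This is a technique chosen for illustration, not a description of any particular platform: the prior is uniform (an assumption), and the win and loss counts are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaEstimate:
    """Beta(alpha, beta) belief about the probability that the brand prevails."""
    alpha: float = 1.0  # uniform prior (an assumption)
    beta: float = 1.0

    def update(self, brand_wins: int, brand_losses: int) -> "BetaEstimate":
        """Conjugate update: add observed outcomes to the pseudo-counts."""
        return BetaEstimate(self.alpha + brand_wins, self.beta + brand_losses)

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


estimate = BetaEstimate().update(brand_wins=6, brand_losses=2)  # hypothetical comparable cases
print(round(estimate.mean, 3))  # 0.7
estimate = estimate.update(brand_wins=0, brand_losses=1)        # a new adverse ruling
print(round(estimate.mean, 3))  # 0.636
```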

The action stage closes the loop: intelligence outputs are integrated into R&D portfolio decisions, commercial planning, and legal strategy. White-space analysis informs which therapeutic areas receive increased patent filing resources. LOE forecasts trigger the initiation of patient-switching programs at the appropriate commercial milestone. Litigation probability scores inform settlement negotiation parameters.

The Augmented IP Professional: Role Redefinition

AI does not eliminate the pharmaceutical patent professional. It eliminates the specific tasks that have historically consumed the largest portion of that professional’s time: document retrieval, manual classification, basic claim charting, and routine prosecution mechanics. What remains — and what becomes more valuable — is the judgment to interpret AI outputs, the legal knowledge to assess the strategic implications of a patent landscape, the scientific background to evaluate the plausibility of a design-around suggestion, and the credibility to communicate IP risk to a board or an investment committee.

The skills that compound in value in an AI-augmented IP function are prompt engineering (constructing queries that elicit accurate, complete AI outputs), critical evaluation of AI-generated analysis (identifying where a GenAI system has confidently stated something incorrect), and cross-functional communication (translating IP risk scores and LOE forecasts into financial and strategic language that commercial and finance teams can use). Organizations that retrain their IP teams to develop these skills, rather than treating AI as a cost-reduction mechanism to reduce headcount, will capture the full strategic value of the capability.

Technology Roadmap: Where AI Patent Intelligence Goes Next

Near-term developments already in progress include wider deployment of automated claim drafting and prosecution support tools, more sophisticated multimodal patent analysis that extracts structural data from figures, and tighter integration of patent intelligence platforms with R&D data management systems so that IP clearance is embedded in the compound advancement workflow rather than running parallel to it.

Over the medium term — two to five years — the most significant capability development is likely to be AI systems that can model the strategic interaction between patent prosecution decisions, litigation outcomes, and generic entry timing in an integrated dynamic model. Currently, these three analytical functions are performed separately by different tools. An integrated model would allow an IP team to simulate the full game tree of a lifecycle management program: if we file this continuation with these claim limitations, how does that affect the probability that a Paragraph IV challenge succeeds, and by how much does that shift the LOE distribution?

The longer-term trajectory runs into the inventorship doctrine itself. If AI systems become primary generators of novel pharmaceutical compounds and therapeutic concepts, and if courts continue to require that human contributors meet the Pannu significant-contribution standard, the industry may face a growing gap between its actual innovation process and the legal framework for protecting its outputs. That gap will require either legal evolution — a new statutory category for AI-assisted inventions with modified inventorship requirements — or a strategic shift toward trade secret protection of discovery platforms rather than patent protection of their outputs. Both paths have material consequences for how pharmaceutical IP is valued and how pharmaceutical innovation is financed.

Key Takeaways: Part VII

A complete pharmaceutical AI patent intelligence capability requires integrated data infrastructure spanning patents, regulatory records, litigation data, and commercial data — not patent databases alone. The operational model is a continuous four-stage intelligence cycle, not project-based searches. The augmented IP professional’s value lies in judgment and interpretation, not document retrieval. The technology roadmap moves toward integrated dynamic modeling of IP strategy, prosecution, and litigation as a single analytical function.

Investment Strategy Note

For institutional investors assessing pharmaceutical companies’ IP functions as a component of competitive moat analysis, the relevant questions are: (1) Is the company running continuous AI-driven LOE forecasting and competitive monitoring, or ad-hoc point-in-time searches? (2) Does the company’s IP data infrastructure integrate patent, regulatory, and litigation data, or are those sources siloed? (3) Can the IP team produce probabilistic LOE scenarios with confidence intervals, or only nominal expiry dates? A company that can answer these questions affirmatively has a structurally stronger IP function than one that cannot, and that difference has a calculable impact on revenue predictability across the commercial lifecycle of its assets.