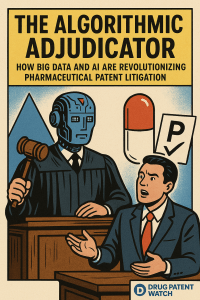

A deep-dive reference for pharma IP counsel, portfolio managers, R&D leads, and institutional investors who need to turn litigation data into competitive advantage.

Part 1: Why Pharmaceutical Patent Litigation Is a Balance Sheet Problem

The average capitalized cost to develop a new drug sits at approximately $2.6 billion, a figure that captures not just direct R&D expenditure but the cost of capital across a decade-long development arc that fails far more often than it succeeds. What secures that investment is not scientific achievement alone. It is market exclusivity, the temporary monopoly that a granted patent extends to an innovator, allowing the company to price the drug at a level sufficient to amortize development costs and generate capital for the next pipeline cycle. Strip that exclusivity away prematurely, and the economics of pharmaceutical innovation collapse.

That exclusivity is not a static asset. It exists under continuous challenge. Generic manufacturers, whose business model depends on launching at the moment of patent expiration, have a financial incentive to shorten or eliminate the exclusivity window as aggressively as the law allows. Biosimilar manufacturers face a structurally similar dynamic, though with higher technical barriers and a more complex regulatory overlay under the Biologics Price Competition and Innovation Act (BPCIA). The result is one of the highest-stakes legal environments in any industry: pharmaceutical patent litigation.

The financial exposure is concrete. In cases where more than $25 million is at risk, median litigation costs through trial and appeal reach $4 million to $5.5 million. Those are direct legal fees. The indirect costs (scientific personnel diverted to litigation support, delayed pipeline decisions, shareholder value erosion during prolonged uncertainty) rarely appear on a single line item but are often larger than the direct spend. A blockbuster drug generating $3 billion in annual U.S. net revenue might face a generic challenge that, if successful, wipes out $1.5 billion in revenue within the first year of generic entry. Against that exposure, a $5 million litigation budget is not a cost center. It is a modest insurance premium.

This is the economic logic that has made predictive legal analytics a genuine strategic priority rather than a technology experiment. When the financial stakes are this concentrated and the legal outcomes this variable, the ability to forecast probable outcomes with data-derived confidence translates directly into better resource allocation, earlier and more informed settlement decisions, and tighter coordination between IP counsel and C-suite leadership.

The table below frames the cost structure that drives demand for prediction tools.

Table 1: Direct Litigation Cost Benchmarks for Pharmaceutical Patent Cases

| Amount at Risk | Median Cost Through Discovery (USD) | Median Cost Through Trial & Appeal (USD) | Primary Indirect Cost Drivers |
|---|---|---|---|
| $1M – $10M | $600,000 | $1,500,000 | Personnel distraction; minor market uncertainty |
| $10M – $25M | $1,225,000 | $2,700,000 | Scientific and executive time diversion; stock volatility |
| More than $25M | $2,375,000 | $5,500,000+ | R&D capital reallocation; strategic planning delays; material shareholder value impact |

Source: AIPLA surveys. High-stakes pharma cases routinely exceed median figures due to expert witness costs, international parallel proceedings, and the technical complexity of biologic claims.

Key Takeaways: The Litigation-as-Finance Framework

Litigation is not a legal department function that happens to cost money. It is a financial event that reshapes the revenue forecast for a drug asset with a known patent expiry date. The organizations that treat it that way, building predictive models that inform both legal and financial decision-making simultaneously, gain material advantage over those that treat it as a cost to be minimized after the lawsuit is filed.

Part 2: The Legal Architecture — Patentability Standards, KSR, and the Obviousness Trap

Understanding where predictive analytics creates value requires understanding the legal standards that generate uncertainty in the first place. The validity of a pharmaceutical patent is not a fixed attribute; it is a legal conclusion that courts and the USPTO reach through processes with significant subjective components. Those subjective components are where models can offer the most actionable probabilistic forecasts.

Statutory Patentability: The Four Requirements

Under Title 35 of the U.S. Code, a patent requires the invention to satisfy four conditions. The first is statutory subject matter under 35 U.S.C. § 101, which requires that the invention be a process, machine, manufacture, or composition of matter. The Supreme Court’s decisions in Mayo Collaborative Services v. Prometheus Laboratories (2012) and Alice Corp. v. CLS Bank International (2014) substantially narrowed eligible subject matter by holding that claims directed to laws of nature, natural phenomena, or abstract ideas are ineligible unless they add an inventive concept. For pharma, Mayo created the most consequential constraint: method-of-treatment claims that essentially claim a natural correlation between a biomarker and a therapeutic response now face serious Section 101 vulnerability. Diagnostic method patents in personalized medicine are particularly exposed, and any predictive model applied to patent validity must encode this post-Mayo landscape.

The second requirement is utility under 35 U.S.C. § 101, which demands a specific, substantial, and credible practical use. In drug discovery, this requirement becomes relevant in the context of AI-generated compound candidates that have predicted but not yet demonstrated biological activity, a point discussed in detail in Part 9.

The third requirement is novelty under 35 U.S.C. § 102, the ‘newness’ standard. An anticipation challenge asserts that a single piece of prior art discloses every element of the claimed invention. Because anticipation requires a single-reference showing, it is a more binary standard than obviousness and often an easier one for a patent owner to defend against. Still, AI-powered semantic search tools have materially improved the odds of locating anticipatory prior art that keyword-dependent searches would have missed.
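A toy sketch makes the single-reference distinction concrete. It treats a claim as a set of elements and each prior-art reference as the set of elements it discloses. The element labels and reference names are hypothetical, and real element-by-element mapping is a legal judgment rather than a set lookup. Anticipation asks whether any one reference covers the whole claim; a combination of references that covers the claim is only the starting point of an obviousness analysis.

```python
from itertools import combinations


def anticipating_refs(claim: set, references: dict) -> list:
    """References that, on their own, disclose every claim element (anticipation)."""
    return [name for name, disclosed in references.items() if claim <= disclosed]


def covering_pairs(claim: set, references: dict) -> list:
    """Pairs of references whose combined disclosures cover every claim element.

    Coverage is only a starting point for obviousness: motivation to combine
    and predictability of the result still have to be argued.
    """
    return [
        (a, b)
        for a, b in combinations(sorted(references), 2)
        if claim <= (references[a] | references[b])
    ]


# Hypothetical claim elements and references, for illustration only.
claim = {"compound_core", "methyl_substituent", "tablet_form", "once_daily_dosing"}
references = {
    "RefA": {"compound_core", "methyl_substituent"},
    "RefB": {"compound_core", "tablet_form", "once_daily_dosing"},
    "RefC": {"compound_core", "tablet_form"},
}

print(anticipating_refs(claim, references))  # → []
print(covering_pairs(claim, references))     # → [('RefA', 'RefB')]
```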

Non-Obviousness Under 35 U.S.C. § 103: The Primary Battleground

The fourth requirement — non-obviousness — is where most pharmaceutical patent litigation is decided, and where the application of machine learning creates the greatest practical advantage. Under the Graham v. John Deere framework, courts consider four factual questions: the scope and content of the prior art, the differences between the prior art and the claims, the level of ordinary skill in the pertinent art, and objective indicia of non-obviousness such as commercial success, long-felt need, and the failure of others.

The Supreme Court’s 2007 decision in KSR International Co. v. Teleflex Inc. replaced the rigid ‘teaching-suggestion-motivation’ (TSM) test with a flexible, common-sense standard. Under KSR, if a combination of known elements produces only predictable results, a court may find it obvious even without an explicit suggestion to combine in the prior art. This flexible standard placed exactly the analytical task that machine learning excels at — identifying patterns of conceptual combination across massive corpora — into the hands of courts and examiners who must assess it qualitatively.

An AI model trained on the full body of chemical and pharmacological literature can construct an obviousness argument by demonstrating that the structural modification at issue is a predictable step in a well-documented optimization series, without requiring a single reference to state this explicitly. This is the KSR doctrine operationalized at computational scale. The same model can identify secondary consideration evidence that can rebut an obviousness case: commercial success data from IQVIA (formerly IMS Health), failure records from discontinued clinical programs, and long-felt-need evidence from published medical literature.
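One common way to quantify whether a structural modification is a small step from a published optimization series is fingerprint similarity. The sketch below computes the Tanimoto (Jaccard) coefficient over sets of substructure features. The feature labels are hypothetical placeholders for real molecular fingerprints, which would normally come from a cheminformatics toolkit, and the 0.7 cutoff is an illustrative assumption rather than any legal threshold.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_prior_art(claimed: set, prior_art: dict, threshold: float = 0.7):
    """Prior-art compounds at or above a hypothetical similarity cutoff, most similar first."""
    scored = [(name, tanimoto(claimed, feats)) for name, feats in prior_art.items()]
    hits = [(name, score) for name, score in scored if score >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical substructure features for a claimed compound and a published series.
claimed = {"f1", "f2", "f3", "f4", "f5", "f6", "f7"}
prior_art = {
    "series_cmpd_12": {"f1", "f2", "f3", "f4", "f5", "f6"},        # 6/7 shared
    "series_cmpd_15": {"f1", "f2", "f3", "f4", "f5", "f6", "f8"},  # 6/8 shared
    "unrelated_cmpd": {"f1", "f9", "f10"},                         # 1/9 shared
}

for name, score in nearest_prior_art(claimed, prior_art):
    print(f"{name}: {score:.3f}")
```

A high score flags candidate references for an obviousness argument; it does not by itself establish that a skilled chemist would have made the modification.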

Objective Indicia of Non-Obviousness: Where IP Valuation Intersects Litigation

Secondary considerations are not merely legal formalities. They are empirical facts that, when documented with commercial sales data and clinical outcomes data, can shift a court’s non-obviousness analysis. A drug generating $2 billion in annual revenue carries implicit evidence of commercial success. The nexus requirement — the legal demand that the commercial success be attributable to the claimed invention rather than to marketing — requires the patent owner to connect the revenue directly to the patented features.

This is where IP valuation methodology overlaps with litigation strategy. An IP valuation that explicitly attributes revenue to specific claim elements, conducted through a royalty-rate analysis or a discounted cash flow model tied to patent-protected features, can be structured to satisfy the nexus requirement and provide quantified commercial success evidence. Patent owners who commission IP valuations primarily for transaction or licensing purposes and who fail to structure those valuations to address the nexus requirement miss an opportunity to pre-build their obviousness defense.

Part 3: Hatch-Waxman and the Paragraph IV Battlefield

The Drug Price Competition and Patent Term Restoration Act of 1984, commonly known as the Hatch-Waxman Act, created the legal infrastructure within which nearly all small-molecule brand-versus-generic litigation occurs. Its architecture has three components relevant to litigation prediction: the Orange Book listing system, the Paragraph IV certification mechanism, and the 30-month stay.

The Orange Book Patent Listing Strategy

Brand manufacturers list patents in the FDA’s Orange Book that they contend would be infringed by a generic’s ANDA. The strategic objective is to maximize the number of Orange Book-listed patents, extending the runway of potential Paragraph IV challenges and increasing the cost and complexity for generic entrants. As of 2024, some blockbuster drugs carry Orange Book listings of 30 or more patents covering the compound itself, specific formulations, polymorphs, methods of use, dosing regimens, and drug-device combinations.

The IP valuation implications are direct. A densely listed Orange Book portfolio does not simply signal legal defensibility; it represents a calculable delay value. Each additional patent that a generic must challenge, either in ANDA litigation or through an IPR petition, extends the expected generic entry date. An analyst modeling the net present value of a brand franchise must include not just the earliest patent expiry date but the probability-weighted expected entry date across all potential challenge scenarios. A portfolio with layered formulation and method-of-use patents may deliver three to five years of effective exclusivity beyond the compound patent expiry date, depending on how courts rule and how many generics are willing to sustain multi-year litigation.
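The probability-weighted entry date can be sketched as an expectation over mutually exclusive challenge scenarios. The scenarios, probabilities, and dates below are hypothetical placeholders; in a real model each probability would come from the litigation analytics layer, and the scenario set would need to be exhaustive so that the probabilities sum to one.

```python
from datetime import date


def expected_entry_date(scenarios):
    """Probability-weighted expected generic entry date.

    scenarios: (probability, entry_date) pairs assumed to be mutually
    exclusive and exhaustive, so the probabilities must sum to 1.
    """
    total = sum(p for p, _ in scenarios)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"scenario probabilities sum to {total}, not 1")
    mean_ordinal = sum(p * d.toordinal() for p, d in scenarios)
    return date.fromordinal(round(mean_ordinal))


# Hypothetical franchise: compound patent expires 2030-06-30; later-expiring
# formulation and method-of-use patents may or may not survive challenge.
compound_expiry = date(2030, 6, 30)
scenarios = [
    (0.30, date(2030, 6, 30)),  # secondary patents invalidated or designed around
    (0.45, date(2033, 6, 30)),  # formulation patent upheld, method-of-use patent lost
    (0.25, date(2035, 6, 30)),  # full secondary layer upheld
]

entry = expected_entry_date(scenarios)
extra_years = (entry - compound_expiry).days / 365.25
print(entry, f"{extra_years:.1f} years beyond compound expiry")
```

With these inputs the expected entry date falls in early February 2033, about 2.6 years past the compound patent expiry. A single averaged date hides the spread of outcomes, so a revenue model would normally weight each scenario's cash flows directly rather than plug in one expected date.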

The Paragraph IV Certification Mechanics

When a generic manufacturer files an ANDA and certifies under Paragraph IV that a listed patent is invalid, unenforceable, or will not be infringed, the generic must send a detailed notice letter to the patent owner and the NDA holder. The patent owner then has 45 days from receipt of that notice to file suit. If suit is filed within that window, the FDA generally cannot approve the ANDA until 30 months after the notice was received, unless a court rules earlier that the patent is invalid or not infringed. This automatic 30-month stay is the single most valuable procedural tool available to brand companies and the primary reason why Paragraph IV notice letters trigger an immediate legal response.
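Both statutory clocks run from the date the patent owner receives the notice letter, so they are straightforward to compute. This is a simplified sketch: it treats the stay as ending on the same calendar day 30 months after receipt (clamped to the end of shorter months), which is an assumption about the counting convention, and it ignores court-ordered adjustments, early termination on a judgment of invalidity or non-infringement, and the longer stay that can apply when an ANDA is filed during new chemical entity exclusivity. The function names are illustrative.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def paragraph_iv_clocks(notice_received: date) -> dict:
    """Simplified Hatch-Waxman clocks keyed off receipt of the notice letter."""
    return {
        # Suit must be filed within 45 days of receipt to trigger the stay.
        "suit_deadline": notice_received + timedelta(days=45),
        # Nominal end of the 30-month stay on FDA approval of the ANDA.
        "stay_expiry": add_months(notice_received, 30),
    }


clocks = paragraph_iv_clocks(date(2024, 8, 31))
print(clocks["suit_deadline"], clocks["stay_expiry"])  # → 2024-10-15 2027-02-28
```

A notice received on 31 August 2024 yields a suit deadline of 15 October 2024 and a nominal stay expiry of 28 February 2027, with the clamp handling the missing 31st.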

In 2024, there were 312 ANDA complaints filed, up from 259 the prior year. The District of Delaware and the District of New Jersey together handle the majority of this docket. Nearly half of all 2024 ANDA complaints landed before five judges, creating a highly concentrated judicial environment where case outcome prediction based on individual judge behavioral analytics is not only feasible but practically valuable.

Settlement Dynamics and the 180-Day Exclusivity Period

The first applicant to submit a substantially complete ANDA containing a Paragraph IV certification earns 180 days of generic marketing exclusivity, during which the FDA cannot give final approval to later Paragraph IV applicants. This prize creates strong financial incentives for generic manufacturers to file early, even against patents they may not be fully confident in challenging. For brand companies, the settlement calculus must account for this exclusivity period: settling with the first filer and agreeing to an authorized generic arrangement may be more financially efficient than litigating to a judgment that, even if favorable, may be followed by immediate re-challenge from subsequent filers.
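The settlement calculus can be reduced, very roughly, to comparing expected years of branded exclusivity under each path. The sketch below uses hypothetical inputs and ignores discounting, litigation costs, authorized-generic revenue, the 180-day exclusivity itself, and at-risk launches; its only purpose is to show how a later filer's re-challenge erodes the value of winning.

```python
def expected_exclusivity_years(p_win, years_to_expiry, years_to_judgment,
                               p_later_filer_wins, years_to_later_judgment):
    """Expected years of branded exclusivity if the brand litigates to judgment.

    Win: exclusivity runs to expiry unless a later filer invalidates the patent.
    Loss: generic entry at judgment (at-risk launches are ignored).
    """
    years_if_win = ((1 - p_later_filer_wins) * years_to_expiry
                    + p_later_filer_wins * years_to_later_judgment)
    return p_win * years_if_win + (1 - p_win) * years_to_judgment


annual_revenue = 1.0e9  # hypothetical protected U.S. revenue per year
litigate = annual_revenue * expected_exclusivity_years(
    p_win=0.6, years_to_expiry=8.0, years_to_judgment=2.5,
    p_later_filer_wins=0.3, years_to_later_judgment=4.0,
)
settle = annual_revenue * 5.0  # hypothetical negotiated entry five years out
print(f"litigate ${litigate / 1e9:.2f}B vs settle ${settle / 1e9:.2f}B")
```

With these inputs litigation edges out settlement (about 5.08 versus 5.0 years of revenue), which is exactly the kind of near-tie where litigation cost, discounting, and authorized-generic economics decide the outcome.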

Predicting whether a given Paragraph IV challenge will settle, and under what terms, requires modeling the financial stakes for both sides, the strength of the patent claims based on prosecution history and prior art analysis, the litigation track record of both law firms, and the specific judge’s historical tendency to encourage or discourage early settlement. These are precisely the multi-variable inputs that a well-trained Random Forest or gradient boosting model handles more systematically than intuition alone.
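The modeling step can be illustrated with a deliberately small stand-in. The sketch below fits a logistic regression by batch gradient descent in plain Python rather than the Random Forest or gradient boosting models named above. The three features (each scaled to the range 0 to 1) and the eight training rows are invented and encode no empirical finding; a production model would use an established machine learning library, far more cases, and held-out validation.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(rows, labels, lr=0.5, epochs=5000):
    """Fit weights and a bias by batch gradient descent on mean log loss."""
    n, k = len(rows), len(rows[0])
    w, b = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for x, y in zip(rows, labels):
            # Prediction error for this case: p(settle) minus the observed label.
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) - y
            for j in range(k):
                grad_w[j] += err * x[j] / n
            grad_b += err / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(model, x):
    """Probability of settlement for one feature vector."""
    w, b = model
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Invented rows: [stakes, patent_strength, judge_settlement_rate], each scaled to 0..1.
# Label 1 = settled before judgment, 0 = litigated to judgment.
rows = [
    [0.9, 0.8, 0.9], [0.8, 0.6, 0.7], [0.7, 0.7, 0.8], [0.6, 0.6, 0.9],
    [0.2, 0.3, 0.2], [0.3, 0.4, 0.1], [0.1, 0.3, 0.3], [0.4, 0.2, 0.2],
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]

model = fit_logistic(rows, labels)
print(f"high-stakes case: {predict(model, [0.85, 0.75, 0.85]):.2f}")
print(f"low-stakes case:  {predict(model, [0.2, 0.3, 0.15]):.2f}")
```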

Key Takeaways: Hatch-Waxman as a Strategic System

The Hatch-Waxman framework is not a litigation mechanism to manage defensively. For brand companies, it is a product lifecycle management system. Each Orange Book patent listing, each 30-month stay triggered, and each settlement structured with a specific generic entry date is a calculated decision with a quantifiable impact on revenue. Portfolio managers who model these decisions explicitly, rather than treating litigation as a legal cost center, can more accurately forecast revenue duration for branded drugs approaching patent expiry.

Part 4: PTAB and Inter Partes Review — Institution Rates, Funnel Economics, and Portfolio Implications

The America Invents Act of 2011 created Inter Partes Review, a proceeding at the Patent Trial and Appeal Board that allows a third party to challenge patent validity on grounds of novelty and obviousness based on prior art patents and printed publications. The stated rationale was efficiency: a faster, less expensive tribunal to weed out patents that should not have been granted. The practical effect has been to create a parallel front in patent disputes that is tactically distinct from district court litigation.

The IPR Funnel: Three Decision Points, Three Probability Estimates

An IPR proceeds through a gate structure with distinct probability distributions at each stage. Understanding this funnel is the prerequisite for any predictive model applied to PTAB proceedings.

The first decision point is the PTAB’s institution decision, which the Board must issue within three months of the patent owner’s preliminary response (or of the deadline for filing one). The Board will institute a trial only if the petitioner demonstrates a reasonable likelihood of prevailing on at least one challenged claim. For biopharma patents, the institution rate runs at approximately 73%, above the all-technology average of 67 to 69%. One plausible contributor to this higher rate is the growing use of semantic prior art search tools that surface previously unidentified references, although the statistics alone do not establish that cause.

The second decision point is the final written decision, which must issue within 12 months of institution. Biopharma patents that reach a final written decision have a higher claim survival rate than patents in software or electronics. The institution-to-invalidation rate for pharma claims runs approximately 66%, versus 68 to 87% for all technologies combined. Once a trial is instituted, a pharma patent is therefore somewhat more likely to emerge with at least some claims intact than the all-sector average would suggest.

The third decision point — often overlooked — is the Federal Circuit appeal. PTAB final written decisions can be appealed, and the Federal Circuit reviews claim construction de novo while reviewing factual findings for substantial evidence. The appellate reversal rate is low but non-trivial, and any complete IPR outcome model must account for this tail risk.

Table 2: IPR Funnel Statistics for Biopharma vs. All Technologies (FY2024)

| Stage | Biopharma | All Technologies | Strategic Implication |
|---|---|---|---|
| Petitions as % of Total | ~6-7% | ~93-94% (all other technologies) | Biopharma is a concentrated but intensely contested subset of the PTAB docket |
| Institution Rate | ~73% | ~67-69% | PTAB is more likely to grant a trial on a biopharma patent; the institution hurdle is effectively lower |
| Post-Institution Claim Invalidation Rate | ~66% | ~68-87% | Once a trial is instituted, biopharma claims are invalidated at a somewhat lower rate than the all-technology range |

Data: PTAB Statistical Reports, FY2024. Rates vary by fiscal year and technology classification methodology.

The IPR IP Valuation Discount: Modeling the Probability of Invalidation

Every biopharma patent under active IPR challenge should carry an explicit validity discount in any IP valuation. The appropriate discount reflects not the binary outcome of a single proceeding but the probability-weighted expected value across the full funnel. If a given IPR petition has a 73% chance of institution and, given institution, a 66% chance of at least partial invalidation, the compound probability of at least partial invalidation is 0.73 × 0.66 ≈ 48%. Treating any invalidation as a loss of commercially relevant claims, the probability that the patent survives the proceeding with those claims intact is therefore approximately 52%: 27% from denial of institution plus roughly 25% (0.73 × 0.34) from surviving trial. A drug franchise whose revenue depends entirely on a single composition-of-matter patent facing an IPR petition is not a 12-month risk item; it is an asset that carries a material probability of near-term revenue loss that should be reflected in the valuation model.
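Because the funnel arithmetic is easy to get wrong, it helps to write the probability tree down. The sketch below uses the 73% institution and 66% post-institution invalidation figures as defaults and lets the estimate be updated once the institution decision is known. The appellate reversal parameter is an assumption that defaults to zero because no appellate rate is given here, and any invalidation is treated as a loss of the commercially relevant claims.

```python
def ipr_survival_probability(p_institution=0.73, p_invalid_given_inst=0.66,
                             p_reversal_on_appeal=0.0, instituted=None):
    """Probability that the challenged claims survive this IPR.

    instituted=None  -> estimate before the institution decision
    instituted=True  -> trial has been instituted
    instituted=False -> institution denied (survival in this proceeding only)
    p_reversal_on_appeal is an assumed chance that the Federal Circuit
    reverses an invalidation.
    """
    p_survive_trial = 1 - p_invalid_given_inst * (1 - p_reversal_on_appeal)
    if instituted is False:
        return 1.0
    if instituted is True:
        return p_survive_trial
    return (1 - p_institution) + p_institution * p_survive_trial


before = ipr_survival_probability()
after_institution = ipr_survival_probability(instituted=True)
print(round(before, 3), round(after_institution, 3))  # → 0.518 0.34
```

Before institution the survival estimate is about 52%; once trial is instituted it drops to 34%, which is the kind of stage-by-stage update described in the next paragraph.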

Portfolio managers at pharmaceutical companies should apply this probabilistic discount continuously, updating it as the IPR progresses through institution, patent owner response, oral argument, and final written decision. This is not speculative; it is the same probability-tree methodology applied to clinical trial assets at Phase 2 and Phase 3.

Strategic Use of IPR Petitions in Biosimilar Disputes

For biosimilar manufacturers, IPR petitions serve a distinct function from their role in ANDA litigation. The BPCIA ‘patent dance’ contemplates that biosimilar applicants share information with the reference product sponsor about the biosimilar’s manufacturing process and then negotiate which patents will be litigated, although the Supreme Court held in Sandoz Inc. v. Amgen Inc. (2017) that this disclosure obligation cannot be enforced by federal injunction, which makes participation effectively optional. The IPR runs outside that exchange: a biosimilar applicant can file an IPR petition against a biologic patent independently of the dance, potentially clearing a freedom-to-operate concern more quickly and less expensively than district court litigation. Conversely, reference product sponsors can use the threat of IPR to complicate the biosimilar applicant’s litigation planning.

This tactical complexity means that any predictive model applied to biologic patent disputes must encode both the BPCIA patent dance structure and the IPR funnel as interdependent decision trees, not separate processes.

Key Takeaways: IPR as a Financial Instrument

When a generic or biosimilar company files an IPR petition, it is not simply initiating a legal proceeding. It is buying a put option on a patent’s value. When a brand company defends against one, it is defending an asset whose revenue contribution is quantifiable. Both sides benefit from probabilistic outcome modeling that moves the decision beyond experienced-attorney intuition to data-derived confidence intervals.

Part 5: Building the Predictive Data Foundation

The most architecturally sophisticated machine learning model produces unreliable outputs if its training data is thin, biased, or poorly integrated. In pharmaceutical patent analytics, the data problem is not a lack of information — it is the fragmentation of relevant information across incompatible public and private systems. The organizations that solve the data integration problem first own a structural competitive advantage that is harder to replicate than any particular algorithm.

USPTO Open Data: PTAB API, Office Action Data, and Assignment Records

The USPTO’s Open Data Portal provides programmatic access to the full corpus of U.S. patent records through a suite of APIs. The PTAB API contains every IPR petition, institution decision, patent owner response, oral argument transcript, and final written decision. The Office Action API contains the full text of patent examiner rejections and applicant responses during prosecution, giving direct access to the prosecution history that often determines the scope of claims in litigation and the extent to which file wrapper (prosecution history) estoppel limits the patent owner’s infringement arguments.

For predictive modeling, prosecution history data is among the most predictive raw inputs available. A patent that overcame a prior art rejection by making a narrow argument — ‘our compound is distinguished from the prior art because it has property X’ — has constrained its claims in a way that can be exploited in litigation. The legal doctrine of prosecution history estoppel bars the patent owner from recapturing scope that was surrendered to overcome a prior art rejection. An AI model that can read prosecution histories at scale and identify these estoppel-generating arguments has a capability that no law firm associate reviewing manually can match at the same speed.
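A first-pass screen for estoppel-generating statements can be sketched with pattern matching over the applicant’s remarks. The phrase list below is a hypothetical heuristic rather than a validated rule set, and the remarks text is invented; a production system would more likely use a trained NLP classifier and would still route every flagged statement to an attorney for review.

```python
import re

# Hypothetical phrase patterns that often accompany narrowing arguments.
NARROWING_PATTERNS = [
    r"\bdistinguished from\b",
    r"\bunlike the (?:cited|prior art)\b",
    r"\bamended to (?:recite|require)\b",
    r"\bdoes not (?:teach|disclose|suggest)\b",
]
_NARROWING_RE = re.compile("|".join(NARROWING_PATTERNS), re.IGNORECASE)


def flag_narrowing_statements(remarks: str) -> list:
    """Return sentences from applicant remarks that match a narrowing pattern."""
    sentences = re.split(r"(?<=[.;])\s+", remarks.strip())
    return [s for s in sentences if _NARROWING_RE.search(s)]


# Invented applicant remarks; "Smith" is a hypothetical cited reference.
remarks = (
    "Claim 1 has been amended to recite crystalline Form B. "
    "The claimed compound is distinguished from Smith because it has a melting point above 200 C. "
    "Applicant thanks the Examiner for the interview."
)
for hit in flag_narrowing_statements(remarks):
    print(hit)
```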

The USPTO Patent Assignment API tracks ownership transfers, which is essential for understanding acquisition strategies, secondary market transactions in patent assets, and the identity of the actual economic beneficiary of a patent, which can differ from the named assignee in litigation contexts where patents have been sold to non-practicing entities.

PatentsView: The Disambiguation Layer

PatentsView, a USPTO-supported database, provides a cleaned and entity-disambiguated version of patent metadata. Raw USPTO data suffers from severe entity inconsistency: the same assignee appears under dozens of variant names across different filings, and the same inventor appears with name variations, middle initials, and address changes that make longitudinal tracking difficult. PatentsView resolves these inconsistencies, enabling clean tracking of a company’s full patenting activity, citation network, and examiner-specific approval rates.

Citation data is a particularly rich feature for predictive models. Forward citation count — how many later patents cite a given patent — correlates with the patent’s perceived importance in its technology field and has been shown to correlate with litigation probability. Backward citation density and the technical diversity of cited references can signal the breadth of the patent’s claimed innovation. These citation-derived features often rank among the top predictors in Random Forest models of IPR petition outcomes.
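Citation features are simple to derive once citations are available as (citing, cited) pairs, a form in which citation data is commonly distributed. The sketch below computes forward and backward counts plus a crude diversity measure: the number of distinct technology classes among a patent’s cited references. The patent IDs, edges, and CPC class assignments are hypothetical.

```python
from collections import Counter


def citation_counts(citations):
    """Forward and backward citation counts from (citing, cited) pairs."""
    forward = Counter(cited for _, cited in citations)    # times each patent is cited
    backward = Counter(citing for citing, _ in citations)  # references each patent cites
    patents = sorted(set(forward) | set(backward))
    return {p: {"forward": forward[p], "backward": backward[p]} for p in patents}


def backward_class_diversity(citations, cpc_class):
    """Number of distinct technology classes among each patent's cited references."""
    classes = {}
    for citing, cited in citations:
        classes.setdefault(citing, set()).add(cpc_class.get(cited, "unknown"))
    return {p: len(c) for p, c in classes.items()}


# Hypothetical citation edges and class assignments for made-up patent IDs.
edges = [("P4", "P1"), ("P5", "P1"), ("P6", "P1"), ("P5", "P2"), ("P6", "P4")]
cpc = {"P1": "A61K", "P2": "C07D", "P4": "A61K"}

print(citation_counts(edges)["P1"])          # → {'forward': 3, 'backward': 0}
print(backward_class_diversity(edges, cpc))  # → {'P4': 1, 'P5': 2, 'P6': 1}
```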

PACER: The Litigation History Repository

Public Access to Court Electronic Records (PACER) contains more than one billion documents from every federal court proceeding. For patent litigation analytics, PACER is the primary source of ground truth on case outcomes. But PACER was built as a document retrieval service for individual practitioners, not as a queryable database for machine learning. Extracting it at scale requires either building a web-scraping infrastructure capable of navigating its per-page fee model and inconsistent formatting, or relying on third-party aggregators like the CourtListener RECAP Archive, which offers PACER documents free of charge but holds only those that RECAP users have already purchased and contributed, so its coverage is partial.

The key structured data elements from PACER for a litigation prediction model include the parties and their counsel, the assigned judge, filing and termination dates, nature of suit codes, and the full docket entry log. The key unstructured data elements — the documents themselves — require NLP processing to extract argument types, claim construction positions, expert witness identities, and judicial reasoning. Judge-level analytics are among the most consequential features. A model that captures the historical rate at which a specific judge grants summary judgment on obviousness grounds, or the average time from Markman hearing to final judgment in ANDA cases, has direct predictive value that practitioners experience intuitively but rarely quantify.
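Judge-level features of the kind described above reduce to grouped statistics once docket entries have been parsed into structured records. The records below are invented, and the field names are hypothetical stand-ins for whatever the PACER extraction pipeline produces.

```python
from collections import defaultdict
from datetime import date
from statistics import median


def judge_profiles(records):
    """Per-judge grant rate on summary judgment of obviousness and median
    days from Markman hearing to final judgment."""
    motions, grants, gaps = defaultdict(int), defaultdict(int), defaultdict(list)
    for r in records:
        judge = r["judge"]
        if r["sj_obviousness_motion"]:
            motions[judge] += 1
            grants[judge] += int(r["sj_granted"])
        if r["markman"] and r["final_judgment"]:
            gaps[judge].append((r["final_judgment"] - r["markman"]).days)
    return {
        judge: {
            "sj_grant_rate": grants[judge] / motions[judge] if motions[judge] else None,
            "median_markman_to_judgment_days": median(gaps[judge]) if gaps[judge] else None,
        }
        for judge in sorted(set(motions) | set(gaps))
    }


# Invented records standing in for parsed PACER dockets.
cases = [
    {"judge": "Judge A", "sj_obviousness_motion": True, "sj_granted": True,
     "markman": date(2021, 3, 1), "final_judgment": date(2022, 9, 1)},
    {"judge": "Judge A", "sj_obviousness_motion": True, "sj_granted": False,
     "markman": date(2021, 6, 1), "final_judgment": date(2022, 6, 1)},
    {"judge": "Judge B", "sj_obviousness_motion": True, "sj_granted": False,
     "markman": date(2020, 1, 15), "final_judgment": date(2021, 1, 15)},
    {"judge": "Judge B", "sj_obviousness_motion": False, "sj_granted": False,
     "markman": None, "final_judgment": date(2021, 5, 1)},
]

for judge, profile in judge_profiles(cases).items():
    print(judge, profile)
```

With only a handful of cases per judge these rates are noisy; a common remedy is to shrink each judge’s rate toward the court-wide average before using it as a model feature.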

Commercial Intelligence: DrugPatentWatch and IQVIA Integration

Public data provides the legal and procedural skeleton of a predictive model. Commercial intelligence provides the economic context that makes predictions actionable. DrugPatentWatch covers patents in 134 countries, integrates Orange Book listings with litigation history, and in some cases provides access to confidential royalty and settlement terms, offering a data layer on the true economics of past disputes that is simply not available from public records alone. Its coverage of anticipated generic entry dates, biosimilar approvals, and clinical trial status connects patent events to the revenue timeline of a drug franchise in a way that allows a model to estimate the financial stakes of a given dispute with specificity rather than approximation.

IQVIA (formerly IMS Health) revenue and prescription data, when integrated with patent filing and expiry data, allows an analyst to calculate the annual revenue that a given patent or set of patents is protecting at any point in time. This ‘amount at risk’ figure is itself a powerful input to a litigation prediction model: brand companies defend patents more aggressively when the protected revenue is larger, which affects settlement probability, litigation duration, and the likelihood of an IPR petition being filed versus a district court suit being allowed to proceed.

Data Wrangling: The Real Cost of Predictive Analytics

The internal build cost for a high-quality pharmaceutical patent litigation prediction system is routinely underestimated because the visible costs — model development, compute, licensing — are smaller than the invisible ones: entity resolution, data linking, feature engineering, and bias auditing.

Entity resolution alone is a substantial engineering project. ‘Pfizer Inc.,’ ‘Pfizer Pharmaceuticals LLC,’ and ‘Wyeth LLC’ are legally distinct entities that all appear as Pfizer-affiliated patent owners in different records depending on the vintage and type of the filing. Linking each of these to the consolidated Pfizer corporate entity is not a lookup exercise. It requires trained classifiers applied to company name variations, address histories, and corporate hierarchy data from commercial sources like Dun & Bradstreet or Bureau van Dijk.
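A minimal rule-based sketch shows why entity resolution cannot be a pure string exercise. Normalizing case, punctuation, and corporate suffixes collapses ‘Pfizer Inc.’ and ‘Pfizer Pharmaceuticals LLC’ to the same key, but ‘Wyeth LLC’ can only be linked through a corporate-hierarchy table. The table here is a hand-written illustration (reflecting Pfizer’s 2009 acquisition of Wyeth), and the suffix list is an assumption; in practice the hierarchy would come from a commercial source and the matching would be done by the trained classifiers described above.

```python
import re

# Hand-written illustration of a corporate-hierarchy table keyed by normalized
# name; a real system would load this from a commercial ownership database.
PARENT_OF = {
    "pfizer": "Pfizer Inc.",
    "wyeth": "Pfizer Inc.",  # Wyeth was acquired by Pfizer in 2009
}

# Assumed list of corporate suffixes to strip before matching.
_SUFFIXES = re.compile(
    r"\b(?:inc|llc|ltd|corp|corporation|co|company|pharmaceuticals?)\b"
)


def normalize_name(raw: str) -> str:
    """Lowercase, drop punctuation, strip common corporate suffixes."""
    name = re.sub(r"[^\w\s]", " ", raw.lower())
    name = _SUFFIXES.sub(" ", name)
    return " ".join(name.split())


def resolve_parent(raw: str):
    """Return the ultimate parent for a raw assignee string, or None if unknown."""
    return PARENT_OF.get(normalize_name(raw))


for raw in ["Pfizer Inc.", "Pfizer Pharmaceuticals LLC", "Wyeth LLC", "Acme Corp."]:
    print(f"{raw!r:32} -> {resolve_parent(raw)}")
```

Names that resolve to None are exactly the cases that would be routed to a trained classifier or a human reviewer.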

Data linking — connecting a PACER case record to the specific USPTO patent numbers at issue — requires parsing the complaint text, matching cited patent numbers to the USPTO database, and handling cases where the same patent is involved in multiple parallel proceedings simultaneously. This link is the bridge that allows a model to correlate patent characteristics with litigation outcomes. Without it, the model is blind to the very features that are most predictive.
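The first step of that linking can be sketched as pattern extraction over complaint text followed by a lookup against a table of known patent numbers. The regular expressions below are assumptions that handle only comma-formatted utility patent numbers following ‘Patent No.’ or ‘Patent Nos.’; reissue and design patents and other citation formats would need additional rules. The complaint text, case ID, and patent numbers are hypothetical.

```python
import re

# Assumed citation formats: "Patent No. 7,654,321" or "Patent Nos. 9,876,543 and 8,765,432".
_CLAUSE_RE = re.compile(r"Patent\s+Nos?\.\s*([^.;:()]+)", re.IGNORECASE)
_NUMBER_RE = re.compile(r"\b\d{1,2},\d{3},\d{3}\b")


def extract_patent_numbers(complaint_text: str) -> list:
    """Pull comma-formatted U.S. patent numbers that follow 'Patent No(s).' phrases."""
    found = []
    for clause in _CLAUSE_RE.findall(complaint_text):
        for num in _NUMBER_RE.findall(clause):
            normalized = num.replace(",", "")
            if normalized not in found:
                found.append(normalized)
    return found


def link_case(case_id: str, complaint_text: str, uspto_numbers: set) -> dict:
    """Split extracted numbers into those matched to a patent table and those not."""
    extracted = extract_patent_numbers(complaint_text)
    return {
        "case_id": case_id,
        "matched": [n for n in extracted if n in uspto_numbers],
        "unmatched": [n for n in extracted if n not in uspto_numbers],
    }


complaint = (
    "Plaintiff owns U.S. Patent Nos. 9,876,543 and 8,765,432. "
    "Defendant's ANDA also infringes U.S. Patent No. 7,654,321; damages exceed $1,500,000."
)
known = {"9876543", "7654321"}  # hypothetical slice of a USPTO patent table
print(link_case("CASE-0001", complaint, known))
```

Restricting extraction to the clause after ‘Patent No.’ keeps dollar amounts such as $1,500,000 out of the results; unmatched numbers are a useful queue for manual review.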

Selection bias deserves explicit attention. PACER contains only disputes that escalated to federal court filings. The much larger universe of challenges that settled before litigation, or that were resolved by license, does not appear in PACER and cannot easily be reconstructed. A model trained exclusively on litigated cases will likely overweight features that predict aggressive litigation behavior, and may produce poorly calibrated forecasts for disputes where early settlement is the more probable outcome. This bias is not a reason to abandon the modeling exercise; it is a reason to interpret model outputs with explicit awareness of what the training data does and does not represent.

Key Takeaways: Data Is the Defensible Asset

The algorithm in a predictive legal analytics system is not the scarce resource. Open-source machine learning libraries provide state-of-the-art implementations of every model class discussed in this guide. The scarce resource is the integrated, high-quality, entity-resolved, bias-audited dataset that feeds the model. Organizations that invest in building this dataset own a competitive asset that compounds over time as new cases, decisions, and commercial data are ingested. Organizations that do not must either replicate that investment later or accept inferior inputs.

Part 6: IP Valuation as a Core Litigation Asset

Patent validity disputes and IP valuation are typically treated as separate disciplines inside pharmaceutical companies, with litigation managed by legal and valuation managed by business development or finance. This separation is strategically costly. The methodologies and data that support a rigorous IP valuation are often directly relevant to the litigation strategy for the same patents, and the litigation outcomes in turn determine whether the valuation assumptions are defensible.

Three Valuation Methodologies and Their Litigation Relevance

The income approach values a patent by discounting the cash flows it is projected to generate, adjusted for the probability that it will survive a validity challenge. The key inputs — revenue forecasts tied to the exclusivity period, risk-adjusted discount rates, and probability of success factors at each litigation stage — are exactly the same variables that a litigation prediction model must estimate. A pharmaceutical company that maintains a continuously updated IP valuation model using probability-of-success inputs from its litigation analytics platform has a unified system for both legal strategy and financial reporting.
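The income approach with litigation risk can be sketched as a discounted cash flow in which each year’s cash flow is weighted by the cumulative probability that the patent is still enforceable in that year. The cash flows, discount rate, and survival curve below are hypothetical; in the unified system described above, the survival curve would be supplied by the litigation analytics platform.

```python
def risk_adjusted_npv(cash_flows, discount_rate, survival):
    """Sum of cash flows discounted to today and weighted by patent survival.

    cash_flows[t] and survival[t] refer to year t + 1; survival[t] is the
    cumulative probability that the patent is still enforceable in that year.
    """
    return sum(
        cf * s / (1 + discount_rate) ** (t + 1)
        for t, (cf, s) in enumerate(zip(cash_flows, survival))
    )


# Hypothetical inputs, in $ millions.
cash_flows = [500.0, 500.0, 500.0]
survival = [1.0, 0.5, 0.5]  # e.g., a validity ruling expected after year one
rate = 0.10

print(round(risk_adjusted_npv(cash_flows, rate, survival), 1))   # → 849.0
print(round(risk_adjusted_npv(cash_flows, rate, [1.0] * 3), 1))  # → 1243.4
```

The gap between the two figures (about $394 million here) is the validity discount that a valuation ignoring litigation risk would silently omit.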

The market approach values a patent by reference to comparable transactions in the secondary patent market. Reliable comparables require access to actual transaction prices, royalty rates from license agreements, and settlement terms from patent disputes — data that is largely confidential and accessible only through commercial intelligence sources like DrugPatentWatch, patent brokerage firms, or specialized licensing databases. The value of this data for both valuation and litigation purposes makes the subscription cost of these services a rounding error against the decisions they inform.

The cost approach values a patent based on the cost to recreate the underlying innovation. For pharmaceutical patents, this is rarely the primary valuation method because the cost to rediscover a specific compound or formulation is highly uncertain, but it provides a floor value that can be relevant in bankruptcy proceedings or in licensing disputes where the minimum value of a patent portfolio is at issue.

Evergreening: Technology Roadmaps for Secondary Patent Prosecution

Evergreening refers to the practice of filing successive secondary patents to extend effective market exclusivity beyond the expiry of the original composition-of-matter patent. The term is sometimes used pejoratively, but the underlying practice — filing patents on genuinely novel formulation improvements, polymorph forms, dosing regimens, and combination therapies — is a standard and legally permissible lifecycle management strategy.

A comprehensive evergreening technology roadmap for a small-molecule drug typically includes four layers of patent prosecution. The base layer is the composition-of-matter patent, which covers the active pharmaceutical ingredient (API) itself. The second layer covers specific crystalline or amorphous polymorphic forms, which can have different solubility, stability, or bioavailability characteristics that are patentably distinct from the original composition. The third layer covers formulation patents — extended-release dosing systems, modified-release pellets, specific salt forms, or drug-device combinations like autoinjectors or prefilled syringes. The fourth layer covers method-of-use patents for new indications discovered after the original compound patent filing.

Each layer has a different vulnerability profile in litigation. Composition-of-matter patents are the hardest to design around but are increasingly challenged under obviousness theories that point to prior art compound series. Polymorph patents face consistent invalidity challenges. These argue either that a crystalline form is the obvious product of routine polymorph screening of the parent compound, or that a prior art process inherently produced it. Formulation patents are vulnerable to prior art showing similar controlled-release technologies applied to other drugs. Method-of-use patents are increasingly exposed to Section 101 challenges when the claimed method relies on a biomarker-treatment correlation.

A predictive model that maps each layer of a drug’s patent portfolio to its specific vulnerability type and litigation probability, weighted by the revenue protected by each layer, gives an IP team a granular view of where the portfolio is strong, where it is exposed, and where additional prosecution investment would generate the greatest expected value in terms of extended exclusivity.
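Here is a minimal sketch of such a layer map. It assumes each layer can be summarized by three numbers: a challenge probability, a conditional loss probability, and the annual revenue attributed to that layer. The `PatentLayer` class is hypothetical and all figures are invented. The model also ignores overlap between layers, although in practice the same revenue is often protected by several layers at once.

```python
from dataclasses import dataclass


@dataclass
class PatentLayer:
    """One layer of a drug's patent estate (hypothetical structure, invented figures)."""
    name: str
    vulnerability: str           # dominant invalidity theory for this layer
    p_challenge: float           # probability the layer is challenged at all
    p_loss_if_challenged: float  # probability the challenge succeeds
    protected_revenue: float     # annual revenue ($M) attributed to this layer

    @property
    def expected_loss(self) -> float:
        # Expected annual revenue lost = P(challenge) * P(loss | challenge) * revenue
        return self.p_challenge * self.p_loss_if_challenged * self.protected_revenue


def rank_exposure(layers):
    """Sort layers from largest to smallest expected revenue exposure."""
    return sorted(layers, key=lambda layer: layer.expected_loss, reverse=True)


layers = [
    PatentLayer("composition of matter", "obviousness over compound series", 0.6, 0.3, 2000.0),
    PatentLayer("polymorph", "obviousness of routine screening", 0.8, 0.5, 400.0),
    PatentLayer("formulation", "prior controlled-release art", 0.7, 0.4, 600.0),
    PatentLayer("method of use", "Section 101 / obviousness", 0.5, 0.35, 300.0),
]

for layer in rank_exposure(layers):
    print(f"{layer.name:25s} {layer.vulnerability:35s} ${layer.expected_loss:,.0f}M")
```

The ranking supports the prosecution question in the text. The layer at the top of the list is the one where added claims or continuation filings would protect the most expected revenue.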

For biologics, the evergreening roadmap is structurally different. The reference product sponsor's 12-year exclusivity period under the BPCIA provides a regulatory moat that does not depend on patent coverage in the same way. But biologic patent portfolios still cover manufacturing processes, specific formulation conditions, dosing regimens, and device components, and these patents are the subject of the BPCIA patent dance and associated IPR petitions. The prediction problem for biologic patents is whether specific manufacturing process patents will survive an IPR petition filed by a biosimilar applicant. Through the patent dance exchange, that applicant has already learned which patents the sponsor intends to assert and the sponsor's contentions on infringement and validity.

Key Takeaways: Valuation and Litigation Are the Same Analytical Problem

Any pharmaceutical IP team that runs IP valuation and litigation strategy as separate functions is running two overlapping models with inconsistent inputs and generating outputs that cannot be reconciled when a deal is being priced or a settlement is being negotiated. The unified approach — a single probabilistic model of patent survival that feeds both the financial forecast and the legal strategy — is more accurate and more actionable.

Part 7: The AI and NLP Toolkit — Semantic Prior Art Search, Predictive Models, and Network Analysis

The analytical toolkit for pharmaceutical patent litigation prediction has matured significantly in the past five years. The primary categories — NLP-based text analysis, classical machine learning models, deep learning, and network analysis — each address a distinct part of the problem.

Natural Language Processing: Semantic Search and Prosecution History Mining

Keyword-based prior art search is inadequate for pharmaceutical patent invalidity analysis for a structural reason: the same chemical concept can be described using different naming conventions, structural representations, biological effect descriptors, or mechanism-of-action terminology depending on the year, the country, and the disciplinary background of the author. An NLP-powered semantic search engine represents documents as high-dimensional vectors that encode meaning rather than exact terms, allowing it to surface conceptually similar documents that share no keywords with the query.
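The core ranking step can be sketched with cosine similarity over precomputed embeddings. The four-dimensional vectors and document IDs below are invented placeholders. A production system would obtain embeddings from a trained text or chemical-structure encoder and would query an approximate nearest-neighbor index rather than scanning every document.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def semantic_search(query_vec, corpus, top_k=3):
    """Rank documents by cosine similarity between their embedding and the query's."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in corpus.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


# Invented toy embeddings; real encoders produce hundreds of dimensions.
corpus = {
    "DE-1984-pharmacology-paper": [0.9, 0.1, 0.3, 0.0],
    "US-1992-formulation-patent": [0.1, 0.8, 0.0, 0.4],
    "JP-1988-compound-series": [0.8, 0.2, 0.4, 0.1],
}
query = [0.85, 0.15, 0.35, 0.05]  # hypothetical embedding of the challenged claim

for doc_id, score in semantic_search(query, corpus, top_k=2):
    print(f"{doc_id}: {score:.3f}")
```

The sketch uses cosine similarity rather than a raw dot product, so vector magnitude does not affect the ranking. Only the direction of each vector, which stands in for its meaning, matters.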

In a Paragraph IV invalidity case, this capability matters in three situations: when the prior art predates standardized nomenclature, when it appears in non-English language publications, or when it describes a structurally analogous compound series using different substituent notation. Consider a semantic search that surfaces two papers from the German pharmacology literature of the 1980s which together describe a compound series from which the branded drug's structure is an obvious modification. That is not a theoretical edge case. It is a realistic result when the search is conducted at adequate scale and semantic depth.

NLP applied to prosecution history mining is equally powerful. The file wrapper of a patent is the complete exchange between the applicant and the examiner during prosecution. It contains every rejection, every argument made to overcome it, and every claim amendment. Under the doctrines of prosecution history estoppel and prosecution disclaimer, statements and amendments made during prosecution can narrow a patent owner's claim scope and bar equivalents, even where the claim language itself reads more broadly. A model that reads thousands of prosecution histories can learn the argumentative patterns that generate estoppel or disclaimer. It can then flag these limitations automatically, feeding the claim construction and non-infringement positions that accompany an invalidity challenge.
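Below is a deliberately simple baseline for the flagging step, assuming plain-text office-action responses. The regular expressions are hypothetical examples of argument language, not a validated list. A trained model of the kind described above would learn such patterns from labeled file wrappers instead of hard-coding them.

```python
import re

# Hypothetical phrase patterns that often accompany argument-based disclaimer
# in office-action responses; illustrative only, not a validated lexicon.
DISCLAIMER_PATTERNS = [
    r"\bapplicants? (?:respectfully )?submits? that\b.*\bdoes not (?:teach|disclose)\b",
    r"\bunlike (?:the )?(?:cited )?reference\b",
    r"\bthe (?:present|claimed) invention (?:requires|is limited to)\b",
    r"\bexpressly excludes?\b",
]
COMPILED = [re.compile(p, re.IGNORECASE) for p in DISCLAIMER_PATTERNS]


def flag_disclaimer_candidates(file_wrapper_text):
    """Split a prosecution history into sentences and return those matching any pattern."""
    sentences = re.split(r"(?<=[.!?])\s+", file_wrapper_text)
    return [s for s in sentences if any(p.search(s) for p in COMPILED)]


wrapper = (
    "Claims 1-5 stand rejected. Applicant respectfully submits that Smith does not "
    "teach a crystalline Form B. Unlike the cited reference, the claimed invention is "
    "limited to a once-daily dose. The remaining objections are moot."
)
for sentence in flag_disclaimer_candidates(wrapper):
    print("FLAG:", sentence)
```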

Claim construction analysis is a third NLP application with direct litigation relevance. At a Markman hearing, a district court judge must interpret the meaning of disputed claim terms. Courts rely on the intrinsic record — the patent itself, the prosecution history, and related patents in the same family — to construe claims. A model trained on thousands of prior Markman decisions from a specific court can identify the interpretive tendencies of a given judge, including whether that judge tends to favor the plain-and-ordinary meaning of a term or whether the judge gives substantial weight to prosecution history limitations. This predictive claim construction analysis changes the settlement calculus: if a favorable or unfavorable Markman outcome becomes predictable with meaningful confidence before the hearing, both parties can negotiate from a more informed position.

Machine Learning Models for Outcome Prediction

Random Forest models are the workhorses of pharma patent litigation prediction for practical reasons. They handle the high-dimensional feature space of legal data without catastrophic overfitting, they rank features by predictive importance in a way that produces interpretable outputs, and they perform reliably on datasets where the number of features is large relative to the number of training cases — a common condition in specialized legal analytics where only a few thousand IPR proceedings with consistent data are available for model training.

In a Random Forest applied to IPR institution decisions, the most predictive features typically include the technology area of the challenged patent (small molecule versus biologic versus device), the identity and historical track record of the three-judge panel, the number and type of prior art references cited in the petition, the presence or absence of a claim construction dispute in the petition, the law firm’s historical institution success rate before this particular panel or technology category, and the nature of the challenge (anticipation, obviousness, or a combination).

Gradient Boosting models, particularly the XGBoost and LightGBM implementations, often outperform Random Forests on structured tabular data when sufficient training data is available. Each new tree is fit to the errors of the ensemble built so far. This steadily reduces bias and tends to concentrate learning on the hard-to-classify cases that a model dominated by the majority class would otherwise miss. Class imbalance is a consistent challenge in this data. IPR petitions are far more common than district court trials, institution decisions outnumber final written decisions, and within any single outcome label one result often heavily outweighs the other. Combined with explicit class weighting, gradient boosting tends to handle this imbalance better than bagging-based approaches.
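One common way to counter class imbalance in XGBoost is its `scale_pos_weight` parameter. The library's documentation suggests the ratio of negative to positive examples as a starting value. The sketch below computes that ratio in plain Python. The labels are invented, and the commented-out line only shows where the value would go if `xgboost` were installed.

```python
from collections import Counter


def scale_pos_weight(labels):
    """Ratio of negative (0) to positive (1) labels, a typical starting value
    for XGBoost's scale_pos_weight parameter on imbalanced data."""
    counts = Counter(labels)
    if counts[1] == 0:
        raise ValueError("no positive examples")
    return counts[0] / counts[1]


# Invented labels for a rare outcome: 1 = all challenged claims survived, 0 = they did not.
labels = [1] * 120 + [0] * 480
weight = scale_pos_weight(labels)
print(weight)

# With xgboost installed, the weight would be passed as:
#   model = xgboost.XGBClassifier(scale_pos_weight=weight)
```

The ratio is only a starting point. The weight is usually tuned further against a validation metric that reflects the cost of missing the rare outcome.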

Support Vector Machines (SVMs) remain a common choice for document classification tasks like technology-assisted review in e-discovery, where the objective is to classify a large document population as responsive or non-responsive to a given request. Their theoretical foundation in margin maximization provides strong generalization in high-dimensional text spaces even with relatively small training sets. In pharmaceutical patent litigation, e-discovery involves enormous volumes of technical scientific documents. These require sophisticated classification to identify relevant prior art, laboratory notebooks, or communications related to an alleged inequitable conduct claim. SVMs applied to this task can substantially reduce the manual review burden.

Network Analysis: Patent Citation Graphs and Litigation Topology

Patent citation networks are graphs in which patents are nodes and citations are directed edges. They reveal structural properties of a patent's position in its technology field that are not apparent from inspecting the patent in isolation. A patent with high in-degree (many later patents citing it) is often a pioneer in its field, which has two opposing litigation implications. It may be more defensible because its foundational status is recognized. But it also means that many subsequent patents built their novelty arguments by distinguishing it. Those later patents cannot serve as prior art against the pioneer itself, but their characterizations of what it teaches can be a rich source of material for claim construction and obviousness arguments.
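Computing in-degree requires nothing more than a list of directed citation edges. The patent IDs below are hypothetical. A real pipeline would load edges from USPTO citation data and would likely use a graph library for richer measures such as PageRank or betweenness centrality.

```python
from collections import defaultdict


def in_degree(citations):
    """Count forward citations for every patent.

    `citations` is a list of (citing_patent, cited_patent) directed edges.
    Patents that only cite others still appear, with an in-degree of 0."""
    counts = defaultdict(int)
    for citing, cited in citations:
        counts[cited] += 1
        counts.setdefault(citing, 0)
    return dict(counts)


def citing_patents(citations, target):
    """Later patents that cite `target`: the documents whose characterizations
    of the target can be mined for litigation arguments."""
    return sorted(citing for citing, cited in citations if cited == target)


# Hypothetical edges: US-B, US-C, and US-D all cite US-A; US-D also cites US-B.
edges = [("US-B", "US-A"), ("US-C", "US-A"), ("US-D", "US-A"), ("US-D", "US-B")]
degrees = in_degree(edges)
pioneer = max(degrees, key=degrees.get)
print(pioneer, degrees[pioneer], citing_patents(edges, pioneer))
```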

Litigant networks, which map the parties in patent disputes and their relationships to prior disputes with each other or with other industry participants, can reveal habitual adversaries, patterns of serial Paragraph IV challenges by specific generic manufacturers against specific brand companies, and the typical settlement behavior of particular litigants. A generic company with a long history of settling early against a specific brand’s drug franchise is a different strategic opponent from one that litigates to judgment as a matter of policy. Network analysis makes these behavioral patterns visible at scale.
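A first step toward the behavioral profiles described above is a per-pair settlement rate. This sketch uses invented company names and outcomes and treats every dispute as either "settled" or "judgment". A fuller litigant-network model would add timing, for example how early in each case a settlement occurred, and links to third parties.

```python
from collections import defaultdict


def settlement_rates(disputes):
    """Share of disputes that settled, per (challenger, brand) pair.

    `disputes` holds (challenger, brand, outcome) tuples, where outcome is
    either 'settled' or 'judgment'."""
    totals = defaultdict(int)
    settled = defaultdict(int)
    for challenger, brand, outcome in disputes:
        pair = (challenger, brand)
        totals[pair] += 1
        if outcome == "settled":
            settled[pair] += 1
    return {pair: settled[pair] / totals[pair] for pair in totals}


# Invented dispute history for two hypothetical generic challengers.
disputes = [
    ("GenericCo", "BrandCo", "settled"),
    ("GenericCo", "BrandCo", "settled"),
    ("GenericCo", "BrandCo", "judgment"),
    ("LitigatorRx", "BrandCo", "judgment"),
    ("LitigatorRx", "BrandCo", "judgment"),
]
rates = settlement_rates(disputes)
for (challenger, brand), rate in rates.items():
    print(f"{challenger} vs {brand}: {rate:.0%} settled")
```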

Law firm and judge networks connect counsel track records to specific judicial panels, revealing which firms have demonstrated effectiveness before specific judges and which judges have historically ruled in favor of patent owners versus challengers in particular technology classes. This information exists in public records but is too voluminous for manual aggregation. Network analysis tools make it tractable.

Investment Strategy Note: Reading the Patent Litigation Landscape for Portfolio Signals

For institutional investors in pharmaceutical stocks, the predictive analytics framework described above generates investable signals. Suppose a model makes a high-confidence IPR institution prediction against a drug company's primary composition-of-matter patent, and IQVIA revenue data shows that the challenged patent protects more than 60% of the franchise's U.S. revenue. Together, these constitute a material risk factor that should affect position sizing and hedging decisions. Drug patent analytics platforms that feed litigation predictions into institutional investor workflows provide the same type of quantitative risk signal that clinical trial readouts or FDA advisory committee transcripts provide in other parts of pharmaceutical investment research.

Part 8: Generative AI in Legal Practice — Opportunities, Hallucination Risk, and the Evolving PHOSITA Standard

Large Language Models (LLMs) have entered the pharmaceutical patent litigation workflow through several entry points. Some are straightforward productivity tools. Others carry risks that require explicit institutional guardrails.

Productive Applications of LLMs in Patent Litigation

LLMs are effective at drafting routine sections of legal documents that previously required associate-level hours: background sections, procedural summaries, and first-draft claim charts. They can summarize lengthy deposition transcripts and technical expert reports accurately enough to accelerate review by senior counsel. They can also translate non-English prior art documents and generate preliminary technical summaries of scientific literature for attorneys who are not domain specialists.

In due diligence contexts, LLMs can process patent portfolios at speeds that allow coverage of hundreds of assets in the time it would previously take to analyze a dozen manually. This is particularly relevant in pharmaceutical M&A, where the acquirer must assess the validity and enforceability of the target’s patent portfolio before pricing the transaction. An LLM-assisted rapid patent review that flags specific prosecution history vulnerabilities, obviousness exposure, and Section 101 risks across a portfolio of 200 patents in 48 hours changes the timeline and cost structure of pharma M&A diligence.

Hallucination Risk: The Case for Mandatory Human Review

LLMs can fabricate legal citations. This is not a marginal edge case; it is a documented and recurring failure mode. In several publicly documented incidents, U.S. courts sanctioned attorneys who submitted briefs containing AI-generated citations to nonexistent cases. Judges in the Southern District of New York, the Northern District of Texas, and other jurisdictions have now issued standing orders requiring counsel to certify that AI-generated content has been verified against actual legal databases before filing.
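Mechanical extraction can at least ensure that no citation slips through unchecked. The sketch below pulls a few common U.S. reporter formats out of a draft and lists any citation not yet in an attorney-verified set. The regular expression covers only a handful of reporters. The `verified` set is a hypothetical stand-in for citations a lawyer has confirmed in Westlaw, Lexis, or another primary source. The second citation in the example is deliberately fictitious. The code can only flag citations; it cannot verify them.

```python
import re

# Volume, reporter, first page for a few common U.S. reporters (not exhaustive).
CITATION_RE = re.compile(
    r"\b(\d{1,4})\s+(U\.S\.|S\. Ct\.|F\.(?:2d|3d|4th)|F\. Supp\.(?: 2d| 3d)?)\s+(\d{1,5})\b"
)


def extract_citations(brief_text):
    """Return reporter citations in a normalized 'volume reporter page' form."""
    return [f"{vol} {reporter} {page}" for vol, reporter, page in CITATION_RE.findall(brief_text)]


def unverified_citations(brief_text, verified):
    """Citations in the draft that no attorney has yet confirmed against a primary source."""
    return [c for c in extract_citations(brief_text) if c not in verified]


draft = (
    "See KSR Int'l Co. v. Teleflex Inc., 550 U.S. 398 (2007); see also "
    "Smith v. Jones, 999 F.4th 1234 (Fed. Cir. 2031)."  # second cite is fictitious
)
verified = {"550 U.S. 398"}  # hypothetical set of attorney-confirmed citations
for citation in unverified_citations(draft, verified):
    print("UNVERIFIED:", citation)
```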

The pharmaceutical patent context makes this risk particularly acute. It sits at the intersection of two specialized knowledge domains, pharmaceutical chemistry and patent law, where a plausible-sounding fabrication is hard to spot. A model that invents a Federal Circuit precedent on the obviousness of polymorph patents is not easily caught by a non-specialist reviewer. Every LLM output that will be filed with a court, submitted to the USPTO, or used in settlement negotiations must be independently verified against primary sources by a qualified attorney. This is not a best practice recommendation. It is a professional responsibility requirement.

AI and the Elevation of the PHOSITA Standard

The most structurally consequential impact of AI on pharmaceutical patent law may not be in the litigation tools themselves but in how AI changes the baseline capability of a ‘person having ordinary skill in the art.’ The PHOSITA standard defines the level of knowledge against which obviousness is measured. As AI-powered literature search and analysis tools become standard equipment for any skilled pharmaceutical scientist, the knowledge base of the hypothetical PHOSITA expands. An innovation that would have been non-obvious to a scientist working with manual search tools in 2010 might be obvious to the same scientist operating with AI-assisted semantic search in 2026.

This evolution is not yet fully reflected in case law, but the trajectory is discernible. Arguments are beginning to appear that AI-augmented researchers can identify previously invisible connections between prior art references faster and more comprehensively than their predecessors. If this argument gains traction in Federal Circuit jurisprudence, the threshold for patentability of incremental pharmaceutical innovations (improved formulations, new polymorphs, second-generation dosing regimens) will rise materially. For pharmaceutical IP teams, this means that patents filed today on incremental innovations may face a more demanding obviousness analysis in litigation than the prosecution history suggests. Obviousness is formally judged as of the patent's effective filing date (35 U.S.C. § 103), so the question is which AI tools a skilled scientist would have used at filing. But a court answering that question years later, with a fuller record of how widely those tools had already been adopted, may set a higher bar than the examiner did.

Part 9: The AI-Inventorship Disclosure Dilemma

The use of AI platforms in drug discovery — tools from companies like Insilico Medicine, Recursion Pharmaceuticals, and Schrödinger — has generated a novel legal problem that sits at the intersection of patent law, trade secret law, and corporate strategy.

Thaler v. Vidal and the Human Contribution Requirement

In Thaler v. Vidal (2022), the U.S. Court of Appeals for the Federal Circuit held that an inventor must be a natural person under current U.S. patent law. It affirmed the USPTO's refusal to process patent applications listing DABUS, an AI system, as the sole inventor. The USPTO's February 2024 inventorship guidance for AI-assisted inventions clarified the surviving standard: an AI-assisted invention can be patented when at least one natural person made a 'significant contribution' to the claimed invention. Owning or overseeing the AI platform is insufficient. The human researcher must have contributed meaningfully to the specific inventive concept. Examples include framing the problem and optimization target in a way designed to elicit a particular solution, or taking the AI's output and making a significant further contribution to arrive at the claimed compound. Merely recognizing the value of an AI output, or simply reducing it to practice, generally does not qualify.

The Trade Secret Tension

Pharmaceutical companies using AI drug discovery platforms face a structural dilemma. Patent law requires a disclosure sufficient to enable a skilled practitioner to make and use the full scope of the claimed invention, and it requires naming the actual human inventors. Trade secret law incentivizes concealing the specific capabilities and training data of the AI platform that generated the lead compound. These obligations pull in opposite directions.

A company that is fully transparent about the AI's role in generating the lead structure invites an invalidity challenge built on Thaler's natural-person requirement. The argument is that the AI did the inventive work and the humans merely pressed the button. A company that understates the AI's contribution to protect its trade secret faces two risks. The first is an enablement challenge: the specification may fail to teach a skilled person to make and use the full scope of what is claimed without access to the undisclosed platform, a risk that is sharpest for broad genus claims after Amgen v. Sanofi. The second is an inventorship challenge, if it later becomes apparent in litigation that the named human inventors did not actually conceive the claimed invention in the legally required sense.

This dilemma is likely to open a new front in pharmaceutical patent litigation. During discovery in ANDA cases involving AI-discovered drugs, generic defendants can be expected to seek documents showing the extent of AI involvement in compound selection, lead optimization, and claim drafting. The 'disclosure dilemma' will become concrete as early AI-discovered compounds move through clinical development toward approval and Orange Book listing, the point at which Paragraph IV challenges become possible.

IP Valuation Implications for AI-Discovered Drug Portfolios

A patent covering an AI-discovered compound carries a higher validity risk premium than an otherwise comparable patent covering a compound discovered by conventional medicinal chemistry. This premium should be reflected in any IP valuation of a drug portfolio containing AI-discovered compounds. The mechanism is straightforward: the probability that the patent survives a Thaler-based inventorship challenge or an AI-disclosure-based enablement challenge is lower than the probability for a patent with a clean human inventorship record. Lower survival probability means lower expected value for the protected revenue stream. Pharma M&A teams and licensing analysts who fail to apply this discount to AI-discovered compound patents are mispricing the asset.
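The mechanism can be sketched by treating each challenge theory as a separate hazard to survival. The probabilities and revenue figures below are invented. The independence assumption is a simplification, since prior-art and inventorship challenges are often pursued together. The calculation is also undiscounted.

```python
def survival_probability(*risks):
    """Probability a patent survives several challenge theories, assuming independence."""
    p = 1.0
    for risk in risks:
        p *= 1.0 - risk
    return p


def expected_protected_value(annual_revenue, years, survival):
    """Undiscounted expected revenue protected over the remaining term."""
    return annual_revenue * years * survival


# Invented inputs: 30% prior-art invalidity risk for both patents, plus a 15%
# inventorship/enablement risk that applies only to the AI-discovered compound.
conventional = survival_probability(0.30)
ai_discovered = survival_probability(0.30, 0.15)
revenue, years = 500.0, 8  # $M per year and remaining years, hypothetical

print(expected_protected_value(revenue, years, conventional))
print(expected_protected_value(revenue, years, ai_discovered))
```

With these inputs, the added inventorship risk removes about 15% of the expected protected value. That gap is the discount the text argues M&A and licensing teams should apply.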

Part 10: Explainable AI, Algorithmic Bias, and Non-Negotiable Guardrails

A litigation prediction model that cannot explain its output is not a strategic tool. It is a curiosity. In high-stakes legal decisions where millions of dollars and years of exclusivity turn on a strategic choice, a black-box probability estimate without an interpretable rationale cannot receive the institutional trust it needs to influence decisions.

Explainable AI Techniques: LIME, SHAP, and Feature Importance

Local Interpretable Model-Agnostic Explanations (LIME) and SHapley Additive exPlanations (SHAP) are the standard XAI tools for making individual model predictions interpretable. LIME fits a simple, locally faithful surrogate model around a specific prediction, identifying which features pushed the prediction toward the predicted outcome in that particular case. SHAP uses cooperative game theory to assign each feature an additive contribution (its Shapley value), so that the contributions sum to the gap between this prediction and a baseline prediction. It answers the question: 'For this specific IPR petition, how much did the identity of the assigned APJ panel, the number of prior art references cited, and the prosecution history of the challenged patent each contribute to the 68% institution probability?'
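For a model with only a handful of features, Shapley values can be computed exactly by enumerating every coalition of features. The sketch below does that for a hypothetical logistic institution model with invented coefficients and feature names. 'Removing' a feature here means replacing it with a baseline value, which is one of several possible value-function choices. SHAP libraries typically average over a background dataset and rely on approximations, because exact enumeration grows exponentially with the number of features.

```python
import math
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    A feature outside a coalition takes its baseline value. Cost grows as 2^n,
    so this is only practical for a few features."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        x = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return predict(x)

    phi = {}
    for f in names:
        others = [g for g in names if g != f]
        total = 0.0
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions S not containing f
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_institution_model(x):
    """Hypothetical logistic model of IPR institution probability (invented coefficients)."""
    z = (-0.5 + 0.8 * x["panel_institution_rate"] + 0.3 * x["num_references"]
         - 0.6 * x["distinguishing_argument_on_record"])
    return 1.0 / (1.0 + math.exp(-z))


petition = {"panel_institution_rate": 0.7, "num_references": 4, "distinguishing_argument_on_record": 1}
average = {"panel_institution_rate": 0.6, "num_references": 3, "distinguishing_argument_on_record": 0}

contributions = shapley_values(toy_institution_model, petition, average)
for feature, contribution in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{feature:35s} {contribution:+.3f}")
```

The contributions add up exactly to the gap between this petition's predicted probability and the baseline prediction. This additivity is what lets a SHAP output be read as a line-by-line breakdown of a single score.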

This level of interpretability turns a probability estimate into the raw material for a litigation argument. Consider a partner advising a brand company on how to answer a weak IPR petition. The partner can point to the specific features that make the petition vulnerable: the cited prior art bears only secondarily on the claimed compound, the petitioner's counsel has a below-average institution rate before this panel, and the prosecution history contains a specific argument that distinguishes all the cited references. That analysis can then structure the patent owner's preliminary response.

Algorithmic Bias: Where It Enters and How to Audit It

Algorithmic bias in legal analytics arises from four sources: historical bias in the training data (if certain types of companies won more often historically for reasons unrelated to patent quality), selection bias in which cases are represented (only litigated cases, not settled ones), representation bias in the judicial record (certain jurisdictions or technology classes are over-represented), and confirmation bias in feature engineering (if engineers encode assumptions about which factors matter, those assumptions are baked into the model).

For pharmaceutical patent prediction, the most practically significant bias is jurisdictional over-representation. Because the District of Delaware handles a disproportionate share of ANDA litigation, a model trained on this data learns Delaware-specific judicial tendencies that may not transfer to predictions about cases in the Western District of Texas or the District of New Jersey. Explicit jurisdiction stratification, either through separate models for each major venue or through explicit jurisdiction features with adequate representation in the training data, is required to produce reliable cross-venue predictions.
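Before choosing between pooled and per-venue models, it helps to see how thin each venue's data actually is. The case counts and the 50-case threshold below are invented for illustration. The right threshold depends on the model and the number of features.

```python
from collections import Counter


def venue_coverage(case_venues, min_cases=50):
    """Count training cases per venue and list venues too thin to model on their own."""
    counts = Counter(case_venues)
    thin = sorted(venue for venue, count in counts.items() if count < min_cases)
    return counts, thin


# Invented training-set composition, skewed toward Delaware.
venues = ["D. Del."] * 600 + ["D.N.J."] * 250 + ["W.D. Tex."] * 12
counts, thin = venue_coverage(venues)
total = sum(counts.values())
for venue, count in counts.most_common():
    print(f"{venue:10s} {count:4d} ({count / total:.0%})")
print("Too thin for a separate model:", thin)
```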

Continuous bias auditing is not optional. This means running fairness and calibration tests on model outputs after deployment to detect emergent biases as the case law evolves. A model trained on 2015-2020 data may have encoded assumptions about the validity rates of method-of-treatment patents that subsequent Section 101 and obviousness decisions have since shifted. Without continuous retraining and bias auditing, the model becomes progressively more miscalibrated as the legal landscape shifts.
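One concrete drift check is to track calibration over time. The Brier score, the mean squared error between predicted probability and the 0/1 outcome, can be grouped by decision year for this purpose. This measures accuracy and calibration rather than fairness as such. The records below are invented. A rising score for recent years is the kind of signal that should trigger retraining.

```python
from collections import defaultdict


def brier_by_period(records):
    """Brier score (mean squared error of predicted probability vs. 0/1 outcome),
    grouped by decision year and returned in chronological order."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for year, predicted, actual in records:
        sums[year] += (predicted - actual) ** 2
        counts[year] += 1
    return {year: sums[year] / counts[year] for year in sorted(sums)}


# Invented (year, predicted probability, actual outcome) records.
records = [
    (2021, 0.8, 1), (2021, 0.3, 0), (2021, 0.7, 1),
    (2024, 0.8, 0), (2024, 0.3, 1), (2024, 0.7, 0),
]
scores = brier_by_period(records)
for year, score in scores.items():
    print(year, round(score, 3))
```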

Key Takeaways: The Institutional Framework for Responsible AI in Legal Decision-Making

A responsible institutional framework for AI-assisted patent litigation decision-making requires four components: explainable outputs with LIME or SHAP attribution, bias audits on a defined schedule, mandatory human review of every model output before it influences a legal filing or settlement negotiation, and a clearly defined escalation path for cases where the model’s prediction conflicts with experienced attorney judgment. The model is an input to the decision, not the decision itself.

Part 11: Building vs. Buying Predictive Legal Analytics

The strategic choice between building a proprietary litigation analytics platform and purchasing a vendor solution — Pre/Dicta, Lex Machina, Docket Alarm, or a specialized pharmaceutical analytics provider — turns on four variables: the organization’s data ambition, its technical talent availability, its tolerance for build timeline risk, and the degree of customization it requires.

A vendor solution provides immediate access to pre-built data integrations, cleaned historical datasets, and trained models. The deployment timeline is months rather than years. The cost is predictable. The limitations are standardization (vendor models are built for broad applicability, not for the specific nuances of your drug portfolio’s patent thicket), data custody (your litigation queries and strategic inputs are processed through a third-party system), and dependency (you are subject to the vendor’s product roadmap, pricing changes, and discontinuation risk).

A proprietary build gives complete control over the data architecture, the feature engineering choices, the model design, and the outputs. It produces a proprietary data asset — the integrated litigation-patent-commercial database — that compounds in value as new data is ingested and that cannot be acquired or used by competitors. The cost is higher upfront, the timeline is typically two to four years to a production-grade system, and it requires hiring or partnering with a team that combines data engineering, machine learning, and pharmaceutical IP domain expertise — a talent combination that is genuinely scarce.

A hybrid model — purchasing a vendor platform for baseline court analytics while building proprietary pharmaceutical-specific overlays for commercial data integration, portfolio-specific patent vulnerability mapping, and settlement term modeling — often provides the best risk-adjusted outcome for large pharmaceutical companies. The vendor platform handles the commodity data infrastructure. The proprietary build focuses on the differentiated analytical layer where pharmaceutical-specific domain expertise creates the greatest value.

Part 12: Investment Strategy — Reading Patent Litigation as a Financial Signal

For portfolio managers with exposure to pharmaceutical equities, patent litigation events are material financial signals that are often under-modeled in fundamental research. The following framework translates patent litigation analytics into portfolio decision inputs.

Revenue at Risk Calculation

The baseline input is the revenue protected by the challenged patent, net of any revenue that would survive patent expiry. Such surviving revenue can come through authorized generics, co-pay cards, or payer contracting advantages that do not depend on exclusivity. Consider a drug generating $4 billion in annual U.S. net revenue whose compound patent would otherwise expire in 2028. An IPR petition filed in 2026 against that patent puts up to roughly $4 billion per year at risk, before netting out exclusivity-independent revenue, for a period determined by the litigation timeline.

Probability-Weighted Expected Value

Apply the IPR funnel probabilities: 73% institution, and 66% partial or full invalidation after institution. Their product (0.73 × 0.66) gives a compound probability of approximately 48% that at least some challenged claims are invalidated. The patent therefore has roughly a 52% probability of surviving with all commercially relevant claims intact, if every partial invalidation is treated as the loss of a commercially relevant claim. A rough expected revenue calculation weights the protected revenue stream by this survival probability. If the market is pricing the stock on the assumption that the patent survives with 90% probability, a data-driven model showing roughly 52% survival implies material downside that is not reflected in the current price.
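The funnel arithmetic can be sketched as follows. Multiplying the two stage probabilities gives the chance that at least some claims fall (0.73 × 0.66 ≈ 0.48). Survival with all challenged claims intact is the complement, about 0.52. The $500M of exclusivity-independent revenue is an invented assumption, and the calculation is a one-year snapshot rather than a multi-year forecast.

```python
def ipr_survival(p_institution, p_invalidation_given_institution):
    """Probability the patent emerges with all challenged claims intact,
    treating any partial invalidation as a loss."""
    p_invalidated = p_institution * p_invalidation_given_institution
    return 1.0 - p_invalidated


def expected_annual_revenue(gross_revenue, exclusivity_independent_revenue, survival):
    """Revenue that survives generic entry regardless, plus the at-risk portion
    weighted by the probability the patent survives."""
    at_risk = gross_revenue - exclusivity_independent_revenue
    return exclusivity_independent_revenue + at_risk * survival


model_survival = ipr_survival(0.73, 0.66)
market_survival = 0.90
gross, independent = 4000.0, 500.0  # $M per year; the $500M figure is hypothetical

model_value = expected_annual_revenue(gross, independent, model_survival)
market_value = expected_annual_revenue(gross, independent, market_survival)
print(f"model survival {model_survival:.2f}: ${model_value:,.0f}M expected")
print(f"market survival {market_survival:.2f}: ${market_value:,.0f}M expected")
```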

Litigation Timeline as an Implied Option

The 30-month stay in ANDA litigation and the 18-month IPR timeline function as implicit put options on the brand company’s revenue stream, with the exercise date being the likely generic or biosimilar entry date. Options pricing methodology — specifically, modeling the range of possible entry dates as a distribution of expiration times — provides a more nuanced valuation than simple event probability analysis. Portfolio managers with quant capabilities can model the implied volatility of a drug franchise’s patent position as a function of the active litigation docket.
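A simplified version of this idea treats the generic entry date as a discrete probability distribution and computes the expected present value of exclusivity-dependent revenue across it. This is scenario weighting, not a full option-pricing model: there is no volatility parameter. The probabilities, revenue, and 10% discount rate in the example are invented.

```python
def expected_exclusivity_value(annual_revenue, entry_distribution, discount_rate):
    """Expected present value of exclusivity-dependent revenue under an uncertain entry date.

    entry_distribution maps 'whole years until generic entry' to its probability.
    Revenue is assumed to arrive in full at the end of each year before entry
    and to stop entirely at entry."""
    if abs(sum(entry_distribution.values()) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    value = 0.0
    for years, prob in entry_distribution.items():
        # Present value of an annuity paying annual_revenue for `years` years.
        pv = sum(annual_revenue / (1 + discount_rate) ** t for t in range(1, years + 1))
        value += prob * pv
    return value


# Hypothetical scenario: generic entry in 1, 2, or 4 years with these probabilities.
entry_distribution = {1: 0.3, 2: 0.5, 4: 0.2}
print(round(expected_exclusivity_value(1000.0, entry_distribution, 0.10), 1))
```

Shifting probability mass between entry dates, for example after an institution decision, changes the value directly. This gives a concrete way to express how the active litigation docket moves the franchise's worth.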

Reading Settlement Terms as Market Signals

When a brand company settles an ANDA case by granting the generic challenger a licensed entry date, the settlement terms embed the parties' private probability assessments of litigation outcomes. If a brand company settles by granting generic entry two years before the patent's nominal expiry, the market can infer that the brand's counsel likely assessed the patent as materially vulnerable. Settlement entry dates can be tracked systematically against nominal patent expiry dates using services like DrugPatentWatch. This tracking provides a signal about portfolio-level patent quality that supplements formal IP valuation.
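Once settlement terms are collected, the tracking described above reduces to simple date arithmetic. The brands and dates below are hypothetical, and the month count ignores day-of-month differences.

```python
from datetime import date


def months_early(settlement_entry, nominal_expiry):
    """Whole calendar months by which a licensed entry date precedes nominal expiry."""
    return ((nominal_expiry.year - settlement_entry.year) * 12
            + (nominal_expiry.month - settlement_entry.month))


# Hypothetical brand -> list of (licensed entry date, nominal patent expiry).
settlements = {
    "BrandA": [(date(2027, 1, 1), date(2029, 1, 1)), (date(2028, 6, 1), date(2029, 6, 1))],
    "BrandB": [(date(2030, 3, 1), date(2030, 6, 1))],
}

avg_early = {
    brand: sum(months_early(entry, expiry) for entry, expiry in pairs) / len(pairs)
    for brand, pairs in settlements.items()
}
for brand, months in avg_early.items():
    print(f"{brand}: generic entry conceded {months:.1f} months before expiry on average")
```

A brand that consistently concedes more months than its peers is, on this reading, signaling lower confidence in its patents.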

Part 13: Key Takeaways by Segment

For IP Counsel

The flexible obviousness standard of KSR v. Teleflex lets challengers combine references from across the prior art using common sense and ordinary creativity. It therefore rewards exactly the broad, cross-domain search that machine learning tools do best. AI-powered semantic search for prior art and NLP-based prosecution history mining are not luxuries; they close the gap between what human search can cover and what the legal standard now assumes the prior art universe contains. IPR petition analysis should include APJ panel behavioral analytics before filing or responding, because the institution decision is the most consequential single event in the IPR timeline. Every IP valuation exercise should encode current litigation probability inputs from a maintained prediction model.

For Portfolio Managers

Patent expirations are imprecise financial forecasts without litigation probability overlays. A drug franchise's revenue duration is a probability distribution, not a single date. Secondary Orange Book patents, the 30-month stay they can trigger, and settlement dynamics can keep generics off the market well beyond the nominal expiry of the compound patent. Conversely, a high-confidence IPR institution prediction against a primary composition-of-matter patent is a financial risk factor that should affect position sizing before the institution decision is publicly known.

For R&D Strategy Teams

The Thaler v. Vidal ruling and the 2024 USPTO guidance on AI inventorship create a documentation imperative: detailed human contribution records for every stage of AI-assisted drug discovery are not optional. They are the evidentiary foundation that will decide whether the patent survives an inventorship challenge. That challenge arrives when a generic manufacturer demands to depose your scientists about what, precisely, the human researchers contributed to the claimed invention. Build those records now, before litigation creates a document retention dispute around them.

For M&A and Business Development

IP valuation of pharmaceutical targets must include a litigation risk premium for any patent that is: (a) an Orange Book-listed primary composition-of-matter patent within five years of expiry, (b) a biologic patent covering a reference product facing biosimilar competition, or (c) a patent covering an AI-discovered compound with a legally ambiguous inventorship record. These categories warrant a probabilistic discount to the base IP valuation that reflects the survival probability distribution of the challenged patents.

Part 14: Frequently Asked Questions

Can AI models predict pharmaceutical patent litigation outcomes with certainty?

No. The outputs are probabilistic — confidence-interval forecasts, not deterministic predictions. The value is in moving from intuition-based estimates with no stated confidence to data-derived probability distributions that can be stress-tested and refined as new information enters the record. A 70% predicted probability of IPR institution is not a guarantee; it is an actionable input to a decision framework.

What is the most commonly underestimated cost in building an in-house patent litigation analytics system?

Data integration and entity resolution. The model development work — selecting algorithms, tuning hyperparameters, validating outputs — is well-understood and increasingly commoditized. The work of building a clean, linked, entity-resolved database that connects USPTO prosecution histories to PACER litigation records to IQVIA commercial data is less glamorous and routinely underestimated in both time and cost. Plan for 18 to 24 months of data engineering before a model produces reliable outputs.
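To make the entity-resolution problem concrete, here is a toy name matcher. It normalizes corporate names and compares them with `difflib.SequenceMatcher` from the Python standard library. The suffix and abbreviation tables and the 0.9 similarity threshold are assumptions chosen for the example. Production entity resolution typically relies on more than string similarity, for example shared identifiers such as application numbers or assignee records, blocking strategies, and human review of borderline matches.

```python
import re
from difflib import SequenceMatcher

# Assumed normalization tables for this example only.
SUFFIXES = {"inc", "llc", "ltd", "co", "corp", "corporation", "usa", "us", "the"}
ABBREVIATIONS = {"pharms": "pharmaceuticals", "pharma": "pharmaceuticals", "labs": "laboratories"}


def normalize(name):
    """Lowercase, tokenize, expand abbreviations, and drop corporate suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    tokens = [ABBREVIATIONS.get(t, t) for t in tokens]
    return " ".join(t for t in tokens if t not in SUFFIXES)


def same_entity(a, b, threshold=0.9):
    """Treat two raw names as one entity if their normalized forms are similar enough."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio() >= threshold


# Name variants of the kind that appear across PACER, USPTO, and commercial records.
print(same_entity("Teva Pharmaceuticals USA, Inc.", "TEVA PHARMS USA INC"))
print(same_entity("Mylan Pharmaceuticals Inc.", "Teva Pharmaceuticals USA, Inc."))
```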

How does the AI inventorship issue affect the IP valuation of a drug portfolio?

Any patent covering a compound in which AI played a substantial role in lead identification or lead optimization carries a higher validity risk than an otherwise comparable patent with a clean human inventorship record. The magnitude of the discount depends on how clearly the human contribution is documented, how aggressively generic competitors are likely to pursue inventorship challenges, and how much revenue the patent is protecting. For primary composition-of-matter patents on AI-discovered drugs in late-stage development, the inventorship risk premium should be explicitly modeled as a separate scenario in the valuation.

Is the PHOSITA standard actually changing due to AI?

Formal Federal Circuit precedent has not yet codified an AI-augmented PHOSITA standard, but the argumentative foundation for doing so is already appearing in briefs. The logical inference from KSR, that the PHOSITA can use available tools to make predictable combinations, applies directly to AI-assisted literature search. As courts and the USPTO gain experience with how AI changes the practical scope of prior art searching, the effective PHOSITA standard is likely to rise for technology areas where AI tools are already standard equipment, including pharmaceutical chemistry.

Build or buy for litigation analytics?

The honest answer for a large pharmaceutical company with an active litigation docket is: start with a vendor platform for baseline court analytics, build proprietary overlays for pharmaceutical-specific commercial and prosecution history integration, and plan a three-to-five-year path to a proprietary integrated platform if the litigation volume and competitive dynamics justify the investment. The data asset that results from the proprietary build is a strategic IP management tool, not a single-use analytics application.