A deep-dive reference for pharma/biotech IP teams, R&D leads, portfolio managers, and institutional investors who need forecasts that hold up in boardrooms, due diligence rooms, and courtrooms.

Part 1: Why Biopharma Forecasts Fail — and Why That’s a Structural Problem, Not a People Problem

A sales forecast in biopharma does not occupy the same risk category as a forecast in consumer packaged goods or enterprise software. Get a toothpaste forecast wrong by 30% and the company adjusts production. Get a drug forecast wrong by 30% and you have mispriced a Phase III trial, miscalculated an acquisition, overbuilt commercial infrastructure, or blown the covenant on a royalty-backed debt facility.

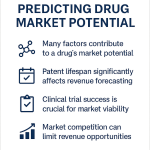

The empirical record on forecast accuracy is sobering. A study of 1,700 forecasts for 260 drugs found actual peak sales diverged from predictions made just one year before launch by a median of 71%. A majority of those projections overstated eventual sales by more than 160%. A parallel Austrian analysis of reimbursement submissions found a median overestimation of 33%, with 55.9% of forecasts qualifying as severely inaccurate — either overshooting by more than 100% or undershooting by more than 50%. Those are not outlier cases. They are the norm.

The cause is not forecaster incompetence. It is the systematic application of inadequate models to an industry that violates nearly every assumption those models carry.

What Makes Biopharma Forecasting Structurally Different

Four features of the biopharma market make standard demand modeling approaches unreliable.

First, the market-creation problem. Most consumer markets exist before the product launches. Biopharma often creates or redefines the market it enters. A first-in-class GLP-1 agonist for cardiovascular risk reduction does not compete against an established category — it establishes one. Analog-based peak-sales models collapse when there is no meaningful analog.

Second, the discontinuous shock problem. Revenue trajectories in pharma are not smooth curves interrupted by mild seasonality. They are punctuated by Paragraph IV litigation outcomes, FDA Complete Response Letters, payer formulary decisions, and surprise biosimilar entries — any of which can trigger immediate 40-80% revenue resets. A model that cannot encode these events probabilistically is not forecasting; it is extrapolating.

Third, the IP-revenue entanglement. Patent life is not background context for a biopharma forecast. It is the primary driver of the revenue curve’s terminal shape. A drug’s commercial value is inseparable from the defensibility of its intellectual property, and the timeline and magnitude of generic or biosimilar entry are determined as much by litigation strategy and secondary patent portfolios as by primary composition-of-matter expiry dates. Forecasting revenue without modeling the IP landscape is the equivalent of building a DCF without a terminal value.

Fourth, the regulatory optionality problem. Special designations — Orphan Drug, Breakthrough Therapy, Priority Review, Fast Track, Accelerated Approval — are not binary milestones. They alter pricing power, speed to market, payer negotiating dynamics, and the shape of the uptake curve. A model that treats designation as a dummy variable capturing a fixed average lift misses the trajectory effect entirely.

The Systemic Cost

When forecasts fail at the portfolio level, the downstream consequences compound. Overoptimistic launch forecasts lead to excess sales force hiring, bloated DTC budgets, and manufacturing capacity that must be written down. In M&A, inflated target projections result in acquisition premiums that destroy acquirer shareholder value — a pattern documented in study after study, with more than half of acquired lead assets missing pre-deal forecasts by an average of 40% over the three years following close.

Regression analysis does not eliminate uncertainty. No model can. What it does is replace assumption-driven extrapolation with evidence-driven quantification of causal relationships. That shift — from description to explanation — is where the commercial advantage lies.

Key Takeaways: Part 1

- The median biopharma peak-sales forecast misses reality by 71% at one year pre-launch. This is a structural failure of methodology, not a talent gap.

- Four features make standard models unreliable: market-creation dynamics, discontinuous IP and regulatory shocks, the IP-revenue entanglement, and regulatory optionality.

- Regression analysis addresses the root cause by quantifying causal relationships rather than extrapolating historical trends.

- For M&A, the stakes are acute: more than half of acquired lead assets miss their pre-deal forecasts, by an average of 40% over the three years after close.

Part 2: The Regression Toolkit — Model Selection as Strategic Decision

The choice of regression model is not a statistical technicality. It is a strategic decision that must map directly to the business question. Applying the wrong model produces outputs that are statistically valid but commercially meaningless. The following covers each model class with the precision its application demands.

Simple and Multiple Linear Regression

Simple linear regression (Y = a + bX + ε) remains the most frequently misapplied model in commercial forecasting. Its structural assumption — a straight-line relationship between a single predictor and the outcome — is almost never satisfied in biopharma market dynamics. It is useful for isolating a first-order relationship (does ASP correlate with formulary tier?) but insufficient for any real commercial question.

Multiple linear regression (Y = β0 + β1X1 + β2X2 + … + βnXn + ε) extends this framework to accommodate the actual complexity of a drug’s commercial environment. It allows the forecaster to simultaneously quantify the independent contribution of marketing spend, competitive entry, sales force size, pricing, and clinical label specifics to revenue outcomes — while controlling for each factor’s covariation with the others.

The practical power of the ‘holding all else constant’ condition cannot be overstated. Without it, you cannot separate the revenue impact of increasing sales force headcount from the concurrent effect of a competitor’s patent expiry. With it, you can present a board with a defensible statement: ‘Each additional 100 detailing FTEs is associated with a $12.4M quarterly revenue increase, independent of pricing changes and competitive dynamics.’ That kind of precision changes budget conversations.
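As a rough illustration of the ‘holding all else constant’ idea, the sketch below fits an ordinary least squares model to synthetic data with NumPy. Every variable name, scale, and coefficient here is a hypothetical assumption chosen for the demo (the 12.4 simply echoes the illustrative figure above); nothing is drawn from real sales data.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200  # hypothetical number of product-quarter observations

# Hypothetical predictors (names and scales are illustrative assumptions)
fte_hundreds = rng.uniform(1, 10, n)        # detailing FTEs, in units of 100
net_price = rng.uniform(50, 150, n)         # net price per unit, in dollars
competitor_entered = rng.integers(0, 2, n)  # 1 if a competitor is on the market

# Synthetic "true" relationship, used only to generate demo data
true_beta = np.array([20.0, 12.4, 0.3, -15.0])  # intercept, FTE, price, competitor
X = np.column_stack([np.ones(n), fte_hundreds, net_price, competitor_entered])
revenue_musd = X @ true_beta + rng.normal(0, 2.0, n)

# Ordinary least squares: choose beta to minimize ||X @ beta - y||^2
beta_hat, *_ = np.linalg.lstsq(X, revenue_musd, rcond=None)

# beta_hat[1] estimates the revenue change per additional 100 FTEs,
# with price and competitor entry held constant
print(dict(zip(["intercept", "fte_hundreds", "net_price", "competitor"], beta_hat.round(2))))
```

Because price and competitor entry sit in the same design matrix, the FTE coefficient is estimated net of their effects. On real data the estimate is only as trustworthy as the model specification behind it.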

In litigation, multiple regression is the standard tool for constructing the ‘but-for’ world in pharmaceutical antitrust cases. Economists build models estimating what a drug’s price or sales volume would have been absent an alleged anti-competitive act — typically a reverse-payment or pay-for-delay settlement that delayed generic entry under a Paragraph IV agreement. The regression coefficient on the ‘post-settlement period’ dummy variable, after controlling for time trends, market dynamics, and comparator products, becomes the quantified damages estimate. These models have been accepted as evidence in cases involving billions in alleged harm.

Time-Series Models: ARIMA, SARIMA, and Hybrid Architectures

Time-series models are designed for a different question than multiple linear regression. Where multiple regression explains the drivers of sales, time-series models analyze the structure of the sales data itself to project forward.

Any time-series of drug sales decomposes into four components: level (the series mean), trend (the long-term directional pattern), seasonality (regular cycles such as the Q4 Medicaid pull-forward in specialty drugs), and noise (genuinely random variation). Time-series models isolate and project these components.

ARIMA (Auto-Regressive Integrated Moving Average) remains the workhorse for in-market products with stable sales histories. The AR component models the relationship between current sales and lagged sales values — capturing momentum. The I component applies differencing to remove trend and make the series stationary, which is a prerequisite for the AR and MA components to function correctly. The MA component models the relationship between current sales and lagged forecast errors, capturing how the series self-corrects after deviations.
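The differencing step can be shown in a few lines of plain Python. This is a minimal sketch on invented numbers, not a full ARIMA fit: it differences a trending series and then forecasts with the simplest integrated model, a random walk with drift, which is ARIMA(0,1,0) plus a constant. The sales figures and function names are assumptions for illustration.

```python
def difference(series, lag=1):
    """Return series[t] - series[t - lag] for every t where both values exist."""
    return [series[t] - series[t - lag] for t in range(lag, len(series))]


def drift_forecast(series, horizon):
    """Random walk with drift: last value plus h times the mean first difference."""
    diffs = difference(series)
    drift = sum(diffs) / len(diffs)
    return [series[-1] + drift * h for h in range(1, horizon + 1)]


# Hypothetical monthly unit sales with a steady upward trend
sales = [100, 104, 109, 113, 118, 122, 127, 131]

first_diffs = difference(sales)      # trend removed: values hover around a constant
next_two = drift_forecast(sales, 2)  # trend restored by re-integrating the drift
print(first_diffs, next_two)
```

Calling `difference` with a seasonal lag (for example `lag=12` on monthly data) gives the seasonal analogue of the same operation.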

For drugs with pronounced seasonal demand — influenza antivirals, allergy treatments, respiratory biologics — SARIMA extends ARIMA with seasonal parameters that explicitly capture the repeating annual cycle.

The most accurate time-series architectures in empirical pharmaceutical forecasting literature are hybrids. An ARIMA-Holt-Winters combination, for instance, captures the ARIMA model’s statistical rigor on trend and autocorrelation while leveraging exponential smoothing’s strength on level estimation during periods of volatile demand. Research published in the International Journal of Production Economics found hybrid ARIMA-exponential smoothing models consistently outperformed either approach alone on pharmaceutical sales datasets.

The key limitation of pure time-series models: they cannot explain why sales are moving. A sudden decline in a SARIMA forecast is indistinguishable between competitive entry, payer restriction, and a genuine demand shift. This is why sophisticated forecasting functions maintain both a time-series model for short-term operational forecasting and a multiple regression model for strategic scenario analysis.

Logistic Regression: Modeling Probability Outcomes

Logistic regression applies when the outcome is binary rather than continuous. Predicting whether a patient adheres to therapy beyond 12 months (Yes/No), whether a Paragraph IV challenge succeeds (Yes/No), whether a payer grants preferred formulary status (Yes/No) — these are logistic regression problems.

The model estimates the log-odds of the outcome occurring as a linear function of the predictors, then transforms this to a probability via the logistic function. In practical application, a market access team can build a logistic model trained on historical payer decisions across 50 molecules, with predictors including clinical superiority margin (QALY gain vs. comparator), annual treatment cost, availability of generics in class, and therapeutic area. The model’s output is a probability score for preferred tier placement for a new asset — a directly actionable input for the gross-to-net negotiation strategy.
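The log-odds-to-probability step can be sketched as follows. The intercept, coefficients, and feature names are hypothetical placeholders; in practice they would come from fitting the model to historical payer decisions.

```python
import math


def preferred_tier_probability(features, coefs, intercept):
    """Map a linear log-odds score to a probability with the logistic function."""
    log_odds = intercept + sum(coefs[name] * features[name] for name in coefs)
    return 1.0 / (1.0 + math.exp(-log_odds))


# Hypothetical fitted coefficients (illustrative assumptions only)
coefs = {
    "qaly_gain": 2.0,           # clinical superiority margin vs. comparator
    "annual_cost_100k": -0.8,   # annual treatment cost, in units of $100K
    "generics_in_class": -1.2,  # 1 if generics are available in the class
}
new_asset = {"qaly_gain": 0.5, "annual_cost_100k": 1.25, "generics_in_class": 0}

p_preferred = preferred_tier_probability(new_asset, coefs, intercept=-1.0)
print(round(p_preferred, 3))
```

Each coefficient shifts the log-odds additively, so its exponent can be read as an odds ratio for a one-unit change in that predictor.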

For IP teams, logistic regression can model the probability of a Paragraph IV filer prevailing in district court based on patent type (composition of matter vs. method of use), patent age at filing, prior litigation history on the same molecule, and the technology cluster of the underlying innovation. This turns litigation outcome prediction from qualitative legal opinion into a probabilistic, data-driven input for the revenue erosion scenario.

Survival Analysis: Cox Proportional Hazards for Time-to-Event Data

The Cox Proportional Hazards model occupies a distinct analytical niche that biopharma forecasters frequently overlook. It models the time until an event occurs — patent expiry, loss of exclusivity, generic entry, patient discontinuation — as a function of a set of covariates.

Its primary clinical application is modeling Overall Survival or Progression-Free Survival in oncology trials. Its underutilized commercial application is modeling the ‘time-to-generic entry’ as a function of patent portfolio characteristics. A Cox model trained on historical Paragraph IV timelines, with covariates including patent thicket density, number of active litigations, prior Orange Book certifications, and molecule revenue (a proxy for litigation incentive), can generate a probability distribution over generic entry timing rather than a single point estimate. This is a materially more useful input to a revenue model than a binary ‘patent expires in year X’ assumption.
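To show how a fitted Cox model yields a distribution rather than a point estimate, the sketch below applies the proportional hazards identity S(t | x) = S0(t)^exp(β·x) to a baseline survival curve. The baseline curve, coefficients, and covariate names are all hypothetical; estimating them from historical Paragraph IV timelines is the harder step and is not shown.

```python
import math


def generic_entry_survival(baseline_survival, coefs, covariates):
    """Scale a baseline 'no generic entry yet' curve by the asset's hazard ratio."""
    linear_predictor = sum(coefs[name] * covariates[name] for name in coefs)
    hazard_ratio = math.exp(linear_predictor)
    # Proportional hazards: S(t | x) = S0(t) ** exp(beta . x)
    return [s0 ** hazard_ratio for s0 in baseline_survival]


def entry_probability_by_period(survival):
    """Probability that entry happens in each period: S(t-1) - S(t)."""
    return [survival[t - 1] - survival[t] for t in range(1, len(survival))]


# Hypothetical baseline: probability of no generic entry by end of year 0..5
baseline = [1.0, 0.95, 0.85, 0.70, 0.50, 0.30]
# Hypothetical coefficients: a denser thicket lowers the hazard, higher revenue raises it
coefs = {"secondary_patents_per_10": -0.3, "log_revenue": 0.2}
asset = {"secondary_patents_per_10": 2.0, "log_revenue": 1.0}

curve = generic_entry_survival(baseline, coefs, asset)
entry_probs = entry_probability_by_period(curve)
print([round(p, 3) for p in entry_probs])
```

The per-year entry probabilities can then weight the revenue erosion scenarios in place of a single assumed expiry date.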

Investment Strategy Note: Model Selection

For portfolio managers evaluating biopharma assets: the model type underlying an issuer’s commercial forecast is a due diligence input. A company presenting a time-series extrapolation for a pre-launch asset has no valid basis for its projection — time-series requires in-market history. A company presenting a multiple regression model should be asked to disclose the training dataset, the validation methodology, and the coefficient values for key predictors. Opaque or undisclosed forecasting methodology is a risk flag, not a minor operational detail.

Key Takeaways: Part 2

- Multiple linear regression is the core strategic tool. Its ‘holding all else constant’ property allows causal attribution of revenue to specific drivers.

- Time-series models (ARIMA, SARIMA, hybrid architectures) are operationally superior for in-market products but cannot explain causation. Maintain both types in parallel.

- Logistic regression converts binary outcomes — payer decisions, litigation results, patient adherence — into probability scores that feed directly into revenue models.

- Cox Proportional Hazards models should be applied to generic entry timing, replacing single-date patent expiry assumptions with probability distributions.

- Scrutinize forecast methodology as a due diligence input. Pre-launch assets modeled with time-series extrapolation signal analytical weakness in the commercial team.

Part 3: Reading the Output — Turning Coefficients Into Commercial Narratives

A regression output is a numerical object. The job of the analyst is to turn it into a strategic argument. The following covers interpretation of the four key output elements — coefficients, p-values, confidence intervals, and goodness-of-fit measures — with the precision required for high-stakes decisions.

Coefficients: Direction, Magnitude, and Causal Framing

Each coefficient β in a multiple regression model represents the average change in the dependent variable (e.g., quarterly net revenue) for a one-unit increase in the corresponding independent variable, holding all other variables constant. Reading it requires attention to both sign and scale.

A positive coefficient on ‘market exclusivity months remaining’ confirms the intuitive expectation: more IP runway is associated with higher near-term revenue. A negative coefficient on ‘number of Paragraph IV filers’ indicates that each additional ANDA filer, even before any of them launches, is associated with lower branded revenue, presumably through payer anticipation effects and pre-emptive rebate concessions.

The magnitude matters for resource allocation. If the coefficient on ‘HCP digital promotion spend (per $100K)’ is +$1.8M in quarterly revenue and the coefficient on ‘DTC television spend (per $100K)’ is +$0.4M, the model has just produced the core argument for a budget reallocation — not as a qualitative hypothesis but as an empirical estimate derived from your own historical data.

Analysts should resist the temptation to assign causal interpretation to correlational coefficients without domain-knowledge validation. A positive coefficient on ‘number of patient advocacy groups active in the disease area’ may reflect genuine advocacy-driven uptake acceleration, or it may be a proxy for disease awareness driven by prior blockbuster launches in the same class. Regression quantifies associations; domain expertise determines whether the causal story is plausible.

P-values and Statistical Significance: The Signal-to-Noise Test

The p-value for each coefficient tests the null hypothesis that the true coefficient is zero, meaning the variable has no relationship with the outcome in the broader population. A p-value below 0.05 means you can reject that null hypothesis at the 5% significance level: if the true relationship were zero, there would be less than a 5% probability of observing a coefficient at least as far from zero as the one estimated.

For strategic purposes, variables with p > 0.10 should generally be treated as noise — included in the model for structural completeness if theoretically warranted, but not used as the basis for resource allocation decisions. Variables with p < 0.01 carry strong statistical evidence of a real relationship and can support confident operational conclusions.

One common misinterpretation: p-value significance does not imply practical significance. A marketing spend variable with p = 0.001 but a coefficient of +$8K per $100K spend is statistically real but commercially irrelevant. Always pair significance testing with effect-size interpretation.

Confidence Intervals: Quantifying Forecast Uncertainty

The 95% confidence interval around a coefficient is a range constructed so that, across repeated samples, 95% of intervals built this way would contain the true coefficient. For commercial planning, this interval is the quantified expression of forecast uncertainty. A coefficient of +$1.5M quarterly revenue per 100 FTE sales reps, with a 95% CI of [$0.2M, $2.8M], tells you the relationship is statistically distinguishable from zero (the interval excludes zero) but highly uncertain in magnitude. That uncertainty should propagate directly into your scenario planning: your upside scenario uses the upper bound, your downside uses the lower bound.
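The interval arithmetic is short enough to sketch directly. The example uses a normal approximation (small samples would use a t critical value instead), and the standard error of 0.663 is an assumption back-solved to reproduce the illustrative interval quoted above.

```python
from statistics import NormalDist


def confidence_interval(coef, std_err, level=0.95):
    """Two-sided interval: coef +/- z * standard error (normal approximation)."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return coef - z * std_err, coef + z * std_err


# Coefficient in $M of quarterly revenue per 100 FTEs; std_err is an assumed value
low, high = confidence_interval(coef=1.5, std_err=0.663)

# Scenario inputs taken straight from the interval
scenarios = {"downside": low, "base": 1.5, "upside": high}
print({name: round(value, 2) for name, value in scenarios.items()})
```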

For M&A due diligence, confidence intervals on a target company’s published market share projections (reverse-engineered via comp-set regression) can quantify the range of plausible valuations rather than anchoring on a point estimate. This reframes the negotiation around a distribution, not a single number.

R-squared vs. Adjusted R-squared: The Overfitting Trap

R-squared (R²) measures the proportion of outcome variance explained by the model. An R² of 0.78 means 78% of the variation in quarterly sales across your historical dataset is accounted for by the included predictors. This sounds reassuring. It frequently is not.

R² never decreases when you add more variables, and in practice it almost always rises, regardless of whether those variables have any genuine relationship with the outcome. Add enough variables and you can achieve an R² of 0.99 on historical data while building a model that cannot predict next quarter’s sales better than a coin flip. This is overfitting.

Adjusted R² applies a penalty for each additional variable, increasing only when the new variable’s contribution to explanatory power exceeds what chance alone would produce. Use Adjusted R² as the primary goodness-of-fit metric for model comparison. For strategic forecasting, a model with Adjusted R² of 0.65 and six coefficients that are all statistically significant and directionally logical is more useful than a model with Adjusted R² of 0.88 and fourteen variables, four of which are proxies for each other.
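The penalty is easy to see in the standard formula, Adjusted R² = 1 − (1 − R²)(n − 1)/(n − p − 1), where n is the number of observations and p the number of predictors. The sample size and R² values below are hypothetical, chosen to show raw R² rising while Adjusted R² falls.

```python
def adjusted_r_squared(r_squared, n_obs, n_predictors):
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    return 1.0 - (1.0 - r_squared) * (n_obs - 1) / (n_obs - n_predictors - 1)


n_quarters = 40  # hypothetical sample size

lean = adjusted_r_squared(0.80, n_quarters, n_predictors=6)
bloated = adjusted_r_squared(0.82, n_quarters, n_predictors=14)

# Raw R^2 went up (0.80 -> 0.82) but the adjusted figure went down
print(round(lean, 3), round(bloated, 3))
```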

The highest-authority forecasting models are not optimized for goodness-of-fit. They are optimized for interpretability and out-of-sample accuracy. Those are different objectives.

Residual Analysis: The Diagnostic Layer

After fitting any regression model, plot the residuals (actual minus predicted values) against fitted values and against each predictor. Three patterns indicate model misspecification.

A curved or parabolic pattern in residuals vs. fitted values means the relationship is non-linear, which typically calls for a polynomial or log-transformed variable. A funnel shape (residuals growing or shrinking as fitted values increase) indicates heteroskedasticity: the variance of the outcome is not constant across the range of predictions. This violates OLS assumptions and requires correction via weighted least squares or robust standard errors. Any systematic pattern linked to time indicates autocorrelated errors, which often signal an omitted variable: some time-correlated factor is affecting outcomes but is not included in the model.
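Residual plots can be complemented with a numeric check for time-linked patterns. One common choice, not named above and offered here only as a suggestion, is the Durbin-Watson statistic: values near 2 suggest no first-order autocorrelation, values near 0 suggest positive autocorrelation, and values near 4 suggest negative autocorrelation. The residual series below are invented.

```python
def durbin_watson(residuals):
    """Sum of squared successive differences divided by sum of squared residuals."""
    numerator = sum(
        (residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals))
    )
    denominator = sum(e * e for e in residuals)
    return numerator / denominator


# Hypothetical residuals that drift steadily over time (a time-linked pattern)
drifting = [-3, -2, -1, 0, 1, 2, 3]
# Hypothetical residuals that flip sign every period
alternating = [1, -1, 1, -1, 1, -1]

dw_drifting = durbin_watson(drifting)        # well below 2
dw_alternating = durbin_watson(alternating)  # well above 2
print(round(dw_drifting, 2), round(dw_alternating, 2))
```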

Clean residuals — random scatter centered near zero, no discernible patterns — are the signature of a correctly specified model. Presenting residual plots alongside coefficient tables is the mark of an analytically rigorous forecast submission.

Key Takeaways: Part 3

- Coefficient magnitude and sign convert to resource allocation decisions. Match the coefficient units to the budget units for direct ROI translation.

- P-values below 0.05 establish statistical significance; they do not establish commercial relevance. Always pair with effect-size interpretation.

- Confidence intervals quantify forecast uncertainty and should feed directly into scenario planning ranges.

- Use Adjusted R², not R², for model comparison. High R² on historical data is compatible with poor predictive accuracy out-of-sample.

- Residual analysis is non-optional. Patterns in residuals diagnose specific model failures that require targeted correction.

Part 4: Building the Engine — Data Sourcing, Variable Engineering, and Model Validation

Data Sourcing: The Infrastructure Problem Nobody Talks About

The single most common reason biopharma regression models underperform their theoretical potential is not model misspecification. It is data infrastructure failure. Many commercial teams are still building strategic forecasts by hand-transcribing figures from competitor press releases, PDF reimbursement compendia, and IQVIA exports that don’t share a common time grain or geographic scope. A model is only as good as the data fed into it.

The primary data inputs for a biopharma regression model come from seven source categories:

- Internal sales and finance systems: revenue by SKU, region, channel, and account; gross-to-net adjustments by payer segment; sales force call data from CRM.

- Internal clinical and regulatory records: trial endpoints, label language, designation status, post-marketing commitment timelines.

- Claims data (Symphony Health, IQVIA Xponent, Komodo Health): prescription-level demand, patient duration metrics, and payer mix.

- Patent and litigation data (USPTO, Orange Book, DrugPatentWatch): expiry dates, secondary patent portfolios, Paragraph IV certifications, and litigation outcomes.

- Reimbursement and formulary data: payer coverage policies, tier placements, prior authorization requirements.

- Epidemiology and market research: disease prevalence and incidence, diagnosis rates, treatment rates by subpopulation.

- Macroeconomic and healthcare policy data: GDP, healthcare spending growth, IRA negotiation status for Medicare Part D and Part B drugs.

The key structural requirement: all data sources must be harmonized to a common time grain and geographic scope before model construction begins. Weekly data aggregated to monthly. Country-level epidemiology matched to country-level sales. Fiscal quarters aligned to calendar quarters. Failure to harmonize creates ghost correlations that survive statistical testing but have no causal meaning.
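A minimal sketch of the weekly-to-monthly step, using only the standard library. It assigns each week to the month of its week-ending date, which is a simplifying assumption: weeks that straddle two months would need proration in a production pipeline. The dates and values are invented.

```python
from collections import defaultdict
from datetime import date


def weekly_to_monthly(weekly_rows):
    """Sum (week_ending_date, value) rows into {(year, month): total}."""
    totals = defaultdict(float)
    for week_ending, value in weekly_rows:
        # Simplification: the whole week is credited to the week-ending month
        totals[(week_ending.year, week_ending.month)] += value
    return dict(totals)


# Hypothetical weekly prescription counts
weekly = [
    (date(2024, 1, 7), 10),
    (date(2024, 1, 14), 12),
    (date(2024, 2, 4), 8),
]
monthly = weekly_to_monthly(weekly)
print(monthly)
```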

The ATC Classification Standard

For cross-product or market-level regressions, the WHO Anatomical Therapeutic Chemical (ATC) classification system is the required taxonomy. It assigns each drug to a five-level hierarchy: the anatomical main group, that is, the organ or system it acts on (Level 1), its therapeutic subgroup (Level 2), its pharmacological subgroup (Level 3), its chemical subgroup (Level 4), and its specific chemical substance (Level 5).

Grouping competitive products at ATC Level 4 (e.g., L01XC for monoclonal antibodies for oncology) defines the relevant competitive set for market share regressions. Grouping at Level 3 defines the therapeutic class for pricing comparables. Without ATC discipline, competitive benchmarking models mix products that do not compete clinically, producing coefficients that conflate distinct market dynamics.
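Because a full ATC code has a fixed seven-character layout (one letter, two digits, one letter, one letter, two digits), the level prefixes can be derived by slicing. The sketch below groups codes by their Level 4 prefix; the helper names are hypothetical, and A10BA02 (metformin) is the example used in WHO documentation.

```python
from collections import defaultdict


def atc_levels(code):
    """Split a full seven-character ATC code into its five hierarchical prefixes."""
    code = code.strip().upper()
    if len(code) != 7:
        raise ValueError(f"expected a 7-character ATC code, got {code!r}")
    return {1: code[:1], 2: code[:3], 3: code[:4], 4: code[:5], 5: code}


def group_by_level(codes, level):
    """Bucket full ATC codes by their prefix at the requested level."""
    groups = defaultdict(list)
    for code in codes:
        groups[atc_levels(code)[level]].append(code)
    return dict(groups)


levels = atc_levels("A10BA02")
competitive_sets = group_by_level(["A10BA02", "A10BB01", "A10BB12"], level=4)
print(levels, competitive_sets)
```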

Missing Data and Outlier Handling

Real-world pharmaceutical data has gaps — particularly in launch-year data, payer coverage in smaller markets, and litigation outcome timing. Imputation strategies depend on the nature of the missing data. For missing-at-random variables (e.g., a payer’s formulary decision not yet recorded), mean or median imputation within subgroups (therapeutic class, payer type) is defensible. For missing-not-at-random data (e.g., payers systematically failing to publish coverage for drugs they restrict), the absence itself is informative — a binary ‘coverage not published’ flag should be included as a separate variable.
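Both ideas, subgroup imputation and an explicit missingness flag, can be combined in one pass. This is a sketch under assumptions: the field names are hypothetical, and whether the flag is worth keeping depends on whether the missingness is believed to be informative.

```python
from statistics import median


def impute_with_flag(rows, value_key, group_key):
    """Fill missing values with the subgroup median and record a 0/1 missing flag."""
    observed = {}
    for row in rows:
        if row[value_key] is not None:
            observed.setdefault(row[group_key], []).append(row[value_key])

    result = []
    for row in rows:
        is_missing = row[value_key] is None
        new_row = dict(row)
        new_row[value_key + "_missing"] = 1 if is_missing else 0
        if is_missing:
            # Raises KeyError if a subgroup has no observed values at all
            new_row[value_key] = median(observed[row[group_key]])
        result.append(new_row)
    return result


# Hypothetical payer records with one unrecorded rebate
records = [
    {"payer_type": "commercial", "rebate_pct": 20.0},
    {"payer_type": "commercial", "rebate_pct": 30.0},
    {"payer_type": "commercial", "rebate_pct": None},
    {"payer_type": "medicare", "rebate_pct": 40.0},
]
cleaned = impute_with_flag(records, "rebate_pct", "payer_type")
print(cleaned[2])
```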

Outliers require domain judgment before statistical treatment. A single month of anomalously high sales should prompt investigation: channel stuffing ahead of a patent cliff, wholesaler inventory stocking before a price increase, or pandemic-driven demand surge. Statistical removal of a genuine market event produces a model that cannot explain the most commercially relevant scenarios. Only data entry errors and measurement artifacts should be removed. True market anomalies should be retained and explained with a binary event flag.

Variable Engineering: From Raw Data to Predictive Inputs

Raw data variables rarely enter a regression model in their native form. Variable engineering converts source data into inputs with stronger linear relationships to the outcome, cleaner statistical properties, and greater interpretability.

Log-transformation is appropriate for sales revenue (which is right-skewed and multiplicative in nature), patient population sizes, and marketing spend variables where percentage changes are more economically meaningful than absolute ones. A 1% increase in DTC spend has a different commercial implication when the base is $10M versus $500M.

Interaction terms create new variables that capture how the effect of one predictor depends on the level of another. The most commercially important interaction in biopharma forecasting is between time-on-market and competitive entry: a drug’s sales trajectory after year five has a fundamentally different relationship to marketing spend depending on whether a direct competitor has entered. A model without this interaction term conflates the two regimes.

Lag variables encode temporal dynamics. Current quarter marketing spend rarely translates to current quarter prescription volume; there is a prescribing decision lag. Including spend lagged by one or two quarters often improves coefficient stability and predictive accuracy substantially.

Binary event flags encode discontinuous events that standard continuous variables cannot capture: the quarter a biosimilar first launched, the month a Paragraph IV challenge was filed, the period following a black-box warning addition to the label. Without these flags, the model’s residuals will show systematic patterns at exactly the periods when the forecast is most commercially consequential.
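The four transformations described above can be sketched together in one feature builder. The function and column names are hypothetical, and the interaction shown (lagged log spend times a competitor indicator) is just one simple way to let the spend effect differ once a competitor has entered.

```python
import math


def engineer_features(spend, competitor_on_market, event_quarter, lag=1):
    """Build log, lag, interaction, and event-flag features for each quarter."""
    rows = []
    for t in range(lag, len(spend)):  # the first `lag` quarters have no lagged value
        log_spend_lag = math.log(spend[t - lag])
        rows.append({
            "quarter_index": t,
            "log_spend": math.log(spend[t]),
            "log_spend_lag": log_spend_lag,
            "competitor_on_market": competitor_on_market[t],
            # Interaction: spend effect is allowed to change after competitor entry
            "log_spend_lag_x_competitor": log_spend_lag * competitor_on_market[t],
            # Event flag: 1 only in the quarter of the discontinuous event
            "event_flag": 1 if t == event_quarter else 0,
        })
    return rows


# Hypothetical quarterly marketing spend (in $K) and competitor indicator
features = engineer_features(
    spend=[100.0, 200.0, 400.0],
    competitor_on_market=[0, 0, 1],
    event_quarter=2,
)
print(features[-1])
```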

The Master Independent Variable Reference Table

The following table consolidates the variable framework for a comprehensive biopharma revenue regression model. It is designed as a quality-control checklist for forecasting teams, ensuring no material driver is omitted from the model specification.

| Category | Variable | Quantification Method | Strategic Rationale | Primary Data Sources |
|---|---|---|---|---|
| Commercial | Marketing spend by channel | Dollars by DTC, HCP, digital, medical education | Channel-level ROI quantification; identifies diminishing-return thresholds | Internal Finance/Marketing |
| Commercial | Sales force detailing reach | FTE count; % of target physicians detailed quarterly | Quantifies sales force productivity independent of market growth | CRM, Internal Sales Ops |
| Commercial | List price (WAC) | Wholesale Acquisition Cost per unit | Baseline pricing input; required for gross-to-net reconstruction | Internal Finance |
| Commercial | Net price (ASP) | Average Sales Price after rebates and chargebacks | Actual revenue driver; WAC minus gross-to-net adjustments | Internal Finance, Payer Contracts |
| Commercial | Co-pay card redemption rate | % of prescriptions using manufacturer assistance | Quantifies patient cost sensitivity and access program effectiveness | Specialty Pharmacy Data |
| Market/Competitive | Number of branded competitors | Count of approved branded drugs in ATC Level 4 class | Market saturation proxy; directly associated with pricing pressure | IQVIA, DrugPatentWatch |
| Market/Competitive | Number of generic/biosimilar competitors | Count of approved generics or biosimilars in class | Primary driver of volume and price erosion post-LoE | Orange Book, Purple Book, DrugPatentWatch |
| Market/Competitive | Competitor market share (TRx) | Competitor’s share of total prescriptions | Direct measure of relative competitive position | IQVIA Xponent, Symphony Health |
| Market/Competitive | Competitor launch event | Binary (1/0) for month of major competitive entry | Captures structural market-share inflections | Press Releases, FDA Approvals |
| Clinical/Epidemiological | Target patient population | Disease incidence (new cases/year) and prevalence (total cases) | Foundational population input for patient-based forecasting | Epidemiology literature, claims data |
| Clinical/Epidemiological | Diagnosis rate | % of prevalent patients who receive a diagnosis | First ‘haircut’ on total market to addressable population | Market research, claims data |
| Clinical/Epidemiological | Treatment rate | % of diagnosed patients actively receiving pharmacotherapy | Second ‘haircut’ defining the treated market size | Market research, claims data |
| Clinical/Epidemiological | OS/PFS benefit vs. SoC | Months of improvement in Overall Survival or PFS in pivotal trial | Quantifies clinical differentiation; directly predictive of uptake velocity | Published trial data, FDA label |
| Clinical/Epidemiological | Grade 3+ adverse event rate | % of patients experiencing severe adverse events in pivotal trial | Quantifies tolerability liability; inversely associated with market penetration | Published trial data, FDA label |
| Clinical/Epidemiological | Real-world adherence rate | % of patients remaining on therapy at 12 months | Refines revenue-per-patient calculation for chronic therapies | Specialty pharmacy data, claims data |
| IP | Composition of matter patent expiry | Years to primary composition-of-matter patent expiration | Primary LoE date; the anchor for revenue erosion modeling | DrugPatentWatch, Orange Book, USPTO |
| IP | Total secondary patent count | Count of formulation, method-of-use, manufacturing process patents | Patent thicket density proxy; associated with extended effective exclusivity | DrugPatentWatch, USPTO |
| IP | Post-approval patent filings | Count or % of patents filed after initial FDA approval | Evergreening strategy indicator; associated with average 7.7 additional exclusivity years | DrugPatentWatch |
| IP | Method-of-use patent share | % of portfolio classified as method-of-use patents | Independently associated with 18% higher peak sales in academic analysis | DrugPatentWatch, Patent analytics platforms |
| IP | Active Paragraph IV certifications | Count of outstanding ANDA/NDA Paragraph IV challenges | Litigation pressure indicator; predicts probability of early generic entry | DrugPatentWatch, Legal databases |
| IP | Litigation win rate | Historical % of Paragraph IV defenses successful for the brand | Calibrates litigation risk probability in revenue erosion scenarios | DrugPatentWatch, Legal databases |
| Regulatory | Orphan Drug Designation | Binary (1/0) | Associated with 7-year market exclusivity, premium pricing, faster uptake in small populations | FDA, EMA |
| Regulatory | Breakthrough Therapy Designation | Binary (1/0) | Associated with accelerated review, stronger payer evidence package, earlier revenue generation | FDA |
| Regulatory | Priority Review | Binary (1/0) | Shortens the FDA review goal from 10 months (standard review) to 6 months; accelerates time-to-revenue | FDA |
| Regulatory | Accelerated Approval status | Binary (1/0) | Conditional approval on surrogate endpoint; carries post-market withdrawal risk if confirmatory trial fails | FDA |
| Payer/Access | Formulary tier | Ordinal (1=Preferred, 2=Non-Preferred, 3=Not Covered) | Direct measure of patient cost exposure; a less favorable (higher-numbered) tier directly reduces uptake | Payer coverage policies |
| Payer/Access | Prior authorization requirement | Binary (1/0) | Access friction variable; reduces filled prescriptions independent of patient demand | Payer coverage policies |
| Payer/Access | Step edit requirement | Binary (1/0) | Requires demonstrated failure on cheaper alternative before coverage; delays uptake and reduces peak penetration | Payer coverage policies |
| Payer/Access | IRA Medicare negotiation status | Binary (1/0) or negotiated price % reduction | For eligible drugs, mandatory price negotiation under IRA reduces net revenue; quantifies policy risk | CMS, Public disclosure |
| Macro | Healthcare spending growth rate | Annual % change in national healthcare expenditure | Controls for macro-level demand expansion independent of product-specific drivers | Government statistics |
| Macro | Major health event | Binary (1/0) for pandemic or public health emergency period | Captures exogenous demand shocks that disrupt baseline prescribing patterns | Public health data |

Model Validation: The Five-Step Protocol

A regression model that has not been formally validated is an unverified hypothesis. In a commercial forecasting context, presenting an unvalidated model to leadership is the equivalent of submitting clinical data without a control arm. The following five-step validation protocol is the minimum standard for a strategically credible forecast.

Step 1, data split. Partition the historical dataset into a training set (typically 70-80% of observations) and a held-out test set (20-30%). The model is fitted exclusively on training data. Performance evaluation on the test set simulates out-of-sample predictive accuracy.

Step 2, k-fold cross-validation. For datasets with limited observations (common in early-launch or rare-disease forecasting), k-fold cross-validation is preferred over a single train-test split. The data is divided into k subsets; the model is trained on k-1 folds and evaluated on the remaining fold, rotating through all k configurations. The k performance metrics are averaged for a stable accuracy estimate.
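The rotation described in Step 2 can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: `k_fold_indices` and `cross_validate` are hypothetical helper names, and the `fit`, `predict`, and `score` callables are placeholders for whatever regression and error metric the team actually uses.

```python
import random

def k_fold_indices(n_obs, k, seed=0):
    """Yield (train_idx, test_idx) index lists for k-fold cross-validation."""
    idx = list(range(n_obs))
    random.Random(seed).shuffle(idx)          # shuffle once, reproducibly
    folds = [idx[i::k] for i in range(k)]     # k folds of near-equal size
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test

def cross_validate(xs, ys, fit, predict, score, k=5):
    """Average an out-of-fold error metric across the k rotations."""
    fold_scores = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        preds = [predict(model, xs[i]) for i in test]
        fold_scores.append(score([ys[i] for i in test], preds))
    return sum(fold_scores) / len(fold_scores)
```

One caveat on the design: random shuffling suits cross-sectional data (for example, one row per launched drug). For a single product's monthly time series, shuffled folds let the model train on future periods, so a forward-chaining split is usually the safer choice there.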

Step 3, benchmark comparison. The regression model’s test-set accuracy must be compared against at least two naive benchmarks: the random walk (last period’s sales, no change) and the moving average. If the regression model does not outperform both benchmarks on MAPE (Mean Absolute Percentage Error) or RMSPE (Root Mean Square Percentage Error), the added complexity is not justified. This comparison is frequently omitted in internal forecasting reviews and should be standard practice.
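A minimal sketch of the benchmark check follows, assuming one-step-ahead forecasts over the test window and no zero actuals (MAPE is undefined when an actual is zero). The function names and the three-period moving-average window are illustrative assumptions, not part of the protocol itself.

```python
def mape(actual, forecast):
    """Mean Absolute Percentage Error, in percent. Assumes no zero actuals."""
    return 100.0 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)

def random_walk_forecast(history, test_actuals):
    """Naive benchmark: each period's forecast is the prior period's actual."""
    return [history[-1]] + list(test_actuals[:-1])

def moving_average_forecast(history, test_actuals, window=3):
    """Benchmark: forecast is the mean of the last `window` observed actuals."""
    series, out = list(history), []
    for actual in test_actuals:
        out.append(sum(series[-window:]) / window)
        series.append(actual)                 # roll the window forward
    return out

def beats_benchmarks(history, test_actuals, model_forecast, window=3):
    """Compare the model's test-set MAPE against both naive benchmarks."""
    model = mape(test_actuals, model_forecast)
    rw = mape(test_actuals, random_walk_forecast(history, test_actuals))
    ma = mape(test_actuals, moving_average_forecast(history, test_actuals, window))
    return {"model": model, "random_walk": rw, "moving_average": ma,
            "justified": model < rw and model < ma}
```

If `justified` comes back `False`, the simpler benchmark is the more defensible planning basis until the regression is respecified.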

Step 4, residual diagnostics. Plot residuals against fitted values, time, and each predictor. Confirm the absence of systematic patterns. Apply the Durbin-Watson test for autocorrelation in time-series residuals (a DW statistic substantially below 2.0 indicates positive autocorrelation, requiring a correction such as lagged residuals or ARIMA-based error modeling). Apply the Breusch-Pagan test for heteroskedasticity.
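The Durbin-Watson statistic itself is simple enough to compute by hand, which makes the "substantially below 2.0" rule easy to sanity-check. The sketch below uses the standard definition (sum of squared successive residual differences divided by the sum of squared residuals); the function name is illustrative, and the residuals are assumed to be in time order.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic for time-ordered regression residuals.

    Values near 2 suggest no first-order autocorrelation, values toward 0
    suggest positive autocorrelation, values toward 4 suggest negative.
    """
    num = sum((residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals)))
    den = sum(e * e for e in residuals)
    return num / den

# Illustrative residual series (hypothetical data):
persistent = [1.0, 1.0, 1.0, 1.0]        # errors that never change sign
alternating = [1.0, -1.0, 1.0, -1.0]     # errors that flip every period
dw_persistent = durbin_watson(persistent)
dw_alternating = durbin_watson(alternating)
```

The statistic only flags first-order autocorrelation; critical values for a formal test depend on sample size and the number of regressors, so a statistics package is the better tool for the final write-up.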

Step 5, sensitivity analysis. Systematically vary each key input assumption by ±10% and ±25% and observe the resulting forecast change. A model where a 10% change in a single variable produces a 50% change in the forecast is dangerously fragile — the output is dominated by one assumption, and the forecast is not credible as a planning basis. Document sensitivity results alongside the central forecast in all strategic submissions.
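A one-at-a-time sensitivity sweep along these lines might look like the sketch below. The `peak_sales` model and its inputs are hypothetical placeholders; the 50% response to a 10% shock is the fragility threshold named in the text.

```python
def sensitivity_table(model, base_inputs, shocks=(-0.25, -0.10, 0.10, 0.25)):
    """Shock each input in turn; return (input, shock, relative forecast change)."""
    base = model(**base_inputs)
    rows = []
    for name, value in base_inputs.items():
        for shock in shocks:
            shocked = dict(base_inputs, **{name: value * (1 + shock)})
            rows.append((name, shock, model(**shocked) / base - 1))
    return rows

def fragile_inputs(rows, shock=0.10, threshold=0.50):
    """Inputs where a +/-`shock` move changes the forecast by `threshold` or more."""
    return sorted({name for name, s, change in rows
                   if abs(abs(s) - shock) < 1e-9 and abs(change) >= threshold})

# Hypothetical toy forecast model, for illustration only.
def peak_sales(patients, share, net_price):
    return patients * share * net_price

rows = sensitivity_table(peak_sales, {"patients": 50_000, "share": 0.2, "net_price": 30_000})
flagged = fragile_inputs(rows)
```

Because the toy model is purely multiplicative, every shock passes through one-for-one and nothing is flagged. A real model with interaction or exponent terms is where this check earns its keep.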

Key Takeaways: Part 4

- Data infrastructure failure — fragmented, unharmonized source data — is the primary cause of regression model underperformance, not model misspecification.

- ATC Level 4 classification is the required taxonomy for competitive benchmarking in market-level regressions.

- Variable engineering (log-transforms, interaction terms, lag variables, event flags) converts raw source data into inputs with appropriate statistical properties.

- The five-step validation protocol — data split, cross-validation, benchmark comparison, residual diagnostics, sensitivity analysis — is the minimum standard for a commercially credible forecast.

- The Master Variable Reference Table is a quality-control checklist, not an exhaustive list. Every model should be evaluated against it for omitted-variable risk.

Part 5: The IP Dimension — Patent Portfolios as Quantifiable Forecasting Variables

Why IP Is Not Background Context

Revenue in branded pharma is a patent-protected annuity. The moment that protection expires, or more precisely the moment effective competition begins, the annuity converts into a declining stream at a rate determined by generic or biosimilar market dynamics. With roughly $200 billion in global biopharma revenue facing loss of exclusivity by 2030 (estimates range from $180B to $250B depending on scope and definition), accurately modeling LoE timing and post-cliff erosion rates is not an analytical nicety. It is the central challenge of biopharma financial planning.

The error most forecasting models make is treating ‘patent expiry’ as synonymous with ‘generic entry.’ They are not the same event. The gap between them — which can range from zero to more than a decade — is determined by the density of the secondary patent portfolio, the strategic behavior of Paragraph IV filers, and the litigation outcomes that follow. A model that ignores this gap systematically underestimates the effective exclusivity period of drugs with robust IP strategies and overestimates the exclusivity of drugs with weak secondary portfolios.

Understanding Patent Thickets: The IP Valuation Architecture

The term ‘patent thicket’ describes the strategic accumulation of overlapping patents around a single drug product to delay generic entry beyond the primary composition-of-matter expiry date. This is not a secondary consideration for the largest revenue generators in pharma — it is the primary IP lifecycle management strategy.

For the top-selling drugs in the U.S. market, the average patent portfolio contains approximately 74 granted patents. In Europe, the equivalent figure is around 18 patents per product. The disparity reflects both the more expansive U.S. patent eligibility standards and the more aggressive IP lifecycle management strategies deployed for the U.S. market, where branded drug revenues are disproportionately concentrated relative to global share.

These portfolios contain four primary patent types, each with distinct commercial value and litigation exposure.

Composition-of-matter patents, covering the active chemical or biological entity itself, are the most legally robust and therefore the most commercially valuable. They are also the shortest-lived relative to commercial launch dates, because they are typically filed early in development, consuming exclusivity years before the drug reaches the market.

Formulation patents cover specific dosage forms, delivery mechanisms, extended-release technologies, or combination products. They are filed later, often post-approval, and are associated with the 7.7 years of additional exclusivity that DrugPatentWatch analysis attributes to post-approval patent filings. Their litigation vulnerability varies: a formulation patent on a genuinely superior extended-release mechanism is more defensible than one on a marginal reformulation.

Method-of-use patents cover specific therapeutic indications or dosing regimens. Academic analysis of the relationship between patent type and commercial outcomes has found that method-of-use patents are independently associated with approximately 18% higher peak sales, likely because they reflect the discovery of differentiated efficacy in specific patient populations that drives prescriber adoption.

Manufacturing process patents cover production methods and are typically the weakest defense against generic entry, as process patents are difficult to enforce if the generic manufacturer uses a different process to produce the same compound.

The IP Strength Score: A Composite Forecasting Variable

Rather than encoding IP as a single binary variable (‘patent active: Yes/No’) or a simple continuous variable (‘years to primary expiry’), a more predictive approach is to construct a composite IP Strength Score. This score captures the multidimensional nature of IP defensibility as a single input to the revenue regression model.

The score is built from the following components, weighted by their empirical association with extended effective exclusivity in historical datasets:

- Primary composition-of-matter years remaining (highest weight: this expiry anchors the baseline loss-of-exclusivity date).

- Total secondary patent count (each additional patent in the portfolio is associated with measurable delay in the median generic launch date).

- Method-of-use patent share of total portfolio (higher share is associated with premium pricing and superior uptake).

- Post-approval patent filing rate (number of patents filed after the FDA approval date, normalized by years on market).

- Active Paragraph IV challenge count (inversely weighted: more challenges indicate higher litigation pressure and earlier expected generic entry).

- Brand litigation win rate on prior challenges in the same drug class.
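One way to turn these components into a single number is a capped, weighted sum on a 0-100 scale. The sketch below is illustrative only: the weights and normalization caps are hypothetical placeholders chosen to respect the ordering described above (composition-of-matter years weighted highest, Paragraph IV count inverted). In practice they would be estimated from historical exclusivity outcomes.

```python
from dataclasses import dataclass

@dataclass
class PatentProfile:
    com_years_remaining: float             # years to composition-of-matter expiry
    secondary_patent_count: int
    method_of_use_share: float             # 0..1 share of portfolio
    post_approval_filings_per_year: float
    active_piv_challenges: int
    litigation_win_rate: float             # 0..1, brand wins in the drug class

# HYPOTHETICAL weights (sum to 1) and caps; calibrate on real data before use.
WEIGHTS = {"com": 0.30, "secondary": 0.20, "mou": 0.10,
           "post_approval": 0.10, "piv": 0.15, "win_rate": 0.15}
CAPS = {"com": 12.0, "secondary": 75.0, "post_approval": 5.0, "piv": 10.0}

def _scaled(value, cap):
    """Clamp to [0, cap] and rescale to [0, 1]."""
    return min(max(value, 0.0), cap) / cap

def ip_strength_score(p: PatentProfile) -> float:
    """Composite 0-100 score; higher means stronger expected exclusivity."""
    parts = {
        "com": _scaled(p.com_years_remaining, CAPS["com"]),
        "secondary": _scaled(p.secondary_patent_count, CAPS["secondary"]),
        "mou": _scaled(p.method_of_use_share, 1.0),
        "post_approval": _scaled(p.post_approval_filings_per_year, CAPS["post_approval"]),
        "piv": 1.0 - _scaled(p.active_piv_challenges, CAPS["piv"]),   # inverse weight
        "win_rate": _scaled(p.litigation_win_rate, 1.0),
    }
    return 100.0 * sum(WEIGHTS[k] * parts[k] for k in WEIGHTS)
```

Capping each component keeps one extreme input (a 200-patent portfolio, say) from swamping the rest of the score.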

Data for all these inputs is available from DrugPatentWatch, the Orange Book (for small molecules), the Purple Book (for biologics and biosimilars), and the USPTO full-text patent database. No other commercially available source integrates patent portfolio data, Paragraph IV certification history, and litigation outcomes with the granularity required for this type of composite variable construction.

In a regression model, the IP Strength Score has two primary applications. As a predictor of current-period revenue, it captures the pricing and market-share premium associated with strong IP protection. As a predictor of future revenue trajectory, it provides the variable that shapes the revenue erosion curve — a high score predicts a slower, more gradual post-LoE decline; a low score predicts the rapid 80-90% revenue collapse within 12 months of first generic entry that characterizes drugs with thin secondary portfolios.

Modeling the Patent Cliff: The Erosion Curve, Not the Cliff Edge

The ‘patent cliff’ metaphor is useful for communication but misleading as a modeling assumption. Revenue does not fall instantaneously on the day the primary patent expires. It erodes according to a curve determined by the secondary patent landscape, the number and type of generic entrants, the drug’s formulation complexity (oral solid dose generics enter faster than injectable or biologic products), and the market’s prior authorization requirements for therapeutic substitution.

The historical generic erosion curve for oral solid-dose small molecules with thin secondary patent portfolios follows a well-documented pattern: branded volume typically falls 80% within the first 12 months of multi-source generic availability, with the remaining branded volume stabilizing around 10-15% of pre-generic share, concentrated in patients or payers with loyalty to the brand or formulary barriers to switching.

For biologics, the biosimilar erosion curve is structurally different. Biosimilar interchangeability — the FDA designation allowing pharmacist substitution without prescriber intervention — is the primary determinant of erosion rate. Interchangeable biosimilars erode the reference biologic’s market share at rates closer to the small-molecule generic dynamic. Non-interchangeable biosimilars, which require active prescriber switching, produce much slower penetration curves. The Humira (adalimumab) biosimilar experience in the U.S. after the 2023 entry of Hadlima, Hyrimoz, and others at significant WAC discounts, versus the slower penetration of non-interchangeable biosimilars in earlier reference biologic markets, illustrates this divergence empirically.

A regression model encoding the erosion curve must include the following time-varying variables post-LoE: number of generic or biosimilar competitors on market at each period, whether any entrant has FDA interchangeability designation, the price discount of the leading generic/biosimilar relative to branded WAC, and the active Paragraph IV challenge count (as a leading indicator of pending new entrants). These variables, combined with the pre-LoE IP Strength Score, produce an erosion trajectory rather than a cliff-edge discontinuity.
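As a rough illustration of "trajectory rather than cliff edge," the sketch below models branded share as exponential decay toward a floor, with the decay rate scaled by the number of entrants in each month. Everything about the functional form is an assumption made for illustration: the default rate is back-solved from the small-molecule pattern cited above (about 20% of share remaining at month 12, floor near 12.5%), and `full_competition` and `slow_factor` are hypothetical parameters that a fitted regression would replace.

```python
import math

def decay_rate(share_at_12m=0.20, floor=0.125):
    """Monthly rate k solving floor + (1 - floor) * exp(-12k) = share_at_12m."""
    return -math.log((share_at_12m - floor) / (1.0 - floor)) / 12.0

def branded_share_path(competitors_by_month, interchangeable=True, floor=0.125,
                       k_full=None, full_competition=5, slow_factor=0.3):
    """Branded share (fraction of pre-entry share) for each month after first entry.

    competitors_by_month: entrants on market in each month (time-varying).
    interchangeable: for biologics, whether any entrant is FDA-interchangeable;
        leave True for small-molecule generics.
    full_competition, slow_factor: HYPOTHETICAL scaling parameters.
    """
    k_full = decay_rate(floor=floor) if k_full is None else k_full
    cumulative, path = 0.0, []
    for n in competitors_by_month:
        intensity = min(n, full_competition) / full_competition
        cumulative += k_full * intensity * (1.0 if interchangeable else slow_factor)
        path.append(floor + (1.0 - floor) * math.exp(-cumulative))
    return path

generic_path = branded_share_path([5] * 12)
biosimilar_path = branded_share_path([5] * 12, interchangeable=False)
```

The two paths differ only in the interchangeability flag, which is the contrast the biologics paragraph draws.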

Evergreening: The Technology Roadmap for IP Lifecycle Extension

Evergreening refers to the strategy of extending a product’s effective market exclusivity through sequential IP filings on product modifications, new indications, or delivery system innovations — rather than relying solely on the primary composition-of-matter patent. It is legal, widely practiced, and consequential for commercial forecasting.

The evergreening toolkit includes seven primary strategies, each with distinct IP and commercial implications.

New formulation development (extended-release, subcutaneous from intravenous, once-daily from twice-daily) generates formulation patents and, for drugs requiring clinical data on the new formulation, potential new data exclusivity periods. AstraZeneca’s Nexium (esomeprazole), the S-enantiomer of Prilosec (omeprazole), is the canonical example — a new composition-of-matter patent on a stereoisomer extended commercially relevant exclusivity while offering modest therapeutic differentiation.

New indication approval generates additional method-of-use patents and, for drugs receiving orphan designation in the new indication, additional periods of orphan exclusivity independent of existing patent life. The new indication may also reset clinical data exclusivity in certain jurisdictions.

Pediatric exclusivity, obtained by conducting FDA-requested pediatric studies under the Best Pharmaceuticals for Children Act, adds six months of exclusivity beyond all existing patents and exclusivity periods for small molecules. It is available for any drug for which the FDA issues a Written Request for pediatric studies, regardless of whether the pediatric indication has commercial significance.

Fixed-dose combination products generate new composition-of-matter patents on the combination (if novel) and can qualify for new regulatory exclusivity if the combination receives independent FDA approval. A hypothetical fixed-dose combination of Eliquis (apixaban) with aspirin, for instance, would represent a lifecycle extension pathway separate from the standalone apixaban franchise.

Drug-device combination products (prefilled syringes, autoinjectors, inhaler combinations) generate device patents that follow-on manufacturers must design around or challenge, in addition to the patents covering the drug itself. The autoinjector IP around Humira (adalimumab) was one of the primary mechanisms delaying biosimilar interchangeability and commercial uptake in the U.S. market.

New salt or polymorph forms can generate composition-of-matter patents if the new form has genuinely distinct physical-chemical properties. Patent applicability and defensibility in this category varies substantially by jurisdiction and has been subject to increasing legal scrutiny.

REMS (Risk Evaluation and Mitigation Strategy) programs, while not patents, create practical barriers to generic substitution by requiring brand-specific safety protocols that generics must reference or replicate. REMS have been used strategically to delay shared system establishment between brand and generic manufacturers.

Investment Strategy Note: IP Valuation

For institutional investors evaluating biopharma equity: the IP Strength Score framework translates directly to a valuation adjustment factor. A drug with a composition-of-matter expiry in 2028 but a robust secondary patent portfolio generating an effective exclusivity runway to 2033-2034 should be valued using a later probability-weighted LoE date than a drug with the same primary expiry and a thin secondary portfolio. The difference in the discounted revenue streams over those five to six additional years, capitalized at sector-appropriate discount rates, is material. Current consensus sell-side models frequently use single-point LoE date assumptions that undervalue strong IP portfolios and overvalue weak ones. Proprietary IP Strength Scoring using DrugPatentWatch data is an alpha-generating input to relative-value analysis in biopharma equity.
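The valuation gap can be made concrete with a probability-weighted LoE calculation. The sketch below is a simplified illustration: flat pre-LoE revenue, a step-down to a retained fraction after LoE, and scenario probabilities that are purely hypothetical. Only the 2028 and 2033 dates echo the example in the text.

```python
def npv_with_loe(annual_revenue, start_year, loe_year, discount_rate,
                 post_loe_retention=0.15, horizon_year=2036):
    """NPV of a flat revenue stream that steps down to a retained share at LoE."""
    total = 0.0
    for year in range(start_year, horizon_year + 1):
        revenue = annual_revenue if year < loe_year else annual_revenue * post_loe_retention
        total += revenue / (1.0 + discount_rate) ** (year - start_year + 1)
    return total

def probability_weighted_npv(scenarios, **kwargs):
    """scenarios: list of (probability, loe_year); probabilities must sum to 1."""
    if abs(sum(p for p, _ in scenarios) - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * npv_with_loe(loe_year=year, **kwargs) for p, year in scenarios)

# HYPOTHETICAL inputs: $2,000M flat revenue, 10% discount rate.
common = dict(annual_revenue=2_000.0, start_year=2025, discount_rate=0.10)
strong_ip = probability_weighted_npv([(0.2, 2028), (0.8, 2033)], **common)
thin_ip = probability_weighted_npv([(0.9, 2028), (0.1, 2033)], **common)
valuation_gap = strong_ip - thin_ip
```

A single-point model that assumes 2028 for both drugs would report the same value for each; the gap above is what that simplification hides.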

Key Takeaways: Part 5

- Patent expiry and effective generic entry are not the same event. The gap between them, determinable through secondary patent portfolio analysis, is the critical variable for revenue erosion timing.

- The U.S. average portfolio for top-selling drugs contains approximately 74 patents. 72% of those patents are filed post-FDA-approval, generating an average of 7.7 years of additional effective exclusivity.

- Method-of-use patents are independently associated with ~18% higher peak sales. Portfolio type mix is a commercially relevant variable, not just a legal detail.

- Construct a composite IP Strength Score combining patent count, type mix, post-approval filing rate, Paragraph IV challenge count, and litigation win rate. This replaces a binary ‘on/off patent’ input with a continuous, differentially predictive variable.

- Biosimilar interchangeability designation is the primary determinant of biologics erosion rate. Non-interchangeable biosimilars produce structurally slower penetration curves than FDA-designated interchangeables.

- For equity investors: proprietary IP Strength Scoring represents an alpha-generating input to relative-value analysis in biopharma — consensus models systematically miscode LoE timing for drugs with robust secondary portfolios.

Part 6: Decoding the Clinic — Phase-by-Phase Data Integration

Clinical trial data is the most underutilized source of commercial forecasting intelligence in biopharma. Most companies treat clinical data as a regulatory obligation — the information required to get the drug approved — rather than as a predictive commercial dataset that quantifies the drug’s value to prescribers, patients, and payers before those stakeholders have seen a sales representative.

The degree to which a drug demonstrably outperforms the existing standard of care on clinically meaningful endpoints is not just a marketing message. It is a direct, quantifiable predictor of prescriber adoption velocity, payer reimbursement willingness, and sustainable net price. A regression model connecting trial endpoints to commercial outcomes is the bridge between the scientific and commercial functions of a pharmaceutical company — and building it is one of the highest-value analytical projects a forecasting team can undertake.

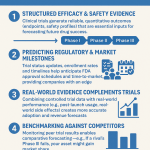

Phase I: De-Risking Probability of Success

Phase I trials assess safety, maximum tolerated dose, and pharmacokinetic/pharmacodynamic profiles in small patient cohorts. The commercial forecasting value of Phase I data is primarily in probability-of-success (POS) estimation rather than direct sales projection.

The key Phase I outputs with commercial relevance are the PK/PD profile (half-life determines dosing frequency, which directly affects patient adherence and market positioning versus competitors), the MTD and dose-limiting toxicity profile (early signals of tolerability liability relative to the therapeutic class), and the formulation characteristics (bioavailability, food-effect, route of administration) that determine the drug’s practical convenience for patients and prescribers.

In risk-adjusted NPV (rNPV) models, Phase I data refines the probability of reaching Phase II and Phase III. A drug showing favorable PK suitable for once-daily oral dosing in a market where competitors require multiple daily doses or subcutaneous injection commands a meaningfully higher POS and therefore a higher rNPV, even before Phase II efficacy data is available. This POS adjustment is the Phase I variable’s primary contribution to the forecast model.
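The mechanics of that POS adjustment can be sketched as follows. The function is a simplified rNPV, under the assumption that each stage's cost is incurred only if all prior stages succeeded and that commercial cash flows are weighted by the cumulative probability of approval. All figures in the usage lines are hypothetical.

```python
def rnpv(stages, commercial_cash_flows, discount_rate):
    """Risk-adjusted NPV.

    stages: ordered list of (cost, year, prob_success) for each development stage.
    commercial_cash_flows: list of (year, cash_flow) received only after approval.
    """
    value, p_reach = 0.0, 1.0
    for cost, year, p_success in stages:
        value -= p_reach * cost / (1.0 + discount_rate) ** year   # cost if stage is reached
        p_reach *= p_success                                      # chance of advancing
    for year, cash in commercial_cash_flows:
        value += p_reach * cash / (1.0 + discount_rate) ** year
    return value

# HYPOTHETICAL programme ($M): Phase I, II, III, then ten years of sales.
sales = [(year, 400.0) for year in range(8, 18)]
base_case = rnpv([(20, 0, 0.60), (60, 2, 0.35), (250, 4, 0.60)], sales, 0.10)
# Same programme after a favourable Phase I PK readout lifts Phase I POS.
favourable_pk = rnpv([(20, 0, 0.70), (60, 2, 0.35), (250, 4, 0.60)], sales, 0.10)
```

Raising the Phase I probability scales both the later-stage costs and the commercial cash flows, so whether rNPV rises depends on the downstream value being positive, which is the usual case for a programme worth pursuing.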

Phase II: First Efficacy Signal and Population Refinement

Phase II data provides the first quantitative efficacy signal. Its commercial forecasting value lies in three areas.

The Objective Response Rate or primary endpoint result establishes the first estimate of clinical differentiation. Phase II ORR in oncology, for instance, is a strong predictor of Phase III success probability and of the magnitude of OS or PFS benefit that Phase III is likely to confirm. Historical analysis of oncology drug approvals has found that Phase II ORR above 50% in the target indication is associated with substantially higher Phase III success rates and with larger post-approval market share, though the relationship is indication-specific.

Biomarker prevalence data from Phase II defines the addressable patient population more precisely than epidemiological estimates alone. If the Phase II data demonstrates that the drug’s efficacy is confined to a biomarker-positive subpopulation representing 30% of the broader disease population, the total addressable market is not the prevalence of the disease — it is 30% of that prevalence. Models that fail to apply this biomarker haircut overestimate the addressable market and produce inflated peak-sales projections.
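The haircut is simple arithmetic, but writing it down makes the size of the overstatement explicit. In the sketch below the 30% share comes from the example above; the 100,000-patient prevalence figure is a hypothetical placeholder.

```python
def biomarker_adjusted_market(disease_prevalence, biomarker_positive_share):
    """Addressable patients once efficacy is confined to a biomarker-positive subgroup."""
    if not 0.0 <= biomarker_positive_share <= 1.0:
        raise ValueError("biomarker_positive_share must be between 0 and 1")
    return disease_prevalence * biomarker_positive_share

def overstatement_factor(biomarker_positive_share):
    """How many times too large the market is if the haircut is skipped."""
    return 1.0 / biomarker_positive_share

addressable = biomarker_adjusted_market(100_000, 0.30)   # hypothetical prevalence
inflation = overstatement_factor(0.30)                   # roughly 3.3x
```

Skipping the haircut at a 30% biomarker-positive share overstates the addressable population by a factor of about 3.3, before any share or pricing assumption is applied.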

Safety signals from Phase II calibrate the tolerability assumption for market penetration. A drug showing Grade 3+ adverse event rates substantially above the class average, even with superior efficacy, will face physician and payer resistance that limits penetration below what the efficacy data alone would project.

Phase III: The Commercial-Grade Evidence Package

Phase III data is the primary input to commercial launch forecasting. It determines the label, the payer evidence package, the price justification, and the clinical narrative that drives prescriber adoption. Every key Phase III endpoint has a specific commercial translation.

Overall Survival benefit versus standard of care in oncology is the most commercially powerful efficacy measure. An additional two to four months of median OS over the current standard commands a price premium (in the U.S. value-based pricing framework for oncology) and generates prescriber adoption in a way that surrogate endpoints like ORR do not. In a regression model predicting peak market share for oncology drugs, OS benefit (in months) should be included as a continuous predictor alongside Phase III comparator type (active comparator vs. placebo — active comparator trials are more commercially relevant but harder to win).

Progression-Free Survival benefit is the more commonly available Phase III efficacy measure and is a valid predictor of commercial uptake, though with lower predictive power than OS given payer and physician skepticism about its long-term clinical meaning.

The Grade 3 or higher adverse event rate — severe adverse events in the Phase III population — is a strong negative predictor of prescriber adoption and a direct input to the adherence model. Physicians in oncology and rheumatology are sophisticated about tolerability tradeoffs, but a drug requiring dose reductions in 40% of patients or causing treatment discontinuation in 20% will face adoption resistance from cautious prescribers even with superior efficacy. Include this rate as a continuous predictor with an expected negative coefficient in market share models.

Quality of Life data from validated instruments (EQ-5D, FACT-G, disease-specific PRO measures) is increasingly required by European Health Technology Assessment bodies (NICE, G-BA, HAS) and is factored into U.S. payer value frameworks (ICER assessments). Including QoL improvement as a binary (exceeded MID threshold: Yes/No) or continuous (QoL score delta) variable captures the payer willingness-to-reimburse dimension of the evidence package that pure survival data does not.

Phase IV and Real-World Evidence: Updating the In-Market Forecast

Post-approval real-world evidence (RWE) from claims databases, electronic health records, and specialty pharmacy data provides the empirical basis for updating in-market forecasts as they diverge from the launch projection.

The most commercially critical RWE variable is real-world adherence. If the clinical trial protocol mandated 12-month treatment and 85% of trial participants completed the protocol, but real-world claims data shows only 60% of patients still on therapy at 12 months, the persistence assumption in the revenue-per-patient calculation must be revised downward by 25 percentage points (roughly 30% in relative terms). This adherence adjustment directly affects revenue projections for chronic therapies and is almost always underestimated at launch.
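A persistence-curve view makes the adherence adjustment less of a single-number guess. The sketch below assumes, purely for illustration, that the share of patients on therapy declines linearly to a month-12 value (85% under the trial assumption, 60% in the real-world scenario) and that revenue accrues per treated month at a hypothetical net price.

```python
def linear_persistence(share_at_end, months=12):
    """HYPOTHETICAL curve: share on therapy falls linearly from 1.0 to share_at_end."""
    return [1.0 - (1.0 - share_at_end) * (m / months) for m in range(1, months + 1)]

def expected_months_on_therapy(persistence_by_month):
    """Expected treated months per starting patient (sum of monthly persistence)."""
    return sum(persistence_by_month)

def revenue_per_patient(persistence_by_month, monthly_net_price):
    return expected_months_on_therapy(persistence_by_month) * monthly_net_price

trial_view = revenue_per_patient(linear_persistence(0.85), monthly_net_price=5_000)
real_world = revenue_per_patient(linear_persistence(0.60), monthly_net_price=5_000)
downward_revision = 1.0 - real_world / trial_view
```

The size of the revision depends on the shape of the drop-off curve, not only on the endpoint. Early discontinuation (a front-loaded curve) costs more treated months than the linear case shown here, which is why claims-based persistence curves are worth fitting directly.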

RWE also provides the first empirical signal on off-label prescribing patterns, which can materially expand the addressable patient population beyond what the approved label defines. For drugs with mechanism-driven broad applicability (certain PD-1/PD-L1 checkpoint inhibitors, for instance), off-label use in non-approved tumor types has accounted for 20-30% of total prescriptions in real-world datasets. Whether this off-label use is included in the base-case forecast or modeled as an upside scenario is a commercial judgment; the key is that RWE provides the data to make the assumption quantitative rather than speculative.

Integrating Clinical Data: The Phase-by-Phase Summary

| Phase | Key Data for Forecast | Forecasting Application | Variable Type in Regression |
|---|---|---|---|
| Phase I | PK/PD profile, MTD, formulation | POS refinement for rNPV; dosing convenience proxy for competitive positioning | Binary (favorable/unfavorable profile) |
| Phase II | ORR/primary endpoint result, biomarker prevalence, Grade 3+ AE rate | Addressable market size; early efficacy signal for peak-share range | Continuous (ORR %); continuous (biomarker prevalence %) |
| Phase III | OS/PFS benefit vs. SoC, Grade 3+ AE rate, QoL data | Primary driver of peak market share, net price, and uptake velocity | Continuous (OS months delta); continuous (AE rate %); binary (QoL MID achieved) |
| Phase IV/RWE | Real-world adherence, off-label usage patterns, rare safety signals | In-market forecast update; revenue-per-patient recalibration; label expansion opportunity quantification | Continuous (adherence rate %); binary (new black-box warning) |

Key Takeaways: Part 6

- Clinical trial endpoints are directly predictive of commercial outcomes, not just scientific milestones. Build the bridge explicitly in the regression model.

- Phase II biomarker prevalence data defines the addressable patient population more precisely than epidemiology alone. Failure to apply a biomarker haircut systematically inflates peak-sales projections.

- OS benefit in months is the most commercially powerful Phase III variable in oncology. Include it as a continuous predictor in market share regression models, not as a binary ‘superior/non-superior’ flag.

- Real-world adherence from Phase IV data almost always diverges from clinical trial completion rates. Build this adherence adjustment into every chronic-therapy revenue model from the first RWE data point.

- Grade 3+ adverse event rate is a material negative predictor of prescriber adoption. It belongs in every market-penetration regression model.

Part 7: Regulatory Multipliers — Orphan Drug and Breakthrough Therapy Designations in the Model

Special regulatory designations are not administrative formalities. They alter the commercial dynamics of a drug product in measurable, quantifiable ways. A model that encodes them as a fixed-average dummy variable captures only a fraction of their predictive value. The full value emerges from modeling their interaction with the uptake trajectory over time.

Orphan Drug Designation: Small Population, Disproportionate Revenue

The Orphan Drug Designation in the U.S. applies to drugs targeting conditions affecting fewer than 200,000 Americans. Its commercial incentives — seven years of market exclusivity from the approval date (independent of patent life), 25% tax credit on qualified clinical trial expenses for for-profit sponsors, and user fee waivers — have transformed rare disease into one of the most commercially attractive segments in biopharma.

The orphan drug market globally was valued at approximately $193 billion in 2024 and is projected to reach over $621 billion by 2034, growing at roughly 12.4% annually. That growth rate reflects both the expanding pipeline of rare disease programs and the premium pricing that orphan designation supports.

From a forecasting standpoint, four features of orphan drug markets require explicit modeling adjustments. Annual treatment cost for orphan drugs averages approximately 4.5 times that of non-orphan drugs, with median annual treatment costs exceeding $218,000. Patient populations are small, geographically concentrated around specialist centers, and often pre-identified through patient advocacy networks, making the addressable population much more precisely enumerable than in common diseases. Rare diseases often have few treatment alternatives, which reduces payer ability to impose step edits or prior authorization based on cheaper therapeutic alternatives. Orphan market exclusivity runs independently of patent life rather than stacking on top of it: the effective exclusivity period ends at the later of the patent expiry date or the end of the seven-year orphan exclusivity period from approval.
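The "later of" rule for orphan exclusivity is easy to encode as a date comparison. This is a minimal sketch: which patent governs effective entry is itself an analytical judgment, so `last_blocking_patent_expiry` is treated here as a given input, and the dates are hypothetical.

```python
from datetime import date

def add_years(d, years):
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)

def effective_exclusivity_end(approval_date, last_blocking_patent_expiry, orphan_years=7):
    """Later of the blocking patent's expiry and the end of orphan exclusivity."""
    return max(last_blocking_patent_expiry, add_years(approval_date, orphan_years))

# HYPOTHETICAL dates: the orphan period outlasts the patent in the first case only.
orphan_governs = effective_exclusivity_end(date(2024, 3, 1), date(2029, 6, 30))
patent_governs = effective_exclusivity_end(date(2024, 3, 1), date(2035, 1, 15))
```

Feeding this date, rather than the raw patent expiry, into the LoE variable avoids understating exclusivity for orphan products whose patents were filed early in development.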

In a regression model for an orphan drug, the addressable patient population variable requires a different construction than for a common disease. Replace incidence and prevalence with identified patient registry counts or specialist center patient volumes, which provide a more accurate and actionable denominator. Net price realization should be modeled as a function of payer type mix (commercial vs. Medicaid vs. Medicare) rather than a single net-price assumption, because the gross-to-net spread for orphan drugs varies substantially across payer categories.

Breakthrough Therapy Designation: The Pre-Launch Commercial Signal

Breakthrough Therapy Designation is granted when preliminary clinical evidence indicates a drug may offer substantial improvement over available therapy on a clinically significant endpoint for a serious or life-threatening disease. The FDA commitment that accompanies BTD includes intensive guidance, organizational commitment of senior FDA staff, and rolling review of clinical data — all of which accelerate the development and review timeline.

The BTD market globally is projected across multiple research sources to reach values ranging from $242 billion to $529 billion by the early 2030s, depending on scope and methodology. The wide range reflects the broad application of BTD across oncology, neurology, rare disease, and other therapeutic areas.

For commercial forecasting, BTD carries four quantifiable effects. Accelerated timeline to approval reduces the years of clinical stage investment before revenue generation begins, improving the IRR of the development investment even before considering the commercial premium. Payer willingness-to-reimburse is higher for BTD drugs because the designation signals that FDA has found preliminary clinical evidence of substantial improvement over available therapy, providing a defensible basis for premium pricing in formulary negotiations. Physician awareness and anticipation of a BTD drug is elevated before launch, producing faster initial prescription uptake versus a non-BTD comparator in the same class. KOL engagement typically begins earlier for BTD drugs, because academic medical centers track FDA designation status and seek trial access for their patient populations.

Modeling Designations as Uptake Accelerants: The Interaction Term Approach

The limitation of a simple binary (1/0) designation variable is that it estimates a fixed average revenue ‘lift’ at every time point post-launch. In reality, designations affect the trajectory of revenue accumulation rather than the endpoint. A BTD drug reaches its peak faster than a non-BTD drug in the same class — the designation effects are front-loaded in the uptake curve.

The interaction term methodology captures this. In a multiple regression model where the dependent variable is cumulative revenue (or market share) since launch, the model specification becomes:

Sales = β0 + β1(Months-on-Market) + β2(BTD-Flag) + β3(Months-on-Market × BTD-Flag) + [control variables] + ε

In this specification, Sales denotes cumulative revenue since launch, β1 is the baseline monthly revenue accumulation rate for non-BTD drugs, β2 is the level shift in initial sales attributable to designation, and β3 is the additional increment of monthly accumulation for BTD drugs. A statistically significant, positive β3 indicates that BTD drugs accumulate revenue faster over the measured period, not just that they start higher.
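The interaction specification can be sketched in a few lines of code. This is a minimal illustration under stated assumptions, not a production model: the panel is synthetic and noiseless, the coefficient values (5, 2, 3, 1.5) are invented for the example, and the `ols` helper is a hypothetical stand-in for a statistics library so that the sketch stays self-contained.

```python
def ols(X, y):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Synthetic panel: one non-BTD and one BTD drug, each observed for 24 months.
# Cumulative sales follow invented coefficients b0=5, b1=2, b2=3, b3=1.5.
rows, sales = [], []
for btd in (0, 1):
    for month in range(1, 25):
        # Columns: intercept, months on market, BTD flag, interaction term.
        rows.append([1.0, month, btd, month * btd])
        sales.append(5 + 2 * month + 3 * btd + 1.5 * month * btd)

b0, b1, b2, b3 = ols(rows, sales)
non_btd_slope = b1       # monthly accumulation without the designation
btd_slope = b1 + b3      # monthly accumulation with the designation
print(f"b2 (level shift) = {b2:.2f}, b3 (slope increment) = {b3:.2f}")
```

With real data the fit would include control variables and noise, and the question of interest becomes whether the estimate of β3 is positive and distinguishable from zero, which requires standard errors that this sketch does not compute.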

This interaction approach applies equally to Orphan Drug Designation, Priority Review, and Accelerated Approval. For Accelerated Approval specifically, a second interaction capturing the post-confirmatory-trial period is warranted: AA drugs face withdrawal risk if confirmatory trials fail, so the revenue trajectory after the confirmatory-trial decision is structurally different from the pre-confirmation period.

Key Takeaways: Part 7

- Orphan Drug Designation is associated with a median annual treatment cost exceeding $218,000 — 4.5 times the non-orphan drug average. This price differential must be encoded in the forecasting model explicitly, not assumed to equal market comparables.

- ODD provides seven years of market exclusivity from approval, independent of and additive to patent life. This is a distinct and separately quantifiable exclusivity layer.

- Breakthrough Therapy Designation signals potential clinical superiority to payers and prescribers before the drug reaches market. Its commercial value is disproportionately concentrated in the launch and early-uptake period.

- Model designations via interaction terms (Months-on-Market × Designation-Flag) rather than simple binary variables. The interaction coefficient captures the trajectory acceleration effect that a main-effect-only specification misses entirely.

Part 8: Commercial Optimization — Marketing ROI, Diminishing Returns, and Budget Allocation

From Gut Feel to Quantified ROI

The pharmaceutical commercial model has historically allocated budgets by therapeutic area precedent, competitive match spending, or iterative negotiation between brand teams and finance. Regression analysis replaces this process with empirical ROI estimates derived from the company’s own historical spend-and-sales data.

The basic setup: a multiple regression model with quarterly net revenue as the dependent variable and quarterly spend by channel (HCP detailing, DTC television and digital, medical education, patient support programs, managed care contracting spend) as independent variables, controlling for time on market, competitive landscape, and pricing variables. The resulting coefficient for each spend channel is a first-order ROI estimate: the average quarterly revenue associated with a $1M increase in that channel, holding other factors constant.
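As a rough sketch of this setup, the code below fits quarterly revenue against three spend channels and a time-on-market control. Everything in it is an assumption made for illustration: the data are synthetic and noiseless, the channel names and "true" coefficients are invented, spend and revenue are both in $M, and `ols` is a hypothetical helper standing in for a statistics library.

```python
import random


def ols(X, y):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    k = len(X[0])
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


rng = random.Random(7)  # fixed seed so the synthetic data are reproducible

# Invented coefficients: $M of quarterly revenue per unit of each predictor.
true_coefs = {
    "intercept": 40.0,
    "detailing": 3.0,
    "dtc": 1.2,
    "digital": 4.5,
    "quarters_on_market": 0.8,
}

X, revenue = [], []
for quarter in range(1, 17):  # 16 quarters of history
    row = [
        1.0,
        rng.uniform(2, 10),    # HCP detailing spend, $M
        rng.uniform(0, 6),     # DTC spend, $M
        rng.uniform(0.5, 3),   # HCP digital spend, $M
        quarter,               # time-on-market control
    ]
    X.append(row)
    revenue.append(sum(c * v for c, v in zip(true_coefs.values(), row)))

estimates = dict(zip(true_coefs, ols(X, revenue)))
for channel in ("detailing", "dtc", "digital"):
    print(f"{channel}: {estimates[channel]:.2f} $M revenue per $1M spend")
```

Because the synthetic data contain no noise, the fit recovers the invented coefficients. On real data the coefficients are associations that hold other modeled factors constant, so they are only as reliable as the set of controls.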

In practice, this analysis consistently reveals substantial channel-level ROI disparities that are invisible to budget-holders relying on aggregate spend-and-sales correlation. HCP digital engagement (targeted email, HCP-facing digital media, EHR-based decision support) often shows higher ROI coefficients than equivalent spend on traditional physician meetings or speaker programs, particularly for oncology and rare disease drugs where the prescribing population is concentrated and digitally accessible. DTC television spend typically shows positive but lower ROI coefficients for specialty drugs, where the patient population is too small and geographically concentrated to justify mass-media reach costs.

Modeling Diminishing Returns: Beyond Linear ROI

A linear regression model assumes constant returns to marketing spend: the tenth million dollars generates the same revenue as the first. This assumption fails empirically and leads to over-investment in saturated channels. The correct specification for marketing spend variables is a curvilinear relationship captured through polynomial or logarithmic transformation.

Log-transforming the spend variable (using ln(Spend) as the independent variable rather than raw Spend) produces a coefficient β for which a 1% increase in spend is associated with a revenue change of approximately β/100. Revenue therefore responds to proportional changes in spend, not absolute ones. The implied marginal return per additional dollar is β divided by the current spend level, so it declines as the base spend level rises. This is the statistical encoding of diminishing returns.

The forecasting application: a brand team can use the log-spend model to identify the spend level at which the marginal revenue generated by one additional dollar equals one dollar, the point of zero net marginal return. Spending beyond this level is value-destructive. In practice, optimal spend levels identified through this analysis have been found to fall below actual deployed budgets in a majority of mature brand analyses, suggesting systematic over-investment in promotional activity relative to the empirical return threshold.
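A small worked sketch makes the break-even logic concrete. Under a level-log model, Revenue = α + β·ln(Spend), the derivative of revenue with respect to spend is β / Spend, so marginal revenue equals one dollar when Spend = β (with revenue and spend in the same units). The data and coefficient values below are invented, and the `margin` parameter is an added assumption, not part of the specification above, for teams that prefer to compare contribution rather than revenue against spend.

```python
import math


def fit_log_spend(spend, revenue):
    """Fit revenue = alpha + beta * ln(spend) by simple least squares."""
    x = [math.log(s) for s in spend]
    mean_x = sum(x) / len(x)
    mean_y = sum(revenue) / len(revenue)
    beta = (
        sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, revenue))
        / sum((xi - mean_x) ** 2 for xi in x)
    )
    alpha = mean_y - beta * mean_x
    return alpha, beta


def marginal_return(beta, spend):
    """Revenue from one more unit of spend: d(revenue)/d(spend) = beta / spend."""
    return beta / spend


def break_even_spend(beta, margin=1.0):
    """Spend at which margin * marginal revenue equals one unit of spend."""
    return beta * margin


# Synthetic, noiseless history in $M with invented alpha=50 and beta=12.
spend = [2.0, 4.0, 8.0, 16.0, 32.0]
revenue = [50 + 12 * math.log(s) for s in spend]

alpha, beta = fit_log_spend(spend, revenue)
for level in (6.0, 12.0, 24.0):
    print(f"spend ${level:.0f}M -> marginal return {marginal_return(beta, level):.2f}")
print(f"break-even spend: ${break_even_spend(beta):.1f}M")
```

In this toy case each additional dollar returns two dollars at $6M of spend, one dollar at $12M, and fifty cents at $24M, so any budget above $12M is past the break-even point.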

Sales Force Optimization: Coverage, Frequency, and FTE Productivity

Sales force size and deployment represent the largest controllable cost line in a pharmaceutical commercial model. Regression analysis provides the framework for two key optimization decisions.

The first is the FTE-to-revenue relationship. A model with quarterly sales as dependent variable and quarterly sales calls (by physician segment, call frequency, and geographic territory) as predictors produces coefficients that quantify the revenue return per incremental detail. This allows the commercial team to identify the point at which additional calls generate diminishing incremental prescriptions — the coverage saturation threshold by physician segment.

The second is physician segmentation quality. The regression framework can test whether the current target list is optimally segmented. If the coefficient on ‘high-decile prescriber detail frequency’ is substantially larger than on ‘mid-decile prescriber detail frequency,’ resources are correctly concentrated on the highest-potential prescribers. If the coefficients converge or reverse — suggesting diminishing differential value from detailing the most-detailed physicians — the segmentation model has likely overconcentrated on already-converted prescribers and under-invested in conversion of mid-decile targets.

Key Takeaways: Part 8

- Multiple regression with channel-level spend variables produces empirical ROI coefficients that replace assumption-driven budget allocation with evidence-based quantification.

- Log-transformed spend variables capture diminishing returns. Optimal spend levels, where marginal return equals marginal cost, fall below actual deployed budgets in a majority of mature brand analyses.

- Sales force detailing models quantify coverage saturation thresholds by physician segment, identifying the point at which additional calls generate negligible incremental prescriptions.

- The commercial optimization use case for regression is iterative: models are updated quarterly as new spend-and-sales data accumulates, providing continuously improving ROI estimates throughout the product lifecycle.

Part 9: M&A Due Diligence — Using Regression to Pressure-Test Target Valuations

The Acquisition Forecast Problem

The pharmaceutical M&A market is large, competitive, and prone to value destruction at the acquirer level. More than half of acquired lead assets miss their pre-deal commercial projections by an average of 40% over three years post-close. The primary cause is not integration failure or commercial execution gaps — it is that the acquisition price was set against a forecast that was optimistic by construction. Target management teams preparing data rooms have structural incentives to present the most favorable version of their commercial projections.

A regression-based forecasting capability transforms the acquirer’s due diligence process from qualitative scrutiny of a target’s assumptions to quantitative comparison of those assumptions against an empirically calibrated external model.

The Pressure-Test Methodology

The acquirer builds and maintains a ‘meta-model’ — a multiple regression trained on the commercial outcomes of 30 to 60 historical drug launches in comparable indications and competitive environments. This meta-model is the reference standard against which target projections are evaluated.

During due diligence, the acquirer takes the target’s explicit assumptions on each commercial driver — market penetration rate at year 3, net price realization, uptake velocity to peak — and substitutes them into the meta-model. The model generates an independent peak-sales estimate. The gap between the target’s projection and the meta-model’s estimate is the due diligence finding.

If the target projects 25% market share at year 3 but the meta-model, trained on 40 analogous launches, estimates an average year-3 share of 12% with a 95th-percentile outcome of 19%, the target’s projection sits above the 95th percentile of comparable cases. This is a quantified red flag, not a qualitative concern, and it provides a defensible basis for price renegotiation.
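The comparison against the analog distribution can be sketched as follows. This is an illustrative simplification: it benchmarks one assumption directly against raw analog outcomes, without the regression adjustment a full meta-model would apply. The analog shares are invented, and the function name `pressure_test` and the 95th-percentile flag threshold are assumptions for this example.

```python
import statistics


def pressure_test(target_value, analog_values):
    """Locate a target's assumption within the distribution of analog outcomes."""
    # quantiles(..., n=20) returns 19 cut points; the last is the 95th percentile.
    p95 = statistics.quantiles(analog_values, n=20)[-1]
    rank = sum(v <= target_value for v in analog_values) / len(analog_values)
    return {
        "analog_mean": statistics.mean(analog_values),
        "analog_p95": p95,
        "percentile_rank": rank,          # share of analogs at or below the target
        "red_flag": target_value > p95,   # flag anything above the 95th percentile
    }


# Hypothetical year-3 market shares from 20 analog launches.
analog_year3_share = [
    0.05, 0.06, 0.07, 0.08, 0.08, 0.09, 0.10, 0.10, 0.11, 0.11,
    0.12, 0.12, 0.13, 0.14, 0.14, 0.15, 0.16, 0.17, 0.18, 0.20,
]

finding = pressure_test(0.25, analog_year3_share)
print(f"analog mean {finding['analog_mean']:.1%}, 95th pct {finding['analog_p95']:.1%}")
print(f"target percentile rank {finding['percentile_rank']:.0%}, red flag: {finding['red_flag']}")
```

The same function can be run on each driver in turn (year-3 share, net price realization, time-to-peak) to see which assumption sits furthest from the analog distribution.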

The meta-model also identifies which specific assumptions are driving the gap. If the target’s projected uptake velocity assumes a time-to-peak of 18 months in a therapeutic area where the historical median is 36 months, the model isolates uptake speed as the primary source of projection optimism. This precision allows the acquirer to focus commercial due diligence resources on validating or refuting the specific assumption driving the largest valuation difference.

rNPV Integration

For deals structured around a risk-adjusted NPV (rNPV) framework, the regression-based peak-sales estimate feeds directly into the financial model. The rNPV calculation requires three commercial inputs: peak annual revenue, time-to-peak, and the post-peak revenue decay rate. All three are outputs of a properly specified regression model.

Peak revenue comes from the meta-model’s probability-weighted peak-sales estimate. Time-to-peak is modeled as a function of the clinical differentiation variables (OS benefit, ORR, Grade 3+ AE rate) and designation status (BTD flag interacted with time). Post-peak decay rate is modeled as a function of IP Strength Score, number of expected competitive entries, and biosimilar or generic entry probability over the horizon.

The result is an rNPV estimate that is grounded in empirical analogs rather than the target’s internally generated projections — a fundamentally more defensible valuation basis for the deal committee.
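To show how the three commercial inputs flow into a valuation, here is a deliberately simplified sketch. The linear ramp to peak, the geometric post-peak decay, the single probability-of-success multiplier, and the `margin` parameter are all assumptions chosen for brevity. A real rNPV model would also net out stage-by-stage development costs, which this sketch omits.

```python
def revenue_curve(peak, years_to_peak, decay_rate, horizon):
    """Annual revenue: linear ramp to peak, then geometric decay after peak."""
    curve = []
    for year in range(1, horizon + 1):
        if year <= years_to_peak:
            curve.append(peak * year / years_to_peak)
        else:
            curve.append(peak * (1 - decay_rate) ** (year - years_to_peak))
    return curve


def rnpv(peak, years_to_peak, decay_rate, horizon,
         prob_success, discount_rate, margin=1.0):
    """Risk-adjusted present value of the commercial cash flows only."""
    cash_flows = revenue_curve(peak, years_to_peak, decay_rate, horizon)
    present_value = sum(
        margin * revenue / (1 + discount_rate) ** year
        for year, revenue in enumerate(cash_flows, start=1)
    )
    return prob_success * present_value


# Hypothetical inputs: $1,000M peak, 5 years to peak, 15% annual decay,
# 15-year horizon, 60% probability of success, 10% discount rate, 70% margin.
base = rnpv(1000, 5, 0.15, 15, prob_success=0.6, discount_rate=0.10, margin=0.7)
slow = rnpv(1000, 8, 0.15, 15, prob_success=0.6, discount_rate=0.10, margin=0.7)
print(f"rNPV, 5 years to peak: ${base:,.0f}M")
print(f"rNPV, 8 years to peak: ${slow:,.0f}M")
```

Rerunning the function with the meta-model's inputs and then with the target's inputs gives a direct dollar value for each assumption gap.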

Key Takeaways: Part 9

- The average 40% shortfall against pre-deal commercial projections for acquired assets is a structural problem caused by optimistically constructed target forecasts, not post-acquisition execution failures.

- Acquirers with internally calibrated meta-models (regression-based, trained on 30+ historical analog launches) have a systematic due diligence advantage over acquirers relying on qualitative judgment.

- The pressure-test methodology substitutes target assumptions into the acquirer’s reference model, generates an independent estimate, and quantifies the gap — turning qualitative concern into quantified risk finding.

- All three rNPV commercial inputs (peak revenue, time-to-peak, post-peak decay rate) are direct outputs of a properly specified regression model. Integrate the forecasting model directly into the deal valuation framework.

Part 10: R&D Alignment — Closing the Loop Between the Lab and the Revenue Line

The Communication Gap Between Scientific and Commercial Functions

In most pharmaceutical organizations, R&D and commercial operate in different analytical languages. R&D teams optimize for scientific validity, clinical meaningfulness, and regulatory approvability. Commercial teams optimize for market share, revenue, and return on investment. The disconnect between these languages produces a specific and costly failure mode: drugs that are scientifically credible but commercially stranded — approved for indications with inadequate pricing headroom, in competitive markets where clinical differentiation is insufficient for formulary access, or in patient populations too narrowly defined by the clinical development strategy to support blockbuster revenue ambitions.

Regression analysis provides the quantitative framework for connecting these two functions. Specifically, it allows the commercial team to express its requirements in scientific terms: ‘An OS benefit of X months in this patient population is associated with the revenue level required for this asset to justify its development cost.’ This is the kind of specific, empirically grounded target that the R&D team can incorporate into trial design decisions.

AstraZeneca’s 5R Framework as a Case Study

AstraZeneca’s 5R Framework for R&D productivity — Right Target, Right Patient, Right Tissue, Right Safety, Right Commercial Potential — is the most publicly articulated organizational model for integrating commercial requirements into early R&D decision-making. The ‘Right Commercial Potential’ pillar is specifically designed to ensure that assets advancing through the pipeline have a credible path to sufficient market value to justify continued investment.

A regression-based commercial forecasting model is the methodological engine for the ‘Right Commercial Potential’ assessment. It provides a quantitative estimate of peak sales and rNPV at each stage-gate decision, using the clinical data available at that point (Phase I PK/PD for an early-stage asset, Phase II efficacy signal for a more advanced one) as input to the model. This allows portfolio committees to compare the commercial potential of assets at equivalent clinical stages, allocating advancement resources to those with the strongest empirically grounded commercial cases.

The framework also generates the specific endpoint targets that clinical teams need for Phase III design. If the model identifies that an OS benefit of at least four months is required for the drug to achieve the net price necessary for a positive payer cost-effectiveness assessment, the Phase III trial can be powered and designed to detect a four-month OS benefit with adequate statistical confidence — not an arbitrary endpoint drawn from internal scientific preference.
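As a rough illustration of what powering a trial for a four-month OS benefit implies, the sketch below uses Schoenfeld's approximation for the number of death events needed in a 1:1 randomized log-rank comparison. The approach and all inputs are assumptions introduced here, not taken from the framework: survival is treated as exponential, so the hazard ratio equals the ratio of median survival times, and the 12-month control median is hypothetical.

```python
import math
from statistics import NormalDist


def required_events(control_median_months, benefit_months,
                    alpha=0.05, power=0.80):
    """Events needed to detect a median OS gain (Schoenfeld, 1:1 allocation)."""
    # Under exponential survival, HR = control median / treatment median.
    hazard_ratio = control_median_months / (control_median_months + benefit_months)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)
    events = 4 * (z_alpha + z_power) ** 2 / math.log(hazard_ratio) ** 2
    return math.ceil(events)


# Hypothetical control-arm median OS of 12 months, target benefit of 4 months.
print(required_events(12, 4))
print(required_events(12, 2))  # a smaller target benefit needs far more events
```

With these inputs the four-month target corresponds to a hazard ratio of 0.75 and roughly 380 events. Halving the target benefit pushes the requirement well above 1,000 events, which is why the commercially required effect size belongs in the trial design discussion early.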

Key Takeaways: Part 10