How integrating patent, clinical, and scientific data into a single intelligence engine is replacing guesswork in life sciences strategy—and what it costs you to ignore it

The pharmaceutical industry spent north of $300 billion on R&D last year. It got a 4.1% internal rate of return for its trouble—a figure that sits comfortably below the cost of capital [1]. That is not a temporary blip or a pandemic hangover. It is the logical consequence of an industry that generates enormous quantities of data from patents, clinical registries, scientific publications, real-world evidence, and financial disclosures, and then stores most of it in separate databases that rarely talk to each other.

The problem is not a lack of data. The problem is that virtually every major life sciences company analyzes its patent estate in one department, monitors clinical trials in another, and tracks competitor publications somewhere else entirely. The strategy team then gets handed three separate reports and is expected to synthesize them into a coherent picture of the competitive landscape while the clock runs out on a Phase 3 asset. It is a process designed to produce mediocre decisions at great expense.

This article lays out a concrete framework for doing it differently. The core argument is this: the companies that will build and defend durable drug franchises over the next decade are not the ones with the biggest R&D budgets. They are the ones that build what can be called an ‘intelligence moat’—a systematic capability to integrate patent data, scientific literature, and clinical trial evidence into a single analytical engine that produces predictive, actionable intelligence rather than descriptive reports. Platforms like DrugPatentWatch are already providing pieces of this puzzle, enabling analysts to track patent filings, expiry timelines, and IP positioning in ways that feed directly into integrated competitive models.

What follows is both an argument and a manual.

Part I: The Pressure Is Real, and It Is Getting Worse

The Productivity Numbers Are Not Getting Better

There is a persistent belief in pharma boardrooms that the R&D productivity crisis is cyclical—that with the right structural adjustment, returns will recover. The data does not support that belief.

The success rate for drug candidates entering Phase 1 clinical trials dropped to 6.7% in 2024, down from roughly 10% a decade earlier [1]. That decline represents a compounding structural failure in how the industry allocates its early-stage resources. When more than nine out of ten compounds that enter human testing fail, and when development timelines routinely stretch past a decade, the question is not whether the model needs rethinking but how deep that rethinking needs to go.
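
To see what the drop means in practice: if each Phase 1 entrant independently reaches approval with probability p, the expected number of entrants needed per approval is 1/p (the mean of a geometric distribution). The minimal Python sketch below uses only the two rates quoted above. The independence assumption is a simplification, not a model of real portfolios.

```python
def entrants_per_approval(success_rate: float) -> float:
    """Expected Phase 1 entrants per approval, assuming each candidate
    independently succeeds with probability `success_rate`
    (the mean of a geometric distribution, 1/p)."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


if __name__ == "__main__":
    for label, rate in [("about a decade ago", 0.10), ("2024", 0.067)]:
        print(f"{label}: {entrants_per_approval(rate):.1f} Phase 1 entrants per approval")
```

At 10% the answer is 10 entrants per approval. At 6.7% it is roughly 15, so the same output now requires about half again as much clinical-stage inventory.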

The pattern is not uniform across disease areas or company sizes, which itself is informative. Oncology, which consumes the largest share of development resources, has some of the lowest success rates in Phase 2 to Phase 3 transitions, largely because the field moved faster than the ability to select patients who will actually respond to treatment. Rare disease programs, by contrast, have benefited from smaller, more defined patient populations and favorable regulatory pathways, producing relatively higher approval rates. These divergences are directly readable from the integrated data—if you are looking at it.

The scale of what is coming makes the current situation look like a warm-up. Between 2025 and 2029, pharmaceutical companies face an estimated $350 billion in revenue exposure as major drugs lose patent protection and face generic or biosimilar competition [1]. The wave is not hypothetical. The drugs are already identified. Their exclusivity windows are fixed. Generic filers are already preparing their ANDAs, and biosimilar developers their 351(k) applications. The strategic question for innovators is what, specifically, they are doing about it, and whether their intelligence infrastructure is good enough to support the decisions that need to be made.

The Pipeline Has Become a Traffic Jam

The industry’s instinctive response to revenue pressure is to fill the pipeline. The result is that there are now more than 23,000 drug candidates in development globally [1]. That number sounds like a measure of vitality. It is equally a measure of competitive congestion.

In practice, what the crowded pipeline means is that differentiation has become the primary clinical challenge in many therapeutic categories. When six PD-1 inhibitors are in late-stage development for non-small cell lung cancer and four JAK inhibitors are chasing moderate-to-severe atopic dermatitis, the margin for error in target selection, patient population definition, endpoint choice, and commercial positioning approaches zero. You cannot simply develop a good drug in a crowded category. You have to develop a drug that is demonstrably better than the alternatives on the dimensions that the specific patients, physicians, and payers in your target market actually care about—and you have to know what those dimensions are before you design your Phase 3 trial.

That knowledge does not come from a patent database, a clinical trial registry, or the scientific literature in isolation. It comes from the synthesis of all three, combined with real-world evidence on how current treatments are actually performing for real patients.

Part II: What Siloed Analysis Actually Costs You

Three Dashboards, Zero Integration

The organizational reality in most large pharmaceutical companies is that different functions own different data domains. IP and legal teams track patents. Clinical operations teams monitor trials—their own and, selectively, competitors’. Medical affairs or market research teams follow the scientific literature. The commercial intelligence group aggregates quarterly earnings calls and deal announcements.

Each of these functions produces technically competent analysis within its domain. The problem is the white space between domains. A patent filing by a competitor is a legal document, but it is also a scientific disclosure that reveals the biological hypothesis underlying their program, the specific molecular modifications they believe improve activity or selectivity, and the manufacturing process innovations they think are necessary for scalable production. Reading that patent only as a legal document misses most of its strategic content. Reading it only as a scientific document misses the IP implications. You need both lenses simultaneously, and most organizations are not set up to apply both.

The same logic applies to clinical trial data. A Phase 2 trial registration on ClinicalTrials.gov tells you a competitor is testing a compound in a specific indication. But the trial protocol (the inclusion and exclusion criteria, the choice of primary endpoint, the selection of comparator arm, the biomarker stratification strategy) encodes much of the competitor’s regulatory and commercial strategy. A company that is using progression-free survival as a primary endpoint in ovarian cancer is making a different bet about payer priorities than one using overall survival. A company that is restricting enrollment to biomarker-positive patients is making a different bet about market size versus treatment effect size than one running an unselected population. These design choices are visible. They are public. And most competitive intelligence teams read them as trial status information rather than as strategy documents.

The ‘Data Rich, Insight Poor’ Failure Mode

The pathology that results from siloed analysis has a name: data-rich, insight-poor decision-making [2]. Organizations in this state can generate detailed reports on any single dimension of the competitive landscape on demand. What they cannot do is answer the questions that actually drive portfolio decisions.

Consider a concrete scenario. A company is evaluating whether to advance a bispecific antibody targeting two checkpoint molecules into a Phase 2 trial in a solid tumor indication. The relevant questions are: How crowded is the patent landscape around this combination, and how defensible is our own IP position? What does the scientific literature say about the mechanistic rationale, and which academic groups are publishing most actively on this biology? What clinical evidence already exists for single-agent approaches to each target, and what do those trial results imply about the likely magnitude of combination benefit? Are any competitors already in the clinic with similar combinations, and if so, what endpoints are they using and what patient populations are they targeting?

A company with siloed intelligence functions will spend two to three weeks pulling data from multiple disconnected sources to answer these questions—often with inconsistencies between sources because the data has been pulled at different time points by different teams using different search methodologies. A company with an integrated intelligence platform answers these questions in hours, with a coherent, cross-referenced picture that shows where the data sources agree and where they conflict.

The two-to-three-week timeline is not just inefficient. In the context of competitive drug development, it is potentially disqualifying. If your competitor is monitoring the same landscape and making go/no-go decisions faster, you will consistently be one step behind in target selection, in clinical design, and in partnering. That lag accumulates.

Part III: The Three Primary Data Pillars—And What Each One Actually Tells You

Patent Data: Forensic Intelligence, Not Just Legal Coverage

What a Patent Is, and What It Is Not

A pharmaceutical patent is, at its most basic level, a time-limited monopoly (typically 20 years from the earliest non-provisional filing date) granted in exchange for a detailed public disclosure of a new, useful, and non-obvious invention [3]. The economic function of that monopoly is to provide the financial incentive that justifies the decade-plus investment required to bring a drug to market. In the United States, the regulatory exclusivities layered on top of patent protection (five years of new chemical entity exclusivity, seven years of orphan drug exclusivity, twelve years of reference product exclusivity for biologics) can extend this protection further. They are tracked by specialized databases like DrugPatentWatch, which maps the full exclusivity architecture for both branded and generic drugs.
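
The layering can be sketched as date arithmetic. The Python below is a simplified illustration. It assumes the US terms named above run from the approval date and that overall protection ends at the latest of any patent expiry or exclusivity end. It ignores patent term extensions, pediatric exclusivity, the indication-specific scope of orphan exclusivity, and litigation outcomes. The example drug and its dates are hypothetical.

```python
from datetime import date

# Nominal US exclusivity terms in years, as described in the text (simplified).
EXCLUSIVITY_YEARS = {
    "NCE": 5,        # new chemical entity exclusivity
    "ORPHAN": 7,     # orphan drug exclusivity
    "BIOLOGIC": 12,  # reference product exclusivity for biologics
}


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years; Feb 29 falls back to Feb 28 in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def exclusivity_end_dates(approval: date, kinds: list[str]) -> dict[str, date]:
    """Nominal end date of each exclusivity, counted from the approval date."""
    return {kind: add_years(approval, EXCLUSIVITY_YEARS[kind]) for kind in kinds}


def protection_end(patent_expiries: list[date], exclusivities: dict[str, date]) -> date:
    """Latest date on which any listed patent or exclusivity still applies."""
    return max([*patent_expiries, *exclusivities.values()])


if __name__ == "__main__":
    # Hypothetical orphan small molecule approved 15 March 2020.
    ends = exclusivity_end_dates(date(2020, 3, 15), ["NCE", "ORPHAN"])
    for kind, end in ends.items():
        print(kind, end)  # NCE 2025-03-15, then ORPHAN 2027-03-15
    print(protection_end([date(2026, 6, 1)], ends))  # 2027-03-15
```

In this example the orphan exclusivity, not the patent, sets the outer boundary, which is exactly the kind of layering a patent-only view would miss.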

For competitive intelligence purposes, the critical insight is that a patent is simultaneously a legal claim, a scientific disclosure, and a strategic signal. Most organizations exploit only the first dimension.

Reading the Patent Fortress Strategically

A major drug rarely has one patent. It has a portfolio: a layered set of claims designed to extend protection at multiple timepoints and through multiple legal routes. The composition of matter patent covering the core API is typically the first filed and the most valuable, but because it is filed early in development, it is usually the first to expire, often leaving a comparatively short period of protection after commercial launch. Secondary patents covering specific polymorphs, salts, formulations, dosing regimens, and manufacturing processes can push effective exclusivity years beyond the primary patent’s expiry.

Understanding this architecture for a competitor’s drug, or for your own, requires more than a simple patent count. It requires mapping the full patent family, understanding the claim scope of each patent, and identifying which patents are most likely to be litigated or challenged. DrugPatentWatch provides exactly this kind of structured patent term analysis, linking Orange Book-listed patents to their specific claims and expiry dates for FDA-approved drugs. That data, integrated with broader landscape analysis, gives a much clearer picture of how much runway a competing product has and where the IP walls are thinnest.
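
A minimal sketch of that layer-by-layer view follows. It assumes a patent family has already been reduced to records of patent type and nominal expiry. All patent identifiers and dates are hypothetical. The sketch lists when each layer lapses, how many patents remain in force after that date, and how much nominal runway the secondary patents add beyond the composition of matter patent.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Patent:
    number: str   # hypothetical identifier
    kind: str     # "composition", "polymorph", "formulation", "method_of_use", ...
    expiry: date  # nominal expiry; extensions and litigation are not modeled


def layer_report(patents: list[Patent]) -> list[tuple[date, str, int]]:
    """For each patent in expiry order: (expiry, kind, patents still in force afterwards)."""
    ordered = sorted(patents, key=lambda p: p.expiry)
    return [(p.expiry, p.kind, sum(q.expiry > p.expiry for q in ordered)) for p in ordered]


def secondary_extension_days(patents: list[Patent]) -> int:
    """Days of nominal coverage that later-expiring patents add beyond the
    last composition-of-matter expiry (0 if none extend further)."""
    com_expiries = [p.expiry for p in patents if p.kind == "composition"]
    if not com_expiries:
        raise ValueError("no composition-of-matter patent in the family")
    latest = max(p.expiry for p in patents)
    return max(0, (latest - max(com_expiries)).days)


if __name__ == "__main__":
    family = [
        Patent("HYP-001", "composition", date(2028, 5, 1)),
        Patent("HYP-002", "method_of_use", date(2030, 11, 30)),
        Patent("HYP-003", "polymorph", date(2031, 9, 10)),
        Patent("HYP-004", "formulation", date(2033, 1, 20)),
    ]
    for expiry, kind, remaining in layer_report(family):
        print(f"{expiry}  {kind:<14} lapses; {remaining} patent(s) remain in force")
    print("secondary runway (days):", secondary_extension_days(family))
```

Periods in which only one or two layers remain in force are natural candidates for where the IP wall is thinnest, and they are where claim-scope and litigation analysis should concentrate.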

Patent Citations as a Quality Signal

Not all patents carry equal strategic weight, and citation analysis is the tool that separates the seminal from the routine. When a patent is frequently cited by subsequent patents, whether filed by other companies or by the same company, it signals that the underlying technology is foundational [4]. This is a more reliable quality signal than simple patent counts because citations are added not only by the applicant but also by patent examiners, who have no incentive to inflate the importance of any particular filing.

The caveat is timing. Truly radical innovations often take years to be recognized and built upon, meaning they accumulate citations on a long delay. Using a short citation window (say, three to five years) will systematically underestimate the value of breakthrough patents relative to incremental improvements. An analyst comparing the citation-weighted portfolio quality of two companies over a five-year window may draw exactly the wrong conclusion about which company has built more durable competitive assets [4].
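
The window effect is easy to reproduce. The sketch below uses entirely hypothetical citation dates. It counts forward citations received within a fixed number of years after grant, approximating a year as 365.25 days, and shows how a short window can invert the ranking of an incremental patent and a slow-burning breakthrough.

```python
from datetime import date


def citations_in_window(grant: date, citing_dates: list[date], years: float) -> int:
    """Count forward citations dated within `years` of the grant date
    (a year is approximated as 365.25 days)."""
    limit_days = years * 365.25
    return sum(1 for d in citing_dates if 0 <= (d - grant).days < limit_days)


GRANT = date(2010, 1, 1)
# Hypothetical: the incremental patent is cited early, the breakthrough late.
INCREMENTAL = [date(2011 + i % 4, 6, 1) for i in range(12)] + [date(2018, 6, 1)] * 2
BREAKTHROUGH = [date(2013, 6, 1)] * 3 + [date(2016 + i % 8, 6, 1) for i in range(25)]

if __name__ == "__main__":
    for window in (5, 15):
        inc = citations_in_window(GRANT, INCREMENTAL, window)
        brk = citations_in_window(GRANT, BREAKTHROUGH, window)
        print(f"{window}-year window: incremental={inc}, breakthrough={brk}")
```

With a five-year window the incremental patent scores 12 against 3, so it looks four times stronger. With a fifteen-year window the breakthrough leads 28 to 14.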

The AI/ML Patent Problem: When Disclosure Becomes a Liability

The rise of AI and machine learning in drug discovery has created a specific and genuinely difficult strategic problem for IP protection. Patent law requires that a granted patent provide an ‘enabling’ disclosure—one that would allow a person skilled in the relevant field to practice the invention without ‘undue experimentation’ [3]. For AI/ML innovations in the medical and life sciences space, this requirement creates a painful dilemma.

A recent analysis found that many AI/ML patents in the life sciences fail to specify crucial technical details, such as model architecture, training data characteristics, and hyperparameter choices, that would be necessary for reproducibility [3]. On its face, the choice is binary: file a robust, detailed patent that reveals the core proprietary methodology, or file a vague patent that is legally weak. Many companies are taking a third path instead: protecting their core algorithms as trade secrets, which offer no protection against independent discovery but do not require public disclosure.

The strategic implication for competitive analysts is significant. A competitor’s portfolio of AI/ML patents, particularly if the patents lack technical specificity, may be far weaker than the filing volume suggests. The true competitive moat may lie in trade secrets that are entirely invisible to patent landscape analysis. This means that for AI-driven drug discovery capabilities specifically, patent analysis provides at best a partial picture, and potentially a misleading one.

What Competitors’ Patents Cannot Tell You

There is a category of competitive knowledge that patent analysis structurally cannot provide: innovations that companies choose not to patent, either because they opt for trade secret protection or because the innovation does not meet patentability criteria. Process innovations, software implementations, and certain types of formulation know-how often fall into this category. A patent-centric competitive analysis will systematically miss these assets, creating a blind spot that can distort assessments of competitor strength and the barriers to market entry.

Scientific Literature: The Bedrock, With Cracks

The Dual Role of Academic Research

Scientific literature does not mean the same thing to an innovator drug company and a generic drug company. This is worth stating explicitly because it shapes how the literature should be analyzed for different strategic purposes.

For innovator companies, academic publications are the primary source of novel biological hypotheses [5]. The identification of a new disease-relevant protein, the elucidation of a previously unknown signaling pathway, the discovery of a genetic variant associated with treatment response—these are the building blocks of new drug programs, and they emerge first in academic publications, often years before any company begins internal development work on the resulting targets.

For generic companies, the scientific literature serves a structurally different function. It provides the analytical and methodological foundation for reverse engineering an innovator’s product—the bioequivalence methodologies, the analytical characterization techniques, the formulation science needed to demonstrate that a generic version is therapeutically equivalent [5]. An analyst reading a paper on advanced analytical characterization of a complex injectable formulation might read it as academic science. A generic drug scientist reads it as a development roadmap.

Understanding which lens is appropriate for a given analysis is not obvious. It requires knowing who publishes what, for what ultimate purpose, and with what relationship to ongoing development programs.

Extracting Signals at Scale: From PubMed to Knowledge Graphs

PubMed currently indexes tens of millions of abstracts. No human analyst can read them all, and keyword search is an inadequate substitute for reading, because it misses semantic relationships between concepts. The solution, which is increasingly available through both commercial platforms and open-source tools, is AI-powered knowledge graph construction.

A knowledge graph does not simply index documents. It extracts the biological entities within those documents—specific genes, proteins, drugs, diseases, cell types, pathways—and the relationships between them, then links those entities to the authors who wrote about them, the institutions they work at, and the funding sources supporting their research [6]. This creates a queryable network of scientific knowledge that supports questions no keyword search can answer. Who is publishing most actively on PCSK9 inhibitor mechanisms, and what institutional affiliations do those researchers have? What other disease areas are being explored by the teams publishing on GLP-1 receptor agonist biology, and what does that suggest about future pipeline directions? Which academic groups working on KRAS-targeted therapy have existing consulting or grant relationships with specific companies?
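
The PCSK9 question above reduces to a two-hop traversal. It runs from an entity to the papers that mention it, then from those papers to their authors and the authors’ affiliations. A toy in-memory sketch follows. Production systems would populate the graph with NLP-extracted triples and store it in a graph database. Every paper, author, and institution below is hypothetical.

```python
from collections import Counter, defaultdict


class KnowledgeGraph:
    """Minimal triple store indexed by relation in both directions."""

    def __init__(self) -> None:
        self._out = defaultdict(lambda: defaultdict(set))  # rel -> subject -> objects
        self._in = defaultdict(lambda: defaultdict(set))   # rel -> object -> subjects

    def add(self, subj: str, rel: str, obj: str) -> None:
        self._out[rel][subj].add(obj)
        self._in[rel][obj].add(subj)

    def objects(self, subj: str, rel: str) -> set[str]:
        return set(self._out[rel].get(subj, ()))

    def subjects(self, rel: str, obj: str) -> set[str]:
        return set(self._in[rel].get(obj, ()))


def most_active_authors(kg: KnowledgeGraph, entity: str, top_n: int = 3):
    """Rank authors by number of papers mentioning `entity`, with affiliations."""
    counts = Counter()
    for paper in kg.subjects("mentions", entity):
        for author in kg.objects(paper, "authored_by"):
            counts[author] += 1
    return [(a, n, sorted(kg.objects(a, "affiliated_with"))) for a, n in counts.most_common(top_n)]


kg = KnowledgeGraph()
for triple in [
    ("paper:1", "mentions", "PCSK9"), ("paper:1", "authored_by", "Author A"),
    ("paper:1", "authored_by", "Author B"), ("paper:2", "mentions", "PCSK9"),
    ("paper:2", "authored_by", "Author A"), ("paper:3", "mentions", "GLP-1R"),
    ("paper:3", "authored_by", "Author C"), ("Author A", "affiliated_with", "Institute X"),
    ("Author B", "affiliated_with", "University Y"),
]:
    kg.add(*triple)

if __name__ == "__main__":
    print(most_active_authors(kg, "PCSK9"))
```

Extending the same pattern with funding or consulting edges answers the KRAS question in the paragraph above without changing the traversal logic.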

Tools built on this architecture—including platforms that integrate with PubMed and connect publication data to patent ownership records and clinical trial sponsor information—can identify key opinion leader networks, map the collaboration patterns between academic research and corporate programs, and surface the earliest signals of emerging therapeutic directions. This is the closest thing pharma has to a scientific intelligence early warning system.

The 17-Year Lag: More Complicated Than the Headline

A widely cited statistic holds that it takes an average of 17 years for research evidence to be translated into routine clinical practice [7]. That number is directionally useful—the drug development pipeline is genuinely slow—but it obscures enormous variation and is mechanistically misleading.

The 17-year figure comes from studies of specific interventions, primarily in primary care, and the variation around that mean is large. Some innovations translate in three to five years; others take decades or never make the transition. More importantly, the 17-year framing implies a linear pipeline with a single bottleneck. The reality is a network of translational gaps and feedback loops: from basic biology to target validation, from target validation to preclinical proof-of-concept, from preclinical findings to Phase 1 safety signals, from Phase 1 to Phase 2 mechanistic evidence, and so on [7]. Each transition has its own failure modes and its own probability distribution.

For the integrated intelligence analyst, this means that the publication record alone cannot tell you when a given scientific finding will translate into a clinical program. You need the patent data to see when companies started protecting IP around a target, and the clinical trial registry to see when they started testing it in humans. It is the triangulation that gives you the timeline, not any single source.
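
The triangulation can be sketched by taking the earliest event in each source for one target and measuring the gaps between them. The record format, source labels, target name, and dates below are all hypothetical.

```python
from datetime import date
from typing import Optional


def first_dates(records: list[tuple[str, str, date]], target: str) -> dict[str, date]:
    """Earliest date per source ("publication", "patent", "trial") for one target."""
    earliest: dict[str, date] = {}
    for source, tgt, when in records:
        if tgt == target and (source not in earliest or when < earliest[source]):
            earliest[source] = when
    return earliest


def lag_days(earliest: dict[str, date], start: str, end: str) -> Optional[int]:
    """Days from the first `start` event to the first `end` event, or None if either is missing."""
    if start not in earliest or end not in earliest:
        return None
    return (earliest[end] - earliest[start]).days


RECORDS = [
    ("publication", "TARGET-X", date(2013, 9, 1)),
    ("publication", "TARGET-X", date(2012, 3, 1)),
    ("patent", "TARGET-X", date(2014, 7, 15)),  # earliest priority date
    ("trial", "TARGET-X", date(2017, 2, 1)),    # first registered trial start
    ("trial", "TARGET-Y", date(2015, 5, 1)),
]

if __name__ == "__main__":
    e = first_dates(RECORDS, "TARGET-X")
    for a, b in [("publication", "patent"), ("patent", "trial"), ("publication", "trial")]:
        print(f"{a} -> {b}: {lag_days(e, a, b)} days")
```

Returning None rather than zero for a missing source keeps "no patent activity yet" distinct from "patent filed the same day", which matters when the absence is itself the signal.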

Publication Bias and the Problem of Missing Evidence

The published scientific literature is not an unbiased record of all research conducted. The well-documented tendency to publish positive results while negative and inconclusive studies go unpublished has specific strategic implications in drug development.

The most consequential form of this bias in pharma is selective reporting of clinical trial results. Under FDAAA 2007 and subsequent requirements, sponsors are legally required to register applicable clinical trials and report their results on ClinicalTrials.gov, but compliance has been inconsistent. A 2017 study comparing ClinicalTrials.gov coverage to a commercial database (Trialtrove) found that the commercial platform captured 31% more trials across four selected disease indications, and that the two databases showed wide discrepancies in key data fields (phase, status, and site information) for trials with the same registration numbers in both systems [8].

The strategic takeaway is that no single public database gives you a complete picture of competitor clinical activity. Comprehensive competitive intelligence in clinical development requires cross-referencing multiple sources and accepting that some competitor activity will simply not be visible in any public registry.
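
A minimal sketch of that cross-referencing follows. It assumes each source has been exported to a dictionary keyed by trial registration number. It normalizes case and punctuation before comparing, so that only substantive disagreements and trials present in one source only are reported. The trial IDs and field values are hypothetical.

```python
import re


def _norm(value) -> str:
    """Case- and punctuation-insensitive form, so 'Phase 2' matches 'PHASE2'."""
    return re.sub(r"[^a-z0-9]", "", str(value).lower())


def reconcile(a: dict[str, dict], b: dict[str, dict], fields=("phase", "status")):
    """Return (ids only in a, ids only in b, {id: {field: (a_value, b_value)}})."""
    only_a = sorted(a.keys() - b.keys())
    only_b = sorted(b.keys() - a.keys())
    mismatches = {}
    for trial_id in sorted(a.keys() & b.keys()):
        diffs = {
            f: (a[trial_id].get(f), b[trial_id].get(f))
            for f in fields
            if _norm(a[trial_id].get(f)) != _norm(b[trial_id].get(f))
        }
        if diffs:
            mismatches[trial_id] = diffs
    return only_a, only_b, mismatches


REGISTRY = {
    "NCT90000001": {"phase": "Phase 2", "status": "Recruiting"},
    "NCT90000002": {"phase": "Phase 3", "status": "Completed"},
}
COMMERCIAL = {
    "NCT90000001": {"phase": "PHASE2", "status": "RECRUITING"},
    "NCT90000002": {"phase": "Phase 3", "status": "Terminated"},
    "NCT90000003": {"phase": "Phase 1", "status": "Not yet recruiting"},
}

if __name__ == "__main__":
    print(reconcile(REGISTRY, COMMERCIAL))
```

A coverage gap of the kind the 2017 study describes would appear as entries in the second list, and field-level discrepancies as entries in the third.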

Clinical Trial Data: The Strategy Is Written in the Protocol

The Registry as a Real-Time Intelligence Feed

ClinicalTrials.gov and its international equivalents (the EU Clinical Trials Register, the ISRCTN registry, and others) provide the most direct and forward-looking view of what drugs are being tested in humans and by whom. Combined with commercial platforms like Clarivate’s Cortellis and Informa’s Trialtrove, which offer richer data curation, clinical trial monitoring is the closest thing pharma has to a real-time intelligence feed on competitor pipelines.

The basic utility of this data—knowing which companies are testing which compounds in which indications—is well understood. The more important and less exploited capability is reading trial design as strategy.

Decoding Trial Design as Competitive Strategy

Every clinical trial protocol encodes a set of strategic bets about how a drug will be differentiated, what claims the sponsor intends to make to regulators and payers, and what patient population will define the commercial opportunity. These bets are visible in the protocol text, and most competitive intelligence teams read past them.

Consider endpoint selection. A company running a cancer trial with progression-free survival (PFS) as the primary endpoint is making several simultaneous choices: it believes the effect size on PFS will be large enough to achieve statistical significance in a feasible sample size; it believes regulators will find PFS an acceptable surrogate for overall survival in the relevant tumor type; and it is prioritizing speed to approval over the more definitive evidence that overall survival data would provide. A company that chooses overall survival as the primary endpoint is making the opposite tradeoffs, betting that it can fund a longer, larger trial and that the overall survival benefit will be large enough to justify payer reimbursement at a premium price point relative to existing standards of care.

Both strategies can be correct in the right circumstances. But if your drug is also in development for the same indication, and your competitor has chosen PFS, you now know what they most likely intend to claim, approximately how long it will take them to get there, and where your opportunities for differentiation lie: going head-to-head on the same endpoint with a more convincing effect size, targeting a different patient subgroup, or designing your trial to generate the overall survival data that theirs will not have.

The inclusion and exclusion criteria tell you who the competitor intends to treat. Tight biomarker restrictions signal a precision medicine approach with a defined target population. Broad enrollment signals a bet on a class effect across an unselected population. The choice of comparator arm tells you what standard of care the competitor expects to face at launch and what they believe the market benchmark will be. The secondary endpoints show what claims they are building for the label beyond the primary indication.
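
Reading design choices systematically can start with crude rules. The sketch below tags a simplified trial record by primary endpoint, biomarker selection, and comparator type. The record structure and keyword lists are assumptions for illustration. Keyword matching will misfire on real eligibility text (for example, on exclusion criteria that mention a biomarker), so treat the tags as prompts for an analyst rather than conclusions.

```python
import re
from dataclasses import dataclass

# Illustrative keyword cues for biomarker-restricted enrollment (assumed, not exhaustive).
BIOMARKER_CUES = re.compile(
    r"\b(mutation|mutant|amplification|fusion|positive|overexpress\w*|expression)\b",
    re.IGNORECASE,
)


@dataclass
class TrialDesign:
    primary_endpoint: str
    eligibility: str  # inclusion criteria text
    comparator: str


def design_signals(t: TrialDesign) -> list[str]:
    """Map design choices to the strategic bets described in the text."""
    signals = []
    endpoint = t.primary_endpoint.lower()
    if "overall survival" in endpoint:
        signals.append("endpoint:OS")   # longer, larger trial; strongest evidence for payers
    elif "progression-free survival" in endpoint or re.search(r"\bpfs\b", endpoint):
        signals.append("endpoint:PFS")  # prioritizes speed to approval
    else:
        signals.append("endpoint:other")
    signals.append(
        "population:biomarker-selected" if BIOMARKER_CUES.search(t.eligibility)
        else "population:unselected"
    )
    signals.append(
        "comparator:placebo" if "placebo" in t.comparator.lower()
        else f"comparator:active ({t.comparator})"
    )
    return signals


if __name__ == "__main__":
    t = TrialDesign("Progression-free survival per RECIST 1.1",
                    "Adults with EGFR mutation-positive NSCLC", "docetaxel")
    print(design_signals(t))
```

Running the same tagger over every competitor registration in an indication turns individual protocols into a comparable map of who is betting on speed, who on survival, and who on a selected population.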

None of this analysis requires insider information. It requires reading the publicly available protocol with strategic intent rather than treating the registration as a milestone checkbox.

Monitoring Protocol Amendments as an Early Warning System

A clinical trial registration is not static. Sponsors amend protocols throughout the course of a study, and those amendments are public record. A substantive amendment—changing the primary endpoint, expanding or narrowing the patient population, adding or removing a study arm, extending the enrollment period—is a signal that something has changed in the sponsor’s assessment of their drug or their strategy.

An extended enrollment period with no corresponding increase in sample size suggests unexpected difficulty finding eligible patients. A primary endpoint change partway through a trial is a regulatory red flag that warrants careful analysis; it sometimes indicates that early data were not tracking as expected. An addition of a new study arm may signal that the sponsor is responding to data from a competitor’s trial that redefined the standard of care they need to beat.
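
Those heuristics translate directly into rules over successive snapshots of a registration, for example versions retrieved from a registry’s record history. The snapshot fields below are a simplified, hypothetical schema. The flags mark changes for analyst review and do not diagnose their causes.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Snapshot:
    primary_endpoint: str
    target_enrollment: int
    enrollment_end: date
    arms: frozenset[str] = frozenset()


def amendment_flags(old: Snapshot, new: Snapshot) -> list[str]:
    """Flag substantive changes between two versions of a trial registration."""
    flags = []
    if old.primary_endpoint.strip().lower() != new.primary_endpoint.strip().lower():
        flags.append("primary endpoint changed")
    # Longer enrollment with no larger target suggests difficulty finding patients.
    if new.enrollment_end > old.enrollment_end and new.target_enrollment <= old.target_enrollment:
        flags.append("enrollment extended without larger sample size")
    added, removed = sorted(new.arms - old.arms), sorted(old.arms - new.arms)
    if added:
        flags.append("arm(s) added: " + ", ".join(added))
    if removed:
        flags.append("arm(s) removed: " + ", ".join(removed))
    return flags


if __name__ == "__main__":
    v1 = Snapshot("Progression-free survival", 300, date(2025, 6, 30), frozenset({"drug", "placebo"}))
    v2 = Snapshot("Progression-free survival", 300, date(2026, 3, 31),
                  frozenset({"drug", "placebo", "drug + standard of care"}))
    print(amendment_flags(v1, v2))
```

Run on a schedule across every tracked competitor trial, a rule set like this turns amendments from news items into a queue of structural events awaiting an analytical response.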

Systematic monitoring of protocol amendments, combined with analysis of why the amendments occurred, is one of the most underutilized capabilities in pharmaceutical competitive intelligence. Most organizations track amendments reactively, as interesting news items. The organizations that gain competitive advantage treat them as structural events requiring an analytical response.

Part IV: The Expanded Universe—Real-World Data, Commercial Signals, and Financial Intelligence

Real-World Evidence: What Happens When the Protocol Ends

Clinical trials are designed to produce clean, interpretable evidence under controlled conditions. The patients who enroll in pivotal trials are not typical patients: they tend to be younger, healthier, more treatment-naive, and less burdened by comorbidities than the patients who will actually receive the drug after approval. The treatment environments in clinical trial sites are not typical clinical practices. The careful monitoring of compliance and adverse events in a trial does not reflect the messiness of real-world prescribing.

Real-world data (RWD), drawn from electronic health records, insurance claims, pharmacy dispensing records, and patient registries, fills this gap. It describes how treatments perform in the full breadth of patients who receive them, including populations that were explicitly excluded from clinical trials. It captures long-term safety signals that trials are rarely powered or long enough to detect. It documents the actual treatment patterns that determine how a drug is used, how long patients stay on therapy, what they switch to when the drug fails, and what the real-world treatment pathway looks like before, during, and after the index drug.

<blockquote> ‘By integrating real-world evidence from Flatiron Health’s electronic health record database covering 1,150 patients, Eli Lilly’s analysis revealed considerable heterogeneity in post-therapy treatments and quantified a significant unmet need, with a median overall survival of just 13.2 months—evidence that was instrumental in supporting the accelerated FDA approval of pirtobrutinib for mantle cell lymphoma.’ [9] </blockquote>

This case illustrates the specific strategic value of RWD in the regulatory and commercial context: it provides the evidence base for unmet need claims that clinical trials, by design, cannot generate. A trial tests whether a new treatment is better than a comparator. RWD characterizes what actually happens to patients in the current standard of care, establishing the baseline against which any new treatment’s value is measured.
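
Because many real-world patients are still alive (censored) when the data are cut, median overall survival figures like the one quoted above are usually estimated with the Kaplan-Meier method rather than as a simple median of follow-up times. A minimal sketch on hypothetical follow-up data follows. It takes the median as the first event time at which estimated survival falls to 0.5 or below. A real RWD analysis would also need careful index-date definitions and handling of delayed entry, which this ignores.

```python
def kaplan_meier(times: list[float], events: list[int]) -> list[tuple[float, float]]:
    """Kaplan-Meier survival estimate at each distinct event time.
    times: follow-up (e.g. months); events: 1 = death observed, 0 = censored."""
    data = sorted(zip(times, events))
    at_risk, survival, curve, i = len(data), 1.0, [], 0
    while i < len(data):
        t, deaths, leaving = data[i][0], 0, 0
        while i < len(data) and data[i][0] == t:  # group ties at time t
            deaths += data[i][1]
            leaving += 1
            i += 1
        if deaths:
            # Patients censored at t are still counted as at risk at t.
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve


def median_survival(curve: list[tuple[float, float]]):
    """Smallest event time at which S(t) <= 0.5, or None if the median is not reached."""
    return next((t for t, s in curve if s <= 0.5), None)


if __name__ == "__main__":
    months = [2, 3, 3, 5, 6, 8, 9, 12]  # hypothetical follow-up
    died = [1, 1, 0, 1, 0, 1, 1, 0]     # 0 = censored
    curve = kaplan_meier(months, died)
    print(curve)
    print("median OS (months):", median_survival(curve))
```

Dropping the censored patients, or treating their last follow-up as a death, would bias the median in opposite directions; the estimator exists to avoid exactly that.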

For competitive intelligence, RWD adds a dimension that patent and trial data cannot provide: the real-world competitive landscape, as experienced by physicians and patients rather than as designed by clinical development teams. A drug that performs well in a trial but is used narrowly in practice—perhaps because its side effect profile is manageable only in specialized centers, or because its administration requirements are complex, or because its patient selection criteria in the label are difficult to apply in community oncology—has a very different competitive position than one that is broadly adopted. RWD tells you which of these scenarios is playing out for your competitor’s products and, by extension, what the actual market opportunity looks like for your own.
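
One way RWD exposes these patterns is by following patients through pharmacy dispensing records. The sketch below counts what patients move to after an index drug. The record layout and drug names are hypothetical. It deliberately ignores things a real claims analysis must handle, such as combination regimens, refill gaps, and line-of-therapy rules.

```python
from collections import Counter, defaultdict
from datetime import date

NO_SWITCH = "no switch observed"


def switch_destinations(dispensings, index_drug: str) -> Counter:
    """For patients who received `index_drug`, count the first different drug
    dispensed afterwards (or NO_SWITCH). dispensings: (patient_id, fill_date, drug)."""
    by_patient = defaultdict(list)
    for patient_id, fill_date, drug in dispensings:
        by_patient[patient_id].append((fill_date, drug))
    destinations = Counter()
    for fills in by_patient.values():
        fills.sort()  # chronological order per patient
        seen_index, next_drug = False, None
        for _, drug in fills:
            if drug == index_drug:
                seen_index = True
            elif seen_index:
                next_drug = drug
                break
        if seen_index:
            destinations[next_drug or NO_SWITCH] += 1
    return destinations


FILLS = [
    ("p1", date(2023, 1, 5), "DRUG-A"), ("p1", date(2023, 2, 4), "DRUG-A"),
    ("p1", date(2023, 3, 10), "DRUG-B"),
    ("p2", date(2023, 1, 10), "DRUG-A"), ("p2", date(2023, 4, 1), "DRUG-C"),
    ("p3", date(2023, 2, 1), "DRUG-A"), ("p3", date(2023, 3, 1), "DRUG-A"),
    ("p4", date(2023, 1, 1), "DRUG-B"), ("p4", date(2023, 2, 1), "DRUG-A"),
    ("p4", date(2023, 5, 1), "DRUG-B"),
    ("p5", date(2023, 1, 1), "DRUG-C"),
]

if __name__ == "__main__":
    print(switch_destinations(FILLS, "DRUG-A"))
```

A competitor product whose patients switch away quickly and predominantly to one alternative is a very different commercial threat from one with long persistence, even if the two looked similar in their pivotal trials.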

Commercial Platforms: Where Data Aggregation Meets Analytical Sophistication

A new generation of data and analytics companies has moved well beyond simply selling datasets. Firms like IQVIA, Clarivate, and Optum now offer integrated platforms that combine prescription and sales data, RWD, clinical trial intelligence, patent analytics, and AI-powered services in unified environments.

IQVIA’s MIDAS database, for example, is the pharmaceutical industry’s standard reference for global prescription and sales volumes, covering more than 150 countries [10]. Combined with IQVIA’s clinical trial intelligence tools, it connects the upstream activity of trials with the downstream reality of prescribing patterns. Clarivate’s Cortellis platform integrates patent data, regulatory approval timelines, clinical trial records, and deal intelligence, providing a unified competitive view of individual assets from discovery through commercialization [11].

These platforms matter because they lower the technical cost of data integration for organizations that have not built proprietary integration infrastructure. They are not a substitute for strategic analytical capability—the platform does not tell you what questions to ask or how to interpret what you find—but they dramatically reduce the time spent on data assembly.

Financial Signals and Corporate Communications

Quarterly earnings calls, investor day presentations, and deal announcements are underutilized intelligence sources in the drug development context. They are not technical documents, but they are strategic ones. When a CEO describes which therapeutic areas will receive increased investment in the next planning cycle, that is a pipeline signal. When a company takes a large write-down on a program and provides limited clinical rationale for the decision, that is a pipeline failure signal. When a global pharmaceutical company announces a licensing deal with a Chinese biotech for an asset in a specific mechanism class, that is a signal about where the global leaders believe the next generation of innovation is coming from.

The licensing deal signal is particularly important right now. There has been a notable acceleration in cross-border partnerships between Western pharma companies and Chinese biotechs, driven partly by the maturation of China’s drug development ecosystem and partly by the high cost of internal early-stage research. Companies that track these deals systematically—not just as business news but as indicators of mechanism class bets being made by organizations with significant analytical resources—can extract early-stage pipeline intelligence that is not yet visible in patent filings or clinical registries.
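
Treating deals as data can start with simple counts. The sketch below runs over a hypothetical deal log whose field names are assumptions. It lists the mechanism classes whose deal count rose year over year, optionally restricted to licensors from one region.

```python
from collections import Counter
from typing import Optional


def rising_classes(deals: list[dict], year: int, region: Optional[str] = None) -> list[str]:
    """Mechanism classes with more deals in `year` than in `year - 1`."""
    counts = Counter(
        (d["mechanism"], d["year"])
        for d in deals
        if region is None or d["licensor_region"] == region
    )
    mechanisms = {mechanism for mechanism, _ in counts}
    # Counter returns 0 for missing (mechanism, year) pairs.
    return sorted(m for m in mechanisms if counts[(m, year)] > counts[(m, year - 1)])


DEALS = [
    {"year": 2023, "mechanism": "bispecific antibody", "licensor_region": "China"},
    {"year": 2024, "mechanism": "bispecific antibody", "licensor_region": "China"},
    {"year": 2024, "mechanism": "bispecific antibody", "licensor_region": "China"},
    {"year": 2023, "mechanism": "ADC", "licensor_region": "China"},
    {"year": 2024, "mechanism": "ADC", "licensor_region": "China"},
    {"year": 2024, "mechanism": "GLP-1 agonist", "licensor_region": "US"},
]

if __name__ == "__main__":
    print(rising_classes(DEALS, 2024, region="China"))
```

Year-over-year counts are noisy at small volumes, so in practice this is a screen for classes worth a closer look rather than a trend estimate.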

Part V: Building the Integration Engine—Architecture, Methodology, and the AI Layer

The Technical Challenges Are Real but Surmountable

Building an integrated intelligence platform in a major pharmaceutical company is not primarily a data challenge. It is primarily an organizational challenge that happens to require data infrastructure. The data challenges are significant—volume, silos, standardization gaps, quality inconsistencies, governance requirements—but they are technically solvable with available tools. The organizational barriers are harder.

The specific technical challenges include: data living in purpose-built incompatible systems across R&D, clinical, regulatory, and commercial functions [12]; inconsistent terminologies across those systems (the same drug referred to differently in an EHR, a patent database, and a clinical trial registry); poor data quality from incomplete records and duplicate entries; security and governance requirements that limit data sharing between functions; and the dominance of unstructured text—patents, publications, clinical notes—that standard database tools cannot process.

The standard solutions to these challenges are well established. Normalizing drug names to the RxNorm standard allows integration across pharmacy, EHR, and claims data [13]. Mapping patents to the Cooperative Patent Classification system creates a common vocabulary for technology categorization across geographies [14]. Adopting FAIR data principles—Findable, Accessible, Interoperable, Reusable—provides a governance framework for data that is designed to be used across functions rather than locked within them [15].

Statistical normalization is also necessary when data from different domains enters a shared analytical environment. A raw count of patent citations is not directly comparable to a count of active clinical trials or a PubMed publication volume, because these variables have different natural scales and distributions. Z-score scaling (subtracting the mean and dividing by the standard deviation) or range normalization (rescaling to the 0-to-1 interval) brings them into a common space where they can be weighted and combined without larger-magnitude variables dominating the analysis.
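A minimal sketch of both normalizations in plain Python, using made-up signal values for four hypothetical programs. The equal weights in the composite score are an illustrative assumption; in practice the weighting is a strategic choice, not a statistical one.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Standardize: subtract the mean, divide by the population standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # a constant signal carries no ranking information
    return [(v - mu) / sigma for v in values]


def min_max(values):
    """Range normalization: rescale to the [0, 1] interval."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical signals for four programs, on very different natural scales.
patent_citations = [120, 45, 300, 10]
active_trials = [3, 1, 6, 0]
publications = [850, 200, 1500, 40]

# Z-score each signal, then combine with illustrative equal weights.
signals = [z_scores(s) for s in (patent_citations, active_trials, publications)]
weights = [1 / 3, 1 / 3, 1 / 3]
composite = [
    sum(w * s[i] for w, s in zip(weights, signals))
    for i in range(len(patent_citations))
]
```

Without the scaling step, the publication counts (in the hundreds or thousands) would swamp the trial counts (single digits) in any weighted sum.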

AI and NLP: The Processing Layer

Approximately 80% of enterprise data is unstructured text, and in life sciences, that includes patents, scientific papers, clinical protocols, regulatory submissions, and physician notes [16]. The only scalable way to extract structured intelligence from this text is through natural language processing.

The most relevant NLP capabilities for life sciences intelligence are named entity recognition (identifying mentions of specific drugs, genes, proteins, and diseases in free text), relation extraction (identifying the relationships between those entities, such as ‘Drug A inhibits Protein B’ or ‘Trial C tests Compound D in Indication E’), and document classification (categorizing patents or papers by technology area, mechanism class, or competitive relevance).
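To make the first two capabilities concrete, here is a deliberately simple sketch: entity recognition by lexicon lookup, followed by relation extraction that fires when two typed entities appear in one sentence with a trigger phrase between them. This is not how BioBERT-class models work (they learn these patterns rather than matching strings); the lexicon, trigger phrases, and relation labels are all hypothetical.

```python
import re
from itertools import combinations

# Hypothetical mini-lexicon: lowercase surface form -> entity type.
LEXICON = {
    "sotorasib": "DRUG",
    "adagrasib": "DRUG",
    "kras g12c": "TARGET",
    "nsclc": "DISEASE",
    "colorectal cancer": "DISEASE",
}
# Hypothetical rules: (head type, tail type) -> (trigger phrase, relation label).
RULES = {
    ("DRUG", "TARGET"): ("inhibit", "INHIBITS"),
    ("DRUG", "DISEASE"): ("evaluated in", "TESTED_IN"),
}


def find_entities(sentence):
    """Named entity recognition by exact lexicon match; returns mentions sorted by position."""
    mentions = []
    for term, etype in LEXICON.items():
        for m in re.finditer(r"\b" + re.escape(term) + r"\b", sentence):
            mentions.append((m.start(), m.end(), term, etype))
    return sorted(mentions)


def extract_relations(text):
    """Relation extraction: a typed entity pair plus a trigger phrase between them."""
    triples = set()
    for sentence in re.split(r"(?<=[.!?])\s+", text.lower()):
        mentions = find_entities(sentence)
        for head, tail in combinations(mentions, 2):  # head precedes tail
            rule = RULES.get((head[3], tail[3]))
            if rule and head[1] <= tail[0] and rule[0] in sentence[head[1]:tail[0]]:
                triples.add((head[2], rule[1], tail[2]))
    return triples


text = ("Sotorasib inhibits KRAS G12C. "
        "Adagrasib was evaluated in NSCLC and colorectal cancer.")
relations = extract_relations(text)
```

The output is a set of (subject, relation, object) triples, which is exactly the shape a knowledge graph ingests.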

Tools built on biomedical-domain language models—BioBERT and its successors are the standard examples—perform substantially better on these tasks than general-purpose models, because they are pre-trained on the specific vocabulary and writing conventions of scientific and clinical text [6]. A model that understands that ‘carcinoma,’ ‘neoplasm,’ and ‘malignancy’ all refer to related concepts, and that ‘IC50’ and ‘inhibitory concentration’ describe the same measurement, will produce far better entity extraction results than one trained on general web text.

The downstream application of this extraction capability is knowledge graph construction. Rather than storing patent, literature, and trial data in separate tables, a knowledge graph represents the relationships between entities as explicit connections in a network. Drug A is linked to the patents that protect it, the clinical trials that test it, the scientific papers that describe its mechanism, and the researchers who authored those papers. Company X is linked to the patents it owns, the trials it sponsors, and the academic collaborators it funds. This web of explicit connections supports multi-hop queries that are cumbersome to express and slow to run as chains of joins across separate relational tables: ‘Show me all the academic researchers publishing on KRAS G12C mutations who have received funding from a company that also has an active Phase 2 trial in NSCLC.’ That query, running against a properly constructed knowledge graph, returns an answer in seconds. Running it manually would take weeks.
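The KRAS G12C query quoted above can be sketched as a three-hop traversal: active Phase 2 NSCLC trials, then their sponsors, then the researchers those sponsors fund, intersected with the researchers publishing on the topic. The toy store below indexes triples in both directions; it stands in for a real graph database and query language, and every node and predicate name is hypothetical.

```python
from collections import defaultdict


class TripleStore:
    """A toy in-memory knowledge graph of (subject, predicate, object) triples."""

    def __init__(self, triples):
        self._out = defaultdict(set)  # (subject, predicate) -> objects
        self._in = defaultdict(set)   # (predicate, object) -> subjects
        for s, p, o in triples:
            self._out[(s, p)].add(o)
            self._in[(p, o)].add(s)

    def objects(self, subject, predicate):
        return set(self._out.get((subject, predicate), ()))

    def subjects(self, predicate, obj):
        return set(self._in.get((predicate, obj), ()))


def funded_researchers(kg, topic, phase, indication):
    """Researchers publishing on `topic` and funded by a sponsor of a matching active trial."""
    trials = {
        t for t in kg.subjects("PHASE", phase)
        if indication in kg.objects(t, "INDICATION")
        and "Active" in kg.objects(t, "STATUS")
    }
    sponsors = {c for t in trials for c in kg.subjects("SPONSORS", t)}
    funded = {r for c in sponsors for r in kg.objects(c, "FUNDS")}
    return kg.subjects("PUBLISHES_ON", topic) & funded


# Hypothetical graph contents.
kg = TripleStore([
    ("dr_lee", "PUBLISHES_ON", "KRAS G12C"),
    ("dr_patel", "PUBLISHES_ON", "KRAS G12C"),
    ("dr_chen", "PUBLISHES_ON", "EGFR"),
    ("company_a", "FUNDS", "dr_lee"),
    ("company_a", "FUNDS", "dr_chen"),
    ("company_b", "FUNDS", "dr_patel"),
    ("company_a", "SPONSORS", "trial_1"),
    ("trial_1", "PHASE", "Phase 2"),
    ("trial_1", "INDICATION", "NSCLC"),
    ("trial_1", "STATUS", "Active"),
    ("company_b", "SPONSORS", "trial_2"),
    ("trial_2", "PHASE", "Phase 3"),
    ("trial_2", "INDICATION", "NSCLC"),
    ("trial_2", "STATUS", "Active"),
])

result = funded_researchers(kg, "KRAS G12C", "Phase 2", "NSCLC")
```

Here only `dr_lee` qualifies: `dr_patel` is funded by a company whose NSCLC trial is in Phase 3, and `dr_chen` publishes on a different target.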

Generative AI: Useful, But Not Autonomous

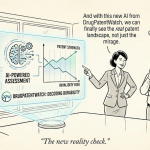

Large language models capable of synthesizing literature and generating draft analyses have significant potential in the intelligence workflow. The McKinsey estimate that generative AI could produce annual value of $60 billion to $110 billion for the pharmaceutical and medical products sector, driven primarily by R&D acceleration and operational efficiency gains, captures the scale of the opportunity [17].

The practical deployment of generative AI in competitive intelligence requires careful guardrails. LLMs are prone to confident factual errors (‘hallucinations’), particularly when they are asked to recall specific data points like patent filing dates, clinical trial results, or drug approval statuses. Grounding LLM outputs in a verified knowledge graph, which in effect requires the model to source every factual claim from the structured network of validated data rather than from its training set, dramatically reduces this failure mode and makes the outputs trustworthy enough for strategic use [18].
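One way to enforce grounding mechanically is to require the model to cite fact IDs from the knowledge graph and to flag any sentence whose citations are missing or unknown. The sketch below assumes the LLM was prompted to append such IDs in square brackets; the fact store, the ID format, and the draft text are hypothetical.

```python
import re

# Verified facts from the knowledge graph, keyed by hypothetical fact IDs.
FACTS = {
    "F1": "Drug X: composition-of-matter patent expires 2031-06-30",
    "F2": "Drug X: active Phase 3 trial in NSCLC",
}
CITATION = re.compile(r"\[(F\d+)\]")
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def grounding_report(draft, facts):
    """Label each draft sentence as grounded only if it cites known fact IDs.

    This is a mechanical gate: it does not check that the sentence faithfully
    restates the cited fact, which still needs human (or model-based) review.
    """
    report = []
    for sentence in SENTENCE_SPLIT.split(draft.strip()):
        cited = CITATION.findall(sentence)
        grounded = bool(cited) and all(fid in facts for fid in cited)
        report.append((sentence, grounded))
    return report


draft = (
    "Drug X loses core patent protection in 2031 [F1]. "
    "Its Phase 3 readout is expected next quarter [F7]. "
    "Competitors are retreating from the class."
)
report = grounding_report(draft, FACTS)
```

The second sentence cites an ID that does not exist and the third cites nothing, so both would be sent back for revision rather than passed to an executive summary.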

What generative AI is genuinely well-suited for, even without perfect grounding, is synthesis and communication: taking a dense set of verified facts extracted from a knowledge graph and producing a clear, structured summary of the competitive landscape for a non-specialist executive audience. A head of corporate development does not need to read 47 patent abstracts. They need a three-paragraph synthesis of what those patents tell us about a competitor’s technological direction and where the IP risks lie. Generative AI, applied to a verified data substrate, can produce that synthesis reliably and at scale.

Part VI: A Practical Methodology for Integrated Intelligence Projects

Phase 1: Define the Business Question First

The most common failure mode in pharma competitive intelligence projects is that they begin with data and end with data. An analyst pulls everything available about a therapeutic area, organizes it into slides, and presents the output as intelligence. It is, in most cases, information at best.

The discipline of integrated intelligence begins with the business question. What decision will be made as a result of this analysis? Who will make it, and what would they need to know to make a better decision than they could without the analysis? How will the answer change the company’s behavior?

The AstraZeneca competitive intelligence practice captures this principle directly: effective intelligence requires working with the ‘intelligence customer to understand up front what decision they will be making’ [19]. This customer-centered framing is not rhetorical. It determines the scope of data collection, the analytical methods applied, and the format of the final output. A question about whether to advance an asset into Phase 3 requires different data and different analysis than a question about which indication to pursue first, or whether to seek a partnership for a specific mechanism class.

Phase 2: Multi-Source Data Collection

Once the strategic question is defined, the data collection scope follows from it. For a typical competitive landscape question, such as ‘assess our competitive position for a PARP inhibitor in HER2-low breast cancer’, the required data sources span at least four domains.

Patent databases (USPTO, EPO, WIPO, and national offices for key markets) provide the IP landscape: who owns what, when the key composition of matter patents expire, and what formulation or method-of-use patents may extend protection. DrugPatentWatch provides a curated, cross-referenced view of pharmaceutical patent term extensions, Orange Book listings, and Para IV challenge histories for FDA-approved drugs—exactly the granular IP data needed to assess the real-world exclusivity timeline for an existing competitor asset [20].

Scientific literature databases (PubMed, Web of Science, Google Scholar) provide the foundational biology: what is known about the mechanism, what does the clinical biology look like in HER2-low tumors specifically, and who are the key academic thought leaders.

Clinical trial registries (ClinicalTrials.gov, EUCTR, and commercial equivalents) provide the competitive pipeline: what is in the clinic, at what stage, with what design choices, and what are the realistic timelines to potential approval.

Real-world evidence databases and commercial data platforms provide the market context: how is the current standard of care actually performing in this patient population, what are physicians dissatisfied with, and what does the prescribing landscape look like.
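The registry leg of this collection is the easiest to script. The sketch below records the four domains as a checklist and builds a search URL for the ClinicalTrials.gov API (version 2). The endpoint and the `query.cond`, `query.intr`, and `pageSize` parameter names reflect that public API as we understand it and should be checked against its current documentation before use; the other domains are left as a checklist because their access routes (commercial licenses, patent-office interfaces) vary by organization.

```python
from urllib.parse import urlencode

# Assumed endpoint for the ClinicalTrials.gov v2 REST API; verify against current docs.
CTGOV_STUDIES = "https://clinicaltrials.gov/api/v2/studies"

# The four collection domains from the text, each tied to the question it answers.
COLLECTION_PLAN = {
    "patents": "Who owns what, and when does key exclusivity expire?",
    "literature": "What is known about the mechanism, and who are the thought leaders?",
    "trial_registries": "What is in the clinic, at what stage, with what design?",
    "real_world_data": "How is the current standard of care actually performing?",
}


def registry_search_url(condition: str, intervention: str, page_size: int = 100) -> str:
    """Build a ClinicalTrials.gov search URL for one condition/intervention pair."""
    params = {
        "query.cond": condition,
        "query.intr": intervention,
        "pageSize": page_size,
    }
    return f"{CTGOV_STUDIES}?{urlencode(params)}"


url = registry_search_url("HER2-low breast cancer", "PARP inhibitor")
```

Results are paginated, so a complete pull needs to loop over pages; the fetch-and-parse step is omitted here.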

Phase 3: Harmonization and Knowledge Graph Construction

The raw data from these sources arrives in inconsistent formats, with inconsistent terminologies and varying levels of completeness. The harmonization step converts this heterogeneous material into a unified analytical substrate.

This is where the investment in data infrastructure pays off. Organizations that have already standardized their drug naming to RxNorm, mapped their disease vocabulary to MedDRA or SNOMED CT, and adopted CPC for patent classification can execute this step in hours. Organizations that have not done this groundwork spend weeks on it—and the final product is less reliable.
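At its core, harmonization maps every source's spelling of a drug to one canonical key and sets aside whatever does not map. The sketch below uses a tiny hand-written synonym table (brand names, development codes, stray trademark symbols and whitespace) as a stand-in for a full RxNorm lookup; the canonical keys are plain ingredient names rather than real RxNorm identifiers, and the record IDs are placeholders.

```python
from collections import defaultdict

# Hand-written stand-in for an RxNorm-style synonym table (illustrative subset only).
SYNONYMS = {
    "olaparib": "olaparib",
    "lynparza": "olaparib",   # brand name
    "azd2281": "olaparib",    # development code
    "niraparib": "niraparib",
    "zejula": "niraparib",    # brand name
}


def canonical(raw_name):
    """Normalize case, trademark symbols, and whitespace, then look up the canonical key."""
    key = " ".join(raw_name.lower().replace("®", "").split())
    return SYNONYMS.get(key)


def harmonize(sources):
    """sources maps a source name to a list of (raw_drug_name, record_id) pairs."""
    merged = defaultdict(lambda: defaultdict(list))
    unmatched = []  # routed to manual review instead of being silently dropped
    for source, records in sources.items():
        for raw_name, record_id in records:
            key = canonical(raw_name)
            if key is None:
                unmatched.append((source, raw_name, record_id))
            else:
                merged[key][source].append(record_id)
    return merged, unmatched


# Placeholder records; the IDs do not refer to real patents, trials, or papers.
SOURCES = {
    "patents": [("Lynparza", "PAT-001"), ("niraparib", "PAT-002")],
    "trials": [("AZD2281", "TRIAL-A"), ("Zejula®", "TRIAL-B"),
               ("Olaparib ", "TRIAL-C"), ("Drug-Q", "TRIAL-D")],
    "papers": [("olaparib", "PAPER-1")],
}
merged, unmatched = harmonize(SOURCES)
```

Keeping an explicit `unmatched` list is the design choice that matters: silent drops are how integrated datasets quietly lose the one competitor record that mattered.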

The construction of a knowledge graph from this harmonized data—linking drugs to patents, patents to scientific papers, papers to authors, authors to companies, companies to clinical trials—creates the analytical foundation for genuinely integrated intelligence.

Phase 4: Integrated Analysis and Multi-Layered Visualization

Analysis of the integrated dataset should produce outputs that are impossible to generate from any single data source. Multi-layered heat maps that plot patent filing density against clinical trial activity reveal the disconnects between IP ambition and clinical execution: areas where many companies are protecting IP but few are advancing clinical programs, or vice versa. Integrated timelines that track an individual asset from first patent filing to first publication to Phase 1 initiation reveal how long competitors’ development cycles actually are, enabling more accurate forecasting of future launch timing [21].
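The integrated timeline reduces to date arithmetic once each milestone has been pulled from its own domain (patent office, literature, registry). A minimal sketch with hypothetical assets and dates; months are approximated as 30.44 days.

```python
from datetime import date
from statistics import median

DAYS_PER_MONTH = 30.44  # average Gregorian month length
MILESTONES = ["first_patent", "first_publication", "phase1_start"]

# Hypothetical competitor assets with milestone dates drawn from three domains.
ASSETS = {
    "asset_a": {"first_patent": date(2016, 3, 1),
                "first_publication": date(2017, 9, 15),
                "phase1_start": date(2018, 11, 1)},
    "asset_b": {"first_patent": date(2017, 1, 10),
                "first_publication": date(2017, 12, 1),
                "phase1_start": date(2019, 6, 20)},
    "asset_c": {"first_patent": date(2018, 5, 5),
                "first_publication": date(2020, 2, 1),
                "phase1_start": date(2021, 4, 15)},
}


def stage_gaps(milestones):
    """Months elapsed between consecutive milestones for one asset."""
    return {
        f"{a} -> {b}": round((milestones[b] - milestones[a]).days / DAYS_PER_MONTH, 1)
        for a, b in zip(MILESTONES, MILESTONES[1:])
    }


def median_patent_to_phase1(assets):
    """Median months from first patent filing to Phase 1 start across assets."""
    return median(
        (m["phase1_start"] - m["first_patent"]).days / DAYS_PER_MONTH
        for m in assets.values()
    )


gaps = {name: stage_gaps(m) for name, m in ASSETS.items()}
typical_lag = median_patent_to_phase1(ASSETS)
```

A competitor-specific lag like `typical_lag` is the kind of execution-history input that later feeds launch forecasting.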

Network visualizations that map the connections between key opinion leaders, their research publications, their academic collaborations, and their corporate consulting relationships reveal the informal intellectual infrastructure of a therapeutic area—who the real scientific shapers are, and what they think about the biology and the mechanism.
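The network behind such a visualization can start as simply as a co-authorship adjacency map. The sketch below ranks authors by degree (number of distinct collaborators), the crudest centrality measure, and then overlays disclosed consulting ties. Author names and relationships are hypothetical; graph libraries such as NetworkX provide richer measures (betweenness, eigenvector centrality) for real analyses.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical author lists from publications in one therapeutic area.
PAPERS = [
    ["Alvarez", "Brook", "Chen"],
    ["Alvarez", "Dunn"],
    ["Alvarez", "Chen", "Evans"],
    ["Foster", "Dunn"],
]
# Hypothetical disclosed consulting relationships.
CONSULTS_FOR = {"Alvarez": {"company_a"}, "Chen": {"company_a", "company_b"}}


def coauthor_graph(papers):
    """Undirected co-authorship graph as an adjacency map."""
    graph = defaultdict(set)
    for authors in papers:
        for a, b in combinations(sorted(set(authors)), 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph


def rank_by_degree(graph):
    """Rank authors by number of distinct collaborators (ties broken by name)."""
    return sorted(graph, key=lambda name: (-len(graph[name]), name))


graph = coauthor_graph(PAPERS)
ranking = rank_by_degree(graph)
industry_linked = [name for name in ranking if name in CONSULTS_FOR]
```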

The value of these visualizations is not aesthetic. It is the ability to see patterns across domains that are invisible when each domain is analyzed separately.

Phase 5: Insight Generation and Strategic Recommendations

The final step is the one that most intelligence projects spend too little time on: translating the analytical findings into specific, actionable recommendations that address the original business question.

This requires moving from description (‘here is what the landscape looks like’) to interpretation (‘here is what it means for our specific situation’) to prescription (‘here is what we recommend you do about it’). The interpretation and prescription steps require human judgment about competitive dynamics, organizational capabilities, risk tolerance, and strategic priorities. AI and data platforms can accelerate the descriptive and some of the interpretive work. The strategic synthesis remains a human responsibility.

Part VII: Strategic Applications—What You Can Actually Do With This

Landscape Analysis: The Multi-Dimensional Map

Traditional pharmaceutical landscaping produces a one-dimensional picture: a list of competitors in an indication, organized by phase of development. An integrated landscape analysis produces a genuinely multi-dimensional picture that reveals the structure of the competitive terrain rather than just its current population.

The overlay of patent filing activity against clinical trial starts identifies where the science is maturing (high patent activity, increasing clinical starts) versus where it is stalling (high patent activity, low clinical translation). The overlay of publication volumes against company affiliations reveals which academic research programs are linked to corporate development activities and which represent unaffiliated work that any company could partner around or build upon.

A domain with high late-stage clinical activity but sparse recent patent filings is a mature, commoditized field where the core IP is largely established—a signal that new investment should focus on differentiation strategies rather than fundamental technology development. A domain with high patent activity but no clinical-stage programs yet is either too early for clinical translation or facing significant technical barriers. The two situations call for different strategic responses, and the distinction is visible only in the integrated view.
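The two diagnostic patterns above can be written as a simple rule over three counts per domain. The thresholds and labels below are hypothetical and would need calibrating against each therapeutic area's baseline activity.

```python
def classify_domain(recent_patents, clinical_programs, late_stage_trials,
                    patent_threshold=50, late_stage_threshold=10):
    """Place a technology domain on the patent-versus-clinic map.

    Thresholds are hypothetical defaults; calibrate them per therapeutic area.
    """
    high_patents = recent_patents >= patent_threshold
    if late_stage_trials >= late_stage_threshold and not high_patents:
        return "mature: compete on differentiation"
    if high_patents and clinical_programs == 0:
        return "pre-clinical: too early or technically blocked"
    if high_patents:
        return "maturing: science is translating to the clinic"
    return "quiet: low activity on both axes"


# Hypothetical domains: (recent patent filings, clinical programs, late-stage trials).
DOMAINS = {
    "domain_a": (12, 30, 15),
    "domain_b": (120, 0, 0),
    "domain_c": (80, 6, 2),
    "domain_d": (5, 1, 0),
}
labels = {name: classify_domain(*counts) for name, counts in DOMAINS.items()}
```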

Predictive Competitive Timeline Modeling

Forecasting when a competitor’s drug will reach the market is one of the most commercially consequential questions in pharmaceutical strategy. A company launching a drug in a category where a superior competitor product is three years behind them has a very different commercial opportunity than one where the competitor is three months behind.

The integrated data enables substantially better timeline forecasting than any single source. By triangulating a competitor’s foundational patent filing date, the date of their first publication establishing the mechanistic rationale, the date their IND was filed or Phase 1 initiated, and the current trial phase and enrollment status, an analyst can build a probabilistic timeline model that incorporates both industry-average phase durations and competitor-specific execution history. The result is a probability distribution over potential launch dates rather than a single point estimate, which is far more useful for commercial planning [21].
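A minimal Monte Carlo sketch of such a probabilistic timeline. Each remaining stage gets a success probability and a triangular duration distribution (minimum, most likely, maximum); the numbers below are placeholders, not published benchmarks, and competitor-specific execution history would enter by adjusting them. The output is a probability of launch plus a distribution of launch timing, in months from today, conditional on success.

```python
import random
from statistics import quantiles

# Placeholder stage assumptions (NOT benchmark data): name, probability of
# success, and min / most likely / max duration in months.
REMAINING_STAGES = [
    ("Phase 2", 0.35, 18, 26, 40),
    ("Phase 3", 0.60, 24, 36, 54),
    ("Regulatory review", 0.90, 10, 12, 18),
]
N_RUNS = 20_000


def simulate_launch_months(stages, n_runs=N_RUNS, seed=7):
    """Return months-to-launch for every simulated path that clears all stages."""
    rng = random.Random(seed)
    launches = []
    for _ in range(n_runs):
        elapsed = 0.0
        for _name, p_success, shortest, likely, longest in stages:
            elapsed += rng.triangular(shortest, longest, likely)
            if rng.random() > p_success:
                break  # program fails at this stage; no launch on this path
        else:
            launches.append(elapsed)  # survived every stage
    return launches


launches = simulate_launch_months(REMAINING_STAGES)
p_launch = len(launches) / N_RUNS
deciles = quantiles(launches, n=10)
p10, p50, p90 = deciles[0], deciles[4], deciles[8]
```

Reporting the 10th, 50th, and 90th percentiles gives commercial planners a range of launch dates, and `p_launch` (about 0.19 under these placeholder probabilities) says how much weight that range deserves.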

IQVIA’s annual Global Trends in R&D report provides industry benchmark data on phase durations and transition probabilities by therapeutic area that can calibrate these models [21]. Combined with competitor-specific historical data available in clinical trial registries and patent databases, the resulting forecasts are substantially more accurate than the rough heuristics (‘Phase 3 takes three years’) that most commercial teams use.
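One simple way to combine an industry benchmark with a competitor's own track record is a shrinkage estimate: a weighted average in which the benchmark counts as a fixed number of pseudo-observations. This is an illustrative choice, not a method prescribed by the IQVIA report; the pseudo-count of 3 and all durations are assumptions.

```python
from statistics import mean


def blended_duration(benchmark_months, observed_months, prior_weight=3.0):
    """Shrink a competitor's own phase durations toward an industry benchmark.

    prior_weight is a pseudo-count: the benchmark is treated as if it were
    that many additional observed programs. With no history, the benchmark
    is returned unchanged; with a long history, the competitor's own mean
    dominates.
    """
    n = len(observed_months)
    if n == 0:
        return float(benchmark_months)
    return (prior_weight * benchmark_months + n * mean(observed_months)) / (prior_weight + n)


# Hypothetical: a 36-month Phase 3 benchmark, and a competitor whose two
# previous Phase 3 programs took 28 and 30 months.
estimate = blended_duration(36, [28, 30])  # (3 * 36 + 2 * 29) / 5
```

Two fast programs pull the estimate to about 33 months rather than all the way to 29, which is the point: a short track record should move the forecast, not replace the benchmark.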

Portfolio Triage and Go/No-Go Decision Support

The integrated intelligence framework is most valuable when it is applied to your own portfolio decisions rather than exclusively to competitor analysis. The most expensive mistakes in pharmaceutical R&D are not made in the laboratory—they are made in portfolio review meetings when promising assets are advanced into expensive late-stage development without adequate analysis of the competitive landscape they will face at launch.

A Phase 3 trial for a new molecular entity in oncology typically costs hundreds of millions of dollars. Once enrollment begins, the decision to run that trial is, in practical terms, irreversible. Making that decision without a rigorous assessment of what the competitive landscape will look like when the trial completes, three to five years in the future, is strategically negligent.

The integrated intelligence framework addresses this gap by projecting the competitive environment at expected launch. If the analysis shows that three competitors with similar mechanisms of action are in Phase 2 and 3 trials today, and if their expected launch timelines place them in the market 12 to 18 months before your own projected launch, the commercial opportunity for your asset may be materially smaller than the pipeline stage analysis suggests. That information, presented to a portfolio review committee with specific data on the competitors’ clinical designs and commercial positioning, changes the nature of the go/no-go conversation.
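The projection in this scenario can be sketched in a few lines: compute how far ahead of your launch each competitor is projected to be, then weight each earlier entrant by its probability of reaching market to get the expected number of competitors already on the market when you arrive. Dates and probabilities are hypothetical.

```python
from datetime import date

# Hypothetical competitors: projected launch date and probability of reaching market.
COMPETITORS = [
    ("competitor_1", date(2027, 3, 1), 0.55),
    ("competitor_2", date(2027, 9, 1), 0.40),
    ("competitor_3", date(2029, 6, 1), 0.30),
]
OWN_LAUNCH = date(2028, 9, 1)
DAYS_PER_MONTH = 30.44  # average Gregorian month length


def lead_months(own_launch, competitors):
    """Months by which each competitor is projected to precede us (negative = after us)."""
    return {name: round((own_launch - launch).days / DAYS_PER_MONTH)
            for name, launch, _p in competitors}


def expected_prior_entrants(own_launch, competitors):
    """Expected number of competitors on the market before our launch."""
    return sum(p for _name, launch, p in competitors if launch < own_launch)


leads = lead_months(OWN_LAUNCH, COMPETITORS)
expected_ahead = expected_prior_entrants(OWN_LAUNCH, COMPETITORS)
```

Here two competitors are projected 18 and 12 months ahead, and roughly one of them (0.95 in expectation) should be on the market at launch: a materially different planning assumption from "we are first in class".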

Clinical Trial Design Optimization

Competitor intelligence on trial design should directly inform the design of your own clinical programs. If comprehensive review of competitors’ trials in a given indication reveals that enrollment in biomarker-positive patient populations is systematically slower than planned—a signal visible in protocol amendments extending enrollment periods—your own trial design can preemptively account for this by either broadening eligibility criteria or concentrating site selection in geographic markets with better biomarker testing infrastructure [22].
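Slippage of this kind can be measured from registry histories. The sketch assumes you have already extracted, for each competitor trial, the primary completion date as first registered and as most recently updated (ClinicalTrials.gov keeps a history of record changes; the extraction step is not shown). The trial records are hypothetical.

```python
from datetime import date
from statistics import median

DAYS_PER_MONTH = 30.44

# Hypothetical registry history: originally registered vs. latest primary completion date.
TRIALS = [
    {"id": "T1", "biomarker_selected": True,
     "original": date(2024, 6, 1), "latest": date(2025, 3, 1)},
    {"id": "T2", "biomarker_selected": True,
     "original": date(2024, 1, 1), "latest": date(2024, 10, 1)},
    {"id": "T3", "biomarker_selected": False,
     "original": date(2024, 5, 1), "latest": date(2024, 7, 1)},
    {"id": "T4", "biomarker_selected": False,
     "original": date(2023, 11, 1), "latest": date(2023, 11, 1)},
]


def slip_months(trial):
    """Months the primary completion date has moved since first registration."""
    return (trial["latest"] - trial["original"]).days / DAYS_PER_MONTH


def median_slip(trials, biomarker_selected):
    """Median slip for biomarker-selected (True) or all-comer (False) trials."""
    slips = [slip_months(t) for t in trials if t["biomarker_selected"] == biomarker_selected]
    return median(slips) if slips else None


selected = median_slip(TRIALS, True)     # about 9 months
all_comers = median_slip(TRIALS, False)  # about 1 month
```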

If competitor data shows that a specific primary endpoint has repeatedly failed to achieve regulatory acceptance in a particular tumor type, your trial design should not repeat that approach. If competitors are all running single-arm trials in a rare indication where response rate is the primary endpoint, and you have data suggesting a survival benefit that a randomized trial could demonstrate, your path to differentiation is a trial design that generates evidence theirs does not.

AI-driven platforms that mine historical trial data to identify the design features most predictive of trial success—in terms of both probability of meeting primary endpoints and probability of regulatory approval—provide empirical guidance for these design decisions [1]. This moves clinical trial design from a discipline driven primarily by experience and convention toward one informed by systematic learning from historical outcomes.
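As a first pass at that kind of learning, the sketch below computes a smoothed success rate for each design feature from a table of historical trials. The history is invented, and the add-one (Laplace) smoothing is an illustrative choice; single-feature rates ignore confounding between features, which is why production platforms fit multivariable models instead.

```python
from collections import defaultdict

# Hypothetical historical trials: design features and whether the primary endpoint was met.
HISTORY = [
    ({"randomized", "biomarker_enriched"}, True),
    ({"randomized"}, False),
    ({"single_arm", "biomarker_enriched"}, True),
    ({"single_arm"}, False),
    ({"randomized", "biomarker_enriched"}, True),
    ({"single_arm"}, True),
]


def success_rate_by_feature(history, alpha=1, beta=1):
    """Smoothed success rate per feature: (successes + alpha) / (trials + alpha + beta)."""
    wins = defaultdict(int)
    total = defaultdict(int)
    for features, met_endpoint in history:
        for feature in features:
            total[feature] += 1
            wins[feature] += int(met_endpoint)
    return {f: (wins[f] + alpha) / (total[f] + alpha + beta) for f in total}


rates = success_rate_by_feature(HISTORY)
```

Smoothing keeps a feature seen in only one or two trials from being reported as a 0% or 100% success factor.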

Part VIII: Case Studies—Where Integrated Intelligence Actually Worked

Eli Lilly and Pirtobrutinib: RWD Meets Regulatory Strategy

The development of pirtobrutinib for mantle cell lymphoma (MCL) illustrates exactly how real-world evidence, integrated with clinical development strategy, can shape both regulatory and commercial outcomes. MCL is a rare, aggressive B-cell lymphoma with limited options for patients who have progressed after standard first-line therapy. The treatment landscape was poorly characterized in the real-world setting, making it difficult to quantify unmet need with precision.

Lilly’s team used retrospective analysis of electronic health record data from Flatiron Health’s database, covering 1,150 real-world MCL patients, to characterize the post-therapy treatment landscape and patient outcomes [9]. The analysis demonstrated substantial variability in treatment patterns after initial therapy failure and, critically, documented a median overall survival of 13.2 months—data that established the severity of the unmet need in regulatory terms.

This RWD package became a key component of the FDA submission that supported pirtobrutinib’s accelerated approval. The integration of real-world evidence with clinical development strategy is not a post-approval activity here: it shaped the regulatory narrative during development. That is the practical application of integrated intelligence in a commercial context.

GSK and Niraparib: Extending Clinical Evidence with Real-World Data

GSK’s PARP inhibitor niraparib had pivotal Phase 3 data from the NOVA trial establishing its efficacy in recurrent ovarian cancer maintenance therapy. What the NOVA trial data could not provide, by virtue of its design, was evidence on how niraparib performed relative to the common clinical practice of active surveillance in patients who were not enrolled in trials—typically older, with more comorbidities, and representing the actual community oncology population.

GSK used Flatiron’s real-world EHR data to conduct a comparative effectiveness analysis of niraparib patients versus a real-world active surveillance cohort [9]. The analysis showed a statistically meaningful survival benefit for niraparib users, not only validating the NOVA trial’s findings but extending them to a broader and more demographically representative patient population. This real-world evidence package strengthened the drug’s reimbursement and formulary positioning by providing evidence that the trial benefit translated to the broader population payers were covering.

This case shows the integration of clinical trial data with real-world evidence in the post-approval phase as a commercial strategy tool, not merely a pharmacovigilance exercise.

The AML Case: 20 Months to 20 Days

A major pharmaceutical company developing a treatment for acute myeloid leukemia (AML) was performing the kind of multi-source data integration that the convergence framework describes—but doing it manually. The team was integrating internal clinical trial data with public resources like the Cancer Cell Line Encyclopedia to identify optimal dose ranges and correlate drug characteristics with patient outcomes. Doing this manually, across heterogeneous data sources in incompatible formats, took 20 months [23].

Cognizant’s AI team built an automated data pipeline that could ingest, normalize, and analyze the same combination of internal and external data. The result was a reduction in that analysis timeline from 20 months to 20 days [23]. The pipeline developers projected that this acceleration, applied across the full oncology development program, could reduce total development timelines by up to four years.

Up to four years of development time is not an abstract efficiency gain. Because patent terms run from filing, and much of that term is consumed during development, each year saved in development can become an additional year of market exclusivity; here, that could mean three to four additional years. For a drug with $1 billion in annual sales, that is $3 billion to $4 billion in revenue that the siloed, manual approach would have left on the table.

Immunology Launch Intelligence: Agile CI in Real Time

A biopharma company launching a new immunology drug in a crowded market—the type of situation where multiple biologics compete aggressively and physicians are accustomed to frequent new launches—found its traditional launch intelligence model inadequate. The model produced quarterly brand-tracking reports, which arrived six to eight weeks after the data was collected. In a market where competitive dynamics were shifting weekly, that lag meant the commercial team was consistently responding to last quarter’s situation rather than the current one.

The company rebuilt its intelligence approach around integrated, weekly synthesis of primary market research with healthcare professionals, secondary commercial data on prescribing trends, and competitive signal monitoring across clinical trial registries and scientific publications [24]. The result was a weekly intelligence product that identified emerging issues—a competitor’s new clinical data publication, a formulary decision affecting physician access, a safety signal attracting media attention—in time for the commercial team to respond.

The direct output included development of new educational campaigns to address physician questions about the drug’s mechanism of action, and differentiated communications for different prescriber segments based on real-time insight into which physicians were switching from which competitors and why. This is integrated intelligence as a commercial operations tool rather than a strategic planning exercise.

Part IX: The ROI of Building the Intelligence Moat

Quantifying the Returns

The business case for integrated intelligence investment rests on a set of quantifiable mechanisms, each of which has empirical support even if the precise magnitude varies by organization.

AI applied to drug discovery and preclinical research can reduce costs in those stages by 25% to 50%, according to multiple published estimates [25]. That range is wide, and the lower end is probably more realistic for organizations at early stages of AI implementation, but even a 25% reduction in preclinical costs represents a meaningful improvement in portfolio productivity for a company running a large discovery operation.

Two-thirds of pharmaceutical professionals believe AI has the potential to improve R&D productivity by 26% or more [26]. That statistic captures an expectation rather than a demonstrated outcome, but the volume and consistency of this expectation across a large, sophisticated professional community are themselves informative.

AI in clinical trials, specifically for patient enrollment, site selection, and protocol design, can deliver approximately 20% cost improvement and 10% to 20% faster enrollment [17]. Given that Phase 3 enrollment cost and duration are among the primary drivers of late-stage development expense, these improvements translate directly to millions of dollars in cost reduction per pivotal program.

The most compelling empirical evidence comes from analysis of the Chinese pharmaceutical industry, where a one-unit increase in an AI-adoption index correlated with a 0.05 to 0.06 increase in new drug output per billion yuan of R&D investment [27]. That is a modest absolute effect size, but it is measured against the backdrop of an industry where any consistent improvement in R&D productivity represents enormous value at scale.

Some analysts projected that by 2025, 30% of new drugs would be discovered using AI [25]. That projection predates 2025, and there is genuine debate about whether the timeline was optimistic. But the directional trend, toward AI as a standard component of the discovery workflow rather than a niche capability, is not in dispute.

The Organizational Prerequisites

The technical infrastructure for integrated intelligence is necessary but not sufficient. The organizational conditions that allow it to produce value are equally important and harder to establish.

The most critical organizational prerequisite is cross-functional data access. An intelligence platform that integrates patent, clinical, and scientific data creates value only if the people making portfolio decisions can actually access and use it. In most large pharmaceutical companies, those people are portfolio executives who do not sit within any of the functional groups that own the underlying data. Building the organizational permission structure and governance framework to give strategic decision-makers access to integrated intelligence across functional boundaries requires explicit executive sponsorship and is genuinely difficult to achieve.

The second prerequisite is a decision-focused framing for intelligence work. The AstraZeneca model—starting with the decision, not the data—requires competitive intelligence professionals to engage proactively with decision-makers before conducting the analysis. That engagement changes the professional dynamic of the CI function from a data service to a strategic advisory capability. It also requires decision-makers to articulate what they need more clearly than is comfortable, because it exposes the assumptions underlying the decision to scrutiny.

The third prerequisite is investment in data quality infrastructure over time. Integrated intelligence is no better than the underlying data, and the underlying data in most organizations contains inconsistencies, gaps, and duplicates that accumulate over years of siloed data management. The payoff from systematic investment in data quality compounds over time, but the investment is required before the payoff materializes.

Part X: What the Next Five Years Look Like

The AI-Powered Intelligence Engine as Standard Infrastructure

The current state of pharmaceutical competitive intelligence is dominated by manually assembled reports, periodic landscape analyses, and reactive monitoring of competitor activities. The trajectory is toward continuously updated, AI-powered intelligence systems that deliver synthesized insights directly into the decision workflows of R&D and commercial teams.

Platforms like Northern Light’s SinglePoint already represent this future state: enterprise-wide systems that continuously aggregate and structure data from internal and external sources, with AI processing working in the background to identify patterns and surface relevant insights, and natural language interfaces that allow non-specialist users to query the system without requiring data or analytics expertise [28].

In this model, a portfolio review meeting is not preceded by a two-week data pull by the competitive intelligence team. It is preceded by a two-hour review of the AI system’s current-state analysis, updated in real time, which the strategy team then interrogates with specific questions about the most important strategic uncertainties.

The Specific Role of Specialized Data Platforms

General-purpose AI platforms and data warehouses provide the infrastructure layer. Specialized data platforms—of which DrugPatentWatch is a prime example in the pharmaceutical IP domain—provide the domain-specific data quality and analytical structure that makes the integration tractable.

DrugPatentWatch’s specific value in an integrated intelligence framework is that it has already done the difficult work of mapping the pharmaceutical patent landscape to FDA regulatory data: connecting Orange Book patent listings to specific drug products, linking patent expirations to marketed drugs, tracking Paragraph IV challenges and their litigation outcomes, and providing structured data on patent term extensions. This pre-structured, high-quality data integrates far more efficiently into an enterprise intelligence platform than raw patent database outputs that require extensive cleaning and interpretation before they can be used analytically.

Specialized platforms like DrugPatentWatch serve as the ‘ground truth’ layer for specific data domains—the authoritative, curated source that the broader integration engine can trust without having to validate from first principles.

The Intelligence Moat in Practice

The organizations building durable competitive advantage in pharmaceutical intelligence are not doing so by acquiring better individual data sources. They are building the capability to integrate and act on data faster and more accurately than competitors. That capability is an organizational competency that takes years to develop and is difficult to replicate quickly.

The compounding effect of superior intelligence manifests in portfolio decisions made with better information: fewer late-stage failures because competitive analysis identified overcrowded markets earlier; faster development timelines because AI-assisted trial design improved enrollment and protocol efficiency; more defensible IP positions because integrated patent analysis identified design-around opportunities before competitors did; and more precise commercial launches because real-world evidence characterized the market opportunity before launch rather than after.

None of these benefits is dramatic on a single decision. Cumulatively, over a decade of portfolio management, they represent the difference between a company that consistently builds valuable, defensible franchises and one that consistently invests in the wrong assets at the wrong time.

Key Takeaways

The core argument of this analysis reduces to a small number of actionable conclusions for pharmaceutical executives, portfolio managers, and competitive intelligence professionals.

Siloed intelligence infrastructure is structurally incapable of answering the questions that matter. The decisions that determine whether a company builds a valuable franchise—target selection, indication sequencing, clinical trial design, go/no-go at Phase 2, commercial launch strategy—all require simultaneous insight from patent, clinical, scientific, and commercial data. No single data source provides that insight, and manual synthesis across sources is too slow and too inconsistent to be reliable.

Patent data is forensic evidence, not just legal documentation. The technical disclosure in a competitor’s patent reveals their biological hypothesis, their manufacturing approach, and their IP strategy. The citation profile of a patent reveals its foundational significance. The completeness of its technical disclosure signals how legally robust it is likely to be under challenge. Reading patents as strategy documents rather than legal documents requires analytical training, but it generates intelligence that is available nowhere else.

Clinical trial protocols encode entire commercial strategies. Endpoint choice, patient population definition, comparator arm selection, and biomarker stratification are not administrative choices. They are strategic bets that reveal a company’s hypothesis about how its drug will be differentiated and what claims it intends to make to regulators, payers, and physicians. Systematic analysis of these design choices across the competitive landscape yields intelligence about competitor intent, often years before market research can detect it.

Real-world evidence is a regulatory and commercial strategy tool, not just a pharmacovigilance exercise. The pirtobrutinib case and the niraparib case both demonstrate that RWD, integrated with clinical development strategy, can define unmet need, support accelerated approval, and strengthen payer positioning. Companies that treat RWD as a post-approval safety activity are systematically underusing it.

The AI and knowledge graph layer is the force multiplier. An analyst can manually integrate two or three data sources for one or two competitors. An AI-powered knowledge graph system can integrate five data sources for an entire competitive landscape, continuously, and surface patterns that a human analyst working source by source is unlikely to detect. The value is not in replacing human strategic judgment. It is in giving the strategist a far richer information substrate against which to exercise that judgment.

Specialized platforms are the ground truth layer. Services like DrugPatentWatch, Flatiron, Clarivate Cortellis, and IQVIA provide pre-structured, high-quality domain-specific data that integrates far more reliably into enterprise intelligence systems than raw database outputs. Investing in these specialized sources is not a luxury for organizations with large intelligence budgets; it is the foundation of integration quality for any organization.

The organizational and cultural prerequisites are as important as the technical ones. An integrated intelligence platform creates value only if decision-makers have access to it, trust it, and are organizationally positioned to act on what it tells them. Building that environment requires explicit executive sponsorship, cross-functional data governance, and a decision-focused framing for the intelligence function—not just data management improvements.
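The knowledge-graph takeaway can be made concrete with a deliberately small sketch. It assumes that patent, trial, and publication records have already been normalized to a shared target identifier, and it represents the graph as a plain Python adjacency map rather than a graph database. The record fields, identifiers, and function names are hypothetical illustrations, not the schema of any platform named above.

```python
from collections import defaultdict

# Hypothetical records, assumed to be pre-normalized so that every source
# refers to the same biological target by the same identifier.
RECORDS = [
    {"source": "patent", "doc_id": "PAT-001", "target": "TARGET-A"},
    {"source": "trial", "doc_id": "TRIAL-001", "target": "TARGET-A"},
    {"source": "publication", "doc_id": "PUB-001", "target": "TARGET-A"},
    {"source": "patent", "doc_id": "PAT-002", "target": "TARGET-B"},
    {"source": "publication", "doc_id": "PUB-002", "target": "TARGET-B"},
]

REQUIRED_SOURCES = ("patent", "trial", "publication")


def build_graph(records):
    """Link each target node to the (source, document) nodes that mention it."""
    graph = defaultdict(set)
    for rec in records:
        graph[rec["target"]].add((rec["source"], rec["doc_id"]))
    return graph


def sources_by_target(graph):
    """Collapse each target's neighbors into the set of source types present."""
    return {target: {source for source, _ in docs} for target, docs in graph.items()}


def targets_with_full_coverage(graph, required=REQUIRED_SOURCES):
    """Targets with evidence in every required source type."""
    needed = set(required)
    return sorted(t for t, srcs in sources_by_target(graph).items() if needed <= srcs)


def coverage_gaps(graph, required=REQUIRED_SOURCES):
    """Map each incompletely covered target to its missing source types."""
    needed = set(required)
    gaps = {}
    for target, srcs in sources_by_target(graph).items():
        missing = needed - srcs
        if missing:
            gaps[target] = sorted(missing)
    return gaps


graph = build_graph(RECORDS)
print(targets_with_full_coverage(graph))  # ['TARGET-A']
print(coverage_gaps(graph))               # {'TARGET-B': ['trial']}
```

In this toy data, TARGET-B has patent and publication activity but no registered trial: IP and science are moving while nothing has reached the clinic, which is the kind of cross-source pattern a single-source report would miss. A real system would add many more node and edge types (companies, indications, endpoints) and run continuously over live feeds.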

FAQ

Q1: If a company relies heavily on trade secrets rather than patents for its AI/ML drug discovery methods, how does that change how you should analyze their competitive position?

It changes it substantially. Trade secrets are invisible to patent landscape analysis, which means a competitor whose core advantage is a proprietary AI model for target identification or compound optimization will look weaker in a patent-based competitive analysis than they actually are. The analytical response is to look for indirect signals of AI capability: the quality of the compounds they are advancing to clinical stage (which reflects the quality of their discovery process, even if the process itself is opaque), the specific biological targets they are pursuing (which may reflect the output of novel AI-driven analysis that is ahead of published science), and the institutional talent they are hiring (patent engineers, machine learning researchers, structural biologists). These indirect signals, combined with an explicit acknowledgment that trade secrets create a structural blind spot in patent analysis, produce a more accurate competitive assessment than treating the absence of AI/ML patents as evidence of weak AI capability.

Q2: How should a mid-size biotech with limited intelligence resources prioritize which data domains to integrate first?

Start with clinical trial data, because it is the most directly linked to near-term competitive threats and it is publicly available at no cost through ClinicalTrials.gov. Systematic monitoring of competitor trial registrations and protocol amendments provides actionable intelligence on pipeline and strategy with relatively low analytical overhead. The second priority is patent data, particularly through platforms like DrugPatentWatch that provide pre-structured, curated pharmaceutical IP data rather than raw patent database access, whose output is expensive to interpret correctly without specialized expertise. Scientific literature integration, particularly NLP-based knowledge graph construction, requires more technical investment and is best treated as a second-stage build, undertaken once the foundational patent and clinical monitoring infrastructure is established and generating value.
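Protocol-amendment monitoring can start as a simple snapshot comparison. The sketch below assumes you periodically export competitor trial records (for example, from ClinicalTrials.gov) into dictionaries keyed by trial identifier; the field names are simplified placeholders, not the registry's actual data schema, and the trial IDs and watched-field list are hypothetical.

```python
from dataclasses import dataclass, field

# Fields whose changes we treat as strategically meaningful (an assumption;
# adjust to the questions your team actually needs answered).
WATCHED_FIELDS = ("primary_endpoint", "enrollment_target", "status", "comparator")


@dataclass
class ChangeReport:
    new_trials: list = field(default_factory=list)
    removed_trials: list = field(default_factory=list)
    amendments: dict = field(default_factory=dict)  # trial_id -> {field: (old, new)}


def diff_snapshots(previous, current, watched=WATCHED_FIELDS):
    """Compare two registry snapshots ({trial_id: record}) and summarize changes."""
    report = ChangeReport()
    report.new_trials = sorted(set(current) - set(previous))
    report.removed_trials = sorted(set(previous) - set(current))
    for trial_id in sorted(set(previous) & set(current)):
        old, new = previous[trial_id], current[trial_id]
        changed = {f: (old.get(f), new.get(f)) for f in watched if old.get(f) != new.get(f)}
        if changed:
            report.amendments[trial_id] = changed
    return report


last_week = {
    "TRIAL-1": {"primary_endpoint": "ORR", "enrollment_target": 300,
                "status": "Recruiting", "comparator": "placebo"},
}
this_week = {
    "TRIAL-1": {"primary_endpoint": "PFS", "enrollment_target": 450,
                "status": "Recruiting", "comparator": "placebo"},
    "TRIAL-2": {"primary_endpoint": "OS", "enrollment_target": 600,
                "status": "Not yet recruiting", "comparator": "standard of care"},
}
report = diff_snapshots(last_week, this_week)
print(report.new_trials)   # ['TRIAL-2']
print(report.amendments)
# {'TRIAL-1': {'primary_endpoint': ('ORR', 'PFS'), 'enrollment_target': (300, 450)}}
```

A switch in primary endpoint or a jump in enrollment target is the kind of amendment worth escalating to the strategy team; restricting the diff to a short list of watched fields keeps routine administrative edits from drowning out those signals.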

Q3: What is the most common failure mode in pharmaceutical competitive intelligence projects, and how does the convergence framework address it?

The most common failure mode is answering the wrong question with precision. Most CI projects are scoped to describe ‘the landscape’ rather than to answer a specific strategic question that a specific decision-maker needs answered by a specific date. The result is voluminous, detailed, accurate, and largely irrelevant output. The convergence framework addresses this by mandating a strategic alignment phase at the start of every project that defines the business question, the decision-maker, the decision timeline, and the criteria that specify how a better answer would change what the organization does. This phase is uncomfortable because it forces explicit articulation of assumptions and priorities, but it is the step that determines whether the final output gets used or filed.
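One lightweight way to enforce the strategic alignment phase is to refuse to start a project until its charter names all four elements listed in the answer. The sketch below is a hypothetical illustration using a Python dataclass; the class name, field names, and sample values are assumptions, not part of any standard CI framework.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ProjectCharter:
    """Hypothetical charter capturing the four alignment elements."""
    business_question: str
    decision_maker: str
    decision_date: Optional[date]
    # Criteria that state how a better answer would change what the organization does.
    decision_criteria: List[str] = field(default_factory=list)

    def missing_elements(self, today):
        """Return the alignment elements that are absent or unusable as of `today`."""
        missing = []
        if not self.business_question.strip():
            missing.append("business_question")
        if not self.decision_maker.strip():
            missing.append("decision_maker")
        # A decision date that has already passed cannot anchor the project.
        if self.decision_date is None or self.decision_date <= today:
            missing.append("decision_date")
        if not self.decision_criteria:
            missing.append("decision_criteria")
        return missing

    def is_ready(self, today):
        """A project may start only when no alignment element is missing."""
        return not self.missing_elements(today)


# A "describe the landscape" request fails the check...
landscape_scan = ProjectCharter(
    business_question="Describe the oncology competitive landscape",
    decision_maker="",
    decision_date=None,
)
print(landscape_scan.missing_elements(date(2025, 1, 15)))
# ['decision_maker', 'decision_date', 'decision_criteria']

# ...while a decision-anchored question passes.
go_no_go = ProjectCharter(
    business_question="Should our Phase 2 asset enter Phase 3 in first-line or second-line?",
    decision_maker="Head of Portfolio Strategy",
    decision_date=date(2025, 6, 30),
    decision_criteria=["Choose second-line if a competitor first-line readout precedes ours"],
)
print(go_no_go.is_ready(date(2025, 1, 15)))  # True
```

The check is purely structural: it cannot judge whether the business question is a good one, which still requires the uncomfortable conversation described above. What it does prevent is a project launching with no named decision-maker, no deadline, and no stated way for the answer to change anything.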

Q4: How do you handle data quality problems when integrating pharmaceutical data sources that use different terminologies, different database standards, and different levels of completeness?

Data quality problems in life sciences integration are not solved once and then forgotten; they are managed continuously. The practical approach is to invest in a small number of high-quality master data standards—RxNorm for drug names, MedDRA or SNOMED CT for disease classification, CPC for patent technology areas, ORCID or a similar persistent identifier for researchers—and to apply those standards consistently at the point of data ingestion rather than during analysis. This means establishing clear data governance policies for which standard applies to each entity type, building automated mapping tools that convert incoming data from source-system terminologies to those standards, and running regular data quality audits that identify inconsistencies and track resolution. The FAIR principles (Findable, Accessible, Interoperable, Reusable) provide a useful governance framework for this ongoing process.
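Applying a standard at the point of ingestion can be as simple as a lookup that maps source-system spellings to one canonical name and routes anything unmapped to a review queue for the next data quality audit. This is a minimal sketch: the synonym table is a tiny hand-built stand-in for a real terminology such as RxNorm, the function names are hypothetical, and "ABC-123" is an invented internal code used to show the unmapped path.

```python
import re

# Tiny illustrative synonym table; a real pipeline would map to RxNorm
# concept identifiers rather than to canonical name strings.
DRUG_SYNONYMS = {
    "keytruda": "pembrolizumab",
    "mk-3475": "pembrolizumab",
    "pembrolizumab": "pembrolizumab",
    "opdivo": "nivolumab",
    "bms-936558": "nivolumab",
    "nivolumab": "nivolumab",
}


def clean(term):
    """Lowercase, trim, and collapse internal whitespace before lookup."""
    return re.sub(r"\s+", " ", term.strip().lower())


def normalize_batch(raw_terms, synonyms=DRUG_SYNONYMS):
    """Map each incoming drug term to its canonical name.

    Returns (normalized, unmapped): normalized holds (raw, canonical) pairs;
    unmapped collects terms that need human review in the next audit.
    """
    normalized, unmapped = [], []
    for raw in raw_terms:
        canonical = synonyms.get(clean(raw))
        if canonical is None:
            unmapped.append(raw)
        else:
            normalized.append((raw, canonical))
    return normalized, unmapped


incoming = ["KEYTRUDA", " MK-3475 ", "Opdivo", "ABC-123"]
normalized, unmapped = normalize_batch(incoming)
mapping_rate = len(normalized) / len(incoming)  # a simple metric to track across audits

print(normalized)
# [('KEYTRUDA', 'pembrolizumab'), (' MK-3475 ', 'pembrolizumab'), ('Opdivo', 'nivolumab')]
print(unmapped)      # ['ABC-123']
print(mapping_rate)  # 0.75
```

Keeping the raw term next to its canonical value preserves provenance, so an audit can trace any downstream inconsistency back to the source spelling that produced it.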

Q5: Can integrated patent and clinical intelligence realistically predict which therapeutic areas will produce the next generation of blockbuster drugs, or is drug innovation fundamentally unpredictable at that level?

It can substantially improve the odds of identifying the right areas early, without guaranteeing correct predictions. The historical record shows that the key scientific breakthroughs underpinning major drug franchises—checkpoint immunotherapy, GLP-1 receptor agonists, KRAS targeting, RNA interference—were visible in the academic literature years before the first pivotal trial data. Companies that were systematically monitoring the scientific publication record in those areas, cross-referencing emerging academic findings with early-stage patent filings and pre-clinical data, were in a position to enter those categories earlier than those relying on clinical-stage pipeline tracking. But the relationship between scientific activity and commercial success is not deterministic. Many promising biological mechanisms fail in clinical development for reasons that cannot be predicted from the pre-clinical and publication record. The most defensible claim for integrated intelligence is not that it predicts winners, but that it significantly increases the density of evidence available for strategic bets, thereby improving the quality of portfolio decisions made under fundamental uncertainty.
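Cross-referencing the publication record with early-stage patent filings can be sketched as a simple screen: flag mechanisms whose publication output is accelerating while patent filings remain sparse. Everything here is an assumption for illustration, including the mechanism labels, the yearly counts, and both thresholds; it is one possible heuristic, not a validated forecasting model.

```python
# Hypothetical annual activity per mechanism:
# {mechanism: {year: (publication_count, patent_filing_count)}}
ACTIVITY = {
    "MECHANISM-1": {2019: (10, 0), 2020: (14, 1), 2021: (25, 1), 2022: (40, 2)},
    "MECHANISM-2": {2019: (50, 20), 2020: (52, 22), 2021: (49, 25), 2022: (51, 24)},
}

PUBS, PATENTS = 0, 1  # tuple positions in each yearly entry


def window_sum(series, years, index):
    """Sum one column over the given years, treating missing years as zero."""
    return sum(series.get(year, (0, 0))[index] for year in years)


def early_signals(activity, recent, prior, min_pub_growth=2.0, max_recent_patents=5):
    """Flag mechanisms whose publication output is accelerating while patent
    filings remain sparse. Both thresholds are illustrative assumptions."""
    flagged = []
    for mechanism, series in activity.items():
        pubs_recent = window_sum(series, recent, PUBS)
        pubs_prior = window_sum(series, prior, PUBS)
        patents_recent = window_sum(series, recent, PATENTS)
        if pubs_prior:
            growth = pubs_recent / pubs_prior
        else:
            # No prior publications: any recent output counts as unbounded growth.
            growth = float("inf") if pubs_recent else 0.0
        if growth >= min_pub_growth and patents_recent <= max_recent_patents:
            flagged.append((mechanism, round(growth, 2), patents_recent))
    return flagged


print(early_signals(ACTIVITY, recent=(2021, 2022), prior=(2019, 2020)))
# [('MECHANISM-1', 2.71, 3)]
```

A flag is a prompt for closer reading, not a forecast. Consistent with the answer above, many flagged mechanisms will still fail in development for reasons the publication and patent record cannot reveal; the screen only raises the density of evidence behind the decision to look harder.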

References

[1] Clinical Leader. (2025, January). Biopharma R&D faces productivity and attrition challenges in 2025. https://www.clinicalleader.com/doc/biopharma-r-d-faces-productivity-and-attrition-challenges-in-2025-0001

[2] Danforth Advisors. (n.d.). Transforming pharmaceutical data into strategic intelligence. https://www.danforthadvisors.com/resources/pharmaceutical-data-integration-strategy/

[3] Mintz. (2025, February 24). Patenting AI/ML life sciences and TechBio innovations: How much disclosure is required? https://www.mintz.com/insights-center/viewpoints/2231/2025-02-24-patenting-aiml-life-sciences-and-techbio-innovations-how

[4] Katila, R. (n.d.). Using patent data to measure radical innovative performance. Stanford University. https://web.stanford.edu/~rkatila/new/pdf/KatilaUsingpatentdata.pdf

[5] DrugPatentWatch. (n.d.). The role of academic research in generic drug development. https://www.drugpatentwatch.com/blog/the-role-of-academic-research-in-generic-drug-development/

[6] Xu, J., Kim, S., Song, M., Jeong, M., Kim, D., Kang, J., Rousseau, J. F., Li, X., Xu, W., Torvik, V. I., Bu, Y., Chen, C., Ebeid, I. A., Li, D., & Ding, Y. (2020). Building a PubMed knowledge graph. Scientific Data, 7, 205. https://pmc.ncbi.nlm.nih.gov/articles/PMC7320186/

[7] Morris, Z. S., Wooding, S., & Grant, J. (2011). The answer is 17 years, what is the question: understanding time lags in translational research. Journal of the Royal Society of Medicine, 104(12), 510-520. https://pmc.ncbi.nlm.nih.gov/articles/PMC3241518/

[8] Evaluating the completeness of ClinicalTrials.gov. (2018). PubMed. https://pubmed.ncbi.nlm.nih.gov/30048602/

[9] Flatiron Health. (n.d.). Oncology case studies: How two life sciences companies applied real-world evidence to drive innovation. https://resources.flatiron.com/real-world-evidence/oncology-case-studies-real-world-evidence

[10] IQVIA. (n.d.). MIDAS. https://www.iqvia.com/solutions/commercialization/data-and-information-management/midas

[11] Clarivate. (n.d.). Cortellis Clinical Trials Intelligence. https://clarivate.com/life-sciences-healthcare/research-development/discovery-development/cortellis-clinical-trials-intelligence/

[12] WhizAI. (n.d.). 7 challenges & solutions for life sciences commercial analytics. https://www.whiz.ai/resources/blog/the-top-7-challenges-with-life-sciences-commercial-analytics

[13] Wolters Kluwer. (n.d.). Four medication use cases that require a data normalization solution. https://www.wolterskluwer.com/en/expert-insights/4-medication-use-cases-that-require-a-data-normalization-solution

[14] IPCheckups. (n.d.). How to perform a patent landscape analysis in 5 key steps. https://www.ipcheckups.com/patent-landscape-analysis-how-to-5-steps/

[15] Rädler, S., Rauniyar, S., Schneider, J., Phuyal, S., & Benis, A. (2023). Challenges and best practices for digital unstructured data enrichment in health research: A systematic narrative review. PLOS ONE. https://pmc.ncbi.nlm.nih.gov/articles/PMC10566734/

[16] Drug Development and Delivery. (n.d.). Natural language processing: How life sciences companies are leveraging NLP from molecule to market. https://drug-dev.com/natural-language-processing-how-life-sciences-companies-are-leveraging-nlp-from-molecule-to-market/

[17] McKinsey & Company. (n.d.). Generative AI in the pharmaceutical industry: Moving from hype to reality. https://www.mckinsey.com/industries/life-sciences/our-insights/generative-ai-in-the-pharmaceutical-industry-moving-from-hype-to-reality

[18] Digital Science. (2024, January). Fragmented knowledge in pharma: Bridging the divide between private and public data. https://www.digital-science.com/blog/2024/01/fragmented-knowledge-in-pharma-bridging-the-divide-between-private-and-public-data/

[19] Octopus Intelligence. (n.d.). Expert quotes on competitive intelligence. https://www.octopusintelligence.com/competitive-intelligence-expert-quotes/

[20] DrugPatentWatch. (n.d.). A business professional’s guide to drug patent searching. https://www.drugpatentwatch.com/blog/the-basics-of-drug-patent-searching/

[21] IQVIA. (2025, June). Global trends in R&D 2025: Signs of higher efficiency and productivity. https://www.iqvia.com/blogs/2025/06/global-trends-in-r-and-d-2025-signs-of-higher-efficiency-and-productivity

[22] Applied Clinical Trials Online. (n.d.). Clinical trials as a competitive edge: Strategic considerations. https://www.appliedclinicaltrialsonline.com/view/clinical-trials-as-a-competitive-edge-strategic-considerations

[23] Cognizant. (n.d.). Data science and AI fast-track cancer drug development: Case study. https://www.cognizant.com/us/en/case-studies/data-science-ai-cancer-drug-development

[24] Day One Strategy. (n.d.). Optimising brand performance in a hyper-competitive immunology market. https://www.dayonestrategy.com/case-studies/optimising-brand-performance-in-a-hyper-competitive-immunology-market/

[25] World Economic Forum. (2025, January). How 2025 can be a year of progress for biopharma. https://www.weforum.org/stories/2025/01/2025-can-be-a-pivotal-year-of-progress-for-pharma/

[26] ICON plc. (2019, September 19). Can AI improve pharma R&D productivity? https://www.iconplc.com/insights/blog/2019/09/19/can-ai-improve-rd-productivity

[27] Li, H., et al. (2025). The impact of AI adoption on R&D productivity: Evidence from Chinese pharmaceutical manufacturing industry. Journal of Asian Economics, 97. https://ideas.repec.org/a/eee/asieco/v97y2025ics1049007825000144.html

[28] Northern Light. (n.d.). The state of competitive intelligence in pharma: Key trends for 2025. https://northernlight.com/competitive-intelligence-in-pharma-key-trends/