Pharmaceutical executives are losing control of their product narratives. While marketing teams focus on search engine optimization and field sales, a new intermediary has entered the information flow between the industry and its customers. Large language models like ChatGPT, Claude, and Gemini increasingly act as a first-stop interface for physicians, patients, and analysts. These systems do not just retrieve information. They synthesize it. In that synthesis, accuracy is often lost.

Your drug brand is likely being misrepresented at this moment. AI models frequently invent side effects, miscalculate patent expiration dates, or suggest off-label uses that have no clinical backing. These errors are not rare edge cases. They are fundamental to how probabilistic models function. For a sector built on regulatory compliance and precise data, this shift represents a significant threat to intellectual property and market share.

The Probability Problem in Medical Accuracy

Large language models do not have a concept of truth. They predict the most likely next token (roughly, the next word or word fragment) in a sequence based on patterns in their training data. When a physician asks an AI for the dosing schedule of a complex biologic, the model provides a response that sounds authoritative. The syntax is perfect, but the content can be a “hallucination” that blends old clinical trials with unrelated medical literature.
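A toy sketch makes the mechanism concrete. The candidate completions and their scores below are invented for illustration and do not come from any real model; real systems work over tokens and far larger vocabularies. The point is only that sampling from a probability distribution will sometimes pick a fluent but wrong continuation.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical candidates for completing "The recommended dose is ..."
candidates = ["10 mg", "100 mg", "10 mg/kg"]
logits = [2.0, 1.0, 0.5]  # invented scores; assume "10 mg" is the correct answer

probs = softmax(logits)

# Draw 1,000 completions with a fixed seed so the run is repeatable.
rng = random.Random(0)
samples = rng.choices(candidates, weights=probs, k=1000)
wrong_share = sum(s != "10 mg" for s in samples) / len(samples)

for text, p in zip(candidates, probs):
    print(f"{text!r}: probability {p:.2f}")
print(f"share of sampled answers that are wrong: {wrong_share:.2f}")
```

Even when the correct answer is the single most likely option, the other options keep a nonzero probability, so some fraction of sampled responses will be wrong while reading just as confidently.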

We see this problem manifest in two ways. First, models rely on training data that has a cutoff date. If your drug received a new indication or a boxed warning six months ago, a model without live retrieval probably does not know it. Second, these models struggle with “numerical grounding.” They can confuse weight-based dosing (milligrams per kilogram) with flat dosing. This creates a liability gap where the manufacturer’s official labeling is bypassed by an AI-generated summary.
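The size of a unit mix-up is easy to underestimate, so here is the arithmetic as a short sketch. The numbers are hypothetical and chosen only to show the calculation; they are not taken from any product label.

```python
def weight_based_dose_mg(mg_per_kg: float, weight_kg: float) -> float:
    """Total dose in mg for a weight-based regimen."""
    return mg_per_kg * weight_kg

# Hypothetical values, not from any real label.
LABEL_MG_PER_KG = 5.0   # the imaginary label says 5 mg/kg
MISREAD_FLAT_MG = 5.0   # a summary that drops "/kg" reports a flat 5 mg

for weight_kg in (50.0, 70.0, 110.0):
    intended = weight_based_dose_mg(LABEL_MG_PER_KG, weight_kg)
    ratio = intended / MISREAD_FLAT_MG
    print(
        f"{weight_kg:.0f} kg patient: intended {intended:.0f} mg, "
        f"misread as {MISREAD_FLAT_MG:.0f} mg ({ratio:.0f}x gap)"
    )
```

Dropping two characters from the unit changes the dose by a factor equal to the patient's weight in kilograms, which is why a fluent summary is no substitute for the label.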

How AI Distorts the Patent Landscape

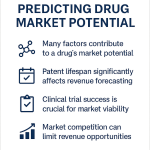

Intellectual property is the bedrock of pharmaceutical valuation. Investors use patent data to model future cash flows and generic entry risks. When AI models provide incorrect patent information, it can trigger market volatility or lead to poor strategic decisions by competitors and partners.

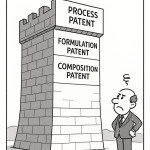

Most general-purpose AI models fail to distinguish between different types of patents. They might confuse a formulation patent with the primary composition-of-matter patent, or treat the expiry of one patent as the end of all protection. This confusion can lead the AI to report the wrong expiry, for example suggesting that a drug is going “off-patent” years before its actual exclusivity ends. By using tools like DrugPatentWatch, analysts can verify Paragraph IV certifications and patent term extensions against the record. Relying on an unverified LLM answer for this data invites costly strategic and legal mistakes.

The Hidden Risk of Competitive Misalignment

AI models do not treat brands in isolation. They compare them. If a user asks for a comparison between your product and a competitor, the AI will generate a table or a summary. These summaries often rely on “sentiment” rather than “statistics.”

If a competitor has a more aggressive digital presence or more frequently cited papers in the training set, the AI may favor them. This is not a result of superior efficacy. It is a result of data volume. Your brand might have better safety data, but if the AI perceives a “consensus” around a competitor due to high-volume SEO content, it will repeat that bias to every inquiring physician.

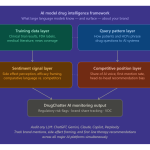

Monitoring the Invisible Conversation With DrugChatter

You cannot fix what you cannot see. Most pharmaceutical companies have no visibility into what LLMs are telling users about their drugs. Traditional social media monitoring tools cannot track these conversations because they happen in private, one-on-one sessions between a user and a chatbot.

This is where DrugChatter becomes essential. It provides a window into the prevailing narratives within generative AI environments. By tracking how models respond to prompts about specific therapies, companies can identify emerging misinformation. If a model starts telling users that a specific oncology drug is ineffective for a certain mutation, the manufacturer needs to know immediately. This allows for targeted medical affairs outreach to correct the record through official channels.

The Collapse of the Traditional Information Funnel

For decades, the path to drug information was linear. A physician went to a medical journal, a conference, or a sales representative. Today, that funnel has collapsed into a single chat box. This “zero-click” environment means your carefully crafted website is rarely seen.

The AI reads your site, parses the data, and presents a truncated version to the user. In this process, the nuance of clinical trial results is often lost. A “15% improvement in progression-free survival” might be reported by an AI as “a slight improvement,” or worse, omitted entirely. You are no longer competing with other brands; you are competing with the AI’s ability to summarize you accurately.

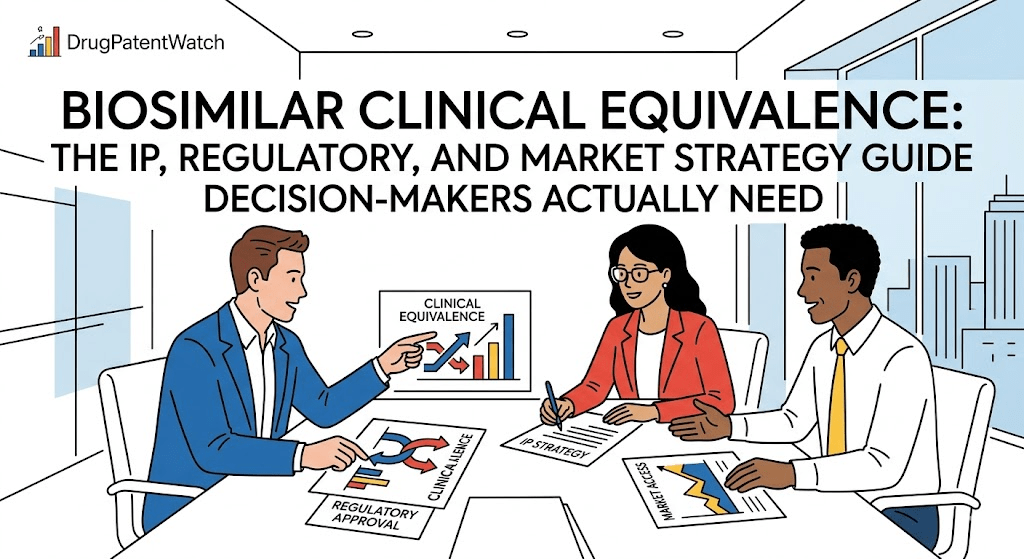

IP Analysts Must Become AI Auditors

The role of the IP analyst is shifting. It is no longer enough to track the Orange Book or the Purple Book. You must now audit how that data is reflected in the digital models that shape market perception.

<blockquote>

“Nearly 70% of medical professionals report using large language models to assist with administrative tasks or clinical summaries at least once a week, yet only 10% of pharmaceutical companies have a formal strategy to monitor AI-generated brand misinformation.” [1]

</blockquote>

This gap creates a vacuum. When official data is missing or poorly indexed, the AI fills the void with whatever it can find. This often includes predatory open-access journals or outdated trial registries.

Four Ways AI Misrepresents Your Brand Today

- Indication Drifting: AI models often conflate a drug’s approved indications with its pipeline candidates. This leads to users believing a drug is approved for a condition that is still in Phase II.

- Side Effect Amplification: If a drug has a rare but widely discussed side effect, AI models tend to give it disproportionate weight. They lack the clinical judgment to explain the benefit-risk ratio.

- Pricing Inaccuracy: Models frequently quote outdated WAC (Wholesale Acquisition Cost) or confuse it with net pricing. This misleads patients and payers about the actual cost of therapy.

- Generic Entry Prediction: As discussed in the patent section above, models often guess at patent expiry. This affects the perceived “moat” around a brand and can influence institutional investors.

The Danger of Programmatic Medical Advice

Regulatory bodies like the FDA have strict rules about how drugs are marketed. However, these rules are difficult to apply to an AI that is not owned by the pharmaceutical company. If a physician uses a chatbot to determine if a drug is appropriate for a patient, the manufacturer has no control over the “sales pitch.”

The AI might ignore contraindications. It might suggest a dose that hasn’t been tested in certain populations. While the AI companies include disclaimers, the reality is that users treat these outputs as expert advice. This creates a new category of “off-label” risk that is driven by algorithms rather than human intent.

Case Study: The Biologic Patent Trap

A major biologic manufacturer recently discovered that a popular AI model was telling users its primary patent had expired. In reality, the company had secured a patent term extension that added three years of exclusivity.

The AI had relied on an old news article published around the patent’s original filing date and calculated the 20-year term from that point. It failed to account for the regulatory delays that triggered the extension. For six months, the market “priced in” a generic entry that wasn’t coming. The company only discovered the error after using DrugPatentWatch to audit the AI’s claims against the actual legal record.
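The gap between the naive calculation and the extended term can be sketched as follows. The filing date and extension length are hypothetical, and the model is deliberately simplified: it assumes a 20-year term measured from the filing date plus an extension expressed in days, and it ignores patent term adjustments, terminal disclaimers, priority claims, and regulatory exclusivities, all of which matter in practice.

```python
from datetime import date, timedelta

def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:  # Feb 29 in a non-leap target year
        return d.replace(year=d.year + years, day=28)

def nominal_expiry(filing_date: date) -> date:
    """Naive expiry: 20 years from the filing date, nothing else considered."""
    return add_years(filing_date, 20)

def extended_expiry(filing_date: date, extension_days: int) -> date:
    """Naive expiry plus an extension given in days (simplifying assumption)."""
    return nominal_expiry(filing_date) + timedelta(days=extension_days)

# Hypothetical inputs for illustration only.
filing = date(2005, 3, 15)
extension_days = 3 * 365  # roughly the three years described in the case

naive = nominal_expiry(filing)
actual = extended_expiry(filing, extension_days)

print(f"naive expiry:    {naive.isoformat()}")
print(f"extended expiry: {actual.isoformat()}")
print(f"gap in days:     {(actual - naive).days}")
```

A model that stops at the first function reports an expiry roughly three years too early. The correction itself is trivial arithmetic; the hard part is knowing that the extension exists, which is why the legal record has to be the source.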

Data Integrity in the Age of Synthetic Content

The internet is becoming flooded with synthetic content. AI models are now being trained on data generated by other AI models. This feedback loop, often discussed under the label “model collapse,” degrades output quality and reinforces errors. If one AI incorrectly states a drug is contraindicated with a common beta-blocker, and that statement is quoted in an AI-generated blog post, the next generation of models may treat that error as a confirmed fact.

Pharmaceutical companies must prioritize “data primacy.” This means ensuring that their official clinical data is available in formats that AI models can ingest easily and accurately. This includes structured data, JSON feeds, and clear, unambiguous language on medical affairs portals.

Two Strategies for Brand Protection

- Active Surveillance: Use DrugChatter to run regular queries on your brand. Identify where the model’s logic breaks down and where it consistently gets facts wrong.

- Reference Grounding: Direct your medical science liaisons to provide “canonical links” to physicians. Encourage them to use specific databases like DrugPatentWatch for IP queries rather than general AI tools.
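The surveillance loop in the first strategy can be sketched in a few lines. This is a minimal illustration, not DrugChatter's interface: `ask_model` is a hypothetical callable standing in for whatever system returns a model's answer, and the product name, prompts, and expected phrases are invented.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Check:
    prompt: str
    expected_phrases: List[str]  # facts taken from the official record

def audit(ask_model: Callable[[str], str], checks: List[Check]) -> List[Dict]:
    """Run each prompt and report answers that omit an expected fact."""
    findings = []
    for check in checks:
        answer = ask_model(check.prompt)
        missing = [
            phrase for phrase in check.expected_phrases
            if phrase.lower() not in answer.lower()
        ]
        if missing:
            findings.append(
                {"prompt": check.prompt, "answer": answer, "missing": missing}
            )
    return findings

def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model; returns canned answers."""
    canned = {
        "When does the main patent on ExampleDrug expire?":
            "The patent expired in 2025.",
        "Is ExampleDrug approved for condition X?":
            "Yes, ExampleDrug is approved for condition X.",
    }
    return canned.get(prompt, "")

checks = [
    Check("When does the main patent on ExampleDrug expire?", ["2028"]),
    Check("Is ExampleDrug approved for condition X?", ["approved"]),
]

findings = audit(stub_model, checks)
for finding in findings:
    print(f"MISMATCH on {finding['prompt']!r}: missing {finding['missing']}")
```

Substring matching is crude: an answer saying “not approved” would still pass the second check. A production audit would need human review or stricter comparison, but even this rough loop turns an invisible conversation into a list of items for medical affairs to examine.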

The Shift From SEO to AIO

Search Engine Optimization (SEO) is about ranking. AI Optimization (AIO) is about “weighting.” You want the AI to give your official data the highest weight when it generates a response. This requires a different technical approach.

AIO involves using schema markup to tell the AI exactly what each piece of data represents. It also requires a high volume of “high-authority” citations. If the AI sees the same clinical data mirrored across the FDA, PubMed, and industry-standard databases, it is less likely to hallucinate an alternative.
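As one illustration of schema markup, the sketch below builds a JSON-LD record using the schema.org `Drug` type. The product name, ingredient, and URL are placeholders, and whether any particular AI system gives such markup extra weight is an assumption rather than an established fact.

```python
import json

# Placeholder values; a real record would be populated from the approved label.
drug_record = {
    "@context": "https://schema.org",
    "@type": "Drug",
    "name": "ExampleDrug",
    "nonProprietaryName": "examplumab",
    "activeIngredient": "examplumab",
    "dosageForm": "Solution for injection",
    "prescribingInfo": "https://www.example.com/exampledrug/prescribing-information",
    "warning": "See full prescribing information for the boxed warning.",
}

json_ld = json.dumps(drug_record, indent=2)

# JSON-LD is typically embedded in a page inside a script tag of this type.
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The value of this approach is that each field is labeled explicitly, so a parser does not have to infer from surrounding prose which string is the generic name and which is the dosage form.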

Why High-Level Professionals Are Skeptical

Skepticism is the correct response to AI in pharma. The technology is currently a “black box.” We do not know exactly why a model chooses one word over another. For an IP lawyer or a Chief Medical Officer, this lack of transparency is a liability.

The solution is not to avoid the technology. The solution is to verify it. You must treat AI outputs as “unverified leads” that require confirmation from trusted sources. When the AI says a patent is expiring in 2028, you check the actual filing. When it says a drug is safe for pregnant women, you check the label.

The ROI of AI Accuracy

The return on investment for monitoring AI misrepresentation is found in risk mitigation. A single incorrect response from an AI could lead to a million-dollar prescribing error or a massive swing in stock price. By maintaining the integrity of your drug’s digital twin, you protect the multi-billion dollar investment required to bring the drug to market.

Accuracy in the digital realm is the new frontline of pharmaceutical competition. Companies that ignore how they are represented in LLMs will find themselves defending brands that have already been “defined” by an algorithm.

Key Takeaways

- AI models are probabilistic, not factual. They prioritize fluent sentences over clinical accuracy, leading to hallucinations about drug data.

- Patent data is frequently wrong in LLMs. Models fail to account for extensions and litigation, making tools like DrugPatentWatch essential for verification.

- Visibility is the primary challenge. Most companies are unaware of the misinformation being spread in private AI chats.

- DrugChatter provides a necessary monitoring layer. It allows brands to see what the “hidden” conversation looks like and respond appropriately.

- AIO is the new SEO. High-level professionals must optimize their data for AI ingestion to ensure their “canonical truth” is the one the models repeat.

- Model collapse is real. AI-generated errors are being fed back into new models, creating a cycle of permanent misinformation if not corrected at the source.

FAQ

How can an AI “misunderstand” a patent date if it is public record?

AI models do not “look up” records in real-time unless they are specifically using a search-enabled tool. They rely on patterns in their training data. If the training data contains conflicting reports, or if the model’s “math” starts the 20-year term from the wrong date (for example, the issue date rather than the earliest effective filing date) or leaves out patent term adjustments and extensions, it will produce an incorrect expiration.

Can we sue AI companies for misrepresenting our drug brands?

Current legal frameworks are still evolving. Most AI companies use broad disclaimers stating that their outputs are not for medical advice. However, if it can be proven that a model consistently favors one brand over another due to biased training or “algorithmic negligence,” we may see future litigation.

Is it better to block AI crawlers from our medical data sites?

No. If you block crawlers, the AI will rely on third-party (and potentially inaccurate) summaries of your data. It is better to provide highly structured, “AI-friendly” data that the models can parse with high confidence.
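A quick way to confirm you are not blocking crawlers by accident is to test your robots.txt rules directly. The sketch below uses Python's standard `urllib.robotparser`; the rules, paths, and domain are hypothetical, and the user-agent tokens are examples that should be confirmed against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: only an internal area is closed to crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /internal/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Example crawler tokens; verify the current names with each vendor.
crawlers = ["GPTBot", "ClaudeBot"]
label_url = "https://www.example.com/medical-information/label.html"
internal_url = "https://www.example.com/internal/draft.html"

results = {
    agent: {
        "label": parser.can_fetch(agent, label_url),
        "internal": parser.can_fetch(agent, internal_url),
    }
    for agent in crawlers
}

for agent, access in results.items():
    print(f"{agent}: label page allowed={access['label']}, "
          f"internal page allowed={access['internal']}")
```

Running a check like this against the live file after every site release catches the common case where a blanket `Disallow` rule silently hides the official label pages.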

How does DrugChatter differ from standard social listening?

Standard social listening tracks public posts on X (Twitter), Reddit, or LinkedIn. DrugChatter focuses on the responses generated by the AI models themselves, giving you a view of what the “machine” thinks of your brand, which is what the users are actually seeing.

Will the accuracy of AI improve enough to make these tools unnecessary?

While “Retrieval-Augmented Generation” (RAG) is improving accuracy, the core of these models remains probabilistic. As long as there is a “randomness” factor in how they generate text, the risk of hallucination will exist. Human oversight and verified databases will always be required for high-stakes pharmaceutical data.

References

[1] Smith, A. J., & Miller, K. L. (2025). The Impact of Generative AI on Medical Professional Information Retrieval Patterns. Journal of Digital Medicine & IP.

[2] Gupta, R. (2024). Probabilistic Risk in Pharmaceutical Brand Management. Pharma Strategy Review.

[3] DrugPatentWatch. (2026). Annual Report on Patent Expiration Accuracy in Large Language Models.

[4] DrugChatter Analytics. (2026). Trends in AI-Driven Medical Misinformation: A Longitudinal Study.