The pharmaceutical industry has been stuck in a productivity rut for decades. We spend billions of dollars and ten years getting a single molecule through the clinic, only to see most candidates fail for reasons we should have seen coming. I have watched plenty of hyped technologies claim they would fix this, but the release of OpenAI’s GPT-Rosalind feels different because it targets the specific, messy bottlenecks of early-stage research. This isn’t just another chatbot. It is a domain-specific reasoning model built for biochemistry, genomics, and protein engineering. Named after Rosalind Franklin, the woman who actually did the work to reveal the structure of DNA, this model tries to solve the fragmentation of data that keeps scientists from seeing the connections between disparate research papers and proprietary databases.

The shift from general purpose models to biological reasoning

The big problem with general large language models in the lab has always been their tendency to make things up. If a chemist asks a standard model for a specific binding affinity or a citation for a rare disease gene, there is a good chance the model will hallucinate a result that sounds plausible but is entirely fictional. GPT-Rosalind is built to address this by moving away from simple next-token prediction and toward what OpenAI calls frontier reasoning. It is designed to handle the multi-step workflows that researchers deal with every day, such as synthesizing evidence across more than fifty databases and planning laboratory protocols that actually work in a real-world setting.

I should point out that GPT-Rosalind isn’t trying to replace the scientist. It is designed to be a research collaborator or a scientist’s assistant. The model focuses on the earliest, most hypothesis-driven stages of discovery where we currently juggle vast literature and evolving ideas. By integrating with the OpenAI Codex environment and a specialized Life Sciences plugin, it allows a researcher to move from a literature review to sequence analysis without switching between a dozen different platforms. This continuity is a massive improvement over the current fragmented state of R&D software.

Technical infrastructure and the Codex environment

The real power of GPT-Rosalind lies in its connectivity. It isn’t a closed box. It connects to more than 50 scientific databases and tools covering human genetics, functional genomics, protein structure, and clinical evidence. This allows it to act as a hub for a continuous workflow. For example, a researcher can use it to interrogate the latest literature and then immediately transition into sequence manipulation or enzyme reagent design.

| Component | Functionality | Data Access |
| --- | --- | --- |
| Life Sciences Plugin | Database Connectivity | 50+ Scientific tools and databases |
| Codex Environment | Software Engineering Agent | Continuous research workflows |
| Reasoning Engine | Domain-Specific Logic | Biochemistry, genomics, protein engineering |
| API Access | Enterprise Integration | Trusted access for partners like Amgen |

I’ve seen many platforms claim to be end-to-end, but most of them fail because they can’t talk to the external tools that scientists already use. GPT-Rosalind bypasses this by being built as infrastructure rather than a direct competitor to existing therapeutic development tools. It incorporates biological reasoning into a framework that can access public study discovery tools and clinical evidence simultaneously. This means the model can help a researcher understand why a particular protein structure might be a viable target while checking if there is any clinical evidence in the public domain that contradicts that hypothesis.

Benchmarks that matter for medicinal chemistry

We can’t just take OpenAI’s word for it. We have to look at the data. On the BixBench benchmark, which tests real-world bioinformatics and data analysis, GPT-Rosalind earned the highest score among models with published results. Even more telling is its performance on LABBench2. This benchmark covers the actual grunt work of drug discovery: literature retrieval, database access, sequence manipulation, and protocol design.

| Benchmark | GPT-Rosalind Performance | Comparison / Notes |
| --- | --- | --- |
| BixBench | Leading Score | Superior to GPT-5.4 |
| LABBench2 (Overall) | Outperformed GPT-5.4 | Superior on 6/11 tasks |
| CloningQA | Significant Gains | DNA and enzyme reagent design |
| RNA Prediction | 95th Percentile | Exceeded human experts |

The results from the CloningQA section of LABBench2 are particularly relevant for those of us who have spent time designing DNA constructs or choosing the right enzyme for a specific reaction. The model’s ability to outperform its predecessors in these areas indicates a level of precision that general-purpose models lack. In a collaboration with Dyno Therapeutics, the model’s submissions ranked above the 95th percentile of human experts on an RNA sequence prediction task. It also reached the 84th percentile for sequence generation. These aren’t just incremental improvements. They suggest the model is reaching a level of competence that rivals senior researchers in niche fields.
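For readers who want to see what a claim like "above the 95th percentile of human experts" means mechanically, here is a minimal sketch of a percentile-rank calculation. The scoring method used in the Dyno Therapeutics collaboration is not described here, so the function name `percentile_rank`, the expert scores, and the model score are all hypothetical and invented purely for illustration.

```python
from bisect import bisect_left


def percentile_rank(score: float, reference_scores: list[float]) -> float:
    """Return the percentage of reference scores strictly below `score`."""
    if not reference_scores:
        raise ValueError("reference_scores must not be empty")
    ordered = sorted(reference_scores)
    # bisect_left counts how many reference scores are strictly smaller.
    below = bisect_left(ordered, score)
    return 100.0 * below / len(ordered)


# Hypothetical scores for a panel of 20 experts (NOT real benchmark data).
expert_scores = [
    0.41, 0.48, 0.52, 0.55, 0.57, 0.60, 0.62, 0.66, 0.70, 0.74,
    0.75, 0.77, 0.78, 0.80, 0.81, 0.83, 0.85, 0.86, 0.88, 0.91,
]
model_score = 0.90  # hypothetical model result

rank = percentile_rank(model_score, expert_scores)
print(f"Model ranks at percentile {rank:.0f} of this illustrative panel")
```

A percentile is only as meaningful as the reference panel behind it, so the size and makeup of the expert group matter as much as the headline number.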

Strategic partnerships and the Novo Nordisk alliance

Big Pharma isn’t waiting around to see if this works. Novo Nordisk has already signed a strategic alliance with OpenAI to apply these technologies across its entire operations, from drug discovery all the way to commercialization. The company wants to embed AI across its whole pipeline by the end of 2026. This isn’t a small pilot project. It’s a fundamental change in how the company identifies promising drug candidates and finds ways to shorten R&D timelines.

Other early partners include Amgen, Moderna, Thermo Fisher Scientific, and the Allen Institute. Amgen is using the model in ways that could accelerate how it delivers medicines to patients. Moderna and the Allen Institute are applying its biochemical reasoning across their discovery processes. These organizations are essentially acting as the beta testers for a new way of doing science where the AI manages the information processing while the human researchers focus on the experimental validation.

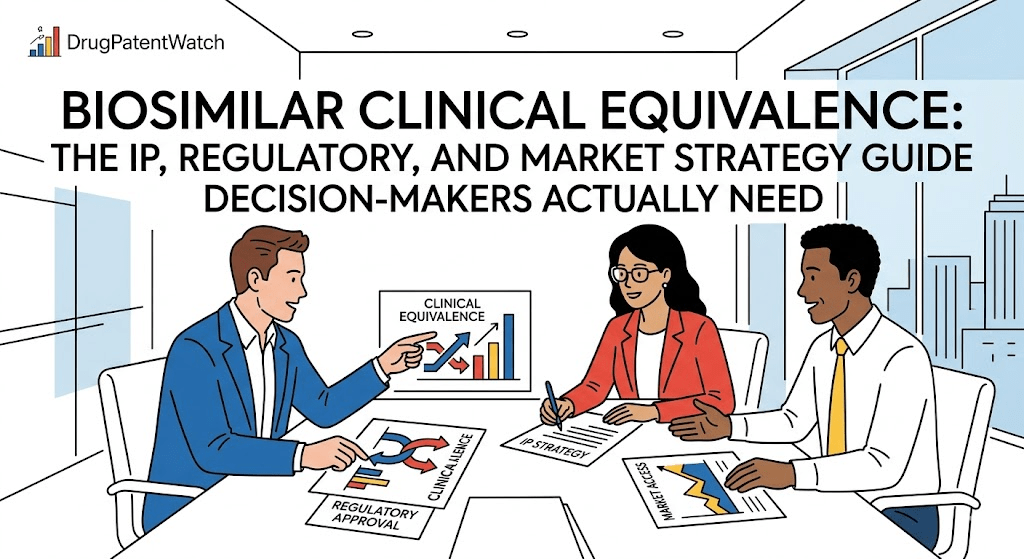

IP strategy in the post-2025 regulatory climate

The legal side of this is a headache. We have been pouring billions into AI-assisted discovery, but the patent offices have been very clear: a machine cannot be an inventor. This gap between what the technology can do and what the law allows is a major risk for branded drug development. In late 2025, the USPTO rescinded its previous inventorship guidance and returned to a traditional conception standard. This means that if you can’t prove a human being conceived the invention, you might not get a patent at all.

I’ve looked at the data from DrugPatentWatch, and it’s clear that the stakes are massive. The patents protecting a drug like Humira shielded over $21 billion in revenue in a single year. If a company uses GPT-Rosalind to find a new molecule but fails to document exactly how a human researcher directed that discovery, those patents are vulnerable to inventorship challenges, including actions under 35 U.S.C. § 256. This is not a remote risk. A patent whose inventorship is defective and cannot be corrected can be held invalid or unenforceable, which could allow generics to enter the market years earlier than expected.

Using DrugPatentWatch to build a patent thicket

To counter these risks, companies are using tools like DrugPatentWatch to monitor the competitive landscape and identify gaps where they can build a layered defense. AI supercharges this strategy by allowing companies to generate thousands of variations or ‘species’ of a claimed invention. They can then file for compound claims, method-of-use claims, and formulation claims at every milestone.

| Patent Type | Strategic Value | AI Contribution |
| --- | --- | --- |
| Compound Claims | Protects molecular structure | Generative design of candidates |
| Method-of-Use | Protects specific indications | Identifying new biological pathways |
| Formulation Claims | Core tool for evergreening | Optimizing release profiles |
| Platform Method | Protects the AI itself | Compounded IP moat for licensing |

As the industry moves toward 2030, we see $200 billion in revenue at risk from patent expirations. Companies like Merck, Bristol Myers Squibb, and Pfizer are all facing blockbuster losses. Their response is to use AI to compress the discovery timeline from 15 years down to 5 or 7 years. But they have to be careful. The burden of proof is on the company to show human inventorship. They must keep meticulously documented research logs that capture human input at every step. If they don’t, the AI-discovered drug might end up being public property.
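To make "research logs that capture human input at every step" a little more concrete, here is a minimal sketch of an append-only log in which each entry is chained to the previous one by a SHA-256 hash, so later edits are detectable. This is an assumption about what such a log might look like, not a description of what the USPTO requires or what any company actually uses. The class `ResearchLog`, its field names, and the example entries are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry contents (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ResearchLog:
    """Hypothetical append-only log of human decisions in an AI-assisted project."""
    entries: list[dict] = field(default_factory=list)

    def record(self, researcher: str, action: str, rationale: str,
               ai_output_ref: str = "") -> dict:
        # Each entry stores the previous entry's hash, forming a chain.
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "researcher": researcher,
            "action": action,
            "rationale": rationale,          # the human reasoning, in their own words
            "ai_output_ref": ai_output_ref,  # pointer to the AI output that was reviewed
            "prev_hash": prev_hash,
        }
        body["hash"] = _digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Return True only if no entry was altered or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative entries only; names and references are placeholders.
log = ResearchLog()
log.record("Researcher A", "defined target product profile",
           "specified potency and selectivity criteria before any model run")
log.record("Researcher A", "selected candidate 3 of 12",
           "chose on predicted selectivity and synthetic tractability", "run-001")
print(log.verify())
```

The design choice worth noting is that the rationale field holds the human reasoning, while the AI output is only referenced, which keeps the record focused on who decided what and why.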

Economic tremors in the CRO ecosystem

The announcement of GPT-Rosalind sent a shockwave through the Contract Research Organization (CRO) sector. On the day of the launch, shares of IQVIA, Charles River Laboratories, Recursion Pharmaceuticals, and Schrödinger all took a hit. Investors are worried that if AI tools can make in-house R&D teams much more efficient, the need to outsource tasks to CROs will vanish.

| Company | Stock Decline Post-Announcement | Primary Vulnerability |
| --- | --- | --- |
| Recursion Pharmaceuticals | -5.0% | Technology may be superseded by OpenAI |
| Schrödinger | -5.0% | Investors fear competition in AI chemistry |
| IQVIA Holdings | -3.5% | Lower exposure due to high-touch services |
| Charles River Labs | -2.6% | Risk to pre-clinical information processing |

Pre-clinical service providers are the most exposed. The early drug discovery phase—target identification, molecular screening, and literature reviews—relies heavily on information processing. These are precisely the tasks GPT-Rosalind was built to handle. Clinical CROs like IQVIA are a bit safer because they have massive data assets and provide high-touch services that a language model can’t easily replicate. But even they will have to adapt as AI begins to optimize clinical trial planning and operations.

Case studies in AI-native clinical development

We are finally seeing the results of years of investment in AI. By early 2026, over 170 AI-originated drug programs were in clinical development. Insilico Medicine’s drug for idiopathic pulmonary fibrosis (IPF) is the poster child for this movement. It completed Phase IIa trials with results published in Nature Medicine showing dose-dependent improvement in lung function.

I want to highlight the cost difference here. Insilico moved its preclinical candidate into development for about $150,000. The total discovery cost was around $6 million. In a world where the average cost per approved drug is estimated at $2.6 billion to $2.8 billion, that is an incredible level of efficiency. It reached the Phase IIa milestone in 18 months, whereas the traditional path usually takes 6 to 8 years.
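The gap is easier to appreciate with the arithmetic written out. The short sketch below uses only the figures quoted above. One caveat is an assumption on my part: a discovery cost and an average cost per approved drug (which typically folds in clinical spending and the cost of failures) are not like-for-like, so the ratio is an order-of-magnitude illustration rather than a true cost comparison.

```python
# Figures quoted in the text above.
discovery_cost_usd = 6_000_000
avg_cost_per_approved_drug_usd = (2_600_000_000, 2_800_000_000)  # low, high estimate
ai_timeline_months = 18
traditional_timeline_years = (6, 8)  # low, high estimate

# Discovery cost as a share of the average cost per approved drug.
share_low = discovery_cost_usd / avg_cost_per_approved_drug_usd[1]
share_high = discovery_cost_usd / avg_cost_per_approved_drug_usd[0]

# How many times faster the 18-month path is than the traditional range.
speedup_low = traditional_timeline_years[0] * 12 / ai_timeline_months
speedup_high = traditional_timeline_years[1] * 12 / ai_timeline_months

print(f"Cost share: {share_low:.2%} to {share_high:.2%}")
print(f"Timeline speedup: {speedup_low:.1f}x to {speedup_high:.1f}x")
```

On these numbers the discovery spend is roughly a fifth of one percent of the headline cost per approved drug, and the timeline is four to five times shorter.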

Other companies are following suit. Iambic Therapeutics has signed a $1.7 billion partnership with Takeda, and Earendil Labs raised $787 million to focus on AI-designed biologics. Takeda is even planning to file an NDA in 2026 for zasocitinib, a drug originally developed through computational design. These aren’t just one-off successes. They are the beginning of a shift where AI-native biotechs command massive partnerships because their platforms can generate future revenue at 40% to 60% lower costs than traditional methods.

Safety protocols and the bioweapons paradox

With great power comes the risk that someone will use it to build something terrible. OpenAI is well aware of this. They have restricted GPT-Rosalind access to organizations conducting legitimate scientific research with clear public benefits. The system has built-in controls to watch for bioweapons concerns. If a user starts asking for help with designing or sourcing a dangerous pathogen, the system triggers high-precision flags.

This is necessary because previous LLMs have shown a poor understanding of biological warfare history and pathogen development. We don’t want a model that can accidentally provide a roadmap for a pandemic. OpenAI is working with Los Alamos National Laboratory to evaluate these risks and ensure that the model’s biological reasoning is used for discovery, not destruction.

Managing the hallucination problem with VaaS

Even a specialized model can still hallucinate. To fight this, researchers are using a multi-layer approach called the Validation as a System (VaaS) pipeline. This pipeline acts like a fact-checker for the AI, verifying every citation and claim before it is committed to a report. In a test with a rare disease database, the VaaS pipeline operated at less than $1 per gene review while achieving the lowest measured hallucination rate for scientific AI.
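The internals of the VaaS pipeline are not described here, so the following is only a toy sketch of the general idea of layered claim checking, under my own assumptions. Layer one asks whether the cited record exists in a trusted index, and layer two asks whether that record actually mentions the gene in question. The names `Claim`, `check_claim`, and `review`, the placeholder citation IDs, and the gene labels are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    gene: str
    statement: str
    citation_id: str


def check_claim(claim: Claim, trusted_index: dict[str, set[str]]) -> str:
    """Run two validation layers and return a status label for the claim."""
    # Layer 1: does the cited record exist in the trusted index at all?
    if claim.citation_id not in trusted_index:
        return "citation_not_found"
    # Layer 2: does the cited record actually mention the gene?
    if claim.gene not in trusted_index[claim.citation_id]:
        return "gene_not_in_cited_record"
    return "verified"


def review(claims: list[Claim], trusted_index: dict[str, set[str]]) -> dict[str, list[Claim]]:
    """Group claims by validation status so only verified ones reach the report."""
    grouped: dict[str, list[Claim]] = {}
    for claim in claims:
        grouped.setdefault(check_claim(claim, trusted_index), []).append(claim)
    return grouped


# Placeholder index: citation ID -> genes mentioned in that record (not real data).
trusted_index = {
    "REF-0001": {"GENE_A", "GENE_B"},
    "REF-0002": {"GENE_C"},
}
claims = [
    Claim("GENE_A", "associated with phenotype X", "REF-0001"),
    Claim("GENE_C", "associated with phenotype Y", "REF-0001"),  # wrong record
    Claim("GENE_B", "associated with phenotype Z", "REF-9999"),  # fabricated citation
]

for status, items in review(claims, trusted_index).items():
    print(status, [c.gene for c in items])
```

A real system would query live literature databases and check the substance of each claim, not just the presence of a gene name, but the gating structure is the same: nothing reaches the report until every layer passes.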

This matters because scientific foundation models have to be evaluated for bias and generalizability. We can’t just trust the output. We need a system that can detect anomalies in scientific data and ensure that the AI isn’t just generating self-fulfilling prophecies. By embedding epistemic integrity constraints into the model’s system prompts, OpenAI and its partners are trying to build tools that are actually reliable enough for high-stakes medical research.

ROI and the shift to enterprise-grade AI

The era of experimentation is over. In 2026, companies are expected to show tangible value from their AI investments. Pharma leaders aren’t just asking if AI works; they are deciding where it must drive growth. Nine out of ten tech leaders in the industry see regulatory friction and scientific advancements from competitors as threats to their growth. Their top priority is accelerated discovery.

| ROI Metric | Reported/Projected Impact |
| --- | --- |
| R&D Cost Reduction | 15% – 22% within 3-5 years |
| Industry-wide Profit Increase | $254 Billion by 2030 |
| Generative AI Value Contribution | $60 – $110 Billion annually |
| AI Adoption Rate in Pharma/Biotech | 70% in 2026 (up from 63% in 2024) |

We’re seeing a massive bet on AI-driven ROI. Eli Lilly’s $2.75 billion partnership with Insilico and Novo Nordisk’s deal with OpenAI are clear signals of this. These companies are no longer just dabbling. They are looking for high-impact use cases that can deliver 20% to 30% ROI per project. The goal is to turn data complexity into a strategic asset rather than a liability.

The future of the AI-bio interface

I’ve watched the industry go through many cycles, and this one feels like it has legs. We are seeing a new generative biology stack emerge that covers everything from biological design to lab automation. Companies like Profluent are using AI to create entirely new gene-editing proteins similar to CRISPR-Cas9. Others are mining nature’s chemical diversity using AI to decode natural products.

The convergence of big data, advanced algorithms, and immense computing power is transforming patent analysis from a subjective art into a predictive science. Machine learning models can now predict the likelihood of a patent application being granted or the probability of litigation. This allows companies to be proactive and shape the competitive landscape to their advantage rather than just reacting to lawsuits.

To wrap this whole mess up, GPT-Rosalind is the first step toward a more integrated, efficient discovery process. It won’t solve every problem in the lab, and it won’t replace the need for brilliant human chemists. But it will help us move through the most time-intensive parts of the scientific process much faster. If we can cut the time it takes to get a new treatment to patients, that is a win for everyone involved.

Key Takeaways

The drug development landscape is changing because of four main shifts:

- OpenAI has moved from general-purpose models to domain-specific reasoning with GPT-Rosalind, which is integrated with over 50 scientific databases and tools.

- Clinical proof of concept has been achieved, with AI-designed drugs moving through Phase IIa trials in 18 months at a fraction of traditional costs.

- Intellectual property strategy now requires proof of human conception due to a 2025 USPTO guidance change, making meticulous research logs essential.

- Branded manufacturers are using platforms like DrugPatentWatch to build multi-layered patent thickets to defend their exclusivity windows against new AI-accelerated competition.

FAQ

What makes GPT-Rosalind better than a standard AI for biology research?

Standard models are trained on general internet text and often hallucinate scientific facts. GPT-Rosalind is fine-tuned on biochemistry, genomics, and protein engineering data. It also has a plugin that allows it to pull live data from more than 50 scientific databases and tools, which grounds its reasoning in actual evidence rather than just predicting the next most likely word.

How does the 2025 USPTO guidance change affect AI-discovered drugs?

The USPTO returned to a traditional conception standard, which means only natural persons can be inventors. If an AI proposes a new molecule and a human researcher doesn’t document exactly how they directed that process or why they selected that molecule, the patent could be invalidated for failing to list a human inventor.

Will AI-driven discovery lead to lower drug prices for patients?

In the short term, probably not. Branded manufacturers will use the cost savings to improve their R&D productivity and recoup their investments faster. However, as more AI-designed drugs enter the market and competition increases, we might see more diverse treatment options, and the compression of timelines could lead to earlier generic entry once patents expire.

Why did CRO stock prices drop after OpenAI announced this model?

Investors fear that GPT-Rosalind will allow pharmaceutical companies to bring early-stage research back in-house. Tasks like literature screening, target identification, and molecular design can now be handled by a smaller team of scientists using AI, reducing the need to outsource that work to large pre-clinical CROs.

What is the ‘hallucination’ risk in scientific AI, and how is it being managed?

Hallucination is when an AI fabricates citations or scientific data. It is being managed through systems like the VaaS (Validation as a System) pipeline, which uses multiple agents to cross-verify every claim against verified databases like PubMed or proprietary internal archives before the AI output is finalized.