1. Scale and Economics: What AI Actually Costs and What It Might Deliver

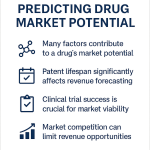

The pharmaceutical industry’s standard development timeline averages 10 to 15 years per approved drug, with total capitalized costs, including the cost of failures, estimated at $2.6 billion per new molecular entity. Clinical attrition drives most of that cost: approximately 90% of drug candidates that enter Phase 1 trials fail before reaching approval. The industry’s basic economic equation, therefore, is one in which a very small number of successful drugs must generate enough revenue to cross-subsidize a very large number of failures.
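The cross-subsidy arithmetic can be made concrete with a minimal sketch. The per-phase costs and pass rates below are hypothetical placeholders chosen so that overall success is 10%; they are not figures from this guide, and the function covers only out-of-pocket clinical spend, not the capitalized $2.6 billion estimate.

```python
def cost_per_approval(phase_costs, phase_success):
    """Expected clinical spend per approved drug, failures included."""
    expected_spend = 0.0  # expected spend per candidate entering the first phase
    reach = 1.0           # probability a candidate reaches the current phase
    for cost, p_pass in zip(phase_costs, phase_success):
        expected_spend += reach * cost  # only candidates that get this far incur the cost
        reach *= p_pass
    return expected_spend / reach       # reach now equals P(approval)

# Hypothetical per-candidate costs in $M for Phase 1, 2, 3.
costs = [25, 60, 250]
baseline = cost_per_approval(costs, [0.5, 0.4, 0.5])  # 10% overall success
improved = cost_per_approval(costs, [0.5, 0.5, 0.5])  # better Phase 2 pass rate
print(f"baseline: ${baseline:,.0f}M per approval")
print(f"improved: ${improved:,.0f}M per approval")
```

With these made-up inputs, raising the Phase 2 pass rate from 40% to 50% lowers expected spend per approval from about $1,050M to about $940M, which is the kind of shift a better candidate pool is meant to produce.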

AI offers a specific intervention at specific points in that equation. It does not eliminate clinical risk, but it can change the composition of the candidate pool that enters the clinic by improving preclinical hit rates, predicting absorption, distribution, metabolism, and excretion (ADME) properties earlier, and identifying patient populations where a drug is more likely to work. A candidate pool with better preclinical pharmacology data and more precise patient stratification produces fewer late-stage failures, and late-stage failures are the costliest kind.

The market’s aggregate projection for AI in drug discovery reaches $16.52 billion by 2034, up from approximately $3.54 billion in 2023. McKinsey estimates that generative AI could create between $60 billion and $110 billion in annual value across the pharmaceutical industry by accelerating discovery, development, and commercialization. These are projections, not guarantees, but they establish the scale of capital that is being allocated to the hypothesis.
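As a quick check on what the market projection implies, the compound annual growth rate can be computed from the two figures quoted above. The function name is illustrative, and the calculation assumes smooth compounding over the 11 years from 2023 to 2034.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1

# Figures quoted in the text, in $ billions.
growth = cagr(3.54, 16.52, 2034 - 2023)
print(f"Implied CAGR: {growth:.1%}")
```

The result is roughly 15% per year.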

What the projections typically understate is the structural asymmetry between the upside and the risk. The upside accrues to companies that successfully develop and patent new drugs. The risk accrues to companies that develop drugs with significant AI contribution but fail to build the legal and regulatory documentation infrastructure needed to defend those drugs as protectable assets. A drug candidate that a company cannot patent, because it cannot demonstrate human inventorship, or cannot get approved, because it cannot establish the credibility of the AI-generated data underlying its clinical submission, represents a total loss of both the R&D investment and the market opportunity.

This guide addresses that risk directly. It covers the legal doctrine, the regulatory framework, and the operational practices that determine whether an AI-assisted drug program produces a protectable, approvable commercial asset or a valuable scientific result with no legal home.

Key Takeaways: Section 1

AI’s primary economic contribution to pharma is reducing the cost of preclinical failure and improving candidate quality entering clinical trials. Market projections of $60-110 billion in annual AI-generated value assume that discoveries are patent-protected and regulatorily approvable. Companies that fail to build adequate IP documentation infrastructure risk developing scientifically valid drug candidates that have no legal basis for market exclusivity.

2. AI Across the Drug Lifecycle: Target ID Through Post-Market Surveillance

2a. Target Identification and Validation

Drug discovery begins with target identification: selecting the gene, protein, or biological pathway that, when modulated, will produce a therapeutic effect. Traditional target identification relies on genetic association studies, phenotypic screens, and decades of accumulated biochemical literature. The process is slow and highly dependent on the specific prior knowledge of the research team.

Machine learning approaches this problem differently. By ingesting multi-omics datasets, which combine genomic, transcriptomic, proteomic, and metabolomic data from patient cohorts, ML models can identify statistical associations between molecular profiles and disease states that are too complex to detect through manual analysis or single-dataset studies. Causal inference models can then assess which of those associations represent biological mechanisms that could, in principle, respond to pharmacological modulation.

The practical output is a longer and more diverse target list, with some targets that would not have been generated by conventional hypothesis-driven research. BenevolentAI’s identification of PDE10 as a target for ulcerative colitis is the canonical example: the company’s knowledge graph, built from millions of biomedical papers, identified a non-obvious relationship between PDE10 inhibition and intestinal inflammation that no single researcher had assembled from the literature. The resulting candidate BEN-8744 advanced to clinical trials, providing a concrete validation of ML-assisted target identification.

2b. De Novo Molecular Design

Once a validated target exists, the next step is finding or designing a molecule to interact with it. Generative AI has changed this process fundamentally. Generative models, including variational autoencoders (VAEs), generative adversarial networks (GANs), diffusion models, and transformer architectures adapted from natural language processing, can generate novel molecular structures that have never existed before, rather than simply searching chemical databases for known compounds.

The key technical advance enabling this is Google DeepMind’s AlphaFold, which can predict protein tertiary structure from amino acid sequence with an accuracy that computational methods could not achieve before AlphaFold 2 was unveiled in 2020. AlphaFold outputs provide the three-dimensional structural information that generative models need to design molecules that fit into a protein’s active site with high affinity. The combination of AlphaFold-class structural prediction with a generative chemistry model constitutes the basic architecture of modern AI-first drug design.

Insilico Medicine’s INS018_055 program illustrates the end-to-end workflow. The company’s PandaOmics platform identified a novel target in idiopathic pulmonary fibrosis (IPF). Its Chemistry42 generative chemistry system then designed novel small molecule structures against that target. The result was a drug candidate with a novel target and a novel structure, neither drawn from existing literature. INS018_055 entered Phase 2 trials, earning the designation as the first drug with both an AI-identified target and an AI-designed molecule to reach that clinical stage.

2c. Predictive ADME/T Modeling and Virtual Screening

Before a compound is synthesized in a laboratory, AI models can predict its pharmacokinetic properties, including oral bioavailability, hepatic metabolism, renal clearance, blood-brain barrier penetration, and cardiac ion channel inhibition risk (hERG liability). These predictions allow research teams to triage hundreds or thousands of AI-generated candidates and select only those with predicted ADME profiles consistent with clinical development.

Prediction accuracy for these properties has improved substantially as training datasets have grown. The FDA’s database of approved drugs, combined with proprietary internal datasets at large pharmaceutical companies, provides millions of matched structure-property data points. Models trained on these datasets can now estimate basic ADME properties with error rates low enough to be practically useful for prioritization, though not for replacing experimental characterization.
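The triage step described above can be sketched as a simple filter over predicted profiles. Everything here is an assumption made for illustration: the field names, the three properties chosen, and the cutoff values are hypothetical, and a real program would set thresholds per target and indication.

```python
from dataclasses import dataclass

@dataclass
class PredictedProfile:
    compound_id: str
    oral_bioavailability: float  # predicted fraction absorbed, 0 to 1
    herg_ic50_um: float          # predicted hERG IC50 in micromolar; higher is safer
    clearance_ml_min_kg: float   # predicted hepatic clearance

def triage(profiles, min_f=0.3, min_herg_ic50_um=10.0, max_clearance=30.0):
    """Keep candidates that clear every hypothetical cutoff, best bioavailability first."""
    passed = [
        p for p in profiles
        if p.oral_bioavailability >= min_f
        and p.herg_ic50_um >= min_herg_ic50_um
        and p.clearance_ml_min_kg <= max_clearance
    ]
    return sorted(passed, key=lambda p: p.oral_bioavailability, reverse=True)

candidates = [
    PredictedProfile("cand-001", 0.55, 25.0, 12.0),
    PredictedProfile("cand-002", 0.70, 3.0, 10.0),   # fails the hERG cutoff
    PredictedProfile("cand-003", 0.40, 40.0, 20.0),
]
shortlist = [p.compound_id for p in triage(candidates)]
print(shortlist)
```

The shortlist only prioritizes compounds for synthesis; as the text notes, the predictions do not replace experimental characterization.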

2d. Clinical Trial Design, Patient Stratification, and AI-Assisted Submission

AI’s influence on clinical development is more recent and technically less mature than its influence on discovery, but it is accelerating. In trial design, ML models applied to electronic health record (EHR) data can identify patient populations where a therapeutic hypothesis is most likely to produce a detectable signal, allowing sponsors to design smaller, more targeted trials with higher a priori probability of success. Published analyses suggest that AI can shorten trial duration by roughly 10% through improved enrollment efficiency.

Patient stratification using predictive biomarker discovery is the highest-value near-term application. Identifying a biomarker subpopulation where response rates are substantially higher than in the unselected population can mean the difference between a failed Phase 3 trial and an approved drug with a companion diagnostic. Atomwise’s application of its AtomNet platform to TYK2 inhibitor design for autoimmune conditions included early integration of patient genetic data to identify likely responders, an approach that is now standard in precision oncology and is expanding into immunology.
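The link between stratification and smaller trials can be illustrated with a standard normal-approximation sample-size formula for comparing two response rates. The response rates below are hypothetical, and the sketch assumes the control-arm rate is the same in the biomarker-selected and unselected populations.

```python
import math
from statistics import NormalDist

def n_per_arm(p_control, p_treated, alpha=0.05, power=0.80):
    """Patients per arm for a two-sided test of two proportions (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treated * (1 - p_treated)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_treated - p_control) ** 2)

# Hypothetical response rates: 20% on control, 30% on drug in all comers,
# 50% on drug in a biomarker-positive subgroup.
n_unselected = n_per_arm(0.20, 0.30)
n_enriched = n_per_arm(0.20, 0.50)
print(n_unselected, n_enriched)
```

Under these assumptions the enriched design needs 36 patients per arm instead of 291, although enrollment then depends on how common the biomarker is in the screened population.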

Pfizer’s application of ML to regulatory submissions, documented in public statements from Boris Braylyan, then Vice President at Pfizer, involves training models on the historical record of FDA review questions and Complete Response Letters to predict what questions a given submission is likely to receive. Proactively addressing those questions in the original NDA can compress the back-and-forth communication cycle with the FDA by weeks to months.

2e. Manufacturing, Supply Chain, and Pharmacovigilance

Advanced Process Control (APC) systems apply real-time sensor data and ML models to pharmaceutical manufacturing processes, adjusting parameters continuously to maintain product quality within specification. This is particularly valuable for biologic manufacturing, where cell culture conditions directly affect product quality attributes and batch-to-batch consistency is a regulatory priority.

Post-market pharmacovigilance is another high-value AI application. Natural language processing models applied to the FDA Adverse Event Reporting System (FAERS), EHR databases, and patient forum text can detect adverse event signals earlier and at lower thresholds than manual case review. Drug repurposing, the identification of new therapeutic applications for approved drugs, is a related application: ML models that can identify mechanistic similarities between different diseases can flag existing drugs as candidates for new indications, generating clinical programs that leverage existing safety databases and potentially shortened development timelines.
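Signal detection on spontaneous-report data is often summarized with a disproportionality statistic. The sketch below uses the proportional reporting ratio (PRR), a classical measure rather than the NLP models the text describes, and the report counts are invented for illustration.

```python
def proportional_reporting_ratio(a, b, c, d):
    """PRR from a 2x2 table of spontaneous reports.

    a: reports with the drug and the event of interest
    b: reports with the drug and any other event
    c: reports with other drugs and the event of interest
    d: reports with other drugs and any other event
    """
    rate_drug = a / (a + b)
    rate_others = c / (c + d)
    return rate_drug / rate_others

# Hypothetical counts, not taken from FAERS.
prr = proportional_reporting_ratio(a=30, b=970, c=200, d=98800)
print(f"PRR = {prr:.2f}")
```

A PRR well above 1 means the event is reported disproportionately often for the drug; it flags a hypothesis for review and does not establish causation.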

Key Takeaways: Section 2

AI’s drug discovery applications span eight distinct workflow stages, from target identification to pharmacovigilance. De novo generative design, enabled by AlphaFold-class structural prediction, is the technology responsible for the first clinically validated AI-first drug candidates. Predictive ADME/T modeling shifts compound attrition from wet lab to virtual screen, reducing early-stage development costs. AI-assisted regulatory submission is an emerging capability that can reduce review cycle time by improving first-cycle approval rates.

3. The TechBio Vanguard: IP Asset Valuation for Insilico, Recursion, and BenevolentAI

3a. Insilico Medicine: The End-to-End Platform and Its IP Asset Structure

Insilico Medicine’s commercial value derives from two distinct, intertwined asset classes that must be analyzed separately in any IP valuation exercise. The first is its Pharma.AI platform, which integrates PandaOmics (target discovery), Chemistry42 (generative molecular design), and InClinico (clinical outcome prediction). The second is its proprietary drug pipeline, anchored by INS018_055 for IPF and several earlier-stage candidates across oncology and fibrosis.

For IP valuation purposes, the platform and the pipeline require different legal instruments and different risk discounts. The Pharma.AI platform’s core value as an IP asset depends almost entirely on trade secret protection. The specific neural network architectures, training data curations, model weights, and prompt engineering protocols that enable PandaOmics to identify non-obvious disease targets are not publicly disclosed. If they were disclosed in patent applications, competitors could use that information to build competing platforms. The platform is therefore protected primarily through confidentiality agreements, access controls, and the operational barrier of needing large proprietary datasets to replicate its performance, not through registered IP.

INS018_055, by contrast, is protected through conventional pharmaceutical patents on the compound’s composition of matter and its method of use for treating IPF. The patentability of INS018_055 does not depend on whether an AI system was involved in its design; it depends on whether the compound is novel and non-obvious relative to the prior art, and whether the human scientists who guided the discovery process made a sufficient inventive contribution under the standard the USPTO has articulated.

Insilico’s 2022 partnership with Sanofi, potentially worth up to $1.2 billion in milestones plus royalties, assigns value to both asset classes simultaneously. Sanofi is paying for access to Chemistry42 as a discovery tool and for downstream rights to specific compounds that emerge from that discovery process. The deal structure, with most value contingent on clinical milestones, reflects the market’s appropriate discount for the clinical stage uncertainties that AI does not eliminate.

At the IPO stage, Insilico’s IP valuation challenge is typical of TechBio companies: how do you apportion enterprise value between the platform and the pipeline? A pure-software valuation methodology undervalues the pipeline; a pure-pharma DCF methodology ignores the platform’s recurring value generation capacity. Most analysts have settled on a sum-of-parts approach: a probability-adjusted pipeline NPV using standard pharmaceutical DCF methodology, plus a platform value derived from a revenue multiple on licensing income or a comparable precedent transaction involving AI discovery platform sales.

3b. Recursion Pharmaceuticals: The Operating System and Its Valuation Complications

Recursion’s platform, the Recursion OS, generates value through a specific technical architecture: high-throughput automated biology experiments produce phenotypic images of drug-treated human cells; those images are analyzed by ML models to build a searchable map of biological relationships; and that map is queried to identify drug-target hypotheses. The company processes approximately 2.5 million experiments per week, generating a 23-petabyte biological dataset that it considers its primary competitive moat.
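The idea of querying a map of biological relationships can be sketched as a nearest-neighbor search over embedding vectors. This is a generic illustration, not a description of the Recursion OS: the three-dimensional vectors, the perturbation names, and the use of cosine similarity are all assumptions.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nearest(query, embeddings, k=2):
    """Return the k entries whose embeddings are most similar to the query."""
    scored = [(name, cosine(query, vec)) for name, vec in embeddings.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]

# Hypothetical phenotypic embeddings for compound-treated cells.
compound_map = {
    "compound_A": [0.9, 0.1, 0.0],
    "compound_B": [0.0, 0.1, 0.9],
    "compound_C": [0.5, 0.5, 0.1],
}
# Hypothetical embedding for cells with a gene of interest knocked out.
knockout_profile = [0.8, 0.2, 0.1]
hits = nearest(knockout_profile, compound_map)
print([name for name, _ in hits])
```

A compound whose phenotype resembles a gene knockout becomes a candidate hypothesis that the compound acts on that gene's pathway, which would then need experimental confirmation.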

The 23-petabyte dataset is both Recursion’s most valuable asset and its most difficult to protect. Data is not, in the abstract, patentable, and it is difficult to protect as a trade secret when access to the data is shared with partners. The NVIDIA partnership, which involves providing Recursion’s biological data to train foundation models, illustrates the tension: training a public foundation model on proprietary data potentially transfers some of the competitive advantage embedded in that data to the foundation model’s users.

Recursion’s merger with Exscientia in 2024 consolidated two of the best-funded AI drug discovery platforms into a single entity with a combined pipeline of more than 40 drug candidates. For IP analysts, the post-merger entity presents a consolidation valuation that is straightforward in structure: sum the probability-adjusted NPVs of all pipeline assets, add a platform premium for the combined 23+ petabyte dataset and computational infrastructure, and apply a discount for liquidity and execution risk, since clinical stage uncertainty is already reflected in the probability-adjusted NPVs. The platform premium is the contested number, because it depends on assumptions about how durable the dataset moat is relative to the datasets that competitors can build or acquire.

3c. BenevolentAI: Knowledge Graph IP and the Target Identification Premium

BenevolentAI’s core IP asset is its biomedical knowledge graph, a continuously updated structured representation of relationships extracted from more than 25 million scientific papers, structured databases, and clinical datasets. The knowledge graph is the substrate on which BenevolentAI’s target identification models operate. Its commercial value lies in its ability to surface non-obvious disease-biology relationships that generate patentable target-indication pairs.

The IP valuation framework for BenevolentAI’s knowledge graph is different from Insilico’s model weights or Recursion’s imaging data. Knowledge graphs are, in principle, reconstructable by any organization with sufficient natural language processing capability and access to the same scientific literature. BenevolentAI’s defensibility depends not on the graph being truly unique, but on it being continuously maintained and updated at a quality level that is difficult to replicate quickly, and on the proprietary extraction algorithms and entity-linking methodologies that translate raw text into graph relationships.
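How a knowledge graph surfaces a non-obvious link can be shown with a toy path search. The triples below are placeholders, not real biology, and breadth-first search stands in for whatever proprietary ranking a production system would use.

```python
from collections import deque

def find_path(edges, start, goal):
    """Shortest chain of (entity, relation, entity) hops from start to goal, or None."""
    graph = {}
    for subject, relation, obj in edges:
        graph.setdefault(subject, []).append((relation, obj))
    queue = deque([(start, [start])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [relation, neighbor]))
    return None

# Hypothetical triples, as if extracted from separate papers.
triples = [
    ("target_T", "regulates", "pathway_P"),
    ("pathway_P", "implicated_in", "disease_D"),
    ("target_T", "expressed_in", "tissue_X"),
]
path = find_path(triples, "target_T", "disease_D")
print(path)
```

No single triple connects the target to the disease; the link appears only when facts from different sources are chained, which is the kind of relationship the text describes as non-obvious.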

BEN-8744, the PDE10 inhibitor for ulcerative colitis that BenevolentAI advanced to clinical trials, validates the target identification platform’s commercial output. In IP terms, BEN-8744 is protected through a composition of matter patent on the compound and a method of use patent for its UC indication. The scientific narrative of the discovery, specifically the AI-generated hypothesis linking PDE10 to intestinal inflammation, does not appear in the patent claims themselves, but the documentation of that discovery process is critical for establishing that a human scientist, acting on the AI-generated hypothesis, made a sufficient inventive contribution to claim inventorship.

Investment Strategy: TechBio Asset Valuation

TechBio companies present a valuation problem that standard pharma and standard software methodologies both handle inadequately. The correct approach is a sum-of-parts model with at least four components: (1) probability-adjusted pipeline NPV for each clinical stage asset, using standard pharmaceutical failure rates by indication and phase; (2) platform licensing revenue multiple for disclosed partnership deals, capitalized at a discount rate that reflects the contract duration and renegotiation risk; (3) dataset or knowledge graph moat premium, estimated as the replacement cost of building a comparable proprietary dataset from scratch, discounted for the probability that competitors close the gap within five years; (4) a liquidity and execution discount reflecting the company’s cash runway relative to the capital needed to reach clinical proof-of-concept for its lead asset. Investors who apply only the pipeline NPV miss the platform premium; those who apply only a platform revenue multiple miss the execution risk attached to clinical development.
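The four-component model can be sketched in a few lines. All inputs are hypothetical, the licensing component is simplified to a flat revenue multiple, and the function names are illustrative rather than an established methodology.

```python
def rnpv(flows, discount_rate):
    """Risk-adjusted NPV from (year, amount, probability the flow occurs) tuples."""
    return sum(p * amount / (1 + discount_rate) ** year for year, amount, p in flows)

def sum_of_parts(pipeline_rnpvs, licensing_revenue, revenue_multiple,
                 replacement_cost, p_gap_closes, execution_discount):
    """Combine the four components described in the text."""
    pipeline = sum(pipeline_rnpvs)                  # (1) pipeline rNPV
    platform = licensing_revenue * revenue_multiple  # (2) licensing revenue multiple
    moat = replacement_cost * (1 - p_gap_closes)     # (3) dataset moat premium
    return (pipeline + platform + moat) * (1 - execution_discount)  # (4) discount

# Hypothetical asset: $50M spend next year, $400M payoff in year 5 at 25% probability.
asset_value = rnpv([(1, -50, 1.0), (5, 400, 0.25)], discount_rate=0.10)

# Hypothetical company, all figures in $M.
enterprise_value = sum_of_parts(
    pipeline_rnpvs=[100, 50],
    licensing_revenue=20, revenue_multiple=5,
    replacement_cost=200, p_gap_closes=0.5,
    execution_discount=0.2,
)
print(f"asset rNPV: {asset_value:.1f}, enterprise value: {enterprise_value:.0f}")
```

Separating the components makes it easy to see how sensitive the total is to the contested inputs, especially the probability that competitors close the dataset gap.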

Key Takeaways: Section 3

Insilico, Recursion, and BenevolentAI represent three distinct platform architectures that require different IP protection strategies and different valuation methodologies. The platform asset (algorithms, data, prompts) requires trade secret protection; the pipeline asset (drug compounds) requires conventional composition of matter and method of use patents. A sum-of-parts valuation model with four components, covering pipeline NPV, platform licensing, dataset moat, and execution discount, is the appropriate analytical framework for TechBio equities.

4. The Dual-Asset Problem: Why TechBio IP Strategy Requires Two Separate Frameworks

A TechBio company owns two categories of IP that have different legal characteristics, different competitive dynamics, and different optimal protection strategies. Conflating them produces a strategy that is suboptimal for both.

The first category is the discovery engine: the AI models, the training datasets, the computational infrastructure, the prompt libraries, and the proprietary biological assays that feed into those models. This is what generates candidates. Its competitive value is durability, the ability to produce new drug candidates continuously over time. Durability requires secrecy. If a competitor learns the specific architecture of a model, the composition of its training data, and the prompting strategy used to generate its best outputs, they can replicate the discovery process. The correct protection instrument is trade secret law, supplemented by contractual confidentiality, strict access controls, and the practical barrier of needing proprietary data at scale to achieve equivalent performance.

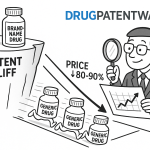

The second category is the discovery output: the specific drug compounds, methods of treating specific diseases, formulations, and dosing regimens that emerge from the engine. This is what generates revenue. Its competitive value is exclusivity, the legal right to prevent others from making, using, or selling the same compound or method. Exclusivity requires registration. Trade secrets do not provide registered exclusivity; patents do. The correct protection instrument is a composition of matter patent for the compound and a method of use patent for each approved indication.

The interaction between these two frameworks creates strategic tension. Satisfying patent disclosure requirements, specifically the enablement and written description requirements under 35 U.S.C. Section 112, may require disclosing more about the AI-assisted discovery process than the company would prefer from a trade secret standpoint. A patent application that describes, in sufficient technical detail, how the generative model produced the claimed compound could give competitors enough information to replicate key elements of the discovery process.

Managing this tension requires a deliberate decision for each patent application: how much AI process detail must be disclosed to satisfy Section 112, and how much can be withheld as trade secret without jeopardizing the patent? The answer depends on what a person of ordinary skill in the art (PHOSITA) would need to be taught in order to make and use the invention. If the compound’s synthesis and biological activity can be fully described without describing the AI model that generated the molecule’s structure, the AI process details can be omitted from the application. If the AI’s output is what makes the compound work in a non-obvious way, more process disclosure may be required.

Key Takeaways: Section 4

TechBio companies own two distinct IP asset classes, the discovery engine and the discovery output, that require different protection instruments. The discovery engine requires trade secret protection; the discovery output requires patent registration. The strategic tension between patent disclosure requirements and trade secret preservation is manageable through careful application drafting, but requires deliberate legal analysis for each filing rather than a uniform template.

5. The DABUS Cases: A Global Inventory of AI Inventorship Rulings

5a. Overview and Stakes

Between 2018 and 2025, Dr. Stephen Thaler filed patent applications in at least sixteen jurisdictions naming his AI system DABUS (Device for the Autonomous Bootstrapping of Unified Sentience) as the sole inventor. According to Thaler, DABUS independently generated two inventions: a food container with a fractal surface geometry designed to improve grip and thermal efficiency, and a flashing light system for emergency signaling. Thaler did not claim any personal inventive contribution. His applications were designed as test cases to force patent systems to answer a binary question: does current law permit an AI system to be named as an inventor?

The answer from virtually every major patent jurisdiction is no. The global consensus matters because it establishes the legal baseline against which every AI-assisted drug discovery program operates. Companies that develop drugs using generative AI cannot name the AI system as the inventor. They must identify human scientists who made a ‘significant contribution’ to the conception of the invention, and they must document that contribution in sufficient detail to withstand patent examination and potential legal challenge.

5b. United States: Thaler v. Vidal (Fed. Cir. 2022)

The U.S. Court of Appeals for the Federal Circuit decided Thaler v. Vidal in August 2022, ruling that the Patent Act requires inventors to be natural persons. The court’s analysis rested on three textual arguments. First, the Patent Act uses the term ‘individual,’ which the Supreme Court has consistently construed to mean a natural person, not a corporation or a machine. Second, the statute uses personal pronouns, ‘himself’ and ‘herself,’ when referring to inventors, pronoun choices that reflect a legislative assumption of human inventors. Third, the statute requires an inventor to execute an oath or declaration, a performative act that a machine cannot perform.

The court expressly declined to reach the question of whether inventions made by humans with AI assistance are patentable. This deliberate limitation is the most important aspect of the ruling for pharmaceutical companies. The Federal Circuit did not hold that AI involvement disqualifies an invention from patent protection. It held that an AI system cannot itself be named as the inventor. The door to patenting AI-assisted inventions, provided a human inventor can be identified, remains open.

The U.S. Supreme Court’s April 2023 denial of certiorari in the Thaler case left the Federal Circuit’s ruling intact. It also left unresolved what level of human contribution is sufficient for inventorship in AI-assisted discovery, which is now the central unsettled question in pharmaceutical patent law.

5c. United Kingdom: Thaler v. Comptroller-General of Patents

The UK Supreme Court’s 2023 judgment addressed Thaler’s applications under the Patents Act 1977 and rejected two distinct theories of AI inventorship. The first, that the Act should be read to permit non-human inventors, failed because the Act’s provisions, particularly Section 7 and Section 13, use language that presupposes a natural person: an inventor is someone who ‘devises’ an invention, a cognitive act that current law attributes only to humans.

The second theory, the ‘doctrine of accession,’ argued that Thaler, as the owner of the DABUS machine, should own DABUS’s inventions by the same logic that a landowner owns the fruit of trees growing on their land. The Supreme Court rejected this argument on the grounds that the doctrine of accession applies to physical property, not intellectual property, and that extending it to patents would require a legislative change, not a judicial interpretation.

The UK ruling is notable for its intellectual rigor in addressing and dismantling the ownership-through-machine-possession argument, because this argument is the most commercially intuitive one that pharmaceutical companies might make for company ownership of AI-generated inventions. The ruling makes clear that the correct path is to identify a human inventor, not to claim ownership through the company’s ownership of the AI tool.

5d. European Patent Office

The EPO’s Legal Board of Appeal rejected Thaler’s applications under the European Patent Convention, finding that the EPC requires an inventor to be a natural person with legal personality. The EPO’s reasoning went beyond the UK Supreme Court’s textual approach to address the systemic function of inventorship: an inventor, under the EPC framework, must be capable of holding and transferring rights. An AI system has no legal personality and therefore cannot hold rights, cannot enter into assignments, and cannot execute declarations, all functions that the patent system requires of an inventor.

The EPO’s analysis is significant for pharmaceutical companies using AI platforms developed by third-party vendors. When a biotech company licenses an AI platform and uses it to discover a drug candidate, the question of inventorship runs to the human scientists at the biotech company who formulated the problem, guided the discovery, and validated the output, not to the AI vendor or to the AI platform itself.

5e. Germany: BGH DABUS Decision (June 2024)

The German Federal Court of Justice’s June 2024 decision added a practical nuance not present in the other rulings. The BGH held that the AI cannot be named as inventor but suggested that the human who provided the ‘decisive influence’ in the invention process must be identified as the inventor. It also indicated that the AI system’s role could be disclosed in the application as a tool used in the inventive process. This transparency mechanism, allowing the application to describe the AI’s role without making the AI the inventor, aligns with the broader trend in patent offices worldwide toward requiring disclosure of AI use in the application or prosecution process.

5f. Summary of Global Rulings

| Jurisdiction | Ruling | Year | Key Legal Basis | Path for AI-Assisted Inventions |
|---|---|---|---|---|
| United States | AI cannot be inventor | 2022 | ‘Individual’ means natural person (Patent Act) | Human with ‘significant contribution’ required |
| United Kingdom | AI cannot be inventor | 2023 | Inventor must ‘devise’; doctrine of accession rejected | Identify human inventor; AI is a tool |
| European Patent Office | AI cannot be inventor | 2020 | No legal personality; cannot hold or transfer rights | Human inventor required; AI disclosed as tool |
| Germany | AI cannot be inventor | 2024 | BGH; human with ‘decisive influence’ must be named | AI can be mentioned; human with decisive role is inventor |
| Australia (final) | AI cannot be inventor | 2022 | Full Federal Court overturned lower court ruling | Human inventor required |
| South Africa | Patent granted naming AI | 2021 | Depository system; no substantive examination | Outlier; no precedential value |

Key Takeaways: Section 5

Every major patent jurisdiction except South Africa has ruled that AI systems cannot be inventors. The rulings are consistent in their outcome but vary in their legal reasoning, with the EPO emphasizing legal personality, the UK addressing ownership-through-possession, and Germany providing the most operationally useful guidance by suggesting that AI can be disclosed as a tool while a human with ‘decisive influence’ is named as inventor. No jurisdiction has held that AI involvement disqualifies an invention from patent protection, provided a qualified human inventor can be identified.

6. Thaler v. Vidal and U.S. Law: The ‘Individual’ Requirement Examined

6a. The Statutory Text and Its History

The Federal Circuit’s analysis in Thaler v. Vidal turned on 35 U.S.C. Section 100(f), which defines ‘inventor’ as ‘the individual or, if a joint invention, the individuals collectively who invented or discovered the subject matter of the invention.’ The court treated ‘individual’ as the operative term and applied the ordinary statutory interpretation principle that a word used without definition takes its ordinary meaning, which the Supreme Court has repeatedly confirmed means a natural person when used in federal statutes.

This textualist reading, while legally sound, creates a gap between the statute’s language and the technological reality of AI-assisted drug discovery. Congress drafted the relevant provisions in 1952 and most recently updated them in the America Invents Act of 2011, neither of which contemplated generative AI systems capable of producing novel molecular structures with limited human direction. The statute’s text does not address AI contribution because AI contribution was not a legislative concern at the time of drafting.

The court’s ruling does not make those statutory gaps disappear. It means that courts and the USPTO must handle AI inventorship questions using the existing framework, with ‘individual’ meaning natural person, until Congress acts. The practical consequence is that every pharmaceutical patent application involving AI-assisted discovery must identify a natural person as inventor, and that person’s contribution must satisfy the legal standards for inventorship that the courts have developed over decades.

6b. The USPTO’s Guidance on AI-Assisted Inventions

The USPTO published guidance on AI-assisted inventions in February 2024, addressing the inventorship question directly in light of Thaler v. Vidal and related developments. The guidance confirms that a natural person must have made a ‘significant contribution’ to each claim in the patent application. This is a per-claim standard, not a per-application standard. Inventors are named on the application as a whole, but every claim must be supported by at least one named natural person who significantly contributed to it. A scientist who made a significant contribution to the conception of compound A but not compound B can support the claims covering compound A; the claims covering compound B need a qualifying human contribution of their own.

The USPTO guidance draws explicitly on the legal standard articulated in Pannu v. Iolab Corp. (Fed. Cir. 1998), a joint inventorship case that required each co-inventor to (1) contribute in some significant manner to the conception or reduction to practice of the invention; (2) make a contribution to the claimed invention that is not insignificant in quality when measured against the full scope of the invention; and (3) do more than merely explain to the real inventors well-known concepts and/or the current state of the art. The USPTO applies these three Pannu factors to assess whether a human’s contribution in an AI-assisted discovery program is sufficient for inventorship.
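The per-claim standard and the three Pannu factors lend themselves to a simple record-keeping check. The following is a minimal Python sketch, not a legal test: the `Contribution` record, its field names, and the helper functions are hypothetical, and whether each factor is met for a given person and claim remains a judgment for patent counsel that the code merely records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contribution:
    """One natural person's documented contribution to one claim (hypothetical schema)."""
    person: str
    claim: int
    significant_to_conception_or_practice: bool  # Pannu factor 1
    not_insignificant_in_quality: bool           # Pannu factor 2
    beyond_explaining_known_art: bool            # Pannu factor 3

    def satisfies_pannu(self) -> bool:
        # All three factors must be met for the contribution to count.
        return (
            self.significant_to_conception_or_practice
            and self.not_insignificant_in_quality
            and self.beyond_explaining_known_art
        )


def inventors_by_claim(claims, contributions):
    """Map each claim number to the set of people whose contribution qualifies."""
    result = {claim: set() for claim in claims}
    for c in contributions:
        if c.claim in result and c.satisfies_pannu():
            result[c.claim].add(c.person)
    return result


def unsupported_claims(claims, contributions):
    """Return the claims that have no qualifying natural-person contributor."""
    by_claim = inventors_by_claim(claims, contributions)
    return sorted(claim for claim, people in by_claim.items() if not people)


# Illustrative data only: claim 1 covers compound A, claim 2 covers compound B.
records = [
    Contribution("Dr. Rivera", 1, True, True, True),
    Contribution("Dr. Rivera", 2, True, False, True),  # fails factor 2 for claim 2
]
print(inventors_by_claim([1, 2], records))
print(unsupported_claims([1, 2], records))
```

A claim that appears in the `unsupported_claims` output is a signal to find and document a qualifying human contribution before filing, or to reconsider the claim.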

Key Takeaways: Section 6

U.S. patent law requires a natural person inventor because the Patent Act uses the term ‘individual,’ which the Supreme Court has consistently read to mean a natural person. The USPTO’s 2024 guidance applies the Pannu three-factor test on a per-claim basis to assess whether a human scientist’s contribution in an AI-assisted discovery program is sufficient for inventorship. Pharmaceutical companies must identify and document qualifying human contributions for each individual claim, not just for the patent application as a whole.

7. Post-DABUS Doctrine: Significant Contribution, Pannu Factors, and the Prompting Problem

7a. What Constitutes ‘Significant Contribution’ in AI-Assisted Discovery

The Pannu framework was developed for joint human inventorship disputes, typically cases where two researchers disagree about who contributed what to a shared discovery. Applying it to AI-assisted discovery requires stretching its concepts into a new context, specifically, assessing the relative contributions of a human and an AI system that cannot itself be named as an inventor.

The USPTO’s 2024 guidance provides several examples of human activities that can constitute significant contribution. Constructing a specific problem in a way that meaningfully limits the AI’s search space counts as significant contribution. Selecting specific training data, curating it to address a defined biological problem, and making expert choices about which data to include or exclude can count. Critically evaluating the AI’s output using scientific expertise, not merely confirming that it is chemically valid, and making a judgment-driven selection among multiple AI-generated candidates can count. Experimentally modifying an AI-generated compound to improve its pharmacological performance, based on human-guided structural insights, almost certainly counts.

What does not count, under the guidance, is merely presenting a general research goal to an AI system, accepting the AI’s output without exercising expert judgment in its selection or modification, or performing standard confirmatory experiments that any skilled technician could conduct without inventive input.

7b. The Prompting Problem: Is Prompt Engineering Inventorship?

The most commercially important unsettled question in AI patent law is whether sophisticated prompt engineering constitutes inventorship. A scientist who designs a highly specific, technically informed prompt for a generative AI model, specifying the molecular scaffold, the binding pocket geometry, the ADME constraints, and the selectivity profile that the AI should optimize against, is doing something qualitatively different from a user who asks a general-purpose AI for ‘a drug molecule.’ The specific prompt reflects deep scientific expertise and makes choices that directly determine the character of the AI’s output.

Whether this rises to the level of significant contribution under Pannu is not settled law. The USPTO’s guidance suggests that ‘appreciably changing’ the output through prompt design can satisfy the standard. But ‘appreciably changing’ is itself an ambiguous formulation. The resolution of this question in future case law will have major implications for pharmaceutical patent strategy, because prompt engineering is the primary mode of human interaction with generative chemistry platforms.

The conservative approach, appropriate for companies that cannot afford ambiguity in their patent portfolios, is to ensure that prompt engineering is only one element of the documented human contribution, not the whole of it. Supporting that contribution with documented data curation, candidate selection rationale, and wet lab validation provides a multi-factor basis for inventorship that is far more robust than prompt engineering alone.

7c. Joint Inventorship with AI Systems: What the Guidance Prohibits

The USPTO guidance is unambiguous that a human cannot claim inventorship by arguing that they are a joint inventor alongside an AI system. The guidance states that AI systems cannot be listed as inventors. It also states that a human who only supervised an AI, without independently conceiving of any element of the claimed invention, does not qualify as an inventor. Supervision of the AI development process and ownership of the AI system do not, by themselves, create inventorship rights.

This prohibition is commercially significant because it eliminates a tempting shortcut: a company that wants to patent an AI-generated compound cannot simply name the human who deployed the AI as inventor on the grounds that they controlled the process. That human must have made an independent inventive contribution that would qualify under the Pannu factors regardless of what the AI did.

Key Takeaways: Section 7

The Pannu three-factor test is the operative legal standard for significant human contribution in AI-assisted pharmaceutical patents. Qualifying activities include constructing a specific and limiting research problem, curating proprietary training data, exercising expert judgment in candidate selection, and making structural modifications to AI-generated compounds based on experimental results. Prompt engineering alone, without supporting activities, is an ambiguous basis for inventorship. Supervision of the AI process and ownership of the AI tool do not create inventorship rights.

8. Enablement and Written Description: The Black-Box Disclosure Challenge

8a. The 35 U.S.C. Section 112 Requirements

Patent law requires the specification of a patent application to satisfy two distinct disclosure standards. The written description requirement demands that the specification show that the inventor was in possession of the claimed invention as of the filing date. The enablement requirement demands that the specification teach a person having ordinary skill in the art (PHOSITA) how to make and use the claimed invention without undue experimentation.

For conventional pharmaceutical patents on small molecule drugs, satisfying these requirements typically involves providing synthesis routes, spectroscopic characterization data, binding affinity measurements, and in vivo efficacy data in a relevant animal model. The PHOSITA standard for a medicinal chemist or pharmacologist is well-developed, and patent examiners have decades of experience assessing whether a given specification adequately discloses a small molecule drug.

AI-assisted drug discovery creates new and more difficult enablement questions. If the claimed compound was generated by a generative AI model operating on a large, proprietary dataset, what must the specification disclose about the AI process for a PHOSITA to replicate the discovery? Must the specification describe the model architecture? The training data composition? The specific prompts used? The parameters of the model at the time of generation?

8b. The Black-Box Problem

Many commercial AI systems used in drug discovery are, to varying degrees, ‘black boxes.’ The term refers to neural networks whose internal decision-making process, specifically which training data features the model weighted most heavily in generating a particular output, is not directly interpretable even by the model’s developers. A graph neural network that generates a novel molecular structure does not produce an explanation of why it produced that structure rather than any of the vast number of alternatives it could have generated.

This opacity creates a disclosure problem. If a patent applicant cannot explain how the AI generated the claimed compound, the specification may fail the enablement requirement because a PHOSITA following the patent’s disclosure cannot replicate the discovery without having access to the same AI model operating on the same data. Courts have invalidated patents on grounds of insufficient enablement when the claimed invention’s full scope could not be produced without undue experimentation, and the AI black-box problem creates a version of that same issue.

8c. Practical Solutions to the Disclosure Challenge

Three practical approaches help manage the black-box disclosure problem without compromising trade secrets. The first is to ground the patent claims in the experimentally characterized compound rather than in the AI process that generated it. If the specification provides a complete synthesis route, full structural characterization, and reproducible biological activity data for the compound, the enablement requirement is satisfied regardless of how the compound was initially designed, because a PHOSITA can replicate the compound using the disclosed synthesis route, not by running the AI model again.

The second approach is to disclose enough about the AI process to establish plausibility without disclosing the proprietary details that constitute trade secrets. A patent application might describe the general class of generative model used, the type of training data (e.g., ‘a dataset of known EGFR inhibitors with associated binding affinity data’), and the target property constraints specified in the prompt, without disclosing the specific model weights, the full dataset composition, or the exact prompt text.

The third approach, increasingly used by the most sophisticated TechBio patent filers, is to claim the compound and its method of use in one application while concurrently filing a separate application that claims aspects of the AI-assisted discovery method itself. The second filing needs to be a separate application rather than a continuation, because a continuation shares its parent’s specification. This separates the drug compound claims (which need only disclose the compound) from the method claims (which must disclose the method), allowing each application to manage its own disclosure burden independently.

Key Takeaways: Section 8

The enablement requirement under 35 U.S.C. Section 112 applies to AI-assisted pharmaceutical patents and requires the specification to teach a PHOSITA how to make and use the claimed compound without undue experimentation. The black-box opacity of many generative AI systems creates a disclosure challenge because the internal process that generated the compound is not interpretable. The most robust solution is to ground claims in experimentally characterized compounds with complete synthesis and characterization data, supplemented by sufficient AI process description to establish plausibility, while preserving specific model architecture and training data details as trade secrets.

9. Non-Obviousness in the Age of Generative AI: The PHOSITA Elevation Problem

9a. The Legal Standard and Its Dynamic Nature

Under 35 U.S.C. Section 103, a patent claim is invalid if the differences between the claimed invention and the prior art are such that the invention ‘as a whole would have been obvious’ to a person having ordinary skill in the pertinent art before the effective filing date of the claimed invention. The PHOSITA is not a genius or an expert; they are a competent practitioner with average knowledge and average creative capability in their field.

The PHOSITA standard is not static. It reflects the actual state of knowledge and practice in the field as of that date. As techniques, tools, and knowledge advance, the PHOSITA becomes more capable, and what qualifies as a non-obvious invention rises accordingly. This dynamic has been observed throughout pharmaceutical history: analytical techniques that once required specialized expertise are now routine, and molecular hypotheses that once required creative leaps can now be generated systematically.

Generative AI accelerates this PHOSITA elevation. When a commercially available AI platform can, in principle, generate thousands of novel molecular structures optimized against a given target within hours, the argument that any particular AI-generated structure is non-obvious becomes harder to sustain.

9b. The Obviousness Problem for Generativity at Scale

The core challenge is that generative AI systems perform a form of systematic exploration of chemical space that is analogous to, but vastly more efficient than, the combinatorial chemistry techniques that courts have evaluated in obviousness analyses. Courts have held that a compound is not automatically obvious merely because it belongs to a class of compounds known to have activity against a target. The question is whether there was reason to select this specific compound, with a reasonable expectation of success, given what was known in the prior art.

Generative AI, by exploring enormous chemical space against defined property constraints, provides exactly that kind of systematic selection rationale. A generative model prompted to design an EGFR inhibitor with a specified selectivity profile and oral bioavailability window is doing something a PHOSITA could, in principle, have done manually, just more efficiently. If AI tools make this exploration routine rather than exceptional, patent examiners could start treating AI-generated compounds as prima facie obvious, requiring applicants to show unexpected results to overcome the rejection.

9c. What Constitutes Non-Obvious Contribution in AI-Assisted Discovery

The non-obviousness of an AI-assisted invention is more defensible when the human contribution extends beyond running a commercial platform. Four kinds of contribution strengthen the case. The first is designing a novel computational method that enables the AI to explore regions of chemical space that existing tools cannot reach. The second is building a proprietary training dataset with unique biological annotations that enables the model to generate candidates with qualitatively different properties than those producible by publicly available platforms. The third is identifying a target-indication pair that no prior art suggested, using AI-assisted analysis of proprietary patient data. The fourth is discovering that an AI-generated compound has a mechanism of action or a selectivity profile that was unexpected given the prior art.

The pattern across these examples is that non-obviousness is most defensible when the human scientist’s contribution involves either a novel methodological input, such as a unique dataset or model architecture, or a discovery about the AI-generated compound that was not predictable from what was known before.

Key Takeaways: Section 9

Generative AI elevates the PHOSITA standard in drug discovery by making systematic chemical space exploration more accessible. AI-generated compounds that a commercially available platform could produce using standard inputs face heightened obviousness risk. Non-obviousness is most defensible when the human contribution involves novel computational methodology, proprietary datasets, unexpected compound properties, or target identification from non-public data sources.

10. Technology Roadmap: De Novo Design, AlphaFold Integration, and the Generative Pipeline

10a. The Generative Chemistry Architecture: Current State

The current state of AI-first small molecule drug design uses a multi-stage computational pipeline. Stage one is target structure determination, increasingly enabled by AlphaFold 2 and its successors, which can predict protein three-dimensional structure from sequence with accuracy sufficient for structure-based drug design in many cases. Stage two is binding site characterization, combining AlphaFold outputs with molecular dynamics simulations to identify druggable pockets and their dynamic properties. Stage three is generative design, using graph neural networks, diffusion models such as DiffSBDD, or language model architectures trained on molecular SMILES representations to generate novel structures optimized for binding affinity and selectivity, often paired with diffusion-based docking tools such as DiffDock to predict binding poses. Stage four is multi-property optimization, filtering and refining the generative output against ADME/T predictions, synthetic accessibility scores, and intellectual property landscape screening. Stage five is prioritization and wet lab handoff, where human scientists apply domain expertise to select candidates for synthesis and experimental validation.
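Stage four is the easiest stage to illustrate in code. The following is a minimal Python sketch of a multi-property filter feeding a shortlist for wet lab handoff. Everything in it is an assumption made for illustration: the `Candidate` fields, the threshold values, the convention that a lower synthetic accessibility score means an easier synthesis, and the property values themselves, which are made-up placeholders attached to trivial SMILES strings rather than outputs of any real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    smiles: str
    predicted_pic50: float           # hypothetical potency prediction
    predicted_log_solubility: float  # hypothetical ADME/T prediction (logS)
    synthetic_accessibility: float   # assumed scale: lower is easier to make
    overlaps_known_claims: bool      # outcome of an IP landscape screen


def passes_filters(c, min_pic50=7.0, min_log_solubility=-5.0, max_sa=4.5):
    """Apply illustrative potency, solubility, synthesis, and IP filters."""
    return (
        c.predicted_pic50 >= min_pic50
        and c.predicted_log_solubility >= min_log_solubility
        and c.synthetic_accessibility <= max_sa
        and not c.overlaps_known_claims
    )


def shortlist(candidates, top_n=2):
    """Keep candidates that pass every filter, ranked by predicted potency."""
    kept = [c for c in candidates if passes_filters(c)]
    return sorted(kept, key=lambda c: c.predicted_pic50, reverse=True)[:top_n]


# Placeholder values only; the SMILES strings are trivial molecules.
generated = [
    Candidate("CCO", 7.8, -1.0, 1.5, False),
    Candidate("c1ccccc1", 8.4, -6.2, 1.2, False),  # fails solubility
    Candidate("CC(=O)O", 6.1, -0.5, 1.3, False),   # fails potency
    Candidate("CCN", 7.2, -0.8, 1.4, False),
    Candidate("CCC", 9.0, -2.0, 1.1, True),        # fails IP screen
]
for c in shortlist(generated):
    print(c.smiles, c.predicted_pic50)
```

Recording which thresholds a scientist chose, and why, is the kind of judgment-driven selection that the inventorship discussion in Section 7 treats as potentially significant.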

The key technical inflection over the past three years has been the transition from rule-based and fragment-based design to generative design. Rule-based methods modify known scaffolds through predefined transformations; fragment-based methods connect validated pharmacophoric elements. Generative design starts from scratch, producing structures that may have no precedent in any chemical database.

10b. AlphaFold’s Specific Impact on Patent Strategy

AlphaFold’s structural predictions are published in the public AlphaFold Protein Structure Database, covering more than 200 million proteins. This public availability has a specific and underappreciated impact on patent prosecution. When AlphaFold predicts the structure of a previously unsolved protein target, that predicted structure becomes prior art, from its publication date, against any later-filed patent application claiming binding to that target’s active site.

Before AlphaFold, the three-dimensional structure of many disease-relevant proteins was unknown. An AI-designed molecule that exploited a specific geometric feature of an unsolved structure was inherently non-obvious because the structural knowledge required to design such a molecule was not in the prior art. After AlphaFold, the structural knowledge is public. An AI design that exploits an AlphaFold-predicted binding pocket must clear a higher obviousness bar because the binding pocket itself is no longer novel or non-obvious.

Patent prosecutors in AI-first companies must now conduct AlphaFold database searches as part of their prior art review. If AlphaFold predicts the structure of the target protein, and if the claimed compound’s mechanism of action involves the predicted binding pocket, the AlphaFold prediction is prior art that must be addressed in the patent application.

10c. The Biologic Generative Design Frontier

Generative AI for biologics, including antibodies, peptides, and engineered proteins, is a distinct technical track that is earlier in maturity than small molecule generative design. Antibody design using computational tools has a longer history, with methods like RosettaAntibody predating the deep learning era. The current frontier involves using large language models trained on protein sequence databases, combined with AlphaFold-class structural prediction, to design antibodies or other binding proteins with specified affinity and selectivity profiles.

The patent strategy for AI-designed biologics differs from small molecule strategy in important ways. Biologics are protected through composition of matter patents on the specific amino acid sequence and through method of use patents. Biosimilar developers do not use the ANDA pathway available to small molecule generics. They use the abbreviated 351(k) pathway created by the Biologics Price Competition and Innovation Act, which lets them rely in part on the FDA’s prior findings for the reference product but requires extensive comparative analytical studies and typically some comparative clinical data to demonstrate biosimilarity. This means the market exclusivity implications of a biologic patent are different from a small molecule patent, and the IP moat for an AI-designed biologic may be more durable than for a small molecule with an equivalent patent thicket.

10d. Three-Year Technology Roadmap (2025-2028)

Over the next three years, four technical developments will reshape the AI drug discovery IP landscape. First, multi-target generative design, producing molecules simultaneously optimized against a primary target and against off-target selectivity constraints, will become more accessible, making pure single-target optimization insufficient as a basis for non-obviousness. Second, foundation models for drug discovery, large models pre-trained on massive chemical and biological datasets and fine-tuned for specific discovery tasks, will reduce the dataset requirements for individual companies and potentially commoditize some aspects of the discovery process. Third, AI-assisted clinical biomarker discovery will move from exploratory to evidential, with regulatory agencies beginning to expect AI-generated biomarker evidence to meet the credibility standards now being developed for drug discovery data. Fourth, protein-protein interaction (PPI) modulation, historically considered ‘undruggable,’ will become a focus area for generative AI due to the structural insight provided by AlphaFold multi-chain complex predictions.

Key Takeaways: Section 10

The current generative chemistry pipeline runs through five computational stages, from AlphaFold-based target characterization through wet lab handoff. AlphaFold’s public structure database has elevated the obviousness bar for binding-site-directed designs and must now be searched as part of patent prior art review. Foundation model development will commoditize parts of the discovery process over 2025-2028, making proprietary datasets and novel AI methodologies the primary basis for IP defensibility.

11. Building the IP Moat: Patents, Trade Secrets, and the Hybrid Architecture

11a. The Core Patent Filing Strategy

For any compound emerging from an AI-assisted discovery program, the minimum patent filing package should include a composition of matter application claiming the compound itself (defined by its structural formula), a method of use application claiming the compound’s use for treating the target indication, and, where the discovery involves a novel combination of known elements with surprising results, a method of treatment application documenting the unexpected outcomes that support non-obviousness.

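For companies whose competitive advantage lies in a specific formulation, administration route, or dosing protocol, secondary patent applications covering those attributes should be filed early in development. The priority date, the date that fixes what counts as prior art against the application, is established at first filing. Patent term runs from the filing date, so delay does not shorten the term of the secondary protection; the cost of delay is exposure. Under the first-inventor-to-file system, every week that a secondary patent application is delayed after the underlying work is done increases the risk of intervening prior art, including the company’s own publications and clinical trial disclosures, and of competing filings.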

11b. The Trade Secret Infrastructure

Trade secret protection requires active maintenance. A company that does not take ‘reasonable measures’ to keep information secret loses trade secret protection under the Defend Trade Secrets Act (DTSA) and most state trade secret laws. For AI discovery platforms, reasonable measures must include at minimum: comprehensive non-disclosure agreements with all employees, contractors, and external collaborators who have access to proprietary models or data; technical access controls limiting platform access to personnel with a documented need; audit logs tracking who accessed model components and when; and data partitioning ensuring that a compromise of one part of the infrastructure does not expose the full dataset or model weights.
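The audit log requirement can be illustrated with a tamper-evident design. The following is a minimal Python sketch, assuming a hash-chained log in which each entry stores the SHA-256 digest of the previous entry. The field names, user names, and component names are hypothetical, and hash chaining is one possible design choice rather than something the DTSA prescribes.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
FIELDS = ("user", "component", "timestamp", "prev_hash")


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, user, component, timestamp):
    """Append an access record that is chained to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"user": user, "component": component,
            "timestamp": timestamp, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in FIELDS}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "a.chen", "model_weights_v3", "2025-03-01T09:15:00Z")
append_entry(audit_log, "j.ortiz", "training_set_egfr", "2025-03-01T10:02:00Z")
print(verify(audit_log))  # True

audit_log[0]["user"] = "someone.else"  # simulate tampering
print(verify(audit_log))  # False
```

A log that can show it has not been edited after the fact is more persuasive evidence of reasonable measures than one that simply lists access events.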

For pharmaceutical companies that partner with AI platform vendors, the trade secret analysis extends to the contractual relationship with the vendor. The agreement must specify what data and model details the vendor can access, what the vendor can do with inputs provided by the pharma company, who owns insights or derivative models generated from the pharma company’s proprietary data, and what happens to data access upon termination of the agreement. These questions are not standard in pharmaceutical licensing templates and require custom negotiation.

11c. Third-Party AI Platform: Who Owns the Output?

When a pharmaceutical company uses a third-party AI platform, such as Schrödinger’s computational chemistry software, a BenevolentAI or Recursion partnership, or a cloud-based generative chemistry API, to discover drug candidates, the IP ownership question is not automatically resolved in favor of the pharmaceutical company. The vendor’s platform agreement governs the initial allocation of rights, and that agreement is a critical legal document.

Common contractual structures include exclusive licensing of discovered compounds to the pharma company, with royalty or milestone payments to the vendor; co-ownership of compounds discovered using the vendor’s platform; and vendor retention of rights to use compounds as training data to improve the platform. Each structure has different implications for the pharma company’s ability to pursue a standard drug development and commercialization program.

The worst-case outcome is a co-ownership provision for discovered compounds, because co-owners of a U.S. patent can, absent a contrary agreement, independently make, use, sell, and license the patented invention without the other co-owner’s consent. A pharmaceutical company that co-owns a drug compound patent with its AI vendor could find the vendor licensing the compound to a competitor without the pharma company’s knowledge or permission. This risk is routinely overlooked in early-stage platform licensing negotiations and can dramatically reduce the commercial value of a discovered asset.

Key Takeaways: Section 11

The minimum patent filing package for an AI-discovered compound covers composition of matter and method of use. Trade secret protection requires active maintenance measures under the DTSA, including NDAs, access controls, and audit logs. Third-party AI platform agreements must be explicitly negotiated to address compound ownership, co-ownership risk, data use rights, and post-termination obligations. Co-ownership provisions in platform agreements can be catastrophic for commercialization strategy and must be identified and negotiated before discovery work begins.

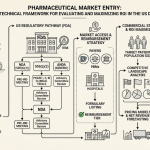

12. The Convergence Thesis: How FDA Documentation and USPTO Evidence Overlap

The FDA’s credibility assessment framework for AI-generated regulatory evidence and the USPTO’s significant contribution doctrine for AI-assisted inventions require different outputs for different purposes, but they draw on the same underlying documentation. This convergence is operationally significant because it means that a company investing in rigorous AI governance and documentation infrastructure is not incurring two separate compliance costs. It is building one asset that satisfies both requirements simultaneously.

The FDA’s January 2025 draft guidance requires sponsors to document the AI model’s context of use, its risk assessment in terms of model influence and decision consequence, its credibility assessment plan, the data used for training and validation (specifically its fitness for purpose and representativeness), and the lifecycle maintenance plan for monitoring model performance post-deployment. This is documentation of the AI system’s properties, its training, its validation, and its operational boundaries.

The USPTO’s significant contribution standard requires documentation of the human scientist’s activities: how they formulated the research problem, what choices they made in designing or selecting training data, how they evaluated and selected among the AI’s outputs, and how they modified the AI-generated compound through experimental work. This is documentation of human activity within the AI-assisted discovery process.

The overlap is substantial. A scientist who documents their data curation decisions in sufficient detail to satisfy the FDA’s ‘fit for use’ data standards is creating a record of those curation decisions that can also demonstrate their significant contribution to patent inventorship. A scientist who documents the model’s context of use and its functional limitations is creating a record that shows they did not simply accept the AI’s output uncritically, but engaged with it as an informed expert, which supports inventorship. A scientist who maintains a lifecycle maintenance log for the AI model is creating a time-stamped record of human intervention in the model’s development that can be used to document the sequence of inventive acts.

The practical recommendation is to design the AI governance and documentation framework from the outset with both regulatory and IP requirements in mind, producing a single source of truth that records human activity in sufficient detail to satisfy both audiences simultaneously.
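One way to picture a single source of truth is a single record type from which both audiences draw their own view. The following is a minimal Python sketch under stated assumptions: the `DecisionRecord` schema, its field names, and the two view functions are hypothetical, and timestamps are assumed to be ISO 8601 strings in the same timezone so that sorting them as text gives chronological order.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DecisionRecord:
    """One logged human decision in an AI-assisted program (hypothetical schema)."""
    timestamp: str       # ISO 8601, e.g. "2025-02-10T14:30:00Z"
    scientist: str
    activity: str        # e.g. "data_curation", "candidate_selection"
    model_version: str
    context_of_use: str
    rationale: str


REGULATORY_FIELDS = ("timestamp", "activity", "model_version",
                     "context_of_use", "rationale")


def regulatory_view(records):
    """Model- and data-centric fields, for a credibility assessment file."""
    return [{k: asdict(r)[k] for k in REGULATORY_FIELDS} for r in records]


def inventorship_view(records):
    """Chronological list of human acts per scientist, for patent counsel."""
    timeline = {}
    for r in sorted(records, key=lambda rec: rec.timestamp):
        timeline.setdefault(r.scientist, []).append(
            (r.timestamp, r.activity, r.rationale)
        )
    return timeline


# Illustrative entries only.
records = [
    DecisionRecord("2025-02-12T09:00:00Z", "Dr. Rivera", "candidate_selection",
                   "gen-model-1.2", "lead selection", "chose scaffold 7 for selectivity"),
    DecisionRecord("2025-02-10T14:30:00Z", "Dr. Rivera", "data_curation",
                   "gen-model-1.1", "lead selection", "excluded assays with unverified readouts"),
]
print(regulatory_view(records)[0]["activity"])
print(inventorship_view(records)["Dr. Rivera"][0][1])
```

Because both views are derived from the same records, they cannot drift apart the way two separately maintained files can.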

Key Takeaways: Section 12

FDA credibility assessment documentation and USPTO inventorship evidence draw on the same underlying record of human activity in AI-assisted discovery programs. A unified AI governance framework that documents data curation decisions, context of use specifications, candidate selection rationale, and model performance monitoring simultaneously satisfies both regulatory and IP documentation requirements. Companies that treat regulatory compliance and IP documentation as separate functions incur unnecessary duplication costs and create version-control risk between the two records.

13. The U.S. FDA Credibility Assessment Framework: A Seven-Step Operational Guide

The FDA’s January 2025 draft guidance on the use of AI in regulatory decision-making for drugs and biologics established a seven-step framework for assessing the credibility of AI models used to generate evidence in regulatory submissions. The framework is explicitly risk-based: more rigorous assessment is required for models with higher influence on the regulatory decision and higher potential consequences of an incorrect decision.

13a. Steps 1 and 2: Question of Interest and Context of Use

The first step is defining the specific scientific or clinical question that the AI model is intended to address. This question of interest (QoI) must be stated precisely, because it determines what type of model is appropriate, what data is needed for training and validation, and what performance metrics are relevant. A QoI stated as ‘identify patients with a high probability of responding to Drug X’ is defined precisely enough to support the next steps. A QoI stated as ‘improve clinical trial efficiency’ is too vague to anchor a credibility assessment.

The Context of Use (COU) defines how the model’s output will be used in the regulatory submission or the drug development decision-making process. The COU must specify whether the AI output will be the primary basis for a regulatory claim, a supplementary source of evidence used alongside conventional data, or an operational tool used to manage trial logistics. Models with a primary-evidence COU require more rigorous credibility assessment than those with an operational-support COU.

13b. Step 3: Risk Assessment

Model risk is assessed on two dimensions: model influence (how much the regulatory decision depends on this model’s output relative to other evidence) and decision consequence (the potential harm to patients if the model produces an incorrect output). The FDA has proposed a two-by-two matrix framework, with each dimension rated as high or low, producing four risk categories. A model with high influence and high consequence, such as an AI that predicts serious adverse event risk and whose predictions are the primary basis for a dosing recommendation, sits in the highest risk category and requires the most extensive credibility assessment.
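The two-dimensional risk assessment described above can be sketched as a small lookup. The following Python sketch is illustrative only: the `Level` enum, the function names, the example model names, and in particular the `rigor_rank` ordering (which simply counts how many dimensions are rated high) are assumptions, not part of the FDA’s draft guidance.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


def risk_cell(influence: Level, consequence: Level) -> str:
    """Name the matrix cell a model falls into."""
    return f"{influence.value} influence / {consequence.value} consequence"


def rigor_rank(influence: Level, consequence: Level) -> int:
    """Assumed ordering for triage: number of dimensions rated high (0 to 2)."""
    return sum(level is Level.HIGH for level in (influence, consequence))


# Hypothetical models in a development program.
models = {
    "site_logistics_scheduler": (Level.LOW, Level.LOW),
    "sae_dose_predictor": (Level.HIGH, Level.HIGH),
    "enrichment_biomarker": (Level.HIGH, Level.LOW),
}
ranked = sorted(models, key=lambda name: rigor_rank(*models[name]), reverse=True)
for name in ranked:
    print(name, "->", risk_cell(*models[name]))
```

In this sketch the serious adverse event predictor lands in the high influence, high consequence cell and is listed first, matching the example in the text.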

For pharmaceutical companies, the practical implication is that AI models used in high-consequence applications, particularly those that directly support dose selection, patient selection for high-risk interventions, or safety monitoring in vulnerable populations, should be subjected to the full credibility assessment protocol regardless of the sponsor’s confidence in the model.

13c. Steps 4 and 5: Credibility Plan Development and Execution

The credibility assessment plan specifies the validation studies, performance metrics, and contextual relevance assessments that will establish the model as fit for its intended COU. For most pharmaceutical AI applications, this plan must address several specific technical elements.

Data relevance and reliability: the training data must be relevant to the patient population and clinical setting where the model will be applied. A model trained on genomic data from European ancestry populations is not automatically credible for a clinical program in a predominantly Asian ancestry population. The FDA expects sponsors to explicitly address representativeness and, where gaps exist, to quantify the uncertainty they introduce.
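A first step toward addressing representativeness is simply measuring it. The sketch below compares subgroup shares in the training data with those in the intended population and flags differences above a tolerance. The function names, the ancestry labels, and the 10-percentage-point tolerance are hypothetical choices for illustration, and flagging a gap is not the same as quantifying the uncertainty it introduces.

```python
from collections import Counter


def subgroup_shares(labels):
    """Fraction of records in each subgroup."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def representation_gaps(train_labels, target_labels, tolerance=0.10):
    """Subgroups whose training share differs from the target share by more than
    `tolerance` (an assumed threshold). Positive values mean over-representation
    in the training data; negative values mean under-representation."""
    train = subgroup_shares(train_labels)
    target = subgroup_shares(target_labels)
    gaps = {}
    for group in sorted(set(train) | set(target)):
        diff = train.get(group, 0.0) - target.get(group, 0.0)
        if abs(diff) > tolerance:
            gaps[group] = round(diff, 3)
    return gaps


# Toy data: training set is 90% European ancestry, target population is 80% East Asian.
train = ["EUR"] * 90 + ["EAS"] * 10
target = ["EUR"] * 20 + ["EAS"] * 80
gaps = representation_gaps(train, target)
```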

Model transparency and explainability: the FDA does not require that all AI models be fully interpretable, because full interpretability is often not achievable for complex neural networks. It does require that sponsors have a principled understanding of the model’s key inputs, its performance boundaries, and the conditions under which it fails.

Performance characterization: the model must be evaluated on a held-out validation dataset that was not used in training, using pre-specified performance metrics relevant to the QoI. For a binary classification model, this typically means sensitivity, specificity, positive predictive value, and area under the ROC curve. For a regression model, it typically means mean absolute error, root mean squared error, and prediction interval coverage.
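The metrics named above can be computed in a few lines. The following is a minimal, dependency-free sketch with hypothetical function names; it assumes labels coded as 0/1, that both classes appear in the held-out data, and that prediction intervals are supplied as lower and upper bounds. It reports the signed mean error alongside the mean absolute error, since the two answer different questions (bias versus typical error size).

```python
import math


def binary_metrics(y_true, y_pred):
    """Sensitivity, specificity, and positive predictive value from 0/1 labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
    }


def roc_auc(y_true, scores):
    """AUC as the probability that a random positive case outscores a random
    negative case, with ties counted as one half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def regression_metrics(y_true, y_pred, lower, upper):
    """Signed mean error, MAE, RMSE, and share of true values inside the interval."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    covered = sum(1 for t, lo, hi in zip(y_true, lower, upper) if lo <= t <= hi)
    return {
        "mean_error": sum(errors) / n,
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        "coverage": covered / n,
    }


# Toy held-out data, for illustration only.
cls = binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1])
auc = roc_auc([1, 0, 1, 0], [0.9, 0.1, 0.4, 0.6])
reg = regression_metrics(
    [1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.0, 4.5],
    lower=[0.5, 1.5, 1.0, 4.2], upper=[2.0, 2.5, 2.5, 5.0],
)
```

Whatever tooling is used, the point from the text is that the metric list and any acceptance thresholds are fixed before the held-out data is examined.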

13d. Steps 6 and 7: Documentation and Adequacy Determination

Step 6 requires complete documentation of the credibility assessment process, including any deviations from the plan. This documentation becomes part of the regulatory submission and must be available for FDA review. Step 7 is the sponsor’s determination, based on the evidence assembled in steps 1 through 6, that the model is adequate for its intended COU. If the evidence does not support adequacy, the sponsor must modify the COU, improve the model, or abandon the AI-assisted approach for that application.

The FDA strongly encourages early engagement, through Pre-IND meetings and through the interactions available under Breakthrough Therapy and other designation programs, so that AI-specific elements of a development plan can be discussed before they become embedded in costly clinical trials.

Investment Strategy: FDA Credibility Framework Implications

For investors, the FDA’s credibility framework has a direct bearing on clinical program risk. A drug program that uses AI-generated evidence in a high-influence, high-consequence context, such as a trial that enrolls only patients whom an AI model has classified as biomarker-positive, faces higher regulatory risk than a program using AI only in a supporting role. If the AI model fails its credibility assessment, the regulatory submission may need to be redesigned, adding time and cost. Investors should scrutinize the context of use for any AI-generated evidence in clinical programs, paying particular attention to whether the company has conducted a pre-submission meeting with the FDA and whether the agency has provided feedback on the credibility assessment plan.

Key Takeaways: Section 13

The FDA’s seven-step credibility assessment framework requires sponsors to define a precise question of interest and context of use before building or deploying an AI model for regulatory purposes. Risk is assessed on model influence and decision consequence, and higher-risk models require more rigorous validation protocols. Data representativeness, model transparency, and pre-specified performance metrics are the core technical requirements. Early FDA engagement is strongly encouraged and materially reduces regulatory risk for high-influence AI applications.

14. The EMA Reflection Paper: Human-Centricity, GCP/GLP Integration, and the First Qualification Opinion

The European Medicines Agency published its final reflection paper on AI in the medicinal product lifecycle in September 2024, articulating a regulatory philosophy that complements the FDA’s credibility framework while emphasizing several distinct principles.

14a. The Human-Centricity Principle

The EMA’s reflection paper is anchored in the principle that AI should augment rather than replace human judgment in drug development and regulatory decision-making. This manifests in the expectation that a human will remain accountable for every AI-assisted decision and will have the ability to override, review, and contextualize AI outputs. The EMA does not define what ‘meaningful human oversight’ means in operational terms for all AI applications, acknowledging that the appropriate level of oversight depends on the specific context and risk profile.

For pharmaceutical companies operating in the EU, the human-centricity principle creates an obligation to design AI systems with explicit human review points built into the workflow. An AI model that generates adverse event reports, processes them, and generates safety signals without human review at any stage would face regulatory concern under the EMA’s framework, regardless of the model’s measured performance in validation studies.
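One way to make a human review point explicit in a workflow is to make release of an AI output impossible until a named reviewer has recorded a decision. The sketch below illustrates that pattern; the `SafetySignal` schema, the function names, and the two decision values are hypothetical and are not taken from the EMA's reflection paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SafetySignal:
    """An AI-generated safety signal awaiting human review (hypothetical schema)."""
    description: str
    model_score: float
    reviewer: Optional[str] = None
    decision: Optional[str] = None    # "confirmed" or "overridden"
    rationale: Optional[str] = None


def record_review(signal, reviewer, decision, rationale):
    """Attach an accountable human decision, with a written rationale, to the signal."""
    if decision not in ("confirmed", "overridden"):
        raise ValueError("decision must be 'confirmed' or 'overridden'")
    if not rationale.strip():
        raise ValueError("a written rationale is required")
    signal.reviewer = reviewer
    signal.decision = decision
    signal.rationale = rationale
    return signal


def release(signal):
    """Return True if the signal may be escalated, False if the reviewer overrode it.
    Refuses to proceed at all when no human review has been recorded."""
    if signal.reviewer is None or signal.decision is None:
        raise PermissionError("no human review recorded for this signal")
    return signal.decision == "confirmed"
```

The design choice is that the gate lives in the release path itself, so a missing review is a hard failure rather than a logged warning, and an override is stored with its rationale rather than silently discarded.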

14b. Integration with Existing Regulatory Frameworks

The EMA’s guidance does not create a parallel regulatory track for AI. It explicitly maps AI applications onto existing regulatory frameworks, requiring that AI used in clinical trials comply with Good Clinical Practice (GCP) guidelines and that AI used in non-clinical studies comply with Good Laboratory Practice (GLP) principles. This integration is both pragmatic and consequential. It means that pharmaceutical companies cannot escape GCP obligations for clinical trial data by routing the data through an AI model. The AI processing step does not sanitize the underlying data quality requirements; it inherits them.

For AI used in manufacturing process monitoring and control, the EMA maps the requirements onto Good Manufacturing Practice (GMP) frameworks. An AI-based advanced process control (APC) system that controls a biological manufacturing process must be validated according to the same standards that apply to the process itself, including prospective validation, ongoing performance monitoring, and change control procedures.

14c. The First CHMP Qualification Opinion (March 2025)

The EMA’s Committee for Medicinal Products for Human Use (CHMP) issued its first qualification opinion for an AI-based tool in March 2025, accepting clinical trial evidence generated using an AI-assisted digital pathology tool for liver biopsy analysis in metabolic dysfunction-associated steatohepatitis (MASH). The tool automates the quantification of histological features in liver biopsy images, a process that is subject to substantial inter-observer variability when performed manually by pathologists.

The qualification opinion establishes that AI-generated histological assessments can constitute valid clinical trial endpoints, provided the AI tool has been validated against a reference standard using a pre-specified validation protocol. The opinion specifies the validation requirements, performance thresholds, and human oversight requirements that must be met for such evidence to be acceptable in a CHMP regulatory review.
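Validation against a reference standard with a pre-specified threshold can be illustrated with a generic agreement statistic. The sketch below uses unweighted Cohen's kappa between AI-assigned and reference categorical scores. Both the choice of kappa and the threshold value are assumptions made for illustration; the text above does not state which metrics or thresholds the opinion specifies, and ordinal histology scores would often call for a weighted variant.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa: agreement between two raters beyond chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal share, summed over categories.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


def meets_protocol(ai_scores, reference_scores, min_kappa):
    """Check agreement against a threshold fixed in the validation protocol
    before the data were examined (threshold value is hypothetical)."""
    return cohens_kappa(ai_scores, reference_scores) >= min_kappa


# Toy scores: AI tool versus a reference read on four biopsies.
kappa = cohens_kappa([0, 0, 1, 1], [0, 0, 1, 0])
passed = meets_protocol([0, 0, 1, 1], [0, 0, 1, 0], min_kappa=0.6)
```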

This qualification opinion is operationally significant as a template. The MASH pathology tool represents a class of AI applications, image analysis for quantitative biomarker assessment, where AI-generated measurements are objectively more reproducible than manual assessments by human experts. The EMA’s willingness to accept AI-generated measurements for this class of application, subject to rigorous validation, provides a pathway for similar applications in other therapeutic areas.

Key Takeaways: Section 14

The EMA’s human-centricity principle requires that human review remain a functional component of any AI-assisted regulatory decision, not merely a formal sign-off. The EMA integrates AI requirements into GCP, GLP, and GMP frameworks rather than creating a separate regulatory track, meaning existing quality and compliance obligations extend to AI components. The March 2025 CHMP qualification opinion for the MASH digital pathology tool establishes a validation template for AI-generated histological endpoints and signals the EMA’s willingness to accept AI-generated clinical evidence when rigorously validated.

15. Global Regulatory Divergence: China, Japan, and South Korea

15a. China: Volume, Ambition, and Data Portability Risk

China’s AI drug discovery regulatory environment is shaped by three concurrent dynamics. First, the National Medical Products Administration (NMPA) is actively developing AI-specific guidance, beginning with standards for software as a medical device (SaMD) that are now being extended to therapeutics. Second, the Chinese government has made AI and biomedical R&D a national strategic priority, with substantial public funding and a regulatory posture that is, in general, more accommodating of novel methodologies than the U.S. or EU frameworks. Third, China now generates more AI-related pharmaceutical patents than any other country, according to analyses published through 2024.

The data portability risk for multinational pharmaceutical companies conducting AI-assisted discovery in China is significant. Data generated by an AI model trained on Chinese patient genomic data, electronic health records, or hospital-derived biological samples may be subject to China’s Data Security Law, the Personal Information Protection Law, and the outbound data transfer restrictions under the Security Assessment Measures for Data Export. Data generated in China on Chinese patient populations may not be transferable to the U.S. or EU for regulatory submissions without compliance with these restrictions, creating potential barriers to global clinical program integration.

15b. Japan: Soft Law and the AI Promotion Act

Japan’s AI Promotion Act, enacted in May 2025, embodies a deliberately non-punitive approach to AI governance. The Act establishes a framework of voluntary guidelines, industry collaboration, and government support, coordinated by an AI Strategy Headquarters at the Cabinet level. The Act does not impose legally binding requirements on pharmaceutical AI applications or create penalties for non-compliance with AI governance standards. It is, by design, an innovation-enabling framework rather than a risk-mitigation mandate.

The implication for pharmaceutical regulation is that the Pharmaceuticals and Medical Devices Agency (PMDA) will likely apply existing medical device and clinical trial frameworks to AI applications on a case-by-case basis, using consultations with sponsors to align on expectations rather than prescriptive rules. Japan’s re-examination period (4 to 10 years for new drugs) means that drugs approved using AI-generated evidence will be subject to post-market reassessment of their safety and efficacy profile, a process that must account for how AI-generated evidence was validated and the conditions under which it remains valid.

15c. South Korea: High-Impact AI and the Framework Act

South Korea’s AI Framework Act, effective January 2026, takes a more structured approach than Japan’s soft law framework. The Act designates ‘high-impact AI’ categories in sectors including healthcare, finance, and critical infrastructure, and imposes specific obligations on developers and deployers of high-impact AI systems. For pharmaceutical applications, high-impact AI would include AI systems that make or substantially influence decisions about patient treatment, drug approval, or drug safety monitoring.

Obligations for high-impact AI under the Framework Act include mandatory risk management plans, transparency documentation, and human oversight requirements. These requirements align broadly with the FDA’s credibility assessment framework and the EMA’s human-centricity principle, suggesting that the AI regulatory expectations of South Korea’s Ministry of Food and Drug Safety (MFDS) will eventually converge with those of the U.S. and EU, though with a distinct legal enforcement mechanism.

The Ministry of Food and Drug Safety is separately integrating AI into its own internal review processes, specifically for the initial screening of drug applications for completeness and the identification of safety signal patterns in adverse event databases. This internal AI adoption by the regulatory agency itself may accelerate the MFDS’s technical fluency with AI-generated evidence and its willingness to accept it in sponsor submissions.

Global Regulatory Comparison Table

| Regulatory Principle | U.S. FDA | EMA | China (NMPA) | Japan | South Korea |
|---|---|---|---|---|---|
| Core Framework | Risk-based credibility assessment | Human-centric, risk-based | Evolving; SaMD standards extending to therapeutics | Soft law; voluntary guidelines | Binding ‘high-impact AI’ obligations |
| Risk Dimensions | Model influence + decision consequence | Patient risk + regulatory impact | Sector-specific; less publicly defined | Voluntary compliance | ‘High-impact’ sector designation |
| Existing Framework Integration | GCP/GLP alignment encouraged | GCP/GLP/GMP mandatory | Medical device software as primary framework | Existing PMDA/MHLW frameworks | Framework Act plus existing MFDS standards |
| Human Oversight | Required for high-influence models | Mandatory; core principle | Required for SaMD; extending to drugs | Encouraged through guidelines | Legally mandated for ‘high-impact’ AI |
| Primary Enforcement Mechanism | Regulatory review; CRL risk | Approval refusal; compliance action | Government-led; can be rapid | Reputational; ‘name and shame’ | Legal enforcement; moderate penalties |
| Data Cross-Border Risk | Low | Low | High (data export restrictions) | Low | Low |

Key Takeaways: Section 15

China’s AI regulatory environment is more accommodating of novel methods but introduces data portability risk that can prevent Chinese-generated AI evidence from being used in U.S. or EU submissions. Japan’s AI Promotion Act creates a soft-law, innovation-first framework with no punitive enforcement, making sponsor-PMDA consultation the primary alignment mechanism. South Korea’s AI Framework Act creates legally binding obligations for high-impact AI in healthcare, aligning substantively with the FDA and EMA frameworks but enforced through a distinct legal mechanism.

16. Wet Lab Validation as IP Evidence: The In Silico-to-In Vitro Chain of Custody