1. What Biopharma Intelligence Actually Does (and What Most Firms Think It Does)

1.1 The Operational Definition

Biopharmaceutical business intelligence is the systematic conversion of raw industry data into decisions that affect capital allocation, R&D direction, market entry timing, and IP strategy. That definition sounds obvious. In practice, the function inside most organizations is something narrower and messier: a mix of periodic consultant reports, manually curated competitor trackers in shared spreadsheets, alert emails from database subscriptions that nobody has time to read, and occasional deep dives commissioned when a specific crisis demands them.

The gap between what intelligence could do and what it typically does inside a mid-sized biopharma company is the commercial premise of the entire direct data platform category. Understanding that gap requires a clear taxonomy of intelligence types and a frank assessment of where each type currently fails.

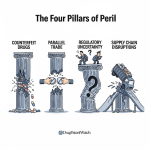

Competitive intelligence, as it operates in biopharma, covers four distinct analytical domains. Competitor pipeline surveillance tracks what compounds rivals are advancing, at what clinical phase, in which indications, and against what targets. Patent landscape analysis maps the IP barriers to market entry and identifies the specific claim structures that must be designed around, challenged, or licensed. Regulatory signal monitoring tracks FDA and EMA guidance publications, advisory committee decisions, approval actions, and enforcement activity that affects the commercial environment. Market access intelligence models pricing trajectories, formulary positioning, and reimbursement patterns across payer segments and geographies.

Each of these domains historically required a different data source, a different specialist, and a different analytical workflow. That fragmentation is the fundamental inefficiency that integrated raw data platforms are designed to collapse.

1.2 The Strategic Imperative Is Financial, Not Philosophical

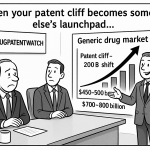

Drug development economics establish the baseline for why intelligence quality matters at a quantitative level. Bringing a new molecular entity to U.S. approval currently requires, by most estimates, 10-15 years and an all-in capitalized cost of $2-3 billion when development failures are absorbed into the cost of successful programs. Clinical failure rates across all phases run at approximately 90%. The average Phase III failure in a large indication costs $300-500 million in direct trial expenditure. Against that economic backdrop, an intelligence function that improves target selection by 5 percentage points, accelerates an IND filing by six months, or identifies a competitor’s Phase III read-out two weeks before market consensus is not a marginal operational improvement. It has a calculable financial value that, in most competitive intelligence budget discussions, never gets properly modeled.

The companies that treat intelligence as a cost center with headcount constraints are, in effect, subsidizing their own risk exposure. The argument for investing in data infrastructure and internal analytical capability is the same argument for investing in Phase I screening assays: the cost of upstream rigor is paid back many times over by the reduction in expensive late-stage failures.

Key Takeaways: Section 1

- Biopharma intelligence in most organizations is operationally fragmented across four analytical domains, each running on different data sources and workflows. The case for integrated platforms starts with that fragmentation cost.

- At $2-3 billion per approved new molecular entity and 90% clinical failure rates, even marginal improvements in intelligence quality have financial returns that dwarf the cost of the infrastructure required to deliver them.

- The gap between what intelligence could do and what it currently does inside most firms is the market opportunity that direct raw data platforms are filling.

2. The Cost Structure of Traditional Analyst-Mediated Intelligence: A Forensic Look

2.1 The Intermediary Model and Its Structural Inefficiencies

The traditional biopharma intelligence model routes analytical work through external intermediaries: consulting firms, market research agencies, and specialized data analysis boutiques. A company seeking a competitive landscape assessment of the IL-17 inhibitor space in psoriasis, for example, would commission a report, wait 6-12 weeks, receive a document with a publication date that is already 4-8 weeks behind the actual data collection, and pay somewhere between $50,000 and $250,000 depending on the depth of analysis and the vendor.

The financial structure of that engagement has three embedded inefficiencies. First, the time-to-insight gap. Clinical data moves fast: a Phase III read-out, an unexpected competitor discontinuation, or a new FDA Complete Response Letter can shift the competitive calculus within a single trading day. A report commissioned before that event and delivered after it is partially obsolete at the moment of delivery. Second, the generalization problem. Consulting reports are written to an assumed audience, not to the specific decision facing a specific strategy team. The analysis of the IL-17 inhibitor landscape will cover Novartis’s Cosentyx (secukinumab), Eli Lilly’s Taltz (ixekizumab), and perhaps UCB’s Bimzelx (bimekizumab), but it will not be calibrated to the particular IP position of the reader’s own molecule, the specific formulary dynamics in the reader’s launch geography, or the reader’s internal clinical package constraints. Third, the proprietary data lock-in. When the engagement ends, the client receives the output document but not the underlying data, not the analytical methodology, and not the capability to update the analysis as conditions change. The next question requires a new commission.

2.2 The Silo Problem Inside Organizations

The external intermediary problem is compounded by an internal one. Most large biopharma companies operate with intelligence functions distributed across R&D (pipeline intelligence), IP/legal (patent analytics), commercial (market intelligence), regulatory affairs (regulatory tracking), and medical affairs (clinical evidence monitoring). These functions rarely share data infrastructure. They operate separate vendor relationships, maintain separate data repositories, and produce outputs in formats that do not integrate with each other.

Survey data from the Zifo 2024 biopharma data management study found that 70% of respondents reported difficulty accessing data for AI initiatives due to siloed systems. A separate finding noted that only 39% of biopharma organizations use standardized data formats and ontologies across functions. These are not technology problems in the first instance. They are organizational design problems that have produced incompatible data architectures, and those incompatible architectures now create a structural ceiling on what any single team can learn from cross-functional data analysis.

The practical consequence is that a competitive intelligence team tracking a rival’s pipeline and a regulatory affairs team tracking that rival’s FDA filing activity are operating with different data, different vocabularies, and different alert thresholds. A filing pattern that would be highly informative to the CI team, for example a cluster of section 505(b)(2) applications targeting a formulation space adjacent to a product in development, may never surface because no one is looking at patent filings and regulatory filings simultaneously in a unified analytical environment.
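The cross-domain check described above can be sketched in a few lines. This is a minimal illustration, assuming two hypothetical record sets (regulatory filings and patent filings) that share an applicant name and a free-text formulation field. Every field name, sample record, and helper (`normalize`, `find_cluster_signals`) is an assumption made for the example, not the schema of any real platform.

```python
from collections import defaultdict

# Hypothetical, hand-written sample records; real feeds carry richer schemas.
regulatory_filings = [
    {"applicant": "Rival Pharma Inc.", "pathway": "505(b)(2)", "formulation": "extended-release tablet"},
    {"applicant": "RIVAL PHARMA INC", "pathway": "505(b)(2)", "formulation": "extended-release tablet"},
    {"applicant": "Other Co", "pathway": "ANDA", "formulation": "oral solution"},
]
patent_filings = [
    {"assignee": "Rival Pharma, Inc.", "formulation": "extended-release tablet"},
    {"assignee": "Third Party LLC", "formulation": "injectable"},
]

LEGAL_SUFFIXES = {"inc", "llc", "co", "corp"}


def normalize(name: str) -> str:
    """Crude name normalization so both teams' records share one vocabulary."""
    cleaned = "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace())
    return " ".join(w for w in cleaned.split() if w not in LEGAL_SUFFIXES)


def find_cluster_signals(reg, pat, min_filings=2):
    """Return (company, formulation) pairs that have at least `min_filings`
    505(b)(2) filings AND at least one patent filing in the same space."""
    counts = defaultdict(int)
    for r in reg:
        if r["pathway"] == "505(b)(2)":
            counts[(normalize(r["applicant"]), r["formulation"])] += 1
    patent_keys = {(normalize(p["assignee"]), p["formulation"]) for p in pat}
    return sorted(k for k, n in counts.items() if n >= min_filings and k in patent_keys)


signals = find_cluster_signals(regulatory_filings, patent_filings)
print(signals)
```

The join only works because both data sets are normalized to the same key first, which is the step that separate team-level repositories typically never perform.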

2.3 The Hidden Cost of Delayed Intelligence

The financial cost of the intermediary model is relatively easy to quantify: vendor spend plus internal labor for briefing and debriefing cycles plus opportunity cost of delayed decisions. The hidden cost is harder to measure but more consequential. It is the cost of decisions made on stale data.

In M&A contexts, stale intelligence is particularly expensive. An acquirer evaluating an asset in an active therapeutic area, working from a competitive landscape report that is four months old, may miss a competitor Phase II read-out that materially changes the asset’s probability of commercial success. In portfolio prioritization decisions, a company continuing to invest in a clinical program that a competitor has already terminated, for reasons visible in public FDA correspondence, has wasted capital on information that was technically accessible but organizationally invisible.

The direct data platform category’s core value proposition is collapsing the time between data generation and decision-relevant insight from weeks to hours. Whether any given platform actually delivers on that proposition depends on the quality of its data aggregation, its analytical tooling, and whether the client organization has built the internal capability to act on what the platform surfaces.

Key Takeaways: Section 2

- Commissioned competitive landscape reports carry a structural time-to-insight gap: a 6-12 week wait from commission to delivery, on top of data that is already 4-8 weeks old at publication, making them partially obsolete at receipt for fast-moving therapeutic areas.

- Internal intelligence fragmentation across R&D, IP, commercial, and regulatory functions prevents cross-domain pattern recognition that could surface material competitive signals. In the Zifo 2024 survey, 70% of respondents reported difficulty accessing data for AI initiatives due to siloed systems.

- The most consequential cost of the traditional model is the opportunity cost of decisions made on stale intelligence: missed M&A timing, continued investment in programs competitors have already abandoned, and delayed response to regulatory shifts.

Investment Strategy: Section 2

Institutional analysts evaluating biopharma companies should ask specifically how the company’s competitive intelligence function is structured. A company with a centralized CI capability running on integrated data infrastructure, able to generate real-time patent, clinical, and regulatory signals, has a structural advantage in pipeline prioritization and M&A targeting that peer companies relying on periodic commissioned reports cannot match. In acquisition targets, the presence or absence of this infrastructure should factor into due diligence as an intangible asset with calculable value.

3. Why Raw Data Platforms Displace Intermediaries: The Architectural Case

3.1 Direct Access and What It Actually Means

‘Direct access to raw data’ is a phrase that gets deployed loosely in vendor marketing. It is worth being precise about what it means and why the distinction from curated data delivery matters for analytical depth.

A curated data delivery model gives the client a processed output: a formatted report, a dashboard with pre-defined metrics, a data table structured to answer a specific question. The processing decisions, including what data to include, how to normalize it, what to exclude, and what analytical framing to apply, are made by the vendor. The client receives the conclusion but not the audit trail that produced it, and cannot interrogate the underlying data for questions the vendor did not anticipate.

A raw data platform gives the client access to source records: FDA Orange Book patent listings, ANDA filing dates and status, clinical trial registry entries, EMA marketing authorization decisions, published bioequivalence study data, published regulatory guidance documents, and similar primary source material. The client’s analytical team applies its own processing, normalization, and interrogation to answer questions the platform vendor may never have contemplated.

The analytical depth difference is not marginal. A team with direct access to Orange Book patent filing dates, patent expiry dates, and concurrent ANDA filing activity can build a proprietary model of first-filer exclusivity windows and revenue-at-risk projections for any target molecule. That model reflects the company’s own IP position, its own competitive read on which Paragraph IV challengers are best positioned to prevail, and its own assumptions about generic erosion curves. No commissioned report can replicate that specificity, because the specificity depends on the client’s internal knowledge combined with the raw public data.
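A minimal sketch of the kind of proprietary model described above, under loudly stated assumptions: the entry date, expiry date, revenue figure, and erosion share are all hypothetical inputs that a team would supply from its own competitive read, and revenue is treated as flat. The only fixed constant is the 180-day first-filer exclusivity period provided under Hatch-Waxman.

```python
from datetime import date, timedelta

FIRST_FILER_EXCLUSIVITY_DAYS = 180  # Hatch-Waxman first-filer exclusivity period


def first_filer_window(assumed_entry: date) -> tuple:
    """Return the (start, end) of the first-filer exclusivity window."""
    return assumed_entry, assumed_entry + timedelta(days=FIRST_FILER_EXCLUSIVITY_DAYS)


def revenue_at_risk(annual_revenue: float, assumed_entry: date,
                    patent_expiry: date, erosion_share: float) -> float:
    """Brand revenue exposed to generic erosion between the assumed entry date
    and nominal patent expiry, with flat revenue and a single erosion share."""
    exposed_days = max((patent_expiry - assumed_entry).days, 0)
    return annual_revenue * erosion_share * exposed_days / 365.0


# Hypothetical scenario: generic entry two years before nominal expiry.
entry = date(2027, 1, 1)
expiry = date(2029, 1, 1)
window_start, window_end = first_filer_window(entry)
at_risk = revenue_at_risk(1_000_000_000, entry, expiry, erosion_share=0.80)
print(window_end, round(at_risk / 1e9, 2))
```

The value of direct data access is that `assumed_entry` and `erosion_share` can be re-estimated whenever Orange Book or ANDA filing data changes, rather than waiting for a refreshed report.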

3.2 The Speed Architecture: Real-Time vs. Periodic

The most commercially valuable feature of direct data platforms in active pharmaceutical markets is update frequency. Platform data that refreshes daily or in near-real-time against regulatory agency databases, clinical trial registries, and patent filing systems allows users to detect competitive signals within hours of their public appearance.

The commercial impact of this speed is most obvious in two contexts. The first is Paragraph IV litigation monitoring: when a generic manufacturer files a new ANDA with a Paragraph IV certification against one of your listed Orange Book patents, the applicant must send a notice letter to the NDA holder and patent owner, and the FDA maintains a public list of drug products for which a Paragraph IV certification has been submitted. A platform user monitoring those sources can identify the new threat within the same business day it becomes visible, giving the innovator company’s legal team the maximum possible time to evaluate a response within the 45-day lawsuit window, which runs from receipt of the notice letter. For a drug with $3 billion in annual U.S. revenues, a 45-day window is not abstract; it is the interval between the current IP defense posture and the beginning of a process that, if uncontested, ends in generic competition.
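To make the 45-day window concrete, here is a small sketch of a deadline tracker. It assumes the window is counted in plain calendar days from a hypothetical notice-receipt date; it does not model weekend or holiday rules, and the function names are illustrative only.

```python
from datetime import date, timedelta

SUIT_WINDOW_DAYS = 45  # the 45-day lawsuit window discussed above


def suit_deadline(notice_received: date) -> date:
    """Last calendar day of the window (simplified: no weekend/holiday rules)."""
    return notice_received + timedelta(days=SUIT_WINDOW_DAYS)


def days_remaining(notice_received: date, today: date) -> int:
    """Days left before the window closes; negative once it has lapsed."""
    return (suit_deadline(notice_received) - today).days


# Hypothetical example dates.
received = date(2025, 3, 3)
deadline = suit_deadline(received)
left_after_one_week = days_remaining(received, date(2025, 3, 10))
print(deadline, left_after_one_week)
```

Every day of detection delay comes straight out of `days_remaining`, which is why same-day visibility matters more here than in most monitoring tasks.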

The second context is clinical trial intelligence. ClinicalTrials.gov entries are updated by sponsors with varying regularity, but material changes, including primary completion date changes, status changes from ‘Active, not recruiting’ to ‘Completed,’ and the posting of results data, are searchable immediately upon posting. A company tracking a competitor’s Phase III trial in the same indication it is developing can detect a completion posting within hours of it going live, hours ahead of any press release, conference presentation, or analyst report.
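One simple way to detect such changes is to diff successive snapshots of registry records. The sketch below assumes the records have already been downloaded and flattened into dictionaries; the field names (`overall_status`, `primary_completion_date`, `has_results`) and the placeholder identifier `NCT00000000` are assumptions for illustration, not the registry’s actual schema or a real trial.

```python
# Fields whose changes are treated as material (hypothetical names).
WATCHED_FIELDS = ("overall_status", "primary_completion_date", "has_results")


def diff_snapshots(previous: dict, current: dict) -> list:
    """Compare two {trial_id: record} snapshots and list material changes as
    (trial_id, field, old_value, new_value) tuples."""
    changes = []
    for trial_id, record in current.items():
        old = previous.get(trial_id)
        if old is None:
            changes.append((trial_id, "new_record", None, None))
            continue
        for field in WATCHED_FIELDS:
            if old.get(field) != record.get(field):
                changes.append((trial_id, field, old.get(field), record.get(field)))
    return changes


yesterday = {
    "NCT00000000": {"overall_status": "Active, not recruiting",
                    "primary_completion_date": "2025-06", "has_results": False},
}
today = {
    "NCT00000000": {"overall_status": "Completed",
                    "primary_completion_date": "2025-06", "has_results": False},
}

alerts = diff_snapshots(yesterday, today)
print(alerts)
```

How quickly an alert fires then depends only on how often the snapshots are refreshed.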

3.3 The IP Valuation Layer in Platform Architecture

The IP dimensions of direct data platforms deserve specific treatment because they are the most financially material and the least well covered in standard platform marketing descriptions.

Patent intelligence at the raw data level means access to the complete prosecution history of pharmaceutical patents, the specific claim language of each issued claim, the prior art cited against each claim during examination, the Orange Book listing status, ongoing litigation dockets, inter partes review (IPR) petition filings, and Patent Trial and Appeal Board (PTAB) decisions. The combination of these data elements allows an IP strategy team to do something that no curated market report can do: form a real-time, claim-specific opinion on the litigation vulnerability of a competitor’s patent.

An IPR petition filed against a key compound patent in a top-revenue product, for example, is not just a legal event. It is a signal that a generic manufacturer has made a commercial judgment that the patent is worth attacking, which implies a commercial judgment that the molecule is worth genericizing, which implies an estimate of remaining revenue that justifies the litigation investment. That chain of inference, available within hours of the IPR filing through a real-time patent docket monitoring system, informs the innovator’s IP defense strategy, its lifecycle management planning, and its investor relations framing of exclusivity risk.

Key Takeaways: Section 3

- Raw data access allows client teams to build proprietary analytical models calibrated to their own IP position, competitive context, and internal assumptions, a specificity impossible to replicate in commissioned reports.

- Real-time monitoring of FDA Paragraph IV ANDA filings gives innovator companies maximum response time within the 45-day lawsuit window; for multi-billion revenue products, hours of advance notice have material legal and financial consequences.

- IPR petition filings are commercially actionable competitive signals, not just legal events. Real-time patent docket monitoring converts them into inputs for lifecycle management and investor relations within the same business day they appear.

4. The IP Valuation Layer: Patent Intelligence as a Financial Asset Class

4.1 Calculating the Economic Value of a Pharmaceutical Patent

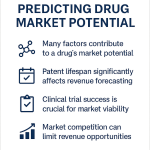

Pharmaceutical patents are financial assets with calculable present values. The standard methodology applies discounted cash flow analysis to the revenue stream protected by the patent, net of the erosion expected from generic entry. A compound patent covering a drug with $4 billion in U.S. annual revenues and five years of remaining exclusivity, assuming an 85% generic price erosion in year one following first generic entry and a 10% discount rate, carries a theoretical IP asset value in the range of $12-16 billion. That figure is sensitive to the assumed generic erosion curve, which itself depends on the number of ANDA filers, the presence of authorized generic competition, and the market’s price elasticity.
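The source does not spell out its calculation, so the following is only one way to arrive at a figure in the quoted range. It assumes flat revenue, year-end cash flows, and no terminal value. The upper bound treats all protected revenue as patent value; the lower bound counts only the 85% share that generic entry would erode. The function name `annuity_pv` is illustrative.

```python
def annuity_pv(annual_cash_flow: float, years: int, discount_rate: float) -> float:
    """Present value of a flat annual cash flow received at each year end."""
    return sum(annual_cash_flow / (1 + discount_rate) ** t
               for t in range(1, years + 1))


ANNUAL_REVENUE = 4.0e9   # $4 billion U.S. annual revenue (from the text)
YEARS = 5                # remaining exclusivity (from the text)
RATE = 0.10              # discount rate (from the text)
EROSION = 0.85           # share of revenue lost to generics (from the text)

# Upper bound: value all protected revenue.
pv_full = annuity_pv(ANNUAL_REVENUE, YEARS, RATE)
# Lower bound: value only the revenue that generic entry would take away.
pv_eroded_share = annuity_pv(ANNUAL_REVENUE * EROSION, YEARS, RATE)

print(f"{pv_full / 1e9:.1f}B, {pv_eroded_share / 1e9:.1f}B")
```

Under these assumptions the two bounds come out at roughly $15.2 billion and $12.9 billion, both inside the $12-16 billion range, and changing `EROSION` or `YEARS` shows directly how sensitive the valuation is to the erosion curve.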

Patent intelligence platforms allow IP teams to stress-test these valuations against current ANDA filing activity. If DrugPatentWatch data shows six substantially complete ANDAs pending for a target molecule, all with Paragraph IV certifications, the generic erosion assumption should reflect rapid multi-competitor entry rather than a two-player exclusivity-period market. That adjustment can move the IP asset valuation by 30-40%. It should be in every investment memo and every licensing negotiation where the patent is a primary asset.

4.2 The Orange Book as a Strategic Intelligence Document

The FDA’s Orange Book lists, for each approved drug product, the patent numbers, expiry dates, and patent codes (whether the patent claims a drug substance, drug product, or method of use) that the NDA holder has certified as relevant to the approved product. Generic manufacturers must certify against every listed patent in an ANDA submission.

For IP strategy teams, the Orange Book is a near-real-time inventory of the IP barriers guarding every major revenue product on the U.S. market. Changes to Orange Book listings, patent additions and deletions by NDA holders, are particularly informative. An NDA holder adding a new Orange Book patent shortly after the first Paragraph IV ANDA filing is a standard defensive move: the new listing forces pending ANDA filers to certify against the new patent and can extend the litigation timeline. Since the 2003 Medicare Modernization Act, however, a patent listed after an ANDA was submitted generally does not trigger an additional 30-month stay against that ANDA; the stay attaches only for later-filed ANDAs. Patent delist requests by brand manufacturers, conversely, sometimes signal a litigation settlement or a strategic withdrawal from an unwinnable IP position.

IP Valuation Sub-Section: Orange Book Listing Timing and Exclusivity Extension Value

The commercial value of an Orange Book listing is not simply the value of the patent itself. It is the value of the 30-month stay that a Paragraph IV filing against the listed patent triggers, combined with the litigation cost burden it imposes on the generic challenger. For a drug generating $500 million per year in U.S. revenues, a 30-month stay, if litigated to its maximum duration, protects approximately $1.25 billion in revenue that would otherwise be subject to generic erosion. Even if the patent is ultimately held invalid, the stay period revenues have already accrued.

This arithmetic explains why NDA holders add continuation patents to the Orange Book late in a product’s lifecycle. The litigation runway value of each new listed patent exceeds the cost of prosecution by an order of magnitude for any drug with substantial remaining revenues. IP teams at generic companies should model the expected stay extension value of each new Orange Book listing added by a target innovator, as this directly affects the economic timing model for any planned Paragraph IV challenge.
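The stay arithmetic above is simple enough to write down directly. The sketch below reproduces the $1.25 billion figure as an upper bound, assuming flat revenue, no discounting, and that all revenue in the stay period would otherwise be exposed; the function name is illustrative.

```python
def stay_protected_revenue(annual_revenue: float, stay_months: int = 30) -> float:
    """Undiscounted revenue accruing during the stay, assuming flat revenue."""
    return annual_revenue * stay_months / 12


protected = stay_protected_revenue(500e6)  # $500 million per year, 30-month stay
print(f"${protected / 1e9:.2f} billion")
```

A generic-side timing model would replace the flat-revenue assumption with its own forecast and apply an erosion share, since generic entry does not remove 100% of brand revenue.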

4.3 IPR Petitions: Strategic Signals and Their Commercial Interpretation

Inter partes review at the Patent Trial and Appeal Board lets any person other than the patent owner challenge the validity of an issued patent on prior art grounds, with a lower evidentiary threshold than district court litigation (preponderance of the evidence rather than clear and convincing evidence) and a much faster timeline (final written decisions are due within 12 months of institution, roughly 18 months after the petition is filed). IPR petitions in the pharmaceutical space are almost exclusively filed by generic manufacturers and biotech companies with commercial interests in the challenged patent’s scope.

Between 2016 and 2025, the pharmaceutical sector saw steady IPR petition volume against Orange Book-listed patents. Petition filing patterns carry strategic content. A pharmaceutical patent receiving multiple IPR petitions from different petitioners in the same month signals that several generic manufacturers have each assessed the patent as vulnerable and commercially worth attacking. Where the petitions appear coordinated, they sometimes indicate a licensing discussion or litigation settlement negotiation in the background, where the petitions provide negotiating leverage.

Monitoring PTAB dockets in real time, available through patent intelligence platforms aggregating PTAB filing data, allows innovator IP teams to identify clustered challenges before they are widely reported and adjust both their litigation strategy and their investor communications accordingly.
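The clustering signal described in this section can be sketched as a simple grouping over docket records. The petition records, patent numbers, and petitioner names below are invented placeholders, and `clustered_challenges` is a hypothetical helper; a real implementation would read from whatever PTAB data feed the platform exposes.

```python
from collections import defaultdict
from datetime import date

# Invented placeholder records, not real PTAB filings.
petitions = [
    {"patent": "US-0000001", "petitioner": "Generic A", "filed": date(2024, 5, 2)},
    {"patent": "US-0000001", "petitioner": "Generic B", "filed": date(2024, 5, 20)},
    {"patent": "US-0000001", "petitioner": "Generic A", "filed": date(2024, 5, 21)},
    {"patent": "US-0000002", "petitioner": "Generic C", "filed": date(2024, 6, 1)},
]


def clustered_challenges(records, min_petitioners=2):
    """Flag (patent, year, month) groups challenged by at least
    `min_petitioners` distinct petitioners in the same calendar month."""
    groups = defaultdict(set)
    for r in records:
        key = (r["patent"], r["filed"].year, r["filed"].month)
        groups[key].add(r["petitioner"])
    return {k: sorted(v) for k, v in groups.items() if len(v) >= min_petitioners}


flags = clustered_challenges(petitions)
print(flags)
```

Counting distinct petitioners rather than raw petitions matters here: one challenger filing several petitions against the same patent is a different signal from several challengers arriving at once.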

Key Takeaways: Section 4

- A compound patent on a product with $4 billion in annual U.S. revenues and five years of remaining exclusivity carries a DCF-based IP asset value of $12-16 billion, with significant sensitivity to generic erosion curve assumptions that ANDA filing surveillance can refine in real time.

- Orange Book listing additions late in a product’s lifecycle carry a litigation runway value that frequently exceeds the cost of patent prosecution by an order of magnitude; generic manufacturers should model stay extension value for each new listing when timing Paragraph IV challenges.

- Coordinated PTAB IPR petition filings against the same pharmaceutical patent are signals of commercial intent from multiple generic manufacturers; real-time PTAB docket monitoring converts them into actionable intelligence within hours of filing.

Investment Strategy: Section 4

Analysts building revenue models for branded pharmaceutical products should incorporate a patent monitoring risk adjustment that accounts for pending IPR petitions and PTAB institution rates. A patent with two or more active IPR petitions at the PTAB, covering claims that the Orange Book lists as protecting the active compound, carries materially higher near-term generic entry risk than a patent with no pending challenges. Institution rates at the PTAB for pharmaceutical patents have historically run at approximately 60-70% of petitioned claims, meaning most petitioned claims proceed to a full trial. Institution is not cancellation, but it requires the Board to find a reasonable likelihood that the petitioner will prevail on at least one challenged claim, so an instituted petition is a material risk signal in its own right.

5. AI, Machine Learning, and Generative AI in Biopharma: Separating Signal From Projection

5.1 What AI Actually Does in a Biopharma Data Context

Before accepting the market projections for AI in pharmaceutical applications (the Global AI in Pharmaceutical market is estimated at $1.94 billion in 2025, accelerating toward $16.49 billion by 2034 at a 27% CAGR), it is worth being precise about what AI is doing in biopharma today versus what the projections assume it will do.
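As a quick sanity check, the quoted growth rate can be recomputed from the two endpoint figures. This sketch assumes nine compounding periods between 2025 and 2034; the helper name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures in $ billions, taken from the projection quoted above.
implied = cagr(1.94, 16.49, 2034 - 2025)
print(f"{implied:.1%}")
```

The implied rate is a little under 27%, so the headline figures are internally consistent after rounding. That says nothing about whether the projection itself is realistic, which is the question the rest of this section addresses.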

Current deployed AI in biopharma intelligence falls into four functional categories with clear technical descriptions. Machine learning classification models, trained on historical clinical trial outcomes, regulatory decisions, and molecular property datasets, predict the probability that a given compound or trial design will succeed or fail against defined criteria. Natural language processing models extract structured information from unstructured text, including published papers, clinical trial protocols, FDA warning letters, earnings call transcripts, and patent claims. Recommendation systems, trained on historical competitive intelligence queries and outcomes, surface relevant data entities (compounds, patents, companies, trials) that an analyst’s explicit query might not have identified. Graph neural networks, increasingly deployed on large knowledge graphs linking genes, targets, compounds, diseases, and clinical outcomes, identify non-obvious connections that inform target selection and combination therapy design.

Each of these is a real, currently deployed capability with documented outcomes. They are not the same thing as generative AI creating novel therapeutic candidates de novo, a capability that exists in prototype form at companies like Insilico Medicine and Recursion Pharmaceuticals but has not yet produced an approved drug from a fully AI-generated starting point.

5.2 The Generative AI Layer: What It Contributes to Intelligence Workflows

Generative AI, specifically large language models (LLMs) and diffusion-based molecular generation models, adds a qualitatively different capability to biopharma intelligence workflows. The distinction worth drawing is between generative AI as an output tool (drafting reports, summarizing literature, generating regulatory document templates) and generative AI as a scientific design tool (proposing novel molecular structures, predicting protein-ligand binding configurations, generating synthetic routes).

In intelligence workflows, the most practically deployed generative AI functions are document synthesis and query-responsive summarization. An LLM trained on regulatory documents, clinical trial reports, and scientific literature can produce a summary of all publicly available data on a specific competitor’s pipeline molecule, calibrated to a specific analytical question, in minutes. That function, while not scientifically novel, eliminates hours of manual literature review and allows analysts to operate at a scope and speed not otherwise achievable.

McKinsey’s 2024 analysis of generative AI in biopharma operations estimated that Gen AI applied to manufacturing deviation documentation and CAPA generation can reduce deviation closure time by 40% and overall deviation incidence by 30-40%. In a GMP manufacturing context where deviation investigations are both legally mandated and operationally expensive, those are not projection numbers. They are outcomes from pilot implementations at facilities where Gen AI has been validated and deployed.

5.3 The ‘AI-First’ vs. ‘AI-Adjacent’ Company Distinction

A 2024 industry analysis noted that ‘AI-first’ biotech firms, those that built their entire R&D model around computational design from inception, integrate AI into drug discovery at approximately 75% penetration of their workflows. Traditional large pharma companies operate at roughly one-fifth that integration rate. That differential is not primarily a technology purchasing question. It is an organizational architecture question.

An AI-first company builds its data infrastructure, hiring profiles, experimental feedback loops, and portfolio construction criteria around computational hypothesis generation. A traditional pharma company adding AI capabilities to an existing organizational structure optimized for wet-lab-driven discovery faces a much harder integration problem. The data generated by legacy processes is often unstructured, inconsistently annotated, and stored in systems that do not interface with modern ML training pipelines. Building the data infrastructure to support AI adoption in that context requires rearchitecting years of accumulated data before any ML model can be trained on it productively.

This organizational reality is why the market projections for AI in biopharma are likely accurate at the directional level, compound annual growth in AI adoption and spending, but may overestimate the near-term impact of AI on discovery productivity for the majority of the industry, which is not AI-first and faces significant data readiness gaps before AI delivers on its projected potential.

Key Takeaways: Section 5

- Deployed AI in biopharma intelligence today centers on four functional categories: classification models for outcome prediction, NLP for unstructured text extraction, recommendation systems for entity surfacing, and graph neural networks for knowledge graph analysis. Each has documented outcomes; none is speculative.

- Generative AI’s most practically deployed function in intelligence workflows is document synthesis and query-responsive summarization, not de novo molecular design. The latter exists in prototype form but has not yet produced an approved drug from a fully AI-generated starting point.

- The ‘AI-first’ vs. ‘AI-adjacent’ organizational distinction is more predictive of AI impact on discovery productivity than technology spending levels. Legacy data infrastructure is the primary bottleneck for most traditional pharma companies attempting to scale AI adoption.

6. Data Architecture for Biopharma Intelligence: Integration, Standardization, and the FAIR Stack

6.1 The Data Readiness Problem Is Structural

The Zifo 2024 survey finding that only 32% of biopharma scientists and informaticians express confidence in their ability to effectively leverage scientific data for AI initiatives is a number worth dwelling on. It means that roughly two-thirds of the people closest to the data, in organizations attempting to implement AI in drug discovery or intelligence workflows, do not trust the data sufficiently to stake scientific conclusions on AI outputs generated from it.

The underlying causes are well understood. Biopharma organizations accumulate data across years and decades in systems that were designed for different purposes at different times. An electronic lab notebook from 2012 stores assay data in a format that bears no relationship to the schema expected by a modern ML training pipeline. A clinical data management system implemented for Phase III trials uses controlled vocabularies that do not map automatically to the gene and target ontologies used by target identification models. A manufacturing execution system generates process parameter data in vendor-proprietary formats that require custom ETL development before any analytical system can consume them.

The result is a collection of data silos that are individually complete and internally consistent but collectively incompatible. Building a unified analytical environment on top of that inheritance requires either a multi-year data migration and rearchitecting program or a semantic harmonization layer that maps disparate data formats to a common ontology without requiring migration. Both approaches are expensive and require sustained organizational commitment that many companies have started and failed to maintain.
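The second option, a semantic harmonization layer, can be illustrated with a toy field-mapping function. This is a sketch under stated assumptions: the source system names, field names, and the `harmonize` helper are all hypothetical, and a production layer would map to a governed ontology rather than a hand-written dictionary.

```python
# Hypothetical per-source maps: source field name -> common schema field name.
FIELD_MAPS = {
    "eln_2012": {"cmpd": "compound_id", "ic50_nm": "ic50_nanomolar"},
    "cdms": {"COMPOUND": "compound_id", "ENDPOINT": "endpoint_name"},
}


def harmonize(source: str, record: dict) -> dict:
    """Project a source record onto the common schema without migrating it.
    Fields with no mapping are kept aside for review instead of being dropped."""
    mapping = FIELD_MAPS[source]
    out = {"_source": source}
    unmapped = {}
    for key, value in record.items():
        if key in mapping:
            out[mapping[key]] = value
        else:
            unmapped[key] = value
    out["_unmapped"] = unmapped
    return out


row = harmonize("eln_2012", {"cmpd": "ABC-123", "ic50_nm": 12.5, "plate": "P7"})
print(row)
```

Keeping `_source` and `_unmapped` on every record preserves provenance and makes the coverage gaps of the mapping visible, which is usually where harmonization programs stall.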

6.2 The FAIR Principles as a Practical Engineering Requirement

The FAIR data principles, Findable, Accessible, Interoperable, Reusable, were formulated in 2016 as a framework for scientific data management. In a biopharma context, they translate to specific engineering requirements that have direct implications for AI and intelligence platform performance.

Findable means that every data asset, a batch record, an assay result, a patent filing, a clinical endpoint measurement, has a persistent unique identifier and descriptive metadata that allows it to be located by a query. In practice, this requires a data catalog that indexes all data assets across all source systems, a common metadata schema, and a search interface. Most large pharma companies have partial data catalogs that cover some systems; very few have comprehensive catalogs covering all data assets including those in legacy systems and external vendor databases.

Accessible means that data can be retrieved under defined authentication and authorization conditions. For biopharma intelligence, this has two dimensions: internal access controls that ensure regulated data (GxP records, clinical data, patient-level data) is accessible only to authorized personnel and systems, and external accessibility to intelligence platforms through documented APIs or data feeds.

Interoperable means that data from one source can be combined with data from another without manual transformation. This is the hardest requirement to satisfy in practice. Interoperability requires that different data sources use the same controlled vocabularies for shared entities, such as compound names (standardized to InChI or CAS numbers), genes (referenced to NCBI Gene IDs), diseases (coded to MedDRA or ICD-10), and clinical outcomes (mapped to a common endpoint ontology).
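The vocabulary mapping described above can be sketched in a few lines. This is a minimal illustration, not a description of any vendor's implementation: the synonym table, the source names (`eln_2012`, `cdms`), and the record fields are all hypothetical, and the identifiers follow the NCBI Gene pattern for illustration only. A real table would be loaded from the curated vocabulary itself.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical synonym table mapping source-specific gene labels to one
# shared identifier. Illustrative only; load from the real vocabulary in practice.
GENE_SYNONYMS = {
    "egfr": "NCBIGene:1956",
    "erbb1": "NCBIGene:1956",
    "her1": "NCBIGene:1956",
    "tp53": "NCBIGene:7157",
    "p53": "NCBIGene:7157",
}


def normalize_gene(label: str) -> Optional[str]:
    """Map a free-text gene label to a shared identifier, or None if unknown."""
    return GENE_SYNONYMS.get(label.strip().lower())


def merge_by_gene(*sources):
    """Group records from several sources under their normalized gene ID."""
    merged = defaultdict(list)
    unmapped = []
    for source_name, records in sources:
        for record in records:
            gene_id = normalize_gene(record["gene"])
            if gene_id is None:
                unmapped.append((source_name, record))  # route to curator review
            else:
                merged[gene_id].append((source_name, record))
    return dict(merged), unmapped


# Two hypothetical source systems that name the same gene differently.
eln = ("eln_2012", [{"gene": "ERBB1", "assay": "kinase"},
                    {"gene": "p53 ", "assay": "reporter"}])
cdms = ("cdms", [{"gene": "EGFR", "endpoint": "ORR"},
                 {"gene": "XYZ9", "endpoint": "PFS"}])

merged, unmapped = merge_by_gene(eln, cdms)
print(sorted(merged))   # shared identifiers found across both systems
print(len(unmapped))    # labels that no vocabulary entry covers
```

The unmapped list matters as much as the merged one: labels that fail to resolve are where manual transformation creeps back in, so tracking their volume is one practical way to measure how interoperable a data estate actually is.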

Reusable means that data generated for one purpose can be applied to other analytical questions without reproducing the original experimental conditions. In drug discovery, reusability requires that assay protocols, reagent lots, instrument calibration records, and statistical analysis methods are documented in sufficient detail to allow a second scientist to interpret the original results and design comparable follow-on experiments.

6.3 AbbVie’s ARCH Platform: A Case Study in Knowledge Graph Architecture

AbbVie’s R&D Convergence Hub (ARCH) platform, connecting more than 200 internal and external data sources and indexing over 2 billion data points, is the most extensively documented large-scale biopharma knowledge graph implementation in the public record. The architectural decisions made in ARCH’s design illuminate the engineering requirements for any organization attempting to build comparable integrated intelligence infrastructure.

The knowledge graph representation chosen for ARCH treats biological entities (genes, proteins, pathways, diseases, compounds) as nodes and their documented relationships (drug-target interactions, disease-gene associations, mechanism-of-action links, clinical outcome correlations) as edges with associated evidence scores and data source provenance. This structure allows the system to identify non-obvious multi-hop relationships: a gene associated with Disease A that shares a regulatory pathway with a gene associated with Disease B that is the mechanism of action for an approved drug in Disease C. That chain of inference can generate a novel target hypothesis for Disease A using a mechanism borrowed from Disease C.
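A toy version of that multi-hop traversal is sketched below. Nothing here reflects ARCH's actual data model or scoring: the entity names, relation labels, and evidence scores are hypothetical, and multiplying edge scores to rank a path is an assumption made for illustration.

```python
from collections import deque

# Hypothetical toy graph: (source, relation, target, evidence score in [0, 1]).
EDGES = [
    ("DiseaseA", "associated_gene", "GeneX", 0.8),
    ("GeneX", "shares_pathway", "GeneY", 0.6),
    ("GeneY", "associated_disease", "DiseaseB", 0.9),
    ("GeneY", "targeted_by", "DrugZ", 0.95),
    ("DrugZ", "approved_for", "DiseaseC", 1.0),
]


def build_adjacency(edges):
    """Index edges by source node for fast neighbor lookup."""
    adjacency = {}
    for src, relation, dst, score in edges:
        adjacency.setdefault(src, []).append((relation, dst, score))
    return adjacency


def find_paths(adjacency, start, goal, max_hops=4):
    """Breadth-first search for simple paths of at most max_hops edges.

    Returns (path, score) pairs, where score is the product of edge
    evidence scores along the path (an illustrative ranking choice).
    """
    paths = []
    queue = deque([(start, [], 1.0, {start})])
    while queue:
        node, path, score, seen = queue.popleft()
        if node == goal and path:
            paths.append((path, score))
            continue
        if len(path) == max_hops:
            continue
        for relation, nxt, edge_score in adjacency.get(node, []):
            if nxt not in seen:  # keep paths simple (no revisited nodes)
                queue.append((nxt, path + [(node, relation, nxt)],
                              score * edge_score, seen | {nxt}))
    return paths


adjacency = build_adjacency(EDGES)
hypotheses = find_paths(adjacency, "DiseaseA", "DrugZ")
for path, score in hypotheses:
    print(" -> ".join(step[0] for step in path) + " -> " + path[-1][2], round(score, 3))
```

Carrying provenance on every edge is what makes a result like this reviewable: a scientist can inspect each hop and its source before treating the chain as a hypothesis worth validating.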

The 200-source data integration in ARCH required custom connectors for each data source, a semantic normalization layer mapping each source’s entity naming conventions to the platform’s unified ontology, and a conflict resolution system for handling cases where two sources disagree on the same fact (for example, different databases reporting different IC50 values for the same compound-target interaction). The practical lesson from ARCH’s architecture is that building a biopharma knowledge graph is not primarily a software engineering problem. It is a knowledge engineering problem that requires domain expertise in biology, chemistry, and regulatory science to design the ontological structure and resolve semantic conflicts correctly.
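One simple way to handle the IC50 disagreement described above is to compute a consensus on a log scale and flag sources that fall outside a fold-change tolerance. The sketch below is an assumption-laden illustration, not ARCH's method: the source names, values, and the three-fold tolerance are hypothetical, and a real rule would need domain input on assay type and conditions.

```python
import math
from statistics import median

# Hypothetical reports of IC50 (nM) for one compound-target pair.
reports = [
    {"source": "db_a", "ic50_nm": 12.0},
    {"source": "db_b", "ic50_nm": 15.0},
    {"source": "db_c", "ic50_nm": 480.0},
]


def resolve_ic50(reports, max_fold=3.0):
    """Return a log-scale median consensus and the sources that disagree.

    A source is flagged when its value differs from the consensus by
    more than max_fold in either direction.
    """
    logs = [math.log10(r["ic50_nm"]) for r in reports]
    consensus_log = median(logs)
    limit = math.log10(max_fold)
    flagged = [r["source"] for r, value in zip(reports, logs)
               if abs(value - consensus_log) > limit]
    return {
        "consensus_nm": 10 ** consensus_log,
        "flagged": flagged,        # kept for curator review, not discarded
        "n_sources": len(reports),
    }


result = resolve_ic50(reports)
print(round(result["consensus_nm"], 1), result["flagged"])
```

Flagged values are retained rather than deleted because a large discrepancy often reflects a different assay format or cell line, which is exactly the kind of judgment that requires chemistry and biology expertise rather than a generic data-cleaning rule.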

IP Valuation Sub-Section: AbbVie’s Data Infrastructure as a Competitive Moat

AbbVie’s ARCH platform represents a multi-year capital investment that has, by management’s account, accelerated target identification timelines for programs including those in the oncology and immunology franchises that followed Humira (adalimumab). The strategic IP implication of this infrastructure is significant: AbbVie’s ability to identify next-generation targets more quickly than competitors translates directly into earlier patent filing opportunities on novel mechanisms.

In the biologic space, where compound patents protect a specific protein sequence rather than a small-molecule scaffold, the ability to identify novel mechanisms before competitors is equivalent to opening new IP space. AbbVie’s post-Humira pipeline, including oncology compounds like navitoclax (a BCL-2/BCL-XL inhibitor) and immunology programs in TL1A, reflects a target identification strategy that ARCH was designed to support. Each successfully prosecuted compound patent on a novel target opens an IP protection window with potential economic value in the multi-billion dollar range for a drug that reaches approval in a major indication.

Key Takeaways: Section 6

- Only 32% of biopharma scientists trust their current data sufficiently to stake AI conclusions on it. This data confidence gap is the primary bottleneck for AI adoption at most organizations, preceding any question of which ML model to deploy.

- FAIR data compliance requires specific engineering decisions on persistent identifiers, metadata schemas, controlled vocabularies, and assay protocol documentation. These are knowable, implementable requirements, not aspirational principles.

- AbbVie’s ARCH knowledge graph architecture demonstrates that multi-source biological data integration is primarily a knowledge engineering problem requiring domain expertise to design ontological structure, not a software problem solvable by buying the right platform.

7. Drug Discovery Acceleration: Quantified Outcomes and Platform Anatomy

7.1 The Discovery Timeline Problem and Where AI Addresses It

A standard drug discovery timeline from target identification to IND filing runs approximately 5-7 years and costs $400-600 million in fully capitalized development expenditure before a single human has received the experimental compound. The components of that timeline, and the specific points where AI-assisted analysis has demonstrated measurable improvements, are worth detailing precisely because the marketing claims around ‘AI-accelerated discovery’ tend toward the vague.

Target identification and validation, the process of identifying a biological mechanism causally involved in a disease and demonstrating that modulating it is pharmacologically feasible, historically required 2-4 years. Computational target identification using machine learning on multi-omic datasets, training models on gene expression data from disease vs. healthy tissue, genome-wide association study findings, protein-protein interaction networks, and known drug-target interaction databases, can compress initial target hypothesis generation from months to weeks. The validation step, confirming that modulating the identified target produces the expected biological effect in disease-relevant models, still requires wet-lab work. But AI-generated target hypotheses, when ranked by multi-omic evidence score, improve the prior probability that a selected target will survive validation. Published analyses suggest AI-assisted target selection reduces preclinical failure rates by 15-25 percentage points in programs where the AI model was trained on relevant biological data.

Hit identification, using high-throughput screening or virtual screening to identify compounds that modulate the target, is where AI has the most mature and most quantified impact. Virtual screening using deep learning models trained on protein structure prediction outputs (AlphaFold2 and its successors), combined with generative molecular design to propose novel scaffolds fitting the binding site, has reduced the number of wet-lab assays required to identify a development-quality hit by 30-50% in multiple documented programs. Recursion Pharmaceuticals reported in 2023 that its phenotypic screening platform using AI-assisted image analysis processes data from 2.2 million compound-assay combinations per week, a throughput that would require orders of magnitude more laboratory capacity using manual analysis.

7.2 The AlphaFold Effect on Structure-Based Drug Design

DeepMind’s AlphaFold2, released publicly in 2021, predicted structures for nearly every protein in the human proteome. The accompanying AlphaFold Protein Structure Database was expanded in 2022 to approximately 200 million predicted structures, covering nearly all catalogued proteins across species. The impact on structure-based drug design in biopharma is not yet fully quantified, but its direction is clear: protein structure has gone from a scarce, experimentally expensive resource to a computationally available commodity for the vast majority of human protein targets.

Before AlphaFold2, obtaining an experimentally determined crystal structure for a novel target required years of structural biology work costing $1-5 million and frequently failed due to expression, crystallization, or diffraction problems. Structure-based drug design was therefore limited to well-characterized target families where structural data was available. AlphaFold2 structures are not equivalent to experimentally determined structures in all respects, particularly for intrinsically disordered regions and binding site conformational flexibility, but they are sufficient for initial virtual screening and scaffold identification in a substantial fraction of target programs.

The competitive intelligence implication is that structure-based drug design, previously a capability available primarily to large pharma companies with dedicated structural biology groups, is now computationally accessible to small biotech companies with standard computational chemistry infrastructure. This has broadened the competitive field for novel target programs and accelerated the competitive intelligence challenge of monitoring who is working on what: more companies can pursue more targets simultaneously than the structural biology bottleneck previously permitted.

7.3 Repurposing Intelligence: Finding Approved Drugs for New Indications

Drug repurposing, identifying new clinical indications for approved drugs or clinical-stage compounds with known safety profiles, has a specific ROI advantage over novel target programs: the toxicity data, manufacturing process, and often the clinical-stage package for the compound already exist. Development timelines from repurposing hit identification to Phase II proof-of-concept can be 2-3 years rather than 10, with development costs of $50-150 million rather than $1-2 billion.

AI-powered repurposing uses knowledge graph analysis to identify approved drugs with mechanism-of-action profiles that match target biology in under-investigated indications. Sildenafil (Viagra/Revatio) and thalidomide are pre-AI examples of compounds discovered to have activity in indications far removed from their original development target. The AI contribution is systematic, high-throughput execution of that hypothesis-generation process across the full approved drug landscape simultaneously.

For IP strategy teams, repurposing creates a specific patent challenge: the compound patent has typically expired or is near expiration, so any IP position must come from method-of-use patents covering the new indication, formulation patents covering optimized delivery for the new clinical context, or dosing regimen patents. Method-of-use patents are Orange Book listable if the FDA approves the new indication on the existing NDA or a new NDA referencing the existing compound’s safety data. This IP architecture requires early and proactive patent strategy work to ensure that the clinical data supporting the new indication generates protectable IP before the indication is approved, not after.

Key Takeaways: Section 7

- AI-assisted target selection reduces preclinical failure rates by 15-25 percentage points relative to traditional selection criteria when the model is trained on relevant multi-omic data. The downstream cost saving in Phase I and Phase II attrition is measurable and large.

- AlphaFold2’s release of approximately 200 million predicted protein structures has structurally shifted the competitive dynamics of structure-based drug design, enabling small biotech companies to pursue target programs that previously required large-pharma structural biology infrastructure.

- Repurposing intelligence requires a specific IP strategy: method-of-use and formulation patents must be prosecuted proactively before indication approval to ensure Orange Book listable protection exists at market launch.

8. Clinical Trial Intelligence: Patient-Level Data, EHR Integration, and Adaptive Design

8.1 The Trial Failure Cost and Intelligence’s Role in Reducing It

Phase III clinical trial failures are the single largest avoidable cost in pharmaceutical development. A Phase III failure in a large indication, after enrollment of several thousand patients over 3-5 years, typically consumes $300-500 million in direct trial costs and writes off the cumulative R&D investment in the program reaching that point. The root causes of Phase III failures fall into four categories: wrong patient population (the mechanism works but not in the enrolled patient mix), wrong dosing regimen, wrong primary endpoint, and wrong assumption about the clinical relevance of the mechanism in the disease state. All four are, in principle, addressable with better pre-clinical intelligence and better Phase II design.

Patient population selection is where clinical trial intelligence applied to EHR and real-world data has the most direct impact. The question a sponsor needs to answer before Phase III enrollment begins is: what is the precise clinical and molecular profile of the patients most likely to respond to this mechanism? That question requires access to real-world patient data describing treatment patterns, disease severity markers, biomarker distributions, and prior treatment history in the target indication. Electronic health records, stripped of identifying information and aggregated at scale, contain the raw material for that analysis. The problem historically was that EHR data was fragmented across health systems, structured in inconsistent formats, and not accessible to drug developers at the granularity needed for this type of analysis.

Platforms aggregating de-identified EHR data and claims data, including IQVIA’s real-world data platforms and Komodo Health, have partially addressed this problem by standardizing and centralizing patient-level data at the scale of hundreds of millions of patient records. The analytical application is direct: before Phase III protocol finalization, a biostatistics team can run cohort analyses on the aggregated EHR data to identify which patient subgroups showed outcomes most consistent with the proposed mechanism, refine the inclusion and exclusion criteria, and estimate the enrollment feasibility of the trial with the refined criteria in the geographies under consideration.

8.2 AI-Assisted Patient Recruitment and Site Selection

Trial recruitment is one of the most consistently underestimated operational challenges in clinical development. Roughly 85% of clinical trials fail to meet their original enrollment timelines, with an average delay of approximately 18 months for Phase III studies. That 18-month delay, at a fully loaded Phase III trial cost of $30-50 million per month for a large indication, translates directly into $540 million to $900 million in additional cost per delayed program.
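The delay-cost arithmetic above is simple enough to write down, which also makes it easy to rerun for shorter or longer delays. The function name and the alternative delay lengths below are illustrative; only the 18-month delay and the $30-50 million monthly range come from the text.

```python
def delay_cost_range(delay_months, monthly_low_musd, monthly_high_musd):
    """Return the (low, high) added cost in $ millions for a given delay."""
    return delay_months * monthly_low_musd, delay_months * monthly_high_musd


# Figures from the text: 18-month delay at $30-50 million per month.
headline = delay_cost_range(18, 30, 50)
print(headline)  # (540, 900)

# Sensitivity to delay length (6 and 12 months are illustrative inputs).
for months in (6, 12, 18):
    low, high = delay_cost_range(months, 30, 50)
    print(f"{months:>2} months: ${low}-{high} million")
```

Because the relationship is linear, every month of delay avoided is worth the full monthly run rate, which is why modest improvements in enrollment speed carry such large financial weight.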

AI-assisted site selection uses historical trial performance data, including enrollment rates, patient retention rates, protocol deviation rates, and investigator experience profiles, to predict which clinical sites are most likely to enroll the target patient population efficiently. Models trained on historical site performance data from thousands of previous trials can identify, for example, that the patient population most likely to meet a specific biomarker enrichment criterion is disproportionately concentrated in academic medical center hematology practices in the southeastern United States, where investigator networks have historically enrolled quickly in similar therapeutic areas.

Patient identification tools using NLP, including TrialGPT from the National Institutes of Health, apply natural language processing to EHR records to identify eligible patients meeting complex inclusion and exclusion criteria without requiring manual chart review. Published validation studies on TrialGPT showed that the model correctly identified eligible patients with sensitivity comparable to experienced clinical coordinators, at a fraction of the time per chart.

8.3 Adaptive Trial Design: Intelligence Infrastructure Requirements

Adaptive trial designs allow pre-specified modifications to ongoing trial protocols based on accumulating interim data, including sample size re-estimation, dose selection among multiple arms, and population enrichment based on biomarker data. The FDA’s 2019 Adaptive Designs for Clinical Trials of Drugs and Biologics guidance validated adaptive design for a broad range of applications. Adaptive designs have the potential to reduce trial duration by 10-20% and sample size requirements by 15-30% relative to fixed designs, while maintaining statistical validity.

The intelligence infrastructure requirement for an adaptive trial is substantially more demanding than for a fixed design. Adaptive analyses require a pre-specified statistical analysis plan, a blinded independent data monitoring committee, a database capable of producing clean interim datasets on a defined schedule, and an operational infrastructure for implementing protocol modifications without compromising trial integrity. Each interim analysis requires a near-real-time data cut from the trial database, which requires that data collection, entry, and cleaning processes operate on a shorter cycle than is standard in most traditional trial management operations.

Key Takeaways: Section 8

- The average Phase III trial delay of 18 months, at $30-50 million per month in fully loaded costs for large indication trials, makes recruitment intelligence one of the highest-ROI intelligence investments a drug developer can make.

- AI-assisted site selection and patient identification using NLP on EHR records can reduce enrollment delays by 20-40% in well-executed programs. Applied to the $540-900 million cost of an 18-month delay, that represents roughly $110-360 million in avoided delay costs per program in large indication trials.

- Adaptive trial designs require a data collection and management infrastructure that operates on a materially shorter cycle than traditional trial management systems. Implementing adaptive designs without that infrastructure upgrade creates operational risk that can invalidate the statistical integrity of the adaptation decisions.

Investment Strategy: Section 8

When evaluating clinical-stage biopharma companies, analysts should ask specifically about the data infrastructure supporting ongoing Phase III programs: whether patient selection criteria were informed by EHR cohort analysis, whether the trial management system supports adaptive modification if an interim analysis warrants it, and whether site selection used predictive performance modeling. Companies running Phase III trials with these infrastructure elements have lower probability of enrollment delays and population selection failures than peers running standard fixed designs with traditional site selection methods.

9. Commercial Intelligence Infrastructure: Market Access, LoE Forecasting, and Pricing Signal

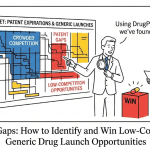

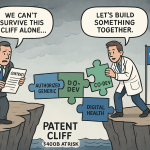

9.1 Loss of Exclusivity Forecasting as a Financial Planning Instrument

Loss of Exclusivity (LoE) modeling is the commercial intelligence function with the most direct and quantifiable financial impact for both innovator companies defending revenues and generic companies targeting entry opportunities. For an innovator company, LoE forecasting informs lifecycle management investment decisions: whether to develop a next-generation formulation, pursue a new indication, or invest in an authorized generic strategy to manage the revenue erosion curve.

The LoE forecast for any specific drug is a composite of four variables, each with its own uncertainty distribution. The primary patent expiry date is the most certain variable, available directly from patent filing data with the uncertainty limited to potential Patent Term Extension (PTE) or Pediatric Exclusivity additions. The ANDA filing landscape, specifically the number of active ANDAs, their current FDA review status, and whether any have first-filer Paragraph IV exclusivity implications, determines how many generic competitors will enter simultaneously at patent expiration. The litigation status of any outstanding Paragraph IV challenges, including the probability that litigation will run to judgment or settle, determines whether the formal patent expiry date or an earlier litigation-negotiated date drives market entry. The authorized generic decision by the NDA holder, which can be made and implemented within months of patent expiry, determines whether the first-filer exclusivity period produces a two-player or three-player market.

IQVIA’s MIDAS platform is the market standard for prescription-level sales data in commercial intelligence, aggregating monthly prescription volume and dollar value by product, geography, prescriber specialty, and payer type. For LoE forecasting, MIDAS data provides the current revenue base and trend that will be subject to generic erosion, calibrated at the payer segment level. A drug with 70% commercial payer penetration and 30% Medicare penetration will face a different erosion curve than one with inverse payer mix, because Medicare Part D’s formulary dynamics and preferred generic substitution policies differ from commercial payer formulary management practices.

IP Valuation Sub-Section: Quantifying the Authorized Generic Decision

When an innovator company deploys an authorized generic (AG) simultaneously with first-filer generic entry, the Paragraph IV winner’s 180-day exclusivity period generates lower revenue than the same period without AG competition. Academic analysis of AG impact shows approximately 40-50% reduction in first-filer exclusivity period revenues when an AG enters. For a first-filer whose total exclusivity period revenue in a non-AG scenario was projected at $800 million, AG deployment by the innovator reduces that to $400-480 million. This is not marginal; it changes the fundamental ROI calculation for the Paragraph IV litigation investment.
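The effect on the challenger's return can be made concrete with a short calculation. The $800 million base and the 40-50% reduction come from the text; the win probability and litigation cost below are hypothetical inputs chosen only to show how the ROI shifts.

```python
def revenue_after_ag(base_musd, reduction_pct):
    """First-filer exclusivity revenue after an AG cuts it by reduction_pct."""
    return base_musd * (100 - reduction_pct) / 100


def expected_net_value(exclusivity_revenue_musd, win_probability_pct,
                       litigation_cost_musd):
    """Probability-weighted exclusivity revenue minus litigation spend."""
    return (exclusivity_revenue_musd * win_probability_pct / 100
            - litigation_cost_musd)


base = 800  # $ millions, non-AG scenario from the text
with_ag = (revenue_after_ag(base, 50), revenue_after_ag(base, 40))
print(with_ag)  # (400.0, 480.0)

# Hypothetical assumptions: 50% chance of prevailing, $15 million litigation cost.
no_ag_value = expected_net_value(base, 50, 15)
ag_value = expected_net_value(with_ag[0], 50, 15)
print(no_ag_value, ag_value)  # 385.0 185.0
```

Under these illustrative inputs the expected net value roughly halves, which is why the likelihood of an AG launch belongs in the challenger's model before the Paragraph IV certification is filed rather than after.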

For innovator companies, the AG decision involves a different financial calculus: deploying an AG captures 30-40% of the generic market during the exclusivity period, reducing the total revenue loss during that window relative to ceding the entire generic volume to the Paragraph IV winner. The net financial comparison, AG revenue capture vs. brand revenue cannibalization, depends on the specific product’s price-volume dynamics and the specific payer environment. Real-time commercial intelligence on Paragraph IV filing activity, patent litigation status, and current brand revenue trends is the input set required to make this decision with the accuracy the financial stakes demand.

9.2 Pricing Intelligence and Market Access Signal

Pharmaceutical pricing intelligence has become more complex since the passage of the Inflation Reduction Act (IRA) of 2022, which established Medicare drug price negotiation for a defined set of high-expenditure drugs and introduced inflation rebate requirements. The IRA’s Medicare negotiation provisions created a new category of pricing risk for innovator companies: drugs selected for negotiation face a statutory ceiling on the negotiated price, set as a percentage of the non-federal average manufacturer price (non-FAMP) that falls as the drug ages, and the first negotiation cycle produced prices 38-79% below list. Selection criteria favor drugs with high Medicare expenditure and no generic or biosimilar competition.

Intelligence platforms monitoring CMS IRA drug selection criteria in real time, tracking the drugs most likely to be selected in future negotiation cycles based on Medicare expenditure data and exclusivity status, allow innovator companies to model the IRA pricing risk embedded in their future revenue projections. For a drug with $3 billion in annual U.S. Medicare revenues and an IRA selection probability of 40% in year three, the expected revenue impact from negotiated pricing is a material input to the product’s long-term financial plan.
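The expected-value calculation behind that example can be sketched as follows. The $3 billion revenue base and 40% selection probability come from the text; the negotiated price cuts are assumed scenario inputs, since the actual reduction for any given drug is not knowable in advance.

```python
def expected_ira_impact(medicare_revenue_musd, selection_probability_pct,
                        assumed_price_cut_pct):
    """Probability-weighted annual revenue loss in $ millions.

    Treats volume as unchanged after negotiation, a simplifying assumption.
    """
    return (medicare_revenue_musd * selection_probability_pct
            * assumed_price_cut_pct / 10_000)


revenue = 3000      # $ millions of annual U.S. Medicare revenue (from the text)
probability = 40    # % chance of selection in year three (from the text)

# Hypothetical price-cut scenarios; not forecasts for any specific product.
scenarios = {cut: expected_ira_impact(revenue, probability, cut)
             for cut in (40, 50, 60)}
print(scenarios)  # {40: 480.0, 50: 600.0, 60: 720.0}
```

Even at the lowest assumed cut, the probability-weighted exposure is several hundred million dollars a year, which is the sense in which the selection probability is a material planning input rather than a tail risk.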

Key Takeaways: Section 9

- LoE forecasting requires a composite of four variables, each with separate uncertainty distributions: primary patent expiry, ANDA filing landscape, litigation status, and authorized generic decision. Commercial intelligence platforms that integrate data on all four provide substantially more reliable forecasts than approaches modeling each variable independently.

- The IRA’s Medicare negotiation mechanism, selecting drugs with high Medicare expenditure and no generic competition, has created a new category of pricing risk that intelligence platforms must track in real time to inform long-term revenue projections.

- The authorized generic decision, with approximately 40-50% impact on first-filer exclusivity revenues, is one of the highest-stakes decisions in generic pharmaceutical market entry strategy. It requires real-time commercial, IP, and litigation intelligence to model correctly.

10. Manufacturing Intelligence: Predictive Quality and Gen AI on the Shop Floor

10.1 Why Manufacturing Is an Intelligence Problem

Pharmaceutical manufacturing is typically framed as an operations problem with a quality compliance component. It is more accurately framed as a data problem whose consequences happen to include regulatory enforcement and patient safety events. Most manufacturing quality failures, from dissolution anomalies to environmental monitoring excursions, generate data signatures in process parameter records, environmental monitoring logs, and laboratory systems before they manifest as Out-of-Specification results or visible contamination events. The gap between the data signature appearing and the quality event being detected is the window in which manufacturing intelligence can convert a reactive investigation into a proactive intervention.

FDA Form 483 observations and Warning Letters consistently cite inadequate root cause investigation of OOS results and inadequate CAPA validation. Both of those findings reflect an intelligence failure: the organization’s analytical processes are not detecting the causal patterns in its process data before they repeat. A predictive quality model trained on historical process parameter data, environmental monitoring data, and OOS result records for a specific product can identify the multivariate parameter conditions that historically precede OOS results, with sufficient predictive precision to trigger an investigation before the OOS event occurs.
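A deliberately simplified version of such a model is sketched below. It scores a batch by summing squared z-scores against historical in-specification batches, which ignores correlations between parameters; a production model would more likely use a covariance-aware method. The parameter names, values, and threshold are all hypothetical.

```python
from statistics import mean, stdev

# Hypothetical process parameters recorded for historical in-spec batches.
history = {
    "granulation_moisture_pct": [2.1, 2.0, 2.2, 1.9, 2.0, 2.1],
    "compression_force_kn": [14.8, 15.1, 15.0, 14.9, 15.2, 15.0],
}


def fit_baseline(history):
    """Estimate the mean and sample standard deviation of each parameter."""
    return {name: (mean(values), stdev(values))
            for name, values in history.items()}


def risk_score(batch, baseline):
    """Sum of squared z-scores across parameters (treats them as independent)."""
    return sum(((batch[name] - mu) / sd) ** 2
               for name, (mu, sd) in baseline.items())


def needs_investigation(batch, baseline, threshold=9.0):
    """Flag a batch whose combined deviation exceeds an assumed threshold."""
    return risk_score(batch, baseline) > threshold


baseline = fit_baseline(history)
typical = {"granulation_moisture_pct": 2.05, "compression_force_kn": 15.0}
drifting = {"granulation_moisture_pct": 2.6, "compression_force_kn": 15.6}

print(needs_investigation(typical, baseline))   # False
print(needs_investigation(drifting, baseline))  # True
```

The value of even a crude score like this is timing: the drifting batch is flagged from in-process data, before any laboratory result has been generated.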

10.2 Gen AI in CAPA Documentation and Deviation Management

McKinsey’s 2024 analysis of Gen AI in biopharma operations documented specific measurable outcomes from Gen AI deployment in deviation and CAPA management: a 30-40% reduction in deviation incidence (through predictive detection of conditions likely to produce deviations) and a 40% reduction in deviation closure time (through AI-assisted root cause identification and CAPA documentation drafting).

The deviation closure time reduction is operationally significant beyond its own direct metric. Pharmaceutical manufacturing regulations (21 CFR 211.192 and EU GMP equivalent) require thorough, documented investigation of unexplained discrepancies and OOS results, site procedures set timelines for closing those investigations, and product release decisions must be based on completed investigations. A batch sitting in investigation limbo ties up capital and delays revenue recognition. For a batch worth $5-10 million in manufacturing value, reducing investigation closure time from 60 days to 36 days (a 40% reduction) accelerates working capital release by 24 days, roughly three and a half weeks, and reduces the regulatory exposure from incomplete investigations that might trigger inspection findings.
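The working capital effect can be estimated with two short functions. The 60-day baseline, 40% reduction, and $10 million batch value come from the text; the 10% annual cost of capital is a hypothetical input, and the calculation ignores revenue timing effects.

```python
def days_saved(baseline_days, reduction_pct):
    """Days removed from investigation closure by a percentage reduction."""
    return baseline_days * reduction_pct / 100


def carrying_cost_saved_usd(batch_value_usd, annual_capital_rate_pct, days):
    """Cost of capital avoided by releasing a batch earlier."""
    return batch_value_usd * annual_capital_rate_pct / 100 * days / 365


saved = days_saved(60, 40)
print(saved, round(saved / 7, 1))  # 24.0 3.4

# Hypothetical 10% annual cost of capital on a $10 million batch.
per_batch = carrying_cost_saved_usd(10_000_000, 10, saved)
print(round(per_batch))  # 65753
```

The per-batch carrying cost is modest on its own; the figure becomes meaningful when multiplied across every batch held in investigation during a year, and when earlier release also pulls revenue forward.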

Gen AI’s specific contribution to this process is in two functions. First, deviation classification: an LLM trained on historical deviations from the same manufacturing site can classify a new deviation record against historical patterns, identify the most similar precedent cases, and surface the root cause determinations and CAPA approaches that resolved those precedent cases. Second, documentation drafting: CAPA documentation requires specific structure, specific regulatory language, and specific cross-references to the process validation data and GMP procedures relevant to the deviation type. An LLM that has learned the documentation standards from a site’s historical CAPA records can draft a compliant CAPA document from a structured description of the deviation, reducing the time a quality engineer spends on documentation from hours to minutes.
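The precedent-retrieval step can be illustrated without a language model at all. The sketch below substitutes token-overlap (Jaccard) similarity for LLM-based classification, purely to show the shape of the workflow; the deviation records, identifiers, and root causes are hypothetical.

```python
import re


def tokens(text):
    """Lowercase word and number tokens from a deviation description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    """Share of tokens two descriptions have in common."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


# Hypothetical historical deviations from one site.
HISTORY = [
    {"id": "DEV-001",
     "text": "Tablet hardness below limit after compression force drift",
     "root_cause": "worn punch tooling"},
    {"id": "DEV-002",
     "text": "Environmental monitoring excursion in Grade B filling room",
     "root_cause": "HVAC filter breach"},
    {"id": "DEV-003",
     "text": "Dissolution failure at 30 minutes for coated tablet lot",
     "root_cause": "coating weight gain high"},
]


def similar_precedents(new_text, history, top_k=2):
    """Rank historical deviations by similarity to a new record."""
    ranked = sorted(history, key=lambda d: jaccard(new_text, d["text"]),
                    reverse=True)
    return ranked[:top_k]


new_deviation = "Compression force drift observed, tablet hardness low"
matches = similar_precedents(new_deviation, HISTORY)
print([(m["id"], m["root_cause"]) for m in matches])
```

An LLM or embedding model replaces the similarity function with something that understands paraphrase and context, but the surrounding structure (retrieve precedents, surface their root causes and CAPAs, hand the result to a quality engineer) stays the same.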

Key Takeaways: Section 10

- Most manufacturing quality failures generate detectable data signatures in process parameter records and environmental monitoring logs before they manifest as OOS results. Predictive quality models trained on historical process and quality data can convert reactive investigations into proactive interventions.

- Gen AI’s documented contributions to deviation management (30-40% deviation incidence reduction, 40% closure time reduction) translate into measurable working capital improvements and reduced regulatory exposure, not just efficiency metrics.

- Deviation closure time improvement has a direct financial value: for a $5-10 million batch in investigation, reducing closure time by 3-4 weeks accelerates working capital release by a calculable amount. Manufacturing intelligence investment should be evaluated against this specific financial return.

11. The Regulatory Intelligence Layer: FDA Guidance Monitoring and Submission Risk Modeling

11.1 Why Regulatory Intelligence Is Different From Regulatory Compliance

Regulatory compliance is adherence to current requirements. Regulatory intelligence is tracking how those requirements are changing and what the implications of change are for pipeline and commercial decisions. The distinction matters because FDA guidance documents, advisory committee meeting agendas, and informal agency communications at scientific conferences contain forward signals about where regulatory expectations are moving, often 12-24 months before those signals become formal requirements.

FDA guidance documents are published in draft form for a public comment period before finalization. The draft-to-final timeline ranges from 6 months to several years depending on the complexity and controversy of the guidance topic. During the draft period, the agency is signaling its current thinking without imposing binding requirements. Companies that read and respond to draft guidances, and that adjust their development programs to align with the direction of draft guidance before finalization, consistently perform better in ANDA and NDA review cycles than companies that wait for final guidance to implement changes.

The FDA’s Office of Generic Drugs publishes product-specific guidances (PSGs) for complex generics that define the evidence package required for ANDA approval. PSG publication is a forward signal of competitive entry pressure on the corresponding branded product: PSG publication indicates the FDA has developed a regulatory pathway for generic development, which in turn indicates the agency expects ANDA submissions to follow. Monitoring PSG publication activity in real time, as regulatory intelligence platforms do, allows brand companies to anticipate where generic pressure is coming from and allows generic companies to identify which complex products have just become technically approachable.

11.2 FDA’s 2025 AI Draft Guidance: Implications for Intelligence Platform Compliance

The FDA’s January 2025 Draft AI Regulatory Guidance established a risk-based credibility assessment framework for AI models used in drug and biological product development and regulatory decision-making. The guidance defines ‘credibility’ for an AI model as the degree to which the model’s structure, training data, and validation results support trust in its outputs for a specific use case.

The credibility assessment framework introduces several requirements that have direct implications for how intelligence platforms present AI-generated outputs to regulated users. AI models used to inform regulatory submissions must be validated against a relevant dataset that is independent of the training data. The validation results must be documented in a format sufficient for the FDA to assess the model’s performance in the specific context of use. Model drift, which the guidance defines as ‘susceptibility of model performance to change over time as real-world conditions evolve,’ must be monitored through post-deployment performance surveillance with defined alert thresholds for when retraining is required.
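Post-deployment surveillance with an alert threshold can be as simple as comparing rolling performance against the validation baseline. The sketch below is one possible shape, not a method prescribed by the guidance: the class name, the choice of accuracy as the metric, the window size, and the tolerated drop are all assumptions.

```python
from collections import deque


class DriftMonitor:
    """Rolling-accuracy check against a validation baseline."""

    def __init__(self, baseline_accuracy, max_drop=0.05, window=20):
        self.baseline = baseline_accuracy
        self.max_drop = max_drop
        self.outcomes = deque(maxlen=window)  # most recent adjudicated results

    def record(self, prediction_correct):
        """Log one adjudicated prediction; return True if retraining is indicated."""
        self.outcomes.append(1 if prediction_correct else 0)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough post-deployment data yet
        rolling = sum(self.outcomes) / len(self.outcomes)
        return (self.baseline - rolling) > self.max_drop


monitor = DriftMonitor(baseline_accuracy=0.90, max_drop=0.05, window=20)
alerts = [monitor.record(True) for _ in range(20)]    # stable period
alerts += [monitor.record(False) for _ in range(4)]   # performance degrades
print(alerts[-1])  # True
```

Logging each alert alongside the model version in use at the time gives a company the audit trail it would need to show when performance changed and what was done about it.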

For biopharma companies using AI-generated intelligence outputs to support regulatory submissions, whether in bioequivalence prediction, clinical endpoint selection, or manufacturing process validation, the guidance creates specific documentation requirements for the AI models generating those outputs. Companies that have not implemented model validation, version control, and performance surveillance for their AI tools are operating with an unquantified regulatory risk that will become clearer as the guidance moves toward finalization and the FDA begins assessing AI model credibility in specific submission contexts.

Key Takeaways: Section 11

- FDA product-specific guidance publications for complex generics are commercially actionable signals: publication indicates the agency has defined a technical pathway for generic entry, which precedes a wave of ANDA filings. Real-time PSG monitoring is a competitive early-warning function.

- The FDA’s January 2025 Draft AI Guidance introduces specific model validation, version control, and performance surveillance requirements for AI models used to support regulatory submissions. Companies using AI tools without these governance elements face emerging regulatory risk.

- Draft guidance monitoring (before finalization) allows development programs to align with regulatory direction during the draft period, which can run from 6 months to several years, rather than after the final version is issued. Companies that do so tend to see better review outcomes than those that wait for finalization.

12. Competitive Signal Processing: Pipeline Tracking, Conference Data, and Real-Time ANDA Surveillance

12.1 The Conference Circuit as an Intelligence Source

Scientific and medical conferences represent a category of competitive intelligence that falls between published clinical data and informal rumor. Conference presentations include data from ongoing trials at interim stages, new safety signals observed in post-market surveillance, mechanism-of-action updates that reveal the sponsor’s scientific understanding of their compound, and off-label clinical experience that precedes formal indication expansion filings.

The major conference circuit for each therapeutic area (ASCO for oncology, ASH for hematology, ESC for cardiology, ACR for rheumatology, and their equivalents) concentrates material competitive data into a few high-density information events per year. Companies that have built systems to aggregate, classify, and extract structured information from conference abstracts, posters, and oral presentations within hours of publication have a several-day competitive intelligence advantage over peers working through manual review processes.

NLP models applied to conference abstract text can classify presentations by therapeutic area, compound type, mechanism of action, clinical phase, primary endpoint type, and outcome direction (positive, negative, mixed) in seconds per abstract. For a conference with 10,000 abstracts, automated classification delivers, within hours of abstract book publication, a structured competitive landscape update that would otherwise require weeks of manual analysis.
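
To make the shape of that structured output concrete, here is a deliberately simple sketch. It uses keyword rules as a stand-in for a trained NLP model, which is an assumption made purely for illustration; the cue phrases, the `AbstractLabel` type, and the two labelled dimensions (phase and outcome direction) are hypothetical choices, not a description of any vendor's classifier.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword rules standing in for a trained classifier.
PHASE_PATTERN = re.compile(r"\bphase\s+(1|2|3|I{1,3})\b", re.IGNORECASE)
ROMAN_TO_ARABIC = {"I": "1", "II": "2", "III": "3"}
POSITIVE_CUES = ("met its primary endpoint", "met the primary endpoint",
                 "significantly improved")
NEGATIVE_CUES = ("did not meet", "failed to meet", "no significant difference")


@dataclass
class AbstractLabel:
    phase: str    # "1", "2", "3", or "unknown"
    outcome: str  # "positive", "negative", "mixed", or "unknown"


def classify_abstract(text):
    """Assign a clinical phase and outcome direction to one abstract."""
    match = PHASE_PATTERN.search(text)
    if match:
        raw = match.group(1).upper()
        phase = ROMAN_TO_ARABIC.get(raw, raw)
    else:
        phase = "unknown"

    lowered = text.lower()
    has_positive = any(cue in lowered for cue in POSITIVE_CUES)
    has_negative = any(cue in lowered for cue in NEGATIVE_CUES)
    if has_positive and has_negative:
        outcome = "mixed"
    elif has_positive:
        outcome = "positive"
    elif has_negative:
        outcome = "negative"
    else:
        outcome = "unknown"
    return AbstractLabel(phase=phase, outcome=outcome)


label = classify_abstract(
    "In this phase III trial, the compound significantly improved survival."
)
```

Keyword rules like these miss negation, hedged language, and subgroup-only results, which is exactly where a trained model earns its place. The value of the sketch is the output schema: one structured record per abstract that can be aggregated across an entire conference.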

12.2 Real-Time ANDA Surveillance: The Generic Threat Monitoring Architecture

For innovator companies with branded drugs approaching or past primary patent expiry, real-time ANDA surveillance is the intelligence function most directly connected to near-term revenue risk. The FDA publishes Paragraph IV certification information in its Orange Book updates and on its drug approvals database, but detecting new filings within hours of publication requires automated feed monitoring, not manual database checks.

An effective ANDA surveillance architecture monitors four data streams simultaneously.

First, Orange Book patent status changes: new patent additions by NDA holders (which may trigger new certification requirements for existing ANDA filers), patent deletions (which may reflect settlements or validity concessions), and expiry date changes (which may reflect PTE grants).

Second, FDA ANDA receipt acknowledgments: the FDA publishes weekly updates on new ANDA submissions and their filing dates. These do not identify the Paragraph IV certification status publicly at the moment of receipt, but the combination of filing date, reference listed drug, and applicant identity often allows an inference about likely certification type.

Third, district court complaint filings: Paragraph IV notification triggers a 45-day window during which the patent holder may file suit. District court PACER dockets can be monitored for new complaints citing a specific patent, providing confirmation of both the filing and the patent at issue.

Fourth, PTAB petitions: as discussed in Section 4, IPR petitions against Orange Book-listed patents are signals of generic commercial intent, often filed in coordination with ANDA submissions.
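
One way to picture the four-stream architecture is as a single event model that every feed writes into, so that signals about the same product can be corroborated across streams. The sketch below assumes hypothetical stream names, a hypothetical `Signal` record, and placeholder product identifiers; it does not model how any feed is actually collected. The 45-day figure comes from the litigation window described above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical labels for the four streams described in the text.
STREAMS = ("orange_book", "anda_receipt", "court_complaint", "ptab_petition")


@dataclass(frozen=True)
class Signal:
    stream: str     # one of STREAMS
    product: str    # reference listed drug (placeholder identifier)
    observed: date
    detail: str


def streams_by_product(signals):
    """Map each product to the sorted list of streams that have fired for it."""
    fired = defaultdict(set)
    for signal in signals:
        if signal.stream not in STREAMS:
            raise ValueError(f"unknown stream: {signal.stream}")
        fired[signal.product].add(signal.stream)
    return {product: sorted(streams) for product, streams in fired.items()}


def corroborated_products(summary, minimum_streams=2):
    """Products with signals from at least `minimum_streams` distinct streams."""
    return sorted(p for p, s in summary.items() if len(s) >= minimum_streams)


def suit_window_closes(notice_received):
    """Last day of the 45-day window that follows a Paragraph IV notice."""
    return notice_received + timedelta(days=45)


signals = [
    Signal("anda_receipt", "drug_a", date(2025, 1, 6), "new ANDA listed"),
    Signal("ptab_petition", "drug_a", date(2025, 1, 9), "IPR petition filed"),
    Signal("orange_book", "drug_b", date(2025, 1, 7), "patent added"),
]
summary = streams_by_product(signals)
priority = corroborated_products(summary)
```

Requiring two or more distinct streams before escalating is a design choice, not a rule from the text. It reflects the point that no single stream gives complete coverage, and it keeps single-source noise out of the alert queue.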

12.3 Competitor Pipeline Intelligence: Reading the Clinical Trial Registry

ClinicalTrials.gov is one of the most underutilized publicly available competitive intelligence sources in biopharma. Mandatory registration of most Phase II and III trials of drugs and biologics conducted in the United States, together with widespread voluntary registration of Phase I studies (which generally fall outside the statutory requirement), means that most clinical programs appear on the registry, with periodic updates that reflect enrollment progress, primary completion dates, protocol amendments, and results posting.

Protocol amendments filed with ClinicalTrials.gov reveal a great deal about a competitor’s scientific and regulatory thinking. A protocol amendment adding a biomarker-defined patient subgroup to the primary endpoint analysis, for example, suggests that interim data has shown differential efficacy in the biomarker-positive population and that the sponsor is pivoting to an enriched registration strategy. A protocol amendment extending the primary endpoint assessment period suggests the event rate assumptions in the original design proved optimistic, which is itself a signal about the mechanism’s activity in the enrolled population.

Real-time protocol amendment monitoring, available through ClinicalTrials.gov’s API, allows competitive intelligence teams to detect these strategic pivots within days of the amendment filing, often weeks or months before a conference presentation or press release communicates the change.
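
Detecting an amendment amounts to comparing two versions of the same registry record and reporting which watched fields changed. The sketch below works on already-flattened snapshots and assumes hypothetical field names (`primary_outcome_timeframe`, `eligibility_criteria`, and so on); these are illustrative labels, not the registry API's actual field paths, and the fetching step is omitted.

```python
# Hypothetical flattened field names chosen for illustration.
WATCHED_FIELDS = (
    "primary_completion_date",
    "enrollment_target",
    "primary_outcome_timeframe",
    "eligibility_criteria",
)


def diff_record(previous, current, watched_fields=WATCHED_FIELDS):
    """Return {field: (old_value, new_value)} for watched fields that changed."""
    changes = {}
    for name in watched_fields:
        old_value = previous.get(name)
        new_value = current.get(name)
        if old_value != new_value:
            changes[name] = (old_value, new_value)
    return changes


# Illustrative snapshots of one trial record taken a week apart.
snapshot_before = {
    "primary_completion_date": "2026-03",
    "enrollment_target": 400,
    "primary_outcome_timeframe": "12 months",
    "eligibility_criteria": "adults with condition X",
}
snapshot_after = {
    "primary_completion_date": "2027-03",
    "enrollment_target": 400,
    "primary_outcome_timeframe": "24 months",
    "eligibility_criteria": "adults with condition X, biomarker positive",
}
changes = diff_record(snapshot_before, snapshot_after)
```

Here the diff surfaces both patterns discussed above: a longer primary endpoint assessment period and a biomarker restriction added to eligibility. Interpreting what those changes mean still requires an analyst.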

Key Takeaways: Section 12

- NLP classification of conference abstracts can produce a structured competitive landscape update from a 10,000-abstract conference within hours of abstract book publication. The competitive advantage window over manual review is several days.

- An effective ANDA surveillance architecture monitors four simultaneous data streams: Orange Book patent status changes, FDA ANDA receipt acknowledgments, district court PACER complaint filings, and PTAB petition filings. No single stream provides complete coverage.

- ClinicalTrials.gov protocol amendments are commercially actionable competitive signals that can be detected within days of filing, often weeks or months before press releases or conference presentations. Biomarker enrichment amendments and primary endpoint assessment period extensions both convey specific information about a competitor program’s clinical performance.

13. Ethical and Regulatory Constraints on AI in Biopharma: The 2025 FDA and EMA Frameworks

13.1 The FDA’s January 2025 Draft AI Guidance: Key Technical Requirements

The FDA’s January 2025 draft guidance on the use of AI to support regulatory decision-making for drug and biological products represents the most detailed regulatory statement the agency has produced on AI model requirements. Its core technical framework is worth detailing precisely, because the gap between what it requires and what most deployed biopharma AI systems currently provide is substantial.

The guidance defines a ‘credibility assessment’ as a structured evaluation of whether an AI model is fit for the specific context of use. The credibility assessment must address six elements: the intended use of the model (specifically what decisions it will inform); the training data characteristics, including size, representativeness, and any known biases; the model architecture and its suitability for the data type and task; the validation approach, distinguishing internal validation (on a held-out portion of the training dataset) from external validation (on a dataset with no overlap with training data); performance metrics relevant to the intended use, selected prior to evaluation; and the expected range of conditions under which the model’s outputs are reliable.
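
The six elements listed above lend themselves to a simple completeness check, and the internal-versus-external distinction to an overlap check on record identifiers. The following is a sketch under assumptions: the field names paraphrase the six elements as this section describes them, and neither the `CredibilityAssessment` class nor the identifier scheme comes from the guidance itself.

```python
from dataclasses import dataclass, fields


@dataclass
class CredibilityAssessment:
    """Free-text entries for the six elements (hypothetical field names)."""
    intended_use: str = ""
    training_data: str = ""
    architecture: str = ""
    validation_approach: str = ""
    performance_metrics: str = ""
    reliable_conditions: str = ""

    def missing_elements(self):
        """Names of elements that have not been documented yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


def shared_records(training_ids, validation_ids):
    """Record identifiers that appear in both datasets.

    A non-empty result means the validation set is not external in the
    sense used above (no overlap with training data).
    """
    return sorted(set(training_ids) & set(validation_ids))


assessment = CredibilityAssessment(
    intended_use="Rank formulations by predicted bioequivalence outcome",
    training_data="Historical study results; known gaps documented",
    architecture="Gradient-boosted trees on tabular formulation features",
)
gaps = assessment.missing_elements()
leaked = shared_records(["s1", "s2", "s3"], ["s3", "s4"])
```

A check like this does not establish credibility. It only makes visible which parts of the documentation are still empty and whether a dataset labelled external actually is.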

The guidance’s treatment of model drift is technically specific. Drift monitoring requires defining, prior to deployment, the performance metrics and thresholds that will trigger a retraining or revalidation event. It requires a mechanism for collecting ground-truth outcomes data from deployed model predictions in order to track performance over time. For an AI model predicting bioequivalence study outcomes from formulation characteristics, ground-truth data would come from subsequent bioequivalence study results. The monitoring infrastructure must be operational before the model is used in a regulatory context, not retrofitted after the FDA raises questions in a submission.
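
The drift requirements described above (a metric and threshold fixed before deployment, plus a stream of ground-truth outcomes) can be sketched as a small rolling monitor. This is an illustration under assumptions: accuracy as the metric, a fixed-size window, and the `DriftMonitor` class are all hypothetical choices, not terms from the guidance.

```python
from dataclasses import dataclass, field


@dataclass
class DriftMonitor:
    threshold: float  # minimum acceptable accuracy, fixed before deployment
    window: int       # number of most recent outcomes evaluated together
    outcomes: list = field(default_factory=list)

    def record(self, predicted, observed):
        """Store whether a deployed prediction matched the ground truth."""
        self.outcomes.append(predicted == observed)

    def rolling_accuracy(self):
        """Accuracy over the latest window, or None until the window is full."""
        if len(self.outcomes) < self.window:
            return None
        recent = self.outcomes[-self.window:]
        return sum(recent) / len(recent)

    def needs_revalidation(self):
        """True once rolling accuracy falls below the pre-specified threshold."""
        accuracy = self.rolling_accuracy()
        return accuracy is not None and accuracy < self.threshold


monitor = DriftMonitor(threshold=0.8, window=5)
# Predicted versus observed bioequivalence outcomes (illustrative values).
for predicted, observed in [("pass", "pass"), ("pass", "fail"), ("fail", "fail"),
                            ("pass", "fail"), ("pass", "pass")]:
    monitor.record(predicted, observed)
```

With three of five predictions correct, the rolling accuracy is 0.6 and the monitor flags the model for revalidation. Returning `None` until the window fills avoids raising alerts on too few observations, which matters when ground-truth outcomes arrive slowly.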

13.2 The EMA’s October 2024 Reflection Paper: European Regulatory Positioning

The EMA’s October 2024 Reflection Paper on the Use of Artificial Intelligence (AI) in the Medicinal Product Lifecycle takes a risk-stratified approach to AI oversight. The EMA categorizes AI applications by regulatory impact and patient risk, with higher-risk applications, including those used in clinical trial design, marketing authorization submissions, and pharmacovigilance signal detection, subject to more intensive oversight.

For AI models with ‘high regulatory impact or high patient risk,’ the EMA’s paper indicates that AI model assessment should be incorporated into the regulatory authorization procedure itself: companies should expect assessors to request details of the AI model’s architecture, training data, validation methodology, and performance monitoring approach as part of the review of the clinical or quality data that the model informed.

This represents a significant operational shift for companies using AI in their regulatory programs. Documentation requirements for AI models used in submissions are now a regulatory science question, not merely an internal quality management question. Companies that deploy AI models in their regulatory workflows without comprehensive model cards documenting architecture, training data, validation results, and monitoring procedures are accumulating a submission risk that will manifest as questions or deficiency requests during regulatory review.

13.3 The Algorithmic Bias Problem: Not Just an Ethics Issue

Algorithmic bias in pharmaceutical AI models is frequently discussed in the context of healthcare equity: if a clinical trial patient recruitment model is trained on historical data from trials that underrepresented certain demographic groups, the model will systematically underidentify eligible patients from those groups in future trials. That is a genuine equity problem. It is also a commercial problem.

Phase III trials that enroll populations substantially different from the intended commercial population generate safety and efficacy data that may not generalize accurately to real-world use. If algorithmic bias in patient selection produces a trial population drawn disproportionately from one demographic group, a drug that shows strong efficacy in that trial may show attenuated efficacy in post-market use across a broader population. Safety signals that emerge post-market in demographic groups underrepresented in the trial reflect patient harm and can trigger regulatory responses, including label changes, REMS requirements, and in severe cases, market withdrawal.

For AI model developers, addressing bias requires specific data curation practices: prospective assessment of demographic representation in training datasets, evaluation of model performance across demographic subgroups separately from overall performance metrics, and documentation of known representation gaps in model cards submitted to regulators.
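
Evaluating performance by subgroup, separately from the overall figure, is straightforward to express in code. The sketch below assumes accuracy as the metric and a hypothetical record layout of `(subgroup, predicted, actual)`; the subgroup labels and the tolerance value are placeholders.

```python
from collections import defaultdict


def accuracy_by_subgroup(records):
    """Accuracy per subgroup from (subgroup, predicted, actual) tuples."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for subgroup, predicted, actual in records:
        totals[subgroup] += 1
        correct[subgroup] += int(predicted == actual)
    return {group: correct[group] / totals[group] for group in totals}


def underperforming_subgroups(records, tolerance):
    """Subgroups whose accuracy trails overall accuracy by more than `tolerance`."""
    records = list(records)
    overall = sum(int(p == a) for _, p, a in records) / len(records)
    per_group = accuracy_by_subgroup(records)
    return {g: acc for g, acc in per_group.items() if overall - acc > tolerance}


# Illustrative evaluation set: group_a is well represented, group_b is not.
evaluation = [("group_a", 1, 1)] * 8 + [("group_b", 1, 0), ("group_b", 1, 1)]
per_group = accuracy_by_subgroup(evaluation)
flagged = underperforming_subgroups(evaluation, tolerance=0.1)
```

In this example the overall accuracy of 0.9 hides a subgroup at 0.5, which is the pattern the paragraph above warns about. With only two records in `group_b`, the estimate is also very uncertain, so a real evaluation would report subgroup sample sizes alongside each metric.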

Key Takeaways: Section 13

- The FDA’s January 2025 Draft AI Guidance requires six-element credibility assessments for AI models used in regulatory submissions, including external validation (on data with no overlap with training data), pre-specified performance metrics, and a deployed drift monitoring infrastructure. Most currently deployed biopharma AI systems do not yet meet all six requirements.

- The EMA’s October 2024 Reflection Paper indicates that AI model documentation will be requested as part of marketing authorization review for applications where AI informed clinical or quality data. Companies without model cards documenting architecture, validation, and monitoring for their regulatory AI tools are accumulating submission risk.

- Algorithmic bias in patient selection AI models is both an ethical and a commercial problem: trials that systematically underenroll certain demographic groups generate safety and efficacy data that may not generalize to the full commercial population, creating post-market regulatory risk.

14. Vendor Landscape: Platform Capabilities, Data Coverage, and Fit-for-Purpose Selection

14.1 The Platform Category and Its Segmentation

The biopharma intelligence platform market segments along three functional dimensions: data depth (how granular and comprehensive the underlying data is in a specific domain), analytical tooling (the sophistication of built-in analytical capabilities), and integration architecture (how well the platform connects to other data systems). No current vendor achieves best-in-class performance across all three dimensions for all data domains. Fit-for-purpose selection requires matching the platform’s strengths against the organization’s primary intelligence use cases.