The journey from a molecule in a lab to a medicine in a pharmacy is a decade-long, multi-billion-dollar gamble, with the average capitalized cost to develop a new drug estimated at a staggering $2.6 billion.1 This colossal investment is secured by the promise of market exclusivity, a temporary monopoly granted by a patent that allows innovator companies to recoup their costs and reinvest in the next generation of cures. This fundamental economic engine, an “Innovation-Exclusivity-Reinvestment Cycle,” is the lifeblood of the industry.

But this exclusivity is not guaranteed. It exists under constant siege. On one side are the brand-name manufacturers, defending their intellectual property fortress with every legal tool at their disposal. On the other are the generic and biosimilar drug makers, driven by the equally valid public interest of bringing affordable medicines to market; generics now account for 90% of all prescriptions filled.4 This inherent conflict creates one of the most complex, expensive, and high-stakes legal battlegrounds in the world: pharmaceutical patent litigation.

The cost of a single patent case where more than $25 million is at risk can easily exceed $4 million, and in some analyses, reach a median of $5.5 million through trial and appeal.1 These are not just line items on a budget; they are formidable strategic challenges that divert capital from R&D, spook investors, and can determine the fate of a blockbuster drug. The central conflict is fought over a deceptively simple question: Is the patent valid?

Answering this question has traditionally been a matter of human judgment, legal precedent, and expert intuition—an expensive and profoundly uncertain process. The legal standards themselves, particularly the test for “non-obviousness,” are described as “one of the most difficult determinations in patent law”. This inherent uncertainty, coupled with the staggering financial risk, has created a perfect storm—a multi-billion-dollar problem in desperate search of a solution.

That solution is now arriving, not from a law library, but from the cloud. We are at the dawn of a new era where big data, predictive analytics, and artificial intelligence are being marshaled to demystify the chaos of patent litigation. Can we, by analyzing millions of data points from past cases, court dockets, and patent office records, begin to predict the future? Can algorithms identify the subtle patterns in a judge’s behavior, the hidden weaknesses in a patent’s claims, or the likely outcome of a multi-million-dollar lawsuit before it’s even filed?

This report embarks on a journey from the courtroom to the data center to answer these questions. We will dissect the legal frameworks that define the battlefield, explore the vast data ecosystems that provide the ammunition, and unpack the sophisticated AI models that serve as the new strategic weaponry. For the business leaders, general counsel, and IP strategists aiming to turn data into dominance, this is a guide to the future of legal warfare—a future where the most powerful advocate may just be an algorithm.

Part 1: The High-Stakes Arena of Pharmaceutical Patent Validity

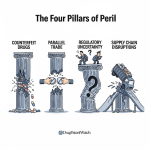

To understand the transformative potential of predictive analytics, one must first appreciate the complex and often perilous landscape of pharmaceutical patent law. The validity of a patent is not a simple binary state but a contested status determined through labyrinthine legal processes, each with its own rules, timelines, and strategic nuances. The entire system—with its parallel, high-cost venues and subjective legal standards—is the primary economic driver creating the market for predictive legal technology. The system’s inefficiency and uncertainty are not bugs; they are the features that make these analytical tools so immensely valuable.

Pillars of Patentability: A Refresher on Novelty and Non-Obviousness

Under U.S. law, for an invention to be worthy of a patent, it must clear several statutory hurdles. While all are important, the battle for pharmaceutical patents is most often fought over the pillar of non-obviousness.

First, the invention must be statutory subject matter. As defined in 35 U.S.C. § 101, this includes any new and useful “process, machine, manufacture, or composition of matter”. While seemingly broad, this requirement has become a significant battleground, particularly after the Supreme Court’s decisions in Mayo and Alice, which made it more difficult to patent diagnostic methods and other inventions deemed to be directed to “abstract ideas” or “laws of nature”.6

Second, the invention must be useful. This means it must have a “specific, substantial, and credible utility”. In the pharmaceutical context, this is a critical requirement; it’s not enough to have a new chemical compound, one must specify a practical use for it, such as treating a particular disease.

Third, the invention must be novel. Codified in 35 U.S.C. § 102, this is the “newness” requirement. An invention cannot be patented if it was already known to the public through prior disclosures, publications, or other patents—collectively known as “prior art”. An invalidity challenge based on novelty, or “anticipation,” argues that a single piece of prior art discloses every element of the claimed invention.6 This is a foundational area for data mining and analysis.

The fourth and most fiercely contested requirement is non-obviousness. If an invention is not identically disclosed in the prior art, it is novel. But to be patentable, it must also be a non-obvious improvement over what came before. Governed by 35 U.S.C. § 103, this standard asks whether the differences between the invention and the prior art would have been obvious “to a person having ordinary skill in the art” (a PHOSITA). For patents governed by the America Invents Act, the question is assessed as of the effective filing date of the claimed invention; for older patents, as of the time the invention was made.6

The framework for this determination was set by the Supreme Court in Graham v. John Deere Co., which established four factual inquiries:

- Determining the scope and content of the prior art.

- Ascertaining the differences between the claimed invention and the prior art.

- Resolving the level of ordinary skill in the pertinent art.

- Evaluating objective evidence of non-obviousness, such as commercial success, long-felt but unsolved needs, and the failure of others (often called “secondary considerations”).

For decades, courts applied a relatively rigid “teaching-suggestion-motivation” (TSM) test, which generally required a piece of prior art to contain an explicit suggestion to combine it with another to render an invention obvious. However, the Supreme Court’s 2007 decision in KSR International Co. v. Teleflex Inc. dramatically changed the landscape. KSR rejected the rigid TSM test in favor of a more flexible, common-sense approach. The Court stated that if a combination of known elements yields only “predictable results,” it would likely be obvious to a PHOSITA to make that combination, even without an explicit suggestion.6

This shift from a rigid test to a flexible, holistic analysis has had a profound, if unintended, consequence. It has inadvertently created a perfect use case for advanced artificial intelligence. The very task that KSR asks of human judges and patent examiners—to identify patterns, predictable variations, and logical connections across vast and disparate datasets of prior art—is precisely what modern machine learning models are designed to do. An AI can process millions of scientific papers and patents, identifying semantic similarities and technological trajectories that no human could possibly retain. In essence, the modern, AI-augmented PHOSITA has a vastly expanded “knowledge base.” An AI can construct a powerful argument for obviousness by showing that combining two references is a predictable step, not because a single paper says so, but because it has identified thousands of analogous conceptual combinations across the entire body of scientific literature. The legal evolution of the obviousness standard has thus created a direct technological demand for AI-powered prior art analysis.

The Gauntlet of Litigation: Navigating ANDA and Hatch-Waxman Disputes

The primary arena for brand-versus-generic patent warfare is defined by the Drug Price Competition and Patent Term Restoration Act of 1984, commonly known as the Hatch-Waxman Act. This landmark legislation created a delicate balance: it provides incentives for innovator companies to conduct risky R&D, while also creating an accelerated pathway for generic manufacturers to bring cheaper alternatives to market once patents expire.5 This framework gives rise to a unique and highly specialized form of litigation.

The process typically begins when a generic company files an Abbreviated New Drug Application (ANDA) with the FDA, seeking approval to market a generic version of a brand-name drug. A critical component of this filing is the generic’s certification regarding the brand’s patents listed in the FDA’s “Orange Book.” The most consequential of these is the Paragraph IV certification, in which the generic company asserts that the brand’s patent is invalid, unenforceable, or will not be infringed by the generic product.

Filing a Paragraph IV certification is a formal challenge, often described as an act of “artificial infringement,” that serves as the starting gun for litigation. The brand-name patent holder is notified and has a crucial 45-day window to respond by filing a patent infringement lawsuit.7 If a suit is filed within this window, it triggers an automatic 30-month stay on the FDA’s approval of the generic drug.9 This stay provides the brand company with a significant period of continued market exclusivity while the litigation unfolds, but it also puts their patent’s validity directly in the crosshairs.
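The two clocks described above can be sketched in a few lines of Python. This is a simplified illustration, not legal advice: it assumes both periods run from the date the Paragraph IV notice is received, and it ignores weekends, holidays, court-ordered adjustments, and special exclusivity rules. The function names and the example date are hypothetical.

```python
import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def hatch_waxman_dates(notice_received: date) -> dict:
    """Simplified Hatch-Waxman clocks (assumption: both run from notice receipt)."""
    return {
        # Last day for the brand to sue and still trigger the stay.
        "suit_deadline": notice_received + timedelta(days=45),
        # End of the automatic 30-month stay on FDA approval.
        "stay_expires": add_months(notice_received, 30),
    }


dates = hatch_waxman_dates(date(2024, 3, 15))  # hypothetical notice date
print(dates["suit_deadline"])  # 2024-04-29
print(dates["stay_expires"])   # 2026-09-15
```

Even a rough calculator like this is useful as a feature generator: the time remaining on the stay is a natural input to any model of settlement timing.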

The financial stakes of this process are immense, making predictive analytics a strategic necessity rather than a luxury. The table below, based on data from the American Intellectual Property Law Association (AIPLA), illustrates the direct costs, which are compounded by significant indirect costs like the diversion of R&D resources and market uncertainty.1

Table 1: The Financial Stakes of Pharmaceutical Patent Litigation

| Amount at Risk | Median Cost Through Discovery & Claim Construction (USD) | Median Total Cost Through Trial & Appeal (USD) | Key Indirect Costs (Qualitative) |
| --- | --- | --- | --- |
| $1 Million – $10 Million | $600,000 | $1,500,000 | Diversion of key personnel; Minor market uncertainty |
| $10 Million – $25 Million | $1,225,000 | $2,700,000 | Significant diversion of scientific and executive time; Stock price volatility |
| More than $25 Million | $2,375,000 | $4,000,000+ | Massive opportunity costs (R&D funds reallocated); Delayed strategic planning; Significant impact on shareholder value |

Data synthesized from AIPLA surveys as cited in industry analyses.1 Total costs for high-stakes pharma cases can exceed these medians, with some estimates placing the total at $5.5 million.

Recent litigation statistics paint a picture of an active and contentious landscape. In 2024, 312 complaints initiating Hatch-Waxman litigation were filed, a notable increase from 259 in the prior year. The overwhelming majority of these cases are concentrated in the District of Delaware and the District of New Jersey, venues whose judges have deep familiarity with the complexities of pharmaceutical patent law.7 In fact, nearly half of all ANDA complaints in 2024 were assigned to just five judges, creating a highly specialized judicial environment.

Analysis of case outcomes in 2024 reveals that while many cases settle (39% of terminated matters), innovator companies tended to prevail more often in cases that reached a decision on the merits (20% innovator win rate vs. 2% generic win rate). When cases went to trial, patents were found to be infringed more often than not, and validity was upheld more frequently than it was struck down. For those patents that were invalidated at trial, the most common ground was obviousness, reinforcing its status as the central battleground of patent validity. These trends provide a rich statistical backdrop for building and validating predictive models.
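A quick worked calculation shows why the headline percentages need careful reading. The sketch below assumes, as one plausible interpretation, that the 39%, 20%, and 2% figures are all shares of the same pool of terminated matters; the variable names are illustrative.

```python
# Shares of terminated 2024 matters, as quoted in the text above.
settled = 0.39
innovator_win = 0.20
generic_win = 0.02

# Matters that actually reached a decision on the merits.
decided_on_merits = innovator_win + generic_win

# Innovator share among merits decisions only.
innovator_share_on_merits = innovator_win / decided_on_merits

# Everything else (dismissals, transfers, and so on), under this reading.
other_terminations = 1 - settled - decided_on_merits

print(f"{innovator_share_on_merits:.0%}")  # 91%
print(f"{other_terminations:.0%}")         # 39%
```

Under this reading, innovators win roughly nine of every ten merits decisions, but merits decisions are only about a fifth of all terminations, so a model trained on decided cases alone sees a small and unrepresentative slice of disputes.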

The PTAB Battleground: Inter Partes Review (IPR) as a Strategic Lever

In addition to district court litigation, a second major front for patent validity challenges exists at the U.S. Patent and Trademark Office (USPTO) itself. The America Invents Act (AIA) of 2011 established the Patent Trial and Appeal Board (PTAB) and created a new trial-like proceeding called Inter Partes Review (IPR).4

An IPR allows a third party to petition the PTAB for a “second look” at a patent’s validity, but only on the grounds of novelty and obviousness based on prior art consisting of patents and printed publications. The process was designed to be a faster, more efficient, and less expensive alternative to federal court litigation: the PTAB must issue its final written decision within 12 months of institution (extendable by up to six months for good cause), which typically puts the whole proceeding at roughly 18 months from petition to decision.

The strategic use of IPRs has become a cornerstone of modern patent conflict. For generic manufacturers, it is a powerful offensive weapon. They can use it to challenge a competitor’s patents to clear a path for their own products. Conversely, if a brand company sues a generic for infringement in district court, the generic can file a parallel IPR petition as a defensive maneuver, effectively saying, “Before we argue about infringement, let’s have the patent experts at the USPTO determine if this patent is even valid”. The mere act of filing an IPR can shift the dynamics of a dispute and become a potent tool in settlement negotiations.

This dual-track system has sparked a fierce debate. Proponents, such as the Association for Accessible Medicines (AAM), argue that IPRs are essential for “weeding out poor-quality patents” that were improvidently granted by an overburdened USPTO, thereby promoting competition and lowering drug prices for patients. They point to statistics showing that the process is fair and not a “death panel for patents,” with biopharma patents having lower institution and invalidation rates than other technology sectors.

On the other hand, innovator groups like the Pharmaceutical Research and Manufacturers of America (PhRMA) contend that the IPR process creates profound uncertainty in the patent system, stifles innovation, and has weakened the value of U.S. patents. They argue that for biopharmaceutical patents, IPRs are almost always a “second bite at the same apple,” forcing innovators to defend their patents in two different tribunals simultaneously under different rules, which disrupts the careful balance established by Hatch-Waxman.

The statistics surrounding IPRs reveal a nuanced and complex reality, underscoring why a one-size-fits-all predictive model would be insufficient. A deeper look at the data shows that the probabilities of success change dramatically at each stage of the IPR funnel—from filing to the institution decision to the final written decision—and that these probabilities differ significantly by technology area.

The data shows that while biopharma patents constitute a relatively small portion of total IPR petitions (around 6-7%), they have one of the highest institution rates (the rate at which the PTAB agrees to conduct a trial).13 However, once instituted, biopharma patent claims have a higher survival rate than claims in other technology areas. This suggests that while a biopharma patent is somewhat less likely to avoid institution, one that does go to trial has a better-than-average chance of emerging with at least some claims intact. This critical nuance is precisely the kind of insight a sophisticated predictive model must capture. The table below summarizes this dynamic.

Table 2: PTAB IPR Success Rates in Bio/Pharma vs. All Technologies (Illustrative)

| Metric | Bio/Pharma | All Technologies | Strategic Implication |
| --- | --- | --- | --- |
| Petition Filings (% of Total) | ~6-7% | ~93-94% | Bio/Pharma is a niche but highly contested area within the PTAB. |
| Institution Rate (%) | ~73% | ~67-69% | The hurdle to get a trial started against a pharma patent is lower; petitions are taken very seriously. |
| Post-Institution Invalidation Rate (%) | ~66% | ~68-87% | If a trial is instituted, pharma patents have a better chance of some claims surviving compared to other tech. |

Data synthesized from multiple PTAB statistical reports.13 Rates can vary based on the fiscal year and reporting methodology, but the general trends hold.

This statistical landscape reveals that the institution decision is the pivotal moment in an IPR. For a patent challenger, getting past this gate is the primary objective. For a patent owner, defeating the petition at the institution stage is paramount, as the odds shift significantly against them once a trial begins. Any predictive framework must therefore focus intensely on modeling the factors that lead to an institution decision.
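The funnel logic can be made concrete by chaining the two stage-level rates from Table 2. The sketch below is a back-of-the-envelope calculation only: it uses the illustrative table values, takes 68% as an assumed midpoint for the all-technology institution rate, and ignores petitions that settle or are otherwise terminated between stages.

```python
def petition_level_rate(institution_rate: float, post_institution_rate: float) -> float:
    """Chance a filed petition ends in invalidation: P(instituted) * P(invalidated | instituted)."""
    return institution_rate * post_institution_rate


# Illustrative rates from Table 2 (0.68 is an assumed midpoint of ~67-69%).
bio_pharma = petition_level_rate(0.73, 0.66)
all_tech_low = petition_level_rate(0.68, 0.68)
all_tech_high = petition_level_rate(0.68, 0.87)

print(f"{bio_pharma:.1%}")     # 48.2%
print(f"{all_tech_low:.1%}")   # 46.2%
print(f"{all_tech_high:.1%}")  # 59.2%
```

On these numbers, the higher institution rate and the lower post-institution invalidation rate for bio/pharma largely offset each other at the petition level, which is why a model needs to treat each stage as its own prediction problem.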

Part 2: Building the Data Foundation for Predictive Legal Analytics

The most advanced artificial intelligence model is powerless without data. In the context of pharmaceutical patent litigation, building a predictive framework requires assembling a vast, multi-dimensional dataset from a variety of disparate sources. This process of sourcing, cleaning, and integrating data is not merely a technical prerequisite; it is the primary source of sustainable competitive advantage in legal analytics. The proprietary, integrated dataset that results from this effort is a more valuable and defensible asset than the AI models themselves, which are increasingly becoming commoditized. The “moat” or barrier to entry is not in the algorithm, but in the data pipeline.

Unlocking the Vault: Sourcing Data from the USPTO and PatentsView

The foundational layer of any patent analytics dataset comes directly from the United States Patent and Trademark Office (USPTO), which has made a treasure trove of information available through its Open Data Portal.17 This portal provides a suite of Application Programming Interfaces (APIs) that allow for programmatic access to millions of patent records.

Key USPTO APIs for building a predictive dataset include:

- PTAB API: Provides detailed information on all Patent Trial and Appeal Board proceedings, including IPRs. This is the source for data on petitions, institution decisions, and final outcomes.

- Office Action APIs: These APIs provide the full text and metadata of Office Actions—the official communications from a patent examiner to an applicant—including rejections and citations. This data is invaluable for analyzing the prosecution history of a patent, which can reveal potential weaknesses.

- Patent Assignment APIs: This allows for the tracking of patent ownership, which is crucial for understanding corporate strategies and competitive landscapes.

While the USPTO APIs are comprehensive, they can be complex to work with. A more user-friendly alternative for foundational data is the PatentsView API. Supported by the USPTO, PatentsView provides a cleaned, disambiguated, and integrated database of patent information. It excels at resolving inventor and assignee names, making it easier to track the patenting activity of specific companies and individuals over time. The data includes not just the patent text itself, but crucial metadata that forms the feature set for predictive models: filing dates, priority dates, patent classifications, examiner information, and forward and backward citation data.

Decoding the Docket: Extracting Litigation Insights from PACER

While the USPTO provides the history of a patent’s life before it was granted, the story of its life in court is housed in a different system: Public Access to Court Electronic Records (PACER). PACER is the federal judiciary’s centralized electronic public access service, containing a staggering repository of more than one billion documents from every federal district, bankruptcy, and appellate court case.

For patent litigation analytics, PACER is the indispensable source of truth. However, it presents a significant data engineering challenge. PACER was not designed as a modern, API-friendly database. It is a collection of individual court websites, and extracting data at scale often requires sophisticated web scraping techniques or the use of third-party services that have already aggregated the data, such as the CourtListener RECAP Archive.

Despite the difficulty, the data that can be mined from PACER is pure gold for predictive modeling. Key data points include:18

- Parties and Counsel: Identifying the plaintiffs, defendants, and, critically, the law firms representing them. The track record of a law firm before a specific judge is a powerful predictive feature.

- Assigned Judge: Perhaps the single most important variable in many predictive models. The judge’s past rulings, timing, and tendencies in similar cases can be analyzed in detail.

- Key Timestamps: The filing date, motion dates, and termination date allow for the modeling of case duration and key milestones.

- Nature of Suit: Cases can be filtered by specific codes, such as “830” for patent infringement (ANDA patent cases also have a dedicated code, “835”), to isolate the relevant dataset.

- Docket Entries: The entire procedural history of the case is logged in the docket report, including every motion filed, every order issued, and every hearing scheduled.

- Full-Text Documents: The most valuable data lies within the documents themselves—the complaints, answers, motions for summary judgment, expert reports, and judicial opinions. These unstructured text documents are where Natural Language Processing (NLP) techniques are applied to extract arguments, legal reasoning, and ultimately, the factors that drive outcomes.
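As a minimal sketch of how the structured fields above become model inputs, the following Python filters docket records by Nature of Suit code and computes a median time-to-termination per judge. The records, docket numbers, judge names, and field names are all invented for illustration; real PACER or RECAP exports use their own schemas.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical, hand-made docket records (not real cases).
cases = [
    {"docket": "1:22-cv-00001", "nos": "830", "judge": "Judge A",
     "filed": date(2022, 1, 10), "terminated": date(2022, 4, 20)},
    {"docket": "1:22-cv-00002", "nos": "830", "judge": "Judge A",
     "filed": date(2022, 3, 1), "terminated": date(2022, 9, 17)},
    {"docket": "2:23-cv-00003", "nos": "830", "judge": "Judge B",
     "filed": date(2023, 1, 1), "terminated": date(2023, 12, 27)},
    {"docket": "2:23-cv-00004", "nos": "190", "judge": "Judge B",
     "filed": date(2023, 2, 1), "terminated": date(2023, 3, 1)},
    {"docket": "1:24-cv-00005", "nos": "830", "judge": "Judge A",
     "filed": date(2024, 5, 1), "terminated": None},  # still pending
]


def closed_patent_cases(records, nos_code="830"):
    """Keep terminated cases carrying the given Nature of Suit code."""
    return [r for r in records if r["nos"] == nos_code and r["terminated"]]


def median_days_by_judge(records):
    """Median number of days from filing to termination, grouped by judge."""
    durations = defaultdict(list)
    for r in records:
        durations[r["judge"]].append((r["terminated"] - r["filed"]).days)
    return {judge: median(days) for judge, days in durations.items()}


timing = median_days_by_judge(closed_patent_cases(cases))
print(timing)
```

Dropping pending cases, as this sketch does, is itself a modeling choice: it biases duration estimates toward faster cases, so a production pipeline would more likely treat open cases as censored observations.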

The Commercial Edge: Integrating Third-Party Intelligence from Services like DrugPatentWatch

Public data from the USPTO and PACER forms the skeleton of a predictive model, but the flesh and blood—the commercial and strategic context—often comes from specialized third-party data providers. These services invest heavily in curating, enriching, and integrating data to provide insights that are simply not available in public records.

A prime example of such a service is DrugPatentWatch. While public sources can tell you about a patent and a lawsuit, a platform like DrugPatentWatch can connect that information to the real-world business dynamics of the pharmaceutical industry.23 The specific, high-value data points it provides are essential for building a truly powerful predictive model:

- Global Intelligence: It tracks drug patents in 134 countries, allowing for a global assessment of market opportunities and litigation risks.

- Litigation and Settlement Data: Crucially, it provides information on litigation outcomes and, in some cases, access to confidential royalty and settlement terms, offering a glimpse into the true economics of these disputes.

- Commercial Data: It integrates data on historical drug sales, pricing information, and formulary coverage, which is essential for modeling the financial stakes (“amount at risk”) of a case.3

- Lifecycle Management: It provides data on anticipated generic entry dates, biosimilar approvals, and clinical trial activity, allowing for a forward-looking view of the competitive landscape.23

- Supply Chain Information: It can identify API and finished drug product suppliers, which can be relevant for understanding the market and potential infringement scenarios.

The strategic advantage comes from integration. A model trained only on USPTO data might identify a patent as weak based on its prosecution history. But a model that integrates DrugPatentWatch data might see that the same patent protects a drug with $2 billion in annual sales and a dense “patent thicket” of secondary patents, leading to a completely different prediction about the patent owner’s willingness to fight and the likely outcome of litigation.

Data Wrangling: The Unseen Challenge of Cleaning and Structuring Legal Data

The process of building a predictive legal analytics framework is governed by a simple, brutal truth: garbage in, garbage out. The most sophisticated AI model will produce meaningless or even dangerously misleading results if it is trained on inaccurate, incomplete, or poorly structured data.26 The “data wrangling” stage—the process of cleaning, transforming, and integrating raw data—is the most time-consuming and critical part of the entire endeavor.

This stage involves several formidable challenges:

- Entity Resolution: Public and private databases are notoriously inconsistent. A single company might be listed as “Pfizer Inc.,” “Pfizer,” and “Pfizer, LLC” across different records. Entity resolution algorithms are required to correctly identify and merge these records to create a single, coherent view of a litigant’s history. The same challenge applies to law firms, inventors, and even judges.

- Data Linking: This is a crucial and non-trivial task. It involves programmatically connecting a specific court case found in PACER to the exact patent or patents at issue, which are stored in the USPTO database. This linking is the bridge that allows a model to correlate patent characteristics with litigation outcomes.

- Feature Engineering: This is the art and science of transforming raw data into structured, numerical “features” that a machine learning model can understand. For example, the text of a judicial opinion must be converted into a numerical vector. The number of forward citations a patent receives becomes a numerical feature. The career history of a judge might be encoded into features representing their experience level or past rulings. This process requires deep domain expertise to know which features are likely to be predictive.

- Handling Missing and Biased Data: Legal datasets are often messy. Records may be incomplete, and more importantly, they can suffer from significant selection bias. For example, litigation data in PACER only represents disputes that escalated to a lawsuit, not the vast majority that are settled beforehand. A model trained only on litigated cases might not be representative of legal disputes more broadly. Understanding and accounting for these biases is a critical step in building a reliable model.28
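The first two challenges in the list above, entity resolution and data linking, can be illustrated with a deliberately naive sketch. It assumes that stripping punctuation and common corporate suffixes is enough to merge name variants, and that patents are cited in the form "U.S. Patent No. 9,876,543"; real pipelines need fuzzy matching, subsidiary mapping, and many more citation formats. The patent numbers below are made up.

```python
import re

# Corporate suffixes to strip when building a canonical key (illustrative list).
_SUFFIXES = {"inc", "llc", "ltd", "corp", "corporation", "co", "company", "plc"}

# Matches citations such as "U.S. Patent No. 9,876,543" (one format of many).
_PATENT_RE = re.compile(r"U\.S\. Patent No\. (\d{1,2}(?:,\d{3}){2})")


def normalize_entity(name: str) -> str:
    """Lowercase, drop punctuation, and strip trailing corporate suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    while tokens and tokens[-1] in _SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


def extract_patent_numbers(text: str) -> list:
    """Pull patent numbers out of free text so a case can be joined to patent records."""
    return sorted({m.replace(",", "") for m in _PATENT_RE.findall(text)})


names = ["Pfizer Inc.", "Pfizer", "Pfizer, LLC"]
print({normalize_entity(n) for n in names})  # {'pfizer'}

complaint = "Plaintiff asserts U.S. Patent No. 9,876,543 and U.S. Patent No. 10,123,456."
print(extract_patent_numbers(complaint))  # ['10123456', '9876543']
```

The normalized name and the extracted patent numbers act as join keys: the first merges a litigant's history across sources, the second bridges a court docket to the corresponding patent records.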

Ultimately, the immense effort required for this data integration and cleaning process creates a powerful competitive barrier. While the code for many advanced AI models is open-source, the high-quality, integrated, and proprietary legal data graph that results from this process is not. It is a unique and highly valuable corporate asset, the true engine behind predictive legal analytics.

Part 3: The Predictive Modeling Toolkit: From Statistics to AI

Once a robust and integrated data foundation has been built, the next step is to apply a range of analytical techniques to extract insights and make predictions. This toolkit spans from foundational text analysis methods to sophisticated machine learning models and the latest advancements in generative AI. Each tool has its specific strengths and is best suited for different aspects of the legal decision-making process.

Natural Language Processing (NLP): The Key to Unlocking Textual Data

The vast majority of legal and patent data is unstructured text: patent claims, scientific articles, court opinions, and legal briefs. This text is dense, filled with technical jargon and legal terminology, making manual analysis incredibly slow, expensive, and prone to human error. Natural Language Processing (NLP) is the branch of AI that gives computers the ability to read, understand, and interpret human language, transforming this unstructured text into analyzable data.31

Semantic Search and Prior Art Discovery

One of the most powerful applications of NLP in patent law is in the search for prior art. Traditional search methods rely on keywords, which are often inadequate. A competing invention might describe the same concept using entirely different words (synonyms or different phrasing), making it invisible to a keyword search. NLP-powered semantic search overcomes this limitation. Instead of matching keywords, it analyzes the underlying meaning and context of the text, allowing it to find conceptually similar documents even if they don’t share the same terminology. This is a game-changer for invalidity analysis, as it can uncover “killer” prior art that would have otherwise been missed.
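To see where keyword-style matching runs out, it helps to look at a lexical baseline. The sketch below ranks candidate references against a claim using TF-IDF vectors and cosine similarity, written from scratch in plain Python. It is not a semantic search system: a semantic approach would replace these word-count vectors with learned embeddings. The claim and reference texts are invented.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())


def tfidf_vectors(docs):
    """Build sparse TF-IDF vectors (dicts) with smoothed inverse document frequency."""
    counts = [Counter(tokenize(d)) for d in docs]
    n = len(docs)
    df = Counter(term for c in counts for term in c)
    idf = {t: math.log((1 + n) / (1 + df[t])) + 1 for t in df}
    return [{t: tf * idf[t] for t, tf in c.items()} for c in counts]


def cosine(a, b):
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_prior_art(claim, references):
    """Return reference indices ordered from most to least similar to the claim."""
    vectors = tfidf_vectors([claim] + references)
    scores = [cosine(vectors[0], v) for v in vectors[1:]]
    return sorted(range(len(references)), key=lambda i: scores[i], reverse=True)


claim = "A tablet comprising an extended release coating over a drug core"
references = [
    "Method for brewing coffee with a paper filter",
    "Oral tablet with a sustained release polymer coating surrounding the drug core",
    "Bicycle frame made from carbon fiber",
]
ranking = rank_prior_art(claim, references)
print(ranking[0])  # 1
```

The relevant reference wins here only because it happens to share words such as "tablet" and "coating" with the claim. The match between "extended release" and "sustained release" contributes nothing beyond the shared word "release", which is exactly the gap that embedding-based semantic search is meant to close.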

Automated Claim Construction Analysis

In patent litigation, one of the most pivotal events is the Markman hearing, where a judge construes, or defines, the meaning of disputed terms in the patent’s claims. This interpretation often determines the outcome of the entire case. NLP can be used to support this process by programmatically analyzing how a specific technical or legal term has been used across thousands of other patents and court decisions.30 By identifying patterns in usage and judicial interpretation, these tools can help lawyers build stronger arguments for a favorable claim construction and even predict how a particular judge might rule on a specific term.

The Abstraction Problem

Interestingly, while NLP is a powerful tool for analyzing patents, patenting NLP inventions themselves has become challenging. Following the Supreme Court’s Alice decision, inventions that are deemed to be “abstract ideas”—which can include mathematical algorithms—are not patent-eligible unless they are tied to a specific, practical application that results in a tangible technological improvement. This “abstraction problem” means that companies developing novel NLP technologies must be careful to frame their patent applications around concrete solutions, such as improving the speed of a voice-activated system or the accuracy of a diagnostic tool, rather than just the algorithm itself.

Machine Learning Models for Outcome Prediction

With textual and metadata features extracted, various machine learning (ML) models can be trained to predict outcomes. The choice of model depends on the specific legal question being asked.

Random Forests: Dissecting Complex Cases with High-Dimensional Data

A Random Forest is a powerful and popular ML model that is particularly well-suited for the complexities of legal data. It can be thought of as a form of “wisdom of the crowd.” The model builds hundreds or even thousands of individual decision trees, each trained on a random subset of the data and features. To make a prediction, it essentially takes a vote from all the trees in the “forest,” with the majority vote becoming the final prediction.35

Random Forests offer several key advantages for legal analytics:

- Handles High-Dimensionality: Legal datasets can have thousands of potential features (patent characteristics, case details, judge history, etc.). Because each tree sees only a random sample of the data and features and the forest averages their votes, Random Forests are comparatively resistant to “overfitting” (i.e., memorizing the training data instead of learning general patterns), although they are not immune to it.35

- Robust Variable Importance: A key feature of Random Forests is their ability to rank the features by their predictive power. The model can tell a lawyer which factors are most influential in driving an outcome—for example, it might find that the assigned judge is the most important predictor, followed by the number of forward citations on the patent, and the specific law firm handling the case. This interpretability is crucial for building trust and developing strategy.35

- Handles “Large p, Small n” Problems: It performs well in situations common to legal datasets, where the number of potential features (p) is much larger than the number of available cases (n).
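The voting mechanism can be sketched without any libraries. The code below is a heavily simplified stand-in for a Random Forest: each "tree" is a one-split decision stump trained on a bootstrap sample with a random subset of features, and predictions are made by majority vote. The feature names, values, and labels are fabricated toy data chosen to be cleanly separable; real work would normally use a library implementation with full-depth trees.

```python
import random
from collections import Counter


def fit_stump(rows, labels, feature_ids):
    """Pick the (feature, threshold) split with the fewest training errors."""
    best = None
    for f in feature_ids:
        for t in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= t]
            right = [y for r, y in zip(rows, labels) if r[f] > t]
            if not left or not right:
                continue
            l_lab = Counter(left).most_common(1)[0][0]
            r_lab = Counter(right).most_common(1)[0][0]
            errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    if best is None:  # no usable split: always predict the majority label
        majority = Counter(labels).most_common(1)[0][0]
        return (0, float("inf"), majority, majority)
    return best[1:]


def fit_forest(rows, labels, n_trees=51, n_features=2, seed=0):
    rng = random.Random(seed)
    n, p = len(rows), len(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap sample of cases
        feats = rng.sample(range(p), n_features)    # random feature subset
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feats))
    return forest


def predict(forest, row):
    """Majority vote across all stumps."""
    votes = Counter(l if row[f] <= t else r for f, t, l, r in forest)
    return votes.most_common(1)[0][0]


# Toy features: [forward citations, examiner rejections, independent claims]
rows = [[40, 1, 2], [35, 2, 3], [50, 1, 1], [45, 2, 2],
        [5, 5, 8], [8, 6, 9], [3, 4, 7], [10, 5, 10]]
labels = [0, 0, 0, 0, 1, 1, 1, 1]  # 1 = claims invalidated, 0 = upheld (made up)

forest = fit_forest(rows, labels)
print(predict(forest, [2, 7, 12]), predict(forest, [60, 0, 1]))
```

The two sources of randomness, resampled cases and resampled features, are what make the individual trees disagree in useful ways; the vote then averages out their individual mistakes.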

Support Vector Machines (SVMs): Classifying Legal Arguments and Documents

A Support Vector Machine (SVM) is another powerful classification algorithm. In simple terms, an SVM works by finding the optimal boundary, or “hyperplane,” that best separates data points into different categories. For example, it could be trained to find the boundary that best separates patents that are ultimately found to be “valid” from those found to be “invalid.”

SVMs are particularly effective for text classification, a core task in legal tech applications like e-discovery (where the workflow is often called “predictive coding” or “technology-assisted review”). Their strengths include:

- Effectiveness in High-Dimensional Spaces: They work well in text analysis where the feature space is enormous (each unique word can be a dimension).38

- Robustness: They are robust even when the number of features is greater than the number of training examples, which is common in text datasets.

- Versatility: SVMs are often used as a strong baseline model against which more complex models, like deep neural networks, are compared.
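As a rough illustration of the text-classification use case, the sketch below trains a linear SVM on word counts using plain subgradient descent on the regularized hinge loss. Everything in it is an assumption made for the example: the four toy “documents,” the labels (+1 for responsive, -1 for not responsive), the hyperparameters, and the choice to omit an intercept term. A real review platform would use a tuned library implementation and far more data.

```python
from collections import Counter

def bag_of_words(text, vocab):
    """Turn text into a vector of word counts over a fixed vocabulary."""
    counts = Counter(text.lower().split())
    return [counts[w] for w in vocab]

def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Full-batch subgradient descent on L2-regularized hinge loss (no intercept)."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(epochs):
        grad = [lam * wj for wj in w]            # gradient of the L2 penalty
        for xi, yi in zip(X, y):
            margin = yi * sum(wj * xj for wj, xj in zip(w, xi))
            if margin < 1:                       # point is inside the margin
                for j in range(d):
                    grad[j] -= yi * xi[j] / n
        w = [wj - lr * gj for wj, gj in zip(w, grad)]
    return w

def classify(w, x):
    """Return +1 or -1 depending on which side of the hyperplane x falls."""
    return 1 if sum(wj * xj for wj, xj in zip(w, x)) >= 0 else -1

# Invented training documents: +1 = responsive, -1 = not responsive
docs = [
    ("prior art reference discloses the claimed formulation", 1),
    ("obviousness rejection over prior art combination", 1),
    ("lunch menu for the office party", -1),
    ("parking garage closed for the holiday", -1),
]
vocab = sorted({word for text, _ in docs for word in text.split()})
X = [bag_of_words(text, vocab) for text, _ in docs]
y = [label for _, label in docs]

w = train_linear_svm(X, y)
print(classify(w, bag_of_words("prior art combination", vocab)))
print(classify(w, bag_of_words("office parking", vocab)))
```

Note that every distinct word becomes its own dimension, so even this four-document example has more features than training examples, which is the situation described in the bullets above.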

Other Models and Network Analysis

While Random Forests and SVMs are workhorses, other models are also employed. Logistic Regression is a simpler statistical model often used as a baseline for comparison.39

Deep Learning and neural networks, such as Convolutional Neural Networks (CNNs), have shown strong performance in text classification tasks, sometimes outperforming traditional models like SVMs.

A different but complementary approach is Network Analysis. This technique models the relationships between entities as a graph of nodes and edges. In the patent world, this can be used to create:42

- Patent Citation Networks: To identify foundational or “pioneering” patents that are highly cited.

- Litigant Networks: To map the competitive landscape and identify frequent allies or adversaries.

- Law Firm-Judge Networks: To uncover relationships and analyze success rates.

This type of analysis can reveal hidden structures and strategic insights that are not apparent from looking at individual data points alone.18
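The citation-network idea can be sketched in a few lines. The example below treats each citation as a directed edge and counts incoming edges (forward citations) to find the most-cited patent. The patent identifiers and the edge list are made up; real work would load citation data from a patent database and would usually go beyond simple counts to richer graph measures.

```python
from collections import Counter

# Each edge is (citing_patent, cited_patent); identifiers are invented.
citations = [
    ("P2", "P1"), ("P3", "P1"), ("P4", "P1"),
    ("P4", "P2"), ("P5", "P3"),
]

def forward_citation_counts(edges):
    """Count how many later patents cite each patent (in-degree in the graph)."""
    return Counter(cited for _, cited in edges)

counts = forward_citation_counts(citations)
most_cited, n_citations = counts.most_common(1)[0]
print(most_cited, n_citations)  # P1 3
```

The same edge-list structure works for litigant or law firm-judge networks: only the meaning of the nodes and edges changes.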

The Rise of Generative AI: Transforming Legal Research and Risk

The most recent and perhaps most disruptive addition to the legal tech toolkit is Generative AI, powered by Large Language Models (LLMs) like those behind ChatGPT. These models are capable of generating new, human-like text, and their potential applications in law are vast.

Opportunities in Automated Briefing and Analysis

Generative AI is already being used to automate a wide range of legal tasks. It can summarize lengthy depositions, draft standard sections of contracts or legal briefs, analyze thousands of documents for due diligence, and accelerate legal research.44 The potential for efficiency gains is enormous; one Goldman Sachs report predicted that AI could automate as much as 44% of the work done in the legal sector.

The Peril of “Hallucinated” Precedent and Ethical Guardrails

However, this power comes with a significant and well-publicized risk: hallucination. LLMs can, and do, invent facts and fabricate legal citations that sound plausible but are entirely nonexistent. There have been several high-profile cases where lawyers have faced sanctions and fines for submitting briefs to courts that contained fake case citations generated by an AI. This underscores a critical and non-negotiable rule for using generative AI in law: every output must be meticulously verified by a human expert. Unverified reliance on AI is not just risky; it can breach a lawyer’s professional duties to the court and to the client.
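One small, mechanical piece of that verification step can be automated: pulling every reporter citation out of a draft and flagging any that a human has not yet confirmed. The sketch below is only a first-pass filter under stated assumptions. The regular expression covers a handful of reporter formats, the `verified` set stands in for whatever checked list a firm maintains, and the second citation in the draft is deliberately fabricated for the example. It does not confirm that a case exists or says what the brief claims; that still requires a person reading the source.

```python
import re

# Matches a few common reporter formats, e.g. "43 F.4th 1207" or "550 U.S. 398".
CITATION = re.compile(
    r"\b\d{1,4} (?:U\.S\.|F\.(?:2d|3d|4th)|F\. Supp\.(?: 2d| 3d)?) \d{1,5}\b"
)

def unverified_citations(draft, verified):
    """Return citations found in the draft that are not in the verified set."""
    return [c for c in CITATION.findall(draft) if c not in verified]

draft = (
    "See Thaler v. Vidal, 43 F.4th 1207 (Fed. Cir. 2022); "
    "accord Doe v. Roe, 1234 F.3d 567 (Fed. Cir. 2019)."  # invented citation
)
verified = {"43 F.4th 1207"}  # citations a human has already checked

flagged = unverified_citations(draft, verified)
print(flagged)  # ['1234 F.3d 567']
```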

Impact on the “PHOSITA” Standard

A more subtle but profound impact of AI is its potential to change the legal standards themselves. As AI-powered research and analysis tools become ubiquitous, the baseline level of knowledge expected of the “person having ordinary skill in the art” (PHOSITA) is likely to rise. An invention that might have seemed non-obvious to a human researcher working alone could be deemed obvious to an AI-augmented researcher who can scan the entire universe of prior art in minutes. As one expert noted, AI could allow the patent office to say “it would be obvious to combine these references where, currently, it may not be obvious.” This could make it progressively more difficult to obtain and defend patents for incremental innovations.

The table below provides a strategic comparison of these different modeling techniques, highlighting their best use cases and inherent risks within the legal domain.

Table 3: Comparison of Predictive Machine Learning Models for Legal Analytics

| Model/Technique | Primary Legal Application | Key Strengths | Key Limitations & Risks in a Legal Context |
| --- | --- | --- | --- |
| Natural Language Processing (NLP) | Prior Art Search, Claim Construction Analysis, e-Discovery | Understands semantic meaning beyond keywords; Extracts concepts from unstructured text. | Can struggle with highly ambiguous or novel legal language; Requires large, domain-specific training data. |
| Random Forests (RF) | Outcome Prediction (Validity, Infringement), Feature Importance Ranking | Handles high-dimensional data well; Robust against overfitting; Provides interpretable feature importance rankings. | Can be a “black box” if not paired with interpretability tools; May not capture highly complex, non-linear relationships as well as neural networks. |
| Support Vector Machines (SVMs) | Text Classification (e-Discovery, Document Categorization) | Highly effective in high-dimensional text spaces; Strong theoretical foundation; Good baseline performance. | Less interpretable than tree-based models; Performance can be sensitive to parameter tuning. |
| Generative AI (LLMs) | Document Drafting, Legal Research Summarization, Deposition Analysis | Generates human-like text; Can synthesize information from vast sources; Accelerates routine writing tasks. | High risk of “hallucination” (fabricating facts/citations); Potential for data privacy and confidentiality breaches; Outputs require mandatory human verification. |

Part 4: Framework in Action: Modeling and Predicting Validity Cases

With a solid data foundation and a versatile toolkit of AI models, we can now construct practical frameworks for modeling and predicting the outcomes of pharmaceutical patent disputes. These frameworks are not crystal balls; they do not provide certainty. Instead, they provide probabilistic forecasts that can dramatically improve strategic decision-making, resource allocation, and risk management. The goal is to move from decisions based on intuition and anecdotal evidence to decisions grounded in data-driven insights.

Predicting IPR Outcomes: Modeling Institution and Invalidation Rates

As the statistics have shown, the most critical inflection point in an Inter Partes Review (IPR) is the PTAB’s decision on whether to institute the review. If the petition is denied, the patent is safe (at least for that challenge). If it is instituted, the patent owner is immediately on the defensive, with the odds of at least some claims being invalidated rising dramatically. Therefore, a predictive framework for IPRs must first and foremost model the likelihood of institution.

A robust model for predicting the institution decision would be trained on historical PTAB data and would incorporate a rich set of features, including:41

- Patent-Specific Features:

  - Prosecution History: How many Office Actions did the patent receive during examination? Were there many rejections? A difficult prosecution history can signal underlying weaknesses.

  - Citation Profile: The number of forward citations (how many later patents cited this one) can be a proxy for its importance, while backward citations show its technological lineage.

  - Claim Characteristics: The number of independent and dependent claims, and the complexity of the claim language.

- Litigant and Counsel Features:

  - Party History: Has the patent owner or petitioner been involved in many previous IPRs?

  - Law Firm Performance: What is the track record of the law firms representing each side before the PTAB? Some firms may have demonstrably higher success rates in getting petitions instituted or defeated.

- PTAB Judge Features:

  - Behavioral Analytics: What is the historical institution rate for each of the three Administrative Patent Judges (APJs) assigned to the panel? How have they ruled on patents in this specific technology class (e.g., small molecule chemistry vs. biologics)?

- Petition-Specific Textual Features:

  - Argument Analysis: Using NLP to analyze the text of the IPR petition itself. Does it primarily rely on obviousness or anticipation arguments? How many prior art references are cited? The model can learn which types of arguments are more persuasive to the Board.

Once a case is instituted, a second, separate model can be used to predict the outcome of the final written decision. This model would incorporate the features above, plus new data generated during the trial, such as the arguments made in the patent owner’s response and the content of expert witness declarations.
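The two-stage structure implies a simple piece of arithmetic: before a petition is filed, the chance that a challenged claim is ultimately cancelled is the institution probability multiplied by the probability of an adverse final written decision given institution. The sketch below shows that chaining. The function name is hypothetical and the two input probabilities are placeholders, not PTAB statistics; in practice each would come from its own trained model.

```python
def p_claim_cancelled(p_institution, p_unpatentable_given_institution):
    """Chain the two stage models: P(cancelled) = P(instituted) * P(unpatentable | instituted)."""
    for p in (p_institution, p_unpatentable_given_institution):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie between 0 and 1")
    return p_institution * p_unpatentable_given_institution

# Placeholder outputs from the stage-one and stage-two models.
overall = p_claim_cancelled(0.6, 0.5)
print(round(overall, 2))  # 0.3
```

Keeping the stages separate also lets the second estimate be refreshed after institution, once the patent owner’s response and expert declarations are available.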

Forecasting District Court Litigation: From Claim Construction to Final Judgment

District court litigation is a much longer and more complex process than an IPR, with multiple critical milestones along the way. A predictive framework for these cases, therefore, is not about a single outcome prediction but about forecasting probabilities at each major stage of the lawsuit.

A comprehensive model would provide data-driven answers to a series of strategic questions:

- Motion to Dismiss: Based on the complaint and the assigned judge, what is the probability that an early motion to dismiss will be granted?

- Claim Construction (Markman Hearing): This is a pivotal stage. By analyzing the judge’s prior Markman rulings and the arguments of the parties, the model can predict the likely construction of key disputed claim terms. This prediction can radically alter the settlement calculus for both sides.

- Summary Judgment: What is the likelihood that the case will be decided on summary judgment, avoiding a full trial? This prediction would depend heavily on the outcome of claim construction and the strength of the evidence presented.

- Settlement vs. Trial: The model can estimate the probability of the case settling before trial versus proceeding to a final judgment. This can be influenced by factors like litigation costs, the judge’s tendency to encourage settlement, and the predicted trial outcome.

- Trial Outcome and Damages: If the case goes to trial, what is the predicted probability of a win for the patent owner? And if they win, what is the predicted range of monetary damages the court might award?
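The stage-by-stage questions above can be combined into a running forecast. The sketch below, written from the patent owner’s point of view, multiplies per-stage probabilities to show how likely the case is to reach (and clear) each milestone, then weights a damages figure by the final probability. All stage probabilities and the damages amount are placeholders chosen for easy arithmetic, and treating the stages as independent is a simplifying assumption that a real model would need to relax.

```python
from itertools import accumulate
from operator import mul

# (milestone, probability the patent owner clears it given the case got this far)
stages = [
    ("motion to dismiss denied", 0.8),
    ("survives summary judgment", 0.5),
    ("no settlement before trial", 0.5),
    ("patent owner wins at trial", 0.5),
]

# Running product: probability of clearing every milestone up to and including each one.
cumulative = list(accumulate((p for _, p in stages), mul))

for (name, _), p in zip(stages, cumulative):
    print(f"{name}: {p:.2f}")

damages_if_win = 50_000_000  # placeholder award, in dollars
expected_trial_recovery = cumulative[-1] * damages_if_win
print(f"expected trial recovery: ${expected_trial_recovery:,.0f}")
```

Updating a single stage estimate (for example, after a favorable Markman ruling) immediately changes every downstream number, which is what makes this framing useful in settlement talks.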

The feature set for this district court model would be even richer than the IPR model, incorporating data such as:11

- Jurisdictional Trends: How does the District of Delaware handle obviousness challenges compared to the Western District of Texas or the District of New Jersey? The model can identify venue-specific patterns.

- Deep Judicial Analytics: An in-depth analysis of the assigned judge’s entire career, including their rulings on specific types of motions (e.g., summary judgment), their average time to trial, and their history in patent cases.

- eDiscovery Data: While challenging to obtain, data from the discovery process, such as the volume and nature of documents produced, could potentially be used as a feature to model case complexity or party cooperation.

Case Study: AI in Action in Pharmaceutical Patent Disputes

The application of AI is no longer theoretical. It is actively shaping both the creation of new drugs and the litigation that surrounds them, creating novel legal questions in the process.

The most fundamental challenge has arisen from the use of AI as an inventor. In the landmark case of Thaler v. Vidal, the U.S. Court of Appeals for the Federal Circuit ruled in 2022 that under current U.S. law, an “inventor” must be a human being. The court rejected patent applications that listed an AI system named DABUS as the sole inventor.51 This decision was followed by guidance from the USPTO in 2024, which clarified that an AI-assisted invention can still be patented if a human provides a “significant contribution” to its conception.47 Simply owning or operating an AI system is not enough to qualify for inventorship.

This “human contribution” standard is now at the heart of a new wave of patent disputes. A profound strategic tension has emerged. To secure a patent, companies must disclose enough information to enable a skilled person to make and use the invention and must be able to prove human inventorship. However, to maintain their competitive edge, these same companies want to protect their proprietary AI drug discovery platforms as trade secrets. This creates a “disclosure dilemma.” If a company is too candid about the central role of its autonomous AI, it risks having its patent rejected or invalidated for lack of a human inventor under the Thaler precedent. If it downplays the AI’s role to secure the patent, it may be vulnerable to challenges that it failed to adequately disclose the true inventive process or that the named humans did not, in fact, make a “significant contribution.” This dilemma is creating a new and predictable front in patent litigation, where discovery will focus intensely on the exact nature of the human-AI interaction.

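On the other side of the coin, AI may also become a tool for defending a patent, although the case law here is still undeveloped. A useful reference point is In re Cyclobenzaprine, a 2012 Federal Circuit decision that did not involve AI at all: the court reversed an obviousness ruling against patents on an extended-release formulation, reasoning in part that the prior art did not teach the relationship between the drug’s pharmacokinetic profile and its therapeutic effect, so a skilled person had no reasonable basis to predict that the claimed profile would work. By analogy, a patentee could argue that an AI model’s prediction of a drug’s efficacy for a new purpose was unexpected and diverged from established scientific principles. On that view, the “black box” nature of an AI could actually support an argument for non-obviousness, provided the human researchers can articulate why the result was unpredictable and not something a skilled person would have been motivated to try.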

Imagine a hypothetical ANDA litigation scenario that ties these concepts together. A generic company is considering challenging the patent portfolio of a blockbuster drug. Using a predictive analytics platform, they first run an NLP-powered semantic search of global scientific literature and patent databases. The search uncovers two obscure papers from a decade ago that, while not explicitly linked, together suggest a pathway to the branded drug’s formulation.

Next, they feed the brand’s key patents, the newly discovered prior art, and other case data into their litigation prediction model. For the district court route, the model analyzes the assigned judge in the District of Delaware, noting her history of being receptive to complex obviousness arguments. It also analyzes the prosecution history of the brand’s patent, flagging that it overcame a rejection by making a very narrow argument. For the PTAB route, the model estimates a 75% probability of invalidating the key patent on obviousness grounds if it is challenged in an IPR.

Armed with this data-driven confidence, the generic company forgoes an expensive, high-risk district court battle and instead files a targeted IPR petition. The brand company, running its own analytics, sees the strength of the challenge and the unfavorable odds. Instead of engaging in a protracted, multi-million-dollar fight, they come to the negotiating table and agree to a settlement that allows for generic entry earlier than they otherwise would have. In this scenario, data and AI were not just analytical tools; they were strategic weapons that reshaped the entire conflict.

Part 5: The Human-AI Symbiosis: Challenges and Strategic Imperatives

The integration of big data and AI into the legal profession is not a simple matter of plugging in new software. It represents a fundamental paradigm shift that comes with significant challenges, profound ethical considerations, and strategic imperatives for legal professionals. The future is not one of machines replacing lawyers, but of a new, powerful symbiosis between human expertise and artificial intelligence.

The “Black Box” Problem: The Critical Need for Interpretability and Explainable AI (XAI)

The single greatest barrier to the adoption of AI in high-stakes legal decision-making is the “black box” problem. A model might predict a 70% chance of a patent being invalidated, but if it cannot explain why, the prediction is strategically useless. Unlike decisions in fields such as online advertising, legal decisions demand a reasoned, transparent justification. A lawyer cannot advise a client to risk millions of dollars on litigation simply because “the algorithm said so,” and a judge cannot issue a ruling based on an opaque computational output.54

This is where the field of Explainable AI (XAI), or interpretability, becomes critical. The goal of XAI is to build “glass box” models whose reasoning can be understood and scrutinized by human users. Interpretability is essential for building trust, debugging models, ensuring fairness, and complying with regulations that may require explainable decisions.

Several techniques are used to achieve this transparency:

- Local Interpretable Model-Agnostic Explanations (LIME): This method explains an individual prediction by creating a simpler, more understandable model that approximates the behavior of the complex model in the local vicinity of that one prediction. In essence, it answers the question: “For this specific case, what were the top factors that led the model to this conclusion?”

- SHapley Additive exPlanations (SHAP): Drawing from cooperative game theory, SHAP assigns each feature a contribution value for a given prediction, and those contributions add up to the difference between that prediction and the model’s baseline output. It can show how much each factor (the judge, the prior art, the law firm) pushed the prediction towards “valid” or “invalid.”

- Feature Importance: As discussed with Random Forests, some models can inherently rank the overall importance of all variables in the model, providing a global view of what factors are most predictive across all cases.
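The game-theory idea behind SHAP can be shown exactly on a toy model. The sketch below computes Shapley values by brute force: for every ordering of the features, it records how much the score changes when each feature is added, then averages those changes. The three features, the scoring function, and its numbers are invented for illustration, and this exhaustive enumeration is only feasible for a handful of features; SHAP-style tools rely on approximations and model-specific shortcuts instead.

```python
from itertools import permutations

FEATURES = ("judge", "prior_art", "law_firm")

def invalidity_score(present):
    """Hypothetical model: score given which features are 'switched on'."""
    score = 0.5                                   # baseline with no information
    if "judge" in present:
        score += 0.2
    if "prior_art" in present:
        score += 0.1
    if "judge" in present and "prior_art" in present:
        score += 0.1                              # interaction between the two
    return score                                  # law_firm has no effect here

def shapley_values(features, value_fn):
    """Average each feature's marginal contribution over all feature orderings."""
    orders = list(permutations(features))
    totals = {f: 0.0 for f in features}
    for order in orders:
        present = set()
        for f in order:
            before = value_fn(present)
            present.add(f)
            totals[f] += value_fn(present) - before
    return {f: total / len(orders) for f, total in totals.items()}

phi = shapley_values(FEATURES, invalidity_score)
for name, value in phi.items():
    print(f"{name}: {value:+.2f}")
```

The contributions come to roughly +0.25 for the judge, +0.15 for the prior art, and 0 for the law firm. They sum to 0.4, the gap between the full prediction (0.9) and the baseline (0.5), with the interaction bonus split evenly between the two features that create it.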

The ultimate objective is to create a system of augmented reasoning. The AI’s role is not to provide an answer, but to provide a data-driven hypothesis and the evidence to support it. It allows the human lawyer to see the prediction, understand the key factors driving it, and then use their own expertise and judgment to validate, challenge, or refine the conclusion, ultimately building a stronger and more persuasive legal strategy.

Garbage In, Garbage Out: Confronting and Mitigating Algorithmic Bias

If the “black box” problem is the primary technical hurdle, algorithmic bias is the primary ethical one. An AI model is only as good as the data it is trained on, and if that data reflects historical societal biases, the model will learn and perpetuate them, often at scale.

Bias can creep into a legal AI system at multiple stages:

- Data Collection and Selection Bias: This is the most common source. If historical judicial data shows that certain groups have received harsher sentences, a model trained on that data may recommend harsher sentences for those same groups. In patent law, if data is primarily drawn from a few dominant jurisdictions, the model’s predictions may not be accurate for cases in other venues.28

- Implicit Bias in Text: Legal documents are written by humans and can contain subtle, unconscious biases in language. An NLP model can learn these associations and replicate them in its analysis or generation of text.

- Measurement and Confirmation Bias: The model might be trained on data that reinforces existing patterns, such as favoring arguments that have been successful in the past, potentially stifling novel legal strategies.

In a legal context, the consequences of bias are severe. It can lead to discriminatory outcomes, undermine the fairness of the justice system, and erode public trust. Mitigating this risk requires a proactive and multi-faceted approach:

- Diverse and Representative Data: The most important step is to ensure that training datasets are as broad, diverse, and representative as possible.

- Bias Detection and Fairness Audits: Specialized tools and fairness metrics must be used to actively test models for biased outputs against different demographic or case-type groups.

- Continuous Monitoring: A model’s performance and fairness must be continuously audited after it is deployed to catch any emergent biases.

- Human in the Loop: For all critical decisions, a human expert must remain in the loop, with the authority to override the AI’s recommendation. This is the ultimate safeguard against algorithmic error and bias.
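A basic fairness audit of the kind described above can start with nothing more than a per-group breakdown of the model’s behavior. The sketch below compares accuracy and the rate of “invalid” predictions across venue groups. The eight records, the group labels, and the choice of these two metrics are assumptions for illustration; a real audit would use held-out case data and a broader set of fairness metrics.

```python
from collections import defaultdict

# (group, predicted_invalid, actually_invalid); 1 = invalid, 0 = valid. Invented data.
records = [
    ("D. Del.", 1, 1), ("D. Del.", 0, 0), ("D. Del.", 1, 1), ("D. Del.", 0, 0),
    ("Other venues", 1, 0), ("Other venues", 1, 1),
    ("Other venues", 1, 0), ("Other venues", 0, 0),
]

def audit_by_group(rows):
    """Return accuracy and predicted-invalid rate for each group."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "predicted_invalid": 0})
    for group, predicted, actual in rows:
        s = stats[group]
        s["n"] += 1
        s["correct"] += int(predicted == actual)
        s["predicted_invalid"] += predicted
    return {
        group: {
            "accuracy": s["correct"] / s["n"],
            "invalid_rate": s["predicted_invalid"] / s["n"],
        }
        for group, s in stats.items()
    }

report = audit_by_group(records)
accuracy_gap = report["D. Del."]["accuracy"] - report["Other venues"]["accuracy"]
print(report)
print("accuracy gap:", accuracy_gap)  # 0.5
```

In this toy data the model is right every time in the well-represented venue but only half the time elsewhere, the pattern one would expect if training data were drawn mostly from a few dominant jurisdictions.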

The Future of Legal Decision-Making: Augmenting, Not Replacing, Human Expertise

The rise of legal AI does not signal the end of the legal profession. Rather, it heralds a transformation. The most likely and desirable future is not one where AI replaces lawyers, but one where AI augments them, creating a “cyborg” lawyer who combines human judgment with computational power. As many experts have noted, the lawyer who uses AI will replace the lawyer who does not.

This technology will fundamentally shift the value proposition of legal work. By automating routine and time-consuming tasks—such as initial document review, basic legal research, and data analysis—AI will free up legal professionals to focus on the uniquely human skills that create the most value: complex strategic thinking, creative problem-solving, persuasive advocacy, empathetic client counseling, and ethical judgment.27

This evolution demands a new set of skills. The lawyer of the future must be not only an expert in the law but also a sophisticated consumer of data analytics. They will need to develop “data literacy”—the ability to frame a legal problem as an analytical question, query a predictive model, interpret probabilistic outputs, and critically question the assumptions and limitations of the technology they are using. Law firms and corporate legal departments will need to invest in training and cultivate a culture that embraces data-driven decision-making.

Conclusion: From Data to Dominance – Achieving Competitive Advantage Through Predictive Analytics

The landscape of pharmaceutical patent litigation is being redrawn. What was once an opaque and unpredictable art, governed by intuition and experience, is becoming a data-driven science. We have journeyed from the high-stakes legal battlegrounds of the district courts and the PTAB, through the vast and complex data ecosystems of the USPTO and PACER, and into the sophisticated world of AI, from natural language processing to predictive machine learning.

The message is clear: in the 21st century, competitive advantage in intellectual property will not be determined by the size of a law firm or the depth of a legal budget alone. It will be driven by the ability to master the new synthesis of law, data, and artificial intelligence. The organizations that successfully build or adopt these predictive frameworks will unlock unprecedented strategic capabilities. They will be able to:3

- Make Smarter Decisions: By replacing guesswork with data-driven probabilities when deciding whether to litigate, settle, or design around a patent.

- Allocate Resources More Efficiently: By focusing multi-million-dollar litigation budgets on the cases with the highest probability of success and the arguments most likely to persuade a specific judge.

- Mitigate Risk More Effectively: By identifying potential “killer” prior art earlier, anticipating litigation threats before they materialize, and understanding the true strength of their own and their competitors’ patent portfolios.

Ultimately, this is a story of transformation. The pharmaceutical companies and law firms that embrace this change—that invest in the data pipelines, cultivate the analytical talent, and integrate these tools into their strategic core—will be the ones who can navigate the uncertainty, control the costs, and achieve a dominant position in the market. The algorithmic adjudicator has arrived, and for those who know how to wield it, it offers a clear path from data to dominance. The pace of this technological change is relentless and accelerating; the time to adapt is now.59

Key Takeaways

- Litigation is a Business Variable: Pharmaceutical patent litigation is not just a legal problem; it’s a multi-million-dollar business risk. The immense costs ($4M+ for high-stakes cases) and inherent uncertainty of the legal system are the primary economic drivers creating the market for predictive analytics.

- Data Integration is the Real “Moat”: While AI models are important, the true and most defensible competitive asset is the proprietary, integrated dataset created by combining public data (USPTO, PACER) with commercial intelligence (e.g., from services like DrugPatentWatch). This data pipeline is incredibly difficult and expensive to replicate.

- Predict the Pivotal Moments: In IPRs, the key prediction is the institution decision, as invalidation rates are very high post-institution. In district court, predictive models are most valuable when they forecast outcomes at key milestones: claim construction, summary judgment, and settlement likelihood.

- The PHOSITA Standard is Evolving: The rise of AI is elevating the standard for a “person having ordinary skill in the art.” What was non-obvious to a human may be deemed obvious to an AI-augmented human, making it harder to obtain and defend patents on incremental innovations.

- Explainability is Non-Negotiable: “Black box” predictions are unusable in a legal context. The adoption of Explainable AI (XAI) techniques like LIME and SHAP is essential for building trust, enabling strategic reasoning, and ensuring ethical use. An unexplainable prediction is not an actionable insight.

- AI Augments, It Doesn’t Replace: The future belongs to the “cyborg” lawyer who combines their human expertise in strategy, ethics, and advocacy with the analytical power of AI. The lawyer who uses AI will replace the lawyer who does not.

- AI Inventorship Creates a Disclosure Dilemma: Current law requires a “significant human contribution” for a patent, creating a strategic catch-22 for companies using advanced AI in drug discovery. Disclosing the AI’s full role risks an inventorship challenge, while hiding it risks an enablement or disclosure challenge.

Frequently Asked Questions (FAQ)

1. Can these AI models really predict a case outcome with certainty?

No, and it is a critical misconception to believe they can. These frameworks do not provide deterministic certainties; they generate probabilistic forecasts. Their value lies not in offering a single, “correct” answer but in quantifying uncertainty and shifting the odds in your favor. Think of it less like a crystal ball and more like a sophisticated weather forecast for litigation. It provides data-driven insights—such as a 70% chance of a motion succeeding before a specific judge—that supplement, rather than replace, the critical thinking and nuanced judgment of an expert human lawyer.28

2. What is the single biggest barrier to implementing a predictive analytics framework in a law firm or corporate legal department?

Beyond the significant financial investment, the biggest barrier is overwhelmingly cultural. It requires a fundamental shift in mindset from relying purely on traditional legal precedent and individual intuition to embracing a culture of data-driven decision-making. This involves overcoming skepticism, investing in new skill sets for lawyers to become “data-literate,” and tackling the immense technical challenges of sourcing, cleaning, and integrating the necessary data. Many legal organizations are not structured to handle large-scale data engineering projects, making the cultural and operational transition the most difficult part of the process.28

3. If an AI is used to help invent a new drug, who gets the patent?

Under current U.S. law, the answer is clear: only human beings can be named as inventors on a patent. The Thaler v. Vidal case cemented this principle. However, this does not mean AI-assisted inventions are unpatentable. According to 2024 guidance from the USPTO, a patent can be granted for an invention developed with the help of AI as long as one or more humans made a “significant contribution” to the conception of the invention. Simply owning or overseeing the AI, or pressing “run,” is not sufficient. A human must be able to demonstrate meaningful intellectual input into the inventive process, such as designing the specific problem for the AI to solve, creatively modifying the AI’s output, or using the output to design a successful experiment.47

4. How can we trust the predictions of a “black box” AI model in a legal setting?

The simple answer is that we can’t, and we shouldn’t. This is precisely why the field of legal analytics is moving rapidly away from opaque models and towards Explainable AI (XAI). In a legal setting, a prediction without a rationale is useless. Techniques like LIME and SHAP are specifically designed to open up the “black box” and make a model’s reasoning transparent. They can show which specific factors—such as the choice of jurisdiction, the judge’s history, or a particular piece of prior art—were most influential in driving a prediction. This allows a lawyer to scrutinize the AI’s reasoning, validate it against their own expertise, and build a more robust legal argument. In law, an unexplainable prediction is an unusable one.54

5. Is building an in-house predictive model better than buying a solution from a vendor like Pre/Dicta?

This is a classic “build vs. buy” strategic trade-off, and there is no single right answer. Building in-house offers complete control, deep customization to your specific needs, and proprietary ownership of the resulting models and data assets. However, it is extremely expensive and resource-intensive, requiring a dedicated team of data scientists, engineers, and legal experts, and can take years to develop. Buying a solution from a specialized vendor is much faster, provides immediate access to their expertise and pre-built data integrations, and is typically more cost-effective upfront. The downsides are less customization, potential data security concerns, and a long-term dependency on the vendor. The crucial factor to remember is that the most valuable and difficult-to-replicate asset is the underlying high-quality, integrated data. The decision should hinge on whether your organization has the long-term commitment and resources to build and maintain this data asset better than a specialized third party.

Works cited

- Managing Drug Patent Litigation Costs: A Strategic Playbook for the Pharmaceutical C-Suite, accessed August 9, 2025, https://www.drugpatentwatch.com/blog/managing-drug-patent-litigation-costs/

- How Much Does a Drug Patent Cost? A Comprehensive Guide to Pharmaceutical Patent Expenses – DrugPatentWatch, accessed August 9, 2025, https://www.drugpatentwatch.com/blog/how-much-does-a-drug-patent-cost-a-comprehensive-guide-to-pharmaceutical-patent-expenses/

- Best Practices for Drug Patent Portfolio Management: Leveraging Patent Data for Competitive Advantage – DrugPatentWatch, accessed August 9, 2025, https://www.drugpatentwatch.com/blog/best-practices-for-drug-patent-portfolio-management-2/

- Inter Partes Review (IPR) Is Necessary to Lower Drug Prices by …, accessed August 9, 2025, https://accessiblemeds.org/wp-content/uploads/2024/11/AAM-IssueBrief-InterPartesReview_0.pdf

- What is inter partes review and why does it matter? | PhRMA, accessed August 9, 2025, https://phrma.org/blog/what-is-inter-partes-review-and-why-does-it-matter

- Patent Requirements – BitLaw, accessed August 9, 2025, https://www.bitlaw.com/patent/requirements.html

- ANDA Litigation: Strategies and Tactics for Pharmaceutical Patent …, accessed August 9, 2025, https://www.drugpatentwatch.com/blog/anda-litigation-strategies-and-tactics-for-pharmaceutical-patent-litigators/

- 2141-Examination Guidelines for Determining Obviousness Under …, accessed August 9, 2025, https://www.uspto.gov/web/offices/pac/mpep/s2141.html

- An eDiscovery handbook for ANDA Litigation – Knovos, accessed August 9, 2025, https://www.knovos.com/guides/an-ediscovery-handbook-for-anda-litigation/

- Our guide to understanding ANDA litigation. – IntrepidX, accessed August 9, 2025, https://intrepidx.com/our-guide-to-understanding-anda-litigation/

- 2024 Hatch-Waxman Litigation Trends and Key Federal Circuit Decis, accessed August 9, 2025, https://natlawreview.com/article/2024-hatch-waxman-year-review

- The Impact of Inter Partes Review on Biopharmaceutical Patents – PatentPC, accessed August 9, 2025, https://patentpc.com/blog/inter-partes-review-on-biopharmaceutical-patents

- Trial Statistics Trends at the PTAB: 2024 Edition, accessed August 9, 2025, https://www.ptablaw.com/2025/01/06/trial-statistics-trends-at-the-ptab-2024-edition/

- PTAB AIA FY2024 Roundup: Key Insights and Statistics, accessed August 9, 2025, https://www.ptablitigationblog.com/ptab-aia-fy2024-roundup-key-insights-and-statistics/

- IPR and biopharma patents: what the statistics show – Akin Gump, accessed August 9, 2025, https://www.akingump.com/a/web/39881/aoi5X/ipr-and-biopharma-patents_-what-the-statistics-show_gf.pdf

- Recent Statistics Show PTAB Invalidation Rates Continue to Climb – IPWatchdog.com, accessed August 9, 2025, https://ipwatchdog.com/2024/06/25/recent-statistics-show-ptab-invalidation-rates-continue-climb/id=178226/

- United States Patent and Trademark Office – USPTO Open Data Portal, accessed August 9, 2025, https://developer.uspto.gov/api-catalog

- Data on Patent Law: Sources and Uses Explained – Certum Group, accessed August 9, 2025, https://certumgroup.com/blog/litigation-news/data-on-patent-law-sources-and-uses-explained/

- API Services for Patent Assignment Search – USPTO, accessed August 9, 2025, https://assignment-api.uspto.gov/documentation-patent/

- API Purpose – PatentsView, accessed August 9, 2025, https://patentsview.org/apis/purpose

- PACER Case Locator, accessed August 9, 2025, https://pcl.uscourts.gov/

- Public Access to Court Electronic Records | PACER: Federal Court Records, accessed August 9, 2025, https://pacer.uscourts.gov/

- DrugPatentWatch | Software Reviews & Alternatives – Crozdesk, accessed August 9, 2025, https://crozdesk.com/software/drugpatentwatch

- DrugPatentWatch is a time-saving powerhouse, accessed August 9, 2025, https://www.drugpatentwatch.com/

- The Pharmaceutical Patent Playbook: Forging Competitive Dominance from Discovery to Market and Beyond – DrugPatentWatch, accessed August 9, 2025, https://www.drugpatentwatch.com/blog/developing-a-comprehensive-drug-patent-strategy/

- Basic Understanding of Legal Analytics – Pre/Dicta, accessed August 9, 2025, https://www.pre-dicta.com/understanding-legal-analytics-ai-data-driven-insights/

- Introduction to Legal Analytics and Predictive Modelling – KorumLegal, accessed August 9, 2025, https://www.korumlegal.com/blog/introduction-to-legal-analytics-and-predictive-modelling

- Predictive Analytics and Law.v2.docx, accessed August 9, 2025, https://ncji.org/wp-content/uploads/2021/11/Symposium-Draft-Surden-Predictive-Analytics-and-Law.pdf

- Bias in AI | Chapman University, accessed August 9, 2025, https://www.chapman.edu/ai/bias-in-ai.aspx

- Using Natural Language Processing (NLP) for Detecting Overlapping Patent Claims, accessed August 9, 2025, https://www.effectualservices.com/article/using-natural-language-processing-nlp-detecting-overlapping-patent-claims

- A Survey on Patent Analysis: From NLP to … – ACL Anthology, accessed August 9, 2025, https://aclanthology.org/2025.acl-long.419.pdf

- Artificial Intelligence Exploring the Patent Field – arXiv, accessed August 9, 2025, https://arxiv.org/html/2403.04105v1

- A Survey on Patent Analysis: From NLP to Multimodal AI – arXiv, accessed August 9, 2025, https://arxiv.org/html/2404.08668v3

- Patent Challenges in Natural Language Processing (NLP) Technologies – PatentPC, accessed August 9, 2025, https://patentpc.com/blog/patent-challenges-in-natural-language-processing-nlp-technologies

- Random Forests | Minitab, accessed August 9, 2025, https://www.minitab.com/en-us/solutions/analytics/statistical-analysis-predictive-analytics/random-forests/

- Random Forests for Genomic Data Analysis – PMC, accessed August 9, 2025, https://pmc.ncbi.nlm.nih.gov/articles/PMC3387489/

- Exploitation of surrogate variables in random forests for unbiased analysis of mutual impact and importance of features – Oxford Academic, accessed August 9, 2025, https://academic.oup.com/bioinformatics/article/39/8/btad471/7234071

- Text Categorization with Support Vector Machines … – CS@Cornell, accessed August 9, 2025, https://www.cs.cornell.edu/~tj/publications/joachims_98a.pdf

- Empirical Study of Deep Learning for Text Classification in Legal Document Review – arXiv, accessed August 9, 2025, https://arxiv.org/pdf/1904.01723

- A Statistical Learning Model of Text Classification with Support Vector Machines – CS@Cornell, accessed August 9, 2025, https://www.cs.cornell.edu/~tj/publications/joachims_01a.pdf

- Predicting patent lawsuits with machine learning – IDEAS/RePEc, accessed August 9, 2025, https://ideas.repec.org/a/eee/irlaec/v80y2024ics0144818824000486.html

- 10 Network Analysis for Interpreting Patent Data: A Preliminary, Visual Approach – CABI Digital Library, accessed August 9, 2025, https://www.cabidigitallibrary.org/doi/pdf/10.5555/20073163488

- Analysis of Patent Application Attention: A Network Analysis Method – Frontiers, accessed August 9, 2025, https://www.frontiersin.org/journals/physics/articles/10.3389/fphy.2022.893348/full

- Generative AI Legal Use Cases & Examples in 2025, accessed August 9, 2025, https://research.aimultiple.com/generative-ai-legal/

- The Impact of Generative AI on the Legal Profession – USI Affinity, accessed August 9, 2025, https://www.usiaffinity.com/news-center/news-center-articles/risk-management/2024-q4/the-impact-of-generative-ai-on-the-legal-profession/

- Justice Meets Algorithms: The Rise of Gen AI in Law Firms – New York State Bar Association, accessed August 9, 2025, https://nysba.org/justice-meets-algorithms-the-rise-of-gen-ai-in-law-firms/

- Patentability and predictability in AI-assisted drug discovery – Akin Gump, accessed August 9, 2025, https://www.akingump.com/a/web/kAJxgkjHh1XoyABdxDtAf1/8MiCMH/patentability-and-predictability-in-ai-assisted-drug-discovery-web-v3.pdf