The generic pharmaceutical industry runs on a contradiction. It is one of the most consequential public health interventions of the last half-century, a global market projected to exceed $775 billion by 2033, and it is simultaneously teetering on economic unsustainability. The forces of competition that deliver affordable medicine are the same forces compressing margins, destabilizing supply chains, and threatening the long-term viability of the manufacturers who supply it.

For three decades, the portfolio management playbook was simple: identify a blockbuster approaching patent expiry, reverse-engineer the formulation, navigate the regulatory process, and race to market. The decisions were reactive, often opportunistic, driven by spreadsheets and internal expertise. That era is finished.

Today’s generic portfolio manager faces a convergence of pressures that no spreadsheet can handle. Consolidated buyers with enormous purchasing leverage squeeze prices toward zero. A Byzantine global regulatory maze demands multi-jurisdiction strategies that can cost tens of millions before a single tablet ships. And innovator companies deploy patent thickets, product hopping, and authorized generics with systematic precision, making Paragraph IV (PIV) litigation not a risk but a designed-in cost of doing business.

The volume and velocity of information required to make consistently profitable decisions — spanning patent law, global regulations, clinical science, and market economics simultaneously — have outpaced human analytical capacity. Artificial intelligence is the enabling technology for what comes next. Not as a buzzword, but as a deployable operational capability that can synthesize disparate datasets, forecast outcomes with quantifiable probability, and transform capital allocation from an educated guess into a data-driven science.

This guide covers the complete picture: the mechanics of the crisis, the technical architecture of AI-powered solutions, and a rigorous implementation blueprint. The target audience is the professional who needs to make or influence decisions worth millions of dollars — the IP team leader evaluating a PIV challenge, the portfolio manager building a five-year pipeline, the R&D lead defending a formulation budget, the institutional investor sizing up an acquisition target’s AI maturity.

Part I: The Affordability Paradox — A System Under Structural Stress

The generic drug industry does not face a cyclical downturn. It faces a structural problem built into its incentive architecture. The same competitive dynamics that made generics the backbone of affordable medicine globally have created a market where many products are simply no longer economically viable to produce. Understanding the precise mechanics of this problem is the prerequisite for understanding why AI is not optional — it is the only lever powerful enough to shift the equation.

Part II: The Economics of Price Erosion, IRA Disruption, and IP Valuation at the Portfolio Level

The Predictable Price Cliff

Generic drug price erosion follows a predictable and brutal curve. A single generic entrant typically cuts the brand reference price by 30 to 39 percent. Two or three entrants push that reduction to 50 to 70 percent. Once the market reaches six or more competitors, prices collapse by 85 to 95 percent from the original brand level. This is not a market anomaly. It is the designed outcome of Hatch-Waxman — and it creates an extraordinarily narrow window for profitable operation.

- ~35%: price reduction with first generic entrant vs. brand

- ~65%: price reduction with 3-5 generic competitors

- ~90%: price reduction with 6+ competitors

- 30%: FDA-approved generics never launched due to market unprofitability

- 3,000+: generic products withdrawn in the last decade for economic reasons

The practical implication for portfolio managers is stark: market entry position is the primary determinant of financial return, not product quality. A generic that earns $200 million for the first-to-market filer earns under $10 million for the fifth entrant on the same drug. Speed-to-market and accurate competitive intensity forecasting are therefore not operational priorities — they are the core financial levers of the entire business model.

Table 1 — Price Erosion Curve vs. Number of Generic Competitors

| Generic Competitors in Market | Approx. Price Reduction vs. Brand | Revenue Window (Months, Illustrative) | Strategic Position |
|---|---|---|---|
| 1 (first-to-market) | 30% – 39% | 0 – 6 (exclusivity window) | High margin; capture maximum share |
| 2 – 3 | 50% – 70% | 6 – 18 | Acceptable margins with volume |
| 4 – 6 | 70% – 85% | 18 – 36 | Thin margins; cost leadership required |
| 7+ | 85% – 95% | 36+ | Commoditized; avoid unless manufacturing-cost leader |
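The bands in Table 1 can be encoded as a simple lookup for use in early screening. The sketch below is only an illustration: the function names are hypothetical, and the tiers are transcribed from the illustrative table rather than fitted to market data.

```python
# Tiers transcribed from Table 1:
# (min competitors, max competitors, low price cut, high price cut).
# The table is illustrative, so treat these as rough bands, not forecasts.
EROSION_TIERS = [
    (1, 1, 0.30, 0.39),
    (2, 3, 0.50, 0.70),
    (4, 6, 0.70, 0.85),
    (7, None, 0.85, 0.95),
]


def price_reduction_range(n_competitors):
    """Return the (low, high) price reduction vs. brand for a competitor count."""
    if n_competitors < 1:
        raise ValueError("need at least one generic competitor")
    for min_n, max_n, low_cut, high_cut in EROSION_TIERS:
        if n_competitors >= min_n and (max_n is None or n_competitors <= max_n):
            return (low_cut, high_cut)


def generic_price_range(brand_price, n_competitors):
    """Return the (lowest, highest) generic price implied by the band."""
    low_cut, high_cut = price_reduction_range(n_competitors)
    return (brand_price * (1 - high_cut), brand_price * (1 - low_cut))


# Walk a hypothetical $100 brand price through each tier.
for n in (1, 3, 5, 8):
    print(n, price_reduction_range(n), generic_price_range(100.0, n))
```

A production forecast would replace the fixed bands with a fitted erosion curve per therapeutic class, but a lookup like this is often enough for a first-pass filter.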

The IRA’s ‘Patent Slope’ Effect: A Structural Shift in Generic Economics

The Inflation Reduction Act (IRA) introduced a mechanism that fundamentally redraws the financial model for a subset of high-revenue generic opportunities. Under the IRA, Medicare can negotiate the price of certain brand-name drugs before their patents expire. This compresses the brand price prior to the patent cliff — transforming what was once a sharp revenue drop into a gradual slope.

The strategic consequence is significant and underappreciated by many portfolio teams. When the IRA drives the brand price down from, say, $400 per unit to $180 per unit before patent expiry, the absolute revenue pool available to generic entrants shrinks proportionally. A product that once represented a $400 million annual generic opportunity at 60% market share and 40% price parity may represent roughly $180 million under post-negotiation pricing, before any further loss of share or parity. Projects already deep in development that were underwritten against pre-IRA economics need re-evaluation. Any AI-powered forecasting model that does not incorporate real-time IRA negotiation status for candidate drugs will systematically over-value large-cap Medicare-reliant targets.
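The proportional shrinkage described above can be written as a one-line scaling. This is a minimal sketch under a strict proportionality assumption; the function name is hypothetical, and real models would also adjust share and parity assumptions.

```python
def post_negotiation_opportunity(pre_opportunity, pre_brand_price, negotiated_price):
    """Scale a generic revenue estimate by the ratio of negotiated to original brand price.

    Assumes generic volume, share, and price parity are unchanged, so revenue
    moves one-for-one with the brand reference price.
    """
    if pre_brand_price <= 0:
        raise ValueError("pre_brand_price must be positive")
    return pre_opportunity * (negotiated_price / pre_brand_price)


# Example prices from the text: $400 per unit negotiated down to $180 per unit.
estimate = post_negotiation_opportunity(400e6, 400.0, 180.0)
print(f"${estimate / 1e6:.0f}M")
```

Strictly proportional scaling of the $400-to-$180 example gives roughly $180 million; any additional erosion of market share or price parity would push the figure lower.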

The Buyer’s Gauntlet: PBM Leverage and Its IP Consequences

Pharmacy Benefit Managers (PBMs) control access to the largest U.S. drug purchasing pools. Three PBMs — CVS Caremark, Express Scripts (Cigna), and OptumRx (UnitedHealth) — together manage approximately 80 percent of U.S. commercial pharmacy benefit claims. Their formulary decisions can make or break a generic’s commercial viability regardless of FDA approval status.

The anti-competitive dynamic here is well-documented: PBMs earn larger rebates from brand manufacturers than from generic companies, which creates a perverse incentive to keep higher-priced branded drugs on preferred formulary tiers while relegating lower-cost generics to higher cost-sharing tiers. This dynamic is particularly acute for the first 180 days after a PIV-filer’s exclusivity begins, when the generic price has not yet fully eroded and the brand company still pays substantial rebates to maintain formulary positioning. Portfolio managers must factor PBM leverage explicitly into their revenue models, particularly for drugs where the brand company has historically been aggressive in rebate contracting.

IP Valuation as a Balance Sheet Asset: What Generic Firms Get Wrong

Most generic pharmaceutical companies treat patent litigation risk as an expense line — the legal budget for a given PIV challenge. This is the wrong mental model. The correct framework treats the outcome of a PIV challenge as a contingent asset with a probability-weighted net present value (NPV) that belongs on the strategic balance sheet.

Framework: Risk-Adjusted NPV for a Paragraph IV Generic Asset

rNPV = [P(tech) × P(reg) × P(lit)] × NPV(commercial) − [C(dev) + C(legal) + C(reg)]

Where P(tech) = probability of technical/formulation success; P(reg) = probability of FDA approval; P(lit) = probability of winning or settling the PIV litigation; NPV(commercial) = present value of projected revenues; C(dev), C(legal), C(reg) = development, legal, and regulatory costs.

Traditional portfolio management assigns rough estimates to P(tech), P(reg), and P(lit) based on historical averages or expert opinion. AI changes this by generating product-specific, evidence-based probabilities for each variable. A 10-percentage-point improvement in the accuracy of P(lit) estimates across a ten-product portfolio can shift capital allocation worth hundreds of millions of dollars to higher-probability opportunities.
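To make the framework concrete, the rNPV formula can be expressed directly as a function. The sketch below uses hypothetical inputs (in $ millions) chosen only to show how a 10-percentage-point change in P(lit) moves the result; none of the numbers come from a real product.

```python
def rnpv(p_tech, p_reg, p_lit, npv_commercial, c_dev, c_legal, c_reg):
    """Risk-adjusted NPV: probability-weighted commercial value minus costs."""
    for p in (p_tech, p_reg, p_lit):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_tech * p_reg * p_lit * npv_commercial - (c_dev + c_legal + c_reg)


# Hypothetical product, all money values in $ millions.
base = rnpv(0.90, 0.85, 0.50, 200.0, c_dev=5.0, c_legal=8.0, c_reg=1.0)
revised = rnpv(0.90, 0.85, 0.60, 200.0, c_dev=5.0, c_legal=8.0, c_reg=1.0)
print(round(base, 1), round(revised, 1))
```

With these illustrative inputs, moving P(lit) from 0.50 to 0.60 lifts rNPV from about 62.5 to about 77.8, which is why the precision of the litigation estimate matters so much when ranking candidates against each other.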

Drug Shortages: The Public Health Arithmetic of Market Exit

When generic firms cannot earn a sufficient return, they exit. Over the last decade, approximately 3,000 generic drug products exited the U.S. market for purely economic reasons — not safety concerns, not supply problems, but financial unsustainability. This exit pattern drives the chronic drug shortage crisis that costs U.S. hospitals an estimated $216 million annually in labor costs alone, not counting the inflated grey-market prices paid to secure essential medicines when primary supply chains fail. AI-powered portfolio optimization is therefore not just a commercial tool. It sustains the supply of products that would otherwise be abandoned, which has direct public health consequences.

Key Takeaways

Part II: Economics of Erosion

- Price erosion follows a predictable curve; entry position, not quality, drives financial returns. First-to-market advantage in generics is worth an order of magnitude more than being the fifth entrant.

- The IRA’s pre-expiry price negotiation mechanism requires portfolio teams to re-run financial models on any Medicare Part D-exposed drug already in the development pipeline. The ‘patent slope’ replaces the cliff for these assets.

- PBM formulary dynamics can suppress generic uptake for 12 to 18 months post-launch, particularly when brand companies deploy aggressive rebate contracting. This delay must be modeled explicitly, not assumed away.

- The correct framework for IP risk is not an expense but a contingent asset valued via risk-adjusted NPV. AI improves the precision of all three probability inputs (technical, regulatory, litigation) and thus improves capital allocation accuracy.

- The 3,000+ product exits over the past decade are a measurable downstream consequence of unoptimized portfolio management. AI-driven optimization creates real public health value by improving the economic viability of essential generic drugs.

Investment Strategy Note

Scoring Portfolio Value in the IRA Era

When analyzing a generic pharmaceutical company’s pipeline for investment purposes, the first screen should identify what percentage of late-stage ANDA candidates target drugs now subject to, or likely to be subject to, IRA negotiation. Any pipeline weighted toward large-cap Medicare drugs without an updated post-IRA rNPV model should be treated as carrying unacknowledged impairment risk. Conversely, companies that have already rebuilt their commercial models around post-negotiation pricing — and whose AI forecasting tools reflect this — have a more credible earnings forecast.

The second screen: is the portfolio biased toward first-to-file PIV positions? Companies with a high ratio of first-to-file PIV challenges to total ANDA portfolio are structurally better positioned than those filing fourth or fifth on already-contested drugs. AI-powered candidate selection improves this ratio systematically.

Part III: The Patent Gauntlet — Hatch-Waxman Mechanics, Patent Thickets, and the Complete Evergreening Technology Roadmap

The Hatch-Waxman Architecture: How Litigation Became a Business Process

The Drug Price Competition and Patent Term Restoration Act of 1984 — universally called Hatch-Waxman — is the foundational statute governing the U.S. generic drug market. It created a deliberate trade: generic companies gain a streamlined approval pathway (the ANDA) that avoids repeating innovator clinical trials, and in exchange they must formally confront the brand’s patents listed in the FDA’s Orange Book.

The mechanism for confrontation is the Paragraph IV (PIV) certification, in which the ANDA filer attests that the listed patent is invalid, unenforceable, or will not be infringed by the generic product. Submitting an ANDA with a PIV certification is an artificial act of infringement that triggers the litigation process. Upon receiving a PIV notice, the brand company has 45 days to file suit. If it does, FDA cannot grant final approval for the generic for up to 30 months (the statutory stay), unless the court resolves the case sooner. The first company to file a substantially complete ANDA with a PIV certification wins 180 days of marketing exclusivity upon launch. That exclusivity window, for a blockbuster drug, can be worth $500 million to $1 billion.

The 30-month stay is the brand company’s most valuable defensive tool. Even when the underlying litigation is weak, filing suit and obtaining the stay buys 30 months of continued monopoly pricing. This is worth hundreds of millions of dollars for most blockbusters and effectively subsidizes the cost of litigation — which typically runs $5 million to $10 million per side for standard cases and can exceed $50 million for complex patent thicket battles.
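The three statutory clocks described above (45 days to sue, a 30-month stay, 180 days of exclusivity) translate into simple date arithmetic for timeline models. The sketch below is a simplification: it assumes the stay is counted from receipt of the PIV notice and the exclusivity from the launch date, and it ignores court-ordered changes to the stay and forfeiture events. The function names are hypothetical.

```python
import calendar
from datetime import date, timedelta


def add_months(d, months):
    """Shift a date by whole calendar months, clamping to the month's last day."""
    total = d.year * 12 + (d.month - 1) + months
    year, month_index = divmod(total, 12)
    last_day = calendar.monthrange(year, month_index + 1)[1]
    return date(year, month_index + 1, min(d.day, last_day))


def piv_milestones(notice_received, launch_date=None):
    """Return key dates implied by the 45-day, 30-month, and 180-day rules."""
    milestones = {
        "suit_deadline": notice_received + timedelta(days=45),
        "stay_expiry": add_months(notice_received, 30),
    }
    if launch_date is not None:
        # Exclusivity is assumed to run from first commercial launch.
        milestones["exclusivity_end"] = launch_date + timedelta(days=180)
    return milestones


# Hypothetical notice date; launch assumed on the day the stay expires.
m = piv_milestones(date(2024, 1, 15), launch_date=date(2026, 7, 15))
for name, when in m.items():
    print(name, when.isoformat())
```

Feeding these dates into the revenue model makes the cost of each month of stay explicit instead of leaving it buried in a single launch-date assumption.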

The Complete Evergreening Technology Roadmap

Innovator companies do not wait passively for their composition-of-matter (COM) patents to expire. They build defensive IP architectures — commonly called patent thickets — designed to extend commercial exclusivity well beyond the original patent term. Understanding the specific mechanics of each evergreening layer is essential for generic IP teams building PIV challenge strategies and for AI models learning to score patent vulnerability accurately.

Evergreening Technology Roadmap: 7 Defensive Patent Layers

1. Composition-of-Matter (COM) Patent

The foundational patent covering the active pharmaceutical ingredient (API) itself. Typically the strongest and hardest to invalidate; courts uphold COM patents at significantly higher rates than secondary patents. Because COM patents are usually filed around the IND stage, much of the patent term elapses before approval; even with patent term restoration, they commonly expire 7 to 10 years after the drug reaches market. The 5-year NCE data exclusivity runs as a separate clock.

2. Polymorph and Crystalline Form Patents

After COM patent filing, innovators identify and patent specific crystalline forms, hydrates, or polymorphic variants of the API that may offer stability or bioavailability advantages. These are frequently vulnerable to obviousness challenges, since courts have found that a skilled chemist would routinely screen for alternative solid forms, but they require resource-intensive prior art discovery to invalidate. GlaxoSmithKline’s paroxetine hemihydrate patent dispute is the canonical example: the PIV challenge succeeded, but only after years of costly litigation.

3. Formulation and Delivery System Patents

These patents cover specific excipient combinations, coating technologies, controlled-release mechanisms, or delivery devices. Extended-release (ER) formulations are a common evergreening vehicle: when a drug’s immediate-release form approaches COM patent expiry, the brand files ER patents and markets the new formulation as clinically superior. Purdue Pharma’s OxyContin ER patents are a widely studied example of this tactic. Formulation patents are structurally more vulnerable than COM patents because they often rely on known excipients in predictable combinations — a classic obviousness attack vector.

4. Method-of-Use (MOU) Patents

These cover specific dosing regimens, patient populations, or treatment indications. A key generic defense against MOU patents is the carve-out (also called a Section viii statement under 21 CFR 314.94(a)(12)(iii)): the generic ANDA labels its product for a non-patented indication only, effectively avoiding infringement. However, skinny-label carve-outs are now under legal pressure following the GlaxoSmithKline v. Teva litigation, where the Federal Circuit found that a generic can be liable for induced infringement of a MOU patent even with a carved-out label if marketing materials encourage use in the patented indication. This ruling has materially increased the risk calculation for MOU carve-out strategies.

5. Metabolite and Prodrug Patents

Innovators patent the active metabolite of the API (the compound formed in the body after drug administration) separately from the parent molecule. This creates a separate patent family that can effectively extend exclusivity even after the original API patent expires. The closely related chiral switch follows the same logic, and esomeprazole (AstraZeneca’s Nexium) is the textbook case: it is the S-enantiomer of omeprazole, patented separately as omeprazole’s primary patents neared expiry, and it generated billions in additional exclusivity revenue.

6. Product Hopping

Just before a patent expires on a product, the brand makes a minor formulation change — switching from tablet to capsule, adding an enteric coating, or converting immediate-release to extended-release — obtains new patents, heavily markets the ‘improved’ version, and withdraws the original. Patients and providers switch to the new formulation, which is now covered by fresh patents. The generic pipeline for the old formulation is rendered commercially unviable because no significant patient population remains on it. Bristol-Myers Squibb’s switch of Glucophage to Glucophage XR is the classic example in this category.

7. Pediatric Exclusivity Extension

Under the Best Pharmaceuticals for Children Act (BPCA), brands can earn a six-month exclusivity extension by completing pediatric studies that FDA requests in a Written Request. (The Pediatric Research Equity Act separately mandates certain pediatric studies but does not itself grant exclusivity.) This extension applies to all Orange Book-listed patents and is stacked on top of existing exclusivity. For a brand drug generating $2 billion annually, a six-month pediatric exclusivity extension is worth approximately $1 billion in protected revenue. Generic teams must track pediatric study status in FDA databases as part of their timeline modeling.

Authorized Generics: IP Valuation and the Strategic Calculus of a Spoiler

An authorized generic (AG) is a brand-name drug sold as a generic, typically by the innovator company itself or through a licensing arrangement with a distributor. The brand launches the AG the same day the first-filer’s 180-day exclusivity begins — a tactic designed to split market share and dramatically reduce the financial value of the exclusivity prize.

The AG is one of the most consequential and underanalyzed variables in generic portfolio economics. A first-filer expecting to capture 80 to 90 percent market share during exclusivity may find themselves sharing with an AG and capturing only 40 to 50 percent. The revenue reduction is not linear with market share: it is compounded by the price pressure that AG competition creates during the exclusivity window, dragging average selling prices down further than a monopoly period would allow. For a drug projected to generate $400 million in exclusivity-period revenue, an AG launch can reduce realized revenue to $160 million — a 60 percent haircut that can completely invert the rNPV of the development investment.

AI models that incorporate historical AG launch behavior by specific brand companies can flag this risk during candidate scoring. Some brands launch AGs systematically (Pfizer, AstraZeneca) while others do so selectively. Training on this pattern allows the model to assign a probability-weighted AG adjustment to the revenue forecast, producing a materially more accurate commercial case.
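The probability-weighted AG adjustment amounts to an expected-value calculation over two scenarios. The sketch below reuses the $400 million and $160 million figures from the example above; the 70 percent launch probability is a hypothetical input standing in for whatever a trained model would output for a given brand.

```python
def ag_adjusted_revenue(revenue_without_ag, revenue_with_ag, p_ag_launch):
    """Expected exclusivity-period revenue given the probability of an AG launch."""
    if not 0.0 <= p_ag_launch <= 1.0:
        raise ValueError("p_ag_launch must lie in [0, 1]")
    return (1 - p_ag_launch) * revenue_without_ag + p_ag_launch * revenue_with_ag


# Revenue scenarios in $ millions; the AG probability is an assumed model output.
expected = ag_adjusted_revenue(400.0, 160.0, p_ag_launch=0.7)
print(round(expected, 1))
```

Under these assumptions the expected exclusivity revenue is about $232 million, well below the $400 million headline case, and that lower figure is the one that should flow into the rNPV calculation.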

Pay-for-Delay Settlements After FTC v. Actavis

Before the Supreme Court’s 2013 FTC v. Actavis decision, brand companies routinely settled PIV litigation by paying the generic challenger to delay its launch, the so-called reverse payment settlements. The Court ruled that these arrangements can constitute antitrust violations and are subject to rule-of-reason analysis, materially reducing their frequency. However, they have not disappeared. Non-cash settlements, in which brand companies provide supply agreements, co-promotion rights, or milestone payments rather than direct cash, continue to draw antitrust scrutiny. Generic portfolio teams must monitor FTC enforcement activity in this area, as an accepted settlement that later attracts antitrust investigation can create significant contingent liability.

The Orange Book Transparency Act: A New IP Battlefield

The Orange Book Transparency Act, signed into law in 2021, requires drug manufacturers to certify that device components listed in the Orange Book meet statutory listing requirements. The FTC has used this authority aggressively, challenging Orange Book listings for inhaler and other drug-device combination patents that it argues should not be listed. When a patent is delisted following an FTC challenge, any 30-month stay associated with that patent is immediately invalidated — potentially accelerating a generic’s approval by years. For portfolio teams pursuing complex generics involving drug-device combinations, monitoring FTC Orange Book delisting petitions has become a required intelligence function.

Key Takeaways

Part III: The Patent Gauntlet & Evergreening

- Evergreening operates in at least seven distinct patent layers. Generic IP teams need a full-stack analysis of each layer for every candidate drug, not just the primary COM patent review. AI-powered patent landscaping can execute this multi-layer analysis in hours instead of weeks.

- The skinny-label carve-out strategy for method-of-use patents carries elevated legal risk post-GlaxoSmithKline v. Teva. Any PIV strategy relying on a carve-out should receive explicit legal review under the induced infringement doctrine before the ANDA is filed.

- Authorized generics can reduce first-filer exclusivity-period revenue by 40 to 60 percent. Failure to model AG probability is one of the most common causes of commercial forecast error in generic portfolios.

- FTC Orange Book delisting activity is a material event risk for drug-device combination generics. Active monitoring of FTC petition activity can reveal patent timeline accelerations worth modeling immediately.

- Pay-for-delay settlements still exist in non-cash form and carry FTC antitrust risk. Any settlement involving brand supply agreements or co-promotion rights should receive antitrust counsel review.

Investment Strategy Note

Building a Patent Thicket-Adjusted Competitive Timeline

When an analyst values a generic pipeline asset, the starting assumption should be that the official COM patent expiry date understates the actual time to market by two to five years for any drug generating more than $500 million annually in brand revenue. Apply a ‘thicket premium’ to the timeline by cataloging secondary, formulation, and method-of-use patents and estimating litigation duration based on historical resolution times for similar patent types in likely venues. For drugs with confirmed pediatric exclusivity extensions, add six months to all timelines as a hard constraint. This adjusted timeline materially changes the NPV calculation, often making what appears to be an attractive first-to-file position significantly less valuable.
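The thicket premium can be applied mechanically once the COM expiry date is known. The sketch below is one possible encoding: it adds the two-to-five-year range for drugs above the $500 million revenue threshold and six months for pediatric exclusivity. Treating drugs below the threshold as carrying no premium is an assumption made here for simplicity, and the function names are hypothetical.

```python
import calendar
from datetime import date


def add_months(d, months):
    """Shift a date by whole calendar months, clamping to the month's last day."""
    total = d.year * 12 + (d.month - 1) + months
    year, month_index = divmod(total, 12)
    last_day = calendar.monthrange(year, month_index + 1)[1]
    return date(year, month_index + 1, min(d.day, last_day))


def thicket_adjusted_window(com_expiry, annual_brand_revenue, pediatric_exclusivity=False):
    """Return (earliest, latest) plausible entry dates after the thicket premium."""
    # Two to five years of delay for drugs above $500M in annual brand revenue.
    # Assumption: no premium below the threshold.
    premium_months = (24, 60) if annual_brand_revenue > 500e6 else (0, 0)
    extra = 6 if pediatric_exclusivity else 0
    return tuple(add_months(com_expiry, m + extra) for m in premium_months)


# Hypothetical $2B brand with confirmed pediatric exclusivity.
earliest, latest = thicket_adjusted_window(date(2028, 3, 1), 2e9, pediatric_exclusivity=True)
print(earliest.isoformat(), latest.isoformat())
```

Running the NPV at both ends of the window gives a range rather than a point estimate, which is usually a more honest representation of a contested asset.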

Part IV: The Global Regulatory Maze — GDUFA III Economics, Bioequivalence Standards, and the Nitrosamine Crisis as a Portfolio Risk Framework

ANDA Pathway and GDUFA III: The True Cost of Entry

The ANDA pathway under Hatch-Waxman is a streamlined approval mechanism that eliminates the need to repeat clinical efficacy trials — but streamlined does not mean cheap. Under GDUFA III (the Generic Drug User Fee Amendments, third reauthorization, covering FY2023 to FY2027), the fees for FY2025 are: ANDA filing fee of $321,920; Drug Master File (DMF) fee of $95,084; and program and facility fees that can collectively reach $1 million or more for a manufacturing site. A company filing ten ANDAs annually and maintaining multiple manufacturing facilities could pay $5 million to $8 million in GDUFA fees alone before a single regulatory review milestone is reached.

These fees create a hard lower bound on the revenue a generic product must generate to justify filing. At a standard portfolio hurdle rate of 3x invested capital over the product lifecycle, a $400,000 combined ANDA and DMF fee floor requires a minimum risk-adjusted revenue expectation of approximately $1.2 million from the generic product at filing. For simple oral solids in saturated therapeutic classes, this threshold eliminates many candidates that might have been marginally viable before GDUFA fees were introduced at their current scale. AI-powered candidate screening must encode this financial filter as a hard constraint during initial opportunity identification.
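The fee floor can be encoded as a hard pre-filter along the lines described above. This sketch uses the FY2025 ANDA and DMF fees quoted in the text and the 3x hurdle multiple; the function names are hypothetical, and program and facility fees are deliberately left out.

```python
# FY2025 GDUFA III fees as quoted in the text, in dollars.
ANDA_FEE_FY2025 = 321_920
DMF_FEE_FY2025 = 95_084


def min_risk_adjusted_revenue(hurdle_multiple=3, include_dmf=True):
    """Minimum risk-adjusted revenue needed to clear the fee floor at the hurdle rate."""
    fees = ANDA_FEE_FY2025 + (DMF_FEE_FY2025 if include_dmf else 0)
    return hurdle_multiple * fees


def passes_fee_filter(risk_adjusted_revenue, hurdle_multiple=3):
    """Hard screening constraint: reject candidates below the fee-implied floor."""
    return risk_adjusted_revenue >= min_risk_adjusted_revenue(hurdle_multiple)


print(min_risk_adjusted_revenue())
print(passes_fee_filter(1_000_000), passes_fee_filter(2_000_000))
```

Using the exact fees rather than the rounded $400,000 figure gives a floor of about $1.25 million, slightly above the approximate $1.2 million quoted above.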

Complete Response Letters: The Financial Anatomy of a Regulatory Setback

A Complete Response Letter (CRL) is the FDA’s formal mechanism for communicating that an ANDA cannot be approved in its current form. CRLs stop the review clock, require additional data or manufacturing corrections, and restart the review timeline, commonly adding 12 to 24 months to approval. For a drug generating $10 million per month in potential generic revenue, a 12-month CRL delay costs $120 million in forgone revenue at competitive market share levels. The CRL is therefore not merely a regulatory event; it is a high-magnitude financial event that warrants its own risk model.

The most common causes of CRLs for generic products include bioequivalence study failures, inadequate characterization of manufacturing controls, data integrity issues at the manufacturing site, and incomplete resolution of patent certification requirements. AI-powered submission quality review — discussed in Part IX — targets precisely these deficiency categories by scanning draft ANDA dossiers against current FDA guidelines before submission.
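A CRL risk model can start from the simple arithmetic in this section: forgone revenue is monthly revenue times months of delay, weighted by the chance that a CRL is issued. The sketch below is illustrative only; the 25 percent CRL probability is a hypothetical input, and the function names are not from any real tool.

```python
def crl_delay_cost(monthly_revenue, delay_months):
    """Forgone revenue if approval slips by the given number of months."""
    return monthly_revenue * delay_months


def expected_crl_cost(monthly_revenue, delay_months, p_crl):
    """Probability-weighted cost of a CRL for use in the product's risk model."""
    if not 0.0 <= p_crl <= 1.0:
        raise ValueError("p_crl must lie in [0, 1]")
    return p_crl * crl_delay_cost(monthly_revenue, delay_months)


# $10M per month and a 12-month delay, as in the text; p_crl is assumed.
print(crl_delay_cost(10_000_000, 12))
print(expected_crl_cost(10_000_000, 12, p_crl=0.25))
```

If pre-submission review lowers the CRL probability, the difference between the two expected costs is a rough estimate of what that review is worth for the product.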

FDA vs. EMA: Structural Differences That Define Global Strategy

Table 2 — FDA vs. EMA Generic Approval Pathways: Technical Comparison

| Parameter | United States (FDA) | European Union (EMA/National) |
|---|---|---|
| Key Legislation | Hatch-Waxman Act (1984); GDUFA III | Directive 2001/83/EC; Regulation 726/2004 |
| Application Type | Abbreviated New Drug Application (ANDA) | Marketing Authorisation Application (MAA) |
| Data Exclusivity (Innovator) | 5 years (NCE); 3 years (new clinical study) | 8 years |
| Market Exclusivity (Innovator) | N/A (separate from data exclusivity) | 2 years (+1 year for new indication) |
| Generic Exclusivity | 180-day first-filer exclusivity (PIV) | No direct equivalent; market dynamics-driven |
| Approval Routes | Centralized via FDA | Centralized (EMA), Decentralized (DCP), Mutual Recognition (MRP), National |
| Bioequivalence Standard | 90% CI of 80.00-125.00% for AUC and Cmax | Same standard; additional requirements for BCS classification waivers differ |
| Patent Linkage | Yes (Orange Book + 30-month stay) | No formal patent linkage; civil law only |
| Review Timeline (Typical) | 12-24 months post-filing (goal) | 210 days (active review) for centralized |

The absence of a formal patent linkage mechanism in Europe means that the 30-month automatic stay — the brand’s primary delay tool in the U.S. — does not exist in EU markets. Generic companies must still assess infringement risk, but they can launch at commercial risk while pursuing patent invalidation through national courts rather than being automatically blocked for up to 30 months. This distinction makes the European market structurally more accessible for PIV-equivalent challenges, though it also means brand companies have less incentive to negotiate settlements that provide certain entry timelines.

The EU’s 8+2+1 exclusivity formula (8 years data exclusivity, 2 years market exclusivity, with 1 additional year possible for new indications) creates a reference period that portfolio managers must track separately from patent expiry. A drug where the COM patent has expired but the EU data exclusivity period remains in force cannot be launched in EU markets even without patent barriers — a distinction that catches less experienced teams off-guard when building global launch timelines.
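The 8+2+1 clock is easy to compute alongside patent expiry in a launch-planning tool. The sketch below assumes the clock starts on the reference product's first EU marketing authorisation date; the function and key names are hypothetical.

```python
from datetime import date


def add_years(d, years):
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def eu_exclusivity_dates(reference_authorisation, new_indication_extension=False):
    """Return the end of data exclusivity and the earliest generic launch date."""
    launch_years = 11 if new_indication_extension else 10  # 8 + 2, optionally + 1
    return {
        "data_exclusivity_end": add_years(reference_authorisation, 8),
        "earliest_generic_launch": add_years(reference_authorisation, launch_years),
    }


# Hypothetical reference product authorised on 20 May 2018.
for extended in (False, True):
    print(extended, eu_exclusivity_dates(date(2018, 5, 20), extended))
```

The effective EU launch constraint is the later of this date and the relevant patent expiry, so both clocks need to sit side by side in the same timeline model.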

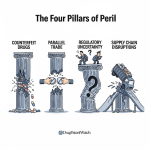

The Nitrosamine Crisis: A Case Study in Portfolio-Wide Risk Materialization

The nitrosamine impurity crisis that began in 2018 with valsartan and expanded to ranitidine, metformin, and numerous other products offers the most instructive recent example of how an unmodeled regulatory risk can materialize into portfolio-wide financial damage.

Valsartan, an angiotensin receptor blocker manufactured primarily at API production facilities in China, was found to contain N-nitrosodimethylamine (NDMA) — a probable human carcinogen. The FDA-mandated recalls affected hundreds of generic valsartan products across dozens of manufacturers, costing the industry an estimated $4 to $6 billion in recalls, reformulation costs, and market exit decisions. Several smaller manufacturers withdrew their products entirely rather than bear the cost of API re-sourcing and process re-validation.

The ranitidine situation was structurally different and ultimately more severe: NDMA formation in ranitidine was found to be an intrinsic property of the molecule under certain storage and digestion conditions, not a manufacturing contamination issue. In April 2020, FDA requested that all ranitidine (Zantac) products be withdrawn from the U.S. market, eliminating the molecule from pharmacy shelves. Generic manufacturers who had invested in ranitidine ANDAs lost their development capital entirely.

The lesson for portfolio management is not to avoid these drug classes — the crises were largely unforeseeable at the time of original investment. The lesson is that AI-powered regulatory risk monitoring, which can scan FDA warning letters, EMA signals, WHO safety communications, and scientific literature for emerging quality and safety concerns in real time, provides the earliest possible warning of regulatory risk materializing across an existing portfolio.

Key Takeaways

Part IV: Global Regulatory Maze

- GDUFA III fees effectively set a minimum commercial viability threshold for ANDA filing. AI screening tools that encode this threshold as a hard pre-filter will eliminate low-value candidates earlier, reducing wasted downstream investment.

- A CRL can cost 12 to 24 months of launch delay, worth $100 million or more in forgone revenue for large-market drugs. Pre-submission AI dossier review is one of the highest ROI regulatory technology investments a generic company can make.

- EU market entry does not carry the automatic 30-month stay — a structural advantage for generic challengers who can tolerate commercial-risk launches during patent litigation.

- The EU 8+2+1 exclusivity formula is a separate clock from patent expiry. Both must be tracked concurrently in global launch planning.

- The nitrosamine crisis demonstrated that portfolio-wide quality risks can materialize rapidly from API supply chain events. Real-time regulatory signal monitoring via AI is now a required function, not an aspirational one.

Part V: AI Core Technologies — What Portfolio Managers Actually Need to Know

Machine Learning Architectures: The Engine of Prediction

Machine learning (ML) is the backbone of AI-powered portfolio management. The term encompasses a range of algorithm types that learn patterns from historical data rather than following explicit rules. For generic pharmaceutical applications, three ML architectures matter most.

Gradient boosting models — of which XGBoost and LightGBM are the most widely deployed implementations — excel at structured tabular data prediction tasks: classifying whether a patent is likely to be invalidated, forecasting price erosion curves, and scoring product attractiveness. They are fast to train, highly interpretable (feature importance scores explain which variables drive predictions), and have consistently outperformed deeper neural network architectures on tabular pharmaceutical data. For a regulatory team evaluating an AI litigation prediction tool, asking whether the underlying model is gradient boosting-based with documented feature importance is a reasonable due diligence question.
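To show where feature importance scores come from, here is a toy gradient boosting regressor built from one-split decision stumps in pure Python. It is a teaching sketch, not a substitute for XGBoost or LightGBM: the data are hypothetical (a competitor count plus an unrelated second column), the loss is squared error, and importance is measured as the total error reduction credited to each feature.

```python
from statistics import mean


def fit_stump(X, residuals):
    """Find the single-feature threshold split that minimizes squared error."""
    best = None
    for j in range(len(X[0])):
        values = sorted({row[j] for row in X})
        for lo, hi in zip(values, values[1:]):
            thr = (lo + hi) / 2
            left = [r for row, r in zip(X, residuals) if row[j] <= thr]
            right = [r for row, r in zip(X, residuals) if row[j] > thr]
            lm, rm = mean(left), mean(right)
            sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
            if best is None or sse < best[0]:
                best = (sse, j, thr, lm, rm)
    return best[1:]  # (feature index, threshold, left value, right value)


def stump_predict(stump, row):
    j, thr, left_value, right_value = stump
    return left_value if row[j] <= thr else right_value


def fit_boosting(X, y, rounds=20, lr=0.5):
    """Fit stumps sequentially to residuals (the negative gradient of squared loss)."""
    base = mean(y)
    preds = [base] * len(y)
    stumps, importance = [], [0.0] * len(X[0])
    for _ in range(rounds):
        residuals = [t - p for t, p in zip(y, preds)]
        stump = fit_stump(X, residuals)
        before = sum(r ** 2 for r in residuals)
        preds = [p + lr * stump_predict(stump, row) for p, row in zip(preds, X)]
        after = sum((t - p) ** 2 for t, p in zip(y, preds))
        importance[stump[0]] += before - after  # gain credited to the split feature
        stumps.append(stump)
    return {"base": base, "lr": lr, "stumps": stumps, "importance": importance}


def predict(model, row):
    return model["base"] + model["lr"] * sum(stump_predict(s, row) for s in model["stumps"])


# Hypothetical data: column 0 = number of competitors, column 1 = unrelated noise.
X = [[1, 5], [2, 3], [3, 8], [5, 1], [6, 7], [8, 2]]
y = [0.35, 0.60, 0.60, 0.78, 0.78, 0.90]  # observed price reduction vs. brand
model = fit_boosting(X, y)
print(model["importance"])
```

In this toy setup the competitor-count column collects most of the gain, which is the kind of evidence a due diligence reviewer would want to see documented for a real model.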

Recurrent neural networks (RNNs) and their variants — long short-term memory (LSTM) networks — process sequential data and time-series inputs. They are the appropriate architecture for demand forecasting models that need to capture temporal patterns in prescription rates, seasonal disease cycles, and rolling competitive entry dynamics. A demand forecast for an anti-infective generic that does not account for seasonal flu cycles will systematically underestimate Q4 demand and generate the inventory shortfalls that plague many hospital supply chains.
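The seasonal failure mode described above can be illustrated without a neural network. The sketch below is not an LSTM; it compares a flat forecast with a simple seasonal-index forecast on hypothetical quarterly demand figures, purely to show why a model that ignores seasonality underestimates Q4.

```python
from statistics import mean

# Hypothetical quarterly unit demand (thousands) for an anti-infective generic,
# one row per year, columns Q1..Q4.
history = [
    [120, 80, 70, 150],
    [126, 84, 74, 158],
    [132, 88, 77, 165],
]

def flat_forecast(history):
    """Ignore seasonality: forecast every quarter as last year's quarterly average."""
    return [mean(history[-1])] * 4

def seasonal_forecast(history):
    """Scale last year's average by each quarter's average seasonal index."""
    indices = [mean(year[q] / mean(year) for year in history) for q in range(4)]
    level = mean(history[-1])
    return [level * index for index in indices]

flat = flat_forecast(history)
seasonal = seasonal_forecast(history)
print("Q4 flat:", flat[3], "Q4 seasonal:", round(seasonal[3], 1))
```

A sequence model such as an LSTM learns these patterns from data rather than from fixed indices, but any candidate forecasting tool should at least beat this kind of seasonal baseline.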

Convolutional neural networks (CNNs) process spatial and spectroscopic data. In generic R&D, they are used to analyze near-infrared (NIR) and Raman spectroscopic fingerprints of innovator drug products during the deformulation step — identifying the API and key excipients from the spectral pattern without wet chemistry dissolution. A CNN trained on thousands of spectral profiles can identify excipient combinations with high accuracy, cutting weeks from the initial formulation characterization step.

Natural Language Processing: Unlocking the Patent and Court Data Layer

A substantial fraction of the most commercially critical data in generic pharmaceuticals exists as unstructured text: patent claims, court opinions, FDA guidance documents, regulatory submission templates, scientific literature. Natural language processing (NLP) is the technology that converts this text into structured, machine-readable data that ML models can analyze.

The most consequential NLP advance for generic pharma is semantic search, which queries text by meaning rather than keyword matching. A keyword search for prior art against a patent covering “crystalline form A of compound X” will miss a 2003 journal article describing “the monohydrate polymorph of compound X obtained by slow evaporation from ethanol” — even though these likely describe the same material. A semantic NLP system understands conceptual equivalence and surfaces both documents. The prior art search quality improvement from semantic NLP versus Boolean keyword search is material, and it directly affects the completeness of the invalidity argument in PIV litigation.

Large language models (LLMs), such as those in the GPT-4 family or domain-specific pharmaceutical LLMs, can read and summarize entire ANDA submission dossiers, identify specific sections referenced in FDA guidance, and flag discrepancies between submission data and current regulatory expectations. These capabilities are now being deployed in regulatory affairs functions, and their practical impact on submission quality is measurable.

Generative AI: From Novel Molecule Design to ANDA Drafting

Generative AI differs from predictive ML in that it creates new content rather than classifying existing data. In generic pharmaceutical R&D, two applications are most immediately practical. First, generative models can propose novel excipient combinations or delivery system architectures for complex generics where the standard deformulation approach fails, for instance where the innovator product uses a proprietary delivery technology with no obvious generic equivalent. Second, LLM-based generative tools can draft sections of ANDA submission documents (stability data summaries, bioequivalence study protocols, CMC sections) from structured data inputs, reducing the writing burden on regulatory scientists and standardizing the language against FDA style expectations. The accuracy and reliability of these drafting tools vary significantly by vendor; independent validation against historical FDA review outcomes is the required quality gate before deployment in a live submission workflow.

Key Takeaways

Part V: AI Core Technologies

- Gradient boosting models (XGBoost, LightGBM) are the appropriate architecture for most structured prediction tasks in portfolio management. Interpretability via feature importance scores is a critical requirement for GxP-adjacent applications.

- Semantic NLP search for prior art is a qualitative step change over Boolean keyword search. The delta in prior art coverage can materially strengthen — or expose weaknesses in — a PIV invalidity argument before litigation costs are incurred.

- LLM-based regulatory drafting tools are production-ready for low-risk submission sections; they require rigorous validation before use in any data-intensive or decision-critical submission component.

- CNN spectroscopic analysis of innovator drug products can cut the initial characterization step from 4 to 8 weeks to 1 to 2 weeks, a meaningful acceleration for high-priority candidates where speed-to-file is strategically critical.

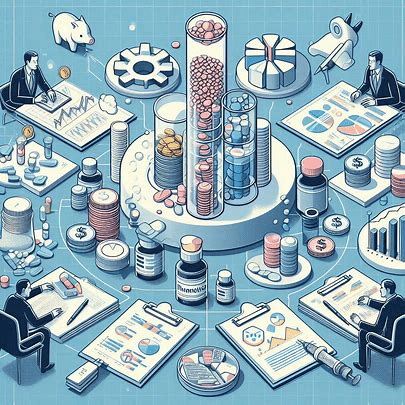

Part VI: AI-Powered Candidate Selection — IP Valuation as a Core Scoring Dimension

Automated Loss of Exclusivity Monitoring at Scale

The first analytical step in candidate selection is identifying which drugs are approaching loss of exclusivity (LOE) and when. Manual tracking of patent expiry dates, regulatory exclusivity expirations, and pediatric extension status across the entire Orange Book — more than 87,000 listed patents and exclusivities as of 2025 — is practically impossible for a team of any reasonable size. NLP-based automated monitoring systems maintain a continuously updated, structured database of all LOE events, reconciling USPTO patent data, Orange Book listings, PTAB inter partes review (IPR) proceedings, and court decisions in near-real time.

These systems do not merely track known expiry dates; they detect early signals of patent vulnerability. An IPR petition filed at the Patent Trial and Appeal Board against a key Orange Book patent is a material event: if it succeeds, it can pull a generic’s expected market entry date forward by years. Yet it will not appear in standard patent expiry tracking systems until the PTAB issues a final written decision. AI-powered monitoring catches the IPR petition filing within hours and flags the affected brand drug for immediate commercial re-evaluation.
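The flagging logic can be sketched as a join between listed patents and incoming PTAB events. The record types, field names, event labels, and sample data below are all hypothetical; a production system would populate them from Orange Book and PTAB data feeds.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class OrangeBookPatent:
    patent_number: str
    brand_drug: str
    expiry: date

@dataclass(frozen=True)
class PtabEvent:
    patent_number: str
    event_type: str   # assumed labels: "petition_filed", "instituted", "final_written_decision"
    event_date: date

def flag_for_reevaluation(patents, events):
    """Return (brand drug, patent, date) for each IPR petition against an unexpired listed patent."""
    by_number = {p.patent_number: p for p in patents}
    flags = []
    for event in events:
        patent = by_number.get(event.patent_number)
        if (patent is not None
                and event.event_type == "petition_filed"
                and event.event_date < patent.expiry):
            flags.append((patent.brand_drug, patent.patent_number, event.event_date))
    return flags

# Hypothetical sample data.
patents = [
    OrangeBookPatent("US-0000001", "BrandA", date(2031, 6, 30)),
    OrangeBookPatent("US-0000002", "BrandB", date(2024, 1, 15)),
]
events = [
    PtabEvent("US-0000001", "petition_filed", date(2025, 3, 1)),
    PtabEvent("US-0000002", "petition_filed", date(2025, 3, 2)),  # patent already expired
    PtabEvent("US-0000009", "petition_filed", date(2025, 3, 3)),  # not an Orange Book patent
]
flags = flag_for_reevaluation(patents, events)
print(flags)
```

The point of the design is that the petition filing, not the final written decision, triggers the commercial re-evaluation.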

AI-Driven Product Attractiveness Scoring: Architecture

Product attractiveness scoring consolidates the multi-dimensional risk and opportunity analysis for each candidate drug into a single ranked score that can serve as a portfolio prioritization tool. A well-designed scoring architecture weights at least five distinct input dimensions.

- Commercial value inputs include the brand drug’s current U.S. net sales, the trajectory of sales growth or decline, therapeutic category dynamics, and the post-IRA revenue ceiling for Medicare-exposed drugs.

- Competitive intensity inputs include the number of ANDAs already filed (derived from FDA ANDA pending list data), the identity and historical behavior of likely competitors (do they typically file PIV challenges or wait for patent expiry?), and the AG probability based on the brand company’s past behavior.

- IP complexity inputs include the number and type of Orange Book-listed patents, the outcome of any prior litigation on those patents, PTAB proceedings, and the estimated cost and duration of PIV litigation.

- Technical feasibility inputs include API synthetic complexity, availability and geographic concentration of API supply, formulation difficulty class (simple oral solid vs. complex inhalation product), and the company’s internal manufacturing capabilities.

- Regulatory history inputs include the brand drug’s FDA inspection record, any history of CRLs for previous ANDAs referencing the same drug, and the bioequivalence study complexity score.

The ML model weighting these inputs is trained on the historical outcomes of hundreds of prior generic launches by the same company, enabling it to learn which combinations of input values have historically predicted profitable outcomes in that specific competitive context. The ranked score is backed by a probability distribution across revenue scenarios rather than a single point estimate: a P10/P50/P90 revenue range that gives the portfolio committee a realistic picture of downside and upside exposure.
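A P10/P50/P90 range is typically produced by simulation. The sketch below uses a simple Monte Carlo draw over competitor count and authorized generic entry instead of a trained ML model. Every distribution and coefficient in it (generic share, price erosion slope, AG probability) is an assumption chosen for illustration, and P10 is read here as the 10th percentile, that is, the downside case.

```python
import random

def simulate_launch_revenue(brand_sales_musd, n_draws=10_000, seed=42):
    """Monte Carlo first-year revenue range for one candidate, in $ millions."""
    rng = random.Random(seed)
    revenues = []
    for _ in range(n_draws):
        rivals = rng.choice([0, 1, 2, 4, 7])          # assumed competing ANDA launches
        ag_enters = rng.random() < 0.5                # assumed authorized generic probability
        sellers = 1 + rivals + (1 if ag_enters else 0)
        generic_share = 0.85                          # assumed share of volume lost by the brand
        price_ratio = max(0.10, 0.80 - 0.10 * sellers)  # assumed price erosion per seller
        revenues.append(brand_sales_musd * generic_share * price_ratio / sellers)
    revenues.sort()

    def percentile(q):
        return revenues[round(q * (n_draws - 1))]

    return {"P10": percentile(0.10), "P50": percentile(0.50), "P90": percentile(0.90)}

scenario = simulate_launch_revenue(brand_sales_musd=400.0)
print(scenario)
```

In a real scoring system the sampled distributions would come from the trained model's predictions for that candidate rather than from fixed assumptions.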

Teva Pharmaceuticals and AION Labs: IP Valuation in AI-Driven Generic Strategy

Teva Pharmaceuticals is the world’s largest generic drug company by revenue, with an ANDA portfolio encompassing hundreds of active development programs. Teva has publicly described using AI tools to analyze large-scale patient data for real-world evidence applications and is a founding partner of AION Labs, an Israel-based venture that brings together Pfizer, AstraZeneca, and Merck KGaA alongside Teva, with Amazon Web Services and the Israel Biotech Fund, to develop AI solutions for pharmaceutical R&D challenges.

For Teva specifically, the IP value of its generic pipeline is the core of its enterprise valuation. Teva’s total enterprise value is substantially determined by the NPV of its ANDA pipeline — the probability-weighted revenue value of each first-to-file PIV position, adjusted for litigation risk, competitive entry timing, and operating margin. An analyst valuing Teva should treat every improvement in Teva’s AI-powered candidate selection and litigation prediction capability as a direct positive adjustment to pipeline NPV. A 10-percentage-point improvement in the accuracy of Teva’s PIV challenge win rate prediction, applied across even 20 active cases, can shift the pipeline’s risk-adjusted NPV by hundreds of millions of dollars.

Sandoz: AI Maturity and Biosimilar Portfolio Valuation

Sandoz, operating as an independent company since its 2023 separation from Novartis, competes in the biosimilar market alongside its generic drug business. Sandoz has disclosed using AI and ML tools to improve forecast accuracy in its FP&A processes, a relatively mature deployment that improves capital allocation decisions across both the generics and biosimilar pipelines. The IP valuation implications for Sandoz’s biosimilar portfolio are distinct from small-molecule generics and are covered in depth in Part VII.

Key Takeaways

Part VI: Candidate Selection & IP Valuation

- NLP-based LOE monitoring catches early signals — PTAB IPR petition filings, court rulings — that can advance a generic’s expected market entry by years. This is information that manual tracking systems systematically miss.

- Product attractiveness scoring must encode at least five distinct input dimensions: commercial value, competitive intensity, IP complexity, technical feasibility, and regulatory history. Single-dimension filters (revenue only, or patent count only) produce systematically biased candidate lists.

- Teva’s enterprise value is substantially determined by the NPV of its ANDA pipeline. Any AI capability improvement that increases the accuracy of PIV challenge outcome prediction translates directly into pipeline NPV improvement — a compelling investment thesis for AI infrastructure spending.

- Authorized generic probability is the most frequently omitted variable in commercial forecast models. It should be treated as a required input, not an optional sensitivity scenario, for any PIV first-filer analysis.

Investment Strategy Note

Evaluating AI Maturity in Generic Pharma M&A Due Diligence

In an acquisition of a generic pharmaceutical company, the quality of its AI and data infrastructure should be a formal due diligence workstream, not an afterthought. The key questions: Does the target have a real-time LOE monitoring system integrated with its portfolio decision process? Does it use ML-based price erosion forecasting, or still rely on static historical averages? Does its litigation team have access to AI-powered prior art search and litigation outcome prediction tools? What is the quality and FAIR-compliance status of its historical R&D and formulation data — the training data that will determine how quickly its ML models can be improved post-acquisition?

Companies with mature AI infrastructure will have systematically better pipeline quality (higher rNPV per candidate), lower regulatory submission failure rates, and faster development timelines. These advantages compound — they are not one-time improvements but structural competitive differences that grow over time.

Part VII: Predictive Litigation Forecasting — Turning Paragraph IV Gambles into Calculated Bets

The Data Architecture of Litigation Prediction

Predicting the outcome of a Paragraph IV patent challenge is a machine learning classification problem. The model’s task is to estimate the probability that a specific patent claim will be found invalid or not infringed, given a set of observable features about that patent, the case, the venue, and the litigants. Training this model requires a large, well-structured historical dataset of resolved ANDA litigations — cases with known outcomes across a range of patent types, legal arguments, courts, and judges.

The feature set for a litigation prediction model includes four categories of input.

- Patent-specific features include the patent type (COM, formulation, method-of-use, polymorph), the age of the patent at the time of challenge, the number and specificity of asserted claims, the prosecution history (particularly any claim amendments or arguments made to the USPTO during examination that could create prosecution history estoppel), and the results of any IPR proceedings on the same patent.

- Case-specific features include the legal theories being asserted (invalidity for obviousness, invalidity for lack of written description, non-infringement under doctrine of equivalents, and so on) and the specific Orange Book-listed drug involved.

- Venue features include the district court (the Districts of Delaware and New Jersey handle the vast majority of ANDA cases, each with distinct judicial tendencies), the specific judge assigned, and that judge’s documented track record on claim construction and patent validity in pharma cases.

- Litigant features include the historical win rate of the brand company’s outside counsel firm in ANDA cases and the track record of the generic company’s legal team.

Delaware vs. New Jersey: Venue Analysis for ANDA Litigation

The choice of litigation venue has historically had a measurable effect on case outcomes in ANDA patent disputes. The District of Delaware, which has specialized procedures for complex patent cases and a deep bench of technically sophisticated judges, tends to resolve cases faster and on the merits. New Jersey — home to many large pharmaceutical companies and their counsel — has historically seen a higher proportion of cases settle before trial. A model trained on venue-specific outcomes will assign materially different litigation probability scores to the same patent depending on where the case is filed. Portfolio teams making at-risk launch decisions should verify that their AI tools incorporate venue-specific case history, not just national averages.

The 73-80% Accuracy Benchmark: What It Means in Practice

AI litigation prediction models claim accuracy rates of approximately 73 to 80 percent on holdout validation datasets. To interpret this correctly, compare it to the baseline: if a model simply predicted the most common outcome for each patent type (which is that COM patents are upheld and secondary formulation patents are invalidated), it might achieve 55 to 60 percent accuracy purely from base rate information. A model achieving 73 to 80 percent accuracy is therefore generating meaningful predictive signal beyond historical averages — but it is not a crystal ball.
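The base-rate comparison can be computed directly. The sketch below builds a hypothetical set of resolved cases, predicts the most common outcome for each patent type, and measures how often that naive rule is right. The case counts are invented to land inside the 55 to 60 percent range quoted above, and the accuracy is measured in-sample for simplicity.

```python
from collections import Counter, defaultdict

# Hypothetical resolved cases as (patent type, outcome) pairs.
cases = (
    [("COM", "upheld")] * 6 + [("COM", "invalidated")] * 4
    + [("formulation", "invalidated")] * 6 + [("formulation", "upheld")] * 4
)

def base_rate_accuracy(cases):
    """Accuracy of always predicting the most common outcome for each patent type."""
    outcomes_by_type = defaultdict(Counter)
    for patent_type, outcome in cases:
        outcomes_by_type[patent_type][outcome] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in outcomes_by_type.values())
    return correct / len(cases)

def lift_over_baseline(model_accuracy, baseline_accuracy):
    """Percentage points of accuracy the model adds beyond the base-rate rule."""
    return 100 * (model_accuracy - baseline_accuracy)

baseline = base_rate_accuracy(cases)
print(baseline, lift_over_baseline(0.73, baseline), lift_over_baseline(0.80, baseline))
```

When a vendor quotes an accuracy figure, the useful number is the lift over this kind of baseline on the same holdout set, not the headline accuracy alone.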

The practical value of the model is not that it eliminates uncertainty. It is that it changes the prior probability that informs the at-risk launch decision. A legal team that previously estimated a 50 percent chance of winning a formulation patent challenge may receive a model output of 71 percent, which — when combined with the financial model showing $180 million in at-risk launch revenue at stake — shifts the expected value calculation from marginally negative to clearly positive. The model converts an intuitive assessment into a quantitative one, making the decision more defensible and the capital allocation more rational.

At-Risk Launch Decision Framework

At-Risk Launch Expected Value Calculation

$$\mathrm{EV}(\text{launch}) = \big[P(\text{win}) \times \text{Revenue}(\text{excl. period})\big] - \big[P(\text{loss}) \times \text{Damages}(\text{lost profits} + \text{royalty})\big]$$

Where P(win) = AI-modeled probability of non-infringement or invalidity verdict; Revenue = projected exclusivity-period revenue (adjusted for AG probability and price erosion); Damages = estimated lost profits to brand + reasonable royalty rate on infringing sales. Launch proceeds if EV(launch) > EV(wait).
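The calculation can be run on the earlier example of a win probability moving from 50 to 71 percent with $180 million of revenue at stake. The text does not give a damages figure, so the $190 million used below is an assumption chosen so that the 50 percent case comes out marginally negative. The sketch also assumes P(loss) = 1 - P(win) and treats EV(wait) as a caller-supplied number.

```python
def at_risk_launch_ev(p_win, exclusivity_revenue, damages_if_loss):
    """EV(launch) = P(win) * Revenue - P(loss) * Damages, with P(loss) = 1 - P(win)."""
    p_loss = 1.0 - p_win
    return p_win * exclusivity_revenue - p_loss * damages_if_loss

def should_launch(p_win, exclusivity_revenue, damages_if_loss, ev_wait=0.0):
    """Launch only if the expected value of launching exceeds that of waiting."""
    return at_risk_launch_ev(p_win, exclusivity_revenue, damages_if_loss) > ev_wait

REVENUE_MUSD = 180.0          # from the example in the text
ASSUMED_DAMAGES_MUSD = 190.0  # assumption, not from the text

ev_intuitive = at_risk_launch_ev(0.50, REVENUE_MUSD, ASSUMED_DAMAGES_MUSD)
ev_model = at_risk_launch_ev(0.71, REVENUE_MUSD, ASSUMED_DAMAGES_MUSD)
print(ev_intuitive, round(ev_model, 1))
```

Under these assumptions the intuitive 50 percent estimate gives an expected value of minus $5 million, while the 71 percent model output gives roughly plus $72.7 million, which is the shift from marginally negative to clearly positive.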

The at-risk launch decision has historically been made on qualitative legal assessment alone. The AI-powered framework introduces a quantitative probability input that makes the decision transparent, reproducible, and auditable. It does not remove the decision from human judgment — the legal team’s qualitative assessment of litigation strengths and weaknesses remains essential context — but it provides a consistent financial framework across a portfolio of multiple simultaneous PIV cases.

Biosimilar Interchangeability: The New IP Frontier

The Biologics Price Competition and Innovation Act (BPCIA), enacted as part of the Affordable Care Act in 2010, created the regulatory framework for biosimilar approvals in the U.S. It is analogous to Hatch-Waxman for small molecules, but substantially more complex. The BPCIA’s equivalent of the PIV process is the ‘patent dance’ under 42 U.S.C. § 262(l), a multi-step statutory exchange of patent and product information between the reference product sponsor (the brand biologic company) and the biosimilar applicant that shapes the litigation that follows.

The highest-value IP designation in the biosimilar space is biosimilar interchangeability. An interchangeable biosimilar has demonstrated to the FDA that it can be expected to produce the same clinical result as the reference product in any given patient and, for products administered more than once, that the risk of alternating between the biosimilar and the reference product is no greater than using the reference product alone. Interchangeability status allows the biosimilar to be substituted for the reference product at the pharmacy level without prescriber intervention — the same automatic substitution that applies to generic small molecules. This is a commercially transformative designation: it removes the main adoption barrier for biosimilars, which is physician inertia around switching established patients to a biosimilar product.

Achieving interchangeability has typically required one or more switching studies, which are clinical trials where patients alternate between the biosimilar and the reference product. These studies are expensive, typically adding $10 million to $50 million to development costs, and the regulatory standard is still evolving. The IP implications are significant: the BPCIA grants reference product sponsors 12 years of data exclusivity (versus 5 for small molecules under Hatch-Waxman) and includes a separate one-year market exclusivity period for the first interchangeable biosimilar. The first company to achieve interchangeable status for a high-revenue biologic, such as adalimumab (Humira) or ustekinumab (Stelara), captures a disproportionate commercial reward and a distinct competitive moat versus non-interchangeable biosimilar competitors.

Sandoz’s biosimilar portfolio valuation is substantially affected by its interchangeability designations. Its adalimumab biosimilar Hyrimoz received interchangeable status from the FDA in 2023, enabling pharmacy-level substitution across the U.S. — a development that materially raised the asset’s commercial value compared to non-interchangeable adalimumab biosimilars from other manufacturers. AI-powered patent landscape analysis for biosimilars must account for the BPCIA’s separate patent dance provisions, reference product exclusivity periods, and the interchangeability switching study data requirements, all of which differ substantially from Hatch-Waxman small-molecule litigation mechanics.

Key Takeaways

Part VII: Predictive Litigation Forecasting

- AI litigation prediction models achieve 73 to 80 percent accuracy — a meaningful improvement over base-rate historical averages. The value is in systematically improving at-risk launch decision quality across a portfolio, not in eliminating individual case uncertainty.

- Venue-specific outcome data (Delaware vs. New Jersey) is a required feature in any ANDA litigation prediction model. National averages obscure court-level variation that can shift probability estimates by 10 to 15 percentage points.

- Biosimilar interchangeability is a qualitatively different competitive moat than standard biosimilar approval. The first interchangeable biosimilar in a reference product class captures a distinct commercial advantage and should be valued accordingly in portfolio NPV models.

- The BPCIA ‘patent dance’ creates a structured pre-litigation information exchange with its own strategy implications. Biosimilar IP teams must manage this process carefully, as early disclosure missteps can compromise the subsequent litigation posture.

- The at-risk launch decision framework should be formalized as an explicit financial model with AI probability inputs — not a qualitative judgment. This creates a defensible, auditable decision record and improves cross-portfolio capital allocation consistency.

Investment Strategy Note

Biosimilar Interchangeability as an IP Valuation Premium

When valuing a biosimilar pipeline, analysts should apply a distinct interchangeability premium to any asset with a clear pathway to that designation. The market share uplift from pharmacy-level substitution is conservatively estimated at 15 to 25 percentage points above what a non-interchangeable biosimilar achieves in steady-state — because it removes the need for active physician prescribing behavior change. For a biologic reference product with $3 billion in U.S. net sales, this market share differential is worth $150 million to $300 million annually at biosimilar price levels. Capitalize this at a standard pharma multiple of 5 to 7x revenue and the interchangeability designation adds $750 million to $2.1 billion to the asset’s enterprise value vs. a non-interchangeable biosimilar competitor.
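The arithmetic behind this range can be reproduced in a few lines. The text does not state what "biosimilar price levels" means numerically; the price ratios of one third and 40 percent of the reference price used below are back-solved assumptions that make the quoted $150 million and $300 million figures work.

```python
def interchangeability_premium(us_net_sales_musd, share_uplift,
                               biosimilar_price_ratio, revenue_multiple):
    """Return (annual revenue uplift, enterprise value uplift) in $ millions."""
    annual_uplift = us_net_sales_musd * share_uplift * biosimilar_price_ratio
    return annual_uplift, annual_uplift * revenue_multiple

# Low case: 15-point share uplift, assumed price at one third of reference, 5x multiple.
low_annual, low_ev = interchangeability_premium(3000.0, 0.15, 1 / 3, 5)
# High case: 25-point share uplift, assumed price at 40% of reference, 7x multiple.
high_annual, high_ev = interchangeability_premium(3000.0, 0.25, 0.40, 7)

print(round(low_annual), round(low_ev), round(high_annual), round(high_ev))
```

Because the result scales linearly with the assumed price ratio, an analyst should replace it with the observed net price of comparable biosimilars before relying on the premium.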

Part VIII: In Silico R&D — The Complete Technical Roadmap from Deformulation to Bioequivalence

Predictive Deformulation: From Spectroscopic Fingerprint to Formulation Hypothesis

The first step in developing a generic is characterizing the innovator product — identifying the active ingredient, excipients, and their approximate concentrations. The traditional approach involves a battery of wet chemistry techniques: HPLC for assay and purity, dissolution testing, scanning electron microscopy, X-ray powder diffraction, and Karl Fischer titration for water content. This process takes 4 to 8 weeks and consumes significant material.

AI-accelerated deformulation uses CNN models trained on spectroscopic datasets to extract formulation information from NIR or Raman spectra of the intact tablet or capsule. The spectral ‘fingerprint’ encodes information about the API, excipients, and solid-state properties. A trained CNN can identify the API species with near-certainty and provide quantitative estimates of major excipients — binders, disintegrants, lubricants, coating polymers — within hours of acquiring the spectrum. This cuts the characterization phase to 1 to 2 weeks and reduces the amount of innovator reference product required, which is commercially and ethically important for high-cost specialty drugs.

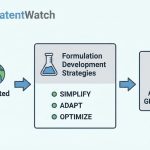

In Silico Formulation Optimization: Architecture and DoE Integration

After characterization, the formulation scientist must design a reproducible generic formulation that meets pharmacopoeial specifications for dissolution, hardness, friability, and assay. The traditional approach is Design of Experiments (DoE) — a statistical method for systematically exploring how formulation variables (excipient ratios, granulation parameters, compression force) affect product quality attributes. A typical DoE study for an oral solid dosage form requires 30 to 80 physical batches and 6 to 12 months.

AI augments DoE by training predictive models on a company’s historical formulation data: every batch ever made, with all input parameters and measured quality attributes. When a new formulation project begins, the model can screen millions of virtual experiments in minutes, predicting the quality attributes of formulations that have never physically been made. The scientist selects the most promising 8 to 12 virtual candidates for physical confirmation batches rather than running a full DoE from scratch. For drug classes well represented in the training data, development timelines shrink by roughly half or more, for example from 6 to 12 months to 3 to 5 months.
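The screen-then-confirm workflow can be sketched with a very simple surrogate model. The example below uses a k-nearest-neighbour average over hypothetical historical batches to predict dissolution for a grid of virtual formulations, then shortlists the eight closest to a target. The batch data, the two formulation variables, the scaling constants, and the 85 percent target are all assumptions; a production model would use far richer features and a stronger learner.

```python
from itertools import product

# Hypothetical historical batches:
# (disintegrant %, compression force kN) -> % dissolved at 30 minutes.
HISTORY = [
    ((2.0, 8.0), 78.0), ((2.0, 16.0), 62.0), ((4.0, 8.0), 88.0),
    ((4.0, 16.0), 74.0), ((6.0, 8.0), 95.0), ((6.0, 16.0), 83.0),
    ((3.0, 12.0), 76.0), ((5.0, 12.0), 86.0),
]

def predict_dissolution(candidate, history=HISTORY, k=3):
    """k-nearest-neighbour surrogate: average the k most similar historical batches."""
    def distance(a, b):
        # Scale each axis by an assumed typical range so neither variable dominates.
        return ((a[0] - b[0]) / 4.0) ** 2 + ((a[1] - b[1]) / 8.0) ** 2
    nearest = sorted(history, key=lambda record: distance(candidate, record[0]))[:k]
    return sum(value for _, value in nearest) / k

def shortlist(target=85.0, n_select=8):
    """Score a grid of virtual formulations and keep those predicted closest to target."""
    grid = product([2.0 + 0.5 * i for i in range(9)],   # disintegrant 2.0 .. 6.0 %
                   [8.0 + 1.0 * j for j in range(9)])   # force 8 .. 16 kN
    scored = [(abs(predict_dissolution(c) - target), c) for c in grid]
    return [candidate for _, candidate in sorted(scored)[:n_select]]

picks = shortlist()
print(picks)
```

Only the shortlisted candidates go on to physical confirmation batches, and their measured results are appended to the history so the surrogate improves for the next project.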

The limiting factor is training data quality. A company that has maintained rigorous, structured records of its formulation history — the FAIR data principles applied at the batch level — can extract substantial predictive value from its historical data. A company with fragmented, paper-based batch records cannot. This is why data governance investment is a prerequisite for in silico R&D capability, not a follow-on improvement.

In Silico Bioequivalence Prediction: PBPK Modeling and FDA Acceptance

The clinical bioequivalence (BE) study is the highest-cost, highest-risk single event in generic drug development. A two-period crossover BE study in 24 to 48 healthy volunteers costs $500,000 to $2 million and takes 6 to 12 months. A study failure — a result where the 90% confidence interval for the AUC or Cmax ratio falls outside the 80.00 to 125.00 percent bioequivalence limits — resets the timeline and requires another study. First-pass BE success rates for complex formulations historically run at 60 to 70 percent, meaning many programs incur the cost of at least two studies.
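The pass/fail criterion can be expressed in code. The sketch below computes a 90% confidence interval for the test/reference geometric mean ratio from paired log differences and checks it against the 80.00 to 125.00 percent limits. It is a simplification: a real two-period crossover analysis uses an ANOVA or mixed model with sequence and period effects. The subject data are hypothetical, and the t critical value is supplied by the caller (2.015 is the one-sided 95% value for 5 degrees of freedom).

```python
import math
from statistics import mean, stdev

def be_confidence_interval(test_values, reference_values, t_crit):
    """90% CI for the test/reference geometric mean ratio, in percent (paired, log scale)."""
    diffs = [math.log(t) - math.log(r) for t, r in zip(test_values, reference_values)]
    centre = mean(diffs)
    half_width = t_crit * stdev(diffs) / math.sqrt(len(diffs))
    return 100 * math.exp(centre - half_width), 100 * math.exp(centre + half_width)

def is_bioequivalent(ci, lower=80.00, upper=125.00):
    """Both CI bounds must fall inside the acceptance limits."""
    return ci[0] >= lower and ci[1] <= upper

# Hypothetical AUC values for six subjects (same subject order in both lists).
auc_test = [98.0, 105.0, 110.0, 92.0, 101.0, 99.0]
auc_reference = [100.0, 100.0, 104.0, 95.0, 103.0, 97.0]

T_CRIT_DF5 = 2.015  # one-sided 95% t value for n - 1 = 5 degrees of freedom
ci_auc = be_confidence_interval(auc_test, auc_reference, T_CRIT_DF5)
print(ci_auc, is_bioequivalent(ci_auc))
```

The same check is applied separately to Cmax, and a study passes only if both intervals sit entirely inside the limits.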

In silico BE prediction uses Physiologically Based Pharmacokinetic (PBPK) modeling combined with ML-predicted dissolution inputs to simulate the absorption, distribution, metabolism, and excretion of a proposed generic formulation in a virtual patient population. The two leading commercial PBPK simulation platforms are GastroPlus (Simulations Plus) and Simcyp (Certara). Both platforms construct detailed mathematical models of the gastrointestinal tract, liver, and systemic circulation, allowing scientists to simulate how formulation variables — particle size distribution, dissolution rate, solubility — affect peak plasma concentration (Cmax), time to peak (Tmax), and total exposure (AUC).
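How a formulation variable moves Cmax, Tmax, and AUC can be seen in a much simpler model than PBPK. The sketch below uses the classic one-compartment model with first-order absorption and elimination, where a faster-dissolving formulation is represented by a larger absorption rate constant. It is not a PBPK model and does not use GastroPlus or Simcyp; all parameter values are hypothetical.

```python
import math

def oral_pk_metrics(dose_mg, bioavailability, ka_per_h, ke_per_h, volume_l):
    """Cmax (mg/L), Tmax (h) and AUC 0-inf (mg*h/L) for a one-compartment oral model.

    Requires ka_per_h != ke_per_h.
    """
    tmax = math.log(ka_per_h / ke_per_h) / (ka_per_h - ke_per_h)
    scale = bioavailability * dose_mg * ka_per_h / (volume_l * (ka_per_h - ke_per_h))
    cmax = scale * (math.exp(-ke_per_h * tmax) - math.exp(-ka_per_h * tmax))
    auc = bioavailability * dose_mg / (volume_l * ke_per_h)
    return cmax, tmax, auc

# Hypothetical drug: 100 mg dose, 80% bioavailable, 50 L volume, ke = 0.1 per hour.
slow = oral_pk_metrics(100.0, 0.8, ka_per_h=0.5, ke_per_h=0.1, volume_l=50.0)
fast = oral_pk_metrics(100.0, 0.8, ka_per_h=1.5, ke_per_h=0.1, volume_l=50.0)

print("slow release:", slow)
print("fast release:", fast)
```

In this simplified model the faster formulation peaks higher and earlier while total exposure is unchanged, which is why a generic can match the reference on AUC and still fail on Cmax. PBPK platforms replace the single absorption constant with mechanistic models of dissolution and gastrointestinal transit.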

FDA’s acceptance of PBPK data in ANDA submissions has been growing since the agency issued its 2018 PBPK guidance document (Physiologically Based Pharmacokinetic Analyses — Format and Content Guidance for Industry). For BCS Class II and IV drugs — those with low aqueous solubility where absorption is dissolution-rate limited — FDA increasingly accepts PBPK-supported biowaiver requests that eliminate the need for a clinical in vivo BE study entirely. For more complex products, PBPK data can justify a smaller, more targeted BE study design, reducing cost and time while improving the probability of first-pass success. In silico BE is not yet a universal substitute for clinical studies, but it is a rapidly expanding tool that can eliminate the need for clinical studies in an increasing proportion of ANDA development programs.

Complex Generic Technology Roadmaps: Where the Highest Value Lies

The strategic opportunity in generic drug development is shifting from simple oral solids — commoditized, margin-compressed, and crowded — toward complex generics that require advanced scientific capabilities to develop. Three categories offer the highest rNPV per development dollar in the current market.

Long-acting injectables (LAIs) encompass microsphere formulations, lipid nanoparticles, and in situ-forming implants that release drug over weeks or months after a single injection. The formulation science is complex — controlling particle size distribution, polymer degradation kinetics, and drug release profiles requires precise manufacturing conditions that are difficult to reverse-engineer and reproduce. LAI patents commonly cover specific particle size ranges, polymer molecular weight specifications, and manufacturing process conditions. The FDA’s regulatory pathway for LAI generics requires clinical pharmacokinetic studies and often bridging efficacy data, creating substantial barriers to entry that protect first-mover generic firms from rapid competitive erosion. Risperidone microspheres (reference: Risperdal Consta) and naltrexone microspheres (reference: Vivitrol) illustrate both the technical complexity and the long commercial protection these products provide to the first generic entrant.

Drug-device combination products — inhalers, auto-injectors, prefilled syringes with integrated delivery devices — require the generic applicant to demonstrate device equivalence alongside drug bioequivalence. The FDA’s Office of Combination Products applies a complex regulatory framework that requires coordination between CDER and the device regulatory pathway. Orange Book listing of device patents has been a significant evergreening tool — and the FTC’s aggressive use of the Orange Book Transparency Act to challenge device patent listings has created both risk and opportunity in this space. Companies that successfully navigate the combination product pathway for a metered-dose inhaler generic (budesonide/formoterol, albuterol/ipratropium) capture markets that have been protected for years by device patent thickets.

Locally acting drugs — topical dermatologics, ophthalmic formulations, and inhaled pulmonary products — present the most complex BE challenge. Because the drug acts locally at its site of application rather than systemically, traditional PK-based BE metrics (AUC and Cmax in plasma) are not meaningful. FDA has developed product-specific guidance for many of these products that requires in vitro equivalence testing (comparative in vitro dermal absorption studies, comparative physicochemical characterization) and sometimes clinical endpoint studies. The formulation science complexity and the complexity of the BE regulatory pathway together create significant barriers that protect early generic entrants in locally acting drug classes for years after the first approval.

Insilico Medicine: 18 Months from Target to Preclinical Candidate

Insilico Medicine is primarily a novel drug discovery company rather than a generic developer, but its reported progression from AI-identified target to preclinical candidate nomination in roughly 18 months, about half the historical industry average, is instructive for the generic industry. It demonstrates that AI-accelerated R&D timelines are achievable in practice, not just in theory. For generic R&D, the analogous benchmark is the time from the decision to pursue an ANDA to filing readiness (initiation of formulation development through BE study completion). Companies applying AI across the deformulation, formulation optimization, and in silico BE workflow are seeing this timeline compress from 36 to 48 months toward 18 to 24 months for standard oral solid products.

Key Takeaways

Part VIII: In Silico R&D

- CNN-based spectroscopic deformulation cuts the characterization phase from 4 to 8 weeks to 1 to 2 weeks. For high-priority first-to-file candidates where speed-to-ANDA matters, this timeline acceleration is commercially significant.

- AI-augmented DoE can compress oral solid formulation development from 6 to 12 months to 3 to 5 months for drug classes well-represented in historical training data. Historical data quality is the binding constraint — companies without structured batch records cannot leverage this capability.

- GastroPlus and Simcyp are the two commercially deployed PBPK platforms with documented FDA acceptance. In silico BE is production-ready for BCS Class II/IV biowaiver applications and is an expanding option for reducing or redesigning clinical study requirements in other categories.

- Complex generics — LAIs, drug-device combinations, and locally acting drugs — offer the highest rNPV per development dollar in the current market because their technical and regulatory complexity suppresses competitive entry, protecting margins far longer than simple oral solids.

- The in silico R&D workflow creates a data flywheel: each development program generates new training data that improves model performance for future programs. Companies that start now compound their predictive advantage over time.

Part IX: Regulatory AI — From ANDA Submission Optimization to Predictive Health Authority Modeling

AI-Powered Submission Quality Review: The CRL Prevention Layer

A Complete Response Letter from the FDA typically cites 3 to 7 distinct deficiencies across the Chemistry, Manufacturing, and Controls (CMC), bioequivalence, and patent certification sections of an ANDA. The most common CMC deficiencies involve inadequate specification justification, incomplete dissolution method validation, and insufficient stability data to support the proposed shelf life. Bioequivalence deficiencies most often involve statistical analysis errors, incomplete reference standard characterization, or study design decisions inconsistent with product-specific guidance.

NLP-based submission review tools parse a draft ANDA dossier against the full corpus of applicable FDA product-specific guidance, bioequivalence guidance, chemistry guidance, and CFR regulations. They flag specific paragraphs or data tables that conflict with regulatory expectations, generate a gap analysis report against the current FDA guidance for that drug, and suggest specific remediation actions. The ROI on this tool is straightforward: a single prevented CRL avoids 12 to 24 months of additional review time and the revenue forgone during that delay. For a drug generating $15 million per month in potential generic revenue, a 15-month delay from a CRL costs $225 million. An AI submission review tool that costs $500,000 per year to deploy pays for itself on a single prevented CRL.
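The arithmetic above can be reproduced in a few lines. This is a minimal sketch using only the figures quoted in the paragraph; the function names are illustrative, and the break-even calculation assumes the entire delay cost is avoided when a CRL is prevented.

```python
def crl_delay_cost(monthly_revenue_usd: float, delay_months: float) -> float:
    """Revenue forgone while approval is delayed by a CRL."""
    return monthly_revenue_usd * delay_months


def payback_ratio(delay_cost_usd: float, annual_tool_cost_usd: float) -> float:
    """How many times over one prevented CRL covers a year of tool cost."""
    return delay_cost_usd / annual_tool_cost_usd


def break_even_probability(delay_cost_usd: float, annual_tool_cost_usd: float) -> float:
    """Minimum yearly chance of preventing one CRL for the tool to break even."""
    return annual_tool_cost_usd / delay_cost_usd


# Figures taken from the text: $15M/month, 15-month delay, $500K/year tool.
cost = crl_delay_cost(15_000_000, 15)
ratio = payback_ratio(cost, 500_000)
p_min = break_even_probability(cost, 500_000)

print(f"Delay cost:        ${cost:,.0f}")
print(f"Payback ratio:     {ratio:.0f}x")
print(f"Break-even chance: {p_min:.2%}")
```

Under these inputs, the tool breaks even if it has even a fraction of a percent chance per year of preventing one CRL of this size. Smaller products shift the threshold proportionally.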

Predictive Health Authority Query Modeling

The FDA’s Information Request (IR) and Discipline Review Letter (DRL) processes are the primary mechanisms through which FDA reviewers ask applicants for additional data or clarification during the review cycle. Each round of requests and responses can add weeks to months to the approval timeline. A company that has submitted hundreds of ANDAs over 20 years has a rich corpus of historical FDA queries on specific drug types, dosage forms, and manufacturing processes. An LLM trained on this corpus can learn the patterns of FDA questioning and predict, with meaningful probability, which aspects of a new submission are likely to generate queries from the specific review division handling the application.

The strategy is straightforward: include answers to likely questions proactively in the original submission. If the model predicts with 65 percent probability that FDA will ask for an additional dissolution time point at pH 4.5 for a BCS Class II product, include that data in the submission. The reduction in review-cycle back-and-forth accelerates time to final approval and demonstrates regulatory proactivity to FDA reviewers, who generally view well-prepared submissions favorably in their discretionary quality assessments.
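One way to turn a predicted query probability into an include-or-wait decision is a simple expected-cost comparison. The sketch below is an assumption-laden illustration, not a described vendor method: the cost fields and the example dollar figures are hypothetical, and it assumes the data must be generated anyway if FDA asks.

```python
from dataclasses import dataclass


@dataclass
class PredictedQuery:
    topic: str
    probability: float     # model-estimated chance FDA raises this query
    prep_cost_usd: float   # hypothetical cost to generate the data up front
    delay_cost_usd: float  # hypothetical cost of the review delay if asked later


def expected_cost_of_waiting(q: PredictedQuery) -> float:
    # If FDA asks, the company pays for the data AND absorbs the delay.
    return q.probability * (q.prep_cost_usd + q.delay_cost_usd)


def include_proactively(q: PredictedQuery) -> bool:
    # Include now when the certain up-front cost is below the expected cost of waiting.
    return q.prep_cost_usd < expected_cost_of_waiting(q)


# Illustrative inputs only; the 65 percent figure mirrors the example in the text.
dissolution = PredictedQuery("extra dissolution time point at pH 4.5", 0.65, 40_000, 500_000)
rare_request = PredictedQuery("additional stability condition", 0.05, 200_000, 300_000)

for q in (dissolution, rare_request):
    print(q.topic, "->", "include" if include_proactively(q) else "wait")
```

A probability threshold alone ignores how cheap or expensive the extra data is; comparing expected costs keeps low-probability but inexpensive items in scope and screens out costly long shots.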

FDA’s Evolving AI Policy Framework

The FDA’s Center for Drug Evaluation and Research (CDER) published a discussion paper on AI and ML for drug manufacturing in 2023 and has outlined a risk-based framework for AI use in pharmaceutical development in multiple guidance documents and workshop proceedings. The agency’s current stance, as reflected in its published framework for AI-based software as a medical device and analogous guidance for drug applications, emphasizes risk proportionality: AI tools used in decisions that directly affect patient safety (stability prediction used to support a proposed shelf life, for example) require more rigorous validation documentation than those used in internal decision-support functions (candidate scoring, for example).

For generic companies, the practical implication is to tier their AI applications by GxP impact and apply validation rigor accordingly. Internal portfolio decision tools can be validated to a lesser standard than AI tools whose outputs appear in regulated submission documents. Early engagement with FDA through Pre-ANDA meetings — explicitly raising the company’s use of AI in formulation development or bioequivalence prediction — is the recommended approach for any company deploying AI in a way that will generate data submitted to the agency.
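The tiering idea can be expressed as a small classification rule. This is a hypothetical sketch: the three tier names and the two yes/no questions are illustrative assumptions, not an FDA-defined scheme, and a real program would use the company's own GxP impact assessment.

```python
from enum import Enum


class ValidationTier(Enum):
    # Tier labels are illustrative, not regulatory terminology.
    HIGH = "full validation documentation"
    MEDIUM = "documented validation scaled to submission use"
    LOW = "lightweight internal validation"


def assign_tier(output_in_submission: bool, affects_patient_safety: bool) -> ValidationTier:
    """Map an AI application to a validation tier by its regulatory impact."""
    if output_in_submission and affects_patient_safety:
        return ValidationTier.HIGH
    if output_in_submission or affects_patient_safety:
        return ValidationTier.MEDIUM
    return ValidationTier.LOW


# Examples drawn from the text: shelf-life stability prediction vs. candidate scoring.
applications = {
    "stability prediction supporting shelf life": (True, True),
    "portfolio candidate scoring": (False, False),
}
for name, flags in applications.items():
    print(f"{name}: {assign_tier(*flags).name}")
```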

Key Takeaways

Part IX: Regulatory AI

- AI submission quality review delivers among the highest ROI of any AI investment in generic development. A single prevented CRL saves an order of magnitude more revenue than the cost of deploying the tool.

- Predictive health authority query modeling requires a large corpus of historical FDA communications for training. Companies with 15+ years of ANDA history have a significant data advantage for building this capability internally.

- FDA’s risk-based AI policy framework distinguishes between AI used in internal decision support and AI used in regulated submissions. Calibrate validation effort to the regulatory impact tier of each application.

- Pre-ANDA meetings are the correct venue for proactive FDA engagement on novel AI-derived data (PBPK-supported biowaivers, in silico BE predictions). Early alignment avoids costly late-stage surprises during review.

Part X: Supply Chain Predictive Intelligence — From Reactive to Anticipatory

The API Concentration Problem

Approximately 80 percent of U.S. generic drugs rely on active pharmaceutical ingredients manufactured in India or China. This geographic concentration, built over three decades of cost optimization, is the primary structural vulnerability of the global generic supply chain. The COVID-19 pandemic demonstrated what this concentration means in practice: when Wuhan-based factories shut down in early 2020, generic companies globally scrambled to source APIs for essential medicines with days or weeks of notice. Drug shortages for critical care medications proliferated within weeks.

The Essential Medicines Supply Chain and Manufacturing Resilience Act and the executive orders on pharmaceutical supply chain resilience that followed have put regulatory and political pressure on manufacturers to diversify API sourcing. But diversification is expensive: auditing, qualifying, and adding an alternative API supplier to an approved ANDA can cost $2 million to $5 million, and the financial incentive to maintain a single, low-cost supplier is intense in a margin-compressed market. AI-powered supply chain risk monitoring provides an intermediate option. It cannot substitute for supplier diversification, but it gives procurement teams the earliest possible warning of disruption risk, enabling proactive mitigation before a shortage materializes.

Demand Forecasting: ML Architecture for Pharmaceutical Time Series

Demand forecasting for generic drugs is structurally more complex than demand forecasting in most industries because it requires integrating signals from multiple, disparate data sources that interact in non-linear ways. A naive model based on trailing 12-month prescription volume will systematically miss demand spikes driven by competitive product shortages (when a competing generic goes into shortage, demand for remaining suppliers spikes immediately and without historical precedent), seasonal disease patterns, and formulary tier changes implemented by major PBMs.

ML-based demand forecasting integrates at minimum: real-world prescription data by payer segment, hospital system formulary data, competitor product availability signals (FDA shortage list updates, ASHP drug shortage monitoring), epidemiological data for indication-specific seasonal patterns, and macroeconomic indicators that affect generic substitution rates. LSTM and transformer-based time-series architectures outperform traditional ARIMA and exponential smoothing methods on this multi-input, long-horizon forecasting task, typically achieving 15 to 25 percent lower mean absolute error on 90-day demand forecasts. This improvement in forecast accuracy translates directly into lower safety stock requirements and fewer stockout events, the two primary cost and service quality levers in pharmaceutical supply chain management.
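The link between forecast error and safety stock can be made concrete with the textbook safety stock formula, z × σ × √L. The sketch below is illustrative: the demand numbers are invented, and it assumes forecast errors are roughly normal so that a given percentage cut in error spread carries through proportionally to safety stock.

```python
import math
from statistics import NormalDist


def mean_absolute_error(actual, forecast):
    """Average absolute gap between actual and forecast demand."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def safety_stock(error_std_units, lead_time_periods, service_level=0.98):
    """Textbook formula: z * sigma * sqrt(lead time), with sigma = forecast error std."""
    z = NormalDist().inv_cdf(service_level)
    return z * error_std_units * math.sqrt(lead_time_periods)


# Hypothetical monthly demand (units) and a forecast for the same months.
actual = [100, 120, 90]
forecast = [110, 115, 95]
mae = mean_absolute_error(actual, forecast)

# Hypothetical error spread before and after a 20 percent accuracy improvement.
baseline = safety_stock(error_std_units=1000, lead_time_periods=3)
improved = safety_stock(error_std_units=800, lead_time_periods=3)

print(f"MAE: {mae:.2f} units")
print(f"Safety stock reduction: {1 - improved / baseline:.0%}")
```

Because safety stock is linear in the error spread under this formula, the percentage reduction in required buffer matches the percentage reduction in forecast error spread, holding lead time and service level fixed.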

Real-Time Disruption Monitoring Architecture

Beyond demand forecasting, AI-powered supply chain intelligence tracks real-time signals of potential upstream disruption. This includes FDA Form 483 observations and warning letters issued to API suppliers (a warning letter frequently precedes a supply disruption by 6 to 18 months), geopolitical and trade policy news that affects cross-border API flows (U.S.-China tariff actions, India export restrictions on specific APIs), weather and environmental events at key manufacturing locations, and financial health indicators of key suppliers (credit rating changes, payment delays on public procurement contracts).

The output is a real-time risk dashboard that flags specific API sourcing risks by probability and expected time to impact, allowing procurement teams to prioritize buffer stock investments and alternative supplier qualification activities before a shortage occurs rather than after. Companies that deployed this capability before 2020 were measurably better positioned during the COVID-19 API disruptions than those relying on manual supplier monitoring processes.
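A dashboard of this kind ultimately needs a ranking rule. The following is a minimal sketch of one possible rule, probability divided by time to impact, so that likely and imminent risks rise to the top. The scoring formula, field names, and sample signals are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ApiRiskSignal:
    api_name: str
    signal: str
    disruption_probability: float  # estimated chance of a supply disruption (0 to 1)
    months_to_impact: float        # expected lead time before supply is affected


def priority(s: ApiRiskSignal) -> float:
    # Higher probability and shorter time to impact both raise priority.
    # Floor the denominator at one month to avoid dividing by near-zero values.
    return s.disruption_probability / max(s.months_to_impact, 1.0)


def rank_signals(signals):
    """Return signals ordered from most to least urgent."""
    return sorted(signals, key=priority, reverse=True)


# Invented example signals for three placeholder APIs.
signals = [
    ApiRiskSignal("API-A", "FDA warning letter to supplier", 0.40, 9),
    ApiRiskSignal("API-B", "export restriction announced", 0.30, 2),
    ApiRiskSignal("API-C", "supplier credit downgrade", 0.10, 12),
]

ranked = rank_signals(signals)
for s in ranked:
    print(f"{s.api_name}: {s.signal} (priority {priority(s):.3f})")
```

In this example the export restriction outranks the warning letter despite its lower probability, because its impact is expected within two months rather than nine.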

Key Takeaways

Part X: Supply Chain Predictive Intelligence

- API geographic concentration in India and China is the primary structural vulnerability of the generic supply chain. AI monitoring reduces the financial cost of this vulnerability by providing early warning; it does not eliminate the need for supplier diversification investment.

- ML-based demand forecasting integrating multi-source signals achieves 15 to 25 percent lower forecast error than traditional statistical methods. This improvement directly reduces safety stock cost and stockout frequency.

- FDA warning letter issuance to API suppliers is a leading indicator of supply disruption with a 6 to 18 month lead time. Real-time monitoring of FDA inspection outcomes is a standard input for any sophisticated supply chain AI system.

- LSTM and transformer architectures outperform ARIMA on pharmaceutical demand forecasting tasks. Any vendor claiming AI-powered demand forecasting should be able to articulate their model architecture and provide holdout validation accuracy data.

Part XI: The Implementation Blueprint — From Data Infrastructure to AI-Native Operations

The FAIR Data Principles Applied to Generic Pharmaceutical Operations

The quality of an AI model is bounded by the quality of its training data. For generic pharmaceutical companies, data quality is often the binding constraint — not algorithm sophistication. Batch manufacturing records, formulation development data, stability study results, and bioequivalence study reports are frequently stored in paper format, heterogeneous electronic systems, or PDF archives that resist machine parsing. Before any ML model can be trained on this data, it must be extracted, cleaned, standardized, and structured.

The FAIR data principles — Findable, Accessible, Interoperable, Reusable — provide the operational framework for building an AI-ready data infrastructure. Findable means every dataset has a unique identifier and is cataloged in a searchable metadata registry. Accessible means authorized users and systems can retrieve any dataset through a defined protocol (typically a secure API). Interoperable means data uses community-standard formats and vocabularies — IDMP for pharmaceutical identifiers, CDISC SEND for nonclinical data, CDISC CDASH for clinical data — so that datasets from different internal systems can be combined without manual reconciliation. Reusable means data is documented with rich provenance information so that its origin, quality, and limitations are clear to anyone using it months or years later.
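As a rough illustration, the four principles can be checked against a catalog entry for a single dataset. This sketch is an assumption throughout: the record fields, the list of accepted standards, and the example URL are hypothetical, and real FAIR assessments cover far more than four checks.

```python
import uuid
from dataclasses import dataclass, field

# Standards named in the text; a real registry would hold a longer controlled list.
KNOWN_STANDARDS = {"IDMP", "CDISC SEND", "CDISC CDASH"}


@dataclass
class DatasetRecord:
    title: str
    access_url: str      # where authorized systems retrieve the data
    data_standard: str   # community format or vocabulary the data follows
    provenance: str      # origin, quality, and limitations notes
    dataset_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def fair_gaps(record: DatasetRecord) -> list[str]:
    """Return the FAIR principles this catalog record fails to satisfy."""
    gaps = []
    if not (record.dataset_id and record.title):
        gaps.append("Findable")
    if not record.access_url.startswith("https://"):
        gaps.append("Accessible")
    if record.data_standard not in KNOWN_STANDARDS:
        gaps.append("Interoperable")
    if not record.provenance.strip():
        gaps.append("Reusable")
    return gaps


# Hypothetical entries: one well-described dataset and one legacy PDF archive.
good = DatasetRecord("Tox study 12 nonclinical data", "https://data.example.internal/tox12",
                     "CDISC SEND", "Exported from LIMS; QC reviewed")
legacy = DatasetRecord("Scanned batch records", "file-share/batches.pdf", "PDF", "")

print(fair_gaps(good))
print(fair_gaps(legacy))
```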

Implementing FAIR across a generic company’s full data estate is a multi-year program. The practical entry point is the highest-value, most structured data domain: formulation development batches, where the structured DoE input-output format makes FAIR compliance achievable without major data transformation effort. Starting here builds the capability to deliver early ML value from in silico formulation optimization while the broader data governance program matures.

Build vs. Buy vs. Partner: A Decision Framework

Table 3 — AI Capability Build/Buy/Partner Decision Matrix for Generic Pharmaceutical Companies

| AI Capability | Build In-House | Buy (SaaS Vendor) | Partner (Academic/CRO/Vendor Ecosystem) | Recommendation for Mid-Size Generic Firm |
|---|---|---|---|---|
| LOE Monitoring & Patent Database | Not recommended; requires massive ongoing data curation | Recommended; specialized vendors (DrugPatentWatch, Citeline) provide structured, curated data | Secondary option | Buy |
| Litigation Outcome Prediction | Feasible only with 100+ historical PIV cases for training | Available from specialized vendors with broad industry training data | Good option; legal analytics firms have relevant capabilities | Buy or Partner |
| Formulation In Silico Modeling | Build if extensive proprietary batch history exists; high long-term moat value | GastroPlus, Simcyp available for PBPK; formulation-specific ML tools limited | Academic pharma science departments for novel delivery systems | Build (with existing data) + Buy PBPK tools |
| Demand Forecasting | Feasible with proprietary sales and inventory data | Available from supply chain AI vendors; fast deployment | Secondary option | Buy or Build depending on data readiness |
| Regulatory Submission QC | Possible with large historical submission corpus; high complexity | Emerging vendor market; quality varies widely | Regulatory consulting firms beginning to offer AI-augmented services | Partner initially, then evaluate Buy as vendor market matures |
| Supply Chain Disruption Monitoring | High complexity; requires broad external data sourcing | Established supply chain risk vendors (Resilinc, Everstream Analytics) | Secondary | Buy |