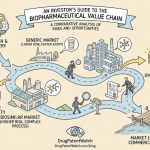

A deep-dive reference for pharma IP strategists, ANDA portfolio managers, formulation leads, and institutional investors tracking the generic drug sector.

The Generic Drug Market Is Harder Than It Looks

The generic industry saves the U.S. healthcare system money on a scale that is almost impossible to overstate. According to the Association for Accessible Medicines’ 2023 report, generic and biosimilar drugs generated $408 billion in system savings in a single year, reaching $2.9 trillion over the preceding decade. That number gets cited in every industry white paper. What gets cited less often is that earning a share of those savings is becoming brutally expensive, slow, and uncertain for the companies doing the work.

The products coming off-patent today are not the simple oral solid dosage forms that defined the first generation of the ANDA pipeline. They are modified-release formulations with intricate in vitro-in vivo correlation (IVIVC) requirements, inhalation devices where aerodynamic particle size distribution is the entire story, topical semisolids governed by complex diffusion kinetics, and complex drug-device combinations. Each category carries its own regulatory playbook, its own failure modes, and its own patent thicket to navigate. The result: a development environment where institutional expertise alone no longer guarantees execution, and where data, correctly structured and correctly queried, is the only reliable edge.

Machine learning does not replace the formulation scientist or the patent attorney. It gives them the ability to ask better questions, run fewer physical experiments, and make probabilistic decisions where gut instinct previously ruled. This guide covers every stage where that shift matters, with the technical specificity that IP teams, R&D leads, and portfolio managers actually need.

First-to-File Economics and the 180-Day Exclusivity Calculus

The financial logic of the ANDA market is built around one regulatory mechanism: the 180-day market exclusivity granted to the first applicant to file a Paragraph IV certification challenging a brand-name drug’s listed patents. Under the Hatch-Waxman Act, a Paragraph IV filer asserts that one or more patents in the FDA’s Orange Book are invalid, unenforceable, or will not be infringed by the generic product. If the brand company sues within 45 days of receiving notice, a 30-month stay of FDA approval automatically kicks in, preserving the brand’s exclusivity while litigation proceeds.

The first Paragraph IV filer, if ultimately successful, receives 180 days of generic market exclusivity before subsequent applicants can launch. For a high-revenue brand product, those six months can represent $300 million to $800 million in revenue depending on the drug’s market size and the rate of conversion from brand to generic. The window is finite, the upside is enormous, and the legal and scientific risk is substantial. Every decision about which drug to target, which patent to challenge, and whether to launch at risk before litigation resolves is a high-stakes, asymmetric bet.

That bet is increasingly being informed by machine learning models rather than being left entirely to legal judgment and market intuition.

ANDA Cost Structure and the True Price of a Failed Bioequivalence Study

A straightforward ANDA for a solid oral dosage form typically costs $1 million to $5 million from first lab work to submission. Complex dosage forms, injectables, inhalation products, and transdermal systems routinely run $15 million to $40 million per product, with some complex inhalation ANDAs exceeding that range once device characterization and in vitro testing are fully accounted for.

Within that budget, the bioequivalence (BE) study is the single most expensive and unrecoverable line item. Depending on the drug’s properties, the study might involve 24 to 72 healthy subjects in a crossover design, with a washout period that can stretch weeks for drugs with long half-lives. Clinical conduct, sample analysis, PK modeling, and the statistical package for a single BE study typically run $500,000 to $3 million in total. A failed study does not just cost its own budget line: it adds 6 to 18 months to the project timeline, forces a full reformulation cycle, and, in competitive filings, may allow a rival company to overtake your first-to-file position.

Reducing BE failure rates by even 20 percentage points, which ML-enhanced PBPK simulation has shown to be achievable in published validation studies, restructures the economics of an entire generic pipeline.

Key Takeaways: Generic Market Fundamentals

The 180-day Paragraph IV exclusivity window is the primary value-creation mechanism in the ANDA market, and the race to claim it is compressing timelines across the entire development cycle. The cost of a failed BE study extends well beyond its direct budget line: it costs timeline position, potentially surrendering first-to-file exclusivity to a competitor. Complex dosage forms dominate the patent cliff pipeline over the next five years, meaning the average cost and technical difficulty of a target ANDA are rising. Machine learning’s core value proposition in this environment is risk reduction across portfolio selection, formulation development, and BE prediction, stages where probabilistic decision-making produces measurable financial returns.

Phase 1: ML-Powered Portfolio Selection and Patent Intelligence

Portfolio selection is where the most capital is committed and the most mistakes are made. Choosing to develop a product that has an insurmountable patent barrier, insufficient commercial headroom, or a crowded competitor field can waste three to seven years of development time. Choosing correctly, and being first, can generate hundreds of millions in exclusivity revenue. Machine learning now touches every layer of this decision.

NLP Deconstruction of Patent Claims for Paragraph IV Strategy

The FDA’s Orange Book lists patents by drug product, but it provides no analysis of those patents’ legal strength. A brand company might list six or eight patents for a single product, some covering the active molecule, others covering a specific polymorphic form, a particular manufacturing process, a modified-release mechanism, or a method of use. Each patent has a different invalidation profile, and identifying which ones are genuinely vulnerable requires reading claim language in the context of prior art, prosecution history, and litigation precedent.

Natural Language Processing models, specifically transformer architectures fine-tuned on patent corpora, can automate significant portions of this analysis. BERT-class models trained on United States Patent and Trademark Office (USPTO) filings and Federal Circuit case law can parse the structural elements of a patent claim, identify the independent claims that define the actual scope of protection, flag claim language that mirrors previously invalidated claims in analogous litigation, and extract prosecution history disclaimers that narrow claim scope.

The practical output is a structured dataset rather than an attorney’s memorandum. Each Orange Book patent receives a score vector covering claim breadth, prior art density, prosecution history vulnerabilities, and the litigation history of the specific claims at issue. A patent with a broad independent claim that was prosecuted narrowly due to prior art rejections, where the examiner’s interview record shows significant amendments, scores very differently from a patent with a narrow but previously unchallenged composition-of-matter claim.

Platforms ingesting this kind of NLP output can overlay it with litigation history data from PACER (Public Access to Court Electronic Records) to build a company-level litigation behavior profile. Some brand companies settle Paragraph IV challenges quickly when they believe their patents are weak. Others litigate every challenge regardless of merit, extracting value from the 30-month stay even when the underlying patent is unlikely to survive trial. Knowing which behavior pattern to expect changes the risk calculus of an at-risk launch significantly.

Predicting At-Risk Launch Viability with Gradient Boosting

An at-risk launch, entering the market before patent litigation resolves, is the highest-risk decision a generic company makes. The upside is capturing sales during the exclusivity window while the litigation is still pending and the innovator has not yet obtained an injunction; the downside, if the generic company loses the litigation, is potential damages covering the entire period of infringing sales.

Gradient boosting classifiers (XGBoost, LightGBM) trained on historical Paragraph IV litigation outcomes can assign probability estimates to specific patent challenge scenarios. The feature set for such a model includes the NLP-derived claim strength scores, the district court assignment (some districts have consistently higher patent holder win rates than others, a pattern well-documented in Federal Circuit scholarship), the technology category of the patent (composition-of-matter patents have historically survived challenge at higher rates than formulation or method-of-use patents), the number of co-filers, the brand company’s historical settlement rate, and whether analogous claims have been invalidated in Inter Partes Review (IPR) proceedings before the Patent Trial and Appeal Board (PTAB).

The output is a probability distribution, not a point estimate. A model might report: the probability of full patent invalidation is 61%, the probability of a negotiated settlement allowing early entry is 22%, and the probability of an adverse judgment following at-risk launch is 17%. That distribution, combined with a discounted cash flow model of exclusivity revenue, produces an expected value figure that the business development team can compare to the capital at risk. This is a fundamentally more defensible decision framework than the traditional approach of asking legal counsel whether the patent is ‘strong’ or ‘weak.’
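The expected-value step can be made concrete with a minimal Python sketch. The three probabilities are the illustrative ones from the paragraph above; the payoff figures and the capital-at-risk number are hypothetical placeholders chosen only to show the arithmetic, not estimates for any real product.

```python
# Expected value of an at-risk launch from a scenario probability distribution.
# Probabilities are the illustrative ones in the text; payoffs are HYPOTHETICAL.

scenarios = {
    # scenario name: (probability, net present payoff in $ millions)
    "patent_invalidated": (0.61, 250.0),      # assumed NPV of exclusivity revenue
    "settlement_early_entry": (0.22, 90.0),   # assumed NPV under a negotiated entry date
    "adverse_judgment": (0.17, -400.0),       # assumed damages net of at-risk sales
}


def expected_value(scenarios):
    """Probability-weighted payoff; refuses distributions that do not sum to 1."""
    total_p = sum(p for p, _ in scenarios.values())
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError(f"probabilities sum to {total_p}, expected 1.0")
    return sum(p * payoff for p, payoff in scenarios.values())


ev = expected_value(scenarios)
capital_at_risk = 40.0  # hypothetical development plus litigation spend, $ millions
print(f"Expected value: ${ev:.1f}M against ${capital_at_risk:.1f}M at risk")
```

In practice each payoff would itself come from the discounted cash flow model, and the 17% tail deserves separate scrutiny because a single adverse judgment can exceed the upside of several successful launches.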

Commercial Forecasting: LSTM Models for Price Erosion and Market Share

Brand product revenue projections from IQVIA or IQVIA-adjacent data vendors provide the top-line revenue figure. The harder questions are how fast the price erodes once generics enter, what market share the first filer captures, and whether the product retains formulary position through the erosion cycle.

Long Short-Term Memory (LSTM) networks, a recurrent neural network architecture designed for sequential data, can be trained on historical generic launch datasets to model these dynamics. The training data includes launch date relative to patent expiry, number of applicants at launch, pharmacy benefit manager (PBM) formulary tier assignments, brand marketing spend in the months surrounding loss of exclusivity (LOE), channel mix between retail and mail-order pharmacy, and therapeutic category-level factors that influence brand loyalty.

From this, the model generates time-series revenue projections with confidence intervals rather than single-point estimates. A portfolio manager can visualize: median first-year revenue with first-to-file exclusivity is $95 million, the 25th percentile scenario (competitive entrants arrive faster than expected, or the brand secures step therapy protocols) is $62 million, and the 75th percentile (exclusivity period runs cleanly, strong formulary position) is $135 million. These probability-weighted projections feed directly into the risk-adjusted net present value (rNPV) calculations that govern capital allocation across the development portfolio.

The models also identify what practitioners call ‘niche busters’: products with modest total market revenue but structural characteristics, complex manufacturing requirements or narrow patient populations, that limit the number of generic competitors and sustain higher prices for longer. Identifying these products before competitors do is where ML-driven commercial forecasting produces its most differentiated output.

IP Valuation Spotlight: Quantifying the Patent Asset Behind Your ANDA Target

Every ANDA target has a mirror-image IP asset on the brand side. Understanding the financial architecture of that IP estate is as important as understanding its legal strength. For portfolio managers and institutional investors, this section describes how to value the patent portfolio protecting a brand product as a way of understanding both the upside of a successful generic challenge and the downside if that challenge fails.

The Five Layers of Brand Drug IP Protection and Their Relative Value

Brand pharmaceutical IP protection rarely rests on a single patent. A well-managed lifecycle extension strategy typically layers protection across the composition of matter (the molecule itself), the specific polymorphic or salt form used in the commercial formulation, the manufacturing process, the drug delivery system or formulation technology, and one or more methods of treatment.

Composition-of-matter patents, which cover the active molecule itself, carry the highest IP value and the longest effective exclusivity. Their expiry marks the true patent cliff. Formulation and method-of-use patents, what the industry calls secondary or ‘evergreening’ patents, extend protection by an average of 6.5 years beyond composition-of-matter expiry according to data compiled from Orange Book listings across the 2010-2023 period. For a drug with $3 billion in annual U.S. sales, each year of extended exclusivity is worth roughly $2.5 billion in protected revenue (accounting for price erosion once generics enter), making each secondary patent a multi-billion-dollar asset on the brand company’s balance sheet.

From the generic company’s perspective, invalidating a single well-positioned secondary patent can unlock that same value. This asymmetry, the brand company’s defense cost versus the generic company’s potential upside, is what makes Paragraph IV litigation economically rational even for patents that are moderately strong.

Royalty Relief and Excess Earnings: Two Valuation Frameworks for Pharma Patents

Pharma IP teams and investment bankers typically use two methods to attach a dollar figure to a patent protecting a branded drug: the royalty relief method and the excess earnings method.

The royalty relief method estimates the value of the patent as the present value of the royalties the company would have to pay if it did not own the patent and had to license it from a third party. Pharmaceutical royalty rates for composition-of-matter patents on blockbuster small molecules typically range from 8% to 15% of net sales, based on comparable licensing transactions. For a $2 billion annual sales product with 7 years of remaining exclusivity and a 10% discount rate, a 10% royalty rate implies a patent value of approximately $970 million, assuming flat sales and royalties discounted at year end.
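The royalty relief arithmetic is a plain annuity calculation, sketched below. The function name and the simplifying assumptions (flat sales, royalties paid at the end of each year, no tax adjustment) are illustrative choices; mid-year discounting, tax effects, or declining sales would all move the result.

```python
def royalty_relief_value(annual_sales, royalty_rate, years, discount_rate):
    """Present value of avoided royalties.

    Assumes flat sales, end-of-year payments, and no tax adjustment.
    Monetary inputs and output share the same unit (here $ millions).
    """
    annual_royalty = annual_sales * royalty_rate
    return sum(
        annual_royalty / (1.0 + discount_rate) ** year
        for year in range(1, years + 1)
    )


# Inputs from the example in the text: $2 billion sales, 10% royalty,
# 7 years of remaining exclusivity, 10% discount rate.
value = royalty_relief_value(2_000.0, 0.10, 7, 0.10)
print(f"Royalty relief value: ${value:.0f}M")
```

Under these conventions the seven-year annuity factor is about 4.87, so $200 million of annual avoided royalties discounts to roughly $974 million.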

The excess earnings method estimates the incremental cash flow attributable to the patent by modeling what the product’s revenue and margins would look like without patent protection (i.e., subject to immediate generic competition) and discounting the difference. This method requires detailed price erosion modeling, which is where the LSTM models described in the prior section feed directly into IP valuation work. A more accurate price erosion forecast, one that accounts for the number of likely generic filers and the likely speed of formulary substitution, produces a materially more accurate IP valuation.

For generic companies evaluating at-risk launch decisions, building an explicit IP valuation model for the brand product is not optional. It quantifies the financial stake in the litigation, grounds the settlement negotiation in concrete economics, and provides the board-level justification for the capital commitment.

Investment Strategy: Patent Cliff Exposure as a Portfolio Signal

For institutional investors with positions in generic drug companies, the ML-driven patent intelligence layer described above creates several actionable signals. Companies that have demonstrably built NLP-based patent scoring systems, either through internal R&D investment, acquisitions of IP analytics firms, or disclosed partnerships with patent intelligence platforms, carry a lower litigation cost basis per ANDA and a structurally higher probability of capturing first-to-file exclusivity. That translates to higher-quality revenue in the near-term pipeline.

The inverse signal also holds: brand companies with heavy LOE exposure in their five-year horizon, where the products at risk carry secondary patent portfolios that NLP analysis scores as vulnerable, face a more compressed exclusivity runway than their disclosed patent expiry dates suggest. Identifying that gap between disclosed expiry and probable generic entry is a core application of the portfolio analysis tools described here.

Investors tracking Teva, Viatris, Sun Pharma, Hikma, and Dr. Reddy’s Laboratories should specifically assess the degree to which each company has disclosed its data infrastructure for patent and commercial analysis, because that infrastructure increasingly predicts pipeline quality before it shows up in quarterly revenue.

Key Takeaways: Portfolio Selection and Patent Intelligence

NLP transformer models trained on patent claim language and litigation history convert qualitative ‘strong/weak’ patent assessments into probability-scored, feature-rich datasets. Gradient boosting classifiers applied to Paragraph IV litigation history produce expected-value frameworks for at-risk launch decisions, replacing intuition-based judgments with quantified risk distributions. LSTM-based commercial forecasting models price erosion and market share dynamics with probability ranges, not point estimates, improving the accuracy of rNPV calculations. Patent IP valuation using royalty relief and excess earnings methods, when built on ML-generated price erosion forecasts, produces more defensible inputs for settlement negotiations and capital allocation decisions.

Phase 2: Compressing Formulation Development with Predictive Chemistry

A formulation development program that runs 24 months can, in principle, be compressed to 9 to 12 months with a well-implemented ML infrastructure. The compression comes from three places: faster deformulation of the reference listed drug, predictive excipient selection that reduces the number of physical batches required, and in silico stability prediction that eliminates the slowest bottlenecks in the stability program.

CNN-Driven Spectroscopic Deformulation

Deformulation begins with characterizing the reference listed drug (RLD). Advanced analytical techniques, HPLC, mass spectrometry, FTIR, Raman spectroscopy, and solid-state NMR, each contribute data about the API’s identity, polymorphic form, particle size, and the excipient composition. The challenge is that these techniques generate complex spectra where the signals from multiple components overlap, and interpreting them correctly requires significant analyst time and domain expertise.

Convolutional Neural Networks (CNNs) trained on spectral libraries change this. A spectrum, treated as a one-dimensional signal array, is a natural input for a CNN architecture that was originally designed for image recognition. A model trained on a library of thousands of reference spectra from known APIs and excipients can decompose a complex mixture spectrum into its constituent components, outputting both component identities and approximate concentration estimates.

In a practical workflow, a Raman scan of the innovator tablet takes minutes. The resulting spectrum feeds into the pre-trained CNN, which returns a ranked list of likely components with estimated percentage ranges. That output does not replace confirmatory quantitative analysis by HPLC or TGA, but it provides the formulation team with a high-confidence starting hypothesis in hours rather than weeks, replacing the broad-spectrum analytical screening that opens a conventional deformulation program.

The same approach applies to polymorphic form identification. Solid-state NMR and XRPD spectra carry the signatures of different polymorphs, and ML models trained on polymorph databases (such as the Cambridge Structural Database) can identify the specific crystal form of the API in the innovator product with a confidence level sufficient to guide the synthesis program. Polymorphic form is critical: different forms of the same API can have dramatically different solubility and bioavailability profiles, and generic companies must match the innovator’s form or demonstrate that their chosen form produces equivalent in vivo performance.

QSPR Models for API Property Prediction

Before a formulation can be designed, the formulator needs to know how the API behaves physically: its aqueous solubility across the pH range of the GI tract, its intrinsic permeability across intestinal epithelial membranes, its hygroscopicity, its bulk density and compressibility, and its chemical stability in the presence of common excipients.

Quantitative Structure-Property Relationship (QSPR) models predict these properties from molecular structure. Graph Neural Networks (GNNs), which represent a molecule as a graph where atoms are nodes and bonds are edges, are particularly well-suited to this task because they process molecular topology natively without requiring manual feature engineering. A GNN trained on large molecular property databases (ChEMBL, PubChem) can predict aqueous solubility, logP, pKa, and membrane permeability with mean absolute errors that are operationally useful for early formulation planning.

The Biopharmaceutics Classification System (BCS) categorizes drugs by their solubility and permeability, and BCS class assignment determines the entire formulation strategy. A BCS Class II drug (low solubility, high permeability) requires solubilization enhancement techniques such as amorphous solid dispersions, nanosuspensions, or cyclodextrin complexation. A BCS Class IV drug (low solubility, low permeability) is a much harder formulation challenge. A QSPR model can assign a candidate API to a preliminary BCS class before any wet lab work begins, allowing the formulation team to select the appropriate technology platform on day one rather than discovering class membership empirically three months into development.
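The class assignment itself is a simple two-by-two rule once solubility and permeability predictions exist. The sketch below assumes the commonly used regulatory conventions (the dose must dissolve in 250 mL at the least favorable pH for "high solubility", and at least 85% absorption for "high permeability"); the function and parameter names are hypothetical, and the inputs would come from a QSPR model rather than measurement.

```python
def bcs_class(dose_mg, min_solubility_mg_per_ml, fraction_absorbed):
    """Preliminary BCS class from predicted properties.

    Thresholds are ASSUMPTIONS based on common regulatory convention:
    - high solubility: dose dissolves in 250 mL at the worst-case pH
    - high permeability: at least 85% of the dose is absorbed
    """
    dose_number = dose_mg / (min_solubility_mg_per_ml * 250.0)
    high_solubility = dose_number <= 1.0
    high_permeability = fraction_absorbed >= 0.85

    if high_solubility and high_permeability:
        return "I"
    if high_permeability:   # low solubility, high permeability
        return "II"
    if high_solubility:     # high solubility, low permeability
        return "III"
    return "IV"             # low solubility, low permeability


# Hypothetical API: 100 mg dose, 0.01 mg/mL worst-case predicted solubility,
# 95% predicted absorption. Dose number = 40, so solubility is limiting.
print(bcs_class(100.0, 0.01, 0.95))
```

Because QSPR predictions carry error, a candidate whose dose number or predicted absorption sits near a threshold should be treated as ambiguous until confirmed experimentally.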

Bayesian Optimization for Excipient Space Exploration

The excipient selection problem is combinatorially difficult. A typical oral solid formulation might include a diluent, a binder, a disintegrant, a glidant, and a lubricant. Each excipient comes in multiple grades and chemical variants: microcrystalline cellulose grades PH-101, PH-102, PH-200; hydroxypropyl methylcellulose in K4M, K15M, K100M viscosity grades; sodium starch glycolate versus croscarmellose sodium versus crospovidone as disintegrant options. The number of possible formulation combinations across five excipient categories with four to six options each, each varied across three or four concentration levels, produces a design space with tens of thousands of feasible points.

Traditional Design of Experiments (DoE) approaches use factorial and response surface designs to explore a subset of this space efficiently. Bayesian optimization improves on DoE by dynamically selecting the next experiment based on what has already been learned. The algorithm maintains a probabilistic model (typically a Gaussian Process) of the entire formulation space. After each physical experiment, it updates this model and selects the next experiment at the point in the design space that maximizes the expected information gain, efficiently converging on the optimal formulation without exhaustively testing the space.
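The loop described above can be sketched in a few dozen lines of standard-library Python. Several things here are assumptions made for illustration: the search is one-dimensional (a single binder fraction), `run_batch` is a hypothetical stand-in for a physical experiment, the kernel length scale is arbitrary, and the acquisition function is expected improvement, a common choice swapped in for the information-gain criterion mentioned in the text.

```python
import math


def rbf(a, b, length=0.15):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def gp_posterior(xs, ys, x_new, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(K, ys)))
    var = 1.0 - sum(k * w for k, w in zip(k_star, solve(K, k_star)))
    return mean, math.sqrt(max(var, 1e-12))


def expected_improvement(mean, std, best):
    """Expected amount by which a new point beats `best` (minimization)."""
    z = (best - mean) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (best - mean) * cdf + std * pdf


def run_batch(binder_fraction):
    """HYPOTHETICAL stand-in for a physical batch: dissolution mismatch score."""
    return (binder_fraction - 0.62) ** 2


def bayes_opt(n_iter=8):
    """Start from two batches, then pick each next batch by maximizing EI."""
    xs = [0.1, 0.9]
    ys = [run_batch(x) for x in xs]
    grid = [i / 100 for i in range(101)]
    for _ in range(n_iter):
        best = min(ys)

        def score(x):
            mean, std = gp_posterior(xs, ys, x)
            return expected_improvement(mean, std, best)

        x_next = max((x for x in grid if x not in xs), key=score)
        xs.append(x_next)
        ys.append(run_batch(x_next))
    i_best = min(range(len(ys)), key=ys.__getitem__)
    return xs[i_best], ys[i_best], xs


best_x, best_y, history = bayes_opt()
print(f"best binder fraction {best_x:.2f}, mismatch {best_y:.4f}, batches {len(history)}")
```

A production implementation would work over many mixed continuous and categorical factors, fit the kernel hyperparameters to the data, and rely on a maintained Gaussian Process library rather than the hand-rolled linear solver used here.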

Published validation studies from academic and industrial groups have reported 50% to 70% reductions in the number of physical formulation batches required to reach a target dissolution profile when Bayesian optimization replaces conventional DoE. For a complex modified-release product where a single batch takes five days and uses gram quantities of expensive API, those savings translate directly to development timeline and cost.

The target product profile (TPP) for the optimization objective can specify multiple simultaneous constraints: the dissolution profile must match the RLD at all measured time points within acceptance limits, tablet hardness must fall within a defined range, friability must be below 1%, and disintegration time must meet specification. Bayesian optimization handles multi-objective problems through Pareto front methods, identifying the set of formulations that cannot be improved on any objective without degrading another.
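The Pareto front idea is easy to state in code. In this sketch every objective is expressed so that lower is better, and the four candidate formulations and their scores are hypothetical values used only to show which candidates survive.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and strictly better somewhere.

    All objectives are minimized.
    """
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return {
        name: objs
        for name, objs in candidates.items()
        if not any(
            dominates(other, objs)
            for other_name, other in candidates.items()
            if other_name != name
        )
    }


# HYPOTHETICAL batches scored as:
# (dissolution mismatch vs RLD, friability %, disintegration time in minutes)
candidates = {
    "F1": (5.0, 0.40, 6.0),
    "F2": (3.0, 0.60, 8.0),
    "F3": (6.0, 0.50, 7.0),  # worse than F1 on every objective
    "F4": (3.0, 0.60, 9.0),  # ties F2 on two objectives, worse on the third
}

front = pareto_front(candidates)
print(sorted(front))
```

F1 and F2 remain because each beats the other on at least one objective; choosing between them is a judgment about which quality attribute matters more, which the optimizer cannot make on its own.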

ML Stability Models and Accelerated Shelf-Life Prediction

Stability testing is one of the last remaining bottlenecks that neither computational power nor biological simulation has fully solved. ICH Q1A(R2) requires real-time data from long-term stability studies (25°C / 60% RH for 12 months minimum at submission, with the study continuing to the proposed shelf life) and accelerated stability data (40°C / 75% RH for 6 months). For a product with a proposed 24-month shelf life, the complete stability database requires two years of real-time data that cannot be compressed below roughly 12 months at the time of submission.

ML can contribute at two stages. First, early in formulation development, stability prediction models can flag high-risk formulation choices before the stability studies begin. A model trained on degradation data from hundreds of prior products can identify API-excipient incompatibilities, such as the well-documented incompatibility between amines and reducing sugars that causes Maillard browning, or between magnesium stearate and certain APIs at elevated humidity, purely from the structural properties of the API and the composition of the proposed formulation.

Second, once accelerated stability data is available (typically at 3 and 6 months), PBPK-analogous degradation kinetics models fit to that early data can generate tighter confidence intervals around the real-time shelf-life prediction. Rather than treating the 6-month accelerated data as a simple pass/fail criterion, ML regression models can use the degradation trajectory, the rate of appearance of specific impurities, the onset of physical changes in dissolution behavior, to predict the likely 24-month condition with a quantified uncertainty range. This does not eliminate the regulatory requirement for real-time data, but it gives the formulation team earlier confidence in their formulation choice and reduces the risk of a late-stage stability failure.

IP Valuation Spotlight: The Formulation Patent as an Evergreening Weapon

Formulation patents are the primary evergreening mechanism for brand companies facing composition-of-matter expiry. They are also the primary legal battleground for Paragraph IV challenges, because they are generally more vulnerable than composition-of-matter patents but still create the 30-month stay that delays generic entry.

Technology Roadmap: How Brand Companies Layer Formulation IP

A brand company’s formulation evergreening strategy typically follows a predictable progression that begins five to seven years before composition-of-matter expiry.

In the first phase, typically 7 to 5 years pre-expiry, the company files patents on alternative salt forms, alternative polymorphic forms, and novel crystal habit modifications. These patents claim the specific solid-state form used in the commercial product, attempting to prevent generics from using the same form even after the composition patent expires. The IP value of these patents depends entirely on whether the claimed form is necessary for commercial feasibility: if the generic can use a different form with equivalent bioavailability, the patent does not block.

In the second phase, 5 to 3 years pre-expiry, the company files formulation patents covering specific excipient combinations, drug delivery mechanisms (matrix systems, osmotic systems, film-coating compositions), and manufacturing processes. These patents are filed in conjunction with supplemental NDAs (sNDAs) that add the new formulation to the Orange Book listing. The 30-month stay attached to an Orange Book-listed formulation patent can push effective generic entry 2 to 3 years beyond composition-of-matter expiry even if the patent is ultimately invalidated.

In the third phase, within 3 years of expiry, three further mechanisms come into play: method-of-use patents covering newly approved or newly discovered indications, pediatric formulations attracting 6-month pediatric exclusivity under the Best Pharmaceuticals for Children Act, and Risk Evaluation and Mitigation Strategy (REMS) programs that can complicate generic REMS-sharing negotiations.

For a generic company’s IP team, building an evergreening patent map for each ANDA target, charting not just expiry dates but the vulnerability scores for each secondary patent in the stack, is the foundational analytical task. The ML tools described in Phase 1 are directly applicable here.

Key Takeaways: Formulation Development and IP

CNN-based spectroscopic deformulation compresses the initial analytical characterization timeline from weeks to hours, providing a high-confidence starting hypothesis for excipient composition. QSPR and GNN models enable preliminary BCS classification and solubility prediction from molecular structure before any wet lab work begins. Bayesian optimization reduces physical formulation batch counts by 50% to 70% in validated case studies, with multi-objective Pareto methods handling simultaneous quality attribute constraints. ML stability prediction models identify high-risk API-excipient incompatibilities early and improve the precision of shelf-life predictions from limited accelerated data. Formulation evergreening patents, while generally more vulnerable than composition-of-matter patents, can impose 2- to 3-year effective exclusivity extensions through the 30-month stay mechanism alone, making their NLP-scored vulnerability assessment a core input to timeline planning.

Phase 3: De-Risking Bioequivalence with PBPK-ML Hybrid Models

The bioequivalence study is the most expensive single experiment in generic drug development, and its failure rate, estimated at 15% to 25% across complex product categories, is the primary driver of cost overruns in the ANDA pipeline. ML does not guarantee BE study success. It materially reduces the probability of failure by enabling formulation teams to predict BE study outcomes in silico before any clinical investment is made.

The Architecture of an ML-Enhanced PBPK Model

Physiologically Based Pharmacokinetic (PBPK) models simulate drug absorption, distribution, metabolism, and excretion by representing the human body as a system of interconnected compartments, each corresponding to an anatomical space: the gastrointestinal lumen, the gut wall, the portal vein, the liver, systemic circulation, peripheral tissues. Each compartment has physiologically measured parameters: volume, blood flow, enzyme abundance, transporter expression.

For oral absorption, the Advanced Compartmental Absorption and Transit (ACAT) model implemented in GastroPlus, like the comparable ADAM model in Simcyp, divides the GI tract into nine or more segments. Drug dissolution in each segment depends on local pH, transit time, and the drug’s solubility and dissolution rate from the formulation. The dissolved drug then permeates the gut wall at a rate governed by its intrinsic permeability and the expression of intestinal efflux transporters such as P-glycoprotein.
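The compartmental idea can be illustrated with a deliberately simplified toy model. This is not ACAT or any commercial implementation: it assumes the drug is already dissolved, uses the same hypothetical first-order transit and absorption rate constants in every segment, and collapses the rest of the body into one central compartment.

```python
def simulate_oral_absorption(dose_mg, n_segments=9, k_transit=2.0, k_abs=1.0,
                             k_elim=0.3, dt=0.001, t_end=24.0):
    """Explicit Euler integration of a gut-transit chain feeding one compartment.

    All rate constants are HYPOTHETICAL and in units of 1/hour.
    Returns the final amounts (mg) so that mass balance can be checked.
    """
    gut = [0.0] * n_segments
    gut[0] = dose_mg                 # whole dose starts in the first segment
    central = eliminated = unabsorbed = 0.0

    for _ in range(int(t_end / dt)):
        # Elimination from the central compartment.
        elim = k_elim * central * dt
        central -= elim
        eliminated += elim

        # Transit down the gut and absorption through the gut wall.
        new_gut = gut[:]
        for i in range(n_segments):
            moved = k_transit * gut[i] * dt
            absorbed = k_abs * gut[i] * dt
            new_gut[i] -= moved + absorbed
            if i + 1 < n_segments:
                new_gut[i + 1] += moved
            else:
                unabsorbed += moved  # leaves the last segment unabsorbed
            central += absorbed
        gut = new_gut

    return {"gut": gut, "central": central,
            "eliminated": eliminated, "unabsorbed": unabsorbed}


result = simulate_oral_absorption(100.0)
fraction_absorbed = 1.0 - result["unabsorbed"] / 100.0
print(f"fraction absorbed: {fraction_absorbed:.3f}")
```

With identical rate constants in every segment, the unabsorbed fraction is simply the per-segment pass-through ratio raised to the number of segments; a real absorption model replaces those constants with segment-specific pH, solubility, dissolution, and transporter terms, which is where parameter uncertainty enters.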

The core limitation of traditional PBPK modeling is parameter uncertainty. Experimental determination of every input parameter is not feasible, and literature values carry substantial variability. ML addresses this in three ways.

First, ML regression models trained on clinical PK datasets can estimate the most likely values of uncertain parameters (particularly gut wall first-pass extraction and renal clearance scaling factors) by fitting the PBPK model to observed data and using Bayesian parameter estimation. Second, ML can build the formulation-absorption interface: a sub-model that takes the in vitro dissolution profile (measured in USP apparatus I or II at multiple pH values) as input and predicts the effective in vivo dissolution and absorption rate in each GI segment. This IVIVC sub-model, trained on paired in vitro/in vivo datasets from prior BE studies and clinical trials, is the critical bridge between the formulation laboratory and the PK simulation. Third, ML population variability models replace the default Simcyp or GastroPlus population libraries with company-specific, ethnically and genetically characterized virtual populations drawn from the actual demographics of the planned BE study cohort.

Virtual Population Simulation and 90% CI Pre-calculation

The regulatory standard for bioequivalence under FDA’s 2003 guidance requires that the 90% confidence interval for the geometric mean ratio (GMR) of Test/Reference for both Cmax and AUC (from time zero to last measurable concentration, and extrapolated to infinity) fall entirely within 80.00% to 125.00%. Meeting this criterion in a study with 24 to 36 subjects requires that the point estimate of the GMR be reasonably close to 1.0 and that the intra-subject coefficient of variation (CV) for the PK parameters be low enough to produce a tight confidence interval.

With an ML-enhanced PBPK model, the team runs a Monte Carlo simulation of the planned BE study before a single subject is dosed. The simulation samples thousands of virtual subjects from the variability model, simulates the PK profiles for both the test formulation and the reference product, and computes the GMR and 90% CI from the simulated dataset. If the simulated 90% CI falls clearly within the 80-125% bounds across the majority of Monte Carlo trials, the formulation has strong predicted BE. If the simulated CI approaches or crosses either bound for a meaningful fraction of trials, the formulation needs adjustment before clinical conduct.
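The Monte Carlo logic can be sketched in a few lines. The example below is a simplified illustration, not a PBPK model: it assumes the true Test/Reference ratio (`true_gmr`) and the intra-subject CV (`cv_within`) are supplied as inputs (in practice they would come from the PBPK simulation), it analyzes within-subject log differences without period or sequence effects, and it uses a series approximation for the Student-t quantile. All function names and example values are hypothetical.

```python
import math
import random
import statistics

def t_quantile_approx(p: float, df: int) -> float:
    """Approximate Student-t quantile (series expansion around the normal quantile)."""
    z = statistics.NormalDist().inv_cdf(p)
    return z + (z**3 + z) / (4 * df) + (5 * z**5 + 16 * z**3 + 3 * z) / (96 * df**2)

def simulate_be_trial(n_subjects, true_gmr, cv_within, rng):
    """Simulate one 2x2 crossover trial; return the (lower, upper) 90% CI for the GMR."""
    # Convert the intra-subject CV to a within-subject SD on the log scale.
    sigma_w = math.sqrt(math.log(cv_within**2 + 1))
    # Each subject's log(Test) - log(Reference) difference has SD sqrt(2) * sigma_w.
    diffs = [rng.gauss(math.log(true_gmr), math.sqrt(2) * sigma_w)
             for _ in range(n_subjects)]
    mean_d = statistics.fmean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n_subjects)
    t = t_quantile_approx(0.95, n_subjects - 1)
    return math.exp(mean_d - t * se), math.exp(mean_d + t * se)

def probability_of_be(n_trials, n_subjects, true_gmr, cv_within, seed=0):
    """Fraction of simulated trials whose 90% CI lies entirely within 80.00%-125.00%."""
    rng = random.Random(seed)
    passed = 0
    for _ in range(n_trials):
        lower, upper = simulate_be_trial(n_subjects, true_gmr, cv_within, rng)
        if lower >= 0.80 and upper <= 1.25:
            passed += 1
    return passed / n_trials

# Hypothetical scenario: 24 subjects, predicted GMR of 0.95, 20% intra-subject CV.
print(probability_of_be(n_trials=2000, n_subjects=24, true_gmr=0.95, cv_within=0.20))
```

Sweeping `true_gmr` and `cv_within` over the range the PBPK model considers plausible gives a rough map of where the formulation is robustly bioequivalent and where it is marginal.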

This iterative in silico cycle, which takes hours on a modern compute cluster rather than weeks of lab work and months of clinical execution, allows formulation teams to optimize the dissolution profile specifically to the window that produces reliable BE. The FDA’s guidance on population bioequivalence and individual bioequivalence, and the agency’s increasing acceptance of PBPK submissions for waiver of additional BE studies under the Biopharmaceutics Classification System waiver framework, create a regulatory pathway that rewards companies whose PBPK models are validated and well-documented.

Real-Time Anomaly Detection in BE Trials

Standard BE study conduct involves batch analysis: blood samples are collected from all subjects, stored, shipped to the bioanalytical laboratory, analyzed in batches, and the PK parameters calculated after all data are available. Anomalies in individual subject profiles, whether from dosing errors, protocol deviations, or analytical issues with specific samples, are discovered only in retrospect, sometimes weeks after the study concludes.

Autoencoder neural networks change this by enabling real-time monitoring. An autoencoder is trained on the expected PK profile shape for the drug in question, specifically the pattern of concentrations at each nominal sampling time relative to the dose. Profiles with unusually rapid early absorption, unexpected concentration drops at mid-curve, or anomalous trough values are compressed and reconstructed poorly by the autoencoder, generating high reconstruction errors that flag the profiles for immediate clinical review.
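The reconstruction-error idea can be illustrated without a neural network. The sketch below substitutes a rank-1 linear autoencoder (mathematically, a single principal component fitted by power iteration) for the neural autoencoder described above. The one-compartment profile shape, sampling times, noise level, and percentile threshold are all made-up assumptions for illustration.

```python
import math
import random

def fit_linear_autoencoder(profiles, n_iter=200):
    """Fit the mean profile and leading principal direction by power iteration."""
    n, d = len(profiles), len(profiles[0])
    mean = [sum(p[j] for p in profiles) / n for j in range(d)]
    centered = [[p[j] - mean[j] for j in range(d)] for p in profiles]
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(n_iter):
        # Apply X^T X to v without forming the covariance matrix explicitly.
        scores = [sum(row[j] * v[j] for j in range(d)) for row in centered]
        w = [sum(scores[i] * centered[i][j] for i in range(n)) for j in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return mean, v

def reconstruction_error(profile, mean, v):
    """Squared error after encoding to one latent value and decoding back."""
    centered = [x - m for x, m in zip(profile, mean)]
    code = sum(c * vi for c, vi in zip(centered, v))  # encode
    recon = [code * vi for vi in v]                   # decode
    return sum((c - r) ** 2 for c, r in zip(centered, recon))

def pk_profile(times, scale, ka=1.0, ke=0.2):
    """Hypothetical one-compartment oral profile (arbitrary concentration units)."""
    return [scale * ka / (ka - ke) * (math.exp(-ke * t) - math.exp(-ka * t))
            for t in times]

times = [0.5, 1, 2, 3, 4, 6, 8, 12]  # nominal sampling times in hours (assumed)
rng = random.Random(1)
training = [[c + rng.gauss(0, 0.02) for c in pk_profile(times, rng.uniform(0.8, 1.2))]
            for _ in range(200)]
mean, v = fit_linear_autoencoder(training)

# Alert threshold: roughly the 99th percentile of training reconstruction errors.
train_errors = sorted(reconstruction_error(p, mean, v) for p in training)
threshold = train_errors[int(0.99 * len(train_errors))]

# A profile with unusually rapid early absorption (ka five times faster).
anomalous = pk_profile(times, 1.0, ka=5.0)
print(reconstruction_error(anomalous, mean, v) > threshold)
```

A production system would use a nonlinear model and a threshold justified during validation; the point here is only that profiles whose shape differs from the training pattern reconstruct poorly.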

Real-time flagging allows the clinical operations team to investigate within hours: Was the dose administered correctly? Did the subject consume food during the fasting period? Was there a sample labeling error? In many cases, the anomaly can be attributed to a protocol deviation, documented and adjudicated before the study ends, rather than discovered during the PK analysis and requiring a post-hoc statistical defense to regulators. This shifts quality assurance from reactive to prospective and reduces the proportion of studies that require FDA queries about outlier subjects during the review period.

Intelligent Subject Selection and Genotype-Based Stratification

High intra-subject PK variability is the principal technical reason BE studies fail. For drugs metabolized primarily by CYP2D6, CYP2C19, or CYP2C9, pharmacogenomic polymorphisms create subpopulations of poor metabolizers, intermediate metabolizers, and ultra-rapid metabolizers with dramatically different plasma concentration profiles. Including a disproportionate number of extreme metabolizers in one sequence (arm) of a crossover study can inflate the variance of the GMR estimate and widen the 90% CI to the point where it crosses the 80% or 125% boundary.

ML classifiers trained on historical BE data can identify the subject-level genotypic and phenotypic covariates that predict extreme PK outcomes for drugs cleared by specific metabolizing enzymes. This analysis supports two enrollment strategies. The first is prospective genotyping with balanced stratification: pre-screen subjects for relevant polymorphisms and ensure that each sequence of the crossover design contains a balanced representation of metabolizer phenotypes. The second, applicable to highly variable drugs and supportable under the FDA’s guidance for that category, is to prospectively exclude subjects with genotypes known to produce extreme outlier profiles and to document the exclusion criterion in the protocol with appropriate regulatory justification.
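The first strategy, balanced stratification, reduces to a small randomization routine. The sketch below assumes subjects have already been genotyped and labeled with a metabolizer phenotype, and it interprets the "arms" of a two-period crossover as the two treatment sequences (TR and RT). The function name, phenotype labels, and subject IDs are hypothetical.

```python
import random
from collections import Counter, defaultdict

def stratified_sequence_assignment(subjects, seed=0):
    """Assign each subject to sequence 'TR' or 'RT', balanced within phenotype.

    subjects: dict mapping subject_id -> metabolizer phenotype label.
    """
    rng = random.Random(seed)
    by_stratum = defaultdict(list)
    for subject_id, phenotype in subjects.items():
        by_stratum[phenotype].append(subject_id)

    assignment = {}
    for phenotype, ids in sorted(by_stratum.items()):
        ids = sorted(ids)
        rng.shuffle(ids)
        # Alternate sequences within the stratum; the random start means an
        # odd-sized stratum does not systematically favor one sequence.
        start = rng.randrange(2)
        for k, subject_id in enumerate(ids):
            assignment[subject_id] = ("TR", "RT")[(k + start) % 2]
    return assignment

# Hypothetical cohort of 24 pre-screened subjects.
phenotypes = ["poor"] * 5 + ["intermediate"] * 9 + ["normal"] * 10
subjects = {f"S{i:02d}": p for i, p in enumerate(phenotypes)}
assignment = stratified_sequence_assignment(subjects, seed=42)
print(Counter((subjects[s], seq) for s, seq in assignment.items()))
```

Within each phenotype the two sequences differ by at most one subject. Overall balance can still be off by the number of odd-sized strata, which a real randomization plan would handle explicitly.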

The FDA’s guidance on highly variable drugs already acknowledges the statistical challenges posed by high PK variability and permits a Reference-Scaled Average Bioequivalence (RSABE) approach for drugs with intra-subject CV above 30% for Cmax. ML-based subject selection optimization works in parallel with RSABE to reduce study size requirements and improve the probability of meeting the scaled acceptance criterion.
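To make the scaling concrete, the sketch below computes the acceptance limits implied by reference scaling for a given within-subject CV of the reference product. It assumes the regulatory constant of 0.25 and the within-subject SD cutoff of 0.294 used in FDA's published RSABE method, as we understand it. The actual RSABE test is a 95% upper confidence bound on a linearized criterion plus a point-estimate constraint, so these "implied limits" are an intuition aid, not the decision rule.

```python
import math

SIGMA_W0 = 0.25        # regulatory constant in FDA's RSABE method (assumed here)
S_WR_CUTOFF = 0.294    # within-subject SD above which scaling applies (assumed here)

def within_subject_sd(cv: float) -> float:
    """Convert an intra-subject CV (e.g. 0.40 for 40%) to a log-scale SD."""
    return math.sqrt(math.log(cv**2 + 1))

def rsabe_implied_limits(cv_reference: float):
    """Return (lower, upper) limits on the GMR implied by reference scaling."""
    s_wr = within_subject_sd(cv_reference)
    if s_wr < S_WR_CUTOFF:
        return 0.80, 1.25  # unscaled average bioequivalence limits
    k = math.log(1.25) / SIGMA_W0
    return math.exp(-k * s_wr), math.exp(k * s_wr)

for cv in (0.20, 0.40, 0.50):
    lower, upper = rsabe_implied_limits(cv)
    print(f"CV {cv:.0%}: {lower:.4f} to {upper:.4f}")
```

The widening of the limits as reference variability grows is what lets a highly variable drug pass with a feasible sample size, and any reduction in variability from subject selection compounds with it.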

Investment Strategy: BE Failure Rate as a Portfolio Quality Metric

Generic drug companies that have deployed ML-enhanced PBPK modeling as a standard part of their development workflow should, over a three- to five-year period, show measurably lower BE study failure rates than the industry baseline. That improvement does not appear in quarterly earnings directly, but it shows up in pipeline throughput: more approved ANDAs per dollar of R&D spend, shorter average time from IND-equivalent work to approval, and fewer surprise Complete Response Letters attributable to inadequate bioequivalence data.

Investors evaluating the R&D efficiency of generic companies should ask for BE study pass rates by product category as part of due diligence. A company showing a consistent first-attempt BE pass rate above 85% for complex formulations, compared to an industry average of 75% to 80% for the same categories, is generating that outperformance from superior formulation science, superior ML-assisted simulation, or both. Either way, it is a durable competitive advantage.

Key Takeaways: Bioequivalence De-Risking

ML-enhanced PBPK models improve the precision of in vivo absorption prediction by building data-driven IVIVC sub-models that replace literature-derived estimates with company-specific, empirically calibrated parameters. Monte Carlo simulation of the complete BE study design, using ML-generated virtual populations, allows 90% CI pre-calculation before clinical conduct, enabling formulation optimization against the regulatory acceptance criterion directly. Autoencoder-based real-time anomaly detection during study conduct converts what was a batch quality control process into a prospective monitoring system, reducing the proportion of studies requiring post-hoc outlier adjudication. Genotype-based stratification models that identify high-variability subject phenotypes can meaningfully reduce the 90% CI width for drugs with CYP polymorphism-driven variability. For highly variable drugs, ML-guided enrollment strategies work in combination with FDA’s RSABE framework to reduce study size requirements.

Phase 4: Regulatory Intelligence and Quality by Design in Manufacturing

Regulatory submission and manufacturing quality are the two final phases where machine learning has historically received less attention than formulation and BE prediction. That gap is closing quickly, because the financial exposure from a Complete Response Letter (CRL) or a manufacturing-related batch failure is as damaging as a failed BE study, and often more damaging in terms of regulatory relationship capital.

NLG for ANDA Dossier Automation and Consistency Checking

An ANDA submitted under the Common Technical Document (CTD) format in Module 3 (Quality), Module 2.3 (Quality Overall Summary), and Module 5 (Clinical Study Reports) contains tens to hundreds of thousands of pages of structured technical writing. The text in these modules follows highly standardized formats with specific section structures defined by FDA guidance documents and ICH guidelines. Yet the documents are still largely drafted manually, creating opportunities for internal inconsistencies that generate Information Request letters or Refuse-to-Receive determinations.

Natural Language Generation (NLG) models, trained on prior approved submissions from the same company or from published FDA-approved NDA precedents, can draft standard sections of the CTD from structured data inputs. A stability summary section (CTD section 3.2.P.8) requires specific narrative elements: a description of the stress testing conditions, the degradation products observed, the accelerated stability results, and the real-time data through the proposed shelf life. An NLG model with access to the structured stability database can generate a compliant, accurately referenced draft of this section in minutes rather than hours.

More immediately valuable is automated consistency checking across the entire dossier. An NLP model can scan the complete submission and flag every instance where a batch number, test result, specification limit, analytical method version, or impurity identity is stated, then verify that each instance is consistent with every other instance where the same parameter appears. This cross-checking task, which in a manual review requires a dedicated team working for weeks, can be completed by an NLP system in hours with effectively zero miss rate on direct numeric inconsistencies.
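A stripped-down version of the cross-checking step is shown below. It is a rule-based stand-in for the NLP model: a single regular expression extracts statements of the form "parameter for batch X was N%" and reports any parameter and batch pair that appears with more than one value. The pattern, the parameter list, the section identifiers, and the sample text are illustrative assumptions, and real dossier language is far more varied.

```python
import re
from collections import defaultdict

# Matches e.g. "Assay for batch B1001 was 99.2%" (hypothetical phrasing).
STATEMENT = re.compile(
    r"(?P<param>assay|water content|total impurities)\s+(?:for|of)\s+batch\s+"
    r"(?P<batch>[A-Z0-9-]+)\s+(?:is|was|=|:)\s+(?P<value>\d+(?:\.\d+)?)\s*%",
    re.IGNORECASE,
)

def find_inconsistencies(sections):
    """Return {(parameter, batch): {value: [section_ids]}} for conflicting values.

    sections: dict mapping a section identifier to its text.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for section_id, text in sections.items():
        for match in STATEMENT.finditer(text):
            key = (match["param"].lower(), match["batch"].upper())
            seen[key][float(match["value"])].append(section_id)
    # Keep only parameter/batch pairs reported with more than one distinct value.
    return {key: dict(values) for key, values in seen.items() if len(values) > 1}

sections = {
    "3.2.P.5.4": "Assay for batch B1001 was 99.2%. Water content for batch B1001 was 1.8%.",
    "2.3.P.5": "Assay of batch B1001 is 99.8%. Water content of batch B1001 is 1.8%.",
}
print(find_inconsistencies(sections))
```

Here the assay value for the same batch differs between the two sections and is flagged, while the matching water content values are not.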

Process Analytical Technology (PAT) and Real-Time Release Testing

The FDA’s PAT framework, articulated in the 2004 guidance document and expanded through subsequent guidance on continuous manufacturing, establishes the regulatory basis for monitoring critical quality attributes (CQAs) of drug products during manufacturing rather than testing finished goods before release. Real-Time Release Testing (RTRT) replaces end-of-batch testing with continuous monitoring data, with ML models serving as the inference engine that translates sensor streams into quality attribute predictions.

In a tablet manufacturing line equipped with NIR probes at the blender, granulator, and tablet press, the ML model receives a continuous stream of spectral data. The model has been trained to predict blend uniformity (the distribution of API concentration within the powder blend), granule moisture content, and tablet hardness and dissolution from the NIR spectra at each measurement point. If the predicted blend uniformity falls outside the acceptable range at the blender exit, the process can be flagged for additional blending cycles before the powder proceeds to compression, preventing an entire batch from being manufactured on out-of-specification blend.

The ICH Q8 (Pharmaceutical Development), Q9 (Quality Risk Management), and Q10 (Pharmaceutical Quality System) guidelines form the regulatory framework within which these PAT systems must operate. PAT model validation under GMP is a significant undertaking, but FDA has been consistently supportive of well-validated PAT applications and has approved RTRT in several major ANDA dossiers. For manufacturers of high-volume generic products, the yield improvement from catching process deviations in real time, rather than discovering them in end-of-batch release testing, can justify the PAT validation investment within two to three product campaigns.

Autoencoder-Based Batch Anomaly Detection

The Quality by Testing paradigm releases batches based on offline tests of a small sample. The Quality by Design paradigm builds quality into the process. Autoencoder networks trained on sensor data from validated ‘golden batches’ provide the anomaly detection layer that makes QbD operational.

A golden batch is a production batch that meets all specifications and has been selected as a reference. The autoencoder learns the high-dimensional pattern of manufacturing data from a set of golden batches: the time series of blender torque, granulator fluid bed temperature, tablet press main compression force and ejection force, coating solution spray rate and inlet temperature, and drum outlet temperature. This pattern is compressed into a lower-dimensional latent space representation and then reconstructed. The model minimizes reconstruction error during training on golden batch data.

When a new production batch runs, the same sensor streams feed into the trained autoencoder in real time. A batch that deviates from the golden batch pattern, whether due to raw material variability, environmental changes, or equipment drift, produces higher reconstruction errors. The system generates an alert when the reconstruction error exceeds the threshold established during validation, allowing the operator to intervene before the deviation propagates through the manufacturing process.

The key regulatory requirement for this application is that the autoencoder model must be validated as a critical process monitoring tool under GMP, with documented evidence of its detection sensitivity, specificity, and the statistical basis for the alert threshold. The FDA’s process validation guidance from 2011 and the more recent guidance on continuous manufacturing explicitly accommodate ML-based process monitoring tools within the Quality by Design framework.

Predictive Maintenance ROI on Manufacturing Equipment

Unplanned equipment downtime in a pharmaceutical manufacturing facility is more expensive than it appears on the maintenance cost line. The direct cost includes repair labor, replacement parts, and production delay penalties. The indirect cost includes the stability samples that may need to be restarted if the delay is long enough to create a gap in the batch campaign, the investigation and documentation required under GMP deviation procedures, the regulatory notification obligations if the delay affects an approved product’s supply commitments, and the potential recall exposure if defective product escaped before the failure was detected.

Predictive maintenance ML models, trained on vibration, temperature, pressure, and current draw data from manufacturing equipment, identify degradation signatures 72 to 240 hours before failure in validated deployments. The economic case for implementation is straightforward. Consider a tablet press that serves a $200 million product line, where downtime represents $500,000 per day in lost contribution margin and each failure carries an investigation cost of $150,000. A single avoided catastrophic failure on that press can recover the model development and sensor infrastructure cost within the first year.
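The arithmetic behind that claim is easy to reproduce. In the sketch below, the daily lost margin and investigation cost come from the example above; the program cost of $1.5 million and the five days of downtime are placeholder assumptions chosen only to show the calculation, not figures from any real deployment.

```python
DAILY_LOST_MARGIN = 500_000    # lost contribution margin per day (from the text)
INVESTIGATION_COST = 150_000   # deviation investigation cost (from the text)

def avoided_failure_cost(downtime_days: float) -> float:
    """Cost avoided by preventing one failure with the given downtime."""
    return downtime_days * DAILY_LOST_MARGIN + INVESTIGATION_COST

def break_even_downtime_days(program_cost: float) -> float:
    """Days of avoided downtime at which one prevented failure pays for the program."""
    return max(0.0, (program_cost - INVESTIGATION_COST) / DAILY_LOST_MARGIN)

def first_year_roi(program_cost: float, downtime_days: float, failures_avoided: int = 1) -> float:
    """Simple ROI: (avoided cost - program cost) / program cost."""
    benefit = failures_avoided * avoided_failure_cost(downtime_days)
    return (benefit - program_cost) / program_cost

# ASSUMPTIONS (hypothetical): program cost and downtime per avoided failure.
program_cost = 1_500_000
downtime_days = 5

print(avoided_failure_cost(downtime_days))       # total avoided cost in dollars
print(break_even_downtime_days(program_cost))    # days needed to break even
print(first_year_roi(program_cost, downtime_days))
```

Under these placeholder inputs, a single prevented failure breaks even once it would have cost a little under three days of downtime; the conclusion is sensitive to both assumed values.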

Key Takeaways: Regulatory Submission and Manufacturing Quality

NLG drafting of standardized CTD sections, combined with NLP-based cross-document consistency checking, reduces ANDA submission deficiency rates attributable to clerical errors and internal inconsistencies. PAT-enabled RTRT, with ML models translating NIR and process sensor streams into real-time CQA predictions, shifts quality assurance from retrospective batch testing to prospective process control. Autoencoder-based batch anomaly detection operationalizes the Quality by Design framework at the sensor level, providing a GMP-compliant early warning system for process deviations. Predictive maintenance models on manufacturing equipment produce single-year ROI in high-throughput production environments by avoiding the full cost structure of unplanned downtime under GMP.

Building the ML Infrastructure in a GxP-Compliant Environment

The application-level benefits described above are achievable only with a functioning ML infrastructure. That infrastructure has three components: a data architecture that makes historical scientific and operational data accessible for model training; a technology stack that supports model development, deployment, and monitoring; and a GxP-compliant MLOps framework that satisfies regulatory auditability requirements. Each component has specific pharmaceutical-industry requirements that differ from ML infrastructure in consumer technology or financial services.

FAIR Data Principles Applied to Pharmaceutical ML

The FAIR Guiding Principles, originally articulated by Wilkinson et al. in Scientific Data (2016), have become the de facto standard for research data management and are increasingly referenced in FDA and EMA discussions of data integrity in AI/ML applications. For a generic pharmaceutical company, implementing FAIR is less a philosophical commitment than a practical necessity: without it, the historical data that is the primary raw material for ML models is inaccessible, inconsistently formatted, and unreliable.

Findability requires that every dataset, from a specific batch of dissolution experiments to a 15-year archive of BE study PK data, be indexed in a searchable metadata registry with persistent unique identifiers. Accessibility requires that authorized users and computational systems can retrieve the data through a standard protocol, which in practice means moving from network file shares and local laboratory databases to a centralized data lake with a governed API layer. Interoperability requires that datasets use common vocabularies and ontologies (for example, IUPAC chemical nomenclature for compounds, the ISO 11238 substance identification framework for APIs, and the NCI Thesaurus for pharmaceutical process terminology) so that data from the stability laboratory can be joined to data from the manufacturing floor without manual transformation. Reusability requires that datasets carry rich provenance metadata: the instrument used, the analytical method version, the analyst, the environmental conditions, and the lineage of the sample back to the batch of origin.

The practical starting point for most companies is a data audit. Before building any ML model, map the existing data landscape: identify what datasets exist, where they are stored, what format they are in, and how they can be linked to other datasets through common keys. That audit typically reveals that 60% to 70% of the potentially valuable data is either inaccessible in legacy systems or lacks the metadata needed to make it useful for model training.

Algorithm Selection by Phase and Risk Profile

Not all ML applications in pharmaceutical development carry the same regulatory risk, and the algorithm selection should reflect that. A model used for internal formulation guidance carries lower regulatory risk than a model whose output directly informs a PBPK simulation submitted in an ANDA dossier. The principle of regulatory parsimony applies: use the simplest model that adequately solves the problem, because simpler models are easier to validate, easier to explain to regulators, and more robust to distribution shift when the model is applied to new products or new manufacturing conditions.

For patent scoring and commercial forecasting, gradient boosting methods (XGBoost, LightGBM) are the appropriate primary choice. They handle mixed-type feature sets natively, are robust to missing data, and pair well with SHAP-based feature attributions that explain individual predictions. Deep neural networks can provide marginal performance improvements on these tasks but at a significant cost in interpretability and validation complexity.

For spectroscopic deformulation and PAT monitoring, CNNs and autoencoders are justified because the input data (spectra, sensor time series) has the structured, high-dimensional format where deep architectures genuinely outperform shallower methods. The validation package for these models should include: the training data provenance and preprocessing record; the model architecture and hyperparameter selection rationale; the performance metrics on a held-out test set that was not seen during training or hyperparameter tuning; the sensitivity and specificity of anomaly detection at the chosen alert threshold; and the results of a robustness test where the model is evaluated on data from conditions outside the training distribution.

For PBPK-ML hybrid models submitted to regulators, the FDA’s 2023 discussion paper on AI/ML in drug development raises the need for model documentation, performance characterization on the intended use population, and a risk assessment of the consequences of model error. Companies planning to include ML-enhanced PBPK results in ANDA submissions should engage the FDA through the Emerging Technology Program before committing to a submission strategy.

MLOps for a GxP Context: Validation, Versioning, Drift Detection, and Audit Trails

MLOps is the set of engineering practices that enables ML models to be developed, deployed, monitored, and updated reliably in production. In a GxP environment, MLOps carries additional obligations that go beyond standard software engineering: model validation as an analytical procedure, version control with regulatory traceability, model drift monitoring as a process control activity, and immutable audit logs as a data integrity requirement.

Model validation in GxP follows a risk-based approach aligned with the validation principles for analytical procedures in ICH Q2(R1), revised as Q2(R2) in 2023. The model is characterized for accuracy (mean absolute prediction error against reference measurements), precision (repeatability and intermediate precision), linearity across the relevant operating range, specificity (ability to distinguish true signals from interference), and robustness to foreseeable variations in input data quality. The validation study design, the acceptance criteria, and the actual results must be documented in a Validation Report that is subject to GMP review.

Version control must be absolute: every change to the model architecture, training data, preprocessing pipeline, or inference code creates a new version with a unique identifier and a complete change record. The model registry, implemented in tools such as MLflow, Weights & Biases, or a validated pharmaceutical LIMS with ML module support, must store not just the model artifact but the complete provenance chain: the exact dataset used for training (identified by its FAIR persistent identifier), the specific compute environment, the hyperparameter configuration, and the validation report.
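One way to make "every change creates a new version" mechanical is to derive the version identifier from the provenance record itself. The sketch below hashes a canonical JSON serialization of the record, so any change to the dataset identifier, environment, hyperparameters, or code revision yields a different identifier. It does not use the API of any particular registry tool, and every field name and value shown is a hypothetical placeholder.

```python
import hashlib
import json

def model_version_id(provenance: dict) -> str:
    """Derive a deterministic version identifier from a provenance record."""
    # Canonical form: sorted keys and fixed separators, so key order is irrelevant.
    canonical = json.dumps(provenance, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]

# Hypothetical provenance record; all identifiers are placeholders.
provenance = {
    "dataset_pid": "example-dataset-pid-0001",
    "code_revision": "example-commit-hash",
    "environment": {"python": "3.11", "container": "example-image:1.0"},
    "hyperparameters": {"learning_rate": 0.05, "n_estimators": 400},
    "validation_report": "VR-EXAMPLE-001",
}
version = model_version_id(provenance)

# Changing a single hyperparameter produces a different identifier.
retrained = {**provenance, "hyperparameters": {"learning_rate": 0.10, "n_estimators": 400}}
print(version, model_version_id(retrained))
```

The hash complements the registry's change record rather than replacing it: it gives auditors a quick check that the deployed artifact's stated provenance has not been altered.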

Drift monitoring compares the statistical distribution of new input data against the training data distribution, using metrics such as Population Stability Index (PSI) or KL divergence. When drift exceeds a pre-defined threshold, the drift monitoring system generates an alert and initiates a revalidation workflow. In manufacturing applications, drift can arise from new raw material suppliers, seasonal environmental variation, or gradual equipment wear; in commercial forecasting applications, it can arise from changes in prescribing behavior or formulary structures. The drift monitoring protocol should specify what constitutes acceptable versus unacceptable drift, who reviews the alert, and what the remediation procedure is.
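A minimal PSI calculation for a single numeric input looks like the following. Bin edges are taken from quantiles of the training (reference) data, and PSI is the sum over bins of (current share minus reference share) times the log of their ratio. The bin count, the small floor that avoids division by zero, and the synthetic data are assumptions. The 0.1 and 0.25 cut-offs often quoted for PSI are industry rules of thumb, not regulatory limits, so the protocol's own thresholds should be used.

```python
import math
import random

def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a current sample of one numeric feature."""
    ref_sorted = sorted(reference)
    # Interior bin edges at the reference quantiles.
    edges = [ref_sorted[int(len(ref_sorted) * k / n_bins)] for k in range(1, n_bins)]

    def proportions(values):
        counts = [0] * n_bins
        for x in values:
            counts[sum(x > e for e in edges)] += 1  # index = number of edges below x
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    p_ref, p_cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(p_ref, p_cur))

rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]  # training-time inputs
stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]     # same distribution
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]    # mean shifted by one SD

print(population_stability_index(reference, stable))
print(population_stability_index(reference, shifted))
```

In a monitoring job this would run per feature on a rolling window, with the alert and revalidation workflow triggered by whatever threshold the drift protocol specifies.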

Key Takeaways: Infrastructure and MLOps

FAIR data principles, applied at the enterprise level, convert the historical scientific data scattered across laboratory systems and electronic batch records into a structured, trainable asset. Algorithm selection should balance predictive performance against regulatory interpretability requirements: gradient boosting for structured tabular tasks, CNN and autoencoder architectures for spectral and sensor data where deep learning provides genuine performance advantages. GxP-compliant MLOps requires ICH Q2(R2)-aligned model validation, absolute version control with full provenance traceability, automated drift monitoring, and immutable audit logs, each of which is a regulatory obligation, not an engineering best practice.

Competitive Landscape: Who Is Deploying ML in Generics Today

The generic drug industry’s adoption of ML is uneven. Large, vertically integrated companies with substantial R&D infrastructure have the data density and capital to build sophisticated internal capabilities. Mid-sized companies are increasingly turning to partnerships with AI vendors and contract research organizations with ML competencies. Small specialty generic companies are beginning to access cloud-native ML tools that reduce the infrastructure barrier.

Teva: Data at Scale, Execution Complexity

Teva Pharmaceutical, the world’s largest generic drug company by volume, has both the largest historical database of ANDA development data and the most complex organizational structure through which to deploy ML tools. Teva has publicly discussed investments in AI-driven portfolio optimization, manufacturing quality systems using PAT and process data analytics, and regulatory intelligence platforms. The company’s manufacturing network, spanning more than 40 sites globally with highly variable levels of digital instrumentation, presents a meaningful integration challenge: the same ML model that performs well at a fully instrumented facility in Israel may receive inconsistent or incomplete sensor data from an older tablet press at a site in India or Hungary.

From an IP and R&D perspective, Teva’s litigation history, representing decades of Paragraph IV challenges across hundreds of products, constitutes one of the most valuable training datasets for patent litigation prediction models in the industry. The degree to which Teva has systematically converted that institutional knowledge into structured, ML-trainable data is not publicly disclosed, but it would be the most valuable single source of NLP patent analysis training data in the generic sector.

Sun Pharma and Dr. Reddy’s: Complex Formulation Depth

Sun Pharmaceutical Industries and Dr. Reddy’s Laboratories have each built reputations on complex formulation capabilities, particularly in dermatology (Sun Pharma) and in the U.S. generic market broadly (Dr. Reddy’s). Both companies have made public statements about digital transformation initiatives that include ML components. Sun Pharma’s acquisition of Taro Pharmaceutical and its subsequent investment in dermatological drug delivery science gives it a rich dataset of topical and semisolid formulation results, a category where PBPK-equivalent models (membrane permeation models rather than GI absorption models) are increasingly relevant for demonstrating equivalence.

Dr. Reddy’s has an explicit data science function, disclosed in annual reports, and has partnered with technology vendors for manufacturing analytics. The company’s biopharmaceutics laboratory capabilities, which support its complex oral solid and injectable ANDA pipeline, generate the kind of rich in vitro/in vivo correlation datasets that feed ML-enhanced PBPK models.

Viatris: Integration Challenge as Strategic Risk

Viatris, formed by the 2020 merger of Mylan and Pfizer’s Upjohn division, has the product breadth and global manufacturing footprint to benefit enormously from ML-driven portfolio optimization and manufacturing quality systems. The integration challenge, combining two legacy data architectures across more than 35 manufacturing sites and hundreds of active ANDAs, is also one of the most complex data harmonization projects in the generic industry. Until the underlying data is harmonized into a FAIR-compliant architecture, the potential ML applications remain aspirational.

The company’s commercial intelligence capabilities, particularly for pricing and contracting in the U.S. market, are where ML deployment is likely furthest advanced, because the data infrastructure for commercial analytics (IQVIA, Symphony Health) is external and standardized, unlike the highly idiosyncratic R&D and manufacturing data that varies by site and legacy system.

AI-Native Entrants and Specialty Platforms

A small but growing number of companies are building generic drug development capabilities natively around ML infrastructure, rather than retrofitting ML tools onto legacy scientific workflows. These companies typically focus on a specific category, complex inhalation devices, topical semisolids, or biosimilars, where the ML-addressable formulation and BE challenges are most acute and where the regulatory risk premium for incumbents is highest.

Platforms like Kebotix (now part of a broader materials informatics ecosystem), Schrödinger (which has pharma-grade molecular property prediction tools directly applicable to QSPR modeling), and emerging contract formulation organizations with AI-native development platforms are creating a vendor ecosystem that gives mid-sized generic companies access to specific ML capabilities without building full in-house data science teams.

Investment Strategy: Evaluating ML Maturity Across the Generic Sector

Investors assessing ML deployment maturity in generic companies should look for five indicators in public disclosures, earnings calls, and due diligence: disclosed investment in data infrastructure (beyond generic statements about ‘digital transformation’); specific ML use cases mentioned with operational context (BE prediction, PAT monitoring, patent scoring); evidence of reduced BE failure rates or ANDA approval timelines in recent pipeline cohorts; partnerships with or acquisitions of AI/ML platform companies in the pharmaceutical domain; and regulatory submissions that explicitly reference AI/ML tools (where disclosed, for example in public FDA review documentation or the EMA’s public assessment reports). Companies that can provide quantitative evidence of improved development efficiency attributable to ML deployment are making a capital allocation argument that the market is not yet pricing efficiently.

Challenges, Ethics, and the Regulatory Trajectory for AI/ML

Explainability (SHAP, LIME) and Regulatory Acceptance

The regulatory concern about ML in pharmaceutical decision-making is specific: can the company explain why the model produced a particular output, and is that explanation scientifically coherent? This is most acute for models used in regulatory submissions, where the FDA’s scientific reviewers must be able to evaluate the validity of the model’s contribution to the submission.

SHAP (SHapley Additive exPlanations), derived from cooperative game theory, decomposes a model’s prediction into contributions from each input feature for each individual prediction. For a dissolution prediction model, SHAP analysis might reveal that, for a specific test formulation, the model’s prediction of slow dissolution is driven primarily by a high concentration of a particular grade of HPMC combined with a low concentration of crospovidone, with the API particle size distribution contributing a secondary effect. This output is scientifically interpretable: a formulation scientist recognizes the mechanistic logic of those feature contributions and can validate them against physical reasoning about gel layer formation and disintegrant competition.
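The decomposition can be shown exactly for a toy model. The sketch below computes Shapley values by brute-force enumeration of feature coalitions, replacing "absent" features with a baseline formulation. This is the underlying game-theoretic definition, not the algorithm or API of the `shap` library, and the dissolution model, its coefficients, and both formulations are invented for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi

def predict_dissolution(z):
    """Hypothetical model: % dissolved at 30 min from (HPMC %, crospovidone %, d90 um)."""
    hpmc, crospovidone, d90 = z
    return 95.0 - 2.0 * hpmc + 3.0 * crospovidone - 0.1 * d90 + 0.15 * hpmc * crospovidone

features = ["hpmc_pct", "crospovidone_pct", "api_d90_um"]
test_formulation = [30.0, 2.0, 50.0]   # made-up test formulation
reference_point = [20.0, 4.0, 40.0]    # made-up baseline formulation

phi = shapley_values(predict_dissolution, test_formulation, reference_point)
for name, contribution in zip(features, phi):
    print(f"{name}: {contribution:+.2f}")
```

The contributions always sum to the difference between the prediction for the test formulation and the prediction for the baseline, which is the property that makes the attribution auditable. Enumeration scales exponentially with the number of features, which is why practical tools rely on approximations or tree-specific algorithms.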

LIME (Local Interpretable Model-Agnostic Explanations) works differently: it fits a simple, locally linear surrogate to the complex model’s behavior in the vicinity of a specific prediction. For classification tasks like at-risk launch probability, the surrogate’s coefficients show which input features push the prediction toward or away from the predicted outcome near that point, giving the user an approximate picture of the local decision boundary.
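The local-surrogate idea can be sketched from scratch as below: perturb the instance, query the black-box model, weight each sample by proximity, and fit a weighted linear regression. This is an illustration of the technique, not the API of the `lime` package. The classifier, its three features, the perturbation scales, and the kernel width are hypothetical assumptions.

```python
import math
import random

# Hypothetical black-box classifier: probability that an at-risk launch succeeds.
# The functional form and coefficients are invented for illustration.
def launch_success_prob(x):
    claim_breadth, prior_art_hits, years_to_expiry = x
    z = -1.0 - 2.0 * claim_breadth + 0.4 * prior_art_hits + 0.15 * years_to_expiry
    return 1.0 / (1.0 + math.exp(-z))

def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def lime_explain(model, instance, scales, n_samples=500, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around `instance`.

    Returns (intercept, coefficients); each coefficient is the local change in
    the model output per one `scale` unit of the corresponding feature.
    """
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in instance]      # standardized perturbation
        x = [xi + zi * s for xi, zi, s in zip(instance, z, scales)]
        dist2 = sum(zi * zi for zi in z)
        rows.append([1.0] + z)                           # intercept column + features
        targets.append(model(x))
        weights.append(math.exp(-dist2 / kernel_width ** 2))
    p = len(instance) + 1
    # Weighted normal equations: (X^T W X) beta = X^T W y
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(p)]
            for i in range(p)]
    xtwy = [sum(w * r[i] * y for r, y, w in zip(rows, targets, weights)) for i in range(p)]
    coef = solve(xtwx, xtwy)
    return coef[0], coef[1:]

instance = [0.5, 3.0, 4.0]   # claim breadth, prior-art hits, years to expiry
scales = [0.2, 1.0, 2.0]     # assumed perturbation size per feature
intercept, coefs = lime_explain(launch_success_prob, instance, scales)
for name, c in zip(["claim_breadth", "prior_art_hits", "years_to_expiry"], coefs):
    print(f"{name:>16}: {c:+.3f}")
```

The sign and relative size of each coefficient indicate which features move the prediction locally. The explanation holds only near the chosen instance and depends on the assumed perturbation scales and kernel width.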

Both methods should be incorporated into the validation package for any ML model used in a regulatory context, and their outputs should be reviewed by subject matter experts as part of model validation to confirm that the model is learning scientifically sound relationships rather than spurious correlations.

Algorithmic Bias in BE Populations

BE study populations are systematically non-representative. FDA guidance generally recommends healthy volunteers, which excludes the elderly, patients with comorbidities, and patients on concomitant medications. The populations enrolled in BE studies at many CROs are also demographically skewed, overrepresenting young men of specific ethnic backgrounds who are disproportionately available and willing to participate in paid clinical studies.

When ML models are trained on BE study data from these populations, the models inherit these demographic biases. A PBPK virtual population model that does not adequately represent post-menopausal women, elderly patients with reduced GI motility, or patients of East Asian descent with specific CYP2C19 polymorphism frequencies will produce BE predictions that are accurate for the training population but unreliable for the actual patient population that will use the drug after approval.

Companies should conduct systematic subgroup performance analysis on all PBPK-ML models before regulatory submission, specifically evaluating whether the model’s prediction accuracy is consistent across sex, age, and relevant ethnic subgroups. Where significant performance gaps are identified, the model should be supplemented with additional virtual population data for the underrepresented groups, or the submission should include an explicit discussion of model limitations with respect to specific populations.
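A subgroup check of this kind can be sketched as follows. The validation records, the choice of mean absolute error on the predicted versus observed AUC ratio, and the 1.5x flagging tolerance are all hypothetical assumptions for illustration, not a regulatory standard.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical validation records:
# (sex, age band, predicted test/reference AUC ratio, observed AUC ratio)
records = [
    ("M", "18-40", 0.98, 1.00),
    ("M", "18-40", 1.03, 1.01),
    ("F", "18-40", 0.95, 0.97),
    ("F", "41-64", 1.02, 0.99),
    ("M", "41-64", 0.97, 1.00),
    ("F", "65+",   1.01, 0.89),
    ("M", "65+",   0.99, 0.90),
    ("F", "65+",   1.00, 0.88),
]

def subgroup_mae(records, key_index):
    """Mean absolute prediction error per subgroup (key_index 0 = sex, 1 = age band)."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key_index]].append(abs(rec[2] - rec[3]))
    return {group: mean(errors) for group, errors in groups.items()}

def flag_gaps(per_group, overall, tolerance=1.5):
    """Subgroups whose error exceeds `tolerance` times the overall error."""
    return sorted(g for g, mae in per_group.items() if mae > tolerance * overall)

overall_mae = mean(abs(r[2] - r[3]) for r in records)
by_sex = subgroup_mae(records, 0)
by_age = subgroup_mae(records, 1)

print("flagged by sex:", flag_gaps(by_sex, overall_mae))
print("flagged by age:", flag_gaps(by_age, overall_mae))
```

In this invented data the oldest age band is flagged while neither sex is, which is the kind of result that would prompt either virtual population augmentation for that group or a documented model limitation.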

FDA and EMA Guidance Trajectory for AI/ML in Drug Development

The FDA’s 2023 discussion paper, ‘Using Artificial Intelligence & Machine Learning in the Development of Drug & Biological Products,’ is among the most explicit regulatory statements on ML in pharmaceutical development to date. It signals that ML tools used to support development decisions should be documented in the submission with a description of the training data, model architecture, intended use, and performance characterization. It does not call for ML models to be validated in the same manner as regulated analytical instruments, but it does expect the company to be able to defend the scientific validity of the model’s contributions.

The EMA’s 2023 reflection paper on AI in the medicinal product lifecycle adopts a similar risk-proportionate framework, requiring more rigorous documentation for ML used in GxP contexts than for ML used in exploratory research. The EMA has also established a collaboration framework with the FDA on AI/ML standards, suggesting that regulatory requirements will converge across jurisdictions over the next three to five years.

Both agencies emphasize pre-submission meetings and early dialogue for novel AI/ML applications. For generic companies planning to include ML-enhanced PBPK modeling in an ANDA submission, or planning to cite ML-generated stability predictions in a CTD Module 3, proactive engagement through FDA’s Emerging Technology Program or EMA’s Innovation Task Force will materially reduce the risk of a CRL based on insufficient documentation of the ML methodology.

Key Takeaways: Challenges and Regulatory Trajectory

SHAP and LIME explainability methods are not optional add-ons: they should be treated as core components of the validation documentation for any ML model used in regulatory-facing applications, because they provide the mechanistic interpretability that scientific reviewers need to evaluate the model’s validity. Demographic bias in BE study populations propagates into PBPK-ML models through the training data and must be explicitly characterized and addressed through virtual population augmentation or documented as a model limitation. The FDA and EMA are converging on a risk-proportionate framework for ML in drug development that rewards early engagement, transparent documentation, and scientifically defensible model validation over black-box performance claims.

The Five-Year Technology Roadmap

Self-Driving Formulation Laboratories (2025-2027)

The closed loop between computational optimization and physical experimentation is already operational in academic settings and select industrial research laboratories. The components exist: Bayesian optimization algorithms that design the next experiment, liquid-handling robotic platforms that execute dispensing and mixing with sub-milligram accuracy, miniaturized dissolution testing apparatus with automated sampling, and inline spectroscopic analysis that returns results to the optimization loop without human intervention.
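The optimization half of that loop can be sketched in a few dozen lines. The example below uses a Gaussian-process surrogate with an upper-confidence-bound rule to choose the next experiment over a single formulation variable. The `run_experiment` function is a hypothetical stand-in for the robotic batch and dissolution measurement, and the response surface, kernel length scale, and exploration weight `kappa` are assumptions for illustration.

```python
import math

def run_experiment(hpmc_pct):
    """Stand-in for robot + dissolution test; returns a score to maximize.
    Hypothetical response surface peaking at 22% HPMC."""
    return -((hpmc_pct - 22.0) / 10.0) ** 2

def rbf(a, b, length=5.0):
    """Squared-exponential kernel with an assumed length scale."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Zero-mean GP posterior mean and standard deviation at x_new."""
    k = [[rbf(xi, xj) + (noise if i == j else 0.0) for j, xj in enumerate(xs)]
         for i, xi in enumerate(xs)]
    k_star = [rbf(xi, x_new) for xi in xs]
    alpha = solve(k, ys)
    v = solve(k, k_star)
    mean = sum(ks * a for ks, a in zip(k_star, alpha))
    var = max(0.0, rbf(x_new, x_new) - sum(ks * vi for ks, vi in zip(k_star, v)))
    return mean, math.sqrt(var)

def closed_loop(candidates, initial, budget, kappa=2.0):
    """Design -> run -> update, repeated for `budget` experiments."""
    xs = list(initial)
    ys = [run_experiment(x) for x in xs]
    for _ in range(budget):
        untested = [c for c in candidates if c not in xs]

        def ucb(c):
            m, s = gp_posterior(xs, ys, c)
            return m + kappa * s          # favor high predicted score or high uncertainty

        nxt = max(untested, key=ucb)
        xs.append(nxt)
        ys.append(run_experiment(nxt))    # result feeds back into the surrogate
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best], xs

candidates = [float(c) for c in range(5, 41)]   # 5% to 40% HPMC in 1% steps
best_x, best_y, tested = closed_loop(candidates, initial=[5.0, 40.0], budget=8)
print(f"best HPMC level tested: {best_x}% (score {best_y:.3f}) after {len(tested)} runs")
```

A real formulation space has many more dimensions and noisy measurements, so a production system would use a dedicated optimization library and a properly tuned surrogate; the loop structure is the part this sketch is meant to show.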

The practical bottleneck for generic companies is the validation of automated systems under GMP, which requires documented qualification of each hardware component and software system at the installation, operational, and performance qualification (IQ/OQ/PQ) levels. As Annex 11 (EU) and 21 CFR Part 11 (US) compliance for automated laboratory systems becomes better understood, and as instrument vendors build qualification packages into their automated platforms, the adoption curve for self-driving labs in GMP-compatible environments will steepen.

Companies that establish self-driving lab capabilities for early-stage formulation work (which does not require full GMP compliance) in 2025-2026 will be positioned to extend those capabilities into GMP formulation development by 2027-2028, compressing average formulation development timelines by 40% to 60% compared to conventional workflows.

Federated Learning Across Generic Consortia (2026-2028)

One of the recurring constraints on ML model quality in the generic industry is dataset size. A single company’s dataset of historical BE studies, while valuable, is small compared to what could be assembled across the industry. But aggregating data across competing companies is legally and commercially impossible through conventional data sharing.

Federated learning offers a technically viable path: individual companies train ML models on their own local datasets and share only the resulting model gradients, not the underlying data, with a central aggregation server. The aggregated model benefits from the collective training data without any company’s proprietary data leaving its own infrastructure. The key technical requirement is that the shared gradients cannot be used to reconstruct any individual participant’s training data, which is addressed through differential privacy mechanisms that add calibrated mathematical noise to the gradients before they are shared.
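The mechanics can be sketched with a toy linear model. Each simulated company computes a gradient on its own data, clips it, adds Gaussian noise, and sends only that noisy gradient to the server, which averages the contributions and updates the shared weights. The client data, learning rate, clipping norm, and noise level below are hypothetical; in particular, the noise is not calibrated to a formal privacy budget, which a real deployment would need to do.

```python
import math
import random

def local_gradient(weights, data):
    """Mean-squared-error gradient of a linear model on one company's private data."""
    grad = [0.0] * len(weights)
    for features, target in data:
        err = sum(w * f for w, f in zip(weights, features)) - target
        for i, f in enumerate(features):
            grad[i] += 2.0 * err * f / len(data)
    return grad

def privatize(grad, clip_norm, noise_std, rng):
    """Clip the gradient's L2 norm, then add Gaussian noise before sharing."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [g * scale + rng.gauss(0.0, noise_std) for g in grad]

def federated_round(weights, client_datasets, lr, clip_norm, noise_std, rng):
    """One round: clients share noisy gradients; the server averages and steps."""
    shared = [privatize(local_gradient(weights, d), clip_norm, noise_std, rng)
              for d in client_datasets]
    avg = [sum(col) / len(shared) for col in zip(*shared)]
    return [w - lr * g for w, g in zip(weights, avg)]

# Synthetic stand-in for three companies' private datasets (illustrative only).
rng = random.Random(42)
true_w = [2.0, -1.0]

def make_client(n):
    data = []
    for _ in range(n):
        f = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
        t = sum(w * x for w, x in zip(true_w, f)) + rng.gauss(0.0, 0.05)
        data.append((f, t))
    return data

clients = [make_client(20) for _ in range(3)]

weights = [0.0, 0.0]
for _ in range(200):
    weights = federated_round(weights, clients, lr=0.1, clip_norm=1.0,
                              noise_std=0.05, rng=rng)
print("learned weights:", [round(w, 2) for w in weights])
```

The raw records never leave `local_gradient`; only the clipped, noised gradient crosses the company boundary. Larger noise strengthens the privacy protection but slows or degrades convergence, which is the central trade-off a consortium would have to negotiate.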

Several precompetitive consortia, including the Pistoia Alliance and specific IMI-funded European research programs, have explored federated learning frameworks for drug discovery. The generic sector has not yet developed an equivalent institutional infrastructure, but the technology is mature enough that a well-organized industry consortium could deploy a federated PBPK-ML training program across five or six participating companies within a two-year development period, producing models with substantially larger effective training datasets than any single participant could assemble.

Personalized Generics and On-Demand Dosage Form Manufacturing

Pharmaceutical 3D printing using fused deposition modeling (FDM) or binder jetting of drug-loaded polymeric matrices has advanced from laboratory curiosity to commercial reality. The FDA approved the first 3D-printed drug product (Spritam, levetiracetam) in 2015, and clinical trials of personalized 3D-printed dosage forms for pediatric patients and for patients requiring unusual dose combinations are underway at several academic medical centers.

The relevance for generic companies is longer-term but strategically important. As 3D printing enables on-demand production of dosage forms with customized dose and release profiles, the economic model shifts from high-volume batch manufacturing to decentralized, patient-specific production. ML plays two roles in this model: designing the formulation parameters that produce a target release profile for a specific dose level (the optimization task), and predicting the PK outcomes for a patient with specific genetic and physiological characteristics from a proposed personalized formulation (the PBPK prediction task).

Generic companies that build expertise in ML-driven formulation optimization and personalized PK prediction now will hold the technical foundation for the personalized manufacturing model if and when it becomes commercially viable at scale. The IP strategy for personalized dosage form manufacturing, where the ‘product’ is defined by a patient-specific algorithm output rather than a fixed formulation, will also require new thinking about how to protect and license that algorithmic IP.

AI-Native Biosimilar Development

Biosimilars, which are biological medicines that are highly similar to approved reference biologics, represent the next frontier of the generic development model. The global biosimilar market was valued at approximately $35 billion in 2024 and is projected to exceed $100 billion by 2030. The patent cliff in biologics, with major monoclonal antibodies including adalimumab (Humira), ustekinumab (Stelara), pembrolizumab (Keytruda), and several other checkpoint inhibitors and interleukin inhibitors facing biosimilar competition in the 2024-2030 window, is the largest wave of generic opportunity in pharmaceutical history.

Biosimilar development differs from small-molecule generic development in technical depth but not in analytical logic. The demonstration of ‘no clinically meaningful differences’ from the reference biologic requires extensive analytical characterization of the biosimilar’s structural attributes (primary sequence, glycosylation profile, higher-order structure), functional attributes (binding affinity, effector function), and PK/PD profile. Each of these characterization domains generates rich, high-dimensional data that is amenable to ML analysis.

Specifically, ML models trained on mass spectrometry data can predict the glycan composition of a candidate biosimilar from LC-MS/MS spectra, identify post-translational modification hotspots that differ from the reference, and assess the structural risk of immunogenicity from predicted exposed epitope surfaces. PBPK-ML models for biologics use a different architecture than those for small molecules, incorporating subcutaneous absorption modeling with lymphatic transport and FcRn-mediated half-life extension, but the general approach of ML-optimized parameter estimation and virtual population simulation applies directly.

The IP landscape for biosimilars is, if anything, more complex than for small-molecule generics. The Biologics Price Competition and Innovation Act (BPCIA) establishes its own ‘patent dance’ process, distinct from Paragraph IV, and innovator companies have built patent thickets of extraordinary density around their reference biologics. AbbVie, for example, has maintained a patent estate for Humira that, at its peak, included more than 100 U.S. patents covering formulation, device, manufacturing process, and method-of-use claims. Applying NLP-based patent scoring to these thickets, to identify the most vulnerable patents and the most efficient challenge strategy, is an immediate application of the Phase 1 ML tools described earlier.

Key Takeaways: Technology Roadmap

Self-driving formulation laboratories, where Bayesian optimization algorithms and robotic platforms run in a closed development loop, will compress early formulation development timelines by 40% to 60% as GMP-compatible automated systems mature between 2025 and 2027. Federated learning across industry consortia can give PBPK-ML models an effective training dataset substantially larger than any single company can assemble, without requiring proprietary data sharing. Personalized 3D-printed generics require ML formulation optimization and PBPK prediction as their foundational technical capabilities, making the investment in those capabilities now relevant to the personalized medicine business model that emerges over the following decade. Biosimilar development represents the largest generic opportunity in pharmaceutical history, and ML applications in structural characterization, glycan prediction, and biosimilar patent thicket analysis are directly applicable from the small-molecule ML playbook with appropriate biologic-specific adaptations.

Master Key Takeaways

The fundamental economics of the ANDA market, built around first-to-file exclusivity and the brutal cost of BE study failure, create a specific and quantifiable ROI case for ML deployment at every stage of generic drug development. ML’s contribution is not speed for its own sake; it is risk-adjusted return improvement.

Patent intelligence powered by NLP and litigation prediction models converts Paragraph IV strategy from a qualitative legal judgment into a probability-weighted financial decision. IP valuation methods grounded in ML-generated price erosion forecasts produce more accurate inputs for settlement negotiations, at-risk launch decisions, and portfolio capital allocation.

Predictive formulation development, combining QSPR-based API characterization, CNN-driven deformulation, and Bayesian optimization of excipient design space, reduces physical batch counts by 50% to 70% in validated applications. The formulation patent as an evergreening instrument is best understood through an NLP-scored vulnerability map of the entire secondary patent stack protecting any ANDA target.

ML-enhanced PBPK modeling, combining ML-calibrated parameter estimation with virtual population simulation and in silico 90% CI pre-calculation, is the most financially impactful ML application in the generic development cycle given the cost of a failed BE study. Autoencoder-based real-time anomaly detection in clinical trial conduct and in manufacturing shifts quality assurance from retrospective to prospective.