Executive Summary

The pharmaceutical industry runs on a contradiction that has defined the last 25 years. The science has never been more precise. CRISPR can edit a single nucleotide. ADC technology delivers cytotoxins with receptor-level targeting. Proteomics platforms map disease pathways that were invisible a decade ago. Yet by every efficiency metric, drug development has gotten harder and more expensive. This is Eroom’s Law in practice: the cost to bring one approved asset to market reached $2.23 billion in 2024, and the internal rate of return across top biopharma companies recovered only to a fragile 5.9% that year, barely clearing the weighted average cost of capital for most large-cap firms.

That contradiction is the business context for this report.

The industry is now in the middle of a transition from portfolio administration to algorithmic portfolio engineering. Spreadsheet-based stage-gate reviews, consensus-driven probability estimates, and siloed IP data are being replaced by machine learning models, dynamic rNPV engines, and real-time patent intelligence feeds. The question for portfolio managers, BD executives, and institutional investors is no longer whether AI will reshape pharmaceutical asset strategy. It already has. The question is how fast, at what cost, and who captures the return.

This guide covers the full landscape: the broken economics that made AI adoption inevitable, the specific mechanics of how ML models improve probability-of-success estimation, the IP valuation frameworks that underpin every asset’s worth, the technology roadmaps for lifecycle management tactics like the formulation switch and 505(b)(2) strategy, the real-world case studies that show quantifiable ROI, and the risks, from algorithmic bias to FDA regulatory uncertainty, that could erode those returns. It is written for teams that already understand the basics and need a decision-grade reference.

Section 1: The Broken Economics of Traditional Pharma Portfolio Management

1.1 Eroom’s Law: The Productivity Collapse

The drug development productivity problem is structural, not cyclical. In 1950, research and development at a major pharmaceutical company could expect to generate one new approved drug per $1 billion spent (inflation-adjusted). By 2010, that figure had fallen to roughly one approval per $10 billion. The trend has not reversed. Eroom’s Law, the inverse of Moore’s Law in semiconductors, describes this compounding inefficiency precisely. As biological understanding has deepened, the cost of regulatory-grade proof of efficacy and safety has grown faster than the tools to generate it.

The hit rate across the full discovery-to-approval funnel remains at roughly one in ten thousand molecules. For every molecule that reaches approval, roughly 9,999 do not. The capital consumed by those failures is the hidden cost embedded in the $2.23 billion average cost per approval. That average, published in Deloitte’s annual R&D returns analysis, includes the full portfolio cost of failure, not just the direct spend on the approved asset.

For portfolio managers, this means that asset selection is not a background function. It is the primary determinant of financial performance, ahead of clinical execution, pricing, and manufacturing efficiency.

1.2 Stage-Gate Reviews: Quarterly Theater

The dominant governance structure for pharma portfolio management is the stage-gate review. A cross-functional committee meets quarterly or annually to evaluate each asset against predefined criteria: safety profile, efficacy signals, commercial potential, and competitive landscape. Projects that meet criteria advance. Projects that miss criteria are theoretically killed.

The word ‘theoretically’ carries weight here. In practice, stage-gate reviews are subject to well-documented cognitive failures. The sunk cost fallacy is the most damaging: teams that have spent $50 million on a Phase II asset, or executives who championed its acquisition, carry enormous psychological investment in its continuation. A negative data signal gets rationalized. A competitor’s setback gets overweighted as validation. The portfolio accumulates ‘zombie assets,’ molecules that are not succeeding but are not being killed because no one in the room has the political standing to pull the plug.

The data on discontinuation rates confirms this. Between 2018 and 2024, top biopharma companies discontinued an average of 21-22% of their programs annually. That rate, while substantial, masks a timing problem: historically, the majority of those discontinuations happened in Phase II or Phase III, after the most expensive developmental work had already been done. Approximately 50% of discontinued assets now terminate in Phase I, an improvement driven partly by early AI screening, but the industry still carries billions in late-stage failures that were statistically predictable earlier.

1.3 The Data Silo Problem: A Structural Intelligence Failure

A large pharma organization typically runs three separate intelligence silos, and the walls between them are thick.

R&D owns clinical efficacy and safety data, mechanism-of-action characterization, and biomarker programs. Legal owns patent term calculations, freedom-to-operate analyses, and litigation tracking. Commercial owns payer sentiment, prescriber segmentation, and competitive revenue models. Each silo uses different data systems, different vocabulary, and different time horizons. R&D thinks in five-to-ten-year development cycles. Commercial thinks in twelve-to-eighteen-month launch windows. Legal operates on patent term expiration dates that extend decades into the future.

The practical consequence: a clinical team can advance an asset through a Phase II trial based on strong efficacy data, entirely unaware that a competitor has constructed a patent thicket around the relevant formulation space, or that the commercial team’s payer analysis shows the therapeutic class becoming overcrowded by the asset’s projected 2031 launch date. The failure mode does not arise from incompetence in any single silo. It arises from the absence of integrated intelligence.

AI-driven portfolio management is, at its core, an answer to this structural intelligence failure.

1.4 The Static Valuation Trap

Risk-adjusted net present value (rNPV) is the industry’s standard financial model for asset valuation. It discounts projected cash flows by the cumulative probability of clinical and regulatory success at each development stage. The formula is well-established. The problem is the inputs.

Traditional rNPV models use static probability estimates drawn from phase-level historical benchmarks: Phase I oncology has a 5% success rate, Phase II CNS has a 9% success rate, and so on. These benchmarks treat all assets within a phase as statistically equivalent. A first-in-class molecule with validated biomarker selection and a robust mechanism-of-action hypothesis gets the same 5% Phase I success probability as a fifth entrant in an overcrowded indication with no differentiation. The model ignores asset-specific biology, trial design quality, and competitive context.

The sensitivity of rNPV to input assumptions makes this dangerous. A two-percentage-point shift in the probability of Phase III success can move a $500 million rNPV to $1.2 billion or to $150 million, depending on the direction. Peak sales window assumptions carry similar leverage. When the inputs are generic benchmarks rather than asset-specific predictions, the model produces a range of valuations that is too wide to be actionable and too optimistic to be honest.

Key Takeaways: Section 1

The case for AI in portfolio management rests on three concrete failures of the status quo: the compounding cost of failure under Eroom’s Law, the cognitive biases embedded in stage-gate governance that delay necessary kills, and the structurally siloed intelligence architecture that allows late-stage surprises. Static rNPV models amplify all three failures by using generic probability inputs that are insensitive to asset-specific biology and competitive context. The AI stack does not fix these problems by adding complexity. It fixes them by integrating data and improving the accuracy of probability inputs.

Section 2: How AI Rebuilds the rNPV Model from First Principles

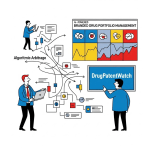

2.1 The Digital Twin Portfolio

The most useful conceptual shift in AI-driven portfolio management is from the snapshot to the continuous model. A traditional rNPV is a snapshot: it reflects conditions at the moment it was built, updated perhaps once per year. An AI-driven ‘digital twin’ is a live representation of the portfolio that updates valuations as new data arrives, from a competitor’s trial read-out to a Paragraph IV filing by a generic manufacturer to a change in the CMS reimbursement framework for the relevant therapeutic area.

This is not just a speed improvement. It changes the decision architecture. When a portfolio digital twin updates in near-real time, portfolio managers can respond to competitive events within days rather than waiting for the next quarterly review. A competitor Phase III failure that validates your mechanism of action triggers an immediate upward revision to your asset’s rNPV and a revised estimate of time-to-peak-sales. A new patent filing by a competitor in your formulation space triggers an immediate freedom-to-operate flag. The portfolio becomes responsive rather than static.

The technical infrastructure for a digital twin includes a unified data lake that ingests internal clinical data, external patent filings via APIs such as DrugPatentWatch, competitive intelligence from clinical trial registries (ClinicalTrials.gov, EudraCT, CTRI), real-world evidence, and regulatory submission data. The AI layer sits on top of this lake, running models that continuously update probability estimates and financial projections.

2.2 Machine Learning-Driven Probability of Success Estimation

The core formula for rNPV is:

$$\mathrm{rNPV} = \sum_{t} \frac{E(CF_t) \times P(S_t)}{(1 + r)^{t}}$$

where E(CF_t) is the expected cash flow at time t, P(S_t) is the cumulative probability of success at time t, and r is the discount rate (WACC). AI’s most direct impact on this formula is the improvement of P(S_t) from a phase-level benchmark to an asset-specific prediction.
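The formula can be sketched in a few lines of Python. This is a minimal illustration, not a production valuation engine: the `CashFlow` class, the `rnpv` function, and every number in the example asset are hypothetical, and cash flows are assumed to land at the end of each year.

```python
from dataclasses import dataclass


@dataclass
class CashFlow:
    year: int           # t, in years from today
    expected_cf: float  # E(CF_t), in $ millions (negative for costs)
    p_success: float    # P(S_t), cumulative probability of reaching year t


def rnpv(cash_flows, discount_rate):
    """Sum of E(CF_t) * P(S_t) / (1 + r)^t over all periods."""
    return sum(
        cf.expected_cf * cf.p_success / (1.0 + discount_rate) ** cf.year
        for cf in cash_flows
    )


# Hypothetical asset with illustrative numbers only.
flows = [
    CashFlow(year=0, expected_cf=-100.0, p_success=1.0),
    CashFlow(year=1, expected_cf=200.0, p_success=0.5),
    CashFlow(year=2, expected_cf=400.0, p_success=0.25),
]
value = rnpv(flows, discount_rate=0.10)
print(f"rNPV: ${value:.1f}M")
```

Swapping a benchmark `p_success` for an asset-specific estimate changes nothing in the arithmetic. It changes only the quality of the input, which is the point of the sections that follow.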

Machine learning models trained on historical clinical trial databases can achieve 70-89% accuracy in predicting trial outcomes, compared to a 56-70% baseline for phase-level historical averages. The accuracy gain comes from the model’s ability to incorporate variables that the benchmark ignores: the drug’s chemical scaffold and its similarity to previously approved or failed molecules, the trial design (adaptive vs. fixed, biomarker-selected vs. unselected patient population), the inclusion and exclusion criteria, the historical performance of clinical sites selected for the trial, the recruitment rate trajectory, and the biological mechanism’s prior validation in the indication.

This asset-specific probability estimation changes the portfolio’s appearance immediately. Assets that look like average Phase I candidates by benchmark may score in the top quartile by AI-derived probability, or the bottom decile. The portfolio sorts differently, and the priority sequence for resource allocation changes.

2.3 Beyond Monte Carlo: Replacing Subjective Distributions

Monte Carlo simulation has been a staple of pharma financial modeling for two decades. It addresses the single-point estimate problem by running thousands of scenarios using probability distributions rather than point values. The problem is the distributions themselves. If a financial modeler draws a triangular distribution for peak sales with a most-likely value of $2 billion, a minimum of $500 million, and a maximum of $5 billion based on personal judgment and analogous product comparisons, the Monte Carlo output is a sophisticated representation of that person’s subjective opinion, not an objective risk analysis.

AI replaces subjective distribution assumptions with data-derived distributions. For peak sales, the model analyzes the actual uptake trajectories of analogous approved products, adjusted for competitive intensity, formulary positioning, payer dynamics, and real-world adherence data. The resulting distribution reflects the empirical dispersion observed in comparable launches, not an analyst’s range estimate. The Monte Carlo runs on empirical inputs and produces a more accurate risk profile.
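The difference between the two approaches can be sketched as follows. The triangular parameters come from the example above; the list of analog peak sales is invented for illustration, and simple resampling from it stands in for the far richer adjustment a real model would apply for competitive intensity and payer dynamics.

```python
import random
import statistics

# Hypothetical peak sales of analogous launches, in $ billions.
ANALOG_PEAK_SALES = [0.4, 0.7, 0.9, 1.1, 1.6, 2.3, 3.8]


def subjective_draw(rng):
    # Analyst's triangular distribution; random.triangular takes (low, high, mode).
    return rng.triangular(0.5, 5.0, 2.0)


def empirical_draw(rng):
    # Bootstrap: resample one observed analog outcome.
    return rng.choice(ANALOG_PEAK_SALES)


def run_monte_carlo(draw, n_runs=10_000, seed=0):
    rng = random.Random(seed)
    return [draw(rng) for _ in range(n_runs)]


subjective = run_monte_carlo(subjective_draw)
empirical = run_monte_carlo(empirical_draw)
print("subjective median:", round(statistics.median(subjective), 2))
print("empirical median:", round(statistics.median(empirical), 2))
```

The simulation machinery is identical in both runs. Only the source of the input distribution differs, which is why the quality of the output depends entirely on where that distribution came from.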

For probability of success, the AI model generates a posterior distribution conditioned on all available asset-specific data, updated as new information arrives. If a Phase I interim analysis shows a clean safety profile and early pharmacodynamic signals, the Phase II success probability shifts upward in real time, and the downstream rNPV revisions cascade automatically.
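One simple way to picture this kind of updating is a conjugate Beta-Binomial model. The sketch below is a deliberately simplified stand-in, not a description of how any vendor's model works: it assumes interim signals can be counted as independent favorable or unfavorable observations, and the prior and the signal counts are hypothetical.

```python
def beta_update(alpha, beta, favorable, unfavorable):
    """Posterior Beta parameters after observing new interim signals."""
    return alpha + favorable, beta + unfavorable


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# Hypothetical prior centered on a 30% phase-level benchmark.
prior = (3.0, 7.0)
# Hypothetical interim read-out: four favorable signals, one unfavorable.
posterior = beta_update(*prior, favorable=4, unfavorable=1)

print("prior mean:", round(beta_mean(*prior), 3))
print("posterior mean:", round(beta_mean(*posterior), 3))
```

The prior plays the role of the phase-level benchmark, and each new data point moves the estimate away from it. A weaker prior (smaller `alpha + beta`) lets asset-specific evidence dominate sooner.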

2.4 Commercial Uptake Modeling: Beyond the S-Curve

Traditional commercial modeling uses a standard S-curve for product launch: slow initial uptake, an inflection point as adoption builds, a plateau at peak sales, and an eventual decline. The specific parameters of the curve are estimated by analogy to comparable products. This approach misses several variables that determine whether a launch follows its projected curve.
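For reference, the uptake portion of a traditional S-curve is often approximated with a logistic function. The sketch below assumes that form; the function name and all parameter values are hypothetical, and the post-peak decline is left out for brevity.

```python
import math


def s_curve_sales(year, peak_sales, midpoint_year, steepness):
    """Logistic uptake: annual sales approach peak_sales as years pass."""
    return peak_sales / (1.0 + math.exp(-steepness * (year - midpoint_year)))


# Hypothetical launch: $2B peak, inflection in year 4.
for year in range(0, 9, 2):
    sales = s_curve_sales(year, peak_sales=2.0, midpoint_year=4.0, steepness=1.0)
    print(f"year {year}: ${sales:.2f}B")
```

Three parameters describe the whole launch, and all three are typically set by analogy. Nothing in the curve knows about formulary access timing or competitor launches, which is the gap the AI-driven approach targets.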

AI-driven commercial modeling incorporates payer coverage lag by analyzing the formulary access timeline for drugs in the same therapeutic area, the historical willingness of PBMs to grant preferred tier status to first-in-class vs. follow-on products, physician prescribing behavior segmented by practice type and referral pattern, and competitor launch timing relative to the projected launch date. The result is a launch trajectory that reflects the specific market conditions the asset will face, not the average trajectory of all prior launches in the class.

A top-10 pharma company demonstrated this during a recent product launch. AI-driven analysis of electronic medical records and prescription data identified a segment of physicians, previously below the threshold for sales force attention, who showed disproportionate prescribing potential based on referral network analysis. Reallocating 40% of sales calls to this segment produced a 60% increase in new prescriptions in the months following the reallocation.

2.5 Algorithmic Alpha: AI in Business Development

Intelligencia AI published case study data showing that an AI-curated portfolio of early-stage biotech companies achieved a 60% return with a Sharpe ratio of 1.83, compared to a 17% return for the XBI biotech ETF benchmark over the same period. The mechanism: the AI identified assets where the market had priced in high risk based on surface-level signals (small company, pre-clinical stage, niche indication), but the underlying biological and clinical data indicated a materially higher probability of success than the market consensus implied.
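For readers less familiar with the metric, the Sharpe ratio divides average excess return by the volatility of that excess return. The sketch below shows the unannualized calculation on invented return data. The case study's measurement period and risk-free rate are not stated here, so nothing in this example reproduces the 1.83 figure.

```python
import statistics


def sharpe_ratio(period_returns, risk_free_rate_per_period):
    """Mean excess return divided by the sample standard deviation of excess returns."""
    excess = [r - risk_free_rate_per_period for r in period_returns]
    return statistics.mean(excess) / statistics.stdev(excess)


# Hypothetical per-period returns and risk-free rate.
returns = [0.02, 0.04, 0.06]
print(round(sharpe_ratio(returns, risk_free_rate_per_period=0.01), 2))
```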

For BD teams at large pharma, this ‘algorithmic alpha’ approach is replacing manual due diligence sequencing. AI screens hundreds of assets simultaneously, scoring each on probability of technical and regulatory success. The BD team receives a ranked shortlist rather than a broad landscape, and they can begin competitive analysis on high-probability targets before smaller companies have completed their manual reviews of the same assets. In deal-making, speed is pricing power.

Investment Strategy Note: Section 2

Institutional investors evaluating pharma companies should treat AI integration into portfolio decision-making as a valuation input, not a qualitative feature. Companies that demonstrate AI-driven probability of success estimates with documented accuracy metrics are operating with systematically better rNPV models. Their late-stage pipeline valuations carry less model risk than companies still using phase-level benchmarks. The premium associated with AI adoption is measurable: earlier kills reduce capital burned on failures, better probability estimates reduce the premium that equity markets assign to late-stage uncertainty, and improved commercial modeling tightens the confidence interval on peak sales projections.

Section 3: Patent Intelligence as a Core Financial Asset: The Exclusivity Stack

3.1 The Patent as the Primary Revenue Protection Unit

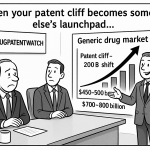

In pharmaceutical portfolio management, a patent is not background legal infrastructure. It is the primary financial asset underlying product revenue. The loss of exclusivity (LOE) event, when composition-of-matter or formulation patent protection expires and generic or biosimilar competition enters, is the single most consequential event in a branded drug’s commercial life. Revenue declines of 80-90% within 24 months of LOE are typical in small-molecule markets where multiple generic manufacturers enter simultaneously. In biologic markets, LOE dynamics are slower but structurally similar once biosimilar interchangeability designations are established.

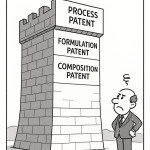

For a portfolio manager or investor, the ‘exclusivity stack’ of each asset in the portfolio is a direct proxy for the revenue protection timeline. The exclusivity stack describes all layers of IP and regulatory exclusivity that protect revenue: the composition-of-matter patent (the crown jewel, protecting the active pharmaceutical ingredient structure), method-of-use patents (protecting specific approved indications), formulation patents (protecting delivery mechanisms, dosing regimens, or formulation techniques), and regulatory exclusivities (five-year new chemical entity exclusivity, three-year new clinical investigation exclusivity, seven-year orphan drug exclusivity, and six-month pediatric exclusivity extensions).

Each layer can push the LOE date, and with it generic entry, further out, and each layer is subject to challenge. Understanding the stack, and modeling the probability that each layer survives challenge, is essential to accurate rNPV construction.
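A minimal sketch of that modeling step follows. It assumes, for simplicity, that layers survive or fall independently and that the effective LOE date is the latest expiry among surviving layers. The `ExclusivityLayer` class, the dates, and the survival probabilities are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ExclusivityLayer:
    name: str
    expiry: date
    p_survive: float  # assumed probability the layer withstands challenge


def loe_distribution(layers):
    """Return ([(expiry, probability)], p_unprotected).

    Each probability is the chance that this layer's expiry is the effective
    LOE date: the layer survives and every later-expiring layer falls.
    """
    outcomes = []
    p_later_layers_fail = 1.0
    for layer in sorted(layers, key=lambda l: l.expiry, reverse=True):
        outcomes.append((layer.expiry, p_later_layers_fail * layer.p_survive))
        p_later_layers_fail *= 1.0 - layer.p_survive
    return outcomes, p_later_layers_fail


stack = [
    ExclusivityLayer("NCE exclusivity", date(2028, 6, 1), 1.0),
    ExclusivityLayer("composition of matter", date(2031, 3, 1), 0.9),
    ExclusivityLayer("method of use", date(2033, 9, 1), 0.6),
    ExclusivityLayer("formulation", date(2036, 1, 1), 0.4),
]
outcomes, p_unprotected = loe_distribution(stack)
for expiry, prob in outcomes:
    print(expiry.isoformat(), round(prob, 3))
```

The output is a distribution over LOE dates rather than a single headline date, which is the form the rNPV model needs. Real stacks violate the independence assumption (a single litigation can take down several related patents), so a production model would need correlated survival probabilities.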

3.2 IP Valuation Framework: Assigning Monetary Value to Each Patent Layer

The IP valuation of a pharmaceutical patent is not the same as the market capitalization of the drug’s revenue stream. It is the incremental revenue protection that the patent provides, discounted back to present value, probability-adjusted for litigation risk.

Composition-of-matter patents carry the highest valuation because they protect the molecule itself. A successful Paragraph IV challenge to a composition-of-matter patent removes the broadest layer of the stack and leaves only the narrower method-of-use, formulation, and regulatory layers standing. For a drug generating $3 billion in annual revenue with eight years remaining on its composition-of-matter patent, the rNPV contribution of that single patent, at a 10% discount rate and a 95% probability of surviving challenge, approaches $14-16 billion. The same asset with a 30% probability of Paragraph IV settlement (generic entry in three years rather than eight) has a composition-of-matter patent worth $5-7 billion, a delta that defines the stakes of the litigation.
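The first figure can be checked with a simple annuity calculation. The sketch below treats the $3 billion as a flat end-of-year cash flow, which is a simplifying assumption (revenue is not cash flow, and real revenue curves are not flat); the function name is hypothetical.

```python
def annuity_pv(annual_cash, years, rate):
    """Present value of a flat end-of-year cash stream."""
    return sum(annual_cash / (1.0 + rate) ** t for t in range(1, years + 1))


full_term_value = annuity_pv(annual_cash=3.0, years=8, rate=0.10)  # $ billions
risk_adjusted_value = 0.95 * full_term_value

print(f"full-term PV:      ${full_term_value:.1f}B")
print(f"95% risk-adjusted: ${risk_adjusted_value:.1f}B")
```

Under these assumptions the risk-adjusted value comes out near $15 billion, inside the range quoted above.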

Method-of-use patents protect specific approved indications and are weaker than composition-of-matter claims. They are relevant primarily for lifecycle management: if the composition-of-matter patent has expired but a method-of-use patent covers the drug’s primary indication, it creates a legal barrier to generic entry in that indication, though generic manufacturers can still market their product for approved uses that fall outside the method claim, and physicians remain free to prescribe it off-label. The financial value of a method-of-use patent post-LOE is typically 20-40% of the composition-of-matter patent’s value, depending on the indication’s revenue concentration and the specificity of the claim language.

Formulation patents protect extended-release mechanisms, transdermal delivery systems, nanoparticle formulations, and other delivery innovations. Their financial value depends on whether the formulation provides a clinically meaningful benefit that payers and physicians will actively choose over the generic alternative. If a once-daily extended-release formulation improves adherence and clinical outcomes relative to a three-times-daily immediate-release formulation, payers may preferentially cover the branded formulation even after the composition-of-matter patent expires. If the formulation provides only convenience without measurable outcomes benefit, payers will switch to the generic.

3.3 DrugPatentWatch as an IP Intelligence Feed

Building an accurate exclusivity stack requires granular, real-time IP data at a level of specificity that general legal databases and manual patent searches cannot reliably provide. DrugPatentWatch aggregates the FDA’s Orange Book patent listings, Paragraph IV certification filings, patent term extension applications, pediatric exclusivity grants, and litigation outcomes into a structured database accessible via API.

For an AI portfolio model, the DrugPatentWatch API feed provides the data inputs for several critical functions. The model can automatically calculate the LOE date for each asset in the portfolio, incorporating all relevant exclusivity layers. It can flag new Paragraph IV filings against portfolio assets within days of the filing, triggering an immediate rNPV revision based on the statistical probability of settlement versus litigation victory. It can track competitor formulation patent filings, alerting the portfolio team to potential new competitive barriers in relevant therapeutic areas. It can monitor 505(b)(2) application filings, which signal that a competitor is pursuing a reformulation or new indication strategy for a molecule in the same class.

The practical workflow in an AI-powered portfolio management system integrates this data feed directly into the portfolio digital twin. A Paragraph IV filing against Asset X triggers an automatic flag that forces a revaluation of that asset’s revenue tail, a competitive analysis of the filing entity’s litigation track record, and a probability-weighted scenario analysis of settlement timing. The portfolio manager receives this analysis within 24-48 hours of the filing, not at the next quarterly review.
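The probability-weighted scenario step can be sketched as follows. The three scenarios, their probabilities, and the years of remaining exclusivity are hypothetical placeholders; in the workflow described above they would come from the filer's litigation track record and historical settlement patterns. Revenue is again treated as a flat end-of-year stream.

```python
def pv_revenue(annual_revenue, years, rate):
    """Present value of flat end-of-year revenue over the exclusivity period."""
    return sum(annual_revenue / (1.0 + rate) ** t for t in range(1, years + 1))


def revalue_revenue_tail(annual_revenue, rate, scenarios):
    """Probability-weighted PV across (label, probability, years) scenarios."""
    total_p = sum(p for _, p, _ in scenarios)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * pv_revenue(annual_revenue, years, rate) for _, p, years in scenarios)


# Hypothetical outcomes following a Paragraph IV filing against Asset X.
SCENARIOS = [
    ("brand prevails at trial", 0.5, 8),
    ("settlement with licensed entry", 0.3, 5),
    ("generic prevails", 0.2, 3),
]

before = pv_revenue(3.0, 8, 0.10)
after = revalue_revenue_tail(3.0, 0.10, SCENARIOS)
print(f"pre-filing PV:  ${before:.1f}B")
print(f"post-filing PV: ${after:.1f}B")
```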

3.4 Detecting Patent Thickets and White Space via NLP

Natural language processing applied to patent claim language has created a new category of competitive intelligence: automated patent landscape analysis. Patent claims are written in dense, precise legal language designed to be as broad as the prior art allows. A single patent can contain dozens of independent and dependent claims, each defining a slightly different version of the protected invention. Manually mapping the claim landscape across thousands of patents in a therapeutic area requires teams of patent attorneys and months of work.

NLP models trained on patent claim language can perform this mapping in hours. The output is a visual representation of the patent landscape: dense clusters of overlapping claims identify patent thickets where competitive entry is legally constrained, and areas with sparse or narrow claims identify ‘white space’ where a new entrant is likely to face fewer freedom-to-operate constraints.
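The clustering idea can be illustrated with a crude token-overlap measure. The sketch below uses Jaccard similarity on word sets as a stand-in for a trained NLP model, which would use learned embeddings and claim parsing instead; the claim texts and the 0.5 threshold are invented for illustration.

```python
import re
from itertools import combinations


def tokens(claim):
    """Lowercased word set for one claim."""
    return set(re.findall(r"[a-z0-9]+", claim.lower()))


def jaccard(claim_a, claim_b):
    """Shared tokens divided by total distinct tokens."""
    a, b = tokens(claim_a), tokens(claim_b)
    return len(a & b) / len(a | b) if a | b else 0.0


def overlapping_pairs(claims, threshold):
    """Pairs of claim ids whose similarity meets the threshold."""
    return [
        (id_a, id_b)
        for (id_a, text_a), (id_b, text_b) in combinations(claims.items(), 2)
        if jaccard(text_a, text_b) >= threshold
    ]


# Hypothetical claim texts.
claims = {
    "A": "extended release tablet comprising compound X and a polymer matrix",
    "B": "extended release tablet comprising compound X and an osmotic pump",
    "C": "transdermal patch delivering compound Y",
}
print(overlapping_pairs(claims, threshold=0.5))
```

Claims A and B overlap heavily and would sit in the same cluster, while C stands apart. Surface similarity is not legal claim scope, so any white space a tool like this suggests still needs attorney review.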

For R&D strategy, this matters early. A company exploring a new formulation in the GLP-1 agonist space, for example, can run an NLP patent landscape analysis via DrugPatentWatch before investing in wet-lab synthesis. If the analysis identifies a broad Markush structure claim filed by Novo Nordisk or Eli Lilly that encompasses the proposed molecular scaffold, the project either needs a pivot to a structure outside the claim scope or a clearance opinion before proceeding. Identifying this constraint in week two of a project costs almost nothing. Identifying it in week 52 after synthesis and preliminary pharmacokinetic studies costs millions and likely ends the project.

Key Takeaways: Section 3

Every asset in a pharmaceutical portfolio has an underlying IP valuation that is distinct from its revenue valuation and should be modeled separately. The exclusivity stack, composed of composition-of-matter, method-of-use, formulation, and regulatory exclusivity layers, defines the revenue protection timeline and is the primary input to the loss-of-exclusivity date calculation. Real-time API data from platforms like DrugPatentWatch is a prerequisite for accurate stack modeling in an AI-driven portfolio system. NLP-based patent landscape analysis can identify freedom-to-operate constraints before R&D capital is committed, a capability that eliminates one of the most expensive failure modes in early-stage pipeline management.

Section 4: IP Valuation Deep-Dive: Composition, Method, Formulation, and Pediatric Extensions

4.1 Composition-of-Matter Patents: Claim Scope and Litigation Risk

A composition-of-matter patent covering the active pharmaceutical ingredient is the broadest and most valuable IP protection available to a drug developer. The scope of this patent, specifically whether the claims cover the compound broadly (including salt forms, isomers, and prodrugs) or narrowly (only the specific disclosed form), determines its defensibility against Paragraph IV challenges.

Broad composition-of-matter claims are harder to design around, but they are easier to attack on validity grounds. The broader the claim, the more prior art falls within its scope, which raises the risk of anticipation and obviousness challenges, and the harder it is to show that the disclosure supports the full scope claimed. The litigation risk distribution for a given composition-of-matter patent is a function of its prosecution history, the claim breadth, the inventor’s disclosure of the full scope, and the strength of the obviousness position.

AI-driven litigation risk modeling draws on databases of Paragraph IV outcomes by district court, claim type, and opposing counsel. The District of Delaware and the District of New Jersey handle the majority of Hatch-Waxman litigation. Historical data on which courts have granted preliminary injunctions, which specific defense arguments have succeeded, and which law firms have the best track records in specific patent claim categories allows the AI to assign probability scores to litigation outcomes that go well beyond generic ‘likely to succeed or fail’ assessments.

4.2 Method-of-Use Patents and the Carve-Out Problem

Method-of-use patents claim the drug for a specific therapeutic use, not the drug itself. They typically expire after the composition-of-matter patent in a lifecycle management strategy, extending revenue protection into the generic competition phase. But they come with a structural vulnerability: the ‘skinny label’ carve-out.

A generic manufacturer can file an ANDA that explicitly carves out the patented indication from its approved labeling. The generic can then be marketed for other approved uses, and physicians can prescribe it off-label for the patented indication. Courts have split on whether this carve-out strategy constitutes infringement of the method-of-use patent. GSK’s case involving Coreg (carvedilol) established that induced infringement can occur even with a skinny label if the generic’s marketing efforts encourage the patented use. But subsequent cases have narrowed this interpretation. The financial modeling of a method-of-use patent must incorporate the probability of skinny label carve-out success and its impact on revenue retention in the protected indication.

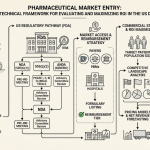

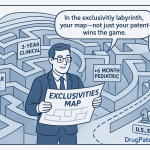

4.3 Formulation Patents: The 505(b)(2) Roadmap

Formulation patents protect specific drug delivery innovations and are the workhorse of the lifecycle management strategy. The 505(b)(2) regulatory pathway allows a drug developer to file an NDA for a new formulation, new indication, or new dosing regimen by referencing existing safety and efficacy data from an already-approved drug, combined with new bridging studies. This pathway is faster and less expensive than a full 505(b)(1) NDA and produces a new patent-eligible product.

The technology roadmap for formulation switching follows a documented sequence that AI can map for any asset approaching LOE. The typical playbook runs in this order: extended-release conversion (converting an immediate-release multiple-daily-dose product to once-daily dosing via matrix technology, osmotic pump, or coated bead systems), abuse-deterrent formulation (relevant for Schedule II-IV controlled substances), fixed-dose combination (pairing the molecule with a complementary agent to improve outcomes and create a new patent position), and nanoparticle or prodrug reformulation (improving bioavailability, reducing side effects, or enabling new routes of administration).

Each step on this roadmap generates a new formulation patent with a 20-year term from filing, a new regulatory exclusivity period from the 505(b)(2) approval, and a new commercial product that can be positioned above the generic version of the original formulation on payer formularies.

DrugPatentWatch’s monitoring of 505(b)(2) filings is strategically critical for both branded companies planning lifecycle management and generic companies evaluating formulation challenge opportunities. A branded company that detects a competitor’s 505(b)(2) filing in its therapeutic area six months before that competitor achieves approval can accelerate its own reformulation program and preemptively capture the extended-release market position. A generic company that identifies an open formulation window, meaning a drug approaching composition-of-matter LOE with no active formulation patents, can begin 505(b)(2) development and potentially enter the market before branded reformulation strategies are in place.

4.4 Pediatric Exclusivity: The Undervalued Revenue Extender

Pediatric exclusivity grants six months of additional market exclusivity, attached to all existing patents and regulatory exclusivity periods, in exchange for completing FDA-requested pediatric clinical studies. For a drug with $3 billion in annual revenue, six months of additional exclusivity protects roughly $1.5 billion in revenue, at an incremental development cost that is small by comparison if the pediatric studies are well-designed and efficiently executed.

Despite this favorable return profile, pediatric exclusivity is systematically undervalued in traditional rNPV models because it is treated as a binary outcome (granted or not granted) rather than modeled probabilistically. AI models trained on FDA Written Request issuance history, study completion rates, and pediatric exclusivity grant patterns can assign precise probability scores to pediatric exclusivity outcomes, enabling more accurate revenue tail modeling.
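The probabilistic treatment amounts to an expected-value calculation. The sketch below is undiscounted and uses revenue rather than profit, both simplifications; the function name, the 80% grant probability, and the $50 million study cost are hypothetical.

```python
def pediatric_exclusivity_value(annual_revenue, p_grant, study_cost, months=6):
    """Expected protected revenue minus study cost (same units as annual_revenue)."""
    protected_revenue = annual_revenue * months / 12.0
    return p_grant * protected_revenue - study_cost


# Binary view: exclusivity assumed granted, studies assumed free ($ billions).
print(pediatric_exclusivity_value(3.0, p_grant=1.0, study_cost=0.0))

# Probabilistic view with hypothetical inputs.
print(round(pediatric_exclusivity_value(3.0, p_grant=0.8, study_cost=0.05), 2))
```

The study cost is incurred whether or not exclusivity is granted, which is why it sits outside the probability weighting.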

The timing of a Written Request is also an AI-tractable problem. Companies that seek a Written Request early in the development program, when Phase III enrollment is generating the patient population and infrastructure needed for concurrent pediatric studies, complete studies faster and capture the exclusivity grant closer to the original LOE date. Delaying pediatric study initiation until the composition-of-matter patent is approaching expiry compresses the timeline for completing studies before LOE, reducing the probability of a timely grant.

Investment Strategy Note: Section 4

The IP valuation of a drug portfolio should be disaggregated by patent layer for accurate financial modeling. Analysts who use only LOE date as the IP variable are missing the probability-weighted value of method-of-use and formulation patent layers, the skinny label litigation risk embedded in method-of-use protection, and the pediatric exclusivity probability. A company with a well-managed exclusivity stack, one that has filed method-of-use patents covering its primary indications, has active formulation development programs targeting 505(b)(2) approval, and has completed pediatric studies for its lead asset, carries materially lower LOE cliff risk than its headline LOE date suggests.

Section 5: Generative AI and the Shift from Wargaming to Continuous Simulation

5.1 Traditional Wargaming: An Honest Assessment of Its Limits

Competitive wargaming, in its traditional form, assembles executives in a two-day off-site. Teams are assigned competitor roles and asked to develop the best strategies for their assigned companies. The exercise produces scenario plans and a shared understanding of competitor motivations that are genuinely useful for strategy teams. The problem is structural: the exercise is expensive, it happens once or twice a year, and it is populated by people who work for your company and think like your company. The ‘competitor teams’ are playing themselves with different PowerPoint templates.

The output is a snapshot of competitive dynamics at a specific point in time, presented as a set of discrete scenarios with no ongoing update mechanism. By the time the wargaming report circulates, a competitor may have already executed the strategy that the exercise identified as their most likely move.

5.2 The GenAI Wargaming Architecture

Generative AI and large language models (LLMs) replace this episodic exercise with a continuous simulation capability. The Johns Hopkins Applied Physics Laboratory’s GenWar facility demonstrated this approach in a defense context, using LLM agents to run autonomous wargaming simulations that model competitor behavior at scale. The same architecture applies directly to pharmaceutical competitive strategy.

In a pharma implementation, each competitor receives an AI agent trained on that company’s public data: annual reports, 10-K and 10-Q filings, earnings call transcripts, press releases, clinical trial registry data, patent filing history, CEO statements, and investor day presentations. The agent develops a behavioral model of the competitor that reflects their stated priorities, historical decision patterns, financial constraints, and pipeline composition.

The portfolio strategy team can then run a therapeutic area simulation: what happens to competitive dynamics in the NASH space when Asset X achieves FDA approval in Q3 2027? The AI runs thousands of iterations. In 40% of scenarios, the leading competitor initiates an aggressive contracting strategy with PBMs to lock in formulary access. In 30% of scenarios, the competitor accelerates their own NASH Phase III program. In 20% of scenarios, the competitor files a combination patent that creates a new exclusivity position around the most effective treatment regimen. In 10% of scenarios, the competitor pivots to a different indication. The portfolio team gets a probability distribution over competitive responses, not a single scenario.
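The aggregation step, turning many simulation runs into a probability distribution, can be sketched without any LLM at all. In the toy version below a weighted random draw stands in for each agent run; the response labels and propensities mirror the illustrative split above and are not outputs of any real system.

```python
import random
from collections import Counter

# Hypothetical response propensities standing in for a competitor agent.
# In a real system each draw would be the outcome of one full agent run.
RESPONSES = {
    "PBM contracting push": 0.40,
    "Accelerate own Phase III": 0.30,
    "File combination patent": 0.20,
    "Pivot to another indication": 0.10,
}

def simulate_competitor_responses(n_runs, seed=0):
    """Run n_runs simulated scenarios and return the share of each response."""
    rng = random.Random(seed)
    names = list(RESPONSES)
    weights = list(RESPONSES.values())
    draws = rng.choices(names, weights=weights, k=n_runs)
    counts = Counter(draws)
    return {name: counts[name] / n_runs for name in names}

distribution = simulate_competitor_responses(n_runs=10_000)
for name, share in distribution.items():
    print(f"{name:<30} {share:.1%}")
```

The design point is that the team consumes the tallied shares, not any single run, so the output stays meaningful as the underlying agents are retrained on new filings and transcripts.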

This ‘second- and third-order thinking’ capability is the core strategic value. Standard scenario planning identifies first-order moves. AI simulation maps the full game tree of move and counter-move, including non-obvious outcomes like the rebate war scenario where an aggressive pricing strategy destroys value for both competitors, or the value-based contracting equilibrium where a more measured pricing approach leads to a stable duopoly.

5.3 Real-Time Competitive Intelligence: The Launch Room

The traditional ‘War Room’ for product launches ran on static dashboards: weekly prescription data, payer coverage tracking, and field force reports. GenAI replaces this with a continuous synthesis engine that processes real-time signals, from prescriber sentiment analysis on medical education platforms, to payer formulary updates, to competitor publication activity in the relevant journals.

The ‘Launch Room’ concept is already being deployed at major pharma companies. The AI synthesizes incoming data streams and surfaces actionable signals: a spike in off-label prescribing of a competitor’s product in the primary indication suggests a coverage gap the sales force should address. A pattern of formulary rejections at a specific PBM suggests a pricing or outcomes data problem. A cluster of adverse event reports on a medical education platform signals a tolerability concern that may be suppressing adoption in a specific physician segment.

The output is a prioritized action queue for the launch team, not a data dump. The human team’s role shifts from data synthesis to decision execution.

Key Takeaways: Section 5

GenAI wargaming replaces the annual off-site with a continuous simulation capability that models thousands of competitive scenarios in real time. The output is a probability distribution over competitor responses, not a discrete set of scenarios, which enables genuinely probabilistic strategy formulation. The Launch Room application of this technology gives commercial teams real-time competitive intelligence during the most capital-intensive period in a drug’s commercial life, the first 18-24 months post-launch. Companies that deploy this capability gain a measurable speed advantage in tactical response to competitive moves.

Section 6: Real-World Deployments: AbbVie, Sanofi, J&J, and Intelligencia AI

6.1 AbbVie: ARCH and the Knowledge Graph

AbbVie’s R&D Convergence Hub (ARCH) is among the most developed internal AI portfolio management systems at a large pharma company. ARCH integrates data from more than 200 internal and external sources into a unified knowledge graph. The graph encodes relationships between genetic variants, molecular targets, clinical outcomes, competitive assets, and patent positions.

The practical IP significance of ARCH: the system allows AbbVie’s portfolio teams to query for new indication opportunities for existing assets based on genetic and clinical relationships, effectively automating hypothesis generation for lifecycle management. An asset with strong mechanism-of-action data in one indication can be algorithmically flagged as a candidate for a related indication based on shared pathway biology, with the system simultaneously checking the patent landscape for the new indication and assessing the competitive position.

AbbVie’s IP strategy around its leading assets has reflected this sophistication. Humira (adalimumab) accumulated over 130 patents covering the compound, formulations, dosing regimens, methods of use in over 10 different indications, and manufacturing processes, a strategy that delayed U.S. biosimilar market entry until January 2023 despite the composition-of-matter patent expiring years earlier. Whether AI played any part in building the Humira patent estate matters less than the observation that the systematic, layered approach to IP protection that ARCH now enables algorithmically was executed manually, at great expense, over two decades. AI makes this approach systematic rather than exceptional.

6.2 AbbVie IP Valuation: The Skyrizi and Rinvoq Transition

AbbVie’s post-Humira portfolio transition centers on Skyrizi (risankizumab) and Rinvoq (upadacitinib), which together are expected to generate revenues exceeding Humira’s peak. Skyrizi’s composition-of-matter patent runs until approximately 2031, with method-of-use patents in psoriasis, psoriatic arthritis, Crohn’s disease, and ulcerative colitis extending protection further.

The rNPV of AbbVie’s post-Humira IP estate is not simply the sum of Skyrizi and Rinvoq’s projected revenues. It includes the lifecycle management optionality: new indications for Rinvoq in atopic dermatitis and ankylosing spondylitis have generated additional method-of-use patents and three-year new clinical investigation exclusivity periods. Formulation work on both products has produced new patents. The pediatric exclusivity programs for both are pending. A properly disaggregated IP valuation of AbbVie’s current portfolio, taking each of these layers into account, produces a significantly higher risk-adjusted IP value than a naive LOE-date-based model.

6.3 Sanofi: Pipeline Pruning and AI-Driven Discontinuation

Sanofi’s use of AI in portfolio management has been most visible in its discontinuation strategy. CEO Paul Hudson’s commitment to reducing the pipeline to high-probability, high-value assets was paired with AI screening tools that evaluate PTRS (Probability of Technical and Regulatory Success) for each program at regular intervals. The result was a series of high-profile discontinuations in therapeutic areas where Sanofi’s assets showed below-threshold PTRS scores and limited competitive differentiation.

The financial rationale: a program discontinued in Phase I at a cost of approximately $30 million versus a program discontinued in Phase III at a cost of $300 million to $1 billion represents at least a factor-of-ten improvement in capital efficiency. Sanofi’s AI-driven approach to early discontinuation freed capital for higher-probability programs and for its Dupixent (dupilumab) lifecycle management work, where new indications in COPD and alopecia areata have generated multiple new method-of-use patents.

Dupixent’s IP valuation is complex. The original composition-of-matter patent, which Sanofi co-owns with Regeneron, was filed in the early 2000s. The accumulation of method-of-use patents across 12+ approved or late-stage indications has created an exclusivity stack that extends well beyond the composition-of-matter expiry. Modeling this stack accurately requires tracking each indication’s patent and regulatory exclusivity separately, a task that is impractical manually and is exactly the use case for AI-integrated DrugPatentWatch data feeds.

6.4 Johnson & Johnson: AI in Clinical Trial Design

J&J’s AI work spans target identification through commercial launch, but its most mature application relevant to portfolio management is in clinical trial design and patient selection. Deep learning algorithms pre-screen biomarkers and tissue samples to identify the patient subpopulations most likely to respond to a given mechanism of action. This biomarker-driven enrollment strategy reduces the probability of Phase II failure by concentrating the trial in the most responsive population.

The portfolio management implication: assets that might show a 45% success probability with unselected patient enrollment may show a 70% success probability with AI-optimized biomarker selection. The rNPV difference at those two probability levels, for a drug targeting a $2 billion peak sales market, is several hundred million dollars. The clinical trial design decision, an R&D function, becomes a direct input to the portfolio financial model.
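A back-of-the-envelope version of this comparison is shown below. It is a sketch under stated assumptions: the two success probabilities come from the text, while the value conditional on success and the remaining trial cost are hypothetical figures chosen only to illustrate the order of magnitude.

```python
# Sketch: rNPV at two probability-of-success levels.
# NPV_IF_SUCCESS and REMAINING_COST are hypothetical assumptions.

def rnpv(p_success, npv_if_success, remaining_cost):
    """Risk-adjusted NPV: success-weighted value minus cost still to be spent."""
    return p_success * npv_if_success - remaining_cost

NPV_IF_SUCCESS = 1_500_000_000  # assumed value of a $2B peak-sales drug if approved
REMAINING_COST = 150_000_000    # assumed remaining development spend

unselected = rnpv(0.45, NPV_IF_SUCCESS, REMAINING_COST)
biomarker_selected = rnpv(0.70, NPV_IF_SUCCESS, REMAINING_COST)
uplift = biomarker_selected - unselected

print(f"rNPV, unselected enrollment: ${unselected / 1e6:,.0f}M")
print(f"rNPV, biomarker-selected:    ${biomarker_selected / 1e6:,.0f}M")
print(f"Difference:                  ${uplift / 1e6:,.0f}M")
```

The sketch holds the value conditional on success constant. In practice, biomarker selection may also narrow the addressable population, so a fuller model would adjust peak sales alongside the probability.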

J&J’s Janssen unit has also used AI for Paragraph IV strategy. Natural language processing tools that map competitor patent claim language can identify the most vulnerable claims in a competitor’s patent estate, informing the decision to file a Paragraph IV certification versus a 505(b)(2) new formulation strategy. This claim vulnerability analysis is AI-tractable because it involves pattern recognition across thousands of patent prosecution records.

6.5 Intelligencia AI and ZS Associates: Oncology Asset Screening

The most precisely documented case study on AI speed in portfolio management comes from a collaboration between Intelligencia AI and ZS Associates. The task was asset screening for a global pharmaceutical company evaluating entry into the Chinese oncology market. The starting universe was over 1,000 assets. The required output was a ranked shortlist with PTRS assessments for each asset.

Manual due diligence on 1,000 assets, using standard BD team methodology, typically takes four to six months. The AI-driven screening process completed the list reduction to 20 high-priority targets in eight weeks. The speed advantage is not merely about efficiency. In cross-border licensing or acquisition negotiations, the party that completes due diligence first has first-mover pricing power. A BD team that can table a term sheet six weeks before competitors have completed their landscape analysis is negotiating from a structurally different position.

The methodology used by Intelligencia’s model evaluates PTRS at the asset level based on mechanism-of-action validation, clinical trial design quality, sponsor track record, competitive landscape in the indication, and regulatory pathway clarity. The model’s training dataset consists of thousands of historical trial outcomes across mechanism, indication, and sponsor type. This is asset-specific probability estimation operating at BD-team scale and speed.

Section 7: Lifecycle Management and the Formulation-Switch Technology Roadmap

7.1 The Full Lifecycle Management Technology Stack

Lifecycle management (LCM) is the set of strategies a branded pharmaceutical company uses to extend the revenue contribution of an asset beyond its original composition-of-matter LOE date. The toolkit is well-established, but the sequencing and selection of the right strategy for a given asset requires integration of clinical, regulatory, patent, and commercial data that AI handles better than human teams working across silos.

The full LCM technology stack, from earliest to latest application in a drug’s lifecycle, runs as follows. The first strategy, solid-form patenting, involves filing new patent applications covering salt forms, polymorphs, enantiomers, and hydrates of the API, all of which may be patent-eligible even when the parent compound is known, if the specific form was not previously described. Because these are new filings rather than continuations of the parent, they carry their own 20-year terms and generate composition-of-matter-adjacent coverage that extends beyond the parent patent. Polymorph patents have been challenged with mixed success in litigation; a polymorph patent that claims a form with meaningfully superior pharmaceutical properties (better stability, higher bioavailability, lower hygroscopicity) is substantially more defensible than one that claims a form with identical properties to the original.

Extended-release conversion is typically the next step, and is the most commercially reliable LCM play when a drug has meaningful adherence or compliance challenges with its current dosing regimen. The clinical evidence requirement for a 505(b)(2) NDA covering a new formulation is a bridging pharmacokinetic study comparing exposure between the new formulation and the original (relative bioavailability rather than strict bioequivalence, since the release profile differs by design), plus any additional studies the FDA requests based on the product class. The resulting new formulation patent runs 20 years from filing, typically adding eight to twelve years of protection relative to the original composition-of-matter expiry.

Fixed-dose combination development comes later in the lifecycle, when the clinical community has established practice patterns showing that the original drug is frequently co-administered with a second agent. A fixed-dose combination that improves patient convenience, reduces pill burden, and improves outcomes over separate administration generates both a new patent position and a strong commercial argument for payer coverage of the branded combination at a premium over the generic components. The Entresto (sacubitril/valsartan) combination is the most cited example: Novartis combined two existing compound classes into a fixed-dose combination with a novel mechanism, generating a new patent estate and a product that commands premium pricing in the heart failure market.

7.2 AI’s Role in Formulation Opportunity Identification

AI applied to the LCM decision involves three separate analyses running simultaneously. The patent expiration timeline analysis, drawing on DrugPatentWatch data, calculates the current exclusivity stack for the asset and identifies when the composition-of-matter patent expires, when pediatric exclusivity runs out, and which formulation patents are already in place. The clinical landscape analysis evaluates whether the drug’s mechanism and patient population are well-suited for extended-release conversion or combination development, drawing on adherence data from electronic medical records and patient support programs. The competitive patent analysis scans competitor formulation patent filings in the same therapeutic area for signs that a rival is pursuing the same LCM strategy.

The output of this integrated analysis is a prioritized LCM roadmap with timing recommendations. The AI does not replace the formulation scientist or the clinical strategist. It provides the integrated picture that tells the team which opportunity is largest, which is most defensible from an IP perspective, and which needs to be executed by a specific date to pre-empt a competitor.

7.3 Evergreening: The Regulatory and Ethical Boundary

The term ‘evergreening’ describes the practice of accumulating incremental patents on modifications to an existing drug to extend market exclusivity beyond what the underlying innovation justifies. It is a real strategy, it is widely documented, and it attracts significant regulatory and policy scrutiny. Understanding where LCM ends and evergreening begins matters for IP teams, R&D leads, and investors.

The practical position in patent law: a formulation or method-of-use patent that claims a modification with no unexpected or meaningful benefit is vulnerable to an obviousness challenge and may be invalidated in litigation. A polymorph patent that claims a form with no improved pharmaceutical properties over the original is the classic evergreening example. A formulation patent that claims an extended-release version with documented improvements in adherence and clinical outcomes is defensible LCM.

The FDA’s exclusivity framework creates an implicit test for clinical significance: three-year new clinical investigation exclusivity is granted only when new clinical studies were necessary to gain approval of the formulation change. If the change required clinical trials, it passed a threshold of meaningful differentiation by the FDA’s standard. If it did not require clinical trials, three-year exclusivity is unavailable, protection rests on the patent alone, and that patent is more vulnerable to challenge.

AI can model the litigation risk of any given LCM patent based on claim language, prosecution history, and prior outcomes in analogous cases. This converts the evergreening risk from a qualitative concern into a quantified litigation probability that feeds directly into the IP valuation.

Section 8: Early Termination Economics: The ‘Fail Fast’ Calculus

8.1 The Cost Architecture of Drug Development

The case for AI-driven early termination rests on the cost architecture of drug development. Preclinical work and target identification typically cost $5-10 million per asset. Phase I safety trials run approximately $25-50 million. Phase II proof-of-concept trials run $100-200 million. Phase III confirmatory trials run $300 million to over $1 billion depending on indication, patient population, and trial duration. The total cost of a program that advances to Phase III and fails there is therefore roughly $430 million to $1.3 billion, inclusive of preclinical spend.

If an asset is going to fail, and the majority of assets are going to fail, the financially optimal outcome is to identify and act on that failure signal as early as possible. Each stage earlier that a non-viable asset is identified and terminated cuts the capital consumed by that failure severalfold, and a Phase I kill costs more than an order of magnitude less than a Phase III failure.
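The stage-by-stage exposure follows directly from summing the cost ranges quoted above. The short sketch below does that arithmetic; it takes the Phase III upper bound as $1 billion even though the text notes it can run higher, so the top-end total is a floor rather than a cap.

```python
# Sketch: cumulative capital consumed if a program fails at the end of each stage.
# Ranges are the per-stage figures quoted in the text, in millions of dollars.
STAGE_COSTS_MUSD = [
    ("Preclinical", 5, 10),
    ("Phase I", 25, 50),
    ("Phase II", 100, 200),
    ("Phase III", 300, 1000),  # text says "over $1 billion"; 1000 used as a floor
]

def cumulative_cost_at_failure(stage_costs):
    """Return (stage, low_total, high_total) for failure at the end of each stage."""
    rows = []
    low_total = high_total = 0
    for stage, low, high in stage_costs:
        low_total += low
        high_total += high
        rows.append((stage, low_total, high_total))
    return rows

sunk = cumulative_cost_at_failure(STAGE_COSTS_MUSD)
for stage, low, high in sunk:
    print(f"Fail after {stage:<12} ${low:>4}M to ${high:>5}M")
```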

8.2 AI as the Objective Arbiter: The PTRS Example

The PTRS case study published by Intelligencia AI illustrates this dynamic precisely. An internal pharma team evaluated a potential acquisition target and assigned it a 45% PTRS score. The internal team was bullish, as internal teams typically are when evaluating acquisitions they have championed. The company then ran an independent AI assessment using Intelligencia’s model, trained on thousands of historical trial outcomes with similar characteristics. The AI assigned an 8% PTRS, placing the asset in the bottom quartile.

The company paused. Eventually, it declined to proceed. The target asset subsequently failed in clinical trials. The company avoided a write-off that, based on the stage of development the asset was in at the time of evaluation, would have been in the hundreds of millions of dollars.

The key mechanism is not that the AI was smarter than the internal team. It is that the AI had no sunk cost bias. It had not spent three years working on the molecule. It did not have a career investment in the acquisition. It evaluated the asset’s characteristics against its training set and returned a probability. The human team had the same underlying data but processed it through a filter of motivated reasoning.

Explainable AI (XAI) is the interface that makes this objective assessment actionable in organizational contexts. A black-box model that returns ‘8% PTRS’ with no supporting rationale will be dismissed by the project team. An XAI model that returns ‘8% PTRS because: (1) the mechanism of action has an 18% prior success rate in this indication, (2) biomarker selection criteria exclude 40% of the responsive population, and (3) two prior assets with similar chemical scaffold characteristics failed at this stage for off-target toxicity reasons’ is credible and discussable. The team can engage with the specific risk factors and decide whether they have mitigating evidence for each.

8.3 Industry-Wide Termination Efficiency

The industry-wide adoption of AI in early-stage screening is projected to save $25-54 billion annually in R&D costs by eliminating late-stage terminations that could have been identified earlier. This projection, from the Information Technology and Innovation Foundation, is consistent with McKinsey’s estimate that AI across the pharmaceutical value chain could generate up to $100 billion in annual value.

The behavioral shift is already visible in the discontinuation data. The proportion of program discontinuations occurring in Phase I rose from roughly 35% in 2015 to approximately 50% by 2024. The direction of the trend is consistent with earlier screening efficiency, though the magnitude of the AI contribution versus other factors is difficult to isolate. Companies that have deployed AI-based PTRS screening consistently report earlier kill decisions and measurably improved Phase II success rates in the surviving pipeline, which is the expected outcome if the screening is functioning correctly.

Section 9: Manufacturing Intelligence: AI in the P&L

9.1 Predictive Maintenance and Yield Optimization

AI’s portfolio management impact is not confined to the R&D and IP functions. It has direct P&L relevance in manufacturing, particularly for biologics and sterile injectables where batch failure rates and yield variability have historically been high.

A global sterile manufacturing site reported a 15% yield uplift after deploying AI-driven real-time process controls. A North American contract manufacturing organization (CMO) achieved 30-60% improvement in downstream throughput using AI-optimized purification process parameters. Across the broader manufacturing landscape, AI implementations in visual inspection, batch release, and predictive maintenance have reduced manufacturing costs by up to 31%.

For a biologic drug generating $2 billion annually with a 25% cost-of-goods-sold (COGS) ratio, a 15% yield improvement translates to approximately $75 million in annual margin uplift per asset. At a portfolio scale of 10 commercial products, this manufacturing efficiency gain alone can represent $500 million to $1 billion in annual EBITDA improvement, a figure that is not theoretical but is already being captured by early adopters.
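The arithmetic behind these figures is simple enough to check directly. The sketch below reproduces the approximation used above and, as a comparison, a slightly more conservative variant that assumes all COGS scale with the number of batches needed for the same output; that second formula is an assumption introduced here, not a figure from any cited deployment.

```python
# Sketch: annual margin uplift from a manufacturing yield improvement.

def margin_uplift(annual_revenue, cogs_ratio, yield_gain):
    """Return (simple, batch_based) estimates of annual COGS savings."""
    cogs = annual_revenue * cogs_ratio
    # Approximation used in the text: savings = COGS x yield gain.
    simple = cogs * yield_gain
    # Assumed alternative: same output from fewer batches, all COGS variable.
    batch_based = cogs * (1 - 1 / (1 + yield_gain))
    return simple, batch_based

simple, batch_based = margin_uplift(2_000_000_000, cogs_ratio=0.25, yield_gain=0.15)
portfolio_uplift = 10 * simple  # ten commercial products of similar size

print(f"Simple estimate per asset:      ${simple / 1e6:.0f}M")
print(f"Batch-based estimate per asset: ${batch_based / 1e6:.0f}M")
print(f"Ten-product portfolio (simple): ${portfolio_uplift / 1e6:.0f}M")
```

Both estimates land in the same range, and the ten-product total falls inside the $500 million to $1 billion band cited above.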

9.2 AI in Biologic Process Development: The Technology Roadmap

Biologic drug manufacturing involves upstream cell culture, downstream purification, and formulation steps that are each independently complex. AI applications across this manufacturing chain follow a technology roadmap that pharma CMC (chemistry, manufacturing, and controls) teams are executing now.

Upstream cell culture optimization uses machine learning to model the relationship between culture medium composition, temperature, pH, dissolved oxygen, and antibody productivity. Models trained on historical batch data can recommend parameter adjustments in real time to maintain cell culture conditions in the optimal productivity range, reducing batch variability and improving yields without requiring additional experimental runs. Downstream purification, specifically protein A affinity chromatography and ion exchange polishing steps, involves parameter interactions that exceed human ability to optimize manually. Reinforcement learning models have demonstrated the ability to identify non-intuitive parameter combinations that improve yield and purity simultaneously.

Formulation development for biologics involves protein stability characterization: identifying excipient combinations that maintain protein conformation and activity across the drug’s shelf life and storage conditions. AI-driven stability prediction models, trained on large excipient-protein interaction datasets, can screen thousands of formulation options computationally before committing to physical stability studies, reducing the experimental footprint of formulation development by 50-70%.

Section 10: Biosimilar Interchangeability: The AI-Assisted Competitive Threat Model

10.1 Why Biosimilar Interchangeability Changes the Risk Model

For branded biologic manufacturers, biosimilar interchangeability is the loss-of-exclusivity equivalent in the biologic space. A biosimilar designated as interchangeable by the FDA can be substituted for the reference product at the pharmacy level without physician intervention, in states that allow automatic substitution. This is a materially different competitive dynamic from simple biosimilar approval, which requires an active prescriber decision to switch.

The first biosimilar with interchangeability status in a product class typically captures a significantly higher market share than subsequent interchangeable biosimilars, because formulary managers and pharmacy benefit managers often establish single preferred biosimilar contracts. The ‘first-to-interchangeable’ position is the biologic equivalent of first-to-file in the Hatch-Waxman small-molecule framework.

AI models tracking the biosimilar pipeline can identify which products are likely to achieve interchangeability designation for a given reference biologic, estimate the timing of that designation based on the sponsor’s clinical study progress and FDA review track record, and model the market share impact on the reference product’s revenue under different scenarios of formulary access for the interchangeable biosimilar.

10.2 The Biologic Patent Thicket: An AI-Assisted Defense Analysis

Branded biologic manufacturers defend their market position against biosimilar competition through multiple IP layers, a defensive strategy that the biosimilar industry refers to as the ‘patent thicket.’ AbbVie’s Humira estate, which ultimately encompassed over 130 patents, is the most studied example. The patents covered the compound, cell line used in manufacturing, purification processes, formulation characteristics, dosing regimens, and methods of use in each indication.

AI-assisted patent thicket analysis allows biosimilar developers to identify the most vulnerable patents in a reference product’s estate and prioritize their challenges accordingly, whether through the BPCIA patent exchange and litigation process or through inter partes review. Paragraph IV certification, the Hatch-Waxman mechanism for small-molecule generics, does not apply to biosimilars. By analyzing the prosecution history of each patent, its relationship to prior art, and the outcomes of prior challenges to similar patents, the AI produces a vulnerability ranking. The biosimilar developer focuses its legal resources on the high-vulnerability patents that, if invalidated, would clear the path to earlier market entry.

For the branded manufacturer, the same analysis runs in reverse: the AI identifies the most vulnerable patents in its own estate and flags them for defensive prosecution work (adding claims, requesting reexamination, seeking continuation patents) before a biosimilar challenge forces the issue.

Section 11: Hidden Risks of Algorithmic Decision-Making

11.1 The Explainability Problem

Black-box machine learning models, particularly deep learning architectures, can achieve high predictive accuracy without producing human-interpretable explanations for their predictions. For portfolio management decisions that involve killing a flagship asset, terminating a multi-year partnership, or recommending against a billion-dollar acquisition, ‘the model says no’ is not sufficient rationale for the organizational decision.

The Explainable AI (XAI) movement addresses this directly. XAI approaches use techniques such as SHAP (SHapley Additive exPlanations) values, LIME (Local Interpretable Model-agnostic Explanations), and attention-weight inspection in transformer models to produce feature importance scores alongside predictions. A portfolio model equipped with XAI does not just predict failure; it identifies which specific features drove the prediction and at what magnitude, ideally with an uncertainty estimate attached.
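To show what a feature attribution actually computes, the sketch below calculates exact Shapley values by brute force for a toy PTRS scoring function. It does not use the SHAP library, which approximates the same quantity efficiently for real models; the scoring function, feature names, and coefficients are all hypothetical.

```python
from itertools import combinations
from math import factorial

def toy_ptrs(x):
    """Hypothetical PTRS score with one interaction term; not a real model."""
    return (0.10
            + 0.20 * x["validated_mechanism"]
            + 0.15 * x["biomarker_selected"]
            + 0.10 * x["validated_mechanism"] * x["biomarker_selected"]
            - 0.05 * x["prior_scaffold_failures"])

def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    features = list(instance)
    n = len(features)

    def value(present):
        # Features outside the coalition are held at their baseline value.
        mixed = {f: instance[f] if f in present else baseline[f] for f in features}
        return predict(mixed)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(len(others) + 1):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                coalition = set(subset)
                total += weight * (value(coalition | {f}) - value(coalition))
        phi[f] = total
    return phi

baseline = {"validated_mechanism": 0, "biomarker_selected": 0, "prior_scaffold_failures": 0}
asset = {"validated_mechanism": 1, "biomarker_selected": 1, "prior_scaffold_failures": 2}

phi = shapley_values(toy_ptrs, asset, baseline)
for feature, contribution in phi.items():
    print(f"{feature:<26} {contribution:+.2f}")
```

The attributions sum to the gap between the asset’s score and the baseline score, which is the property that lets a project team discuss each risk factor as a separate, additive contribution.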

The organizational value of XAI extends beyond the single decision. When a model’s feature importance scores are consistently driven by the same biological or clinical factors across multiple assets, it trains the portfolio team to look for those factors in every asset evaluation. The AI becomes a teacher as well as a predictor.

11.2 Algorithmic Bias in Clinical Databases

Training data for pharmaceutical ML models is drawn primarily from clinical trial records. These records are heavily skewed: trial populations have historically overrepresented white, male, and younger adult demographics. A model trained on this data learns probability estimates that may be systematically inaccurate for underrepresented demographic groups.

The practical risk: an AI model might predict Phase III success for an asset based on mechanism and biomarker data that were generated in a predominantly white patient population, then fail to flag that the drug’s metabolism differs significantly in populations with high prevalence of CYP enzyme polymorphisms common in East Asian patients. The false positive failure mode, where the model predicts success in a population where the drug actually underperforms, is the primary clinical risk. The false negative failure mode, where the model predicts failure in a diverse real-world population because of limited data on that population in the training set, leads to abandoning assets that might actually succeed.

Federated learning, a technique in which ML models are trained across multiple data sources without the data leaving its source institutions, offers a path toward more diverse training datasets without compromising patient privacy. Large pharma companies, academic medical centers, and national health systems each hold pieces of the full data picture. Federated architectures allow models to learn from all of these sources.
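The core mechanic can be illustrated with federated averaging, one common federated learning scheme, applied to a one-parameter model. This is a minimal sketch with made-up data: each site trains locally on its own records and only the updated weight, never the data, is sent back for averaging.

```python
# Sketch: federated averaging for a one-parameter model y = w * x.
# Site data are invented for illustration; real deployments add secure
# aggregation, privacy safeguards, and far larger models.

def local_update(weight, data, lr=0.01, epochs=5):
    """Run a few gradient-descent steps on one site's private data."""
    for _ in range(epochs):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight

def federated_average(global_weight, sites, rounds=50):
    """Each round: sites train locally, server averages weights by sample count."""
    for _ in range(rounds):
        updates = [(local_update(global_weight, data), len(data)) for data in sites]
        total_samples = sum(n for _, n in updates)
        global_weight = sum(w * n for w, n in updates) / total_samples
    return global_weight

# Two institutions holding different slices of the same relationship (y = 2x).
sites = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)],
]

learned_weight = federated_average(0.0, sites)
print(f"Learned weight: {learned_weight:.4f}")
```

Weighting each site’s update by its sample count keeps a large institution from being diluted by a small one, while the raw records remain at their source.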

11.3 Generative AI Hallucinations in Clinical Contexts

Large language models generate text that is statistically plausible based on their training data, not text that is factually verified. In clinical and regulatory contexts, this propensity to hallucinate (to generate confident but false statements) creates specific risks.

One documented test of a general-purpose LLM in a clinical chart review context produced an interpretation that classified a patient’s self-reported alcohol consumption as physical activity. General-purpose models also fail on medical shorthand: they may read the token ‘AS’ as ‘aortic stenosis’ rather than as the ordinary word ‘as’ when the context is ambiguous, producing incorrect clinical interpretations. These errors are not random noise; they are systematic failures at the edges of the model’s training distribution.

Purpose-built, domain-specific models, trained on clinical notes, regulatory submissions, and pharmaceutical literature with validation against ground-truth datasets, substantially reduce these hallucination risks. The distinction between general-purpose LLMs and domain-specific pharma models is not a marketing distinction. It is a clinical safety distinction with direct regulatory implications.

11.4 FDA Regulatory Uncertainty for AI-Generated Evidence

The FDA has published discussion papers and frameworks addressing AI use in drug development, but the regulatory landscape for AI-generated evidence in NDA and BLA submissions remains in development. The key open questions center on synthetic control arms, AI-generated biomarker endpoints, and adaptive trial designs informed by real-time AI analysis.

Synthetic control arms, which use historical patient data to simulate the control group in a clinical trial, have been accepted by the FDA in rare disease contexts where traditional randomized controlled trials are impractical. For common indications with large patient populations, the bar for synthetic control acceptance is higher. AI-driven adaptive trial designs, where the trial protocol is modified based on interim results analyzed by an algorithm, require pre-specified adaptation rules and prospective regulatory agreement. The FDA’s emphasis on ‘human-in-the-loop’ validation reflects the agency’s position that algorithmic decisions in clinical trial design must be supervised and auditable.

Portfolio managers and R&D leads building AI into clinical trial design need FDA engagement early, at Type B pre-IND meetings, to establish what AI-generated evidence will and will not support in a submission.

Section 12: Cultural and Organizational Barriers to AI Adoption

12.1 The ‘Drug Hunter’ Identity Problem

Pharmaceutical R&D has been built around the identity of the ‘drug hunter’: the experienced scientist or clinician who draws on years of biological intuition to identify promising molecules, read ambiguous data, and make judgment calls that algorithms cannot replicate. This identity is not entirely a myth. The intuition of skilled scientists does encode pattern recognition that training data alone cannot yet match.

The problem arises when this identity becomes a barrier to AI adoption, framing algorithmic tools as threats to professional expertise rather than amplifiers of it. The Innovator’s Dilemma operates here: established scientists with long track records have the highest organizational credibility and the most to lose from a system that challenges their judgments with data. They have the most influence over the portfolio process and the strongest incentives to maintain the status quo.

Organizations that have successfully integrated AI into portfolio management typically did not frame the technology as a replacement for expert judgment. They framed it as an independent data source that the expert team consults alongside other sources. The AI prediction is one input to the stage-gate decision, not the decision itself. This framing preserves the scientist’s role as the decision-maker while introducing the AI’s probability estimate as a check on cognitive bias.

12.2 The T-Shaped Talent Requirement

Effective AI deployment in pharmaceutical portfolio management requires people who understand both the biology and the algorithms. This ‘T-shaped’ professional model, with deep expertise in one domain (the vertical bar) and broad literacy in the other (the horizontal bar), is the talent configuration that makes AI tools actionable.

A data scientist who can build a PTRS prediction model but does not understand the biology of the mechanism cannot interpret the model’s feature importance scores in clinical context. A biologist who understands mechanism-of-action but cannot interrogate an ML model cannot identify when the model is operating outside its training distribution. The teams that produce the highest-quality AI-assisted portfolio decisions combine both profiles.
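A first-pass check for ‘operating outside its training distribution’ can be as simple as comparing an asset’s feature values against the ranges seen in training. The sketch below is deliberately crude and rests on assumptions: the feature names are hypothetical, features are numeric, and a range check is only a floor. It will not catch unusual combinations of individually normal values.

```python
from typing import Dict, List, Tuple


def training_ranges(rows: List[Dict[str, float]]) -> Dict[str, Tuple[float, float]]:
    """Record the (min, max) observed for each numeric feature in the training rows."""
    features = rows[0].keys()
    return {f: (min(r[f] for r in rows), max(r[f] for r in rows)) for f in features}


def out_of_range_features(asset: Dict[str, float],
                          ranges: Dict[str, Tuple[float, float]]) -> List[str]:
    """List features that are missing or fall outside the training range."""
    flagged = []
    for name, (low, high) in ranges.items():
        value = asset.get(name)
        if value is None or not (low <= value <= high):
            flagged.append(name)
    return flagged


# Hypothetical feature names, for illustration only.
history = [
    {"enrollment": 100, "biomarker_prevalence": 0.2},
    {"enrollment": 400, "biomarker_prevalence": 0.6},
]
candidate = {"enrollment": 250, "biomarker_prevalence": 0.9}
print(out_of_range_features(candidate, training_ranges(history)))
```

A flagged feature does not mean the prediction is wrong. It means the prediction is an extrapolation, which is exactly the judgment a biologist with data literacy is positioned to make.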

The practical implication for pharma companies: training programs that give R&D scientists enough data science literacy to participate meaningfully in AI model evaluation are more cost-effective than hiring large teams of pure data scientists and expecting them to develop biological intuition from scratch. The T is easier to build starting from the biology side.

12.3 Data Infrastructure: The Prerequisite

AI models are the visible layer of a portfolio intelligence system. The invisible prerequisite is a clean, integrated data infrastructure. Most large pharma organizations do not have one. Clinical trial data lives in proprietary EDC (electronic data capture) systems. Patent and regulatory data lives in legal databases. Market data lives in commercial intelligence platforms. Manufacturing data lives in MES (manufacturing execution systems). Each system uses different data models, different entity naming conventions, and different temporal granularities.

Building a unified data lake that connects these systems is a technology infrastructure project with a two-to-five-year timeline, significant capital expense, and complex organizational politics around data ownership and access. Departments that currently control access to their data as a source of organizational influence have structural incentives to resist centralization. This is the most common cause of failed pharma AI initiatives: not the algorithm, but the data plumbing.

Companies that address this infrastructure problem, either by building centralized data platforms or by deploying middleware that federates data access across existing systems, unlock the AI capability. Companies that try to build AI models on top of fragmented data infrastructure produce models that are only as good as the silo they were trained on.

Section 13: Investment Strategy: Positioning Around AI-Driven Portfolio Shifts

13.1 Identifying AI Maturity in Pharma Companies

For institutional investors evaluating pharma equity, AI maturity in portfolio management is a quantifiable factor that correlates with R&D efficiency metrics. The indicators to look for in earnings reports, investor presentations, and management discussions are specific rather than generic.

Companies that have deployed AI in portfolio management at scale will show a measurable increase in Phase I discontinuation rates over a multi-year period. The signal is not growth in absolute pipeline size but a shift in the distribution of terminations toward earlier phases. They will show improved Phase II success rates in the surviving pipeline, which is the expected outcome of better early screening. They will show BD activity that reflects AI-assisted asset screening, including shorter time from screening to term sheet in licensing transactions. They will have specific technology partnerships with AI platform companies, such as Intelligencia AI, Insilico Medicine, Recursion Pharmaceuticals, or Schrödinger, that indicate deployed capability rather than exploratory pilots.

Companies that show none of these signals but describe AI as a ‘key strategic priority’ in investor presentations are in the pilot phase and have not yet converted AI investment into operational efficiency.
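The first of these signals, a shift in terminations toward earlier phases, can be tracked from disclosed pipeline changes. The sketch below assumes the analyst has already compiled a list of discontinued programs with the year and phase at termination. The input format and function name are illustrative assumptions.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def phase1_termination_share(terminations: Iterable[Tuple[int, str]]) -> Dict[int, float]:
    """For each year, return the fraction of discontinued programs that were in Phase I.

    `terminations` is an iterable of (year, phase) pairs, with phase given as
    "I", "II", or "III". This layout is an assumption for illustration.
    """
    totals: Counter = Counter()
    phase1: Counter = Counter()
    for year, phase in terminations:
        totals[year] += 1
        if phase == "I":
            phase1[year] += 1
    return {year: phase1[year] / totals[year] for year in sorted(totals)}
```

A share that rises steadily across several years, while the total termination count stays roughly flat, is the pattern described above. A single-year jump is more likely a one-off pipeline cleanup.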

13.2 Patent Cliff Risk Assessment: An AI-Informed Framework

The largest near-term patent cliff risk for large-cap pharma is concentrated in a specific set of assets. Analysts who follow the LOE headlines know which drugs are at risk. What AI-informed analysis adds is a probability-weighted view of the full exclusivity stack, not just the headline composition-of-matter date.

A drug with a 2028 composition-of-matter LOE date may have method-of-use protection extending to 2032 in its primary indication, a formulation patent covering its extended-release version running to 2034, and a pediatric exclusivity grant that adds six months to each of those dates. Its effective LOE, probability-weighted across the litigation risk of each patent layer, may be 2030-2031, not 2028. The market discount for LOE risk applied to this drug based solely on the 2028 headline date overstates the actual revenue cliff.

Conversely, a drug with a 2032 composition-of-matter date may have a pattern of pre-emptive Paragraph IV filings against its formulation patents that signals aggressive generic challenger activity. Its effective LOE, accounting for settlement probability and at-risk launch probability, may be 2029-2030. The market may not have priced the accelerated cliff risk.

DrugPatentWatch data, analyzed through an AI probability model, converts these qualitative observations into quantified LOE probability distributions. Investors who have access to this level of patent stack analysis hold a pricing edge in LOE-sensitive positions.
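A minimal sketch of what a quantified LOE distribution looks like, using the first example above. All probabilities here are invented for illustration, and the model makes strong simplifying assumptions: the composition-of-matter date is treated as certain, each later patent either holds to expiry or falls entirely, outcomes are independent, and any surviving patent blocks generic entry until it expires. Dates are decimal years, with 0.5 added for the six-month pediatric extension.

```python
from collections import defaultdict
from itertools import product
from typing import Dict, List, Tuple


def loe_distribution(base_expiry: float,
                     layers: List[Tuple[float, float]]) -> Dict[float, float]:
    """Return {loe_date: probability} over all hold/fall outcomes of the later patents.

    `layers` holds (expiry, probability_patent_holds) for each patent that
    expires after the base composition-of-matter date.
    """
    dist: Dict[float, float] = defaultdict(float)
    for outcome in product([True, False], repeat=len(layers)):
        prob = 1.0
        loe = base_expiry
        for (expiry, p_hold), holds in zip(layers, outcome):
            prob *= p_hold if holds else 1.0 - p_hold
            if holds:
                loe = max(loe, expiry)  # latest surviving patent sets the LOE
        dist[loe] += prob
    return dict(dist)


def expected_loe(dist: Dict[float, float]) -> float:
    """Probability-weighted mean LOE date."""
    return sum(date * p for date, p in dist.items())


# Hypothetical hold probabilities: 40% for method-of-use, 20% for formulation.
stack = loe_distribution(2028.5, [(2032.5, 0.4), (2034.5, 0.2)])
print(stack, expected_loe(stack))
```

With these assumed inputs the expected LOE comes out at roughly 2031, inside the 2030-2031 range described above and well past the 2028 headline date. The distribution, not the mean, is what belongs in the valuation model: here there is still a 48% chance that exclusivity ends at the composition-of-matter date.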

13.3 BD Activity as a Proxy for AI Screening Capability

Business development deal flow patterns reveal AI adoption in portfolio management more directly than any public disclosure. Companies that have deployed AI-assisted BD screening show deal activity concentrated in specific therapeutic areas where the AI has identified high-probability assets, with faster transaction timelines and higher specificity in the due diligence requests they make of target companies.

A pharma company that executes twelve licensing transactions in a 24-month period, with above-average Phase II success rates across the licensed assets and a median time from first contact to LOI of six weeks rather than the industry-typical twelve to sixteen weeks, is very likely operating with AI-assisted BD screening. The returns from that screening capability compound: better-selected assets generate higher rNPV, which funds more BD activity, which provides more training data for the AI model.

Key Takeaways: Section 13

AI maturity in portfolio management is visible to investors through specific operational metrics: earlier-phase discontinuation rates, improved Phase II survival rates in the surviving pipeline, and BD transaction speed and selectivity. Patent cliff risk analysis requires disaggregated exclusivity stack modeling, not just headline LOE dates, and AI-assisted probability weighting across litigation scenarios. Companies that treat the DrugPatentWatch exclusivity stack as a core financial data input, alongside clinical and commercial data, carry materially lower LOE valuation model risk than peers who rely on headline patent expiration dates.

Section 14: Master FAQ

Q: How does AI improve Probability of Technical and Regulatory Success (PTRS) estimation beyond phase-level benchmarks?

A: Phase-level benchmarks treat all assets within a given phase as statistically equivalent. An ML model trained on historical trial outcomes uses asset-specific features, including mechanism-of-action class, trial design quality, biomarker selection criteria, chemical scaffold similarity to prior successes and failures, clinical site performance history, and patient population demographics, to generate a PTRS specific to the asset. Published studies show accuracy improvements of 14-19 percentage points over phase benchmarks, a difference that translates to hundreds of millions of dollars in rNPV precision for a single asset.

Q: Can AI reliably predict Paragraph IV litigation outcomes?

A: No model predicts a judge’s ruling with certainty, but AI can quantify the litigation risk. By analyzing thousands of historical Paragraph IV cases, the model identifies the success rates associated with specific claim types, court jurisdictions, defending law firms, and patent claim breadth characteristics. The output is a probability estimate (for example, 65% probability of settlement with generic entry in 2029, 25% probability of full-term patent victory, 10% probability of loss) that feeds directly into the rNPV exclusivity stack model.
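To show how such an estimate feeds a valuation, the sketch below probability-weights the discounted revenue earned before generic entry under each scenario. Only the 65/25/10 split and the 2029 settlement date come from the example above. The entry years for the other two scenarios, the flat revenue figure, and the discount rate are hypothetical. Post-LOE revenue is assumed to be zero, so this is an exclusivity-weighted value rather than a full rNPV.

```python
from typing import List, Tuple


def discounted_revenue(annual_revenue: float, start_year: int,
                       loe_year: int, rate: float) -> float:
    """Present value at start_year of flat annual revenue earned in each year
    from start_year up to, but not including, loe_year."""
    return sum(
        annual_revenue / (1.0 + rate) ** (year - start_year)
        for year in range(start_year, loe_year)
    )


def scenario_weighted_value(scenarios: List[Tuple[float, int]], annual_revenue: float,
                            start_year: int, rate: float) -> float:
    """Weight each scenario's discounted revenue by its probability.

    `scenarios` holds (probability, generic_entry_year) pairs summing to 1.
    """
    total_probability = sum(p for p, _ in scenarios)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(
        p * discounted_revenue(annual_revenue, start_year, loe_year, rate)
        for p, loe_year in scenarios
    )


scenarios = [
    (0.65, 2029),  # settlement with generic entry in 2029 (from the example)
    (0.25, 2032),  # full-term victory; 2032 expiry is a hypothetical assumption
    (0.10, 2027),  # loss with early entry; 2027 is a hypothetical assumption
]
# Hypothetical inputs: $1,000M flat annual revenue, valued from 2026 at 10%.
print(scenario_weighted_value(scenarios, 1000.0, 2026, 0.10))
```

Re-running the calculation whenever the litigation model updates its probabilities is what turns a static LOE assumption into a live input.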

Q: What is synthetic data, and when is it credible for regulatory submissions?

A: Synthetic data refers to computationally generated datasets that mimic the statistical properties of real patient data without containing identifiable records. In clinical development, the related ‘synthetic control arm’ uses historical patient data to model the control group in a trial, reducing or eliminating the need for a concurrently randomized control group. The FDA has accepted synthetic control arms for rare disease submissions where randomized enrollment is impractical. For common indications, the bar for acceptance is higher, and pre-submission alignment with the FDA on the validation methodology is required. For internal portfolio modeling and scenario planning, synthetic data is reliable when generated from high-quality source datasets and validated against held-out historical outcomes.

Q: How does the DrugPatentWatch API integrate into an AI portfolio management system?

A: The API delivers structured patent data, including composition-of-matter patent terms, Paragraph IV filing notifications, patent term extension applications, pediatric exclusivity grants, and litigation status, directly into the portfolio digital twin. The AI model queries this feed to update LOE date calculations automatically when new filings occur, to trigger rNPV revisions when Paragraph IV challenges are filed, to flag competitor 505(b)(2) activity in relevant therapeutic areas, and to monitor the exclusivity stack of every asset in the portfolio at a granularity that manual review cannot maintain.

Q: What is biosimilar interchangeability, and why does it matter for biologic portfolio risk?

A: Biosimilar interchangeability is an FDA designation indicating that a biosimilar can be substituted for the reference biologic at the pharmacy level without the prescriber’s intervention, in states that allow automatic substitution. It represents a materially higher competitive threat than basic biosimilar approval because it removes the prescriber as a gatekeeper for brand-to-biosimilar switching. Portfolio models for biologic assets must distinguish between the LOE date for basic biosimilar approval and the potentially later but commercially more disruptive date of first interchangeability designation. AI models tracking biosimilar pipeline development can estimate the probability and timing of interchangeability designations for each reference biologic, enabling more accurate revenue tail modeling.

Q: What does ‘T-shaped talent’ mean in the context of pharma AI deployment?

A: The T-shape metaphor describes professionals with deep expertise in one domain (the vertical stroke) and broad competency in a second domain (the horizontal stroke). In pharmaceutical AI, the ideal profile is a scientist or portfolio manager with deep domain knowledge in biology, chemistry, or clinical medicine who has developed sufficient data science literacy to interrogate AI model outputs, identify training data limitations, and interpret feature importance scores in domain context. This profile is more effective in an AI-integrated portfolio team than a pure data scientist who lacks biological intuition, because the domain knowledge is needed to validate model outputs, not just to generate them.

Q: Is AI replacing portfolio managers and BD executives?