Introduction: The $2 Billion Data Problem

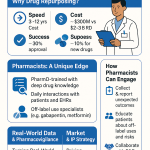

Start with the math. A new drug costs roughly $2 billion and a decade to reach market. Of every 20,000 to 30,000 compounds explored during early discovery, one gets approved. About 10% of drugs that enter Phase I clinical trials will earn regulatory clearance. The rest fail, and they fail expensively.

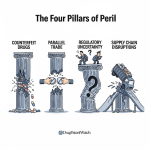

That attrition rate is not purely a scientific problem. A substantial share of clinical failures traces back to decisions made years earlier with incomplete or poorly integrated data. Compounds advance into expensive Phase II and Phase III trials carrying undetected toxicity signals, metabolic liabilities, or IP encumbrances that a rigorous data review would have surfaced in preclinical work. The financial damage from a single late-stage clinical failure can exceed $800 million in sunk development costs alone, according to estimates published in the Journal of Health Economics. That number does not include opportunity cost.

Chemical databases are the primary instrument for preventing that outcome. They are not digital filing cabinets. They are the operational and strategic infrastructure through which R&D organizations identify promising compounds, map competitive IP landscapes, predict ADMET liabilities, design synthesis routes, and justify portfolio decisions to boards and investors. In 2025, the global cheminformatics market was valued at approximately $5 billion and is forecast to reach $13.5 billion by 2032, growing at a 15.2% CAGR. That trajectory reflects how thoroughly data strategy has become a core pharmaceutical competency.

This pillar page is written for the people making decisions about that infrastructure: pharma and biotech IP teams conducting Freedom-to-Operate analyses, portfolio managers assessing pipeline risk, R&D leads allocating resources across discovery programs, and institutional investors trying to price patent-cliff exposure into their pharma holdings. It covers the full database ecosystem, from publicly funded repositories to mission-critical commercial platforms, and goes deeper than existing guides on the questions that matter most: IP valuation, evergreening roadmaps, biosimilar interchangeability designation timelines, Paragraph IV filing strategy, and how to build a proprietary knowledge graph that competitors cannot replicate.

Section 1: The Digitalized Drug Discovery Pipeline, Stage by Stage

Mapping Data Requirements to Pipeline Stages

Before selecting databases, understand the pipeline they support. Each of the five standard development stages asks different scientific questions, accepts different types of evidence, and carries a different cost profile. The databases most useful at Stage 1 discovery are nearly useless at Stage 4 regulatory review. Misaligning data resources and pipeline stage is one of the less visible causes of R&D inefficiency.

Stage 1: Target Identification and Lead Discovery

The first stage begins with a biological question: which molecular target, if modulated, would alter the course of a disease? Target identification leans on genomic, proteomic, and pathway data. Target validation, the step that confirms a target is causal rather than merely correlated with disease, requires structural biology data, genetic knockout models, and early bioactivity screening results.

Once a target is validated, chemists search for hit compounds. High-throughput screening (HTS) campaigns can test libraries of hundreds of thousands of compounds against a target in a matter of weeks. Virtual screening, now standard at companies with robust cheminformatics infrastructure, uses computational models to evaluate libraries of millions of compounds in silico before any physical synthesis occurs.

Data requirements at this stage are broad. Researchers need access to large chemical structure repositories, curated bioactivity databases, three-dimensional protein structures, patent literature, and prior art searches. The primary databases at this stage are PubChem (structure and bioassay data), ChEMBL (curated bioactivity), the Protein Data Bank (3D structural data), and CAS SciFinder or Reaxys (prior art and reaction data).

IP intelligence enters at Stage 1 too, though many organizations bring it in too late. Identifying whether a lead scaffold is already claimed in a competitor’s composition-of-matter patent is far cheaper to do before a hit-to-lead campaign than after it. The Orange Book and purpose-built pharmaceutical IP platforms become relevant from the first structural series onward.

Stage 2: Preclinical Research and the ADMET Filter

Preclinical research answers a narrower question: is this compound safe enough to test in humans? Work conducted under FDA Good Laboratory Practices guidelines includes pharmacokinetic profiling, pharmacodynamic assessment, and toxicology studies in cell-based and animal models. The IND application to the FDA is the gate at the end of this stage.

The data focus narrows sharply. Researchers need quantitative structure-activity relationship (QSAR) data, ADMET prediction outputs, metabolite identification data, and hERG cardiotoxicity screening results. ADMET-AI, ADMETlab 2.0, and Simulations Plus’s ADMET Predictor are the dominant computational tools here. The Hazardous Substances Data Bank (HSDB), formerly served through TOXNET and now accessible through PubChem following TOXNET's retirement, provides verified experimental toxicology reference data.

A critical insight about Stage 2: this is where data failure most directly translates into financial failure. Analyses of Phase II attrition across major pharmaceutical companies consistently attribute 20 to 30 percent of failures to inadequate preclinical ADMET characterization. Better data at Stage 2 does not guarantee clinical success. It does substantially raise the probability that the compounds entering trials carry manageable safety profiles.

Stage 3: Clinical Research, Phases I Through IV

Clinical research is the most expensive stage, consuming roughly 60% of total development costs. The phase structure is well known. Phase I (20 to 80 healthy volunteers) tests safety and dose-ranging. Phase II (100 to 300 patients) tests preliminary efficacy and continues safety assessment. Phase III (300 to 3,000 patients, often multinational) provides the pivotal efficacy and safety evidence for regulatory submission. Phase IV post-marketing studies generate real-world evidence after approval.

Database needs during clinical phases shift toward competitor intelligence, clinical trial landscape analysis, and market access data. Platforms like GlobalData, Evaluate Pharma, and the ClinicalTrials.gov registry feed into go/no-go decisions during Phase II. Patent databases become critical again during Phase III, as the originator company typically files secondary patents on crystalline forms, salt forms, specific dosage regimens, pediatric formulations, and methods of use precisely at the point when the clinical program is generating validating data.

That secondary patent filing activity is an early indicator of commercial confidence. When a company files six to twelve secondary patents on a drug asset during Phase III, it is telegraphing belief that the asset will succeed and that it intends to defend the commercial position beyond the composition-of-matter patent expiry. Patent databases that track filing dates and assignee patterns can decode this signal before the clinical data readout.
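As a rough illustration of how that signal might be decoded, the sketch below counts secondary filings for one asset inside a date window and flags the asset when the count reaches the lower bound of six mentioned above. The record layout, company and asset names, dates, and the `secondary_filings` helper are all hypothetical; a real workflow would pull filings from a patent database export.

```python
from collections import Counter
from datetime import date

# Hypothetical filing records: (assignee, asset, patent_type, filing_date).
FILINGS = [
    ("ExampleCo", "asset-A", "composition-of-matter", date(2014, 5, 2)),
    ("ExampleCo", "asset-A", "crystalline form", date(2021, 1, 15)),
    ("ExampleCo", "asset-A", "salt form", date(2021, 3, 9)),
    ("ExampleCo", "asset-A", "dosage regimen", date(2021, 6, 21)),
    ("ExampleCo", "asset-A", "pediatric formulation", date(2021, 9, 30)),
    ("ExampleCo", "asset-A", "method of use", date(2022, 2, 11)),
    ("ExampleCo", "asset-A", "method of use", date(2022, 4, 4)),
    ("ExampleCo", "asset-B", "salt form", date(2021, 7, 1)),
]


def secondary_filings(filings, asset, start, end):
    """Count secondary (non composition-of-matter) filings for one asset in [start, end]."""
    return Counter(
        patent_type
        for _assignee, filed_asset, patent_type, filed in filings
        if filed_asset == asset
        and patent_type != "composition-of-matter"
        and start <= filed <= end
    )


# Assumed Phase III window for the hypothetical asset.
counts = secondary_filings(FILINGS, "asset-A", date(2020, 10, 1), date(2022, 12, 31))
total = sum(counts.values())
signal = total >= 6  # threshold taken from the six-to-twelve range in the text
print(total, signal, dict(counts))
```

The threshold is a heuristic, not a rule; filing behavior varies by company and therapeutic area, so the count is best read alongside assignee history.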

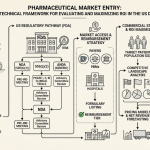

Stage 4: FDA Review and the NDA/BLA Data Package

The New Drug Application or Biologics License Application is the culmination of all prior data collection. The FDA reviews chemistry, manufacturing and controls (CMC) data, animal study results, clinical trial summaries, proposed labeling, and risk evaluation and mitigation strategies where applicable.

Data integrity at this stage is paramount. Errors, inconsistencies, or gaps in the chemistry and pharmacology data package are among the leading causes of Complete Response Letters (CRLs), which can delay approval by twelve to twenty-four months and cost hundreds of millions of dollars. The investment in clean, curated, traceable chemistry data throughout the discovery and preclinical phases pays off here.

Stage 5: Post-Market Surveillance and Pharmacovigilance

Approval is not the end of the data cycle. The FDA’s MedWatch adverse event reporting system, the FAERS database, and increasingly, real-world evidence from electronic health records continue to generate safety signals after launch. Companies with effective pharmacovigilance programs catch safety issues early, minimizing regulatory intervention. Those without them face label changes, black box warnings, or in extreme cases, market withdrawal.

Key Takeaways: Section 1

Data requirements change at each pipeline stage, and a one-size-fits-all database strategy fails because of it. The highest-value intervention point for data investment is Stage 2 preclinical ADMET filtering, where early de-risking has a multiplicative effect on clinical success rates. IP intelligence is not a Stage 4 consideration; it belongs at Stage 1 and persists through every subsequent gate. Organizations that integrate patent landscape monitoring into their discovery workflow rather than treating it as a downstream legal task consistently demonstrate better portfolio capital efficiency.

Section 2: A Strategic Taxonomy of the Chemical Database Universe

Why Taxonomy Matters Before Tool Selection

Picking a database without first categorizing it by functional purpose is the equivalent of selecting a surgical instrument by brand name rather than by the procedure it performs. The chemical database ecosystem contains hundreds of resources. Knowing which category a given tool belongs to, what problem that category is designed to solve, and what its inherent limitations are allows R&D and CI teams to build data strategies rather than tool lists.

Public Repositories: Open Science Infrastructure

Public repositories are the foundational layer of the chemical database universe. They aggregate data from academic research programs, government screening initiatives, patent offices, and industry contributors, and provide open access. The three most consequential are PubChem (NCBI), ChEMBL (EMBL-EBI), and the Protein Data Bank (Worldwide PDB partnership).

Their strategic value is breadth, accessibility, and their role as the primary training data source for AI and machine learning models in drug discovery. Every major pharmaceutical company doing computational chemistry draws on these public repositories, directly or indirectly. The data is free; the competitive advantage comes from the analytical infrastructure built around it, not the access itself.

The limitations are equally important. Public repositories aggregate without gatekeeping. HTS data in PubChem is heavily populated with false positives, assay artifacts, and pan-assay interference compounds (PAINS). ChEMBL applies expert curation and is substantially cleaner but still carries annotation inconsistencies across its historical database. The PDB has a well-documented resolution and completeness bias: structures for targets with pharmaceutical relevance are over-represented, while targets in understudied disease areas are sparse or absent.

Commercial Platforms: Expert Curation at Scale

Commercial databases charge subscription fees because their primary product is not data; it is curated, structured, analysis-ready knowledge. CAS SciFinder and Reaxys are the two dominant platforms. Both employ large teams of PhD-level scientists who abstract, normalize, and cross-link chemical, reaction, and property data from journals and patents at a scope and depth that no public effort replicates.

The ROI case for these platforms is a labor substitution argument. A junior scientist with a SciFinder subscription can complete a comprehensive literature review in four hours that would take four days using only public resources and unstructured search. At fully-loaded personnel costs for a pharmaceutical researcher in the United States or Western Europe, that time differential translates quickly into a subscription fee that pays for itself on a handful of projects per year.

Bioactivity and Target Hubs

This category focuses on quantifying the interaction between molecules and biological targets. DrugBank integrates clinical pharmacology with chemical data, linking approved drugs to their primary targets, off-targets, metabolic enzymes, and known drug-drug interactions. BindingDB specializes in quantitative binding affinity data: Ki, Kd, and IC50 values extracted from the literature and patents.

These databases are the primary training data source for machine learning potency prediction models. A QSAR model trained on BindingDB binding affinities for a kinase family can predict the likely IC50 of a newly designed analog before a single assay is run. The accuracy of that prediction depends entirely on the quality and quantity of the binding data used to train the model.

ADMET and Toxicology Platforms

ADMET prediction platforms span a spectrum from academic web servers with machine learning models (ADMETlab 2.0, ADMET-AI) to enterprise software with integrated physiologically based pharmacokinetic simulation (ADMET Predictor from Simulations Plus). The strategic purpose across the category is identical: identify ADMET liabilities before committing experimental resources. The platforms differ substantially in their breadth of predicted endpoints, the quality of their training data, and their integration with downstream informatics workflows.

Patent and Competitive Intelligence Platforms

General-purpose patent search tools like Google Patents, Espacenet, and the USPTO full-text database provide broad coverage and free access. Purpose-built pharmaceutical IP platforms, such as DrugPatentWatch, Cortellis IP, and Citeline’s Inteleos, add pharmaceutical-specific data layers: Orange Book patent listings, FDA tentative approvals, PTAB proceedings, Paragraph IV challenge filings, supplementary protection certificates (SPCs), pediatric exclusivity extensions, and international patent family mapping.

The distinction between these two categories is not merely convenience. For high-stakes pharmaceutical IP decisions, the accuracy and currency of data is a legal and financial liability issue, not just a usability preference.

Natural Product and Metabolomics Databases

The Human Metabolome Database (HMDB), the METLIN metabolomics database, and the Traditional Chinese Medicine Systems Pharmacology (TCMSP) database form a category that supports both biomarker discovery and natural product-derived drug lead identification. These resources are increasingly relevant to precision medicine programs, where plasma metabolome profiling can identify disease biomarkers and patient stratification markers.

Table 1: Comparative Overview of Major Chemical Databases

| Database | Primary Focus | Access Model | Key Data Types | Best Use Case |
|---|---|---|---|---|
| PubChem | Public Chemical Repository | Free | Structures, Bioassays, Properties | AI/ML training, virtual library screening |
| ChEMBL | Curated Bioactive Molecules | Free | Ki, IC50, SAR, ADMET | Lead optimization, target selectivity analysis |
| Protein Data Bank | 3D Macromolecular Structures | Free | Atomic Coordinates | Structure-based drug design |
| CAS SciFinder | Comprehensive Chemistry | Commercial | Literature, Reactions, Patents, Substances | Definitive literature search, retrosynthesis |
| Reaxys | Reaction and Substance Data | Commercial | Experimental Properties, Reactions, Patents | Synthesis planning, quantitative property retrieval |
| DrugBank | Drug-Target-Clinical Integration | Freemium | Drug Targets, PK, Clinical Data | Repurposing, ADMET context, pharmacovigilance |
| BindingDB | Binding Affinities | Free | Kd, Ki, IC50 (quantitative) | QSAR model training, affinity benchmarking |
| DrugPatentWatch | Pharmaceutical IP Intelligence | Commercial | Patent Expirations, Para IV, Litigation, Generics | Generic entry strategy, FTO analysis, CI |
| HMDB | Human Metabolome | Free | Metabolite Structures, Biofluid Concentrations | Biomarker discovery, metabolic pathway analysis |
| ADMET Predictor | In Silico ADMET/PBPK | Commercial | 175+ predicted ADMET endpoints, HT-PBPK | Preclinical candidate screening, regulatory dossier support |

Key Takeaways: Section 2

No single database covers the full spectrum of drug discovery intelligence. A functional taxonomy, organized by the problem each category solves rather than by brand name, lets organizations build layered strategies: public repositories for breadth and AI training data, commercial platforms for curation and speed, specialized bioactivity databases for model training, and pharmaceutical-specific IP tools for defensible business decisions. Each tier has a distinct ROI profile and a distinct risk exposure when the wrong tool is used for the wrong task.

Section 3: The Public Data Trinity: PubChem, ChEMBL, and the Protein Data Bank

PubChem: Scale, Architecture, and the Curation Conundrum

PubChem is the largest open-access chemical database in the world. As of early 2026, it contains more than 119 million unique compounds in its Compound database, over 300 million substance records, and more than 1.5 million biological assay records spread across roughly 300,000 bioassay experiments. It is maintained by the National Center for Biotechnology Information (NCBI) at the U.S. National Institutes of Health.

The architecture of PubChem has three primary components. The Substance database archives raw records as deposited by contributors: chemical vendors, screening centers, academic labs, and government programs. The Compound database processes these raw records to generate unique, standardized chemical structures with canonical InChI keys and assigned Compound IDs (CIDs). The BioAssay database captures biological screening results, linking compounds to experimental protocols and outcome classifications.

PubChem’s depth of integration with the NCBI ecosystem is what elevates it from a compound register to a genuine discovery tool. A CID in PubChem links to related PubMed publications, gene records, protein sequences, and clinical trial data. A researcher identifying a promising hit in a BioAssay record can trace that compound through related literature and genomic context without leaving the interface.
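For teams that want programmatic access rather than the web interface, PubChem exposes a REST service called PUG REST. The sketch below only builds a request URL for a few compound properties and performs no network call. The `compound_property_url` helper is a hypothetical name, and the property names used should be checked against the current PUG REST documentation before use.

```python
from urllib.parse import quote

# Base path of PubChem's PUG REST service.
PUG_REST = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def compound_property_url(name, properties):
    """Build a PUG REST URL that requests selected properties for a compound name."""
    prop_list = ",".join(properties)
    return f"{PUG_REST}/compound/name/{quote(name)}/property/{prop_list}/JSON"


url = compound_property_url(
    "aspirin", ["MolecularFormula", "MolecularWeight", "InChIKey"]
)
print(url)
```

The resulting URL can be fetched with any HTTP client, for example `urllib.request.urlopen`. Bulk workloads should respect PubChem's usage policies or use the downloadable data files instead.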

The strategic limitation is noise. The BioAssay database accumulates HTS data from programs with highly variable assay quality. False positives, assay artifacts, and PAINS compounds are well-represented. PubChem archives this data; it does not curate it for experimental reliability. Organizations that download PubChem data for model training without applying quality filters are introducing systematic noise into their AI pipelines. The proprietary curation layer applied to raw PubChem data is where competitive advantage actually lives: the internal data science infrastructure that filters PAINS, normalizes activity thresholds across assay formats, and integrates the cleaned public data with proprietary screening results.

ChEMBL: Manual Curation as a Competitive Differentiator

ChEMBL, maintained by the EMBL-EBI in Cambridge, occupies a narrower but higher-quality position than PubChem. Its scope is approximately 2.4 million compounds and over 20 million bioactivity data points, all manually curated from peer-reviewed medicinal chemistry literature and patents. ChEMBL 34, released in late 2024, added approximately 2,400 new targets and expanded its patent-derived data to cover recent filings from the EPO and USPTO.

The editorial standard at ChEMBL is what makes it the preferred training data source for structure-activity relationship models. Curators extract quantitative endpoint data (Ki, Kd, IC50, EC50) alongside the assay conditions, species and protein isoform information, and confidence scores that indicate the directness of the compound-target interaction. This metadata allows computational chemists to filter for only high-confidence, primary activity measurements when building predictive models.
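A minimal sketch of that filtering step follows. The records are invented, and the field names (`standard_type`, `standard_value`, `standard_units`, `confidence_score`) are modeled loosely on ChEMBL's and should be treated as assumptions to verify against the actual schema. The conversion uses the standard relation pIC50 = 9 - log10(IC50 in nM).

```python
import math

# Invented activity records; field names are assumptions modeled on ChEMBL.
RECORDS = [
    {"compound": "cpd-1", "standard_type": "IC50", "standard_value": 10.0,
     "standard_units": "nM", "confidence_score": 9},
    {"compound": "cpd-2", "standard_type": "IC50", "standard_value": 1000.0,
     "standard_units": "nM", "confidence_score": 9},
    {"compound": "cpd-3", "standard_type": "IC50", "standard_value": 50.0,
     "standard_units": "nM", "confidence_score": 4},
    {"compound": "cpd-4", "standard_type": "Inhibition", "standard_value": 80.0,
     "standard_units": "%", "confidence_score": 9},
]


def to_p_value(value_nm):
    """Convert a concentration in nM to its negative log10 molar value."""
    return 9.0 - math.log10(value_nm)


def high_confidence_pic50(records, min_confidence=9):
    """Keep IC50 values in nM that meet the confidence cutoff and return pIC50 per compound."""
    return {
        r["compound"]: to_p_value(r["standard_value"])
        for r in records
        if r["standard_type"] == "IC50"
        and r["standard_units"] == "nM"
        and r["confidence_score"] >= min_confidence
    }


training_set = high_confidence_pic50(RECORDS)
print(training_set)
```

The cutoff of 9 is the strictest choice, keeping only direct single-protein assignments. Whether to relax it depends on how much training data the target class has.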

ChEMBL maintains a suite of target-class portals that provide focused views of the data. Kinase SARfari covers the kinome with selectivity profiling across hundreds of published kinase inhibitor datasets. GPCR SARfari covers G-protein coupled receptors, which represent the targets of approximately 34% of all FDA-approved drugs. ChEMBL-Neglected Tropical Diseases provides an open repository for compounds tested against pathogens relevant to diseases disproportionately affecting low-income countries.

From an IP perspective, ChEMBL’s extraction of bioactivity data from patent documents makes it a lightweight competitor intelligence tool. A patent claiming a new series of kinase inhibitors, with IC50 data reported against multiple kinase targets, will typically appear in ChEMBL within six to twelve months of the patent publication date. Organizations monitoring ChEMBL additions for new scaffold appearances in their target classes get an early signal of competitor activity at a cost of zero.

The Protein Data Bank: 90% of FDA Approvals Touch This Resource

The Protein Data Bank is the single global archive for experimentally determined three-dimensional structures of proteins, nucleic acids, and complex macromolecular assemblies. As of early 2026, it holds more than 220,000 structures deposited by researchers at academic institutions and pharmaceutical companies worldwide.

The quantified impact of the PDB on drug development is striking. Published analysis of FDA drug approvals between 2010 and 2016 found that the PDB facilitated the discovery or development of approximately 90% of the 210 new drugs approved during that period. This figure reflects the centrality of structure-based drug design (SBDD) to modern medicinal chemistry. SBDD uses atomic-resolution protein structures to guide the design of ligands that fit binding sites with high affinity and selectivity.

The strategic applications of PDB data are direct. Homology modeling of a target for which no experimental structure exists uses the PDB as its template library. Molecular docking campaigns require target structures from the PDB. Fragment-based drug discovery programs measure binding by co-crystallography and deposit their results in the PDB. Free energy perturbation calculations, now standard at larger companies for predicting binding affinity changes from structural modifications, are parameterized against experimental structures from the PDB.

One limitation worth noting: PDB structures are typically determined at cryogenic temperatures or under crystallographic packing conditions that do not fully represent the conformational dynamics of a protein in solution. This means that structures should be interpreted as snapshots, not fully representative models of target behavior. Molecular dynamics simulation, which uses PDB structures as starting points, is the standard method for exploring the conformational ensemble of a target.

Key Takeaways: Section 3

The public trinity forms a necessary but not sufficient data foundation. PubChem provides chemical breadth and AI training data; ChEMBL provides curated bioactivity intelligence with built-in CI utility; the PDB provides the structural substrate for rational drug design. Using all three in an integrated workflow, rather than as isolated resources, multiplies their individual value. The competitive moat is not in accessing these databases, which is free. It is in the proprietary curation, integration, and analysis infrastructure built around them.

Section 4: Commercial Platforms and the ROI Case for Premium Data

CAS SciFinder: A Century of Curation

The Chemical Abstracts Service has been indexing chemical literature since 1907. The modern embodiment of that effort is the CAS SciFinder Discovery Platform, a suite of tools built around the world’s most comprehensively indexed collection of chemical substances, reactions, and scientific literature.

The centerpiece is CAS SciFinderⁿ, the core search engine for the CAS content collection. CAS scientists index publications and patents at a depth that automated extraction cannot match: reaction conditions, stereochemistry, yield data, safety information, and the identity of specific compounds claimed or described in every document. PatentPak extracts the novel chemistry from patent documents and presents it in a structured format, reducing the time a chemist spends reading to find the relevant claims from hours to minutes.

CAS SciFinder’s retrosynthesis planning tool draws on the indexed reaction database to propose validated synthetic routes to any target molecule. It ranks routes by feasibility, cost, and sustainability metrics, and links each proposed step to the literature source that reports it. This capability directly reduces the time from molecule design to first physical synthesis.

A specific case worth quantifying: a mid-size biotech running a lead optimization campaign may need to synthesize forty to sixty analogs per quarter. If CAS SciFinder cuts synthesis planning time per compound from six hours to two hours, that is 160 to 240 hours of freed scientist time per quarter. At a fully-loaded annual cost of $200,000 for a senior synthetic chemist (roughly $96 per hour, assuming a 2,080-hour working year), that time differential represents about $15,000 to $23,000 in labor savings per quarter from synthesis planning alone, a meaningful offset against the subscription cost before any other use of the platform is counted.
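The arithmetic behind that estimate can be reproduced in a few lines. The 2,080-hour working year used to turn annual cost into an hourly rate is an assumption not stated in the text, and `quarterly_labor_savings` is a hypothetical helper name.

```python
def quarterly_labor_savings(analogs, hours_before, hours_after,
                            annual_cost, hours_per_year=2080):
    """Return (hours saved, dollar value) for one quarter of synthesis planning."""
    hours_saved = analogs * (hours_before - hours_after)
    hourly_rate = annual_cost / hours_per_year  # assumes 52 weeks x 40 hours
    return hours_saved, hours_saved * hourly_rate


low_hours, low_savings = quarterly_labor_savings(40, 6, 2, 200_000)
high_hours, high_savings = quarterly_labor_savings(60, 6, 2, 200_000)

print(low_hours, round(low_savings))    # 160 15385
print(high_hours, round(high_savings))  # 240 23077
```

Changing `hours_per_year` to reflect actual productive hours shifts the result, so the figure is best treated as an order-of-magnitude estimate.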

The business case extends to risk mitigation. A definitive prior art search conducted in SciFinder before initiating a new chemical series can identify existing patents that claim that scaffold, potentially avoiding months of work on an IP-blocked program. One avoided misdirected program pays for years of subscriptions.

Reaxys: The Home of Experimental Numbers

Reaxys, published by Elsevier, carves a distinct niche from SciFinder by centering on experimentally measured data. Its curation model requires that every data point in the platform correspond to a specific compound, be supported by an experimental measurement, and carry a verified citation. Physical properties, spectral data, reaction yields, and pharmacological measurements all meet this standard before inclusion.

An independent comparison by researchers at the University of Sydney found that Reaxys contains more than 100 times as many experimental property data points as SciFinder. This makes Reaxys the better tool when a project requires large sets of measured physical or chemical properties for model training or SAR analysis. Its deep integration with Elsevier’s ScienceDirect full-text platform and the Scopus citation database makes it the natural choice for organizations already operating within the Elsevier ecosystem.

The practical comparison between SciFinder and Reaxys: SciFinder is stronger for comprehensive literature coverage, especially for older or non-English chemistry literature, and for patent analysis via PatentPak. Reaxys is stronger for quantitative experimental data retrieval, synthesis route planning from a data density standpoint, and integration with downstream Elsevier tools. Most large pharma companies subscribe to both, because the workflows they support do not fully overlap.

The True Cost of Free: Deconstructing the Build-vs-Buy Fallacy

The argument against commercial database subscriptions almost always begins with the word ‘free.’ PubChem is free. ChEMBL is free. The USPTO full-text database is free. Why pay?

The answer requires accounting for the full cost of ‘free.’ Using raw public data at enterprise scale requires a dedicated cheminformatics and data engineering team. That team designs and maintains ETL pipelines to download, parse, and normalize data from multiple sources. It builds deduplication logic to resolve the same compound appearing under different identifiers across ChEMBL, PubChem, and internal systems. It develops and applies quality filters to remove PAINS, stereochemical errors, and assay artifacts. It maintains the infrastructure to store and query tens of millions of compound records efficiently.
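The deduplication step is often keyed on a structure-derived identifier such as the InChIKey. The sketch below groups records from several sources under one key. The record layout and the internal registry IDs are hypothetical, and the public identifiers are included only as illustrative values.

```python
from collections import defaultdict

# Illustrative records; "internal" IDs are hypothetical registry numbers.
RECORDS = [
    {"source": "pubchem", "id": "CID 2244", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"source": "chembl", "id": "CHEMBL25", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"source": "internal", "id": "REG-000123", "inchikey": "BSYNRYMUTXBXSQ-UHFFFAOYSA-N"},
    {"source": "pubchem", "id": "CID 2519", "inchikey": "RYYVLZVUVIJVGH-UHFFFAOYSA-N"},
    {"source": "chembl", "id": "CHEMBL113", "inchikey": "RYYVLZVUVIJVGH-UHFFFAOYSA-N"},
]


def merge_by_inchikey(records):
    """Group source-specific identifiers under a shared InChIKey."""
    merged = defaultdict(dict)
    for rec in records:
        merged[rec["inchikey"]][rec["source"]] = rec["id"]
    return dict(merged)


compounds = merge_by_inchikey(RECORDS)
for key, ids in compounds.items():
    print(key, ids)
```

Matching on the full key keeps stereoisomers separate. A production pipeline would also standardize structures (salts, tautomers, charge states) before generating keys, which this sketch does not attempt.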

A team of four data scientists and two software engineers performing this work, at fully-loaded costs of $250,000 to $350,000 per person per year, costs $1.5 million to $2.1 million annually before a single piece of analysis is done. A SciFinder enterprise subscription for a mid-size pharma company runs roughly $100,000 to $300,000 per year. The math is not close. The relevant question is not ‘free vs. paid.’ It is whether building a proprietary data engineering capability creates a sustainable competitive advantage, or whether that investment is better deployed in scientific roles that generate novel IP.
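The comparison above reduces to simple arithmetic, sketched here with the figures from the text. The function name is hypothetical, and the ratio is only a rough indicator because a subscription does not replace every function of an in-house team.

```python
def annual_team_cost(headcount, cost_low, cost_high):
    """Return the (low, high) annual cost of a team at fully-loaded rates."""
    return headcount * cost_low, headcount * cost_high


# Four data scientists plus two software engineers, as in the text.
team_low, team_high = annual_team_cost(4 + 2, 250_000, 350_000)

# Subscription range quoted in the text for a mid-size pharma company.
sub_low, sub_high = 100_000, 300_000

print(team_low, team_high)                     # 1500000 2100000
print(team_low / sub_high, team_high / sub_low)  # 5.0 21.0
```

Even in the most favorable pairing for the in-house build (cheapest team, most expensive subscription), the team costs five times as much.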

For early-stage biotechs, the calculus strongly favors commercial subscriptions. For large pharma companies with proprietary datasets large enough to justify bespoke infrastructure, a hybrid strategy, public and commercial databases feeding into a proprietary data lake and knowledge graph, is standard practice.

Key Takeaways: Section 4

Commercial databases charge for curation, and curation is what converts data into reliable decision support. The ROI case is built on two dimensions: acceleration of research cycles and reduction of risk from bad data-driven decisions. SciFinder and Reaxys are not redundant; they cover adjacent and partially overlapping use cases, and the largest organizations run both. The ‘free vs. paid’ framing is misleading because it ignores the substantial hidden costs of building and maintaining enterprise-grade data engineering on top of public repositories.

Section 5: Specialized Databases for Bioactivity, ADMET, and Clinical Intelligence

DrugBank: The Chemistry-to-Clinic Bridge

DrugBank integrates chemical structure data with clinical pharmacology in a way that no other freely available database replicates. The current version covers more than 17,000 drug entries spanning FDA-approved small molecules, biologics, experimental compounds, and nutraceuticals. Collectively, these records link to over 5,000 protein targets, and each record includes mechanism of action, absorption and distribution parameters, metabolic enzyme information, elimination pathways, drug-drug interaction data, and clinical indication data.

The primary strategic application is drug repurposing. By querying DrugBank for drugs that interact with a newly validated target, or drugs that share a structural scaffold with a target-class ligand but are approved for a different indication, researchers can identify candidates for repurposing with established safety profiles. Repurposing candidates face lower development risk than de novo compounds: the Phase I safety data exists, the manufacturing process is established, and in some cases the formulation and dosage work is already done.

DrugBank’s ADMET data layer provides clinical context for in silico predictions. When an ADMET prediction tool flags a compound for potential CYP3A4 inhibition, DrugBank can immediately surface which approved drugs share that liability and how it has been managed clinically. That context turns a computational warning flag into an actionable medicinal chemistry design principle.

BindingDB: Quantitative Binding Data for Model Training

BindingDB holds more than 3 million binding data points for over 1.3 million compounds and nearly 10,000 protein targets. Every record carries a quantitative affinity value (IC50, Ki, Kd, or EC50), a direct link to the source publication or patent, and target identification data at the UniProt accession level. Its 2024 update explicitly aligned its data model with FAIR principles, adding standardized metadata fields and persistent identifiers to improve machine-actionability.

The primary use of BindingDB is training and benchmarking computational potency prediction models. A machine learning model tasked with predicting the IC50 of new kinase inhibitor candidates needs a large, high-quality training set of compounds with measured kinase IC50 values. BindingDB is the most complete free source for that data. Researchers at academic institutions and AI-native drug discovery companies have used BindingDB as the core training data for published QSAR models across dozens of target classes.
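To make the idea concrete, here is a deliberately simple stand-in for such a model: a similarity-weighted nearest-neighbor estimate using Tanimoto similarity over fingerprint bit sets. It is not a method taken from BindingDB or from any published model. The fingerprints and pIC50 values are invented, and real work would use computed fingerprints and a properly validated learner.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_pic50(query, training, k=2):
    """Similarity-weighted mean pIC50 of the k most similar training compounds."""
    scored = sorted(
        ((tanimoto(query, fp), pic50) for fp, pic50 in training),
        reverse=True,
    )[:k]
    total = sum(sim for sim, _ in scored)
    if total == 0:
        return None  # nothing similar enough to support an estimate
    return sum(sim * pic50 for sim, pic50 in scored) / total


# Invented training data: (fingerprint on-bits, measured pIC50).
TRAINING = [
    (frozenset({1, 2, 3, 4}), 8.0),
    (frozenset({1, 2, 3, 5}), 7.0),
    (frozenset({7, 8, 9}), 5.0),
]

query = frozenset({1, 2, 3, 4, 6})
estimate = predict_pic50(query, TRAINING)
print(round(estimate, 3))
```

Returning `None` when no neighbor shares any bits is a crude applicability-domain check. It reflects the point in the text that prediction quality is bounded by the training data's coverage.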

BindingDB also publishes a target-pair similarity tool that allows users to find protein targets similar in binding site structure to a query target, then retrieve the chemical matter known to bind those related targets. This cross-target mining is a standard starting point for hit identification campaigns on newly drugged targets.

ADMET Prediction: From Academic Web Servers to Enterprise Platforms

The ADMET prediction landscape spans roughly three capability tiers.

At the entry level, open-access academic tools like SwissADME and FAF-Drugs4 calculate Lipinski Rule-of-Five parameters, polar surface area, and a handful of pharmacokinetic estimates. These are adequate for a rapid pre-screen of commercial compound library members but insufficient for lead optimization decisions.
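The Rule-of-Five pre-screen these tools perform is simple enough to sketch directly. The example below assumes the four descriptors have already been computed elsewhere and uses the conventional thresholds (molecular weight at most 500 Da, logP at most 5, at most 5 hydrogen-bond donors, at most 10 acceptors, one violation tolerated); the descriptor values are hypothetical, and this is not the implementation of any named tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    mol_weight: float       # molecular weight in daltons
    logp: float             # calculated octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int


def lipinski_violations(d: Descriptors) -> list:
    """Return the list of Rule-of-Five criteria the compound violates."""
    violations = []
    if d.mol_weight > 500:
        violations.append("MW > 500")
    if d.logp > 5:
        violations.append("logP > 5")
    if d.h_bond_donors > 5:
        violations.append("HBD > 5")
    if d.h_bond_acceptors > 10:
        violations.append("HBA > 10")
    return violations


def passes_rule_of_five(d: Descriptors, max_violations: int = 1) -> bool:
    """Conventional reading: at most one violation is tolerated."""
    return len(lipinski_violations(d)) <= max_violations


# Hypothetical descriptor values for two library members.
small_polar = Descriptors(mol_weight=320.4, logp=2.1, h_bond_donors=2, h_bond_acceptors=5)
large_greasy = Descriptors(mol_weight=720.9, logp=6.3, h_bond_donors=3, h_bond_acceptors=9)
```

A filter this cheap is what makes it suitable for library-scale triage and unsuitable as the sole basis for a lead optimization decision.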

The mid-tier academic platforms, notably ADMETlab 2.0 from Xiangya School of Pharmaceutical Sciences and ADMET-AI from Greenstone Biosciences, use graph neural network architectures trained on large public datasets to predict 80 to 100 distinct ADMET endpoints. ADMETlab 2.0 applies a multi-task graph attention framework that simultaneously predicts absorption, distribution, metabolism, excretion, and toxicity parameters in a single forward pass. ADMET-AI benchmarks each predicted property against the distribution observed across 2,500-plus DrugBank-approved drugs, providing a calibration that contextualizes outlier predictions.
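The idea of contextualizing a prediction against a reference set of approved drugs can be sketched as an empirical percentile. This is a minimal illustration under the assumption that the reference values are available as a plain list; the numbers are hypothetical, and the sketch does not claim to reproduce how ADMET-AI computes its calibration.

```python
from bisect import bisect_right


def percentile_in_reference(value: float, reference: list) -> float:
    """Percentage of reference values that are less than or equal to `value`."""
    if not reference:
        raise ValueError("reference distribution is empty")
    ordered = sorted(reference)
    return 100.0 * bisect_right(ordered, value) / len(ordered)


# Hypothetical predicted property values for a reference set of approved drugs.
reference_values = [1.0, 2.0, 3.0, 4.0, 5.0]

typical = percentile_in_reference(3.0, reference_values)
outlier = percentile_in_reference(10.0, reference_values)
```

A prediction that lands near the 0th or 100th percentile of approved drugs is not automatically disqualifying, but it is the kind of outlier that deserves a second look before synthesis resources are committed.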

ADMET Predictor from Simulations Plus represents the current enterprise standard. It predicts over 175 endpoints, including endpoints not covered by academic tools: intrinsic clearance in human liver microsomes and hepatocytes, P-glycoprotein substrate and inhibitor classification, OATP1B1/1B3 transporter interactions, and reactive metabolite formation potential. Its distinguishing feature is tight integration with high-throughput PBPK (HT-PBPK) simulation, which takes the predicted ADMET parameters and models the full pharmacokinetic profile of a compound in a virtual human, generating predicted Cmax, AUC, half-life, and bioavailability estimates. These outputs can feed directly into regulatory dossiers.

The selection among these tools should track the stage of the project. For early virtual screening of millions of compounds, the free academic tools are appropriate: speed matters more than precision at that scale. For lead optimization decisions that will direct synthesis of dozens of analogs, ADMET Predictor’s precision and PBPK integration justify the enterprise subscription cost. Using a free tool for lead optimization decisions is a false economy.

Key Takeaways: Section 5

Specialized databases solve problems that general repositories cannot. DrugBank provides the clinical context that transforms a hit compound into a repurposing hypothesis. BindingDB provides the quantitative binding data that grounds QSAR models in experimental reality. ADMET prediction platforms exist on a capability spectrum, and tool selection should match project stage and decision stakes. Applying entry-level ADMET tools to lead optimization decisions is as mismatched as using enterprise PBPK software to filter a million-compound virtual library.

Section 6: Patent Databases, Paragraph IV Strategy, and the IP Battleground

Why Patent Intelligence Is R&D Intelligence

Patents are the primary mechanism by which pharmaceutical companies extract return on R&D investment. They confer temporary market exclusivity, and the management of that exclusivity, through initial patent protection, secondary patent filing, litigation defense, and lifecycle management, drives the commercial strategy of every branded pharmaceutical product. Patent databases are therefore not a legal department concern alone. They are a core R&D and business intelligence resource.

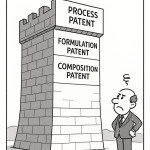

A pharmaceutical patent landscape has three primary components. The composition-of-matter (CoM) patent covers the active pharmaceutical ingredient itself and is typically the highest-value asset in a portfolio. Process patents cover specific manufacturing methods. Secondary patents, filed later in the development cycle, cover polymorphic forms, salt forms, specific formulations, dosage regimens, methods of use, metabolites, and combination products.

In the United States, the FDA Orange Book (Approved Drug Products with Therapeutic Equivalence Evaluations) lists patents that an NDA holder has certified as covering the approved drug or a method of use. Orange Book listing is a legal designation with significant commercial consequences: a generic applicant must certify against each listed patent, and a Paragraph IV certification opens the door to Hatch-Waxman litigation and its associated 30-month stay of approval.

The Purple Book, maintained by the FDA for biological products, performs an analogous function for biologics and biosimilars, listing reference product sponsors and biosimilar applicants under the BPCIA framework.

Google Patents: Accessible Entry Point, Documented Liability

Google Patents indexes patent documents from the USPTO, EPO, WIPO, and approximately 100 other patent offices worldwide. Its full-text search, citation graph, and prior art tool make it the fastest way to get a general sense of a patent landscape. For academic research, background reading, and low-stakes exploratory searches, it is adequate.

For professional pharmaceutical IP work, several documented limitations create material risk. Data latency: Google Patents can run two to eight weeks behind official patent office records. For patent term calculations, expiration dates, and Orange Book listing status, this lag is operationally unacceptable. Structural search: Google Patents has no native chemical structure or substructure search capability, which means it cannot answer the most important question in pharmaceutical IP: ‘Is my compound covered by this patent claim?’ Biosequence search is absent.

The willful infringement risk bears particular attention for corporate users. Under 35 U.S.C. Section 284, courts can award up to treble damages for willful patent infringement. The standard for willfulness requires that the defendant knew of the patent and its claims. Internal research records, including browser histories showing Google Patents searches, are discoverable in patent litigation. If a defendant’s scientists accessed a patent-in-suit via Google Patents before launching the allegedly infringing product, that creates documentary evidence of knowledge of the patent. Companies that have successfully argued absence of willfulness typically demonstrate that they relied on a formal FTO opinion from counsel using professional-grade databases, not a Google Patents search. Using Google Patents for FTO analysis is not just suboptimal; it creates a legal paper trail that commercial competitors and plaintiff attorneys can exploit.

DrugPatentWatch: Pharmaceutical-Specific IP Intelligence

DrugPatentWatch is built specifically for the operational questions that pharmaceutical IP, CI, and business development teams need to answer. Its data architecture centers on FDA-approved drugs and links each drug to its complete IP record: Orange Book-listed patents with expiration dates, supplementary protection certificates, pediatric exclusivity periods, Paragraph IV certifications filed by generic applicants, ANDA approval dates, tentative approvals, and PTAB proceedings.

The platform’s Paragraph IV filing data is particularly valuable for generic entry strategy. When a generic company files an ANDA with a Paragraph IV certification, it notifies the brand company that it believes the relevant Orange Book patents are invalid, unenforceable, or not infringed by its proposed generic product. The brand company then has 45 days to sue for infringement. A timely suit triggers a 30-month stay on FDA approval of the ANDA (absent an earlier court decision) and starts the patent litigation clock. Tracking Paragraph IV filings against a target reference product tells a brand company who its challengers are, which patents they are challenging, and when the stay period expires.

For generic companies, the same data identifies market entry windows: the products with expiring CoM patents, the secondary patents that remain, whether any Paragraph IV filers already have 180-day first-to-file exclusivity, and what litigation outcomes suggest about the validity of the blocking patents.

The legal defensibility of data sourced from professional pharmaceutical IP platforms is an underappreciated consideration. When companies commission FTO analyses or patent validity opinions from external counsel, the quality and currency of the data underlying those opinions matters. Counsel who base opinions on professional pharmaceutical IP databases can document the reliability of their data sources. Those relying on Google Patents cannot provide the same assurance.

Key Takeaways: Section 6

Patent intelligence is not a downstream legal activity. It belongs in discovery-stage decision-making and persists through every subsequent pipeline stage. Google Patents is appropriate for low-stakes, preliminary landscape work. For any business decision involving patent validity, FTO, generic entry timing, or competitive intelligence on Paragraph IV activity, professional pharmaceutical IP platforms are not optional. The cost differential between a Google Patents search and a DrugPatentWatch subscription is small relative to the liability differential between a documented FTO analysis and an undocumented Google search.

Section 7: Pharmaceutical IP Valuation: Chemical Data as a Core Asset

Why IP Valuation Is a Data Science Problem

Patent portfolios are the primary balance sheet asset of many pharmaceutical and biotech companies, yet most IP valuation work is still done with spreadsheet-based NPV models that underuse chemical and biological database data. This creates systematic valuation errors that affect licensing negotiations, M&A transactions, royalty rate determinations, and portfolio prioritization decisions.

A rigorous pharmaceutical IP valuation requires inputs that only chemical databases can reliably provide: patent expiration dates accounting for patent term extensions (PTEs) and patent term adjustments (PTAs), secondary patent coverage that extends beyond the CoM expiry, Orange Book listing status, Paragraph IV litigation history and outcomes, international patent family coverage, and biosimilar/interchangeability designation status for biologics.

Composition-of-Matter Patents: The Primary Valuation Driver

A composition-of-matter patent on a small molecule drug is typically the most valuable IP asset the originator holds. It covers the chemical structure of the active ingredient and prevents any party from manufacturing, using, selling, or importing that compound within the patent’s territorial scope without authorization. In the United States, standard patent term is twenty years from the filing date. For pharmaceutical products, the effective period of market exclusivity is much shorter than that nominal term, because the patent is filed early in development, years before FDA approval. The Drug Price Competition and Patent Term Restoration Act (Hatch-Waxman Act) created the patent term extension mechanism to restore up to five years of patent life lost to FDA regulatory review, capped at a maximum of fourteen years of effective post-approval exclusivity.
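The two caps described above interact, and a short sketch makes the interaction concrete. This is a simplified illustration only: it takes the number of days lost to regulatory review as a given input (the statutory calculation of that figure is more involved), and the function names and dates are hypothetical.

```python
import calendar
from datetime import date, timedelta


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, clamping Feb 29 to Feb 28 when needed."""
    year = d.year + years
    day = min(d.day, calendar.monthrange(year, d.month)[1])
    return date(year, d.month, day)


def extended_expiry(nominal_expiry: date, approval_date: date,
                    review_days_lost: int) -> date:
    """Apply the five-year and fourteen-year caps to a requested extension."""
    requested = nominal_expiry + timedelta(days=review_days_lost)
    five_year_cap = add_years(nominal_expiry, 5)       # at most 5 years restored
    fourteen_year_cap = add_years(approval_date, 14)   # at most 14 years post-approval
    capped = min(requested, five_year_cap, fourteen_year_cap)
    # An extension never shortens the patent; if the caps bite below the
    # nominal expiry, no extension is available.
    return max(capped, nominal_expiry)


# Hypothetical patent: nominal expiry 2030-06-01, approval 2020-06-01.
limited_by_14_years = extended_expiry(date(2030, 6, 1), date(2020, 6, 1), 2000)
no_extension = extended_expiry(date(2030, 6, 1), date(2012, 1, 1), 2000)
```

In the first case the fourteen-year cap is the binding constraint and the extended expiry is 2034-06-01; in the second, the product already has more than fourteen years of post-approval patent life, so the expiry stays at 2030-06-01.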

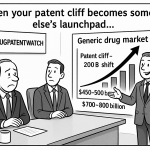

Databases like DrugPatentWatch and Cortellis IP track PTEs and PTAs applied to Orange Book-listed patents, giving analysts the actual remaining exclusivity period rather than the nominal patent expiry. For a drug generating $3 billion annually, the difference between an eighteen-month and a thirty-six-month remaining exclusivity period is $4.5 billion in undiscounted revenue, or roughly $3.8 billion in present value at a standard 8% discount rate. That is not a rounding error; it is a material valuation difference, and it requires accurate patent expiry data to compute.
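The arithmetic behind this kind of comparison is worth making explicit. The sketch below assumes a flat $3 billion annual revenue stream, an 8% annual discount rate, and monthly mid-period discounting; the function name and the discounting convention are illustrative choices, not a standard prescribed by any database vendor.

```python
def pv_of_revenue(annual_revenue: float, start_year: float, end_year: float,
                  discount_rate: float, steps_per_year: int = 12) -> float:
    """Present value of level revenue earned between start_year and end_year."""
    n_steps = round((end_year - start_year) * steps_per_year)
    per_step = annual_revenue / steps_per_year
    total = 0.0
    for i in range(n_steps):
        # Discount each month's revenue from the middle of that month.
        t = start_year + (i + 0.5) / steps_per_year
        total += per_step / (1 + discount_rate) ** t
    return total


# Extra exclusivity: months 18 through 36, revenue in $ billions.
undiscounted_gap = 3.0 * (3.0 - 1.5)
discounted_gap = pv_of_revenue(3.0, start_year=1.5, end_year=3.0, discount_rate=0.08)
```

The undiscounted gap is $4.5 billion (eighteen months at $3 billion per year); discounting at 8% brings the present value of those extra months to roughly $3.8 billion under these assumptions.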

Secondary Patents and Evergreening: Extending the Asset Value

Secondary patents, often described collectively as ‘evergreening’ when used strategically to extend commercial protection, cover everything that is not the active ingredient itself. Common categories include polymorphic forms (specific crystalline structures of the API), salt or ester forms, prodrug forms, pharmaceutical compositions (specific formulations), dosage forms and regimens, methods of treatment, metabolites, and combination products.

The commercial significance of a well-executed secondary patent strategy is substantial. AstraZeneca’s esomeprazole (Nexium) is the textbook case: the company filed secondary patents on the S-enantiomer of omeprazole after the racemic mixture’s CoM patent began facing Paragraph IV challenges, extending effective commercial protection by roughly a decade. The secondary patent strategy generated an estimated $20 billion in additional global revenue before Nexium’s last relevant patents expired. Not every secondary patent strategy is as successful, but the principle is well-established: secondary patents add measurable economic value to a drug asset, and that value can be quantified using patent database data.

To value secondary patents, analysts need to know which Orange Book patents are secondary (formulation, method of use, or polymorph claims rather than CoM claims), what Paragraph IV challenges have been filed against them, and what the litigation track record for similar secondary patents in the same therapeutic class looks like. Patent challenge success rates vary substantially by patent type: composition-of-matter patents survive Paragraph IV challenges at much higher rates than formulation or polymorph patents. Using DrugPatentWatch to pull the litigation history for all Paragraph IV challenges in a therapeutic class over the past decade generates a base rate that can be used as a prior probability of invalidity for individual secondary patents.
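The base-rate approach can be sketched in a few lines. The litigation outcomes below are invented purely for illustration and do not reflect real challenge statistics; in practice the records would come from a patent litigation dataset, and small samples would call for smoothing or wider uncertainty bands.

```python
from collections import Counter


def invalidation_base_rates(outcomes):
    """Fraction of challenged patents invalidated, grouped by patent type."""
    totals, invalidated = Counter(), Counter()
    for patent_type, was_invalidated in outcomes:
        totals[patent_type] += 1
        if was_invalidated:
            invalidated[patent_type] += 1
    return {ptype: invalidated[ptype] / totals[ptype] for ptype in totals}


def risk_adjusted_value(value_if_upheld: float, p_invalid: float) -> float:
    """Expected value of a patent's protection given a prior invalidity probability."""
    return value_if_upheld * (1.0 - p_invalid)


# Hypothetical outcomes: (patent type, invalidated?). Not real data.
outcomes = [
    ("formulation", True), ("formulation", True),
    ("formulation", True), ("formulation", False),
    ("composition", False), ("composition", False),
    ("composition", True), ("composition", False),
]
rates = invalidation_base_rates(outcomes)
formulation_value = risk_adjusted_value(2.0, rates["formulation"])
```

With these made-up inputs, a formulation patent nominally protecting $2.0 billion of revenue carries an expected value of $0.5 billion once the 75% prior probability of invalidity is applied.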

Supplementary Protection Certificates in Europe

In the European Union and European Economic Area, supplementary protection certificates (SPCs) replicate the function of US patent term extensions. An SPC can extend the effective protection of a pharmaceutical product patent for up to five years beyond the basic patent’s expiry (or fifteen years from first marketing authorization in the EU, whichever is shorter). A pediatric extension can add a further six months.
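The SPC limits described above reduce to taking the earlier of two dates. The sketch below is a simplified illustration with hypothetical dates and function names; it ignores zero-term and negative-term edge cases and any national variations.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of the month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def spc_expiry(patent_expiry: date, first_eu_authorization: date,
               pediatric_extension: bool = False) -> date:
    """Earlier of patent expiry + 5 years and first authorization + 15 years."""
    cap_from_patent = add_months(patent_expiry, 5 * 12)
    cap_from_authorization = add_months(first_eu_authorization, 15 * 12)
    expiry = max(min(cap_from_patent, cap_from_authorization), patent_expiry)
    if pediatric_extension:
        expiry = add_months(expiry, 6)  # six-month pediatric extension
    return expiry


# Hypothetical product: basic patent expires 2030-03-15, first EU approval 2018-09-01.
base = spc_expiry(date(2030, 3, 15), date(2018, 9, 1))
with_pediatric = spc_expiry(date(2030, 3, 15), date(2018, 9, 1), pediatric_extension=True)
```

Here the fifteen-year limit binds, giving protection to 2033-09-01, or 2034-03-01 with the pediatric extension.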

SPC tracking requires EU-specific data sources. DrugPatentWatch covers SPCs for major European markets. Cortellis IP provides comprehensive SPC data across the EEA with national-level granularity. The commercial value of SPC coverage for a European blockbuster is comparable to that of a US patent term extension: each year of SPC protection on a drug generating EUR 1 billion annually in EU revenue is worth approximately EUR 800 million to 900 million net present value, after accounting for generic entry following SPC expiry.

IP Valuation for Biologics: A Different Framework

Biologics are distinct from small molecules in their IP valuation framework. A large molecule therapeutic protein, monoclonal antibody, or ADC does not have a composition-of-matter patent in the classical chemistry sense: biological sequences can be patented, but the patent landscape around a biologic is typically more complex and less clean than for a small molecule.

The key valuation considerations for biologics are the composition claims on the protein sequence and its modifications, the formulation patents covering the specific excipient compositions needed for stability, the manufacturing process patents covering the expression systems and purification methods, the method-of-treatment patents covering specific dosing regimens, and the data exclusivity periods conferred by regulatory frameworks independent of patent protection. In the United States, the BPCIA grants twelve years of reference product exclusivity to biologics from the date of first approval, regardless of patent status. In the EU, this exclusivity period is ten years (eight years of data exclusivity plus two years of market exclusivity).

Biosimilar interchangeability designation, a distinct regulatory designation in the United States that allows pharmacists to substitute a biosimilar for the reference biologic without physician intervention, adds a further commercial wrinkle. The first biosimilar approved as interchangeable with a reference product receives a 12-month period of exclusivity, creating a tiered market entry structure. The Purple Book tracks interchangeability designations and biosimilar application statuses. DrugPatentWatch and Citeline’s Inteleos integrate Purple Book data with patent and litigation data for a complete biosimilar entry intelligence picture.

Investment Strategy: IP Valuation for Portfolio Managers

For institutional investors pricing pharmaceutical assets, chemical database intelligence provides three direct inputs.

First, accurate patent expiry data quantifies the length of the revenue generation window. A drug with three years of remaining CoM exclusivity and no secondary patent coverage is worth structurally less than a drug with three years of CoM exclusivity plus five years of formulation patent coverage backed by a strong litigation track record. The difference in discounted cash flow terms can be fifty percent or more of enterprise value.

Second, Paragraph IV filing data is a leading indicator of generic competition timing. When the first Paragraph IV certification is filed against a drug’s Orange Book patents, the patent holder has 45 days from receiving notice to sue, and a timely suit starts the 30-month stay clock. Generic entry can occur at the end of the stay, earlier under a favorable court decision or a settlement, or as soon as the FDA approves the ANDA if the patent holder does not sue within the 45 days. Tracking Para IV filings in real time against portfolio holdings, and cross-referencing against the historical Para IV litigation success rate for the specific patent types being challenged, gives investors a probabilistically grounded timeline for loss of exclusivity.

Third, pipeline patent activity for a company’s development-stage assets provides an early read on commercial ambition. A Phase II compound with a single CoM patent and no secondary patent filings is being developed differently than one with an active secondary patent filing program. The latter signals commercial confidence and a longer planned exclusivity horizon.
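For the second input, the key dates can be derived mechanically once the notice date is known. The sketch below is a simplified illustration: a suit filed within 45 days of the patent holder receiving notice triggers a stay running 30 months from that notice date. It ignores court-ordered changes to the stay and special cases tied to new chemical entity exclusivity, and the function names and dates are hypothetical.

```python
import calendar
from datetime import date, timedelta
from typing import Optional


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of the month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def paragraph_iv_timeline(notice_received: date, suit_filed: Optional[date]) -> dict:
    """Derive the suit deadline and, if a timely suit was filed, the stay expiry."""
    suit_deadline = notice_received + timedelta(days=45)
    stay_triggered = suit_filed is not None and suit_filed <= suit_deadline
    return {
        "suit_deadline": suit_deadline,
        "stay_triggered": stay_triggered,
        "stay_expires": add_months(notice_received, 30) if stay_triggered else None,
    }


# Hypothetical filing: notice received 2025-01-10.
timely = paragraph_iv_timeline(date(2025, 1, 10), suit_filed=date(2025, 2, 14))
late = paragraph_iv_timeline(date(2025, 1, 10), suit_filed=date(2025, 3, 15))
```

In the timely case the stay runs to 2027-07-10; in the late case no stay applies, which is exactly the scenario in which an investor's loss-of-exclusivity estimate should move earlier.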

Section 8: Evergreening, Secondary Patents, and the Biologics Lifecycle Roadmap

The Evergreening Technology Roadmap for Small Molecules

Evergreening is a word used neutrally in patent strategy circles and critically in health policy ones. From a pure IP perspective, it refers to the systematic use of secondary patents to extend the revenue-generating period of a pharmaceutical asset beyond the expiry of the primary CoM patent. The mechanisms are well-documented and extensively used by originator companies.

The small molecule evergreening technology roadmap follows a standard sequence, with each step adding incremental IP protection while creating barriers to generic entry.

Polymorph patents are typically the first secondary patent type filed. An API can exist in multiple crystalline forms (polymorphs), each with distinct physical properties including stability, solubility, and bioavailability. Patents covering specific polymorphs (particularly Form I, which is often the commercially preferred form) are filed during late preclinical or early clinical development, when the preferred crystalline form for the final drug product is determined. Polymorph patents are listed in the Orange Book if the approved product uses that specific form. A generic manufacturer whose ANDA product uses a polymorph claimed in Orange Book-listed patents must wait for those patents to expire or certify that they are invalid or not infringed; many instead formulate with a different crystalline form and certify non-infringement.

Salt and ester patents cover alternative chemical forms of the API that may offer improved solubility or stability. These are typically filed alongside or shortly after polymorph patents.

Formulation patents cover the specific excipient composition, coating technology, modified-release mechanism, or particle size distribution of the final drug product. These are particularly common for drugs with challenging physicochemical properties, where the formulation development represents genuine technical innovation. Formulation patents can extend effective protection five to eight years beyond CoM expiry, though they are more vulnerable to Paragraph IV challenges than CoM patents.

Dosage regimen patents cover the specific dosing schedule (daily vs. weekly vs. monthly) and patient population parameters (weight-based dosing, pediatric dosing) that are optimized during clinical trials. These are listed in the Orange Book as method-of-use patents and are among the most litigated categories.

Combination product patents cover fixed-dose combinations (FDCs) of the innovator drug with one or more companion drugs. FDCs often generate patient convenience benefits and can command price premiums. The combination product may carry a separate Orange Book listing with its own set of patents.

Metabolite patents cover active metabolites identified during metabolism studies. If the primary metabolite of a drug has independent therapeutic activity, it may be separately patentable. AstraZeneca’s move from omeprazole to esomeprazole, noted above, follows a related logic, although esomeprazole is the S-enantiomer of omeprazole rather than a metabolite.

The Biologics Lifecycle Roadmap: BPCIA, Data Exclusivity, and the Patent Dance

The biologics lifecycle roadmap is structurally different from the small molecule roadmap because the underlying IP framework is different. The Biologics Price Competition and Innovation Act (BPCIA), enacted as part of the Affordable Care Act in 2010, created the US regulatory framework for biosimilar applications. It also created a complex multi-step IP negotiation process commonly called the ‘patent dance.’

The patent dance requires biosimilar applicants to share their abbreviated BLA with the reference product sponsor (RPS) after FDA acceptance of the application. The RPS then identifies which of its patents it believes are infringed by the proposed biosimilar. The parties negotiate a list of patents for immediate litigation and a list for later litigation. This process creates a structured IP disclosure that is not required under the Hatch-Waxman framework for small molecules.

For originator companies, the biologics lifecycle roadmap has five primary components.

The initial biological composition claims cover the amino acid sequence of the therapeutic protein, its glycosylation pattern (where applicable), and the antibody format for monoclonal antibodies. These are the primary IP assets and are the hardest for biosimilar manufacturers to design around.

The manufacturing process patents cover the expression systems (CHO cells, E. coli, yeast), upstream bioreactor conditions, purification processes, and viral clearance steps. These are particularly important because slight variations in manufacturing conditions can affect the glycosylation profile and, consequently, the biological activity and safety profile of the product.

The formulation patents cover the specific buffer compositions, excipients, and pH ranges that maintain the biologic’s stability in its commercial presentation.

The device and delivery system patents cover the prefilled syringe, autoinjector, or pen device used for self-administration, which is a key patient convenience differentiator for subcutaneous biologics.

Finally, biosimilar interchangeability exclusivity, discussed above, provides the first approved interchangeable biosimilar with 12 months of exclusivity before a second interchangeable can be approved.

The database resources tracking biologics IP have grown substantially since BPCIA. The Purple Book provides the official FDA record of reference product exclusivity expiry and biosimilar approval status. DrugPatentWatch, BioPhorum, and Cortellis IP provide patent family maps, patent dance correspondence tracking (where public), and litigation data for major biologic reference products.

Inter Partes Review and PTAB: The Generic Company’s Weapon

The America Invents Act of 2011 created inter partes review (IPR) and post-grant review (PGR) proceedings before the Patent Trial and Appeal Board (PTAB), giving parties (including generic and biosimilar companies) a faster, cheaper administrative route to challenge patent validity than district court litigation.

PTAB proceedings have substantially altered the pharmaceutical IP landscape. Between 2012 and 2024, the institution rate for pharmaceutical IPRs was approximately 65 to 70 percent, and final written decisions finding claims unpatentable have occurred at rates that make PTAB a credible threat to secondary pharmaceutical patents. Polymorph and formulation patents are particularly vulnerable; the PTAB has found claims unpatentable in a significant fraction of challenged secondary pharmaceutical patents on grounds of obviousness or anticipation.

For generic entry strategy, PTAB proceedings are an alternative or complement to district court Paragraph IV litigation. They proceed on a shorter statutory timeline (institution decision within six months of petition, final written decision within twelve months of institution) and carry potentially lower legal costs than full district court litigation. Patent databases tracking PTAB filings and outcomes, including DrugPatentWatch’s PTAB data layer and PTAB.us, give both brand and generic companies real-time visibility into the challenge landscape.

Key Takeaways: Section 8

Evergreening is not a monolithic strategy; it is a sequenced IP engineering program with specific patent types filed at specific development milestones. The commercial value of each evergreening layer can be estimated from patent database data combined with Paragraph IV litigation base rates for the relevant patent type. Biologics follow a distinct IP roadmap governed by BPCIA, data exclusivity, and the patent dance, with manufacturing and formulation patents playing a larger relative role than in small molecule programs. PTAB proceedings have created a new competitive dynamic that has shifted the risk-adjusted value of secondary pharmaceutical patents downward relative to pre-AIA assessments.

Section 9: Building a Unified Pharma Knowledge Graph

The Data Silo Problem in Pharmaceutical R&D

Large pharmaceutical companies generate and store data across dozens of incompatible systems. Electronic lab notebooks capture synthesis and assay results. LIMS platforms hold analytical chemistry data. Clinical trial management systems hold patient data. Regulatory submission platforms hold safety and efficacy dossiers. Commercial databases hold external scientific and patent data. These systems rarely share a common data model, often use inconsistent compound identifiers, and require manual effort to cross-reference.

The consequence is that answering multi-system questions, the questions most relevant to strategic decisions, requires weeks of manual data extraction and integration by a team of data scientists rather than minutes of automated query execution. GlaxoSmithKline has publicly cited managing more than 2,100 data silos containing over 8 petabytes of clinical trial data. That volume of siloed data is effectively inaccessible for cross-trial analysis, which means that insights about drug mechanisms, patient subpopulation responses, and safety signals that could be derived from the aggregate dataset are systematically missed.

Ontologies as the Universal Language

Solving the silo problem requires two things: a common data language (ontologies) and a data architecture designed to store relationships (knowledge graphs).

Biomedical ontologies provide controlled, machine-readable vocabularies for entities and their relationships. The Gene Ontology (GO) defines biological processes, molecular functions, and cellular components. ChEBI (Chemical Entities of Biological Interest) provides a structured hierarchy of chemical substances. The Disease Ontology links disease terms across ICD, OMIM, and MeSH. The Unified Medical Language System (UMLS) provides cross-terminology mapping across 200-plus biomedical vocabularies.

In practice, ontologies solve the synonym resolution problem. The same drug entity may appear as ‘imatinib,’ ‘Gleevec,’ ‘STI-571,’ or ‘CHEBI:45783’ depending on which database generated the record. An ontology that formally maps these identifiers to a single canonical entity allows an automated system to recognize them as the same compound and aggregate all associated data.
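A minimal sketch of synonym resolution follows. The internal identifier `CMPD-000001`, the lookup table, and the source records are hypothetical; a production system would draw the mappings from a maintained ontology or registry rather than a hand-written dictionary.

```python
from collections import defaultdict

# Hypothetical mapping from normalized synonyms to one canonical internal ID.
SYNONYMS = {
    "imatinib": "CMPD-000001",
    "gleevec": "CMPD-000001",
    "sti-571": "CMPD-000001",
    "chebi:45783": "CMPD-000001",
}


def canonical_id(name: str):
    """Resolve a name or external identifier to the canonical ID, if known."""
    return SYNONYMS.get(name.strip().lower())


def aggregate(records):
    """Group (name, payload) records by canonical ID; collect unresolved names."""
    by_compound, unresolved = defaultdict(list), []
    for name, payload in records:
        cid = canonical_id(name)
        if cid is None:
            unresolved.append(name)
        else:
            by_compound[cid].append(payload)
    return dict(by_compound), unresolved


# Hypothetical records arriving from four different source systems.
records = [
    ("Imatinib", "assay result"),
    ("Gleevec", "label text"),
    ("STI-571", "patent example"),
    ("CHEBI:45783", "ontology entry"),
    ("unknown-compound", "orphan record"),
]
merged, unresolved = aggregate(records)
```

Keeping unresolved names in a separate list rather than silently dropping them gives curators a work queue, which is usually where the real effort in entity resolution goes.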

Graph Databases: Native Relationship Storage

Traditional relational databases store data in rows and columns and express relationships through table joins. For queries involving multiple hops across biological entities (compound inhibits target; target is expressed in tissue; tissue is affected in disease; disease is treated by compound class), relational joins become computationally expensive and query logic becomes unwieldy.

Graph databases (Neo4j, Amazon Neptune, ArangoDB) store entities as nodes and relationships as first-class edges. A query that asks ‘find all compounds in our internal library that inhibit a kinase in the EGFR family, have a predicted IC50 below 100nM against EGFR, and do not have prior art in any granted US patent filed before 2020’ executes as a graph traversal. The same query in a relational system requires complex multi-table joins and, depending on schema and hop count, can run one to two orders of magnitude slower.
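To show what a multi-hop traversal looks like without committing to any vendor's query language, the sketch below uses a small in-memory graph in plain Python. The `Graph` class, the node names, and the edges are toy assumptions for illustration; a graph database would express the same walk declaratively and index it for scale.

```python
from collections import defaultdict


class Graph:
    """Toy labeled graph: edges are stored per (source node, relation)."""

    def __init__(self):
        self._edges = defaultdict(list)

    def add(self, source: str, relation: str, target: str) -> None:
        self._edges[(source, relation)].append(target)

    def follow(self, nodes, relation: str):
        """Follow one relation from every node, preserving order, no duplicates."""
        seen, out = set(), []
        for node in nodes:
            for target in self._edges.get((node, relation), []):
                if target not in seen:
                    seen.add(target)
                    out.append(target)
        return out

    def traverse(self, start: str, relations):
        """Walk a chain of relations, one hop per relation."""
        frontier = [start]
        for relation in relations:
            frontier = self.follow(frontier, relation)
        return frontier


# Toy data: compound -> target -> tissue -> disease.
g = Graph()
g.add("CPD-1", "inhibits", "EGFR")
g.add("CPD-1", "inhibits", "ERBB2")
g.add("EGFR", "expressed_in", "lung epithelium")
g.add("ERBB2", "expressed_in", "breast epithelium")
g.add("lung epithelium", "affected_in", "non-small cell lung cancer")
g.add("breast epithelium", "affected_in", "breast cancer")

diseases = g.traverse("CPD-1", ["inhibits", "expressed_in", "affected_in"])
```

Each hop is a dictionary lookup from the current frontier rather than a join over whole tables, which is the structural reason traversal cost tracks the size of the neighborhood visited instead of the size of the dataset.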

Case Study: BenevolentAI and Baricitinib

The most widely cited pharmaceutical knowledge graph case study is BenevolentAI’s identification of baricitinib as a potential COVID-19 treatment in February 2020. BenevolentAI’s platform is a biomedical knowledge graph containing hundreds of millions of entities and relationships derived from the scientific literature, clinical trial databases, patent records, and chemical databases.

When COVID-19 was identified as caused by a novel coronavirus using ACE2 receptor-mediated cell entry, BenevolentAI queried their knowledge graph for drugs that modulate proteins involved in the cellular entry and replication pathway of the virus. The query identified baricitinib, an approved JAK1/JAK2 inhibitor used in rheumatoid arthritis, as a candidate that could potentially block viral entry and attenuate the cytokine storm associated with severe COVID-19. The in-silico identification took days. Eli Lilly initiated clinical trials; the FDA granted baricitinib emergency use authorization for hospitalized COVID-19 patients in November 2020 and full approval in May 2022. This outcome represents a multi-year acceleration of the drug repurposing timeline compared to conventional discovery methods.

Building the Proprietary Data Moat

The knowledge graph architecture is how an organization converts the commoditized external data in public and commercial databases into a proprietary strategic asset. The steps are straightforward in principle and complex in execution.

The foundation is a unified compound registry that assigns persistent internal identifiers to every compound the organization has ever synthesized, screened, or considered. This registry resolves synonyms, handles stereochemistry consistently, and maps internal IDs to external identifiers in PubChem, ChEMBL, DrugBank, and patent databases. Without this foundation, every downstream integration effort is contaminated by identifier ambiguity.

Above the compound registry, the knowledge graph ingests curated data from the sources appropriate to each program: bioassay results from internal HTS campaigns, target structure data from the PDB, binding affinity data from BindingDB, clinical pharmacology data from DrugBank, and patent claim data from DrugPatentWatch. Each data point enters with provenance metadata: source, date, assay conditions, data quality flags.
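One way to make provenance non-optional is to put it in the record type itself, so a data point cannot enter the graph without it. The sketch below is illustrative only: the field names, the flag vocabulary, and the example values are assumptions, not a schema used by any of the databases named above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DataPoint:
    compound_id: str          # internal registry identifier
    property_name: str
    value: float
    units: str
    source: str               # required provenance: where the value came from
    recorded_on: date         # required provenance: when it was recorded
    assay_conditions: str = ""
    quality_flags: tuple = ()


def trusted(points, excluded_flags=frozenset({"unverified"})):
    """Keep only data points that carry none of the excluded quality flags."""
    return [p for p in points if not excluded_flags.intersection(p.quality_flags)]


# Hypothetical data points for one internal compound.
points = [
    DataPoint("CMPD-000001", "IC50", 25.0, "nM", "internal HTS", date(2024, 5, 2),
              assay_conditions="10-point dose response"),
    DataPoint("CMPD-000001", "IC50", 400.0, "nM", "literature extract", date(2023, 1, 9),
              quality_flags=("unverified",)),
]
usable = trusted(points)
```

Because `source` and `recorded_on` have no defaults, constructing a `DataPoint` without them raises an error at ingestion time, which is cheaper than discovering untraceable values during an audit.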

The uniquely valuable layer is the organization’s own unpublished experimental data: proprietary screening results, internal ADMET assay data, synthesis route information, and clinical trial biomarker data. This layer cannot be replicated by any competitor regardless of their database subscriptions, because it does not exist anywhere else. The knowledge graph that integrates public data with this proprietary layer creates a competitive intelligence resource that reflects the organization’s specific discovery history and institutional knowledge.

Key Takeaways: Section 9

Data silos are a strategic tax on pharmaceutical R&D productivity. The knowledge graph architecture, built on ontology-based entity resolution and native relationship storage, is the standard approach for connecting those silos. The competitive advantage is not in the external databases that feed the graph, which are accessible to any well-funded competitor. It is in the unique integration of proprietary experimental data with external data at scale, and in the AI and analytics infrastructure applied to the integrated dataset. Building this capability is a multi-year investment. Organizations that have done it demonstrate the ability to run drug repurposing analyses, safety signal detection, and competitive intelligence workflows that are impossible with conventional siloed architectures.

Section 10: AI as a Data Amplifier, Not a Magic Bullet

The AI-Database Symbiosis

Framing AI and chemical databases as competing approaches is a category error. AI models do not replace databases; they require them. A graph neural network trained to predict kinase IC50 values learns by analyzing thousands of kinase inhibitor structures paired with their experimentally measured IC50 values. That training data comes from ChEMBL and BindingDB. A generative model that designs novel scaffolds optimized for a specific target profile learns the structural rules of drug-like chemistry from the millions of drug-like structures catalogued in PubChem and ChEMBL. Without the underlying databases, the AI models are models without training data: formally sophisticated structures with nothing to learn from.

The correct framing is symbiotic. High-quality, curated chemical databases are the prerequisite for capable AI models, and capable AI models are the highest-leverage tool for extracting actionable intelligence from those databases at the scale required by modern drug discovery.

Virtual Screening at Scale

Traditional experimental HTS against a target assays hundreds of thousands of compounds, constrained by the physical compound library, the throughput of the assay platform, and the reagent and consumable costs associated with each assay point. Virtual screening computationally evaluates millions to billions of candidate structures against a target model, ranking them by predicted affinity before any physical synthesis.

The two dominant virtual screening paradigms are structure-based virtual screening (SBVS), which uses the 3D structure of the target binding site from the PDB and computational docking to predict binding poses and scores, and ligand-based virtual screening (LBVS), which uses known active compounds from ChEMBL or BindingDB to define the pharmacophore requirements and searches for structurally similar or shape-complementary candidates.
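The similarity-search half of LBVS can be illustrated with the Tanimoto coefficient, a standard fingerprint similarity measure. The Python sketch below assumes fingerprints have already been computed elsewhere and are supplied as sets of on-bit indices; the function names and the 0.5 threshold are illustrative choices, not recommended settings.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(query_fp, library, threshold=0.5):
    """Return (compound_id, similarity) pairs at or above threshold, best first."""
    scored = [(cid, tanimoto(query_fp, fp)) for cid, fp in library.items()]
    hits = [(cid, s) for cid, s in scored if s >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)
```

In practice the query fingerprint would come from a known active in ChEMBL or BindingDB and the library from a vendor or virtual catalogue. Shape-based and pharmacophore searches use different scoring functions but follow the same rank-and-threshold pattern.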

Machine learning-enhanced virtual screening adds a third paradigm: training a neural network on the known active and inactive compounds for a target to build a classifier that can score the probability of activity for any novel compound. In ‘active learning’ variants of this approach, the workflow cycles iteratively between computational scoring, physical synthesis and testing of top-ranked candidates, and retraining of the model on the new experimental data. Companies including Recursion Pharmaceuticals, Exscientia, and Insilico Medicine have built their discovery platforms around this iterative ML workflow.
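The iterative cycle can be sketched in a few lines of Python. Everything here is a simplifying assumption: compounds are reduced to a single numeric descriptor, a 1-nearest-neighbor lookup stands in for the neural network, and the `oracle` callable stands in for physical synthesis and assay. It is not a description of any named company's platform.

```python
def predict(x, labeled):
    """1-nearest-neighbor surrogate: use the activity of the closest tested compound."""
    nearest = min(labeled, key=lambda known_x: abs(known_x - x))
    return labeled[nearest]


def active_learning(pool, oracle, seed, rounds=3, batch_size=2):
    """Cycle: score untested pool, test the top-ranked batch, add results, rescore."""
    labeled = {x: oracle(x) for x in seed}
    untested = [x for x in pool if x not in labeled]
    for _ in range(rounds):
        if not untested:
            break
        ranked = sorted(untested, key=lambda x: predict(x, labeled), reverse=True)
        batch = ranked[:batch_size]
        for x in batch:
            labeled[x] = oracle(x)  # stands in for synthesis and assay
        untested = [x for x in untested if x not in batch]
    return labeled
```

Because the surrogate here is just a lookup over tested compounds, adding new results to `labeled` is the retraining step. This sketch always picks the highest-scoring candidates; real workflows typically also reserve part of each batch for uncertain or structurally diverse compounds so the model does not over-exploit one region of chemical space.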

Generative Molecular Design

Generative AI models, including variational autoencoders, generative adversarial networks, and diffusion models applied to molecular design, can propose novel structures optimized for multiple specified properties simultaneously. A medicinal chemist can specify target potency thresholds (e.g., IC50 < 10 nM), selectivity requirements against off-targets, ADMET property constraints (e.g., aqueous solubility > 50 micromolar, CYP3A4 clearance < 30 microliter/minute/mg), synthetic accessibility scores, and novelty constraints relative to existing IP. The model generates candidate structures predicted to satisfy all constraints; those predictions still require experimental confirmation.
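The constraint set itself is easy to express in code, even though the generative model is not. The Python sketch below is a post-hoc filter over already-generated candidates with predicted properties, not a generative model. The potency, solubility, and clearance thresholds are the examples given above; the novelty field and its 0.4 cutoff are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    ic50_nm: float                     # predicted potency, nM
    solubility_um: float               # predicted aqueous solubility, micromolar
    clearance_ul_min_mg: float         # predicted clearance, microliter/minute/mg
    max_similarity_to_patented: float  # 0..1, versus known patented structures


CONSTRAINTS = {
    "potency": lambda c: c.ic50_nm < 10,
    "solubility": lambda c: c.solubility_um > 50,
    "clearance": lambda c: c.clearance_ul_min_mg < 30,
    "novelty": lambda c: c.max_similarity_to_patented < 0.4,  # illustrative cutoff
}


def failed_constraints(candidate):
    """List the names of every constraint the candidate violates."""
    return [name for name, check in CONSTRAINTS.items() if not check(candidate)]


def filter_candidates(candidates):
    """Keep only candidates that violate no constraint."""
    return [c for c in candidates if not failed_constraints(c)]
```

Reporting which constraints failed, rather than a bare pass or fail, is useful in practice: it tells the chemist whether a near-miss candidate is one modification away from the target profile.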

This capability represents a qualitative shift in discovery chemistry. Conventional SAR-driven lead optimization explores chemical space in the neighborhood of a known scaffold by making incremental structural changes. Generative design can explore regions of chemical space far from known scaffolds, potentially identifying novel chemotypes with properties unreachable by iterative modification of existing leads. This matters for IP: a genuinely novel scaffold, with no structural similarity to existing patents, is a composition-of-matter patent opportunity rather than a freedom-to-operate problem.

The data requirement for generative molecular design is demanding: the model needs large, diverse, high-quality training sets covering the chemistry and biology relevant to the target class of interest. This is why the commercial viability of AI-native drug discovery companies depends on their database access strategy. Companies like Insilico Medicine have negotiated access to premium curated datasets beyond the public repositories to give their models a richer training signal.

AI-Driven Retrosynthesis

Synthesis planning, the process of designing a sequence of chemical reactions that converts available starting materials into a target molecule, is one of the most experience-intensive tasks in pharmaceutical chemistry. AI-driven retrosynthesis tools, trained on the tens of millions of reactions catalogued in Reaxys and CAS SciFinder, have reduced the time required to propose a viable synthetic route for a novel analog from days to minutes.
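The underlying search problem can be shown with a toy example. The Python sketch below is a plain depth-first search over a hand-written lookup table of disconnections. It is not how Reaxys, CAS SciFinder, or any commercial tool works internally: those systems rely on learned models and far more sophisticated search. The `templates` table, the compound names, and the `purchasable` set are all hypothetical.

```python
def plan_route(target, templates, purchasable, max_depth=5):
    """Return a list of (product, precursors) steps, or None if no route is found.

    templates maps a product name to a list of alternative precursor tuples.
    Steps are ordered so that each intermediate is made before it is used.
    """
    if target in purchasable:
        return []  # nothing to synthesize
    if max_depth == 0:
        return None
    for precursors in templates.get(target, []):
        route = []
        for precursor in precursors:
            sub_route = plan_route(precursor, templates, purchasable, max_depth - 1)
            if sub_route is None:
                break  # this disconnection is a dead end; try the next one
            route.extend(sub_route)
        else:
            return route + [(target, precursors)]
    return None
```

The search stops at the first complete route it finds. A practical planner would instead score alternative routes by step count, predicted yield, and starting material cost before recommending one.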

The commercial impact is measurable. Pfizer has reported internal data showing that AI-assisted synthesis planning reduced the average cycle time for analog preparation by 30 to 40 percent in lead optimization campaigns. At the scale of large pharma, that efficiency gain across hundreds of simultaneous analogs represents tens of millions of dollars in annual productivity improvement and a direct acceleration of lead optimization campaign timelines.

Key Takeaways: Section 10

AI amplifies the value of high-quality chemical databases; it does not replace them. The quality of an AI model’s predictions is bounded by the quality and scope of its training data. Organizations that invest in curated, FAIR-compliant databases are also investing in the AI models that will be trained on them. Virtual screening, generative molecular design, and AI-driven retrosynthesis are the three most commercially mature AI applications in drug discovery, and all three are fundamentally dependent on the database infrastructure covered in this guide.

Section 11: FAIR Principles as a Business Imperative

What FAIR Actually Means for R&D Operations

The FAIR Guiding Principles, published in Scientific Data in 2016, define a set of properties for scientific data that make it machine-actionable: Findable, Accessible, Interoperable, and Reusable. The language is technical, but the business case is direct.

Findable means that data and its metadata carry globally unique, persistent identifiers (such as DOIs for datasets or CAS Registry Numbers for chemical compounds) and are indexed in searchable repositories. Without findability, a researcher who has not personally generated a dataset cannot locate it, even within the same organization.

Accessible means that data can be retrieved by its identifier through a standardized protocol, with authentication mechanisms that allow controlled access where needed. A dataset accessible only through a local file system on a specific scientist’s workstation is not accessible in the FAIR sense, even if it technically exists.

Interoperable means that data uses formal, shared vocabularies and ontologies such that a computational system can interpret its meaning without human mediation. A bioassay result reported as ‘active’ in one database and ‘hit’ in another cannot be automatically integrated unless both terms map to the same ontology concept.
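The ‘active’ versus ‘hit’ example can be sketched as a vocabulary mapping applied before integration. In this Python illustration the concept identifiers are invented placeholders; a real deployment would map to terms from a published ontology, and the function names are assumptions.

```python
# Hypothetical concept IDs; a real deployment would use published ontology terms.
TERM_TO_CONCEPT = {
    "active": "CONCEPT:assay_outcome_active",
    "hit": "CONCEPT:assay_outcome_active",
    "inactive": "CONCEPT:assay_outcome_inactive",
    "no activity": "CONCEPT:assay_outcome_inactive",
}


def normalize_outcome(raw_term):
    """Map a source-specific outcome label to a shared concept ID."""
    key = raw_term.strip().lower()
    if key not in TERM_TO_CONCEPT:
        raise KeyError(f"unmapped assay outcome term: {raw_term!r}")
    return TERM_TO_CONCEPT[key]


def merge_results(*sources):
    """Merge (compound_id, raw_term) rows from several sources into concept-coded rows."""
    return [(cid, normalize_outcome(term)) for rows in sources for cid, term in rows]
```

Raising an error on an unmapped term, rather than passing it through, is deliberate in this sketch: a silent pass-through is how inconsistent vocabularies leak into an integrated dataset.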

Reusable means that data carries rich provenance metadata, quality indicators, and clear data usage licenses so that future users can assess its fitness for their purpose and know what they are permitted to do with it. This is the dimension most commonly neglected in pharmaceutical data management: datasets without provenance metadata are scientifically uninterpretable by anyone other than their original generator.

FAIR Data as AI-Ready Data

The practical implication of machine-actionability is this: FAIR data can be automatically ingested, parsed, and analyzed by AI and machine learning systems without manual preprocessing. Non-FAIR data requires a human data scientist to locate, reformat, validate, and integrate it before analysis can begin. Industry estimates consistently place data wrangling at 60 to 80 percent of total data scientist time in non-FAIR environments. That percentage represents a direct productivity tax on the AI capabilities an organization has invested in building.

AstraZeneca’s experience illustrates the operational difference. The company’s Drug Safety and Metabolism group reported that implementing FAIR metadata standards across their DMPK datasets reduced data retrieval and reformatting time by approximately 50 percent and improved model reproducibility across scientific teams. The time savings freed scientists to focus on model interpretation and experimental design rather than data engineering.

The Financial Cost of Non-FAIR Data

A PricewaterhouseCoopers analysis commissioned by the European Commission estimated that non-FAIR research data costs the European economy approximately 10.2 billion euros annually. The costs break down across redundant data storage, time wasted by researchers recreating data that exists but cannot be found, retracted research caused by data integrity failures, and foregone innovation from siloed data that cannot be integrated for multi-source analysis.

The NIH’s Strategic Plan for Data Science explicitly aligns NIH-funded research data sharing with FAIR principles. Under the NIH Data Management and Sharing Policy, in effect since January 2023, applicants for NIH funding that generates scientific data must submit a Data Management and Sharing Plan, and NIH encourages data management practices consistent with FAIR. Organizations that receive NIH funding and lack FAIR-compliant data management infrastructure face growing compliance risk.

Key Takeaways: Section 11

FAIR is not an academic principle with distant practical relevance. It is the foundation that makes AI-driven pharmaceutical R&D possible at scale. Non-FAIR data environments impose measurable time and financial penalties, reduce the quality of AI model training data, and create increasing regulatory compliance risk as funders mandate FAIR-aligned data sharing. Implementing FAIR data standards is a prerequisite for realizing the full value of both the database investments and the AI investments described in this guide.

Section 12: A Cost-Benefit Framework for Database Investment Decisions

Step 1: Map Tasks to Decision Stakes

Not all database tasks carry the same financial or legal risk. A first-pass virtual screen to identify possible hits for an early discovery project carries low decision stakes: the compound library is virtual, no synthesis has been committed, and a false negative (missing a potential hit) is regrettable but not catastrophic. An FTO analysis conducted before launching a $200 million Phase III program carries extremely high decision stakes: an incorrect conclusion exposes the company to infringement claims that could block commercial launch or require costly litigation.

The first step in a cost-benefit analysis is to map each database use case against its decision stakes. High-stakes tasks justify premium tools: professional pharmaceutical IP platforms, expert-curated databases, and formal opinion counsel. Low-stakes tasks can use free or lower-cost resources without meaningful increase in portfolio risk.

Step 2: Quantify the Full Cost of Each Option

For commercial database subscriptions, the cost is visible: annual subscription fees plus training time. For ‘free’ public database strategies, the full cost requires accounting for personnel time and IT infrastructure. A data scientist earning $200,000 in fully-loaded annual compensation who spends 60 percent of their time on data wrangling from public repositories costs $120,000 annually in data engineering labor, exclusive of any value they generate. Four such scientists cost $480,000 annually in wrangling-related labor, plus cloud compute and storage costs for the data lake infrastructure. That total routinely exceeds the cost of commercial subscriptions that would provide the same data in curated, query-ready form.
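The labor arithmetic above can be reproduced with a small Python helper, which also makes it easy to substitute an organization's own figures. The function and parameter names are illustrative, and the infrastructure cost is left as an input because the text does not put a number on it.

```python
def wrangling_labor_cost(loaded_compensation, wrangling_fraction, headcount=1):
    """Annual labor cost attributable to data wrangling."""
    return loaded_compensation * wrangling_fraction * headcount


def public_strategy_cost(loaded_compensation, wrangling_fraction, headcount,
                         infrastructure_cost):
    """Wrangling labor plus cloud compute and storage for the data lake."""
    labor = wrangling_labor_cost(loaded_compensation, wrangling_fraction, headcount)
    return labor + infrastructure_cost
```

With the figures used above, `wrangling_labor_cost(200_000, 0.6)` gives the $120,000 per scientist and `wrangling_labor_cost(200_000, 0.6, headcount=4)` gives the $480,000 for a team of four. The comparison against a commercial subscription then reduces to whether `public_strategy_cost(...)` exceeds the quoted subscription fee plus training time.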

Step 3: Quantify the Value of the Benefits

Direct benefits are measurable. Reduced synthesis and screening costs from better ADMET filtering, shorter cycle times in lead optimization from AI-assisted synthesis planning, and reduced legal costs from better-documented FTO analyses all carry dollar values that can be estimated against historical baselines.

Strategic benefits require probabilistic framing. The expected value of avoiding a single late-stage clinical failure is the product of the probability that a specific failure could have been caught with better preclinical data and the cost of that failure ($500 million to $1.5 billion in sunk development costs). At a 10 percent probability of a detectable, preventable failure per clinical program, and an average failure cost of $800 million, the expected value of preclinical data investments that reduce that probability by two percentage points is $16 million per program, before any consideration of opportunity cost.
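The expected-value calculation is simple enough to write out, which helps when testing how sensitive the result is to the inputs. This Python sketch uses the figures from the paragraph above; the function names are illustrative.

```python
def expected_failure_loss(probability, failure_cost):
    """Expected loss from a failure that occurs with the given probability."""
    return probability * failure_cost


def value_of_risk_reduction(baseline_probability, reduced_probability, failure_cost):
    """Expected value gained by lowering the failure probability."""
    baseline = expected_failure_loss(baseline_probability, failure_cost)
    reduced = expected_failure_loss(reduced_probability, failure_cost)
    return baseline - reduced
```

Calling `value_of_risk_reduction(0.10, 0.08, 800_000_000)` reproduces the $16 million per program figure: an expected loss of $80 million at 10 percent falls to $64 million at 8 percent. On this framing, a preclinical data investment is justified whenever its per-program cost is below that difference, and the same function shows how quickly the case strengthens or weakens as the assumed probabilities change.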