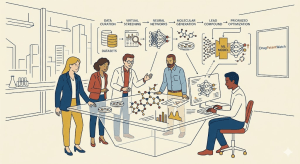

1. Eroom’s Law, the $2.6 Billion Problem, and Why Traditional R&D Has Stopped Working

Jack Scannell named Eroom’s Law in 2012, and nothing since has disproved it. The number of new molecular entities approved per billion dollars of inflation-adjusted R&D spending has fallen by half roughly every nine years since the 1950s. Moore’s Law, spelled backward, is the correct metaphor: computing gets cheaper and faster over time, while pharmaceutical discovery gets more expensive and slower. The industry has not found a technical fix for this pattern, despite the introduction of combinatorial chemistry in the 1990s, high-throughput screening in the 2000s, and the first wave of computational modeling in the 2010s.
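The halving pattern can be written as a simple exponential decay. The sketch below assumes a constant nine-year halving time, as quoted above; the function name and the example horizons are illustrative choices, not taken from Scannell's paper.

```python
def relative_efficiency(years_elapsed: float, halving_time_years: float = 9.0) -> float:
    """Fraction of the starting approvals-per-R&D-dollar efficiency that remains.

    Assumes a constant halving time, which is a simplification of the trend.
    """
    return 0.5 ** (years_elapsed / halving_time_years)


for years in (9, 18, 60):
    remaining = relative_efficiency(years)
    print(f"after {years} years: {remaining:.4f} of starting efficiency")
```

Under that assumption, 60 years of decline leaves roughly one hundredth of the starting efficiency, which is why the trend dominates any single-technology improvement.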

The 2024 Deloitte biopharma R&D report puts the average capitalized cost of bringing a new molecular entity to approval at approximately $2.6 billion when accounting for the cost of failures. The Tufts Center for the Study of Drug Development has published figures in the same range. Neither estimate includes post-approval Phase IV commitments, label-extension studies, or the regulatory affairs overhead that follows a Biologics License Application. Total development time for a new chemical entity runs 10 to 15 years from initial discovery to first commercial sale.

The attrition numbers are what drive those costs to stratospheric levels. Of every 10,000 compounds entering preclinical evaluation, roughly 250 will enter Phase I. Of those, around 10 will reach Phase II. Of those 10, around two will reach Phase III. Of those two, one will gain approval. The others fail, and each failure carries with it the sunk cost of synthesis, in vivo toxicology, formulation work, GMP manufacturing, patient recruitment, and clinical site operations. A single Phase III failure in an oncology indication can consume $300 to $800 million in direct costs and wipe out several years of team effort.

R&D return on investment hit a recorded nadir of 1.2% in 2022 before recovering to roughly 5.9% in 2024, per Deloitte’s calculations. That recovery is partly attributable to the commercial success of GLP-1 agonists and COVID-19-related revenue, not to structural improvements in discovery productivity. The underlying attrition curve has not materially improved.

Machine learning does not fix every variable in this equation, but it attacks the two most correctable ones: time spent searching unproductive chemical and biological space, and late-stage failure from pharmacokinetic or toxicological liabilities that computational methods could have flagged earlier. The McKinsey Global Institute’s estimate of $110 billion in annual economic value creation from AI across pharma is a ceiling figure, not a base case. But the mechanism of value creation is real, and it is visible in the clinical trial data from the AI-native cohort.

Key Takeaways: The Problem Framing

The central case for ML in drug discovery is not about AI being clever or disruptive. It is about a specific, quantified productivity crisis where average capitalized costs exceed $2.6 billion per approved drug, Phase III failure rates remain above 50%, and R&D ROI has spent the better part of a decade below the cost of capital. ML addresses those specific metrics through earlier and more accurate attrition, not through magic.

Investment Strategy Note

Investors evaluating AI-pharma companies should treat the $110 billion TAM figure with caution. It is not an addressable market in the conventional sense. It is a potential efficiency gain distributed across an entire industry. For asset-based valuation, the more relevant metrics are the probability-of-success uplift attributable to ML-guided discovery, the timeline compression for the preclinical-to-IND phase, and the reduction in Phase II dose-finding failures through better patient stratification. Each of those translates to a quantifiable NPV adjustment on a per-program basis.
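One way to make the per-program NPV adjustment concrete is a risk-adjusted NPV (rNPV) calculation. The sketch below is deliberately simplified: it treats development spend as certain and weights only post-approval cash flows by the probability of success. Every number in the example program is hypothetical and chosen only to show how a probability-of-success uplift and a one-year timeline compression move the result.

```python
def rnpv(dev_costs, launch_cash_flows, p_success, discount_rate):
    """Risk-adjusted NPV in the same currency units as the inputs.

    dev_costs: yearly development spend starting in year 0 (treated as certain,
        a simplification; real models make later spend conditional on success).
    launch_cash_flows: yearly net cash flows after approval, weighted by p_success.
    """
    value = 0.0
    for year, cost in enumerate(dev_costs):
        value -= cost / (1.0 + discount_rate) ** year
    launch_year = len(dev_costs)
    for offset, cash in enumerate(launch_cash_flows):
        value += p_success * cash / (1.0 + discount_rate) ** (launch_year + offset)
    return value


# Hypothetical program; all figures are in $ millions and invented for illustration.
baseline = rnpv([50] * 8, [400] * 10, p_success=0.10, discount_rate=0.10)
ml_guided = rnpv([50] * 7, [400] * 10, p_success=0.13, discount_rate=0.10)
print(f"baseline rNPV:  {baseline:8.1f}")
print(f"ML-guided rNPV: {ml_guided:8.1f}")
print(f"adjustment:     {ml_guided - baseline:8.1f}")
```

The difference between the two results is the quantity an investor would attribute to the platform for that single program, under the stated assumptions.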

2. Anatomy of the Traditional Pipeline: Where the Money Dies

Understanding the traditional pipeline in structural terms matters for anyone analyzing where ML creates value, because the leverage points are not uniformly distributed.

2.1 The Discovery Phase: Target and Hit Identification

Drug discovery begins with target selection, and target selection is still largely a biological intuition exercise. A research team identifies a protein, gene product, or signaling pathway implicated in a disease phenotype, usually through genetic association studies, functional genomics, or phenotypic screens. The target must be “druggable,” meaning it has a binding site accessible to a small molecule or biologic, and its modulation must produce a therapeutic effect without creating unacceptable collateral biology.

High-throughput screening then tests compound libraries against the validated target. A large pharma HTS operation can test one million compounds in two to three weeks at a cost of $0.20 to $0.50 per compound per assay. Even at that throughput, the hit rate is typically 0.1% to 1.0% of compounds screened, so a one-million-compound screen yields roughly 1,000 to 10,000 primary actives that require follow-up confirmation and triaging.
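The throughput, unit cost, and hit-rate ranges quoted above multiply out directly. A minimal sketch of that arithmetic for a one-million-compound screen follows; the function name is illustrative.

```python
def hts_campaign(n_compounds, cost_per_well, hit_rate):
    """Return (screening cost in dollars, expected number of primary actives)."""
    return n_compounds * cost_per_well, n_compounds * hit_rate


low_cost, low_hits = hts_campaign(1_000_000, 0.20, 0.001)
high_cost, high_hits = hts_campaign(1_000_000, 0.50, 0.010)
print(f"screening cost:  ${low_cost:,.0f} to ${high_cost:,.0f}")
print(f"primary actives: {low_hits:,.0f} to {high_hits:,.0f}")
```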

2.2 Preclinical Development: Safety Before Humans

Confirmed hits move into medicinal chemistry for hit-to-lead (H2L) optimization, where structural analogs are synthesized to improve potency, selectivity, and early ADMET properties. This phase alone consumes two to four years in a traditional program. The lead compound then enters formal preclinical development: ICH-compliant toxicology in two species, pharmacokinetic studies, formulation development, and manufacturing scale-up sufficient to support Phase I.

The IND application, or CTA in EU jurisdictions, packages all preclinical data and a Phase I protocol for regulatory review. FDA review of an original IND takes 30 days; substantive clinical holds add months. The cost of reaching IND from target identification historically runs $20 to $60 million for a small molecule and considerably more for a biologic.

2.3 Clinical Phases: The Three Failure Points

Phase I primarily answers the safety and tolerability question in a small cohort, typically 20 to 100 subjects. The failure rate here is around 30%, primarily from unexpected safety signals that animal models did not predict. Phase II tests proof-of-concept efficacy in a patient population of 100 to 500 subjects. The failure rate rises to approximately 60%, with lack of efficacy accounting for roughly half of terminations and safety for most of the remainder. Phase III, the pivotal confirmation stage, has a failure rate of around 50% in oncology and cardiovascular indications. Taken at face value, those three rates compound to a success probability of roughly 14% (0.70 × 0.40 × 0.50); commonly cited estimates of the overall probability of a Phase I entrant reaching approval are lower, at 5% to 10%.
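Compounding per-phase attrition is a product of per-phase success rates. The sketch below uses the rounded failure rates quoted above and, as a purely hypothetical scenario, a Phase II failure rate improved from 60% to 50%, to show how sensitive the overall figure is to a single phase.

```python
from math import prod

# Rounded per-phase failure rates from the text.
PHASE_FAILURE = {"Phase I": 0.30, "Phase II": 0.60, "Phase III": 0.50}


def probability_of_approval(failure_rates):
    """Multiply per-phase success rates (1 - failure rate) across all phases."""
    return prod(1.0 - rate for rate in failure_rates.values())


baseline = probability_of_approval(PHASE_FAILURE)
# Hypothetical what-if: better stratification lowers Phase II failure to 50%.
improved = probability_of_approval({**PHASE_FAILURE, "Phase II": 0.50})
print(f"baseline: {baseline:.3f}")
print(f"improved: {improved:.3f}")
```

With these inputs the baseline is about 0.14 and the what-if about 0.175, a relative gain of one quarter from a ten-point change in one phase.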

Phase III cycle times have increased consistently over the last 15 years. The average pivotal trial for an oncology indication now runs 60 to 84 months from first patient in to primary readout, partly because progression-free survival and overall survival endpoints require long follow-up windows.

2.4 Regulatory Filing and Post-Market Surveillance

An NDA or BLA filing requires assembly of the common technical document, which for a complex molecular entity can exceed 100,000 pages. FDA Priority Review takes six months from filing date; Standard Review runs 10 to 12 months. Post-approval Phase IV commitments, REMS programs, and pharmacovigilance obligations add ongoing cost after first commercial sale.

Key Takeaways: The Pipeline’s Structural Vulnerabilities

The highest-leverage ML intervention points are target validation (preventing investment in biological dead ends), ADMET prediction (avoiding late toxicological failures), and Phase II patient stratification (finding the subpopulation where the drug actually works). Each of those phases has a distinct failure mode that ML tools address through different technical mechanisms.

3. Core ML Architecture in Drug Discovery: A Technical Taxonomy

The term ‘AI’ is used loosely enough in pharma press releases to be nearly meaningless. For IP and R&D teams doing technical due diligence, the relevant distinctions are architectural. Different ML classes operate on different data types, produce different outputs, and carry different validation requirements.

3.1 Supervised Learning: The Workhorse of Property Prediction

Supervised learning trains on labeled datasets, where both the input representation (typically a molecular descriptor or fingerprint) and the target output (toxicity classification, binding affinity value, solubility) are known. The model learns a mapping function from input to output and applies it to new compounds. Algorithms commonly used include Random Forest, Gradient Boosting Machines, and Support Vector Machines for tabular molecular data.
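The input-to-label mapping can be illustrated without any chemistry toolkit. The sketch below uses a k-nearest-neighbour classifier over Tanimoto similarity as a simple stand-in for the Random Forest or Gradient Boosting models named above. The fingerprints are hand-made sets of "on" bit indices and the toxicity labels are invented, so nothing here reflects real assay data.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_predict(query, training, k=3):
    """Majority vote over the k most similar labeled training compounds."""
    ranked = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)
    nearest = ranked[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > len(nearest) else 0


# Toy labeled set: (fingerprint, label), where 1 = toxic and 0 = non-toxic (invented).
TRAINING = [
    (frozenset({1, 2, 3, 4}), 1),
    (frozenset({1, 2, 3, 5}), 1),
    (frozenset({2, 3, 4, 6}), 1),
    (frozenset({10, 11, 12}), 0),
    (frozenset({10, 12, 13}), 0),
    (frozenset({11, 12, 14}), 0),
]

print(knn_predict(frozenset({1, 2, 3, 6}), TRAINING))
print(knn_predict(frozenset({10, 11, 13}), TRAINING))
```

In practice the fingerprints would come from a cheminformatics library and the model would be validated on held-out compounds, but the structure of the problem is the same: labeled inputs in, a predicted label out.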

The limitation of supervised learning in pharma is data quality and label reliability. Publicly available datasets like ChEMBL or PubChem contain measurements from multiple assay formats, concentration ranges, and laboratory protocols, producing systematic noise. A model trained on mixed-assay hERG inhibition data will produce less reliable predictions than one trained on a single, standardized patch-clamp dataset. This distinction matters for IP teams benchmarking vendor ADMET platforms: the training data provenance, not the algorithm choice, usually determines prediction reliability.

3.2 Deep Learning: Representation Learning for Complex Biological Data

Deep learning uses multilayer neural networks to learn hierarchical feature representations directly from raw data. For molecular property prediction, Graph Neural Networks (GNNs) treat molecules as graphs, with atoms as nodes and bonds as edges, and learn task-relevant features automatically without manual descriptor engineering. For biological sequence data, Transformer architectures, the same class underlying large language models, process amino acid or nucleotide sequences and learn long-range dependencies that determine folding behavior and protein-protein interactions.
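The atoms-as-nodes, bonds-as-edges idea can be shown with one round of neighbour aggregation on a tiny molecule. This is a sketch only: it uses plain summation with no learned weights or nonlinearity, whereas a real GNN layer would apply trained transformations at each step. The one-hot feature scheme is an assumption made for illustration.

```python
# Heavy atoms of ethanol (C-C-O); features are one-hot [is_carbon, is_oxygen].
ATOM_FEATURES = [[1, 0], [1, 0], [0, 1]]
BONDS = [(0, 1), (1, 2)]


def neighbours(n_atoms, bonds):
    """Build an adjacency list from an undirected bond list."""
    adjacency = [[] for _ in range(n_atoms)]
    for a, b in bonds:
        adjacency[a].append(b)
        adjacency[b].append(a)
    return adjacency


def message_pass(features, bonds):
    """One round: each atom adds its bonded neighbours' vectors to its own."""
    adjacency = neighbours(len(features), bonds)
    updated = []
    for atom, own in enumerate(features):
        total = list(own)
        for other in adjacency[atom]:
            total = [x + y for x, y in zip(total, features[other])]
        updated.append(total)
    return updated


def readout(features):
    """Sum atom vectors into a single molecule-level vector."""
    return [sum(column) for column in zip(*features)]


hidden = message_pass(ATOM_FEATURES, BONDS)
print(hidden)           # [[2, 0], [2, 1], [1, 1]]
print(readout(hidden))  # [5, 2]
```

After one round, each atom's vector already encodes its immediate chemical environment; stacking rounds widens that neighbourhood, which is how graph models pick up features without hand-built descriptors.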

AlphaFold 2, released by DeepMind in 2021 and followed by AlphaFold 3 in 2024, uses a combination of multiple sequence alignment and a Transformer-based neural network to predict three-dimensional protein structure from sequence with near-crystallographic accuracy. The AlphaFold Protein Structure Database now contains predicted structures for over 200 million proteins. For drug discovery teams, this means that the structural biology bottleneck for target-based drug design has been substantially relaxed: where an experimental crystal structure might have taken two years and $300,000 to obtain, a predicted structure is now available in hours at near-zero marginal cost.

3.3 Generative AI: Creating Molecules That Do Not Exist

Generative models produce new data instances drawn from the learned distribution of training data. The three architectures most relevant to de novo drug design are Variational Autoencoders (VAEs), which encode molecules into a continuous latent space and sample new structures from it; Generative Adversarial Networks (GANs), which train a generator and discriminator in opposition; and Diffusion Models, which learn to denoise a signal and have demonstrated state-of-the-art performance on 3D molecular generation tasks.

The distinction between these architectures matters for IP purposes. Diffusion-based generative models produce 3D conformations directly, which is valuable for structure-based design where the binding pose in a protein cavity determines potency. VAE-based models operating in SMILES string space are faster and easier to condition on multiple property objectives simultaneously, but may produce geometrically unrealistic structures without downstream 3D validation.

3.4 Reinforcement Learning: Sequential Decision Optimization

Reinforcement learning trains an agent to take actions that maximize a cumulative reward signal. In drug discovery, RL is used primarily in two settings: guiding generative models toward molecules with specific property profiles (molecular optimization as a sequential decision problem) and optimizing multi-step synthetic routes (retrosynthesis planning). The AiZynthFinder platform and IBM’s RoboRXN both apply learned retrosynthetic planning, combining search over candidate routes with neural reaction-prediction models, to propose synthetic routes for AI-generated molecules, directly addressing the manufacturability gap that often makes generative outputs impractical.

3.5 Foundation Models and Transfer Learning

The most significant architectural development since 2022 is the application of large pre-trained foundation models to biological data. Models like ESM-2 (Meta’s protein language model, trained on 250 million protein sequences), ChemBERTa (for molecular SMILES), and BioMedLM have demonstrated that pre-training on massive unlabeled biological datasets produces representations that transfer effectively to specific downstream prediction tasks with limited labeled data. This matters enormously for rare disease programs, where labeled training data is scarce: a foundation model pre-trained on all available protein sequences can produce high-quality property predictions with a few hundred labeled examples, where a conventional supervised model would require tens of thousands.

Key Takeaways: Architecture Selection Principles

No single ML architecture dominates across all drug discovery tasks. GNNs perform best on molecular property prediction from structure. Transformer-based protein language models perform best on sequence-to-function tasks. Diffusion models currently lead on 3D molecular generation. RL performs best on multi-step optimization problems. For IP and R&D teams evaluating vendor platforms, the relevant technical question is whether the architecture matches the data type and prediction task, not whether the vendor describes their system as ‘AI.’

4. Target Identification and Validation: From Genomic Signal to Patentable Hypothesis

4.1 Multi-Omics Integration at Scale

The target identification problem is fundamentally a dimensionality problem. Human biology produces data at six levels: genome (genetic variants), transcriptome (RNA expression), proteome (protein abundance), metabolome (metabolite levels), epigenome (chromatin accessibility and methylation), and interactome (protein-protein interactions). Each layer is a high-dimensional dataset; the interactions among all six layers produce a combinatorial space far beyond manual analysis.

ML models address this by learning joint representations across multiple omics layers. Variational autoencoders trained on paired transcriptomic and proteomic data can identify proteins whose expression patterns across disease versus healthy tissue correlate with specific disease phenotypes. Tensor decomposition methods, including Tucker decomposition and PARAFAC, extract latent factors from multi-omics tensors that correspond to biologically meaningful disease subtypes.

The causal genetics layer is especially high value for target validation because human genetic evidence is the strongest predictor of clinical success. Mendelian randomization studies using genome-wide association study (GWAS) summary statistics provide near-causal evidence for gene-disease relationships. A 2019 Nature Genetics study found that drugs with human genetic evidence for their target are approximately 2.6 times more likely to progress from Phase I to approval than those without it. ML platforms that integrate GWAS signals with expression QTL data (eQTLs) and protein QTL data (pQTLs) to prioritize targets with triangulated genetic evidence are therefore producing validation of direct commercial value.

4.2 Knowledge Graphs and Biological Network Analysis

Knowledge graphs represent the biomedical literature as a structured network: nodes are biological entities (genes, proteins, diseases, drugs, pathways), and edges are typed relationships (inhibits, activates, upregulated in, associated with). Companies including BenevolentAI, Scinai Immunotherapeutics, and Healx have built knowledge graph platforms that use graph neural networks to traverse these networks and predict novel gene-disease associations.

BenevolentAI’s identification of baricitinib as a potential COVID-19 treatment in February 2020, two months before the first randomized trial data appeared, is the most publicized example of this approach. Their platform identified baricitinib as a JAK1/JAK2 inhibitor with potential anti-cytokine storm and anti-viral entry properties by traversing a knowledge graph that connected the drug’s known targets to pathways implicated in early SARS-CoV-2 biology. The subsequent randomized ACTT-2 trial showed faster recovery when baricitinib was added to remdesivir in hospitalized patients, and the later COV-BARRIER trial reported a reduction in 28-day mortality. The compound, originally developed by Eli Lilly for rheumatoid arthritis under the brand name Olumiant, gained FDA Emergency Use Authorization for COVID-19 in 2020 and full approval in 2022.
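The kind of traversal described above can be sketched with typed triples and a breadth-first path search. The graph below is a hand-written toy, not BenevolentAI's data: the edges are simplified, the AAK1 link to viral entry is included as an illustrative assumption, and production systems rank candidate links with graph neural networks rather than enumerating paths.

```python
from collections import defaultdict, deque

# Toy (head, relation, tail) triples, simplified for illustration only.
TRIPLES = [
    ("baricitinib", "inhibits", "JAK1"),
    ("baricitinib", "inhibits", "JAK2"),
    ("baricitinib", "inhibits", "AAK1"),
    ("JAK1", "mediates", "cytokine signaling"),
    ("JAK2", "mediates", "cytokine signaling"),
    ("AAK1", "regulates", "viral entry"),
    ("cytokine signaling", "implicated_in", "COVID-19"),
    ("viral entry", "implicated_in", "COVID-19"),
]


def build_graph(triples):
    """Index outgoing typed edges by head entity."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
    return graph


def find_paths(graph, start, goal, max_hops=3):
    """Return every path of at most max_hops edges as [node, relation, node, ...]."""
    paths = []
    queue = deque([(start, [start])])
    while queue:
        node, path = queue.popleft()
        if node == goal:
            paths.append(path)
            continue
        if (len(path) - 1) // 2 >= max_hops:
            continue
        for relation, neighbour in graph.get(node, []):
            if neighbour not in path:  # avoid revisiting entities
                queue.append((neighbour, path + [relation, neighbour]))
    return paths


graph = build_graph(TRIPLES)
paths = find_paths(graph, "baricitinib", "COVID-19")
for path in paths:
    print(" -> ".join(path))
```

Each returned path is a candidate mechanistic hypothesis linking the drug to the disease, which a scientist would then assess against the underlying evidence.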

The IP lesson here is significant: baricitinib had existing composition-of-matter patents, but Lilly secured new use patents covering the COVID-19 indication. Although the hypothesis originated from an AI platform rather than from a named human inventor, it supported a formal patent application for a method of treating COVID-19 using baricitinib. The new-use patent family extended commercial exclusivity beyond the original RA composition-of-matter patents.

4.3 NLP-Driven Literature Mining and Target Prioritization

The biomedical literature grows by approximately one million new PubMed-indexed articles per year. No team can read it comprehensively, which means that relevant connections between published findings go unrecognized. NLP models trained on biomedical text, including PubMedBERT, BioBERT, and their successors, can extract relationships from unstructured text and add them to knowledge graphs automatically, continuously updating the evidence base for target prioritization.

Semantic search tools built on these models allow R&D teams to query the literature with biological concepts rather than keywords. A query like ‘what proteins interact with KRAS G12C and are downregulated in pancreatic adenocarcinoma’ returns a ranked list of candidates with supporting citations, compressing what would have been weeks of manual literature review into hours.

4.4 IP Valuation of AI-Derived Targets

The patent value of an AI-identified target depends on several factors that differ from traditionally identified targets. First, freedom to operate: if the NLP model discovers a connection published years ago in a low-impact journal, the underlying biology may not be patentable, though a novel therapeutic approach to that target may still generate protectable claims. Second, claim breadth: AI platforms that identify targets through statistical association across large datasets can support broad method-of-treatment claims if they identify the target-disease link before it appears in peer-reviewed literature.

Third, validation depth: a target identified by ML association alone carries less patent value than one supported by functional genomics data showing that genetic knockout or knockdown produces the disease phenotype in a relevant model. IP teams should demand a defined validation package, including at minimum a Mendelian randomization analysis, a disease-relevant expression dataset showing dysregulation, and a phenotypic consequence from genetic perturbation before treating an ML-identified target as a core portfolio asset.

Key Takeaways: Target Identification

ML-integrated target identification with causal genetic validation makes clinical success approximately 2.6 times more likely relative to targets without genetic support. Knowledge graph traversal has produced at least one clinically validated new indication (baricitinib in COVID-19) with commercial exclusivity implications. AI-identified targets can support new patent applications, but claim strength depends on validation depth and the novelty of the target-disease association at filing date.

5. Hit Discovery and Lead Optimization: Virtual Screening at Billion-Compound Scale

5.1 The Scale Problem in Traditional HTS

A commercial compound library at a large pharma company contains one million to three million physical compounds. Testing each against a single target in a single-dose, single-assay format costs roughly $200,000 to $1.5 million in consumables, instrument time, and data analysis. Dose-response confirmation, counter-screen for selectivity, and early ADMET profiling of confirmed hits add another $2 to $5 million before a single round of medicinal chemistry begins. The hit rate after all that work is typically 0.5% to 2% of screened compounds, and most initial hits require substantial optimization to become lead-quality compounds.

Ultra-large virtual libraries, including Enamine’s REAL Space (which contains approximately 48 billion synthesizable compounds as of 2025) and WuXi AppTec’s Galaxy library, have rendered physical HTS an inadequate primary screening strategy. No physical library can compete with virtual libraries at that scale, and the synthesis-on-demand economics of Enamine’s platform mean that any virtual hit can be in hand within two to four weeks of ordering.

5.2 Deep Learning Virtual Screening

Deep learning virtual screening uses trained ML models to predict the probability that a given compound will be active against a target, then ranks virtual library members by predicted probability and selects the top fraction for physical synthesis. A GNN trained on a target’s existing bioactivity data can screen 48 billion virtual compounds in hours on a GPU cluster. Physical HTS cannot approach that scale: at one million compounds every two to three weeks, 48 billion compounds would take on the order of 2,000 years, even if all of them existed as physical samples.

The critical performance metric is enrichment factor: how much more likely are the top-ranked virtual compounds to be active compared to random selection? Published benchmark studies show enrichment factors of 10 to 50-fold for well-trained models on clean datasets. That means the top 0.1% of a ranked virtual library contains 10 to 50 times more active compounds than a random sample of the same size, collapsing the experimental workload while expanding the structural diversity of hits.
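Enrichment factor is the hit rate in the top-ranked fraction divided by the hit rate across the whole library. The sketch below computes it for a synthetic ranked list; the scores and the placement of actives are invented so that the result is easy to check by hand.

```python
def enrichment_factor(scores, labels, top_fraction):
    """Hit rate among the top-scored fraction divided by the overall hit rate.

    scores: model scores, higher meaning more likely active.
    labels: 1 for active, 0 for inactive, aligned with scores.
    """
    n = len(scores)
    n_top = max(1, round(n * top_fraction))
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("enrichment is undefined when there are no actives")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (total_hits / n)


# Synthetic library: 1,000 compounds, 10 actives, 5 of them ranked in the top 10.
scores = [1000 - i for i in range(1000)]
active_ranks = {0, 1, 2, 3, 4, 100, 200, 300, 400, 500}
labels = [1 if i in active_ranks else 0 for i in range(1000)]

print(enrichment_factor(scores, labels, top_fraction=0.01))
```

Here the top 1% has a 50% hit rate against a 1% base rate, giving a 50-fold enrichment, the upper end of the range quoted above.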

Schrödinger’s FEP+ (Free Energy Perturbation) platform combines physics-based molecular simulation with ML-based scoring to predict binding affinities with quantitative accuracy, typically within one log unit of experimental Ki values for congeneric series. At their reported throughput of several hundred compounds per week in active R&D programs, this allows medicinal chemistry teams to computationally explore structural analogs before committing to synthesis, cutting the number of compounds synthesized per lead optimization cycle by 30 to 60%.

5.3 Structure-Activity Relationship Modeling with Graph Neural Networks

The traditional SAR model, built from a matrix of measured activities against a set of structural descriptors, fails when the activity landscape is multimodal, non-linear, or driven by distant structural features rather than local substitution effects. GNN-based SAR models address this by learning the relevant structural features from the molecular graph directly, without predefined descriptors.

The practical implication for lead optimization is that GNN-based models can predict activity cliffs, where minor structural changes produce large drops in potency, more reliably than fingerprint-based models. Activity cliff prediction is commercially important because it prevents medicinal chemists from optimizing in a direction that hits an unexpected potency floor, wasting synthesis cycles.

Multi-parameter optimization (MPO) scoring, originally developed by Pfizer as a heuristic for oral CNS drugs, quantifies how well a compound balances potency, selectivity, and physicochemical properties simultaneously. ML models trained to predict the individual MPO component properties and combine them into a composite score allow teams to rank compounds by predicted MPO score before synthesis, directing resources toward compounds with a realistic chance of achieving the full target product profile.
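A composite score of this kind is usually built from per-property desirability functions that are summed. The sketch below uses linear ramps; the property names and every threshold are hypothetical placeholders chosen for illustration, not the published Pfizer CNS MPO parameters.

```python
def ramp(value, full_score_at, zero_score_at):
    """Linear desirability clipped to [0, 1].

    Scores 1.0 at or beyond full_score_at and 0.0 at or beyond zero_score_at,
    so it handles both higher-is-better and lower-is-better properties.
    """
    if full_score_at == zero_score_at:
        raise ValueError("thresholds must differ")
    fraction = (value - zero_score_at) / (full_score_at - zero_score_at)
    return min(1.0, max(0.0, fraction))


# Hypothetical profile: property -> (value for full score, value for zero score).
PROFILE = {
    "pIC50": (8.0, 6.0),              # higher is better
    "log10_selectivity": (2.0, 0.0),  # higher is better
    "clogP": (3.0, 5.0),              # lower is better
    "mol_weight": (360.0, 500.0),     # lower is better
}


def mpo_score(properties):
    """Sum of per-property desirabilities; the maximum equals len(PROFILE)."""
    return sum(ramp(properties[name], *bounds) for name, bounds in PROFILE.items())


candidate = {"pIC50": 7.0, "log10_selectivity": 2.0, "clogP": 4.0, "mol_weight": 430.0}
print(mpo_score(candidate))
```

In a prediction workflow, the property values would come from ML models rather than measurements, and compounds would be ranked by the composite score before any synthesis is ordered.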

5.4 IP Valuation: Hit Compound Families

At the hit identification stage, the relevant IP assets are composition-of-matter patents covering the hit scaffold and its close analogs, and method-of-use patents covering the therapeutic indication. For AI-derived hits, the composition-of-matter protection is the same as for any novel compound: the claim is to the specific chemical structure and closely related structures, regardless of how the structure was generated. The ML method used to identify the compound is not covered by those claims, but it can be protected as a trade secret embedded in the platform.

The strategic question for IP teams is claim breadth versus prosecution speed. Broad Markush claims covering a large structural class take longer to prosecute and invite more Office Actions, but provide stronger protection against competitors designing around the claims. Narrow, specific claims issue faster but are more easily designed around. For AI-generated hits where the generative model has explored broad chemical space, the breadth question is especially acute because the model may have implicitly mapped structural territory that the company has not explicitly claimed.

Key Takeaways: Hit Discovery and Lead Optimization

Virtual screening against ultra-large libraries (48+ billion compounds) with deep learning models replaces physical HTS as the primary hit identification strategy for most target classes. FEP+-validated lead optimization at Schrödinger and comparable platforms reduces synthesis cycles by 30 to 60%. For IP teams, the composition-of-matter claim strategy for AI-generated hits requires deliberate decisions about Markush claim breadth before filing, because the generative model may have explored structural space that the company has not formally claimed.

Investment Strategy Note

In licensing negotiations and business development deals involving AI-generated hit series, the key due diligence question is the training data provenance for the ML model used in hit generation. A hit series generated by a GNN trained exclusively on public ChEMBL data carries different IP risk than one generated by a model trained on proprietary HTS data. In the former case, the model and its outputs may have been partially replicated by competitors using the same public data; in the latter, the training data itself is a proprietary barrier to reproduction.

6. De Novo Molecular Design: Generative AI and the Invention Problem

6.1 The Chemical Space Problem

Estimating the size of drug-like chemical space is an exercise in large numbers. Estimates for the number of chemically stable, drug-like organic molecules with molecular weights below 500 Da range from 10^23 to 10^60. The largest physical compound library ever assembled contains roughly 10^7 compounds. Even the most ambitious virtual library contains roughly 10^10 to 10^11 structures. Generative AI is the only tool available for exploring the remainder.

The practical implication is that the global compound library assembled by the pharmaceutical industry over a century of medicinal chemistry represents a microscopic fraction of accessible chemical space. Drug programs that have failed due to poor selectivity, insufficient potency, or insurmountable ADMET liabilities may have failed because the medicinal chemistry team was working in a region of chemical space that was locally suboptimal, and no conventional tool could guide them toward the distant optimal region.

6.2 Diffusion Models for 3D Molecular Generation

The current state of the art in structure-based de novo design uses diffusion models that generate molecular structures directly in three-dimensional space, conditioned on the binding site geometry. DiffSBDD (Structure-Based Drug Design with Diffusion) and DiffDock (for docking pose prediction) are academic models in this class. Commercial implementations from organizations including Relay Therapeutics, Schrödinger (through its Maestro platform), and OpenBioML extend these architectures with additional conditioning on pharmacophore constraints, synthetic accessibility scores, and predicted ADMET properties.

The conditioning mechanism is the critical engineering challenge. An unconditioned generative model produces molecules that are chemically valid but not necessarily synthesizable, appropriately sized, or selective. Conditioning the generation on synthetic accessibility scores derived from ASKCOS (a retrosynthetic planning model developed at MIT) and on selectivity data from related protein family members produces a constrained generation problem where the model searches for structures that are simultaneously potent, selective, synthesizable, and drug-like.

6.3 Insilico Medicine’s Chemistry42: A Case Study in End-to-End Generative Design

Insilico Medicine’s Chemistry42 platform uses a combination of reinforcement learning and generative models to design molecules against targets identified by their PandaOmics platform. The technical architecture involves a generator, which proposes SMILES strings or 3D structures; a scorer, which evaluates predicted properties across a panel of objectives; and an RL agent, which iterates between generation and scoring to progressively improve the population of proposed molecules.
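The generator, scorer, and iterate-to-improve structure can be shown in miniature. The sketch below is not Chemistry42's algorithm: it uses a simple keep-the-best selection step as a stand-in for the RL policy update, represents molecules as tuples of made-up fragment labels, and scores them with invented per-fragment rewards where a real scorer would call property-prediction models.

```python
import random

FRAGMENTS = ["A", "B", "C", "D"]  # hypothetical building blocks
FRAGMENT_REWARD = {"A": 0.1, "B": 0.4, "C": 0.7, "D": 0.2}  # invented values


def score(molecule):
    """Stand-in scorer: mean of the invented per-fragment rewards."""
    return sum(FRAGMENT_REWARD[f] for f in molecule) / len(molecule)


def propose(parent, rng):
    """Stand-in generator: copy the parent and mutate one position."""
    child = list(parent)
    child[rng.randrange(len(child))] = rng.choice(FRAGMENTS)
    return tuple(child)


def optimise(n_rounds=20, population_size=8, length=4, seed=0):
    """Alternate generation and scoring, keeping the best-scoring population."""
    rng = random.Random(seed)
    population = [
        tuple(rng.choice(FRAGMENTS) for _ in range(length))
        for _ in range(population_size)
    ]
    history = []
    for _ in range(n_rounds):
        candidates = population + [propose(p, rng) for p in population]
        population = sorted(candidates, key=score, reverse=True)[:population_size]
        history.append(score(population[0]))
    return population[0], history


best, history = optimise()
print(best, round(history[-1], 3))
```

Because parents are retained alongside their proposals, the best score never decreases from one round to the next; an RL agent replaces that blunt selection with a learned policy that shifts the generator toward higher-reward regions.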

For ISM001-055 (Rentosertib), a TNIK inhibitor for idiopathic pulmonary fibrosis, Chemistry42 generated the initial hit series against a novel target identified by PandaOmics from multi-omics analysis of IPF patient datasets. The full process from target nomination to preclinical candidate selection took 18 months and approximately $2.6 million in direct preclinical costs, compared to an industry average of five to six years and $20 to $60 million. The candidate entered Phase I in 2021 and reported positive Phase IIa topline results in 2024, demonstrating an acceptable safety profile and preliminary efficacy signals in IPF patients.

The IP architecture around Rentosertib is instructive. Insilico holds composition-of-matter patents on the specific chemical structure of ISM001-055 and its close analogs, method-of-treatment patents covering TNIK inhibition in fibrosis, and separate patent applications covering the PandaOmics-identified target itself (the novel role of TNIK in IPF). The AI platform is protected as a trade secret and not included in any patent disclosure. This three-layer protection strategy, covering the target biology, the compound, and the indication, maximizes commercial exclusivity without disclosing the algorithmic mechanisms that produced the discovery.

6.4 The Synthesizability Gap and How RL Closes It

A persistent criticism of early generative AI systems was that they produced molecules that were chemically valid on paper but practically unsynthesizable in a chemistry laboratory. A molecule with a beautiful predicted docking score is worth nothing if no medicinal chemist can make it in fewer than 15 steps with acceptable yield.

Retrosynthetic analysis software, including AiZynthFinder (AstraZeneca), ASKCOS (MIT), and SYNTHIA (Merck), uses Monte Carlo Tree Search and transformer-based models to predict synthetic routes for novel structures. Integrating retrosynthetic feasibility scoring into the generative loop, so that the model is penalized for proposing structures with no practical synthesis route, has substantially reduced the synthesizability gap. Published benchmarks from 2024 show that RL-conditioned generative models with retrosynthetic penalties produce molecules with an average of four to seven synthetic steps and projected yields above 40% in over 85% of cases, compared to 40% to 50% of cases for unconditioned models.

Key Takeaways: De Novo Design

Diffusion-based 3D generative models conditioned on binding site geometry, synthetic accessibility, and multi-parameter property objectives represent the current technical standard for de novo drug design. Insilico’s Rentosertib demonstrates that an end-to-end generative AI program can reach preclinical candidate selection in 18 months at a fraction of historical preclinical costs, and that the resulting candidate can progress to positive Phase IIa results. The synthesizability gap, a genuine technical limitation of first-generation generative systems, has been substantially addressed through retrosynthetic RL conditioning.

Investment Strategy Note

When evaluating AI-native biotechs, the synthesizability rate of generative output is a technically meaningful KPI. Companies that report ‘molecules generated’ without specifying the fraction that are actually synthesizable and confirmed active in a physical assay are presenting a misleading pipeline metric. The relevant figure is the confirmed hit rate per physical synthesis, not the raw generative output volume.

7. ADMET Prediction: Computational Gatekeeping Before the Bench

7.1 The Cost of Getting ADMET Wrong

Approximately 40% of clinical trial failures from 2010 to 2020 were attributable to clinical pharmacology issues, including poor pharmacokinetics, unacceptable drug-drug interaction profiles, and unexpected toxicity. These properties are theoretically predictable from structure if the right models are available. The history of clinical failures and market withdrawals from predictable causes (FIAU’s hepatotoxicity in a hepatitis B trial, terfenadine’s hERG-mediated arrhythmia, troglitazone’s idiosyncratic liver toxicity) established the regulatory and industry standard for mandatory ADMET assessment before human trials.

Physical in vitro ADMET panels are themselves a significant cost driver. A standard early ADMET panel covering Caco-2 permeability, microsomal metabolic stability, plasma protein binding, cytochrome P450 inhibition across five isoforms, hERG patch-clamp, and Ames mutagenicity costs roughly $3,000 to $8,000 per compound. For a lead optimization program generating 50 to 200 compounds per synthetic cycle, that cost accumulates to $150,000 to $1.6 million per cycle, before synthesis costs are included.

Accurate computational ADMET prediction reduces that burden by narrowing the synthesis queue to compounds with predicted acceptable profiles, running physical assays only on computationally pre-filtered candidates.
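The per-cycle arithmetic, and the effect of pre-filtering, can be checked with a few lines. The 50% pass fraction below is a hypothetical value chosen for illustration; the per-compound costs and cycle sizes are the ones quoted above.

```python
def panel_cost(n_compounds: int, cost_per_compound: float) -> float:
    """Total physical ADMET panel cost for one synthetic cycle."""
    return n_compounds * cost_per_compound


def filtered_panel_cost(n_compounds: int,
                        cost_per_compound: float,
                        pass_fraction: float) -> float:
    """Panel cost when only compounds passing an in silico filter are assayed.

    pass_fraction is the share of designed compounds the computational
    filter lets through (an assumed value, not a measured one).
    """
    n_assayed = round(n_compounds * pass_fraction)
    return panel_cost(n_assayed, cost_per_compound)


if __name__ == "__main__":
    low = panel_cost(50, 3_000)      # smallest cycle, cheapest panel
    high = panel_cost(200, 8_000)    # largest cycle, most expensive panel
    print(f"unfiltered range per cycle: ${low:,.0f} to ${high:,.0f}")
    print(f"largest cycle with 50% filter: ${filtered_panel_cost(200, 8_000, 0.5):,.0f}")
```

The saving scales linearly with the fraction filtered out, so the value of the computational filter depends almost entirely on how many true positives it wrongly discards, which this sketch does not model.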

7.2 Graph Neural Networks for Multi-Property ADMET Prediction

The DeepChem library, the open-source ADMET-AI platform, and commercial tools such as ADMET Predictor incorporate GNN-based models for the full ADMET property panel. GNNs outperform earlier fingerprint-based models on many ADMET tasks because they learn directly from the molecular graph, capturing the connectivity of functional groups and their local electronic environment, which strongly influences metabolic liability, membrane permeability, and protein binding.

hERG channel blockade prediction is the highest-stakes single ADMET task because drug-induced QT prolongation causes potentially fatal cardiac arrhythmias. Regulatory guidance from ICH S7B requires experimental hERG assessment for all new molecular entities. The best published GNN models for hERG prediction achieve AUROCs of 0.88 to 0.94 on independent test sets, sufficient to filter out the most flagrant hERG risks early, though not precise enough to replace experimental confirmation for candidates in development.
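To make the AUROC figures concrete: AUROC is the probability that a randomly chosen hERG blocker receives a higher predicted risk score than a randomly chosen non-blocker. The sketch below computes it by direct pairwise comparison on a toy dataset; the labels and scores are invented for illustration and do not come from any published model.

```python
def auroc(labels, scores):
    """Area under the ROC curve by pairwise comparison.

    labels: 1 for a confirmed blocker, 0 for a non-blocker.
    scores: model-predicted risk, higher meaning more likely to block.
    Ties between a positive and a negative count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Toy data: three blockers, four non-blockers.
    labels = [1, 1, 1, 0, 0, 0, 0]
    scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1]
    # 11 of the 12 blocker/non-blocker pairs are ranked correctly.
    print(round(auroc(labels, scores), 3))
```

An AUROC near 0.9 therefore still leaves roughly one pair in ten mis-ranked, which is why these models serve as early filters and not as replacements for patch-clamp confirmation.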

Hepatotoxicity prediction is technically harder because the underlying mechanisms are heterogeneous: reactive metabolite formation, mitochondrial dysfunction, bile salt export pump inhibition, and immune-mediated injury all produce liver toxicity through different pathways. Multi-task GNN models trained on the DILIrank dataset, which classifies 1,036 FDA-approved drugs by DILI severity, provide qualitative risk stratification rather than quantitative prediction, but still add value as a screening filter. The model published by Cheng et al. in 2022 achieved 74% accuracy on the most-concern/no-concern binary classification using a graph transformer architecture.

7.3 Mechanistic PK/PD Modeling Combined with ML

Physiologically based pharmacokinetic (PBPK) modeling predicts in vivo drug concentrations in plasma and tissues from in vitro measurements using mechanistic compartment models of absorption, hepatic metabolism, renal clearance, and tissue distribution. ML supplements PBPK by predicting the in vitro inputs from structure, enabling fully in silico PK/PD simulation before any physical measurement.
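The structure-to-profile idea can be sketched with a deliberately simplified model. The code below is a one-compartment oral absorption model, far simpler than a full PBPK model with separate tissue compartments, and it is offered only to show how predicted parameters turn into a concentration-time curve. The parameter values are hypothetical; in the workflow described above, the absorption rate, clearance, and volume of distribution would come from ML-predicted in vitro properties.

```python
import math


def concentration(t_h: float, dose_mg: float, f_oral: float,
                  ka_per_h: float, cl_l_per_h: float, v_l: float) -> float:
    """Plasma concentration (mg/L) at time t_h after a single oral dose.

    One-compartment model with first-order absorption and elimination:
    C(t) = F * D * ka / (V * (ka - ke)) * (exp(-ke * t) - exp(-ka * t)),
    where ke = CL / V.
    """
    ke = cl_l_per_h / v_l
    if math.isclose(ka_per_h, ke):
        raise ValueError("ka equal to ke needs the limiting form of the equation")
    coeff = f_oral * dose_mg * ka_per_h / (v_l * (ka_per_h - ke))
    return coeff * (math.exp(-ke * t_h) - math.exp(-ka_per_h * t_h))


def t_max(ka_per_h: float, cl_l_per_h: float, v_l: float) -> float:
    """Time of peak concentration (hours) for the same model."""
    ke = cl_l_per_h / v_l
    return math.log(ka_per_h / ke) / (ka_per_h - ke)


if __name__ == "__main__":
    # Hypothetical inputs: 100 mg dose, 60% bioavailability.
    params = dict(dose_mg=100.0, f_oral=0.6, ka_per_h=1.0, cl_l_per_h=5.0, v_l=50.0)
    peak_time = t_max(1.0, 5.0, 50.0)
    print(f"t_max = {peak_time:.2f} h, C_max = {concentration(peak_time, **params):.3f} mg/L")
```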

The Simcyp platform (Certara), GastroPlus (Simulations Plus), and PK-Sim (Open Systems Pharmacology Suite) are the dominant PBPK tools; the first two are commercial products, while PK-Sim is open source. Simulations Plus has integrated ML-based property predictions from its ADMET Predictor module as upstream inputs to GastroPlus PBPK models, creating a pipeline where a SMILES string can produce a predicted plasma concentration-time profile without any wet lab data. Regulatory agencies including FDA and EMA have accepted PBPK modeling in NDA submissions for dose selection, label language on drug-drug interactions, and pediatric dosing projections.

7.4 IP Valuation: ADMET Platforms as Core Technology Assets

ADMET prediction platforms are patentable as methods inventions and as systems combining specific model architectures with curated training datasets. The commercial value of an ADMET platform IP portfolio depends on the size and curation quality of the training dataset, because larger and cleaner datasets produce better models and are expensive to reproduce. Simulations Plus’s acquisition of Lixoft (developer of the Monolix pharmacometrics suite) in 2020 for $22 million and its subsequent licensing of ADMET Predictor to major pharma companies demonstrate that computational ADMET and modeling tools carry standalone commercial value as licensed software, separate from any drug program they support.

For pharma companies building internal ADMET prediction capabilities, the proprietary in-house dataset is the most defensible asset. A model trained on 500,000 in-house experimental ADMET measurements across multiple modalities, accumulated over decades, produces predictions that external vendors cannot replicate regardless of algorithm sophistication.

Key Takeaways: ADMET Prediction

Physical ADMET panels cost $3,000 to $8,000 per compound per cycle. GNN-based computational prediction achieves AUROCs of 0.88 to 0.94 for hERG and comparable performance for metabolic stability, enabling pre-synthetic filtering that reduces physical assay burden by 30 to 60%. Mechanistic PBPK models integrating ML-derived property inputs can produce in silico plasma PK profiles acceptable to FDA for specific NDA submission contexts. Proprietary curated ADMET datasets are protectable IP assets with commercial licensing value.

8. Clinical Trial Optimization: Restructuring the Most Expensive Phase

8.1 The Patient Recruitment Bottleneck

Clinical trial recruitment failure is the single most underappreciated cost driver in pharmaceutical development. Approximately 80% of trials fail to meet enrollment targets on schedule, and an estimated 19% of trial sites enroll zero patients. By widely cited industry estimates, each day of trial delay costs a branded pharma company $600,000 to $8 million in lost sales during the patent-protected period, depending on the indication and anticipated peak sales. Recruitment shortfalls frequently trigger protocol amendments, which require IRB approval and reset the enrollment clock.

ML approaches to recruitment acceleration operate at two levels: site selection and patient identification. At the site level, ML models trained on historical ClinicalTrials.gov data predict site performance from features including site type (academic vs. community), principal investigator publication history, historical dropout rates, and local patient population demographics. Trials using predictive site selection models reduce the proportion of low-enrolling sites by 20 to 35% in published comparisons.

At the patient level, NLP models extract eligibility criteria from protocol text and apply them against structured and unstructured electronic health record data. IBM Watson for Clinical Trial Matching and Tempus’s clinical trial matching platform both use transformer-based NLP to match patients to open trials at the point of care. TrialGPT, a model published by researchers at NIH in 2024, achieved 87.3% accuracy on criterion-level eligibility assessment on a benchmark dataset, close to the performance of human experts, and reduced patient screening time by approximately 42% in a pilot user study.

8.2 Adaptive Trial Design and RL-Driven Protocol Optimization

The FDA’s guidance on adaptive designs (issued in 2019 and updated in 2023) explicitly permits prospectively planned adaptations including sample size re-estimation, seamless Phase II/III designs, and response-adaptive randomization. These designs shift statistical power to the treatment arms or patient subgroups showing the strongest early signal, reducing the total sample size required to detect a given effect size.

Bayesian adaptive trials use posterior probability distributions updated continuously with incoming data to trigger pre-specified adaptation rules. RL algorithms can optimize the adaptation rules themselves, treating the trial as a multi-armed bandit problem where the ‘arms’ are different doses, patient subgroups, or combination regimens. The RECOVERY trial, which identified dexamethasone as an effective COVID-19 treatment in June 2020, illustrates the value of the platform approach: its multi-arm design, in which treatment arms could be added or dropped under a single master protocol, allowed faster identification of the effective arm than a series of conventional parallel-group trials, although it relied on conventional randomization rather than ML-driven allocation.
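The bandit framing can be illustrated with Thompson sampling, a standard response-adaptive allocation rule. This is a toy sketch, not a regulatory-grade design: the response rates are invented, the uniform Beta(1, 1) prior is an assumption, and a real trial would add pre-specified burn-in periods, allocation caps, and Type I error control.

```python
import random


def thompson_allocate(successes, failures, rng):
    """Choose the arm whose Beta posterior yields the highest sampled response rate.

    Each arm starts from a uniform Beta(1, 1) prior (an assumption) and is
    updated with the responses observed so far.
    """
    draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
    return max(range(len(draws)), key=draws.__getitem__)


def simulate_trial(true_response_rates, n_patients, seed=0):
    """Simulate sequential enrollment and return patients allocated per arm."""
    rng = random.Random(seed)
    k = len(true_response_rates)
    successes = [0] * k
    failures = [0] * k
    for _ in range(n_patients):
        arm = thompson_allocate(successes, failures, rng)
        # The patient's outcome is drawn from the (unknown to the algorithm) true rate.
        if rng.random() < true_response_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return [s + f for s, f in zip(successes, failures)]


if __name__ == "__main__":
    # Hypothetical three-dose trial where the highest dose responds best.
    print(simulate_trial([0.1, 0.2, 0.7], n_patients=500, seed=1))
```

As evidence accumulates, allocation drifts toward the better-performing arm, which is the power-shifting behavior described above.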

Simulation-based trial design uses ML models trained on historical trial data to simulate thousands of trial scenarios before protocol finalization, optimizing endpoints, sample sizes, and interim analysis timing to minimize expected sample size under the constraint of acceptable Type I error rate. Companies including Berry Consultants, Cytel, and Medidata use simulation platforms that can evaluate 10,000 trial scenarios in hours, allowing sponsors to identify the optimal protocol before committing to a single expensive path.
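A minimal version of this kind of simulation is sketched below: a two-arm trial with a binary endpoint is simulated many times to estimate power under an assumed treatment effect and the Type I error rate under the null. The response rates, sample size, and one-sided alpha of 0.025 are hypothetical choices for the example; commercial platforms model far richer scenarios, including interim analyses and dropout.

```python
import random
from statistics import NormalDist


def z_test_rejects(x_ctrl: int, x_trt: int, n_per_arm: int, alpha: float = 0.025) -> bool:
    """One-sided pooled two-proportion z-test: is the treatment response rate higher?"""
    p_pool = (x_ctrl + x_trt) / (2 * n_per_arm)
    se = (2 * p_pool * (1 - p_pool) / n_per_arm) ** 0.5
    if se == 0:
        return False  # all responders or none: no evidence of a difference
    z = ((x_trt - x_ctrl) / n_per_arm) / se
    return z > NormalDist().inv_cdf(1 - alpha)


def rejection_rate(p_ctrl: float, p_trt: float, n_per_arm: int,
                   n_sims: int = 2000, seed: int = 0) -> float:
    """Fraction of simulated trials that reject the null hypothesis.

    With p_trt > p_ctrl this estimates power; with p_trt == p_ctrl it
    estimates the Type I error rate.
    """
    rng = random.Random(seed)
    rejections = 0
    for _ in range(n_sims):
        x_ctrl = sum(rng.random() < p_ctrl for _ in range(n_per_arm))
        x_trt = sum(rng.random() < p_trt for _ in range(n_per_arm))
        rejections += z_test_rejects(x_ctrl, x_trt, n_per_arm)
    return rejections / n_sims


if __name__ == "__main__":
    for n in (50, 100, 150):
        print(n, "power:", rejection_rate(0.3, 0.5, n), "type I:", rejection_rate(0.3, 0.3, n))
```

Sweeping the sample size this way is the simplest form of the optimization described above: pick the smallest design whose simulated power is acceptable while the simulated Type I error stays at or below the nominal level.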

8.3 Patient Stratification and Biomarker-Driven Trial Design

The shift from unselected to biomarker-selected trials represents the highest-leverage clinical development strategy available for improving probability of success. In oncology, the FDA’s accelerated approval pathway has increasingly required companion diagnostic co-development for targeted therapies. Exscientia’s EXS-21546, the A2A receptor antagonist for advanced solid tumors, was designed from the outset with a multi-gene ‘adenosine signature’ biomarker to prospectively identify patients with high tumor immunosuppression, the mechanistic rationale for why the drug should work. This strategy allows the Phase II trial to enroll a pre-enriched population, improving the signal-to-noise ratio and reducing the sample size needed to detect efficacy.

ML-based biomarker discovery uses supervised learning on transcriptomic or proteomic data from Phase II clinical samples to identify the biological features that differentiate responders from non-responders. The CONFIRM model published by Genentech researchers identified a 25-gene expression signature in colorectal cancer patients that predicted benefit from bevacizumab with an AUC of 0.79 in the validation cohort. Companion diagnostic development based on that signature extended the commercial application of an existing approved drug to a genomically defined subpopulation, creating a new IP opportunity in a mature indication.

8.4 AI in Pharmacovigilance and Real-World Evidence

Post-approval pharmacovigilance generates enormous volumes of adverse event data from spontaneous reporting systems (FDA FAERS, WHO VigiBase), electronic health records, and published case reports. NLP models applied to FAERS narratives can identify novel safety signals weeks to months before they appear in formal signal detection algorithms. OpenFDA’s adverse event database contains over 25 million reports; manual review at that scale is impractical.

ML signal detection platforms, including VigiBase’s VigiRank algorithm and proprietary platforms from IQVIA and Veeva, use disproportionality analysis combined with ML classifiers to separate true emerging signals from noise. Post-market safety commitments increasingly include AI-based signal detection as a component of the pharmacovigilance system, with FDA requesting documentation of the platform’s validation methodology.
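Disproportionality analysis itself is simple arithmetic on a 2x2 table of report counts. The sketch below computes the proportional reporting ratio (PRR) with an approximate 95% confidence interval. The counts are invented for illustration, and flagging a signal when the lower bound exceeds 1 is one illustrative rule among several used in practice.

```python
import math


def prr(a: int, b: int, c: int, d: int) -> float:
    """Proportional reporting ratio from a 2x2 table of spontaneous reports.

    a: reports with the drug of interest and the event of interest
    b: reports with the drug of interest and any other event
    c: reports with all other drugs and the event of interest
    d: reports with all other drugs and any other event
    """
    return (a / (a + b)) / (c / (c + d))


def prr_ci95(a: int, b: int, c: int, d: int):
    """Approximate 95% confidence interval for the PRR on the log scale."""
    se_log = math.sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))
    point = prr(a, b, c, d)
    return point * math.exp(-1.96 * se_log), point * math.exp(1.96 * se_log)


if __name__ == "__main__":
    # Hypothetical counts: 20 of 1,000 reports for the drug mention the event,
    # versus 100 of 99,000 reports for all other drugs.
    table = (20, 980, 100, 98_900)
    lower, upper = prr_ci95(*table)
    print(f"PRR = {prr(*table):.1f}, 95% CI = ({lower:.1f}, {upper:.1f})")
    print("flag for review:", lower > 1.0)
```

ML classifiers are typically layered on top of statistics like this one to rank flagged drug-event pairs for human review.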

Key Takeaways: Clinical Trial Optimization

ML-driven site selection reduces low-enrolling site proportions by 20 to 35%. NLP patient matching reaches roughly 87% criterion-level accuracy and cut screening time by about 42% in a pilot study. Biomarker-enriched trial designs built on ML-identified response signatures consistently produce higher effect sizes in smaller sample sizes. Post-approval, NLP pharmacovigilance platforms identify safety signals in FAERS data weeks to months before conventional disproportionality detection.

Investment Strategy Note

For investors evaluating drug programs in development, the presence of a validated biomarker selection strategy is the single most predictive indicator of Phase II/III success probability after target mechanism. Biomarker-unselected Phase II trials in heterogeneous populations carry a 50 to 70% higher risk of missing statistical significance compared to biomarker-enriched trials, even when the drug works mechanistically. ML-identified biomarker signatures that survive prospective validation in an independent cohort should be assigned positive probability-of-success adjustments in NPV models.
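A probability-of-success adjustment feeds into a risk-adjusted NPV (rNPV) calculation as sketched below. All cash flows, probabilities, and the discount rate are hypothetical placeholders; the point is only to show where a biomarker-driven change in success probability enters the arithmetic.

```python
def risk_adjusted_npv(cash_flows, probabilities, discount_rate):
    """Risk-adjusted net present value.

    cash_flows[t] is the cash flow at the end of year t (year 0 is today).
    probabilities[t] is the cumulative probability that the program is still
    alive when that cash flow occurs.
    """
    if len(cash_flows) != len(probabilities):
        raise ValueError("cash_flows and probabilities must have the same length")
    return sum(
        p * cf / (1 + discount_rate) ** t
        for t, (cf, p) in enumerate(zip(cash_flows, probabilities))
    )


if __name__ == "__main__":
    # Hypothetical program ($ millions): two years of trial spend, then a payoff
    # that is only realized if the trial succeeds.
    flows = [-50.0, -100.0, 600.0]
    unselected = risk_adjusted_npv(flows, [1.0, 1.0, 0.30], 0.10)
    enriched = risk_adjusted_npv(flows, [1.0, 1.0, 0.45], 0.10)
    print(f"unselected population: {unselected:.1f}")
    print(f"biomarker-enriched:    {enriched:.1f}")
```

Because the payoff term is multiplied directly by the success probability, even a modest upward adjustment can move a marginal program from roughly break-even to clearly positive.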

9. IP Valuation of AI-Generated Drug Candidates: Asset Classification for Analysts

9.1 The IP Stack of an AI-Native Drug Program

An AI-native drug program generates a layered IP stack that differs structurally from a traditionally discovered asset. At the base layer is the computational platform itself, the generative models, training data, and proprietary algorithms. Above that are the target validation datasets and method-of-use claims for the identified target-disease combination. Above that are the composition-of-matter claims covering the generated molecule and its structural analogs. At the top are the clinical data assets: Phase II/III datasets that support labeling claims and, in some cases, regulatory exclusivity periods (NCE exclusivity or orphan drug exclusivity), alongside expedited-review designations such as breakthrough therapy, which accelerate development but confer no exclusivity of their own.

Each layer carries independent IP value and different vulnerability to invalidation or design-around. The platform layer is typically protected as a trade secret rather than a patent, because patent disclosure of the training methodology would allow competitors to replicate the generative process. The composition-of-matter layer is protected by patents in the traditional pharmaceutical IP sense. The clinical data layer is protected by regulatory exclusivity programs (five years of NCE exclusivity, seven years for orphan designation, 12 years for biological products under the BPCIA).

9.2 Evergreening Opportunities in AI-Discovered Programs

Pharmaceutical evergreening encompasses a range of IP lifecycle management strategies designed to extend commercial exclusivity beyond the expiration of the original composition-of-matter patent. These include new formulation patents (extended-release, fixed-dose combinations, novel salt forms), method-of-use patents for additional indications, pediatric exclusivity claims, and process patents for manufacturing improvements.

AI tools create several additional evergreening opportunities specific to computationally discovered assets. Generative models can design novel analogs with improved properties, such as higher selectivity or reduced off-target effects, that qualify for new composition-of-matter patents and can be commercialized as second-generation products. Multi-parameter optimization can identify formulation improvements, such as a polymorph with superior stability or a prodrug with improved oral bioavailability, that support new patent applications.

AI-identified biomarker signatures support companion diagnostic patent applications covering the method of identifying patients likely to respond. These patents carry separate commercial value from the drug itself: the companion diagnostic can generate licensing revenue through diagnostic kit deals, independent of the primary drug program. Genentech’s PD-L1 companion diagnostic for atezolizumab, for example, generated licensing agreements with multiple diagnostic manufacturers, extending the commercial ecosystem of the checkpoint inhibitor beyond the drug itself.

9.3 Patent Expiration Profiling and the Paragraph IV Challenge Ecosystem

For generic drug developers and specialty pharma investors, the AI patent landscape creates new analytical complexity. An AI-discovered drug may have fewer secondary patents than a traditionally discovered drug that has undergone decades of lifecycle management, or it may have more, if the generative platform was used to systematically explore the patentable structural space around the lead.

DrugPatentWatch’s patent database, which covers the full Orange Book listing and patent litigation history for FDA-approved drugs, provides a practical tool for mapping the Paragraph IV exposure window of an AI-discovered product. The relevant questions for a Paragraph IV filing decision are: when does the composition-of-matter patent expire; are there secondary formulation or method-of-use patents that could support a 30-month stay; and what is the litigation track record of the originator in Hatch-Waxman proceedings.

AI-native drug programs filed primarily after 2020 generally have composition-of-matter patents expiring in the 2040 to 2045 timeframe, assuming standard 20-year terms from filing with appropriate patent term extensions. Generic entry timing analysis for these assets requires evaluating not just the primary patent but the full secondary patent cluster, which an AI platform may have generated systematically. A generative AI system that produced several hundred analogs and formulation variants around the lead compound may have enabled the filing of 30 to 80 secondary patents. Those that are Orange Book-listed before a generic application is filed can each be asserted in Paragraph IV litigation, although current law generally limits an ANDA to a single 30-month stay.

9.4 The AI Inventorship Doctrine After Thaler v. Vidal

The Federal Circuit’s 2022 ruling in Thaler v. Vidal confirmed the position the USPTO had taken and the European Patent Office had reached: an inventor must be a natural person. DABUS, the AI system Thaler sought to name as inventor on two patent applications, was not a person and therefore could not be an inventor. The applications could not proceed with DABUS as the sole named inventor.

The USPTO’s February 2024 guidance on AI-assisted inventions clarified the practical implications. An AI-assisted invention is patentable if a human being made a ‘significant contribution’ to the conception of each claimed invention. What counts as a significant contribution? The guidance referenced the Pannu factors from the Federal Circuit’s 1998 Pannu v. Iolab Corp. decision: each inventor must contribute to the conception of the claimed invention, not merely reduce it to practice or carry out the instructions of another.

For AI-discovered drug candidates, this means that the human scientists who defined the target product profile, selected and curated the training data, interpreted the generative output, and selected the final candidate based on scientific judgment are the inventors, even if an AI system generated the specific SMILES string. The documentation burden is significant: companies must maintain detailed records of the human decision points in the discovery process, including what choices were made by human researchers versus what was produced autonomously by the ML system.

Companies including Relay Therapeutics have adopted ‘AI-assisted invention documentation protocols’ that log every material human decision in the discovery workflow, both to support inventorship claims and to defend against obviousness challenges by demonstrating the non-obvious human creative contributions at each step.

Key Takeaways: IP Valuation

AI-native drug programs generate a layered IP stack covering platform trade secrets, target validation method claims, compound composition-of-matter patents, and companion diagnostic method patents. Post-Thaler v. Vidal, composition-of-matter patent prosecution for AI-discovered compounds requires contemporaneous documentation of significant human contributions to conception. Evergreening through AI-generated analog programs, improved formulations, and biomarker-based companion diagnostic patents extends the commercial exclusivity lifecycle beyond the original composition-of-matter term.

10. The Patent Landscape: Evergreening, AI Inventorship, and Paragraph IV Exposure

10.1 Technology Roadmap for Pharmaceutical Patent Lifecycle Management

The standard pharmaceutical patent lifecycle follows a predictable arc. A drug entering development in 2025 with a provisional patent filing secures a 2025 priority date; the 20-year term itself runs from the non-provisional filing, which must follow within 12 months. Assuming an 8-year development timeline and launch in 2033, the effective market exclusivity period before the composition-of-matter patent expires runs approximately 12 years, from 2033 to approximately 2045, subject to patent term extension under the Hatch-Waxman Act (which can add up to five years for regulatory delay, capped at 14 years of remaining term after approval) and pediatric exclusivity (an additional six months if pediatric studies are completed on request).
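The timeline arithmetic can be written out as a small calculator. This is a simplified sketch working in whole years: it treats the filing year as the start of the 20-year term, applies the Hatch-Waxman limits of at most five years of extension and at most 14 years of patent life remaining after approval, and adds pediatric exclusivity on top. The function name and the granted extension values are illustrative assumptions; actual extensions are calculated from regulatory review periods.

```python
def effective_exclusivity(filing_year: int,
                          approval_year: int,
                          pte_years: float = 0.0,
                          pediatric_months: int = 0):
    """Return (expiry_year, years_of_exclusivity_after_approval).

    filing_year: year that starts the 20-year patent term.
    pte_years: patent term extension requested for regulatory delay.
    pediatric_months: pediatric exclusivity added at the end (0 or 6).
    """
    base_expiry = filing_year + 20
    # Extension is limited to 5 years and may not push the remaining term
    # beyond 14 years after approval; it never shortens the base term.
    pte = min(pte_years, 5.0)
    extended = max(base_expiry, min(base_expiry + pte, approval_year + 14))
    expiry = extended + pediatric_months / 12
    return expiry, expiry - approval_year


if __name__ == "__main__":
    print(effective_exclusivity(2025, 2033))                       # no extensions
    print(effective_exclusivity(2025, 2033, pte_years=5))          # limited by the 14-year cap
    print(effective_exclusivity(2025, 2033, pte_years=5, pediatric_months=6))
```

In the 2025 filing and 2033 launch scenario, the 14-year cap rather than the five-year maximum is the binding limit on the extension.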

Secondary patents filed during development, covering specific crystalline polymorphs, enantiomers, metabolites, combination products, and formulation technologies, extend the intellectual property barrier beyond the primary composition-of-matter expiration. The average number of Orange Book-listed patents per approved drug has increased from 3.4 in 2000 to over 7.5 in 2023, reflecting decades of systematic lifecycle management. Biologics present a different structure: the 12-year BPCIA exclusivity period runs from approval date regardless of the patent term, and biosimilar interchangeability designation (FDA’s regulatory standard for automatic substitution) requires an additional switching study demonstrating that patients can transition between the reference product and biosimilar without increased safety or efficacy concerns.

10.2 How AI Tools Are Being Used by Generic Challengers

The same AI capabilities that branded pharma uses for drug discovery are being deployed by generic drug developers and specialty pharma companies to accelerate Paragraph IV strategies. Quytech’s generative AI tool for pharmaceutical patent circumvention, which the company built to analyze originator patent claims and suggest structurally novel alternatives outside the claim scope, represents one commercial implementation of this approach. The tool ingests the full patent text of a branded compound’s patent family, identifies the structural features required to infringe each claim, and proposes novel structures that achieve similar biological activity without reproducing the patented structural motifs.

The IP implications of AI-assisted Paragraph IV strategy are still developing. If a generic challenger uses an AI system to design a compound that circumvents an originator’s patent, the compound itself is patentable as a new composition of matter, and the generic company can file both an ANDA for a bioequivalent formulation and a separate NDA for its AI-designed analog. This hybrid strategy has not yet been prominently litigated, but the commercial logic is clear: a company that can design around an originator’s patent using AI, then file its own composition-of-matter patent on the circumventing structure, achieves both freedom to operate and a new exclusivity period for the modified compound.

10.3 REMS, Regulatory Exclusivity, and Complementary Protections

Risk Evaluation and Mitigation Strategies (REMS) programs, required by FDA for drugs with serious safety concerns, can function as complementary commercial exclusivity mechanisms. If a branded drug’s REMS program restricts distribution to a limited network of certified pharmacies and prescribers, generic challengers have more limited access to the approved drug for bioequivalence testing, creating practical delays in generic entry. The FTC has scrutinized this kind of conduct; its 2020 settlement with Indivior, the maker of Suboxone, resolved allegations that the company impeded generic competition for the drug.

Orphan drug designation, available for drugs treating diseases with fewer than 200,000 US patients, provides seven years of post-approval marketing exclusivity independent of patent status. AI-driven drug repurposing programs frequently target rare disease indications because the smaller patient populations reduce trial size requirements and the orphan exclusivity period provides meaningful protection even for drugs with narrow patent claims. Recursion Pharmaceuticals’ pipeline includes multiple orphan disease programs, including REC-994 for cerebral cavernous malformation, where the orphan designation provides commercial exclusivity into the early 2030s for a patient population that would otherwise represent an unattractive market for a conventional pharma company.

Key Takeaways: Patent Landscape

The average branded drug now carries 7.5 Orange Book-listed patents, compared to 3.4 in 2000. AI tools are being deployed by generic challengers for automated patent circumvention design, not just by originators for discovery. Orphan drug designations (7-year exclusivity) and REMS programs provide complementary commercial protection mechanisms that AI-driven rare disease programs exploit systematically.

11. Case Studies: Insilico Medicine, Exscientia, Recursion, and the AI-Native Cohort

11.1 Insilico Medicine: Rentosertib and the TNIK Hypothesis

Insilico Medicine was founded in 2014 by Alex Zhavoronkov with an explicit mandate to use AI to address Eroom’s Law. The company’s Pharma.AI platform integrates three functional modules. PandaOmics conducts multi-omics target identification using gene expression, proteomics, single-cell sequencing, and clinical data to score biological targets by strength of disease association. Chemistry42 generates de novo small molecules against prioritized targets using RL-guided generative models. InClinico predicts Phase II clinical trial success probability from a combination of drug-target biology, trial design parameters, and historical trial outcome data.

The TNIK program demonstrates the integrated platform in its most commercially advanced form. PandaOmics identified TNIK (Traf2- and Nck-interacting kinase) as a novel fibrosis target by analyzing transcriptomic data from IPF patient biopsies and identifying TNIK as a convergence point in multiple dysregulated fibrogenic pathways. TNIK had not previously been pursued as a therapeutic target in fibrosis. Chemistry42 generated novel TNIK inhibitors that did not exist in any compound library. The preclinical candidate ISM001-055, ultimately renamed Rentosertib, was selected in 18 months at a reported cost of $2.6 million.

The IP architecture is worth examining in detail. Insilico holds patent applications covering the use of TNIK inhibitors in the treatment of fibrotic diseases, the specific structural class of compounds containing ISM001-055, and the method of identifying TNIK inhibitors using the Chemistry42 approach. The Chemistry42 platform itself is held as a trade secret. This layered structure means that even if a competitor independently identified TNIK as a target, they could not use Insilico’s compound class without a license, and they could not reproduce the AI discovery methodology without independently developing a comparable generative platform.

The Phase 2a data reported in 2024 showed a statistically significant reduction in the rate of FVC decline (a key spirometric endpoint in IPF) at the 30 mg twice-daily dose compared to placebo. The effect size was smaller than the pivotal data for nintedanib and pirfenidone, the current standard of care, but the safety profile was cleaner and the first-in-class mechanism justifies continued development. A Phase 2b trial is in planning.

11.2 Exscientia: Precision Oncology and the 163-Compound Discovery

Exscientia’s most-cited achievement is the design of EXS-21546, an A2A adenosine receptor antagonist, using 163 synthesized compounds to identify a Phase I-ready candidate. The A2A receptor is expressed on T cells and natural killer cells, where its activation by tumor-derived adenosine suppresses anti-tumor immunity. Blocking A2A receptor signaling in the tumor microenvironment theoretically restores immune cell cytotoxicity.

The Centaur Chemist AI platform that produced EXS-21546 uses a closed-loop design process: a Bayesian optimization algorithm proposes compounds based on a learned model of the structure-activity landscape; a human chemist evaluates synthetic feasibility and proposes modifications; the optimized compound is synthesized and tested; and the assay results update the Bayesian model for the next cycle. The key efficiency gain is that the Bayesian model predicts the most informative next compound to synthesize, rather than synthesizing all analogs in a structural series and testing them retrospectively.
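The "most informative next compound" step corresponds to maximizing an acquisition function over candidate structures. The sketch below uses expected improvement, a standard acquisition function in Bayesian optimization. Exscientia’s actual acquisition function and surrogate model are not public, so the choice of expected improvement, the compound names, and the predicted potency values are all illustrative assumptions.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def expected_improvement(mean: float, std: float, best_so_far: float) -> float:
    """Expected improvement over the best measured value (higher is better).

    mean, std: the surrogate model's predicted potency and its uncertainty
    for an unsynthesized compound.
    """
    if std <= 0:
        return max(0.0, mean - best_so_far)
    z = (mean - best_so_far) / std
    return (mean - best_so_far) * _STD_NORMAL.cdf(z) + std * _STD_NORMAL.pdf(z)


def pick_next(candidates: dict, best_so_far: float) -> str:
    """Return the candidate id with the highest expected improvement.

    candidates maps a compound id to a (predicted mean, predicted std) pair.
    """
    return max(candidates,
               key=lambda cid: expected_improvement(*candidates[cid], best_so_far))


if __name__ == "__main__":
    # Hypothetical pIC50 predictions; the best measured compound so far is 7.0.
    candidates = {
        "cmpd_A": (7.0, 0.1),   # safe bet, little uncertainty
        "cmpd_B": (6.8, 1.0),   # slightly lower mean, much more to learn
        "cmpd_C": (6.0, 0.2),   # confidently worse
    }
    print(pick_next(candidates, best_so_far=7.0))
```

The uncertain compound wins over the safe one because expected improvement rewards candidates that could plausibly beat the current best, which is how a closed loop can reach a candidate with a small number of syntheses.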

EXS-21546 has completed Phase 1a in healthy volunteers and entered a Phase 1/2 expansion cohort in patients with advanced solid tumors with high adenosine signatures identified by the companion biomarker assay. The biomarker strategy is Exscientia’s most commercially differentiated element: if the biomarker selection proves predictive in the Phase 2 expansion, the companion diagnostic becomes a protectable IP asset and a barrier to generic substitution.

Exscientia was acquired by Recursion Pharmaceuticals in 2024 in an all-stock transaction valued at approximately $688 million, consolidating two of the three most prominent AI-native drug discovery platforms. The acquisition gives Recursion access to Exscientia’s medicinal chemistry AI capabilities and its clinical-stage pipeline, while giving Exscientia programs access to Recursion’s 60-petabyte biological dataset.

11.3 Recursion Pharmaceuticals: The Data Industrialist Model

Recursion’s core hypothesis is that biology is fundamentally a pattern recognition problem and that the company with the largest, highest-quality dataset of biological perturbation responses will build the most accurate predictive models. Their platform, Recursion OS, executes this thesis through industrialized cellular biology: automated robotic liquid handling, fluorescent imaging of human cells after genetic or chemical perturbation, and computer vision models that extract phenotypic features from high-resolution images.

The scale is genuinely industrial. Recursion runs approximately 2.2 million cellular perturbation experiments per week. Each experiment images cells at multiple timepoints across multiple fluorescent channels capturing nucleus morphology, cytoskeletal organization, and organelle distribution. The resulting phenotypic profiles, which Recursion calls ‘embeddings,’ are compared across perturbations to identify compounds that phenotypically resemble genetic knockouts of disease-relevant targets, a technique called morphological profiling or Cell Painting when using a standardized fluorescent staining protocol.
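Comparing phenotypic profiles across perturbations reduces, at its simplest, to a similarity search over vectors. The sketch below ranks compounds by cosine similarity to a knockout profile. Recursion’s actual embedding models and similarity metrics are not disclosed; cosine similarity is a common choice used here as an assumption, and the three-dimensional vectors are toy stand-ins for learned embeddings with hundreds of dimensions.

```python
import math


def cosine_similarity(u, v) -> float:
    """Cosine of the angle between two phenotypic profile vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("profiles must be non-zero vectors")
    return dot / (norm_u * norm_v)


def rank_by_similarity(reference, profiles: dict):
    """Return profile names sorted from most to least similar to the reference."""
    return sorted(profiles,
                  key=lambda name: cosine_similarity(reference, profiles[name]),
                  reverse=True)


if __name__ == "__main__":
    # Hypothetical profile of a disease-relevant gene knockout.
    knockout = [1.0, 0.0, -1.0]
    compounds = {
        "cmpd_1": [0.9, 0.1, -0.8],   # closely mimics the knockout phenotype
        "cmpd_2": [-1.0, 0.0, 1.0],   # produces the opposite phenotype
        "cmpd_3": [0.0, 1.0, 0.0],    # unrelated phenotype
    }
    print(rank_by_similarity(knockout, compounds))
```

A compound that mimics the knockout is a candidate inhibitor of that target, while one with the opposite profile may be of interest when the disease phenotype itself resembles the knockout.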

As of early 2026, Recursion has accumulated over 60 petabytes of biological data, which it calls the ‘Map of Biology.’ The data asset is protected through a combination of trade secret law (the specific experimental protocols and model architectures are not disclosed) and practical replication barriers (reproducing 60 petabytes of structured biological data would require years of capital-intensive experimentation).

Recursion’s clinical pipeline includes REC-994 for cerebral cavernous malformation (Phase 2), REC-2282 for NF2-related schwannomatosis (Phase 2), and multiple additional programs in CNS and oncology indications. The company has also expanded into AI-enabled chemistry through its acquisition of Cyclica (a molecular simulation company) and Valence Discovery (a generative chemistry company), adding de novo design capabilities to its existing phenotypic discovery platform.

The BioHive-2 supercomputer, built in collaboration with NVIDIA, provides the computational infrastructure for processing Recursion’s dataset. BioHive-2 uses NVIDIA DGX H100 nodes and achieves peak performance in the exaflop range for mixed-precision ML workloads. The collaboration with NVIDIA is both a technical relationship and a commercial endorsement: NVIDIA has made strategic investments in Recursion as part of a broader thesis that pharmaceutical AI represents a major demand driver for GPU compute.

11.4 Isomorphic Labs: The DeepMind Spin-Out and the Next Generation of Structure-Based Design

Isomorphic Labs was spun out of Alphabet/DeepMind in 2021 with the specific mandate of applying AlphaFold-class structural biology models to drug discovery. The company operates as an Alphabet subsidiary with dedicated leadership. In January 2024 it signed landmark collaboration deals with Eli Lilly (up to $1.7 billion including milestones) and Novartis (up to $1.2 billion including milestones).

The technical capability that Isomorphic brings beyond AlphaFold 2’s protein structure prediction is structure-based drug design at scale: using the predicted protein structure to computationally design small molecules that bind to it, predicting the geometry of the drug-target complex, and scoring the predicted binding affinity. AlphaFold 3, published in Nature in May 2024, extended structure prediction from proteins alone to complexes of proteins with DNA, RNA, and small-molecule ligands, making it directly applicable to the lead optimization problem.

The IP positions from the Lilly and Novartis deals are not publicly disclosed in detail, but the standard structure for these collaborations gives the pharma partner composition-of-matter rights to drug candidates it advances into development, while Isomorphic retains platform IP rights. Milestone payments are triggered by preclinical nomination, IND filing, and Phase I/II/III initiation, with royalties on net sales for approved drugs. The reported deal values of $1.7 billion and $1.2 billion are aggregate ceiling figures that require multiple clinical successes across multiple programs; initial upfront payments are significantly smaller.
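The gap between a headline ceiling and a probability-weighted value can be made concrete with a short calculation. The sketch below is illustrative only: the upfront amount, milestone payments, and stage-transition probabilities are hypothetical round numbers, not terms from the Lilly or Novartis agreements, and the model ignores royalties and time discounting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Milestone:
    name: str
    payment_musd: float       # paid (in $M) only if the milestone is reached
    p_reach_from_prev: float  # conditional probability of reaching it from the prior stage


def headline_value(upfront_musd, milestones):
    """Ceiling figure: upfront plus every milestone, as if all were achieved."""
    return upfront_musd + sum(m.payment_musd for m in milestones)


def risk_adjusted_value(upfront_musd, milestones):
    """Upfront plus each payment weighted by the cumulative probability of reaching it."""
    expected = upfront_musd
    p_cumulative = 1.0
    for m in milestones:
        if not 0.0 <= m.p_reach_from_prev <= 1.0:
            raise ValueError(f"invalid probability for {m.name}")
        p_cumulative *= m.p_reach_from_prev
        expected += m.payment_musd * p_cumulative
    return expected


# Hypothetical single-program deal; all figures are assumptions for illustration.
UPFRONT_MUSD = 40.0
PROGRAM = [
    Milestone("preclinical nomination", 10.0, 0.5),
    Milestone("IND filing", 20.0, 0.5),
    Milestone("Phase I initiation", 40.0, 0.5),
    Milestone("Phase II initiation", 80.0, 0.5),
    Milestone("Phase III initiation", 160.0, 0.5),
]

print("headline ($M):", headline_value(UPFRONT_MUSD, PROGRAM))
print("risk-adjusted ($M):", risk_adjusted_value(UPFRONT_MUSD, PROGRAM))
```

Because the largest payments sit behind the latest milestones, where the cumulative probability is lowest, the risk-adjusted figure in a back-loaded structure like this one is a small fraction of the headline number.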

Key Takeaways: AI-Native Cohort

Insilico’s Rentosertib demonstrates end-to-end generative discovery from novel target to Phase 2a positive readout in under five years. Exscientia’s 163-compound discovery workflow and biomarker-first strategy represent the precision medicine design paradigm. Recursion’s data industrialization model has produced 60 petabytes of proprietary biological data and multiple Phase 2-stage programs. Isomorphic Labs is applying AlphaFold 3-class structure prediction directly to drug-protein complex design in billion-dollar pharma collaborations. These four companies represent distinct technical strategies for the same problem; no single approach has yet demonstrated late-stage clinical success across multiple programs.

12. Big Pharma’s Acquisition and Partnership Strategy: Where the Capital Is Going

12.1 The Strategic Rationale for External AI Investment

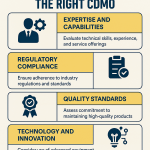

Large pharmaceutical companies face a structural dilemma on AI capability building: hire computational biologists and data scientists to build internal platforms, or pay for access to AI-native companies’ existing capabilities through partnerships, licensing deals, and acquisitions. The build option requires five to seven years and hundreds of millions in talent and infrastructure investment before generating pipeline output. The buy option provides immediate access to validated platforms but transfers future value creation to the acquired company’s investors.

Most large pharma has chosen a hybrid approach: building internal data infrastructure and foundational ML capabilities while partnering or acquiring for specific cutting-edge capabilities they cannot replicate internally in a reasonable timeframe. The AI investment wave from 2022 to 2025 has been dominated by platform partnerships rather than outright acquisitions, reflecting the uncertainty about which technical approach will prove most productive at late-stage clinical scale.

12.2 Pfizer: Generative AI for Discovery and Manufacturing

Pfizer has consistently positioned AI as a platform for compressing discovery timelines, with their stated benchmark of moving from target identification to preclinical candidate in 30 days for certain target classes. This figure applies to programs where AI-accelerated virtual screening and computational lead optimization eliminate the physical HTS step entirely. The relevant comparison is not 30 days versus the 5-to-6-year industry average; it is 30 days for the computational triage step versus the 3 to 6 months a physical HTS campaign requires for the same step.

Pfizer’s partnerships with AWS for generative AI infrastructure and with Flagship Pioneering’s Logica platform for AI-driven drug development reflect a strategy of building computational capability on top of existing cloud infrastructure rather than proprietary hardware. Their internal AI applications include clinical documentation automation (which Pfizer reports has reduced clinical document preparation time by 80%), manufacturing process optimization, and generative molecular design for specific target classes in oncology and rare diseases.

12.3 Roche/Genentech: The Lab-in-the-Loop Architecture

Roche describes its AI strategy as a ‘lab in the loop’: a closed-loop architecture in which computational models are trained on experimental data, used to make predictions that guide new experiments, and then retrained on the results of those experiments. In ML terms this is essentially an active learning loop, and the concept itself is well established. Implementing it in a pharmaceutical R&D context, however, requires solving non-trivial data integration problems: connecting automated liquid handling instruments, plate reader outputs, sequencing instruments, and imaging platforms into a unified data pipeline that the ML models can consume in near-real-time.
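The closed loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not Roche's or Genentech's implementation: `run_assay` is a hypothetical stand-in for a wet-lab measurement, the surrogate model is a simple nearest-neighbor predictor, and the selection rule (predicted value plus a bonus for distance from existing data) is one of many possible acquisition strategies.

```python
def run_assay(design):
    """Hypothetical stand-in for an experiment: response peaks at design 7."""
    return -float((design - 7) ** 2)


class NearestNeighborModel:
    """Toy surrogate: predicts the measured value of the closest tested design."""

    def fit(self, observations):
        self.observations = list(observations)

    def predict(self, design):
        nearest = min(self.observations, key=lambda obs: abs(obs[0] - design))
        return nearest[1]

    def distance_to_data(self, design):
        return min(abs(tested - design) for tested, _ in self.observations)


def lab_in_the_loop(candidates, seed_designs, rounds, explore_weight=1.0):
    """Train, pick the next experiment, run it, and retrain on the result."""
    observations = [(d, run_assay(d)) for d in seed_designs]
    model = NearestNeighborModel()
    for _ in range(rounds):
        model.fit(observations)  # (re)train on everything measured so far
        tested = {d for d, _ in observations}
        untested = [d for d in candidates if d not in tested]
        if not untested:
            break
        # Prefer designs predicted to score well, with a bonus for unexplored regions.
        next_design = max(
            untested,
            key=lambda d: model.predict(d) + explore_weight * model.distance_to_data(d),
        )
        observations.append((next_design, run_assay(next_design)))  # run the experiment
    return observations


history = lab_in_the_loop(candidates=range(11), seed_designs=[0, 10], rounds=4)
best_design, best_value = max(history, key=lambda obs: obs[1])
print("tested designs in order:", [d for d, _ in history])
print("best so far:", best_design, best_value)
```

In a production setting, the call to `run_assay` is where the integration burden sits: it stands for the instruments, plate readers, and data pipelines that have to return results in a form the model can consume.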

Genentech’s internal implementation includes generative AI applications for antibody optimization, neoantigen selection for personalized cancer vaccines, and transcriptomic analysis of clinical trial samples. Their collaboration with NVIDIA covers accelerated molecular dynamics simulation and AI-driven protein design. The technical backbone is a proprietary data lake that ingests experimental data from multiple Genentech research sites and makes it available to ML workflows under unified data governance.

12.4 AbbVie: ARCH and the Data Convergence Thesis

AbbVie’s AI strategy centers on what the company calls ‘data convergence,’ which is the centralization and integration of over 200 internal and external data sources into a single analytics platform called ARCH (the AbbVie R&D Convergence Hub). ARCH ingests EHR data from clinical trial sites, genomic and proteomic data from biomarker studies, public omics datasets, patent intelligence from DrugPatentWatch and similar platforms, and published literature.

The commercial hypothesis is that some insights are visible only when all these data sources are combined, and that external AI companies lacking the proprietary clinical data layers cannot reach them. An example would be a novel target suggested by the intersection of rare variant GWAS data, proteomic disease data, and clinical outcomes data from AbbVie’s own RA trial database. AbbVie’s competitive advantage in AI is therefore defined not by algorithmic sophistication but by data richness.

AbbVie’s use of generative AI for protein design specifically targets the bispecific antibody space, where designing molecules that engage two targets simultaneously requires modeling the geometry of two separate binding interfaces. The structural complexity of bispecific optimization is high enough that computational design provides significant advantages over conventional antibody engineering.

12.5 Novartis: The Isomorphic Bet and the 5-7 Year Horizon

Novartis CEO Vas Narasimhan has been publicly explicit about the time horizon for AI’s impact on drug design: he expects AI’s contribution to designing structurally superior medicines to become materially visible over the next five to seven years, rather than being already reflected in the current pipeline. The $1.2 billion Isomorphic collaboration addresses this timeline by giving Novartis access to AlphaFold 3-enabled structure-based design capabilities in its most data-rich therapeutic areas.

Novartis’s internal AI investments are concentrated on clinical trial design optimization, biomarker analysis, and manufacturing process control. Their Quantitative Systems Pharmacology group uses ML-enhanced PBPK-PD models for dose selection in oncology trials. Their AI capabilities in manufacturing include predictive maintenance for bioreactor operations, real-time process analytical technology (PAT) monitoring, and yield optimization for monoclonal antibody production.

Key Takeaways: Big Pharma AI Strategy

The dominant strategic pattern across large pharma is hybrid: build internal data infrastructure and foundational ML capabilities while acquiring external access to specific cutting-edge platforms. Data richness, not algorithmic sophistication, is the primary internal competitive advantage. For AbbVie and similar companies with large proprietary clinical datasets, the AI opportunity is in mining that proprietary data for insights not accessible to external platforms. Valuation of external AI partnerships should include milestone-weighted probability assessments rather than treating headline deal values as committed capital.

13. Data as a Competitive Moat: Proprietary Datasets and Patent-Protected Workflows

13.1 Why Data Beats Algorithms in the Long Run

In the ML literature, the empirical scaling laws (Kaplan et al., 2020; Hoffmann et al., 2022) establish that model performance scales predictably with training data volume, parameter count, and compute. For a given model size, more training data lowers loss in a predictable way, with diminishing returns, and Hoffmann et al. found that large models of the time were undertrained on data relative to their parameter count. These results were obtained on language models, but to the extent the pattern carries over, the company with the largest and highest-quality domain-specific dataset has a structural advantage that cannot be overcome by algorithmic cleverness alone.
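The shape of this relationship can be shown with the parametric loss form used by Hoffmann et al., in which loss is an irreducible term plus one term that shrinks with parameter count and one that shrinks with data volume. The sketch below uses that functional form only; the constants are hypothetical placeholders chosen for readability, not fitted values from either paper or from any pharmaceutical dataset.

```python
def parametric_loss(n_params, n_examples, E, A, B, alpha, beta):
    """Loss = irreducible term + model-size term + data-size term."""
    return E + A / n_params**alpha + B / n_examples**beta


def data_unlimited_floor(n_params, E, A, alpha):
    """Loss a model of this size approaches as data volume grows without bound."""
    return E + A / n_params**alpha


# Hypothetical constants for illustration only.
CONSTANTS = dict(E=1.0, A=100.0, B=100.0, alpha=0.3, beta=0.3)
N_PARAMS = 1e8  # model size held fixed

data_sizes = [1e6, 1e7, 1e8, 1e9]
losses = [parametric_loss(N_PARAMS, d, **CONSTANTS) for d in data_sizes]
floor = data_unlimited_floor(
    N_PARAMS, CONSTANTS["E"], CONSTANTS["A"], CONSTANTS["alpha"]
)

for d, loss in zip(data_sizes, losses):
    print(f"examples={d:.0e}  loss={loss:.3f}")
print(f"floor at this model size: {floor:.3f}")
```

Two features of the curve matter for the argument: each tenfold increase in data still lowers loss, but by less than the previous one, and at a fixed model size the loss cannot drop below the floor set by the other two terms.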

In pharmaceutical AI, the relevant domain-specific data falls into four classes: experimental bioactivity data (HTS results, in vivo pharmacology, mechanism-of-action characterization); ADMET data (in vitro and in vivo toxicology, pharmacokinetics, safety biomarker data); clinical data (patient-level genomic, proteomic, and imaging data from clinical trials); and real-world evidence (EHR data, claims data, registry data from post-approval surveillance).

Each class is scarce, expensive to generate, and difficult to replicate. A proprietary HTS dataset covering 10 million compound-target activity measurements, accumulated over 20 years of drug discovery programs, is worth significantly more than a comparable dataset from public sources because it includes the experimental context (assay format, concentration range, biological system) that determines the reliability of the measurements.

13.2 Federated Learning: Sharing Insight Without Sharing Data

A structural barrier to pharmaceutical AI is that the most valuable data is proprietary to individual companies, and competitive dynamics prevent direct sharing. Federated learning addresses this by allowing ML models to be trained across multiple organizations’ datasets without the data ever leaving each organization’s servers. The model weights are shared and aggregated centrally; the raw data is not.
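The weight-sharing scheme described above can be sketched with federated averaging on a toy problem. This is a minimal illustration under stated assumptions, not the protocol of any real consortium: the model is a one-variable linear regression, the two ‘sites’ and their data are hypothetical, and production systems add secure aggregation and other privacy safeguards omitted here.

```python
def local_update(weights, private_data, lr=0.05, epochs=20):
    """Run gradient descent on one site's data; only weights leave the site."""
    w, b = weights
    n = len(private_data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in private_data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in private_data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b), n  # updated weights plus sample count, never the raw data


def federated_average(updates):
    """Central aggregation: average site weights, weighted by sample count."""
    total = sum(n for _, n in updates)
    w = sum(weights[0] * n for weights, n in updates) / total
    b = sum(weights[1] * n for weights, n in updates) / total
    return (w, b)


def train_federated(site_datasets, rounds=50):
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        # Each site starts from the shared model and trains locally.
        updates = [local_update(global_weights, data) for data in site_datasets]
        global_weights = federated_average(updates)
    return global_weights


# Hypothetical sites, each observing a different range of the relation y = 2x + 1.
site_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
site_b = [(3.0, 7.0), (4.0, 9.0)]

w, b = train_federated([site_a, site_b])
print(f"shared model: y = {w:.3f}x + {b:.3f}")
```

The central server only ever sees the `(w, b)` pairs and sample counts returned by `local_update`; the `(x, y)` measurements stay inside each site's function call, which is the property that makes the approach acceptable to competitors.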

Melloddy, a European consortium whose 10 pharma partners were Amgen, Astellas, AstraZeneca, Bayer, Boehringer Ingelheim, GSK, Janssen, Merck KGaA, Novartis, and Servier, ran a federated multi-task learning project from 2019 to 2022 in which each company contributed internal bioactivity data to train a shared molecular property prediction model. The published results reported that the federated model outperformed the models each partner trained on its own data alone, supporting the claim that federated data aggregation produces measurably better models without requiring data sharing.

The IP implications of federated learning are unresolved. If a federated training run produces a model that predicts a novel drug-target interaction for a compound that one company synthesized but another company’s data characterized, who owns the resulting insight? The Melloddy consortium handled this through contractual carve-outs specifying that each partner retains full IP rights over its own proprietary data and over any downstream drug discovery applications derived from the federated model within its own programs. The model weights themselves were treated as shared consortium property.

13.3 Patent-Protected Data Generation Workflows

Data generation workflows can be patented as method inventions when they involve novel technical steps that produce datasets not obtainable by conventional means. In practice, many companies rely on trade secrecy instead of, or alongside, patents. Recursion’s Cell Painting implementation, for example, includes proprietary modifications to the standard fluorescent staining protocol and custom computer vision models for feature extraction, both of which are protected as trade secrets. The standard Cell Painting assay developed by Anne Carpenter’s group at the Broad Institute is publicly available, but Recursion’s specific modifications and the specific machine learning models trained on its data are not.

Synthetic data generation is an emerging area with IP implications. If an ML model is used to generate synthetic training data that augments a sparse real dataset, the synthetic data generation method and the resulting synthetic dataset may qualify for copyright or trade secret protection, depending on jurisdiction. The legal basis for copyright protection of AI-generated synthetic data is currently unsettled, but several law firms have advised clients to establish copyright registration for synthetic biological datasets under the ‘selection and arrangement’ doctrine available for compilations.

Key Takeaways: Data Moat

Proprietary experimental bioactivity, ADMET, clinical, and real-world evidence datasets produce sustainable competitive advantages that algorithmic improvements cannot overcome. Federated learning allows multi-company model improvement without data sharing, as demonstrated by the Melloddy consortium’s published results. Novel data generation workflows are protectable as method patents or trade secrets. The IP ownership of insights derived from federated models requires explicit contractual specification before the training run, not after.