The Uncomfortable Truth About Drug Discovery

Here is a number that should bother every executive in the pharmaceutical industry: $2.6 billion. That is the estimated average cost, in fully capitalized terms, of bringing a single drug from initial concept to FDA approval, according to a widely cited 2016 analysis from the Tufts Center for the Study of Drug Development [1]. After adjusting for inflation and updated failure-rate assumptions, some analysts now put that figure north of $3 billion for late-stage programs.

Here is another number: 90 percent. That is roughly how often a drug candidate that enters Phase I clinical trials fails before reaching approval [2]. The failure rate has not improved meaningfully in decades. The biology is hard. The chemistry is hard. The regulatory hurdles are real. And the timeline — historically 10 to 15 years from target identification to approval — is brutal for capital allocation.

These two numbers define the structure of modern pharmaceutical R&D. They explain why drug companies run enormous portfolios: to hedge against the near-certainty of individual program failure. They explain why drug pricing becomes politically toxic: companies are recovering the cost of failed drugs, not just the one that worked. And they explain why virtually every major pharmaceutical company, every venture-backed biotech, and every academic center with ambitions beyond tenure is now pouring money into artificial intelligence.
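The portfolio logic can be made concrete with a little arithmetic. The sketch below assumes each program succeeds independently with the roughly 10 percent probability implied above; real programs are correlated, so the output is illustrative only, and the function names are invented for this example.

```python
def prob_at_least_one_success(n_programs: int, p_success: float = 0.10) -> float:
    """Chance that a portfolio of independent programs yields at least one approval."""
    return 1.0 - (1.0 - p_success) ** n_programs


def expected_programs_per_approval(p_success: float = 0.10) -> float:
    """Average number of Phase I entrants needed to produce one approval."""
    return 1.0 / p_success


for n in (1, 5, 10, 20, 30):
    p = prob_at_least_one_success(n)
    print(f"{n:>2} programs: P(at least one approval) = {p:.2f}")
```

Under these simplified assumptions, a company needs roughly 22 programs before the chance of at least one approval reaches 90 percent, and the one drug that works has to carry the cost of about nine that did not.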

The pitch is simple: AI can shrink both numbers. Find the right targets faster. Generate better molecules. Predict clinical failures before they happen. Run smarter trials. The pitch is also, in many cases, overstated. The actual evidence — the patent filings, the clinical trial registrations, the deal structures, the approvals — tells a more precise and interesting story than the press releases do.

This article is about that evidence. It uses patent data, including the tracking infrastructure built by DrugPatentWatch, to map where AI is genuinely changing the economics of drug discovery and where the hype has outrun the science. The goal is not to debunk AI in pharma. The goal is to tell you what is actually happening, because if you make capital allocation decisions, regulatory strategy decisions, or competitive intelligence decisions in this industry, the gap between what is real and what is marketed costs money.

Part One: What “Drug Discovery” Actually Means (And Why Each Step Is Hard)

Before examining where AI fits, it helps to be precise about what the drug discovery process involves. The phrase gets used loosely. Precision matters here because AI tools are not uniformly useful across the pipeline — they are dramatically better at some steps than others, and the business value depends entirely on which step you are talking about.

Target Identification and Validation

A drug target is a biological molecule — typically a protein — whose activity is causally linked to a disease. Identifying which proteins matter in a given disease state, and which among those can be modulated by a small molecule or biologic without causing intolerable side effects, is the first major challenge.

The human genome contains roughly 20,000 protein-coding genes. The number of proteins that are genuinely “druggable” (meaning they have binding sites accessible to drug-like molecules and are functionally relevant to disease) is estimated at between 3,000 and 4,500 [3]. Of those, approximately 800 to 1,000 are currently targeted by approved drugs. In other words, the pharmaceutical industry has already picked much of the low-hanging fruit from the druggable proteome, and identifying novel, validated targets is increasingly difficult.

This is where AI’s first genuine value proposition emerges. Large language models trained on biomedical literature, combined with graph neural networks that model protein-protein interaction networks, can surface non-obvious target candidates at a scale no human research team can replicate. The question is always validation: computational prediction of target relevance does not substitute for experimental confirmation.

Hit Identification and Lead Generation

Once you have a target, you need a molecule that binds to it in a biologically meaningful way. Traditional approaches include high-throughput screening (HTS), where large compound libraries — sometimes millions of molecules — are tested against a target in a robotic assay format. HTS is expensive, slow by modern standards, and frequently produces false positives that waste years of chemistry effort.

Generative AI models, including variational autoencoders, generative adversarial networks, and more recently diffusion-based molecular design tools, can in principle explore chemical space far more efficiently than HTS. The critical word is “principle.” Real-world performance depends on the quality of training data, the accuracy of the scoring function used to evaluate generated molecules, and the reliability of synthesis routes for novel structures — a problem AI has not fully solved.

Lead Optimization

A hit molecule binds to the target. A lead molecule binds well, has acceptable preliminary safety indicators, and shows early signs of drug-likeness. Lead optimization is the process of making hundreds to thousands of chemical analogs of a hit, testing them, and iteratively improving potency, selectivity, and pharmacokinetic properties simultaneously. It is notoriously slow and iterative.
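Improving several properties at once is usually handled with some form of multi-parameter score. The sketch below uses a desirability-style geometric mean, a common general pattern rather than any particular company's method; the property thresholds and the `Analog` fields are illustrative assumptions.

```python
from dataclasses import dataclass
from math import prod


@dataclass
class Analog:
    name: str
    pic50: float         # potency as -log10(IC50 in molar); higher is better
    selectivity: float   # fold selectivity over the nearest off-target
    half_life_h: float   # pharmacokinetic half-life in hours


def ramp(value: float, low: float, high: float) -> float:
    """Map value linearly onto [0, 1]: 0 at or below low, 1 at or above high."""
    if value <= low:
        return 0.0
    if value >= high:
        return 1.0
    return (value - low) / (high - low)


def desirability(a: Analog) -> float:
    """Geometric mean of per-property scores, so one poor property drags the total down."""
    scores = [
        ramp(a.pic50, 6.0, 9.0),          # assumed potency window
        ramp(a.selectivity, 10.0, 100.0), # assumed selectivity window
        ramp(a.half_life_h, 1.0, 8.0),    # assumed half-life window
    ]
    return prod(scores) ** (1.0 / len(scores))


potent_but_unbalanced = Analog("analog_1", pic50=9.2, selectivity=12.0, half_life_h=2.0)
balanced = Analog("analog_2", pic50=7.5, selectivity=60.0, half_life_h=5.0)
ranked = sorted([potent_but_unbalanced, balanced], key=desirability, reverse=True)
print([a.name for a in ranked])
```

The geometric mean is a deliberate choice here: a very potent analog with poor selectivity and a short half-life ranks below a moderately potent but balanced one, which mirrors the trade-offs chemists face in each optimization cycle.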

This step is where most established pharmaceutical companies have concentrated their AI tools, because the data density is highest here. These companies have years of internal SAR (structure-activity relationship) data from their own programs, and AI models trained on that proprietary data can meaningfully accelerate optimization cycles.

ADMET Prediction

ADMET stands for Absorption, Distribution, Metabolism, Excretion, and Toxicity. A molecule can be a brilliant binder to its target and still fail as a drug if it does not reach target tissue at therapeutic concentrations, if it is metabolized too quickly, or if it proves toxic to the liver, heart, or CNS. Many of the drugs that fail in Phase II clinical trials fail for ADMET reasons that, in retrospect, might have been predictable earlier.

AI-based ADMET prediction tools have improved substantially, and this is one of the areas where the business case for AI is clearest: catching bad molecules early is far cheaper than catching them in the clinic. The limitation is that ADMET failures remain hard to predict for genuinely novel chemical scaffolds — models trained on known chemical space may not generalize.
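One common way to flag the generalization problem is an applicability-domain check: before trusting a prediction, measure how similar the new molecule is to anything the model was trained on. The sketch below assumes molecules have already been encoded as sets of fingerprint bit indices (a cheminformatics toolkit would normally produce these); the 0.4 cutoff and all names are illustrative assumptions, not a standard.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two fingerprint bit sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_training_similarity(query: frozenset, training: list) -> float:
    """Similarity between the query and its closest training-set molecule."""
    return max((tanimoto(query, t) for t in training), default=0.0)


def in_domain(query: frozenset, training: list, cutoff: float = 0.4) -> bool:
    """True if the query resembles at least one training molecule closely enough."""
    return nearest_training_similarity(query, training) >= cutoff


# Toy fingerprints: each integer stands for one structural feature bit.
training_set = [frozenset({1, 2, 3, 4}), frozenset({3, 4, 5, 6})]
familiar_scaffold = frozenset({1, 2, 3, 5})
novel_scaffold = frozenset({3, 10, 11, 12})

print(in_domain(familiar_scaffold, training_set))  # close to known chemistry
print(in_domain(novel_scaffold, training_set))     # prediction deserves less trust
```

A check like this does not make the ADMET prediction better; it only tells you when the model is being asked about chemistry it has not seen, which is when experimental confirmation matters most.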

Clinical Development

Clinical trials are where the real money gets spent. Phase I costs tens of millions of dollars. Phase II can cost hundreds of millions. Phase III routinely runs into the billions for large indications. AI applications in clinical development include patient stratification (identifying the right subpopulations most likely to respond to treatment), trial design optimization, synthetic control arms using real-world data, and biomarker identification.

This is also where regulatory complexity intensifies. FDA expectations for AI-supported clinical evidence are still evolving, and companies that move too aggressively risk creating regulatory liability they have not anticipated.

Part Two: The Patent Signal — What AI Filings Tell You That Press Releases Don’t

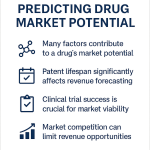

Patent filings are one of the most reliable leading indicators in pharmaceutical competitive intelligence, precisely because they predate public disclosure by 12 to 18 months on average and because they require legal precision that press releases don’t. When a company files a patent on an AI-generated drug candidate, it is making a legal commitment to the novelty and utility of that compound. That is a much stronger signal than a conference presentation.

DrugPatentWatch has built what is arguably the most comprehensive public-facing database for tracking pharmaceutical patent activity, including the ability to follow specific companies’ AI-related patent portfolios, monitor exclusivity cliffs, and identify where patent protection is thin or contested. For competitive intelligence professionals, this kind of systematic patent surveillance is the difference between reacting to a competitor’s announcement and predicting it.

The Numbers Behind the AI Patent Surge

The growth in AI-related pharmaceutical patents is not a rounding error. A 2023 analysis by the European Patent Office found that AI-related patent applications in the life sciences grew at a compound annual rate exceeding 45 percent between 2015 and 2022, with the pharmaceutical and medical sectors accounting for the largest share of that growth [4]. The United States Patent and Trademark Office has observed similar trends in domestic filings.
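To see what a compound annual rate of that size means in absolute terms, the growth can be compounded out. This sketch assumes 2015 as the base year and seven compounding periods through 2022, and it uses 45 percent as a floor since the reported rate exceeded that figure.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Total growth factor implied by a compound annual growth rate."""
    return (1.0 + cagr) ** years


multiple = growth_multiple(0.45, 2022 - 2015)
print(f"Filings in 2022 are at least {multiple:.1f}x the 2015 level")
```

At 45 percent a year, annual filing volume grows more than thirteenfold over the period, which is why the surge cannot be dismissed as noise.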

What is less commonly reported is the distribution of those filings. The AI patent surge in pharma is not evenly spread. A small number of organizations — a mix of technology companies that pivoted into pharma, specialized AI drug discovery companies, and a handful of large pharmaceutical companies with dedicated AI programs — account for a disproportionate share of the filings. This concentration matters for competitive strategy.

The companies generating the most AI-related pharmaceutical patents are not always the ones generating the most press. Insilico Medicine, a private company incorporated in Hong Kong with R&D operations in the United States and China, has an AI patent portfolio that rivals or exceeds that of companies valued at ten times as much. This is visible in patent databases in a way it is not visible in most analyst coverage.

What to Look for in an AI Drug Patent

Not all patents labeled “AI” are equal. The distinction that matters most for competitive intelligence is between patents on the AI process itself — the algorithm, the model architecture, the training methodology — and patents on the drug compounds or biological mechanisms that AI was used to identify.

Process patents protecting AI drug discovery methodologies are difficult to enforce broadly and frequently face obviousness challenges. A company that can only patent its process, not its outputs, has a defensible research tool but a weak product moat.

Compound patents and method-of-treatment patents on AI-identified drug candidates are what actually matter commercially. When a company files composition-of-matter patents on specific chemical structures that its AI generated, it is building the same kind of IP protection that traditional drug discovery generates. The AI origin of the compound is not legally significant for patentability purposes under current USPTO doctrine — what matters is whether the compound is novel and non-obvious, and whether there is adequate disclosure.

This creates an interesting tracking opportunity. Using DrugPatentWatch, analysts can monitor when a company that has publicly positioned itself as an AI drug discovery platform begins filing composition-of-matter patents on specific compounds — a transition that signals the platform is generating real drug candidates, not just research results.
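The transition described above can be tracked with very simple bookkeeping once filings have been labeled by claim type. The sketch below uses hand-labeled, hypothetical records; the record format is an assumption made for illustration and is not DrugPatentWatch's export schema or API.

```python
from collections import Counter, defaultdict

# Hypothetical, hand-labeled records for one company: (filing_year, claim_type).
filings = [
    (2019, "process"), (2019, "process"),
    (2020, "process"), (2020, "process"), (2020, "process"),
    (2021, "process"), (2021, "composition"),
    (2022, "composition"), (2022, "composition"), (2022, "method_of_treatment"),
]


def product_share_by_year(records):
    """Fraction of each year's filings that claim compounds or treatments, not AI methods."""
    counts = defaultdict(Counter)
    for year, claim_type in records:
        counts[year][claim_type] += 1
    shares = {}
    for year in sorted(counts):
        total = sum(counts[year].values())
        product_filings = total - counts[year]["process"]
        shares[year] = product_filings / total
    return shares


def first_product_year(records):
    """Earliest year with a non-process filing, or None if there is none."""
    years = [year for year, claim_type in records if claim_type != "process"]
    return min(years) if years else None


print(product_share_by_year(filings))
print(first_product_year(filings))
```

The signal worth watching is the year the product share first moves off zero and whether it keeps rising afterward; a single compound filing followed by a return to process-only claims tells a different story.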

The Human Inventor Requirement and Why It Creates a Hidden Risk

The USPTO’s current position, clarified through a series of guidance documents issued between 2023 and 2024, is unambiguous: AI systems cannot be listed as inventors on patents. A human must have made a significant contribution to the conception of the claimed invention [5]. This creates a structural risk for companies whose AI systems generate drug candidates with minimal human modification.

The risk is not theoretical. A company that patents an AI-generated compound without a defensible narrative about human inventive contribution faces the prospect of an inventorship challenge in litigation, which can invalidate the patent. Competitors who understand this vulnerability have a potential tool to attack patents on AI-generated compounds.

The practical implication is that pharmaceutical companies investing in AI drug discovery need to design their workflows to ensure — and document — that human scientists make genuine inventive contributions to the compounds that ultimately get patented. This is not just a legal formality; it requires thinking carefully about how AI tools interface with human creativity in the medicinal chemistry process.

Part Three: Where AI Is Actually Working — Evidence From the Clinic

Enough theory. Let’s look at what has actually happened.

AlphaFold: The Genuine Inflection Point

In November 2020, DeepMind published AlphaFold2’s performance on the Critical Assessment of Protein Structure Prediction (CASP) benchmark, and the results were genuinely startling. The system achieved accuracy that, for many protein families, was comparable to experimental methods like X-ray crystallography — but in hours rather than months, at negligible cost compared to experimental structure determination [6].

AlphaFold2’s subsequent open release, with predicted structures for virtually the entire human proteome and hundreds of millions of proteins from other organisms, changed the economics of structure-based drug design in a measurable way. X-ray crystallography of a target protein previously might cost $500,000 to $2 million and take six months to two years. A predicted structure from AlphaFold2 is free and available immediately.

The caveat that every practicing structural biologist will immediately offer is important: predicted structures are not equivalent to experimentally determined structures for drug design purposes. The confidence of predictions varies widely by protein region. Disordered regions remain poorly predicted. Dynamic protein behavior — the conformational changes that happen when a drug binds — is not captured by static structure prediction. And for novel target families without close homologs in the training data, AlphaFold2’s predictions are less reliable.
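The variation in confidence is something a user can inspect directly. PDB-format files from the AlphaFold Protein Structure Database store the per-residue confidence score (pLDDT, on a 0 to 100 scale) in the B-factor column, and the database labels residues below 70 as low confidence. The sketch below assumes a standard fixed-column PDB file; the function names and the example file name are hypothetical.

```python
def residue_plddt(pdb_lines):
    """Return {(chain, residue_number): pLDDT} read from the CA atom of each residue."""
    scores = {}
    for line in pdb_lines:
        # Fixed-column PDB layout: atom name in columns 13-16, chain ID in 22,
        # residue number in 23-26, B-factor (pLDDT in AlphaFold files) in 61-66.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain = line[21]
            residue_number = int(line[22:26])
            scores[(chain, residue_number)] = float(line[60:66])
    return scores


def low_confidence_residues(scores, cutoff=70.0):
    """Residues whose pLDDT falls below the cutoff, in sorted order."""
    return sorted(key for key, plddt in scores.items() if plddt < cutoff)


# Usage with a downloaded model (file name is a placeholder):
# with open("predicted_model.pdb") as handle:
#     flagged = low_confidence_residues(residue_plddt(handle))
```

If the residues lining a proposed binding pocket show up in the flagged list, that is a reason to seek an experimental structure before committing chemistry resources to the predicted one.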

But the directional impact on drug discovery is real. A 2023 survey of pharmaceutical and biotechnology companies by the Boston Consulting Group found that 78 percent of respondents with active AI programs reported using AlphaFold2 or derivative tools in at least one active drug discovery project [7]. Structure-based drug design, which previously required significant experimental infrastructure, is now accessible to organizations that could not have pursued it before. This is expanding the competitive field for drug discovery — which has implications for the patent landscape that most large pharmaceutical companies have not fully accounted for.

Insilico Medicine: The Benchmark Case

Insilico Medicine is the closest thing the industry currently has to a full proof-of-concept for AI-driven drug discovery. The company built its own AI platform — called Pharma.AI — which integrates target identification, generative chemistry, and clinical outcome prediction. In 2023, it moved INS018_055, a small molecule for idiopathic pulmonary fibrosis (IPF), into Phase II clinical trials after an unusually short preclinical timeline.

The company reported that the discovery process for INS018_055 took approximately 18 months from target identification to clinical candidate selection [8]. For context, the industry average for that same span of development is closer to five to six years. This is not a small difference.

The target — a novel kinase involved in fibrotic signaling — was identified through Insilico’s target identification module using RNA sequencing data from disease versus healthy tissue. The compound was generated and optimized using their generative chemistry engine. Preclinical ADMET profiles were predicted computationally before synthesis.

The Phase II trial is still running, so we do not have efficacy data. IPF is a brutal disease with high unmet need and a well-defined patient population, which makes it a useful proving ground. But the timeline compression is already a business fact, regardless of clinical outcome. A program that reaches clinical candidate selection in 18 months instead of the 60 or more that is typical costs dramatically less and creates real capital efficiency advantages.
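The capital-efficiency argument follows from how capitalized cost works: every dollar spent early is carried forward at the cost of capital until the drug is approved, so a shorter discovery phase means fewer years of compounding. The sketch below is a deliberately crude model that treats discovery spend as a single upfront outlay. The 10.5 percent rate, the $50 million outlay, and the seven-year clinical period are all illustrative assumptions, not figures reported by Insilico.

```python
def capitalized_cost(outlay: float, annual_rate: float, years_to_approval: float) -> float:
    """Carry an upfront outlay forward to the approval date at the cost of capital."""
    return outlay * (1.0 + annual_rate) ** years_to_approval


RATE = 0.105          # assumed annual cost of capital
OUTLAY_M = 50.0       # hypothetical discovery spend, in $ millions
CLINICAL_YEARS = 7.0  # hypothetical time from candidate selection to approval

fast = capitalized_cost(OUTLAY_M, RATE, 1.5 + CLINICAL_YEARS)  # 18-month discovery
slow = capitalized_cost(OUTLAY_M, RATE, 5.0 + CLINICAL_YEARS)  # 60-month discovery

print(f"18-month discovery: ${fast:.0f}M capitalized")
print(f"60-month discovery: ${slow:.0f}M capitalized")
```

Holding the outlay constant understates the advantage, because a shorter program also spends less in absolute terms. Even so, the same dollar carries a noticeably lighter capitalized burden when it has three and a half fewer years to compound.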

Insilico’s patent strategy illustrates the point about composition-of-matter versus process patents. The company has filed patents on INS018_055 itself — the specific chemical structure — not just on the AI process used to discover it. This is the right strategy. The AI platform is a competitive advantage in discovery speed; the compound patent is the commercial moat.

Recursion Pharmaceuticals: Scale as a Strategy

Recursion Pharmaceuticals takes a different approach. Rather than building a generative chemistry platform, the company built a massive high-throughput biology platform — imaging millions of cell-based experiments and using deep learning to extract phenotypic signatures that link genetic perturbations to cellular states. The idea is to generate an enormous map of biology that enables target identification and compound testing at scale.

The company has run more than 50 billion biological experiments on this platform and has built what it describes as a biological “map” of relationships between genes, diseases, and chemical perturbations [9]. Its partnership with Bayer and its acquisition of Cyclica in 2023 have added capabilities around molecular design and protein-drug interaction prediction.

Recursion’s pipeline is still predominantly early-stage. The company has multiple programs in Phase I and Phase II, including programs in oncology and rare genetic diseases, but none have yet reached Phase III. The business question — whether the massive upfront investment in the biological mapping infrastructure translates into clinical success at a rate that justifies the capital — remains open.

What Recursion demonstrates is a particular thesis about AI in drug discovery: that data generation is the moat, not the algorithm. The algorithms for analyzing biological imaging data are increasingly commoditized. But generating 50 billion experiments’ worth of data is a genuine competitive barrier.

Exscientia: Speed to Clinic

Exscientia, founded in Dundee and now headquartered in Oxford, was among the first companies to put AI-designed drug candidates into human trials. Its lead program, EXS21546, an adenosine A2A receptor antagonist for oncology, entered Phase I in 2021, reportedly after a discovery timeline of just 12 months from project initiation [10].

The company has since advanced multiple programs and entered significant partnerships with Sanofi (worth up to $5.2 billion in potential milestones), Sumitomo Pharma, and Bristol-Myers Squibb. The Sanofi deal in particular attracted attention because of its scale: it represented one of the largest AI drug discovery partnerships at the time of its announcement.

Exscientia’s approach centers on AI-optimized molecular design, where its platform is designed to run iterative design-make-test-analyze cycles with minimal human input in the design step. The company claims to have reduced the average number of compounds synthesized per drug candidate from hundreds to single digits — a change that, if replicated consistently, would transform the economics of medicinal chemistry programs.

The company went public on Nasdaq in 2021. Its subsequent share price performance has been volatile, reflecting the uncertainty that markets still attach to AI drug discovery companies with early pipelines. But the clinical track record — multiple candidates in human studies — is real.

BenevolentAI: The Hard Lesson About Biology

BenevolentAI’s trajectory offers a useful counterpoint to the bullish AI drug discovery narrative. The London-based company built an AI platform focused on knowledge graph construction — linking biological entities, clinical observations, genetic associations, and chemical data into a graph that can be queried to identify repurposing opportunities and novel targets.
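Querying a knowledge graph for repurposing candidates amounts to searching for short chains of relationships that connect an existing drug to a disease. The sketch below is a toy version with placeholder entities and a plain breadth-first search; it illustrates the idea only and does not represent BenevolentAI's platform, data, or algorithms.

```python
from collections import deque

# Toy graph with placeholder entities; each edge is (relation, neighbor).
graph = {
    "drug_A": [("inhibits", "protein_X")],
    "drug_B": [("inhibits", "protein_W")],
    "protein_X": [("regulates", "process_Y")],
    "protein_W": [],
    "process_Y": [("implicated_in", "disease_Z")],
}


def find_path(graph, start, goal, max_hops=3):
    """Breadth-first search; returns [node, relation, node, ...] or None."""
    queue = deque([(start, [start])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        hops_so_far = (len(path) - 1) // 2
        if hops_so_far >= max_hops:
            continue  # do not extend paths beyond the hop limit
        for relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [relation, neighbor]))
    return None


candidates = {
    drug: find_path(graph, drug, "disease_Z")
    for drug in graph
    if drug.startswith("drug_")
}
print(candidates)
```

Each returned path is a hypothesis with a stated mechanism, not a result. In a real graph with millions of edges, the hard part is ranking the many paths that exist by how much evidence supports each link.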

The company’s most high-profile prediction was that baricitinib, a JAK1/JAK2 inhibitor approved for rheumatoid arthritis, might be effective against COVID-19 — a prediction made in early 2020, before clinical evidence existed [11]. The prediction proved correct; baricitinib received emergency use authorization for COVID-19 in November 2020 and later full approval.

That success elevated BenevolentAI’s profile considerably. The company went public through a SPAC merger in 2022. The subsequent years were difficult: its lead internal program in atopic dermatitis failed in Phase IIa, and the company undertook significant restructuring in 2023, cutting its workforce and refocusing its pipeline. The share price fell more than 80 percent from its post-SPAC peak.

The atopic dermatitis failure does not invalidate AI-based target identification. But it illustrates something important: predicting that a target is biologically relevant in a disease is different from developing a drug against that target that will succeed in a clinical trial. The gap between those two activities involves biology, chemistry, clinical pharmacology, and patient selection that AI currently handles with varying degrees of reliability. Companies that position AI as a solution to the full drug development problem, rather than a tool that accelerates certain steps of it, are overstating the technology’s current capabilities.

Part Four: Big Pharma’s AI Playbook — Build, Buy, or Partner?

Large pharmaceutical companies face a strategic question that does not have a universal answer: how much of your AI capability do you build internally versus acquire versus access through partnerships?

Internal Build: The Roche/Genentech Model

Roche, through its Genentech subsidiary, has invested heavily in building internal AI capability rather than outsourcing it. The company has assembled a team of hundreds of computational scientists and data engineers and has integrated AI tools into its discovery and development workflows across multiple therapeutic areas.

The reasoning behind an internal build strategy is straightforward: proprietary patient data, clinical data, and compound data are among the most valuable inputs to drug discovery AI. Sending that data to an external AI vendor creates IP risk and competitive exposure. Building internal capabilities — expensive as it is — keeps the data within the enterprise and allows AI tools to be trained on proprietary datasets.

Genentech has published research on its internal AI-assisted discovery programs, including the use of generative models for antibody design and the application of machine learning to predict clinical outcomes in oncology trials [12]. The company does not publicize its full AI strategy, which is appropriate competitive behavior: when your AI capability is a genuine advantage, you do not explain it in detail to your competitors.

The Partnership Model: Pfizer, AstraZeneca, and the Alliance Economy

The dominant model among large pharmaceutical companies is structured partnership — investing in or entering multi-year collaborations with AI drug discovery companies while maintaining internal capability building in parallel.

AstraZeneca has been among the most aggressive in this regard. The company’s AI and data science function has partnerships with Recursion, BenevolentAI, and Verge Genomics, among others. It also has an active internal AI program and has published extensively on its use of AI in target identification and clinical trial optimization.

The partnership model gives large companies access to specialized AI capabilities without the full cost of building them internally. It also provides optionality: if one AI platform proves superior, the company can shift its partnerships. The risk is dependency on external technology and the IP complications that come with co-development agreements — questions about who owns patents on compounds identified through a joint AI program require careful legal structuring.

Pfizer’s approach has included the acquisition of Arena Pharmaceuticals, though AI was not the primary rationale for that deal. The company has invested in internal AI capabilities through its Digital and Innovation Center and has run internal hackathons and AI development programs. But Pfizer’s AI profile is lower than AstraZeneca’s or Roche’s in public discourse, which may reflect genuine strategic differences or simply different communication choices.

The Acquisition Route: What Actually Gets Bought

When large pharmaceutical companies acquire AI drug discovery companies, they are almost never just buying the technology. The more important acquisition targets are usually the pipeline — the drug candidates that have already been generated and are in early development — and the specialized talent. AI drug discovery requires an unusual combination of machine learning expertise, structural biology knowledge, and medicinal chemistry skill. That combination is genuinely rare, and it cannot be hired quickly.

Bristol-Myers Squibb’s $4.1 billion acquisition of Turning Point Therapeutics (which had used computational methods in its drug design) and Merck’s $10.8 billion acquisition of Prometheus Biosciences both have AI-related components, though AI is rarely the headline reason [13].

The acquisitions to watch more closely are the ones where AI-generated compounds are the primary asset — where the target was identified computationally, the molecule was designed by an AI system, and the clinical program is the commercial payload. As more AI drug discovery companies advance programs through Phase I and Phase II, the acquisition market for those compounds will clarify what the market actually values.

Part Five: The Patent Protection Challenge for AI-Generated Drugs

The interaction between AI drug discovery and pharmaceutical patent law is one of the least-discussed and most consequential issues in the industry. It deserves careful treatment.

What You Can Patent (And What You Can’t)

Under current U.S. patent law as interpreted by the USPTO, the following elements of an AI drug discovery program are potentially patentable:

The drug compound itself, if it is novel and non-obvious, can receive composition-of-matter protection regardless of how it was identified or synthesized. This is the most commercially important form of protection. A composition-of-matter patent prevents anyone else from making, using, or selling the claimed compound, and any structural analogs that fall within the scope of the claims, for the patent term.

Methods of treatment using the compound — specifying patient population, dosing, and indication — can receive independent protection and often extend effective market exclusivity beyond the compound patent expiration.

Formulations, delivery systems, and manufacturing processes can also be patented, contributing to the layered protection strategy that the pharmaceutical industry calls “lifecycle management” and critics call “evergreening.”

What is difficult to patent is the AI process itself in a way that provides meaningful competitive protection. Method patents on AI-based drug discovery face significant prior art challenges because the general categories of machine learning applied to drug discovery have been extensively published. Claiming novel specific algorithmic innovations is possible but requires genuine technical novelty.

The Obviousness Problem

The obviousness doctrine in patent law holds that an invention is not patentable if it would have been obvious to a person having ordinary skill in the relevant field at the time of the invention. This doctrine creates a specific risk for AI-generated drug candidates.

If an AI system generates a molecule by systematically exploring chemical space around a known scaffold, a competitor can argue that the resulting molecule was obvious — that a skilled medicinal chemist could have made the same molecule through routine experimentation. The fact that an AI actually made it does not necessarily establish non-obviousness.

Courts and patent examiners are still working out how to apply the obviousness doctrine to AI-generated inventions. The Federal Circuit’s existing case law on computer-implemented inventions provides some guidance, but AI drug discovery raises questions that have not been squarely litigated [14]. This means the enforceability of patents on AI-generated compounds is, to some degree, genuinely uncertain.

Companies navigating this uncertainty should invest in documenting not just the AI process that generated the compound, but the specific scientific insights that guided the process — the design constraints imposed by human scientists, the unexpected properties that emerged, the reasons a human decided a computationally generated candidate was worth pursuing. This documentation is your defense against an obviousness challenge.

Freedom to Operate in an AI-Crowded Landscape

Freedom to operate (FTO) analysis — determining whether a planned commercial activity would infringe existing patents — is increasingly complex in the AI drug discovery space because the same AI tools accessible to multiple companies can generate structurally similar compounds independently.

Two companies using similar generative chemistry platforms, trained on similar public datasets, targeting the same protein, can generate structurally overlapping molecules without any communication between them. When one files a patent application and the other later files on a similar structure, the earlier filing can become prior art against the later one under the first-inventor-to-file system, and the resulting novelty and obviousness disputes can be expensive and time-consuming.

DrugPatentWatch’s ability to monitor patent filings in near-real-time provides a tool for FTO monitoring that is particularly valuable in fast-moving AI drug discovery programs. When you are generating novel compounds computationally and moving quickly toward clinical development, knowing whether a competitor has already claimed structurally similar compounds — or whether a structurally similar compound appears in the prior art — is essential information. Missing a relevant patent before entering clinical development is the kind of mistake that creates catastrophic problems at the worst possible moment.

Part Six: The Data Problem — Why AI Is Only as Good as What It Trains On

Every honest assessment of AI drug discovery eventually comes back to data quality. The pharmaceutical industry’s data is enormous in aggregate, but individual datasets are often small, heterogeneous, and generated under conditions that make them difficult to combine.

The Public Data Landscape

The major public repositories that feed pharmaceutical AI training include ChEMBL (maintained by the European Bioinformatics Institute), PubChem, the Protein Data Bank, clinical trial registries, and the published literature. ChEMBL alone contains bioactivity data for more than 2.4 million compounds, representing over 17,000 targets from the published literature [15].

These datasets are genuinely useful for training models that learn the relationship between chemical structure and biological activity. They underpin most of the academic and many of the commercial AI drug discovery tools available today.

But public data has structural limitations. It overrepresents certain chemical spaces — the scaffolds that medicinal chemists have historically preferred. It underrepresents negative results, because the literature publishes positive findings preferentially. And it represents historical discovery decisions, which means it may systematically underrepresent chemical matter that does not fit historical drug development paradigms.
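The effect of preferentially publishing positive findings can be quantified with a short calculation. The sketch below assumes made-up rates (a 1 percent true hit rate, with active compounds far more likely to be reported than inactive ones) purely to show the direction and rough size of the distortion.

```python
def observed_hit_rate(true_hit_rate: float,
                      p_publish_active: float,
                      p_publish_inactive: float) -> float:
    """Share of published results that are actives when publication is selective."""
    published_active = true_hit_rate * p_publish_active
    published_inactive = (1.0 - true_hit_rate) * p_publish_inactive
    return published_active / (published_active + published_inactive)


# Illustrative assumptions: 1% of tested compounds are truly active,
# 80% of actives get published, but only 5% of inactives do.
apparent = observed_hit_rate(0.01, 0.80, 0.05)
print(f"True hit rate 1.0%, apparent hit rate in the literature {apparent:.1%}")
```

Under these assumptions a model trained on the published record would see actives about fourteen times more often than they occur in practice, which is one reason models built on public data tend to be overconfident about activity.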

An AI model trained primarily on public data will tend to generate compounds that look like known drugs. This is both its strength — known drug-like matter is more likely to be synthesizable and have reasonable ADMET properties — and its limitation — it may not explore the genuinely novel chemical space where the next generation of important drugs might live.

The Proprietary Data Advantage

This is why large pharmaceutical companies with decades of internal compound screening data have a structural advantage in AI drug discovery that is not obvious from the outside. A company with 50 years of internal SAR data — millions of compounds tested against hundreds of targets, with detailed pharmacokinetic data from animal models and human studies — has training data that no public dataset can replicate.

This data is not uniformly distributed across therapeutic areas or chemical families, and it reflects the idiosyncratic discovery decisions of specific teams and programs. But it is dense, controlled, and proprietary. An AI model trained on this data and calibrated against the company’s own clinical outcomes can make predictions that external models cannot.

The strategic implication is that the value of a large pharmaceutical company’s historical data library is significantly higher in an AI world than it was before AI. Companies that have maintained good data hygiene — consistent assay formats, careful record-keeping, systematic storage of negative results — are sitting on an asset that appreciates as AI tools improve.

The Synthetic Data Question

Several AI drug discovery companies are experimenting with generating synthetic training data — using computational methods to generate hypothetical compound-activity relationships that can augment real experimental data. This is an active research area with genuine promise and genuine risks.

The promise is clear: if you can generate high-quality synthetic data, you can train better models faster and at lower cost than running wet-lab experiments. The risk is that models trained on synthetic data generated by a computational process can inherit the biases of that process. If your synthetic data generator has blind spots, your training data will have blind spots, and your model will have blind spots. This can be invisible until a real compound fails to behave as predicted.

Part Seven: ADMET Prediction — The Nearest-Term Commercial Win

Of all the applications of AI in drug discovery, ADMET prediction has the strongest near-term commercial case. The business logic is clean: catching a compound’s ADMET problems before you synthesize it saves weeks of chemistry work and tens of thousands of dollars in preclinical testing. At scale across a portfolio, the savings are real and measurable.

What Current ADMET Models Can Do

State-of-the-art ADMET prediction models (including commercial platforms from Schrödinger, simulation-based approaches from Certara, and freely available tools like ADMETlab and DeepPurpose) can make reasonably reliable predictions for a range of properties including aqueous solubility, plasma protein binding, CYP enzyme inhibition profiles (relevant to drug-drug interactions), hERG channel blockade (a cardiac safety flag), blood-brain barrier penetration, and metabolic stability in human microsomes [16].

The key word is “reasonably.” These models perform well for compounds structurally similar to their training data. For genuinely novel chemical matter, prediction errors increase. And the downstream translation from in vitro ADMET predictions to in vivo human pharmacokinetics remains imperfect: the organ-on-a-chip technologies that could bridge this gap, such as liver-on-a-chip and gut models, are still maturing.

Nevertheless, the use of AI-based ADMET filtering early in the hit-to-lead optimization process is now standard practice at most major pharmaceutical companies and many well-funded biotechs. The tools are good enough to provide real value. The question is not whether to use them, but which ones to use and how to calibrate their outputs against your specific chemical matter.
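
One way to approach that calibration, sketched under stated assumptions: compare a tool's predictions with measured values for your own compounds, split by how similar each compound is to the tool's training set. The records, the solubility property (logS), and the 0.5 similarity cutoff are all hypothetical.

```python
from statistics import mean


def mae_by_domain(records, cutoff=0.5):
    """Mean absolute error inside vs outside an assumed applicability domain.

    Each record is (predicted, measured, max_similarity_to_training_set).
    A compound counts as "inside" when its similarity is at or above cutoff.
    """
    inside = [abs(p - m) for p, m, s in records if s >= cutoff]
    outside = [abs(p - m) for p, m, s in records if s < cutoff]
    return {
        "inside": mean(inside) if inside else None,
        "outside": mean(outside) if outside else None,
    }


# Hypothetical in-house logS measurements, for illustration only.
records = [
    (-3.1, -3.0, 0.82),
    (-4.0, -4.4, 0.74),
    (-2.5, -3.6, 0.31),
    (-5.2, -3.9, 0.22),
]

errors = mae_by_domain(records)
print(errors)
```

If the error outside the domain is much larger than inside it, as in this made-up data, predictions for novel scaffolds deserve less weight in triage decisions.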

The Clinical Translation Gap

The harder problem is predicting clinical outcomes from preclinical data. This is where the most ambitious AI drug discovery claims live, and where the evidence is most limited.

Predicting that a compound will be tolerated in humans based on animal toxicity data is, in the best circumstances, a probabilistic statement, not a certainty. Predicting that a compound will be efficacious in humans based on cell and animal efficacy data is even harder. The biological differences between mouse models of disease and human disease are substantial. AI cannot make those differences disappear. It can, in principle, learn to flag the specific features of preclinical data that correlate poorly with clinical translation — but doing this requires massive datasets of matched preclinical and clinical outcomes that are difficult to assemble.

The best current approach is the one that Recursion and similar companies use: combine high-throughput preclinical biology at massive scale with sophisticated machine learning to build predictive models. The more data you generate and the better you curate it, the better your clinical translation predictions become. But this is an expensive proposition, and the data required to train genuinely reliable clinical outcome models does not yet exist.

Part Eight: Clinical Trial Optimization — The Other AI Prize

Drug development fails most expensively in the clinic. If AI can improve clinical trial success rates — even modestly — the financial impact dwarfs anything that happens during preclinical discovery.

Patient Stratification: The Precision Medicine Connection

The logic of AI-driven patient stratification is grounded in precision medicine: the recognition that what we call “a disease” is often a collection of biologically distinct conditions that happen to share clinical features. A drug that works in one biological subtype of a disease may fail in a trial that enrolls a mixed population, because the non-responding patients dilute the signal.
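
The dilution effect can be made concrete with a standard sample-size approximation. The sketch below assumes a two-arm comparison of means with equal variance, a normal approximation, and non-responders who show zero treatment effect. The effect size of 0.5 and the 40 percent responder fraction are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_arm(effect_size, alpha=0.05, power=0.8):
    """Approximate patients per arm to detect a standardized effect size."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = z.inv_cdf(power)
    return ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


def diluted_effect(effect_in_responders, responder_fraction):
    """Average effect across the trial when non-responders contribute nothing."""
    return effect_in_responders * responder_fraction


enriched = n_per_arm(0.5)                       # responders only
mixed = n_per_arm(diluted_effect(0.5, 0.4))     # 40% responders enrolled
print(enriched, mixed)
```

Because the required sample size scales with the inverse square of the effect size, enrolling a population in which only 40 percent respond inflates the trial by roughly a factor of 1/0.4², or about six.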

AI tools that analyze genomic data, proteomic data, and electronic health records to identify patient subpopulations most likely to respond to a given drug candidate can improve trial design in ways that increase the probability of success. This is not science fiction; it is the commercial case behind a number of clinical-stage biotech companies and behind FDA’s increasing emphasis on biomarker-driven trial design.

The regulatory infrastructure for precision medicine trials exists. The FDA has approved more companion diagnostics alongside targeted therapies in the past decade than in the entire previous history of the agency [17]. AI-based patient stratification is a natural extension of this trend — the question is whether the biomarkers identified by AI analysis are actually predictive, which requires prospective validation.

Synthetic Control Arms

One of the more practically impactful applications of AI in clinical development is the synthetic control arm — using real-world data from electronic health records, claims databases, and registry data to construct a comparison group for a clinical trial without requiring a parallel placebo arm.

In rare diseases with small patient populations, requiring a randomized placebo arm is sometimes ethically problematic and practically difficult. If you have sufficient historical data on the natural history of the disease, a synthetic control arm can substitute for randomized controls, allowing a smaller, faster trial.

The FDA has issued guidance on the use of real-world evidence in regulatory submissions, and several approvals have incorporated synthetic or external control data [18]. Companies working in rare diseases and pediatric indications have been most aggressive in pursuing this approach.

AI’s role here is in constructing the synthetic control — selecting appropriate historical comparators from real-world databases, adjusting for differences in baseline characteristics, and providing the statistical framework for the comparison. The technical and regulatory requirements are demanding, but the payoff when it works is substantial: faster trials, lower costs, and in some cases, less burden on vulnerable patient populations.
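
A simplified sketch of the comparator-selection step, assuming greedy one-to-one nearest-neighbour matching on standardized baseline covariates. Real external-control analyses typically use more elaborate methods, such as propensity scores, and pre-specified statistical plans. The covariates (age and a baseline severity score) and all patient values are hypothetical.

```python
from math import sqrt
from statistics import mean, pstdev


def match_controls(treated, historical):
    """Greedy 1:1 matching without replacement.

    treated, historical: lists of equal-length covariate tuples.
    Returns one index into `historical` per treated patient.
    Requires at least as many historical patients as treated patients.
    """
    columns = list(zip(*(treated + historical)))
    mus = [mean(c) for c in columns]
    sds = [pstdev(c) or 1.0 for c in columns]  # guard against constant columns

    def standardize(row):
        return [(x - m) / s for x, m, s in zip(row, mus, sds)]

    def distance(u, v):
        return sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

    available = set(range(len(historical)))
    matches = []
    for patient in treated:
        zt = standardize(patient)
        best = min(available, key=lambda i: distance(zt, standardize(historical[i])))
        matches.append(best)
        available.remove(best)
    return matches


# Hypothetical (age, baseline severity score) pairs, for illustration only.
treated = [(62, 40.0), (48, 55.0)]
historical = [(30, 70.0), (61, 42.0), (50, 53.0), (75, 20.0)]

matched = match_controls(treated, historical)
print(matched)
```

Matching only balances the covariates that were measured. Unmeasured differences between trial patients and historical patients remain the central weakness of any external control.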

Trial Site Selection and Enrollment Prediction

A less discussed but commercially significant AI application is the optimization of clinical trial site selection and patient enrollment prediction. Clinical trials that fail to enroll on schedule — which is the majority of trials — cost money in proportion to the delay. Slow enrollment extends timelines, burns cash, and can compromise competitive timing.

AI tools trained on historical enrollment data, site performance data, and local patient population characteristics can predict which sites will enroll patients quickly, which patient populations are most accessible, and where protocol amendments might be needed before a trial launches rather than after. Several clinical research organizations (CROs) have invested in these tools, and their use is becoming a standard part of trial planning for well-capitalized programs.
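
To illustrate why site selection matters financially, the sketch below projects time to full enrollment from per-site enrollment rates. It is a deterministic projection rather than a trained model: the per-site rates are where a model's predictions would plug in. All site parameters and the target of 60 patients are hypothetical.

```python
def months_to_target(sites, target, max_months=120):
    """Months until cumulative expected enrollment reaches the target.

    sites: list of (activation_month, patients_per_month) tuples. A site
    starts enrolling in the month after its activation month.
    Returns None if the target is not reached within max_months.
    """
    enrolled = 0.0
    for month in range(1, max_months + 1):
        enrolled += sum(rate for start, rate in sites if month > start)
        if enrolled >= target:
            return month
    return None


# Hypothetical site plans: the second swaps two slow, late-activating sites
# for two faster sites that activate a month earlier.
baseline_plan = [(0, 2.0), (1, 1.5), (3, 0.5), (3, 0.5)]
optimized_plan = [(0, 2.0), (1, 1.5), (2, 2.0), (2, 1.5)]

print(months_to_target(baseline_plan, 60))
print(months_to_target(optimized_plan, 60))
```

With these made-up numbers, replacing two weak sites shortens enrollment by several months, which is the kind of difference that changes competitive timing.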

Part Nine: The Regulatory Frontier — What FDA Is Actually Thinking

The FDA’s posture toward AI in drug development is more sophisticated than the public discussion typically acknowledges. The agency has been working on this problem seriously since at least 2019, when it published its first framework for AI/ML-based software as a medical device [19].

FDA’s AI/ML Framework for Drugs

The regulatory questions for AI in drug development are different from those for AI medical devices, and the FDA treats them separately. For drug development specifically, the agency’s primary concern is with the use of AI-generated evidence in regulatory submissions: how do you validate an AI model used to select clinical trial patients? How do you characterize and document an AI tool used to predict biomarkers? How do you ensure that a model that performed well during development still performs reliably when deployed in a different patient population?

The FDA’s Center for Drug Evaluation and Research (CDER) has issued a series of discussion documents and has held public workshops on AI in clinical development. The agency’s position is that AI tools used to generate evidence for regulatory submissions are subject to the same requirements for transparency, validation, and documentation as any other tool used in drug development. “Black box” AI models that cannot be explained to regulators are a liability.

This creates a preference, in regulatory submissions, for AI tools that are interpretable — where the model’s reasoning can be explained in terms that regulators can evaluate. Gradient boosting models and regularized regression approaches, while less powerful than deep learning in some applications, are often preferred in regulatory contexts for this reason.

The Orphan Drug and Rare Disease Opportunity

AI drug discovery companies have disproportionately targeted rare diseases, and the regulatory environment partly explains why. The Orphan Drug Act provides significant financial incentives for rare disease development, including seven-year market exclusivity post-approval, tax credits for clinical trial costs, and FDA fee waivers [20]. The patient populations are small, which limits the cost of clinical trials. And the biomedical literature for rare genetic diseases often includes detailed mechanistic understanding of disease biology — ideal training data for AI-based target identification.

Companies like Recursion, Seer Bio, and Verge Genomics have rare disease programs that take advantage of both the regulatory incentives and the biological specificity of monogenic conditions. For AI tools, diseases caused by single gene defects are an easier problem than complex multifactorial conditions: the target (the gene product or the relevant pathway) is known; the question is what to do about it.

Part Ten: Competitive Intelligence in the AI Pharma Space

For executives, investors, and analysts trying to understand the competitive dynamics of AI-driven drug discovery, the practical challenge is separating companies that have genuinely superior platforms from companies that have superior marketing.

What Actually Differentiates AI Drug Discovery Companies

The differentiation factors that actually matter in AI drug discovery are, in order of practical importance:

Clinical track record: Companies that have advanced AI-designed compounds into human trials have demonstrated something that companies without clinical programs have not. Phase I entry is a relatively low bar, but it is real evidence. Phase II is meaningful. Phase III is significant.

Data assets: What proprietary data does the company have that competitors cannot access? This includes patient-derived genomic data, proprietary compound libraries, internal SAR data, and partnerships that provide access to otherwise inaccessible biological or clinical datasets.

Wet-lab integration: AI models that are designed to work with specific experimental workflows — where the design-make-test-analyze cycle is tightly integrated and the feedback loop between computational prediction and experimental validation is rapid — produce better results than AI models applied in isolation to external data. The companies that own or have access to automated synthesis and assay infrastructure have a tangible advantage.

Target novelty: Is the company working in well-validated target space, where the AI provides efficiency advantages over traditional discovery, or is it working on genuinely novel target biology where AI is needed to identify targets that human researchers would not find? The second is harder but, if it works, more valuable.

How to Use Patent Data for Competitive Intelligence

The systematic use of patent surveillance in AI drug discovery competitive intelligence is underutilized relative to its value. DrugPatentWatch provides tools that allow analysts to set up monitoring for specific companies, specific chemical classes, and specific therapeutic areas — receiving alerts when new patent filings emerge that are relevant to a competitive situation.

The specific monitoring tasks that yield the most actionable intelligence include:

Watching for composition-of-matter patent filings from AI drug discovery companies in your competitive therapeutic area. These filings, which typically appear 12 to 18 months before public announcement of a program, tell you what chemical space a competitor is claiming.

Monitoring continuation patents — filings that extend or modify existing patents — to understand when a competitor is actively working to broaden or defend protection around a specific compound or family. Active continuation filings signal commercial seriousness.

Tracking IND filings in combination with patent data. When a company files an IND (Investigational New Drug Application) — which becomes public through clinical trial registries — and you can match that clinical program to a specific patent, you have a precise picture of both what they are pursuing and when they believe they can protect it.

Following patent expiration monitoring as a forward-looking signal. As major branded pharmaceuticals approach patent expiration, the competitive landscape in that disease area often opens. AI drug discovery companies that are watching these expirations can position programs to address the resulting therapeutic gap.

<blockquote> “AI-enabled drug discovery is not about replacing scientists — it is about eliminating the experiments that would have failed. The value is in what you don’t make.” — Alex Zhavoronkov, CEO, Insilico Medicine, speaking at the 2023 AI in Drug Discovery Summit [21] </blockquote>

Part Eleven: The Deal Landscape — What Partnerships Actually Pay

The financial architecture of AI drug discovery partnerships has evolved significantly since the first wave of deals in 2016 and 2017, and the current deal structure tells you a lot about what buyers actually believe about AI’s capabilities.

The Anatomy of a Modern AI Partnership

A typical 2024-vintage AI drug discovery partnership includes:

An upfront payment, often ranging from $50 million to $200 million, that funds the discovery phase and establishes the commercial relationship. The upfront is what makes headlines. It is also often non-contingent, meaning the AI company receives it regardless of whether any drugs emerge from the collaboration.

Milestone payments tied to progression of specific compounds through clinical development, totaling hundreds of millions to low billions in aggregate. These are the “potential value” numbers that appear in press releases — they are only real if compounds advance, which most will not.

Royalty rates on approved drugs, typically in the mid-single-digit to low-double-digit percentage range, depending on which party brought the most significant scientific contribution.

Option rights, allowing the pharmaceutical partner to in-license specific programs or acquire specific companies at pre-negotiated terms. These option structures have become more common because they give large pharma the ability to wait for de-risking before making larger commitments.
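
The gap between headline deal value and expected value can be sketched by weighting each milestone by the probability of reaching it. Every payment and probability below is a hypothetical illustration, not a figure from any actual deal, and the sketch ignores discounting, royalties, and option exercise.

```python
def risk_adjusted_value(upfront, milestones):
    """Upfront payment plus probability-weighted milestone payments.

    milestones: list of (payment, probability_of_reaching_it) tuples,
    where each probability is cumulative from the start of the deal.
    """
    return upfront + sum(payment * prob for payment, prob in milestones)


# Hypothetical deal terms in millions of dollars.
upfront = 100
milestones = [
    (50, 0.60),   # candidate nomination
    (150, 0.30),  # Phase I start
    (400, 0.10),  # Phase III start
    (800, 0.05),  # approval
]

headline = upfront + sum(payment for payment, _ in milestones)
expected = risk_adjusted_value(upfront, milestones)
print(f"Headline: ${headline}M, risk-adjusted: ${expected:.0f}M")
```

Under these assumptions a "$1.5 billion" headline corresponds to roughly $255 million in expected payments, most of it from the non-contingent upfront and the early milestones.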

The evolution in deal terms reflects genuine market learning. Early AI drug discovery deals tended to overvalue platform capability and undervalue clinical execution risk. Later deals (the Sanofi-Exscientia deal, the Roche-Recursion deal, the AstraZeneca-BenevolentAI expansion) reflect a more sophisticated understanding of where value actually gets created and what risks remain.

Deals That Did Not Deliver

Honest assessment requires acknowledging deals that underperformed their early promise. BenevolentAI’s partnership with AstraZeneca on target identification, which was announced with considerable fanfare in 2019, produced a pipeline contribution that both parties have been relatively quiet about in subsequent years. The collaboration has continued, which suggests ongoing value, but the breakthrough that early press coverage implied has not materialized on the announced timeline.

Numerate’s partnerships in cardiometabolic disease have not produced publicly visible clinical programs years after significant partnership announcements. Atomwise’s collaborations with major pharmaceutical companies have been prolific in terms of the number of compounds identified computationally, but the translation to clinical candidates has been slower than some early projections suggested.

None of this is grounds for abandoning AI drug discovery. It is grounds for calibrating expectations — understanding that AI accelerates specific steps of the pipeline and that the full development challenge, including biology, clinical medicine, and regulatory navigation, remains what it has always been.

Part Twelve: The Economics of AI Drug Discovery — Where the ROI Actually Comes From

Senior executives making capital allocation decisions in pharmaceutical R&D need a realistic model of where AI generates financial return. The honest answer is more granular than most promotional material suggests.

The Discovery Phase: Real Savings

In the discovery phase — from target identification through lead optimization — AI is generating real, measurable cost and time savings at companies that have implemented it seriously. The numbers are program-specific and not systematically published, but the directional evidence is strong.

The reduction in the number of compounds synthesized per program, from the historical average of hundreds per lead series to dozens or, in the best AI-assisted programs, single digits, translates directly to reduced synthesis costs, reduced assay costs, and faster timelines. If a typical lead optimization program involves synthesizing 500 compounds at an average cost of $5,000 to $10,000 per compound (including synthesis, purification, and biological testing), the fully loaded cost is $2.5 to $5 million. Reducing that to 50 compounds saves roughly $2.25 to $4.5 million per program. Across a portfolio of 20 active programs, the savings come to $45 to $90 million.
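
The arithmetic in this paragraph can be reproduced directly, which also makes it easy to substitute your own compound counts and per-compound costs. The function and variable names are illustrative.

```python
def program_cost(n_compounds, cost_low=5_000, cost_high=10_000):
    """Return the (low, high) fully loaded cost range in dollars."""
    return n_compounds * cost_low, n_compounds * cost_high


traditional = program_cost(500)  # (2_500_000, 5_000_000)
ai_assisted = program_cost(50)   # (250_000, 500_000)

# Savings per program at the low and high ends of the per-compound cost.
savings = (traditional[0] - ai_assisted[0], traditional[1] - ai_assisted[1])

# Savings across a portfolio of 20 active programs.
portfolio_savings = tuple(20 * s for s in savings)

print(savings)            # (2250000, 4500000)
print(portfolio_savings)  # (45000000, 90000000)
```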

The timeline savings compound this. Getting to clinical candidate selection 18 to 24 months earlier per program — which appears achievable based on published case studies — means earlier IND filing, earlier clinical data, and either earlier approval or earlier recognition that a program is failing. The last item matters as much as the others: learning that a program has a fatal flaw 18 months earlier saves all the spending that would have occurred in those 18 months.

The Clinical Phase: Potential, Not Proof

In the clinical phase, AI’s financial impact is mostly potential rather than demonstrated. The mechanisms are real — better patient stratification should improve success rates, better biomarker identification should enable smaller trials, synthetic control arms should reduce costs. But these mechanisms have not yet produced a statistically significant improvement in industry-wide clinical success rates that can be attributed specifically to AI.

This is partly a timing issue: drugs currently in late-stage development were largely put into development before AI tools were widely deployed, meaning the clinical trial data does not yet reflect the full impact of AI-assisted program selection. As the cohort of AI-assisted programs advances through development over the next five to ten years, the success rate comparison will become meaningful.

The Long-Term Structural Change

The most significant economic consequence of AI in drug discovery may not be the direct cost and time savings, but the structural change in competitive dynamics it enables. If AI genuinely reduces the cost and time required to bring a drug candidate to Phase I from $500 million over five years to $100 million over two years, the number of organizations that can participate in drug discovery increases dramatically.

This is already visible. Companies that could not have competed with major pharmaceutical R&D operations a decade ago — because they lacked the compound libraries, the HTS infrastructure, the structural biology capabilities, or the computational chemistry teams — can now access AI-based tools that substitute for some of that infrastructure. The number of AI-native drug discovery companies that have advanced programs to Phase I is growing. The number that will advance to Phase III, and ultimately to approval, will define the competitive landscape of the pharmaceutical industry for the next two decades.

Part Thirteen: Specific Diseases Where AI Has Changed the Game

The impact of AI varies enormously by therapeutic area. Some disease areas have benefited disproportionately from AI tools, and understanding which ones — and why — is more useful than generalized claims about AI’s impact on drug discovery.

Oncology: Target-Rich, Data-Dense

Oncology is the therapeutic area where AI has had the most visible impact, for reasons that are structural rather than accidental. Cancer biology is complex, but it is also extensively studied — The Cancer Genome Atlas, the Broad Institute’s DepMap project, and decades of academic and commercial research have generated large, well-annotated datasets that AI tools can use effectively.

The specific applications where AI has added the most value in oncology include: identifying synthetic lethal relationships between cancer vulnerabilities and existing targeted agents; designing cancer-specific bispecific antibodies and CAR-T constructs; and predicting patient response to checkpoint inhibitors using tumor microenvironment analysis.

Multiple AI-designed oncology compounds are now in Phase I or Phase II trials, more than in any other therapeutic area. The indication-specific success rates remain to be seen.

Neuroscience: Hard But High-Priority

Neurological disease is historically one of the highest-failure-rate areas of pharmaceutical development. The blood-brain barrier limits drug delivery. The biology of psychiatric and neurodegenerative diseases is incompletely understood. Clinical endpoints are often subjective and slow to change. Animal models of neurological diseases are notoriously poor predictors of human outcomes.

AI has not solved any of these problems. But it has changed the approach to target identification in neurodegeneration. Graph-based analysis of protein-protein interaction networks, integration of genetic data from biobank-scale studies like the UK Biobank, and machine learning analysis of electronic health record data have all produced novel target hypotheses in Alzheimer’s disease, Parkinson’s disease, and ALS that are now being experimentally validated.

The companies working on AI-assisted neuroscience drug discovery include AbCellera (antibody optimization), Verge Genomics (genomic target identification), and Alector (neuroinflammation). Whether these programs will ultimately deliver approved drugs remains to be seen, but the scientific rigor of the AI-assisted approach is credible.

Infectious Disease: Speed as the Value Proposition

In infectious disease, particularly in the context of pandemic preparedness, AI’s most compelling value proposition is speed. DeepMind released AlphaFold-predicted structures for several understudied SARS-CoV-2 proteins within roughly two months of the genome sequence becoming available. Generative chemistry tools were applied to design potential antivirals within weeks of the pandemic’s onset.

Whether speed actually translated into better treatments for COVID-19 is complicated by the regulatory and clinical realities: demonstrating efficacy and safety in humans still takes time, regardless of how quickly you identify a lead compound. But the trajectory is clear: in the next pandemic, AI will substantially compress the discovery and early development timeline in ways that could save lives.

The baricitinib case — where BenevolentAI’s knowledge graph identified the existing drug as potentially efficacious against COVID-19 before clinical evidence existed — is the clearest example of AI-enabled speed in infectious disease. It also illustrates that the value is in repurposing as much as in new drug discovery.

Part Fourteen: What’s Actually Coming — Realistic 5-Year Projections

Forecasting in a field as fast-moving as AI drug discovery is hazardous, but grounded projections based on current evidence are more useful than either uncritical optimism or reflexive skepticism.

AI-Designed Drugs Will Get Approved in This Decade

This is not speculative. Multiple AI-designed drug candidates are in Phase II clinical trials as of 2024-2025. With historical Phase II-to-approval success rates of around 13 to 16 percent per program, the statistical expectation is that at least some of these will succeed. Phase II-to-approval typically takes four to seven years, and the most advanced programs are already partway through that window, so the first approved drug with a credible AI-originated design claim should emerge within the next two to five years.
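
The statistical expectation can be worked through explicitly. The sketch below assumes each program succeeds independently with the same probability, which is a simplification because programs sharing a target or a platform are correlated. The program counts are hypothetical, since the text says only "multiple".

```python
def p_at_least_one(n_programs, p_success):
    """Probability that at least one of n independent programs is approved."""
    return 1 - (1 - p_success) ** n_programs


# Hypothetical numbers of AI-designed programs in Phase II.
for n in (5, 10, 20):
    low = p_at_least_one(n, 0.13)
    high = p_at_least_one(n, 0.16)
    print(f"{n} programs: {low:.0%} to {high:.0%} chance of at least one approval")
```

With ten programs, the chance of at least one approval is roughly 75 to 83 percent under these assumptions, even though each individual program is far more likely to fail than to succeed.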

Insilico Medicine’s INS018_055 for IPF and Exscientia’s programs are the most advanced publicly disclosed examples. Both companies have data readouts expected in the near term. A positive Phase III result from either would be a genuine milestone — not because it proves AI drug discovery is superior to traditional approaches, but because it demonstrates that AI-designed compounds can be safe and effective in humans.

Multimodal AI Integration Will Become Standard

The next wave of AI drug discovery will integrate data types that are currently analyzed separately: genomic, transcriptomic, proteomic, metabolomic, imaging, and clinical data analyzed in unified models that can span from molecular mechanism to clinical outcome. This is technically challenging, but current progress in multimodal AI architectures makes it feasible.

Companies that build or access these integrated models will have an advantage in target identification and patient stratification that single-modality AI tools cannot replicate. The foundation models being built by Ginkgo Bioworks, Recursion, and similar companies are early versions of this multimodal future.

The Patent Wars Are Coming

As AI drug discovery generates real commercial assets — approved drugs with significant revenues — the patent disputes will intensify. Questions about inventorship, obviousness, and the validity of patents on AI-generated compounds will be litigated in earnest.

The outcome of these disputes will shape the IP strategy of the entire field. If courts broadly invalidate patents on AI-generated compounds for lack of human inventorship, companies will need to restructure their discovery workflows to ensure adequate human creative contribution. If courts uphold these patents, the IP protection framework for AI drug discovery will be stable and predictable.

Patent monitoring tools like DrugPatentWatch will become more valuable as the landscape becomes more contested. The ability to track related filings, identify where claims overlap, and monitor the litigation status of relevant patents will be a competitive advantage for legal and business development teams.

Regulatory Frameworks Will Mature

FDA and EMA will establish clearer, more specific guidance on AI-assisted drug development over the next five years. This will be welcomed by industry: current regulatory uncertainty is itself a cost, because companies make conservative choices in the absence of clear expectations.

The likely direction of regulatory evolution includes requirements for AI model validation, transparency in training data selection, and documentation of the human decision-making process in AI-assisted programs. Companies that invest in these capabilities now — before they are required — will have an advantage when the requirements become official.

Part Fifteen: The Human Factor — What AI Cannot Replace

Every serious participant in this field knows something that the marketing often obscures: AI is a tool, and the quality of its output depends on the quality of the humans using it.

Biological Intuition Remains Irreplaceable

The best drug discovery scientists develop intuitions about biology that are difficult to formalize and impossible to fully encode in a training dataset. The sense that a target is “real” — that it plays a causal role in disease rather than being a passenger — comes from deep engagement with the relevant biology that combines experimental experience with mechanistic understanding. AI tools are good at pattern recognition in large datasets; they are not good at recognizing when the pattern is wrong because the dataset has a systematic bias.

This is why the best AI drug discovery programs are not ones where AI has replaced the scientist, but ones where scientists use AI to do things they could not do alone — exploring chemical space more thoroughly, processing biological data at larger scale, identifying correlations across datasets too large for manual analysis — while applying their own judgment to interpret the results.

The Clinical Medicine Gap

Drug development requires deep knowledge of clinical medicine that AI systems do not have in any operationally useful sense. Understanding why patients respond or don’t respond to a given treatment, interpreting safety signals from early clinical data, designing dose escalation schemes that balance efficacy and tolerability — these activities require the combination of scientific training and clinical experience that exists only in clinician-scientists.

Companies that treat AI-generated drug candidates as the end of the discovery challenge, rather than the beginning of the development challenge, consistently make costly mistakes. The handoff from computational discovery to clinical development is where organizational capability matters most.

Key Takeaways

AI is delivering measurable value in drug discovery, but the value is concentrated in specific steps. Target identification, lead generation, lead optimization, and early ADMET filtering are the areas where AI tools have demonstrated real, documented impact. Clinical development improvements are real in principle but not yet demonstrated at scale in outcomes.

The patent record is the most reliable indicator of which AI drug discovery companies are building real assets. Composition-of-matter patent filings on specific compounds — trackable through databases like DrugPatentWatch — signal genuine progress far more reliably than press releases or conference presentations.

The human inventor requirement creates a real but manageable IP risk. Companies must design their workflows to ensure documented human inventive contributions to AI-generated compounds, or they risk patent invalidation.

Data quality and proprietary data assets differentiate AI drug discovery companies more than algorithmic sophistication. The algorithms are increasingly commoditized. The data — particularly proprietary SAR data, patient-derived biological data, and clinical outcome data — is the defensible moat.

The first approved AI-designed drug is likely within five years. Multiple Phase II programs are sufficiently advanced that approval within this decade is a reasonable expectation, not an aspiration.

Freedom-to-operate analysis in AI drug discovery requires proactive patent surveillance. Multiple companies using similar tools can generate structurally similar compounds independently. Systematic monitoring of competitor patent filings in your target area is no longer optional.

Regulatory clarity is coming, and early preparedness is a competitive advantage. Companies that invest in AI model validation, transparency infrastructure, and documentation of human decision-making now will be better positioned when regulatory requirements become specific and mandatory.

The biggest economic impact of AI in drug discovery may be structural, not transactional. If AI genuinely lowers the barrier to entry for drug discovery, the pharmaceutical competitive landscape will look very different in 2035 than it does today.

FAQ

Q: Is there a single AI drug candidate that has already been FDA-approved?

Not as of mid-2025, but the field is close. Several candidates with credible AI-originated discovery stories are in Phase II, and one, Insilico Medicine’s INS018_055 for idiopathic pulmonary fibrosis (IPF), has reported early Phase II data suggesting biological activity. A drug in Phase II typically needs approximately four to eight more years to reach FDA approval, depending on the indication and regulatory pathway. An accelerated approval in a rare disease indication with high unmet need could arrive faster. The better framing is that AI-assisted drug discovery has already contributed to multiple approved drugs through partial roles in the discovery process (predicting crystal structures, optimizing lead series, selecting clinical patient populations), even though no approved drug has yet been fully AI-originated from target through compound.

Q: How does a pharmaceutical company actually protect an AI-generated drug compound through patents?

The process is similar to patenting any drug compound, with additional care required around inventorship documentation. The company files a composition-of-matter patent claiming the specific chemical structure (and often a range of structurally related analogs) of the AI-generated compound. The patent application must name human inventors who made significant contributions to the conception of the claimed invention. This means the company must be able to articulate and document what specific intellectual contributions its scientists made in directing, constraining, or meaningfully modifying the AI’s output. The compound’s novelty and non-obviousness are evaluated against prior art just as they would be for any drug compound. The AI origin of the compound does not typically appear on the patent itself; it matters mainly for the internal documentation that the company would rely on if inventorship were challenged.

Q: What distinguishes a genuine AI drug discovery platform from one that is primarily a marketing label?

The most reliable distinguishing criteria are: whether the company has advanced AI-originated compounds into human clinical trials; whether the company has composition-of-matter patents on AI-generated structures (not just process patents); whether the company has published peer-reviewed validation of its platform’s predictive accuracy; and whether the company’s discovery timeline — the time from project initiation to clinical candidate selection — is demonstrably shorter than industry averages. Companies that can point to multiple clinical-stage programs with documented AI discovery origins, short discovery timelines, and peer-reviewed platform publications are credible. Companies that can only point to press releases, partnership announcements, and in silico benchmarks are not demonstrating equivalent capability.
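
As a rough illustration, the four criteria above can be written down as a simple checklist. This is a minimal sketch, not a validated scoring method: the class name, field names, and the two example profiles are all hypothetical, and real diligence would weigh the quality of each piece of evidence rather than treat it as a yes/no flag.

```python
from dataclasses import dataclass, fields


@dataclass
class PlatformEvidence:
    """Hypothetical yes/no flags, one per criterion named in the answer above."""
    clinical_stage_ai_compounds: bool    # AI-originated compounds in human trials
    composition_of_matter_patents: bool  # patents on structures, not just processes
    peer_reviewed_validation: bool       # published validation of predictive accuracy
    faster_than_industry_timeline: bool  # initiation-to-candidate time beats averages


def unmet_criteria(evidence: PlatformEvidence) -> list[str]:
    """Return the names of the criteria the company cannot document."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


# Illustrative profiles only; neither describes a real company.
credible = PlatformEvidence(True, True, True, True)
press_release_only = PlatformEvidence(False, False, False, False)

print(unmet_criteria(credible))
print(unmet_criteria(press_release_only))
```

Listing the unmet criteria, rather than producing a single score, keeps the output tied to evidence a reader can go and check.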

Q: Why do AI drug discovery companies target rare diseases disproportionately?

Several factors converge to make rare diseases attractive for AI drug discovery companies. Rare diseases often have well-defined genetic bases, making target identification more tractable computationally. The patient populations are small, which limits clinical trial size and cost. The U.S. Orphan Drug Act provides seven years of market exclusivity, tax credits, and fee waivers that improve the economics of rare disease programs. Regulators reviewing rare disease programs are often more receptive to adaptive trial designs and surrogate endpoints that can accelerate approval timelines. And the competitive landscape in rare disease indications is less crowded: there are fewer established drugs to compete against and fewer large companies with equivalent programs in the same space. For an AI drug discovery company trying to demonstrate clinical proof-of-concept, a rare disease program with a clear mechanism, a small but well-characterized patient population, and favorable regulatory incentives is an efficient choice.

Q: How should a pharmaceutical company monitor competitor AI drug discovery activity?

The most systematic approach combines three data streams: patent surveillance, clinical trial registry monitoring, and scientific literature tracking. Patent surveillance — best executed using a dedicated pharmaceutical patent database like DrugPatentWatch with automated alerts for relevant companies and chemical spaces — provides the earliest signal of competitor programs, typically 12 to 18 months before any public announcement. Clinical trial registry monitoring (ClinicalTrials.gov, EU Clinical Trials Register) provides a more real-time picture of what competitors have advanced into human studies. Scientific literature tracking, including preprint servers like bioRxiv, gives visibility into platform publications that signal methodological capabilities. Together, these three streams, analyzed by someone with both competitive intelligence skills and pharmaceutical R&D knowledge, provide a reasonably complete picture of what competitors are doing and where they are in their programs.
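
One way to picture combining the three streams is a single chronological timeline per competitor. The sketch below assumes the signals have already been collected by whatever tools the team uses; the `Signal` record, the company names, and every date and summary are hypothetical placeholders, and no real database or registry API is called.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

STREAMS = {"patent", "trial", "literature"}


@dataclass(frozen=True)
class Signal:
    """One observation about a competitor (hypothetical record layout)."""
    company: str
    source: str   # one of STREAMS
    seen_on: date
    summary: str


def build_timelines(signals):
    """Group signals by company and sort each group chronologically."""
    timelines = defaultdict(list)
    for signal in signals:
        timelines[signal.company].append(signal)
    for events in timelines.values():
        events.sort(key=lambda s: s.seen_on)
    return dict(timelines)


def missing_streams(timeline):
    """Return the streams with no signal yet for this company."""
    return STREAMS - {s.source for s in timeline}


# Made-up example data for illustration only.
signals = [
    Signal("ExampleBio", "trial", date(2025, 3, 1), "Phase I study registered"),
    Signal("ExampleBio", "patent", date(2024, 1, 15), "Composition-of-matter application published"),
    Signal("ExampleBio", "literature", date(2024, 9, 10), "Platform preprint posted"),
    Signal("OtherCo", "literature", date(2025, 2, 2), "Benchmark preprint posted"),
]

timelines = build_timelines(signals)
for company, events in timelines.items():
    order = [s.source for s in events]
    print(company, order, "missing:", sorted(missing_streams(events)))
```

A company that shows up in only one stream, like the hypothetical `OtherCo` here, is a prompt for the analyst to look harder, not a conclusion in itself.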

Citations

[1] DiMasi, J. A., Grabowski, H. G., & Hansen, R. W. (2016). Innovation in the pharmaceutical industry: New estimates of R&D costs. Journal of Health Economics, 47, 20-33. https://doi.org/10.1016/j.jhealeco.2016.01.012

[2] Sun, D., Gao, W., Hu, H., & Zhou, S. (2022). Why 90% of clinical drug development fails and how to improve it? Acta Pharmaceutica Sinica B, 12(7), 3049-3062. https://doi.org/10.1016/j.apsb.2022.02.002

[3] Santos, R., Ursu, O., Gaulton, A., Bento, A. P., Donadi, R. S., Bologa, C. G., et al. (2017). A comprehensive map of molecular drug targets. Nature Reviews Drug Discovery, 16(1), 19-34. https://doi.org/10.1038/nrd.2016.230

[4] European Patent Office. (2023). Patents and the fourth industrial revolution: AI and life sciences. EPO Technology and Innovation Intelligence. https://www.epo.org/news-events/news/2023/ai-life-sciences.html

[5] United States Patent and Trademark Office. (2024). Inventorship guidance for AI-assisted inventions. USPTO. https://www.federalregister.gov/documents/2024/02/13/2024-02623/inventorship-guidance-for-ai-assisted-inventions

[6] Jumper, J., Evans, R., Pritzel, A., Green, T., Figurnov, M., Ronneberger, O., et al. (2021). Highly accurate protein structure prediction with AlphaFold. Nature, 596(7873), 583-589. https://doi.org/10.1038/s41586-021-03819-2

[7] Boston Consulting Group. (2023). How AI is reshaping pharmaceutical R&D. BCG Health Care Practice. https://www.bcg.com/publications/2023/ai-reshaping-pharmaceutical-research-development

[8] Ren, F., Aliper, A., Chen, J., Zhao, H., Rao, S., Kuppe, C., et al. (2023). A small-molecule TNIK inhibitor targets fibrosis in preclinical and clinical models. Nature Biotechnology. https://doi.org/10.1038/s41587-023-01874-4

[9] Recursion Pharmaceuticals. (2024). 2023 Annual Report and Pipeline Update. Recursion Pharmaceuticals, Inc. https://investors.recursion.com/annual-reports

[10] Exscientia. (2021). AI-designed drug enters clinical trials in record time. Exscientia Press Release. https://www.exscientia.com/news-and-insights

[11] Richardson, P., Griffin, I., Tucker, C., Smith, D., Oechsle, O., Phelan, A., & Stebbing, J. (2020). Baricitinib as potential treatment for 2019-nCoV acute respiratory disease. The Lancet, 395(10223), e30-e31. https://doi.org/10.1016/S0140-6736(20)30304-4

[12] Genentech Research and Early Development. (2023). AI and machine learning in drug discovery at Genentech. Roche Group Publication. https://www.gene.com/stories/ai-machine-learning-drug-discovery

[13] Merck & Co. (2023). Merck completes acquisition of Prometheus Biosciences. Press Release. https://www.merck.com/news/merck-completes-acquisition-of-prometheus-biosciences/

[14] Levin, A., & Mair, C. (2023). AI inventions and patent law: Current developments and future challenges. Journal of Law and the Biosciences, 10(2). https://doi.org/10.1093/jlb/lsad024

[15] Gaulton, A., Hersey, A., Nowotka, M., Bento, A. P., Chambers, J., Mendez, D., et al. (2017). The ChEMBL database in 2017. Nucleic Acids Research, 45(D1), D945-D954. https://doi.org/10.1093/nar/gkw1074

[16] Xiong, G., Wu, Z., Yi, J., Fu, L., Yang, Z., Hsieh, C., et al. (2021). ADMETlab 2.0: An integrated online platform for accurate and comprehensive predictions of ADMET properties. Nucleic Acids Research, 49(W1), W5-W14. https://doi.org/10.1093/nar/gkab255

[17] U.S. Food and Drug Administration. (2023). Table of pharmacogenomic biomarkers in drug labeling. FDA. https://www.fda.gov/drugs/science-and-research-drugs/table-pharmacogenomic-biomarkers-drug-labeling

[18] U.S. Food and Drug Administration. (2023). Considerations for the use of real-world data and real-world evidence to support regulatory decision-making for drug and biological products: Guidance for industry. FDA. https://www.fda.gov/regulatory-information/search-fda-guidance-documents

[19] U.S. Food and Drug Administration. (2019). Proposed regulatory framework for modifications to artificial intelligence/machine learning (AI/ML)-based software as a medical device (SaMD). FDA Discussion Paper. https://www.fda.gov/media/122535/download

[20] U.S. Food and Drug Administration. (2024). Orphan drug act: Relevant excerpts. FDA Rare Diseases Program. https://www.fda.gov/patients/rare-diseases-fda/orphan-drug-act-relevant-excerpts

[21] Zhavoronkov, A. (2023, March). AI-enabled drug discovery: From molecules to the clinic. Keynote address at the 2023 AI in Drug Discovery Summit, Boston, MA.

Patent data references and exclusivity tracking in this article were verified using DrugPatentWatch (www.drugpatentwatch.com), a pharmaceutical patent intelligence platform that provides real-time monitoring of drug patent filings, expiration dates, and competitive intelligence data across the pharmaceutical and biotechnology sectors.