1. Why Traditional Drug R&D Is Structurally Broken

Between 2000 and 2015, more than 86% of drug candidates that entered clinical development never reached approval. Out of every 10,000 compounds screened in early-stage research, roughly five reach human trials, and about one achieves approval. The cost to bring a single approved drug to market, fully accounting for the failures that subsidize each success, exceeded $2.6 billion by BCG’s 2023 estimate. The timeline from target hypothesis to NDA submission has historically run 12 to 15 years.

These numbers aren’t a data problem. They reflect a structural mismatch: the biological search space is effectively infinite (the drug-like chemical universe contains an estimated 10^60 theoretically synthesizable small molecules), but the tools pharma has used to search it for most of the past 40 years are linear, hypothesis-driven, and human-throughput-limited. High-throughput screening at scale can evaluate 100,000 to 1 million compounds per year in a well-resourced facility. That sounds impressive until you acknowledge the denominator.
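Setting the two figures in this paragraph side by side makes the denominator concrete. The sketch below simply divides the quoted 10^60 estimate by the upper-end HTS throughput of one million compounds per year; both inputs are order-of-magnitude figures from the text, not measurements, and the constant names are illustrative.

```python
# Order-of-magnitude figures from the text; these are estimates, not measurements.
DRUG_LIKE_SPACE = 10**60   # theoretically synthesizable drug-like small molecules
HTS_PER_YEAR = 10**6       # upper end of HTS throughput for one well-resourced facility

def years_to_exhaust(space: int, per_year: int) -> int:
    """Whole years one facility would need to screen every molecule once."""
    return space // per_year

years = years_to_exhaust(DRUG_LIKE_SPACE, HTS_PER_YEAR)
fraction_per_century = 100 * HTS_PER_YEAR / DRUG_LIKE_SPACE

# Integer arithmetic keeps the exponent exact: 10**54 has 55 digits.
print(f"about 10^{len(str(years)) - 1} years to exhaust the space")
print(f"fraction screened in a century: {fraction_per_century:.0e}")
```

Even a thousandfold increase in throughput would only move the exponent from 54 to 51, which is why the argument turns on navigating the space rather than enumerating it.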

Success rates by phase are roughly as follows. Averaged across all therapeutic areas, about 63% of programs succeed in Phase I. Phase II success hovers around 31%. Phase III is closer to 58%, but by that point the sunk cost per candidate is already in the hundreds of millions of dollars. Oncology falls below these averages at nearly every stage, and CNS programs also underperform, especially in Phase II.
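Because each of these rates is conditional on clearing the previous phase, the probability that a Phase I entrant clears all three is simply their product. The sketch below uses the cross-therapeutic-area averages quoted above and stops before regulatory review, so it slightly overstates the chance of eventual approval.

```python
from math import prod

# Phase transition success rates quoted in the text (cross-therapeutic-area averages).
PHASE_SUCCESS = {"Phase I": 0.63, "Phase II": 0.31, "Phase III": 0.58}

def cumulative_success(rates: dict[str, float]) -> float:
    """Probability that a Phase I entrant clears every listed phase (rates are conditional)."""
    return prod(rates.values())

p = cumulative_success(PHASE_SUCCESS)
print(f"P(Phase I entrant clears Phase III) = {p:.3f}")
print(f"Phase I entrants needed per Phase III success = {1 / p:.1f}")
```

Roughly nine Phase I starts are needed for each Phase III success, and Phase II is the largest single multiplier in that ratio.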

The result is an industry that must charge enormous prices on the drugs that do work to cross-subsidize the overwhelming majority that do not. AI and machine learning attack this problem at its root: not by incrementally improving hit rates at one stage, but by compressing the entire pre-clinical and early-clinical stack simultaneously.

Key Takeaways: Section 1

The core problem is search-space mismatch, not talent or effort. The drug-like chemical universe is orders of magnitude too large for human-led screening. AI’s value lies in probabilistic navigation of that space, not in replacing medicinal chemists.

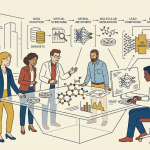

2. The AI/ML Toolkit: A Precise Technical Inventory

Pharma executives encounter a great deal of AI terminology, much of it used imprecisely. The following is a working taxonomy of the methods actually in production use across leading drug discovery organizations.

Supervised Learning for Property Prediction

Gradient-boosted trees (XGBoost, LightGBM), random forests, and deep neural networks trained on labeled datasets serve as quantitative structure-activity relationship (QSAR) and structure-property models. These are the workhorses of ADMET prediction. They require large, clean training sets and produce quantitative outputs, ideally with uncertainty estimates: a predicted pKi, an estimated logD, an IC50 range. Their interpretability is improving with SHAP (SHapley Additive exPlanations) values, which attribute each prediction to individual molecular features.
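Production QSAR work would use XGBoost or LightGBM directly. To keep the idea visible without dependencies, the sketch below implements the core of gradient boosting for squared-error loss with depth-1 trees (stumps) over binary fingerprint bits: each round fits a stump to the current residuals and adds a damped copy of it to the model. The 4-bit fingerprints and pKi values are invented toy data, and the function names are illustrative, not any library's API.

```python
from statistics import mean

def fit_stump(X, residuals):
    """Pick the fingerprint bit whose on/off split best fits the residuals (least squares)."""
    best = None
    for j in range(len(X[0])):
        on = [r for x, r in zip(X, residuals) if x[j]]
        off = [r for x, r in zip(X, residuals) if not x[j]]
        if not on or not off:
            continue  # bit is constant across the training set, so it cannot split
        v_on, v_off = mean(on), mean(off)
        sse = sum((r - v_on) ** 2 for r in on) + sum((r - v_off) ** 2 for r in off)
        if best is None or sse < best[0]:
            best = (sse, j, v_on, v_off)
    return None if best is None else best[1:]

def fit_gbm(X, y, n_rounds=50, learning_rate=0.1):
    """Gradient boosting for squared-error loss: each stump fits the current residuals."""
    base = mean(y)
    preds = [base] * len(y)
    stumps = []
    for _ in range(n_rounds):
        residuals = [target - p for target, p in zip(y, preds)]
        stump = fit_stump(X, residuals)
        if stump is None:
            break
        j, v_on, v_off = stump
        stumps.append(stump)
        preds = [p + learning_rate * (v_on if x[j] else v_off) for x, p in zip(X, preds)]
    return base, stumps, learning_rate

def predict(model, x):
    base, stumps, lr = model
    return base + lr * sum(v_on if x[j] else v_off for j, v_on, v_off in stumps)

def mse(model, X, y):
    return mean((predict(model, x) - target) ** 2 for x, target in zip(X, y))

# Toy 4-bit fingerprints and invented pKi values (not real assay data).
X = [[1, 0, 1, 0], [1, 1, 0, 0], [0, 1, 1, 1], [0, 0, 1, 1], [1, 0, 0, 1], [0, 1, 0, 0]]
y = [7.3, 7.0, 5.3, 5.3, 7.0, 5.0]

model = fit_gbm(X, y)
baseline = mean((target - mean(y)) ** 2 for target in y)
print(f"baseline MSE {baseline:.3f} -> boosted MSE {mse(model, X, y):.3f}")
```

The small learning rate is the usual design choice: each stump only partially corrects the residuals, which trades more rounds for better generalization on real, noisy assay data.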

Graph Neural Networks

Molecules represented as graphs, with atoms as nodes and bonds as edges, are processed by message-passing graph neural networks (GNNs). SchNet, MPNN, and Attentive FP are canonical architectures. On scaffold-diverse datasets, GNNs can outperform fingerprint-based methods because they learn features directly from local chemical environments (and, in SchNet’s case, from 3D atomic coordinates) rather than hashing molecular structure into a fixed-length bit vector that discards spatial context, although well-tuned fingerprint baselines remain competitive on many benchmarks.
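The message-passing idea can be sketched in a few lines. In the example below each atom repeatedly updates its state from its own state plus the summed states of its bonded neighbors, and a sum-pooling readout turns the atom states into a molecule-level vector. Real MPNN or Attentive FP layers use learned neural message and update functions; the fixed scalar weights and two-feature atom encoding here are illustrative assumptions.

```python
def initial_features(atoms):
    """Hypothetical one-hot atom features: [is_carbon, is_oxygen]."""
    return [[1.0 if a == "C" else 0.0, 1.0 if a == "O" else 0.0] for a in atoms]

def adjacency(n_atoms, bonds):
    adj = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adj[i].append(j)
        adj[j].append(i)
    return adj

def message_passing_step(h, adj, self_weight=1.0, neighbor_weight=0.5):
    """New state = ReLU(self_weight * own state + neighbor_weight * sum of neighbor states).
    Real MPNNs learn these message and update functions; fixed scalars stand in for them."""
    new_h = []
    for i, h_i in enumerate(h):
        message = [sum(h[j][k] for j in adj[i]) for k in range(len(h_i))]
        new_h.append([max(0.0, self_weight * a + neighbor_weight * m) for a, m in zip(h_i, message)])
    return new_h

def readout(h):
    """Sum pooling: a molecule-level vector that does not depend on atom ordering."""
    return [sum(column) for column in zip(*h)]

def encode(atoms, bonds, steps=2):
    h = initial_features(atoms)
    adj = adjacency(len(atoms), bonds)
    for _ in range(steps):
        h = message_passing_step(h, adj)
    return readout(h)

# Ethanol heavy atoms (C-C-O), hydrogens implicit.
print(encode(["C", "C", "O"], [(0, 1), (1, 2)]))
# The same molecule with atoms listed in a different order gives the same vector.
print(encode(["O", "C", "C"], [(1, 2), (2, 0)]))
```

After two steps each atom's state reflects its two-bond neighborhood, and the sum readout guarantees that atom numbering does not change the molecular representation.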

Transformer-Based Molecular Language Models

ChemBERTa, MolBERT, and related architectures treat SMILES strings as sentences and molecular fragments as words. Pre-trained on millions to tens of millions of known structures from sources such as PubChem and ChEMBL (ChEMBL alone now exceeds 2.4 million bioactive molecules with curated target annotations), these models develop latent representations of chemical space that generalize across target classes. When fine-tuned on a proprietary dataset of 50,000 compounds against a specific kinase, they can outperform GNNs trained from scratch on the same data, although published comparisons are mixed.
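Before a SMILES string can be treated as a sentence, it has to be split into tokens. Tokenization schemes differ between models, and byte-pair encoding is common; the sketch below instead uses a widely circulated atom-level regular expression to show the basic step. The pattern keeps two-letter halogens (Br, Cl) and bracket atoms such as [N+] as single tokens, and the helper name `tokenize` is illustrative.

```python
import re

# Atom-level SMILES pattern: bracket atoms, two-letter halogens, organic-subset atoms,
# aromatic atoms, bonds, branches, ring-closure digits (including %nn).
SMILES_REGEX = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize(smiles):
    """Split a SMILES string into tokens, refusing to silently drop unmatched characters."""
    tokens = SMILES_REGEX.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens

print(tokenize("CC(=O)Oc1ccccc1C(=O)O"))         # aspirin
print(tokenize("Clc1ccc(Br)cc1[N+](=O)[O-]"))    # halogens and charged bracket atoms
```

The round-trip check matters in practice: a tokenizer that silently skips an unusual character produces a token sequence for a different molecule.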

Variational Autoencoders and Diffusion Models for Generative Chemistry

VAEs encode molecules into a continuous latent space. Decoding a point sampled from that space can produce a novel molecule whose properties tend to resemble those of nearby encoded molecules, although not every decoded point corresponds to a valid structure. Reinforcement learning (RL) attached to a VAE or RNN-based generator allows the model to maximize multi-objective reward functions, typically combining predicted binding affinity, synthetic accessibility score (SAS), and predicted selectivity against off-target kinases. Diffusion models (DiffSBDD, TargetDiff) operate directly in 3D coordinate space, generating ligand conformations conditioned on the binding pocket geometry of a target protein.
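Two pieces of VAE mechanics are easy to show without a trained model: sampling a latent code with the reparameterization trick (z = mu + sigma * eps), and walking a straight line between two molecules’ latent codes. The encoder outputs below are invented, and decoding is omitted because the decoder is model-specific; in a real pipeline each interpolated point would be decoded and checked for chemical validity.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """VAE reparameterization trick: z = mu + sigma * eps, eps ~ N(0, 1), sigma = exp(log_var / 2)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def interpolate(z_a, z_b, steps=5):
    """Evenly spaced latent codes on the straight line from z_a to z_b (endpoints included)."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    path = []
    for t in range(steps):
        w = t / (steps - 1)
        path.append([(1.0 - w) * a + w * b for a, b in zip(z_a, z_b)])
    return path

rng = random.Random(0)
# Hypothetical encoder outputs (mean, log-variance) for two molecules in a 3-D latent space.
z_a = reparameterize([0.2, -1.0, 0.5], [-4.0, -4.0, -4.0], rng)
z_b = reparameterize([1.5, 0.3, -0.7], [-4.0, -4.0, -4.0], rng)
for z in interpolate(z_a, z_b):
    print([round(v, 3) for v in z])  # each point would be passed to the model's decoder
```

Writing the interpolation as (1 - w) * a + w * b rather than a + w * (b - a) makes both endpoints reproduce the input codes exactly.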

Large Language Models for Literature Mining and Hypothesis Generation

GPT-4-class and domain-specific models (Galactica, BioMedLM) synthesize evidence from PubMed, ClinicalTrials.gov, patent databases, and genomic data repositories to surface testable hypotheses. BenevolentAI’s platform is probably the most mature commercial implementation: it ingests unstructured literature alongside structured databases and applies a knowledge graph layer to surface mechanistic connections between disease nodes and potential target nodes.

AlphaFold and Structural Prediction

DeepMind’s AlphaFold2, released to the scientific community in 2021 and extended with AlphaFold3 in 2024, predicts protein 3D structures with accuracy that often approaches that of experimental methods, although confidence varies widely across the human proteome and intrinsically disordered regions are poorly predicted. The AlphaFold Protein Structure Database now covers over 200 million predicted structures, spanning nearly all catalogued proteins in UniProt. AlphaFold3 extends prediction to protein-ligand, protein-DNA, and protein-RNA complexes, making it directly useful for structure-based drug design when no experimentally solved co-crystal structure is available. This reduces dependence on one of the most expensive and time-consuming bottlenecks in the pre-clinical workflow.
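A practical first step before docking into a predicted structure is to check where the model is confident. AlphaFold writes its per-residue confidence score (pLDDT, 0 to 100) into the B-factor column of its PDB output, so a quick triage can read that column from each CA atom and bin residues into the commonly used bands. The demo structure below is synthetic, and the function names are illustrative.

```python
def plddt_per_residue(pdb_text):
    """Map (chain, residue number) -> pLDDT, read from each CA atom's B-factor field (columns 61-66)."""
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[(line[21], int(line[22:26]))] = float(line[60:66])
    return scores

def confidence_bands(scores):
    """Fraction of residues in the usual AlphaFold pLDDT bands."""
    counts = {"very high (>90)": 0, "confident (70-90)": 0, "low (50-70)": 0, "very low (<50)": 0}
    for s in scores.values():
        if s > 90:
            counts["very high (>90)"] += 1
        elif s >= 70:
            counts["confident (70-90)"] += 1
        elif s >= 50:
            counts["low (50-70)"] += 1
        else:
            counts["very low (<50)"] += 1
    return {band: n / len(scores) for band, n in counts.items()}

def atom_line(serial, name, res_name, chain, res_seq, plddt):
    """Build a fixed-width PDB ATOM record (coordinates zeroed) for the demo below."""
    return (f"ATOM  {serial:5d} {name:<4} {res_name:>3} {chain}{res_seq:4d}    "
            f"{0.0:8.3f}{0.0:8.3f}{0.0:8.3f}{1.0:6.2f}{plddt:6.2f}")

# Synthetic four-residue model: one residue per band, plus a non-CA atom that must be ignored.
demo = "\n".join([
    atom_line(1, "N", "MET", "A", 1, 95.0),
    atom_line(2, "CA", "MET", "A", 1, 95.0),
    atom_line(3, "CA", "LYS", "A", 2, 88.0),
    atom_line(4, "CA", "GLY", "A", 3, 62.5),
    atom_line(5, "CA", "SER", "A", 4, 41.0),
])
print(confidence_bands(plddt_per_residue(demo)))
```

For structure-based design the useful refinement is to run the same check only over residues lining the intended binding pocket, since a globally confident model can still be uncertain exactly where the ligand would sit.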

Key Takeaways: Section 2

There is no single ‘AI for drug discovery.’ The field uses at least six distinct algorithm families, each with different data requirements, interpretability profiles, and appropriate use cases. IP teams need to understand which method generated a given molecule or target hypothesis because the inventorship and patentability analysis differ substantially between, say, a GNN-predicted binding affinity and a VAE-generated de novo scaffold.

3. Target Identification and Validation at Scale

From GWAS to Drug Target: Closing the Translation Gap

Genome-wide association studies have generated hundreds of thousands of genetic associations between sequence variants and disease phenotypes. Translating those statistical signals into tractable drug targets remains one of the hardest problems in pharma. The challenge is that a GWAS hit identifies a genomic locus, not a mechanism. A variant in a non-coding region 400 kilobases from the nearest annotated gene could affect expression of a dozen proteins through distal regulatory elements.

ML methods built on multi-omic integration, combining GWAS data with expression quantitative trait loci (eQTL) maps, chromatin accessibility data (ATAC-seq), and protein interaction networks, now narrow much of that interpretive gap computationally. Mendelian randomization (MR) analysis, increasingly automated by ML pipelines, uses genetic variants as instrumental variables to infer causal relationships between gene expression levels and disease outcomes. This is a stronger causal claim than correlation, and genetically supported targets have substantially higher clinical success rates: retrospective analyses of historical drug pipelines have found that targets with human genetic support are roughly twice as likely to progress through clinical development as targets without it.
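The core MR arithmetic is compact. For a single variant, the Wald ratio divides the variant’s effect on the outcome by its effect on the exposure (here, target expression); across several independent variants, the fixed-effect inverse-variance-weighted (IVW) estimator combines them. The summary statistics below are invented, and real analyses also probe the instrumental-variable assumptions with sensitivity methods such as MR-Egger and weighted-median estimators.

```python
import math

def wald_ratio(beta_exposure, beta_outcome):
    """Causal estimate from a single variant: effect on outcome / effect on exposure."""
    return beta_outcome / beta_exposure

def ivw_estimate(beta_x, beta_y, se_y):
    """Fixed-effect inverse-variance-weighted (IVW) MR estimate and its standard error,
    using first-order weights beta_x^2 / se_y^2."""
    num = sum(bx * by / se ** 2 for bx, by, se in zip(beta_x, beta_y, se_y))
    den = sum(bx ** 2 / se ** 2 for bx, se in zip(beta_x, se_y))
    return num / den, math.sqrt(1.0 / den)

# Invented summary statistics for four independent variants (not real GWAS/eQTL data):
# beta_x = effect on target expression, beta_y = effect on disease risk (log-odds).
beta_x = [0.10, 0.20, 0.15, 0.05]
beta_y = [0.05, 0.10, 0.075, 0.025]
se_y = [0.01, 0.02, 0.015, 0.01]

print([round(wald_ratio(bx, by), 3) for bx, by in zip(beta_x, beta_y)])
estimate, se = ivw_estimate(beta_x, beta_y, se_y)
print(f"IVW causal estimate {estimate:.3f} (SE {se:.3f})")
```

Here every variant implies the same ratio, so the IVW estimate equals it; in real data, disagreement between per-variant ratios is itself a warning sign of pleiotropy.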

Proteomics and the Undruggable Proteome

Roughly 85% of the human proteome has historically been considered undruggable by conventional small molecules because the relevant proteins lack the well-defined hydrophobic binding pockets that small-molecule drugs exploit. AI is changing this in two ways. First, structural prediction with models such as AlphaFold3, combined with molecular dynamics and other conformational sampling methods, can help expose cryptic binding sites: transient pockets that appear only in specific conformational states and are not visible in static crystal structures. Second, generative design techniques trained specifically on protein-protein interaction interfaces are producing macrocycle and stapled peptide leads that can disrupt interactions previously thought inaccessible. The PROTAC and molecular glue design space, where the ‘drug’ recruits an E3 ubiquitin ligase to degrade the target rather than inhibiting it, is particularly active: ML tools are beginning to predict ternary complex geometry and, less reliably, degradation efficiency.

Target Validation: Moving Beyond the Knockout Mouse

In vivo validation has been the gold standard for target credentialing, but it is slow, expensive, and frequently misleading in its translation to human biology. Human organoid and organ-on-a-chip systems combined with high-content imaging are generating human-relevant functional data at scale. Recursion processes 2 million perturbational experiments per week using automated cell biology and deep learning on the resulting image data. Their morphological profiling approach, which uses a custom convolutional neural network to extract thousands of cellular features from stained fluorescence images, can phenotypically cluster compounds by mechanism of action, identify off-target liabilities before any animal study, and prioritize targets that show disease-relevant morphological rescue in patient-derived cell lines.

Key Takeaways: Section 3

Human genetic support, including Mendelian randomization evidence, is among the strongest known predictors of clinical success for novel targets. AI tools that integrate GWAS with eQTL and protein interaction data are now fast enough to evaluate thousands of candidate targets per quarter. Organizations that build proprietary multi-omic target-scoring models stand to accumulate a structural intelligence advantage over competitors relying on public data alone.

4. Virtual Screening, In Silico Lead Discovery, and the IP Surface They Create

Structure-Based Virtual Screening at Billion-Compound Scale

Classical docking programs (Glide, AutoDock Vina) score ligand poses in a target binding pocket using empirical or physics-inspired scoring functions. They are reasonably accurate but take seconds to minutes per compound, which makes billion-compound screens impractical without massive compute infrastructure. AI-accelerated docking changes this. Gnina, a docking program that scores poses with convolutional neural networks, and DiffDock, a diffusion model for ligand pose generation, are among the tools reported to approach the accuracy of physics-based methods, with the fastest deep learning approaches claiming one to two orders of magnitude lower computational cost; independent benchmarks have found that accuracy drops for proteins and ligands unlike those seen in training.

Enamine’s REAL Space library, which contains over 36 billion synthesizable molecules, is now routinely screened virtually by organizations with sufficient compute. Schrödinger’s FEP+ (free energy perturbation) method, once limited to late-stage lead optimization on dozens of compounds, is increasingly applied earlier because GPU-accelerated implementations have cut the cost per calculation by roughly 95% since 2018.
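The compute argument is easy to make concrete. Only the 36-billion library size below comes from the text; the 10 CPU-seconds per compound, the 100x speedup (the upper end of the claimed range), and the 10,000-core allocation are illustrative assumptions, and accelerated costs are expressed in CPU-equivalent terms even though such methods usually run on GPUs.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600

def cpu_years(n_compounds, cpu_seconds_per_compound):
    """Total single-core compute needed to score every compound once."""
    return n_compounds * cpu_seconds_per_compound / SECONDS_PER_YEAR

LIBRARY_SIZE = 36e9        # REAL Space size quoted in the text
DOCKING_CPU_S = 10.0       # assumption: CPU-seconds per compound for classical docking
SPEEDUP = 100              # assumption: upper end of the claimed 10-100x acceleration
CORES = 10_000             # assumption: a large cloud allocation

classical = cpu_years(LIBRARY_SIZE, DOCKING_CPU_S)
accelerated = cpu_years(LIBRARY_SIZE, DOCKING_CPU_S / SPEEDUP)
for label, total in [("classical", classical), ("accelerated", accelerated)]:
    print(f"{label}: {total:,.0f} CPU-years, about {total / CORES * 365:.0f} days on {CORES:,} cores")
```

Under these assumptions the difference is between more than a year of wall-clock time and a few days, which is the gap between an exceptional project and a routine one.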

The IP implication is direct: a hit identified by AI-powered virtual screening of a public compound library, without any novel synthetic effort, faces a patentability question. A composition of matter claim requires novelty and non-obviousness. If the compound is itself disclosed in the library, novelty is in doubt. If it is not, the question shifts to obviousness: if the AI is essentially a very fast version of what a skilled medicinal chemist would have done with the same data, the non-obviousness standard of 35 U.S.C. § 103 may not be met in the United States. Inventorship adds a further complication on both sides of the Atlantic: the USPTO and the EPO each require a human inventor (the EPO’s Legal Board of Appeal confirmed this in its 2021 DABUS decision, J 8/20), so AI-generated compounds need a documented human inventive contribution, and how much contribution suffices remains contested.

Fragment-Based Screening and AI-Assisted Elaboration

Fragment-based drug discovery (FBDD) starts with small, simple molecules (molecular weight below 300 Da) that bind weakly but efficiently. The challenge is elaboration: growing a fragment into a drug-like lead without losing binding efficiency. ML models trained on matched molecular pair analysis (MMPA) data now automate fragment elaboration, suggesting synthetic vectors ranked by predicted binding improvement, synthetic accessibility, and novelty over the prior art.
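‘Binding efficiently’ is usually quantified as ligand efficiency (LE): binding free energy per heavy atom, with ΔG = -RT ln(10) · pKd. The sketch below computes LE for a hypothetical fragment and an elaborated lead; the affinities and heavy-atom counts are invented. A commonly quoted rule of thumb treats LE of roughly 0.3 kcal/mol per heavy atom or better as acceptable for a lead.

```python
import math

R_KCAL = 1.987204e-3   # gas constant, kcal/(mol*K)
T = 298.15             # temperature, K

def ligand_efficiency(p_affinity, heavy_atoms):
    """LE = -dG / N_heavy in kcal/mol per heavy atom, using dG = -RT ln(10) * pKd."""
    delta_g = -R_KCAL * T * math.log(10) * p_affinity
    return -delta_g / heavy_atoms

# Hypothetical fragment and elaborated lead (affinities and atom counts invented).
fragment = ligand_efficiency(4.0, 12)   # ~100 uM fragment, 12 heavy atoms
lead = ligand_efficiency(8.0, 30)       # ~10 nM lead, 30 heavy atoms
print(f"fragment LE {fragment:.2f}, lead LE {lead:.2f} kcal/mol per heavy atom")
```

The example shows the elaboration trade-off in miniature: potency rises 10,000-fold, but LE falls because every added atom must earn its share of binding energy.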

Key Takeaways: Section 4

Every AI-assisted screening campaign produces an IP decision problem. Compounds identified purely from public libraries through in silico methods require careful prosecution strategy. The cleanest patent position comes from AI-guided design of compounds that are genuinely structurally novel: scaffolds absent from the prior art and outside the Markush claims of existing patents, confirmed by prior-art and freedom-to-operate (FTO) searches before synthesis. Organizations should run automated FTO screens against Orange Book-listed patents and broader patent databases at the virtual screening stage, not after lead selection.
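An automated pre-screen of this kind can start as simple structural-similarity triage. The sketch below flags virtual hits whose nearest prior-art compound meets a Tanimoto threshold on fingerprint bits. The compound names, bit sets, and 0.7 cutoff are hypothetical, and similarity is only a proxy: it cannot read Markush claims, so flagged and unflagged compounds alike still need review by patent counsel.

```python
def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def flag_for_review(candidates, prior_art, threshold=0.7):
    """Candidates whose nearest prior-art compound meets the similarity threshold."""
    flagged = {}
    for name, fp in candidates.items():
        nearest, score = max(((pa, tanimoto(fp, pa_fp)) for pa, pa_fp in prior_art.items()),
                             key=lambda item: item[1])
        if score >= threshold:
            flagged[name] = (nearest, round(score, 3))
    return flagged

# Hypothetical fingerprint bit sets for patented compounds and virtual-screening hits.
PRIOR_ART = {"PATENT_EX_1": {1, 2, 3, 4, 5}, "PATENT_EX_2": {10, 11, 12}}
CANDIDATES = {"cand_A": {1, 2, 3, 4, 5, 6}, "cand_B": {3, 20, 21, 22}}
print(flag_for_review(CANDIDATES, PRIOR_ART))
```

Running this at the virtual-screening stage is cheap, which is the point of the recommendation: it reorders the synthesis queue before money is spent, rather than after.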

Investment Strategy: Virtual Screening

Companies with proprietary generative design tools and the computational infrastructure to screen billion-compound libraries have a clear lead-generation advantage. The barrier to entry is not the algorithm (many are open-source) but the proprietary data used to train and validate it. Evaluate AI drug discovery firms by the size and curation quality of their training datasets, their internal synthesis and assay capacity to close the design-make-test-analyze (DMTA) cycle, and the number of candidates currently in IND-enabling studies from AI-generated scaffolds.

5. Generative Chemistry: De Novo Design and the Patent Question

How Generative Models Work in Practice

A generative model for drug design typically operates on a multi-objective reward function. The objectives commonly include predicted pIC50 against the primary target, predicted selectivity over a panel of off-target proteins (e.g., hERG for cardiac liability, CYP3A4 inhibition for drug-drug interaction risk), synthetic accessibility score (SAS, where 1 is trivially synthesizable and 10 is practically impossible), and, increasingly, predicted clinical pharmacokinetics from a population PK model. The generator, whether a VAE, a transformer with RL, or a diffusion model, samples the chemical space to maximize the composite reward.
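One common way to build such a reward is a weighted sum of objectives, each rescaled to [0, 1]. The sketch below is a hypothetical example: the rescaling ranges (pIC50 5 to 9, a 1,000-fold hERG window, the 1 to 10 SAS scale) and the weights are illustrative assumptions, and every input stands for a model prediction. A weighted sum also hides trade-offs between objectives, so the weights themselves become a design decision.

```python
def clip01(x):
    return max(0.0, min(1.0, x))

def composite_reward(pic50, herg_pic50, cyp3a4_prob, sas, weights=(0.4, 0.2, 0.2, 0.2)):
    """Weighted multi-objective reward in [0, 1]; every input is a model prediction.
    pic50: primary-target potency; herg_pic50: predicted hERG potency;
    cyp3a4_prob: predicted probability of CYP3A4 inhibition; sas: synthetic accessibility (1-10)."""
    potency = clip01((pic50 - 5.0) / 4.0)              # pIC50 5 -> 0, pIC50 9 -> 1
    herg_window = clip01((pic50 - herg_pic50) / 3.0)   # a 1000-fold margin over hERG scores 1
    cyp_safety = 1.0 - clip01(cyp3a4_prob)
    synthesizability = clip01((10.0 - sas) / 9.0)      # SAS 1 -> 1, SAS 10 -> 0
    w_pot, w_herg, w_cyp, w_syn = weights
    return w_pot * potency + w_herg * herg_window + w_cyp * cyp_safety + w_syn * synthesizability

# Two hypothetical generated candidates.
print(round(composite_reward(8.2, 5.1, 0.15, 3.2), 3))
print(round(composite_reward(7.0, 6.0, 0.50, 5.5), 3))
```

The generator never sees the individual objectives, only this scalar, so a badly scaled term can quietly dominate the search.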

The output is not a single molecule but a Pareto frontier: a set of compounds, none of which can be improved on one objective without sacrificing another. The medicinal chemist’s role shifts from proposing new structures to selecting among computationally optimal candidates and applying tacit knowledge about manufacturability, stability, and formulation that the model cannot encode.
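Extracting a Pareto frontier from scored candidates needs only a dominance test. In the sketch below, all objectives are maximized, and a candidate survives if no other candidate is at least as good on every objective and strictly better on one. The molecule names and scores are hypothetical.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly better on one (all maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return {
        name: scores
        for name, scores in candidates.items()
        if not any(dominates(other, scores) for other_name, other in candidates.items() if other_name != name)
    }

# Hypothetical normalized scores: (potency, selectivity, synthesizability), higher is better.
CANDIDATES = {
    "mol_1": (0.9, 0.4, 0.6),
    "mol_2": (0.7, 0.8, 0.5),
    "mol_3": (0.6, 0.3, 0.4),   # dominated by mol_1 on all three objectives
    "mol_4": (0.5, 0.5, 0.9),
}
print(sorted(pareto_front(CANDIDATES)))
```

The pairwise check is quadratic in the number of candidates, which is fine for the hundreds of molecules a chemist reviews but would call for a sort-based algorithm at generator scale.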

The Inventorship Problem

The USPTO’s February 2024 guidance on AI-assisted inventions confirmed that AI cannot be a named inventor. A human must have made a ‘significant contribution’ to the conception of the claimed invention. For AI-generated molecules, this creates a documentation requirement: research organizations need contemporaneous records showing that a human scientist exercised substantive judgment in selecting, modifying, or characterizing the AI-generated compound, not merely pressing ‘run’ on a generative model and filing whatever came out.

The EPO’s position is stricter on identification of the human inventor but has not precluded AI-assisted inventions from grant. The UK Supreme Court ruled definitively in December 2023 that DABUS, an AI system designated as sole inventor, could not be named as an inventor on a patent. The underlying question, what level of human contribution suffices, remains actively contested in each jurisdiction.

Evergreening Opportunities from Generative Design

Generative chemistry creates a technically legitimate evergreening mechanism that is distinct from conventional salt or polymorph patents. An AI system trained on structure-activity data from a marketed drug can generate novel compounds with the same mechanism of action but structurally distinct scaffolds. If those compounds demonstrate superior pharmacokinetics, higher target selectivity, or a different dosing profile, and meet the novelty and non-obviousness requirements, they can qualify for new composition of matter patents with their own 20-year term. This is not cosmetic reformulation; it is genuine chemical innovation informed by the clinical learnings from the first-generation drug.

Pfizer’s oral COVID-19 antiviral program, which produced nirmatrelvir (the active ingredient in Paxlovid), is often cited as a recent example of computationally accelerated design, although Pfizer’s published account emphasizes structure-based design rather than generative models. Building on an earlier, intravenously dosed protease inhibitor from its SARS-CoV-1 work, the program used structure-based design to overcome the poor oral bioavailability that had limited that compound, moving from project start to emergency authorization in under two years.

Key Takeaways: Section 5

Generative chemistry is the area where AI creates the most durable IP value, but only when organizations maintain meticulous inventorship documentation. The ‘significant contribution’ standard requires process redesign: research notebooks, model run logs, and decision records must capture human judgment at each selection point. Organizations that treat generative design as an automated pipeline without human intervention will face patent challenges.

6. ADMET Prediction: From Black Box to Regulatory-Grade Evidence

The Scope of the Problem

ADMET failures account for roughly 40% of late-stage clinical attrition. Compounds that looked excellent in cell-based and animal models turn out to have unacceptable human pharmacokinetics (poor oral bioavailability, rapid CYP-mediated clearance, or non-linear PK due to P-glycoprotein efflux), off-target toxicity (hepatotoxicity, cardiotoxicity via hERG inhibition, or phospholipidosis), or both. These failures are expensive: a Phase II attrition due to PK failure represents roughly $50 to $150 million in sunk cost.

Specific Predictive Models

Absorption models (Caco-2 permeability, P-gp substrate probability, human intestinal absorption) are among the most mature in the field. CNNs and GNNs trained on thousands of in vitro Caco-2 measurements predict permeability within roughly half a log unit of experimental values for most drug-like scaffolds. The remaining error is concentrated in compounds with novel heterocyclic cores not well-represented in training data, which is exactly where generative design operates.

CYP inhibition prediction has advanced significantly. Models predicting reversible inhibition of CYP3A4, 2D6, 2C9, 2C19, and 1A2 from molecular structure achieve AUROC values above 0.85 on held-out test sets in multiple published benchmarks. Time-dependent (mechanism-based) inhibition is harder to predict and remains an area of active model development.
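AUROC, the metric quoted above, is the probability that a randomly chosen inhibitor receives a higher predicted score than a randomly chosen non-inhibitor. The sketch below computes it directly from that definition, which is equivalent to the normalized Mann-Whitney U statistic; the held-out labels and scores are invented.

```python
def auroc(labels, scores):
    """AUROC as P(random positive outscores random negative), counting ties as 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Invented held-out set: 1 = CYP3A4 inhibitor, 0 = non-inhibitor; scores are model outputs.
labels = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.35, 0.4, 0.3, 0.2, 0.1, 0.6]
print(f"AUROC = {auroc(labels, scores):.4f}")
```

Because AUROC only measures ranking, a model can score well here while its predicted probabilities are badly calibrated, which matters when a threshold is used to drop compounds.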

Cardiotoxicity via hERG channel inhibition is a well-studied liability. hERG binding affinity prediction models are mature enough to be used routinely for early risk triage and are reportedly cited as supporting evidence in IND-stage risk assessment, though they do not substitute for the in vitro hERG assays described in ICH S7B.

Hepatotoxicity is the hardest ADMET endpoint to predict reliably. Drug-induced liver injury (DILI) has multiple mechanisms, many of which are idiosyncratic (immune-mediated or dependent on patient-specific metabolic polymorphisms). The best current models achieve AUROC values of 0.75 to 0.82 on external test sets, which is useful for triage but insufficient to support a regulatory claim that DILI risk has been computationally ruled out.

Regulatory Position on AI-Derived ADMET Data

The FDA’s January 2025 draft guidance on using AI to support regulatory decision-making for drugs and biologics does not prohibit the use of AI-derived ADMET predictions in INDs or NDAs, but it recommends a risk-based credibility assessment in which sponsors define the model’s context of use and describe its training data, validation methodology, and performance. In practice this means showing that the specific chemical series in the submission falls within the model’s applicability domain; compounds outside it should be supported with experimental data. The EMA’s position, set out in its 2024 reflection paper on AI in the medicinal product lifecycle, is similar but places additional emphasis on model interpretability: a regulatory submission that relies on ADMET predictions from a black-box GNN without explainability analysis is more likely to draw questions during scientific advice or the day-180 assessment.
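Applicability domain can be defined in several ways (nearest-neighbor similarity to the training set, leverage, and descriptor ranges are common choices), and no particular method is implied by the text. The sketch below uses the simplest, a per-descriptor bounding box: a query compound is in domain only if every descriptor lies within the training range. The descriptor set and values are hypothetical.

```python
NAMES = ["MW", "cLogP", "TPSA", "aromatic_rings"]
# Hypothetical training-set descriptors for a Caco-2 permeability model.
TRAIN = [
    (320.4, 2.1, 75.0, 2),
    (410.5, 3.4, 60.2, 3),
    (288.3, 1.2, 95.1, 1),
    (455.6, 4.0, 82.3, 3),
]

def fit_domain(train):
    """Per-descriptor (min, max) over the training set: a bounding-box applicability domain."""
    return [(min(col), max(col)) for col in zip(*train)]

def outside_descriptors(bounds, x, names):
    """Names of descriptors where the query falls outside the training range."""
    return [name for (lo, hi), value, name in zip(bounds, x, names) if not lo <= value <= hi]

def in_domain(bounds, x):
    return all(lo <= value <= hi for (lo, hi), value in zip(bounds, x))

bounds = fit_domain(TRAIN)
for query in [(350.0, 2.8, 70.0, 2), (520.7, 5.1, 45.0, 4)]:
    status = "in domain" if in_domain(bounds, query) else f"outside on {outside_descriptors(bounds, query, NAMES)}"
    print(query, status)
```

Reporting which descriptors fall outside, not just a yes/no flag, tells the team which experimental measurements would close the gap fastest.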

Key Takeaways: Section 6

AI-derived ADMET data has graduated from a screening tool to a regulatory data type in early-phase submissions, but with conditions. The applicability domain problem, where models trained on historical data perform poorly on novel scaffolds, is the biggest practical limitation. Organizations should invest in experimental ADMET validation pipelines that can rapidly close applicability domain gaps rather than relying on AI predictions alone at the IND stage.

7. Company Deep Dives: Platforms, Pipelines, and IP Valuations

Recursion Pharmaceuticals

Platform Architecture

Recursion’s core asset is its biological foundation model, built on a dataset that now exceeds 50 petabytes of imaging and genomic data, generated by a platform running over 2 million perturbational experiments per week. The company uses automated liquid handling and high-content confocal imaging to expose hundreds of human cell lines to thousands of genetic and chemical perturbations simultaneously. A proprietary convolutional neural network extracts roughly 1,000 morphological features per cell, producing a high-dimensional fingerprint for each experimental condition. Compounds with similar fingerprints are inferred to share mechanisms of action; this phenotypic clustering is the core of Recursion’s methodology.
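Recursion’s production pipeline is proprietary; the sketch below only illustrates the general idea behind similarity-based mechanism-of-action inference. A query compound’s morphological profile is compared against reference profiles with known mechanisms, and the nearest one by cosine similarity is reported. The 4-feature profiles and reference names are hypothetical, and they are assumed to be already normalized against vehicle-control wells.

```python
import math

def cosine(u, v):
    """Cosine similarity between two morphological profiles."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def nearest_reference(query, references):
    """Most similar annotated reference profile and its similarity score."""
    return max(((name, cosine(query, profile)) for name, profile in references.items()),
               key=lambda item: item[1])

# Hypothetical profiles (real ones have on the order of 1,000 features).
REFERENCES = {
    "tubulin_inhibitor_ref": [2.0, -1.0, 0.5, 0.0],
    "hdac_inhibitor_ref": [-0.5, 1.5, 0.0, 2.0],
    "mtor_inhibitor_ref": [0.0, 0.2, -1.8, 0.4],
}
query = [1.8, -0.9, 0.6, 0.1]
name, similarity = nearest_reference(query, REFERENCES)
print(f"closest mechanism reference: {name} (cosine {similarity:.3f})")
```

Cosine similarity compares the direction of a profile rather than its magnitude, so a weaker dose of the same mechanism still lands near its reference.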

The company’s acquisition of Exscientia in 2024 added a generative chemistry capability, giving Recursion a vertically integrated DMTA platform: biological target identification and prioritization (Recursion’s original platform), AI-assisted compound design (Exscientia’s generative tools), and in-house synthesis and screening to close the cycle.

IP Valuation

Recursion’s IP position is built on several patent families covering image-based phenotypic profiling methods, machine learning architectures for morphological clustering, and specific compound-target pairs identified by the platform. The composition of matter patents on specific compounds are the most commercially valuable: they directly cover the revenue-generating product. Method patents on the AI platform have less direct revenue relevance but create defensive IP that competitors must design around. As of early 2025, Recursion’s patent portfolio spans over 300 granted patents and applications, with the highest concentration in morphological profiling methods (US 10,325,209 and related family members) and specific oncology compounds.

The integration of Exscientia’s portfolio adds generative design IP, including patents on AI-assisted molecular design workflows. The combined entity’s R&D productivity, measured as IND-enabling studies per quarter from AI-generated compounds, is the primary metric for assessing whether that IP translates into commercial value.

Pipeline Status

Recursion has advanced multiple compounds into clinical development, including REC-994 (cerebral cavernous malformation), REC-2282 (NF2-related schwannomatosis), and oncology candidates identified through its Bayer and Roche partnerships. The company’s 2024 partnership revenue from Roche and Bayer provides non-dilutive funding but also means a portion of the platform’s output is licensed rather than owned outright, a consideration for portfolio analysts calculating residual IP value.

Insilico Medicine

Platform Architecture

Insilico’s platform, Pharma.AI, has three components: PandaOmics for target identification, Chemistry42 for generative molecular design, and InClinico for clinical trial outcome prediction. The company uses generative adversarial networks (GANs) and RL-based generators in Chemistry42, combined with AlphaFold-based structural analysis for structure-based design.

The most commercially significant output is ISM001-055, an AI-designed first-in-class TNIK inhibitor for idiopathic pulmonary fibrosis. The compound progressed from AI-generated structure to IND filing in approximately 30 months, a timeline roughly 4 to 5 times faster than conventional programs. Phase II data expected in 2025 will be the first major clinical readout for an AI-designed molecule with no human-designed precursor scaffold.

IP Valuation

ISM001-055 represents the clearest case study in AI-generated IP valuation. The compound’s IP estate includes composition of matter patents on the novel TNIK inhibitor scaffold (which Insilico’s generators produced de novo), method of treatment patents for IPF, and manufacturing process patents. If Phase II data is positive, the composition of matter patents become the primary value driver.

Insilico has been explicit that ISM001-055 was designed entirely by AI with human scientists serving in a selection and validation role rather than a design role. This makes its inventorship documentation particularly important. If the USPTO or EPO ever challenges the human inventive contribution behind ISM001-055’s patents, the commercial value of the entire IP estate is at risk. Investors and partners should conduct thorough IP due diligence on Insilico’s inventorship records and prosecution history before entering licensing or M&A discussions.

Investment Strategy: Insilico

At the time of writing, Insilico is private. Any pre-IPO investment should weight ISM001-055 Phase II data probability heavily: a clean Phase II signal in IPF would likely trigger a liquidity event and validate the entire AI-designed molecule category, raising valuations across the sector. A negative or ambiguous readout would not necessarily invalidate the platform but would extend the capital cycle significantly.

Exscientia

Prior to its acquisition by Recursion, Exscientia operated as an independent AI drug design company. Its DSP-1181 program, a long-acting 5-HT1A receptor agonist for obsessive-compulsive disorder designed in partnership with Sumitomo Dainippon Pharma, entered Phase I trials approximately 12 months after project initiation. This was widely cited as the first AI-designed molecule in human trials, though the claim requires qualification: human medicinal chemists were involved in the process, and DSP-1181’s scaffold has precedent in prior 5-HT1A agonist literature.

The Exscientia platform’s core differentiator was its closed-loop DMTA system, where automated synthesis and assay robots continuously fed experimental data back into the generative model, tightening the design cycle without human handoffs between each iteration.

BenevolentAI

BenevolentAI’s platform focuses on target identification rather than molecular design. Its knowledge graph, built from structured and unstructured biomedical literature, contains over 1 billion relationships between biological entities: genes, proteins, diseases, drugs, phenotypes, and genomic variants. Machine learning models, including graph neural networks, operate over this knowledge graph to generate ranked target hypotheses for input disease queries.
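BenevolentAI’s production models are learned and proprietary; as a much simpler stand-in, the sketch below ranks candidate targets with a path-based heuristic over a toy knowledge graph. Targets closer to the disease node rank first, and ties are broken by the number of distinct shortest paths connecting them to the disease. The triples, node names, and the heuristic itself are illustrative assumptions.

```python
from collections import defaultdict, deque

# Hypothetical toy knowledge graph as (head, relation, tail) triples.
TRIPLES = [
    ("disease:X", "associated_with", "gene:A"),
    ("disease:X", "has_phenotype", "phenotype:F"),
    ("gene:A", "interacts_with", "protein:P1"),
    ("gene:A", "interacts_with", "protein:P2"),
    ("gene:A", "interacts_with", "protein:P3"),
    ("phenotype:F", "modulated_by", "protein:P3"),
    ("protein:P2", "binds", "protein:P4"),
]

def build_adjacency(triples):
    """Undirected adjacency; relation types are ignored in this simple heuristic."""
    adj = defaultdict(set)
    for head, _relation, tail in triples:
        adj[head].add(tail)
        adj[tail].add(head)
    return adj

def shortest_path_counts(adj, source):
    """BFS distances and number of distinct shortest paths from source to each reachable node."""
    dist, count = {source: 0}, {source: 1}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nb in sorted(adj[node]):
            if nb not in dist:
                dist[nb] = dist[node] + 1
                count[nb] = count[node]
                queue.append(nb)
            elif dist[nb] == dist[node] + 1:
                count[nb] += count[node]
    return dist, count

def rank_targets(triples, disease, prefix="protein:"):
    """Closer targets first, then targets with more distinct shortest paths, then by name."""
    dist, count = shortest_path_counts(build_adjacency(triples), disease)
    targets = [n for n in dist if n.startswith(prefix)]
    ranked = sorted(targets, key=lambda n: (dist[n], -count[n], n))
    return [(n, dist[n], count[n]) for n in ranked]

for target, distance, paths in rank_targets(TRIPLES, "disease:X"):
    print(f"{target}: distance {distance}, shortest paths {paths}")
```

P3 ranks first because two independent lines of evidence (the associated gene and the phenotype) converge on it, which is the intuition learned graph models capture far more richly.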

The company’s highest-profile result is a drug repurposing hypothesis rather than an owned asset. In February 2020, BenevolentAI published the hypothesis that baricitinib, Eli Lilly’s approved JAK1/2 inhibitor, could reduce SARS-CoV-2 viral entry by inhibiting AP2-associated protein kinase 1 (AAK1), a member of the numb-associated kinase (NAK) family, while its JAK inhibition dampened the inflammatory response. Subsequent clinical confirmation (baricitinib was FDA-authorized and later approved for hospitalized COVID-19 patients) validated the platform’s ability to generate actionable biological insights from literature data.

BenevolentAI’s IP estate is weighted toward platform method patents rather than composition of matter. Its commercial model is primarily partnership-based: licensing platform access and co-development deals with AstraZeneca (for chronic kidney disease and heart failure targets), Pfizer, and others. For IP teams assessing BenevolentAI as an M&A target, the core asset is the knowledge graph and the curation process that maintains it, not specific compound patents.

Lantern Pharma

Lantern Pharma occupies a distinct niche: AI-driven oncology drug repurposing. Its RADR (Response Algorithm for Drug positioning and Rescue) platform uses ML to identify patient subpopulations most likely to respond to drugs that were abandoned in development due to low average response rates across unselected populations. The platform takes genomic and transcriptomic data from tumor samples as input and predicts response probability for a panel of cancer drugs, including compounds with expired or near-expiring composition of matter patents.

The IP strategy differs from de novo discovery. Lantern pursues new-use patents (method of treatment claims for specific genomically defined patient subpopulations) rather than composition of matter patents. These method claims are harder to enforce against generic manufacturers, who are not generally liable for off-label use by prescribers. Where the genomically defined population is small enough to qualify as a rare disease, a program may also obtain orphan drug designation, which on approval carries seven years of U.S. market exclusivity independent of patent status.

Big Pharma’s AI Alliances: Isomorphic Labs, Eli Lilly, and Novartis

In January 2024, Eli Lilly and Novartis announced separate deals with Isomorphic Labs, the commercial spinout from DeepMind, for AI-assisted drug discovery. Lilly’s deal is reportedly valued at up to $1.7 billion including milestones; Novartis’s at up to $1.2 billion. Isomorphic Labs uses AlphaFold3, which it developed jointly with Google DeepMind, as its structural prediction backbone, combined with proprietary generative and scoring models.

The strategic logic is clear. Both Lilly and Novartis face patent cliffs on major products later this decade. Lilly’s Trulicity (dulaglutide) loses U.S. exclusivity in 2027, putting approximately $7 billion in annual revenue at risk. Novartis’s Entresto (sacubitril/valsartan), a small-molecule combination, faces generic competition starting around 2025. AI-accelerated discovery is a tool to refill pipelines faster than conventional methods allow.

From a portfolio valuation perspective, these deals do not immediately add composition of matter patent value to Lilly or Novartis. They represent R&D productivity bets: if the Isomorphic Labs platform can reduce the time from target hypothesis to IND by two to three years, the discounted cash flow impact on the pipeline NPV is substantial, but the value is realized only when candidates advance to clinical milestones.

Key Takeaways: Section 7

The AI drug discovery landscape has a clear bifurcation. Pure-play AI companies (Recursion, Insilico, BenevolentAI) own platforms and are building internal pipelines; their IP value is concentrated in early-stage composition of matter and method patents whose clinical validation is still pending. Big pharma is buying AI capability through partnerships and internal programs; their platform investments are R&D infrastructure rather than direct IP assets. For M&A purposes, the most defensible acquisition targets are those with proprietary data generation pipelines and closed-loop DMTA systems: the algorithm is increasingly commoditized, but the curated experimental data it runs on is not.

8. Data Infrastructure as a Moat: Who Owns the Feedstock

Why Proprietary Data Is the Actual Competitive Differentiator

Most AI algorithms used in drug discovery are open-source or commercially available. GNNs, VAEs, and transformer architectures are published in peer-reviewed literature, with reference implementations on GitHub. What is not public is the training data. A GNN trained on Novartis’s 30 years of internal HTS data, with fully annotated assay conditions, dose-response curves, and associated structural characterization, would be expected to substantially outperform the same GNN trained on ChEMBL alone.

This means data is the IP, even when it is not formally patented. Proprietary datasets, maintained as trade secrets under the Defend Trade Secrets Act in the United States and equivalent national laws elsewhere, can protect competitive advantage as long as reasonable measures are taken to prevent disclosure. The implication for M&A is that the data governance practices of an acquisition target matter as much as its patent portfolio.

Publicly Available Data Resources and Their Limitations

ChEMBL 34 (released 2024) contains approximately 2.4 million bioactive compounds with curated activity data across roughly 14,000 targets. PubChem contains over 115 million unique chemical structures, though most lack associated biological activity data. The Protein Data Bank holds over 220,000 experimentally solved protein structures. These resources are essential for pre-training foundation models, but they share a systematic gap. The proprietary screening data from pharma’s in-house HTS campaigns, which covers much of the most commercially valuable chemical space, is absent.

ExCAPE-DB, ZINC15, and similar compiled databases partially address coverage but do not substitute for high-quality, annotated, proprietary data. The companies that will win at AI-driven discovery are those that generate vast amounts of their own experimental data (as Recursion does with its automated imaging platform) rather than those that apply more sophisticated algorithms to the same public training sets.

Data Sharing Consortia: Strategic Participation

Pre-competitive consortia such as ATOM (Accelerating Therapeutics for Opportunities in Medicine), the Pistoia Alliance, and MELLODDY give participants the benefit of data beyond their own. MELLODDY used a federated learning model. A central server coordinates training across data held locally at each participating company, and only model updates leave each company’s firewall; the raw data never does. Because the raw data is never pooled, this is the emerging template for data sharing that preserves trade secret protection.

Participation in these consortia is strategically rational for companies with large proprietary datasets: they gain access to the collective pool while retaining the unique competitive advantage of their own data in their own training runs.
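The federated pattern described above can be made concrete with federated averaging (FedAvg), the canonical federated learning scheme. Each site runs a few gradient steps on its own data, and the coordinating server averages only the resulting parameters, weighted by site size. This is a minimal sketch under simplifying assumptions: a linear least-squares model, plain Python lists, and illustrative function names. It is not a description of MELLODDY’s production system.

```python
from typing import List, Tuple

Dataset = List[Tuple[List[float], float]]  # (feature vector, measured activity) pairs


def local_update(weights: List[float], data: Dataset, lr: float = 0.1, epochs: int = 5) -> List[float]:
    """Gradient descent on mean squared error, run entirely inside one company's firewall."""
    w = list(weights)  # copy so the server's global weights are not mutated
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for j, xj in enumerate(x):
                grad[j] += 2 * err * xj / len(data)
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def federated_average(global_w: List[float], sites: List[Dataset],
                      rounds: int = 50, lr: float = 0.1, epochs: int = 5) -> List[float]:
    """Each round, every site trains locally and returns only its weight vector.
    The server averages those vectors, weighted by site size. No (x, y) records leave a site."""
    total = sum(len(d) for d in sites)
    for _ in range(rounds):
        local = [local_update(global_w, data, lr, epochs) for data in sites]
        global_w = [
            sum(len(d) * w[j] for d, w in zip(sites, local)) / total
            for j in range(len(global_w))
        ]
    return global_w
```

In this toy setting, all sites share the same underlying relationship, so the averaged model converges to it even though no site ever sees another site’s records. Real consortia must also handle heterogeneous assays and privacy attacks on the shared updates. This sketch does not address either.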

Key Takeaways: Section 8

Evaluate AI drug discovery platforms by asking three questions: How much proprietary experimental data do they generate per quarter? How is that data curated, annotated, and maintained? What trade secret protections govern access? The answers determine whether an AI platform is a replicable algorithm applied to public data, or a defensible data-driven intelligence advantage.

9. The Patent Landscape for AI-Discovered Drugs

Patentability of AI-Generated Molecules: Jurisdiction by Jurisdiction

United States: The USPTO’s February 2024 inventorship guidance confirms that AI-assisted inventions are not categorically unpatentable. However, at least one named human inventor must have made a ‘significant contribution’ to each claim. Some contributions can qualify: framing a specific problem so as to elicit a particular solution from the AI system, or making a significant contribution to an AI-generated output, for example by substantively modifying it. Others do not: merely recognizing that an AI-generated output is interesting, or simply reducing it to practice. Organizations should document precisely which decisions a human scientist made in the design and selection process.

European Patent Office: The EPO requires a natural person as inventor. AI-assisted inventions are grantable, but the inventive step analysis applies human standards: would the claimed compound be obvious to a ‘person skilled in the art’ given the prior art? If an AI’s generative process produces a compound that any ordinarily skilled medicinal chemist, using known methods, would have arrived at from the prior art, the EPO will reject it for lack of inventive step. Novel scaffolds genuinely outside the prior art are more defensible.

China: The CNIPA has accepted applications disclosing AI-assisted discoveries but requires human inventors. China’s pharmaceutical patent system has additional complexity for biologics and formulation patents, and the CNIPA has been more aggressive than the USPTO or EPO in restricting evergreening claims.

Japan: The JPO’s position is broadly similar to the EPO: human inventors required, inventive step assessed on standard grounds, AI assistance documented but not disqualifying.
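One way to make the human-contribution documentation described above routine is an append-only decision log kept alongside the AI workflow. The sketch below is hypothetical: the class and field names are illustrative, not a USPTO-prescribed format. Each entry records which scientist took which action on which AI-generated compound and why. Each entry also stores a SHA-256 hash of the previous one, so a later edit to an earlier record is detectable. Whether a logged decision amounts to a significant contribution remains a legal judgment; the log only preserves the evidence.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class DecisionRecord:
    scientist: str     # named human decision-maker
    compound_id: str   # hypothetical internal ID of the AI-generated output
    action: str        # e.g. "selected", "modified", "rejected"
    rationale: str     # the human reasoning behind the decision
    timestamp: str     # UTC ISO-8601 time the decision was logged


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class DecisionLog:
    """Append-only, hash-chained log of human decisions on AI outputs (illustrative)."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, scientist: str, compound_id: str, action: str, rationale: str) -> dict:
        record = DecisionRecord(scientist, compound_id, action, rationale,
                                datetime.now(timezone.utc).isoformat())
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"record": asdict(record), "prev_hash": prev_hash}
        body["hash"] = _digest(body)  # hash covers the record and the previous hash
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            expected = _digest({"record": entry["record"], "prev_hash": entry["prev_hash"]})
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True
```

A production system would also need access control and durable storage. The hash chain only makes tampering evident; it does not prevent it.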

The Orange Book and AI-Discovered Drug Exclusivity

A drug approved by the FDA receives five years of new chemical entity (NCE) exclusivity under the Hatch-Waxman Act regardless of whether it was discovered by AI or conventional methods. NCE exclusivity blocks generic ANDA filers from relying on the NDA holder’s safety and efficacy data for five years, and prevents Paragraph IV filings for four years. This applies to AI-discovered small molecules in the same way as any other small molecule NDA.

The Orange Book also lists patents submitted by the NDA holder: composition of matter, formulation, and method of treatment patents. A generic filer may submit a Paragraph IV certification challenging the validity or infringement of a listed patent. If the NDA holder sues within 45 days of receiving notice, FDA approval of the generic is automatically stayed for 30 months. This mechanism is unchanged by the AI origin of the underlying molecule.

For AI-discovered drugs, the composition of matter patent is often the most important Orange Book listing and the most likely target of Paragraph IV challenge. Generic filers will scrutinize the prosecution history for admissions about the inventive step (particularly relevant if the applicant argued around prior art by citing AI as providing an unexpected result) and for inventorship allegations that could create inequitable conduct defenses.
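The exclusivity and stay mechanics above reduce to date arithmetic. The sketch below is a simplified model under stated assumptions, and its function names are hypothetical. From an approval date, it computes the four-year point at which a Paragraph IV ANDA may be submitted and the five-year NCE expiry. Given the date the NDA holder received Paragraph IV notice, it also computes the end of the 30-month stay, assuming suit was filed within 45 days. It applies the statutory rule that a stay arising from a Paragraph IV filing made in the fifth year of NCE exclusivity runs until at least seven and a half years after approval. That condition is approximated by checking whether notice arrived before NCE expiry. The model ignores pediatric exclusivity, court-shortened stays, and other exclusivities.

```python
import calendar
from datetime import date
from typing import Dict, Optional


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the target month's length."""
    total = d.month - 1 + months
    year, month = d.year + total // 12, total % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def nce_timeline(approval: date, piv_notice: Optional[date] = None) -> Dict[str, date]:
    """Simplified NCE / Paragraph IV timeline (illustrative only; no input validation)."""
    timeline = {
        "earliest_piv_anda": add_months(approval, 48),    # Paragraph IV ANDA allowed after 4 years
        "nce_exclusivity_end": add_months(approval, 60),  # 5-year NCE exclusivity
    }
    if piv_notice is not None:
        # 30-month stay counted from the NDA holder's receipt of notice (assumes timely suit)
        stay_end = add_months(piv_notice, 30)
        # Approximation: notice during the NCE period stands in for a suit on a fifth-year filing,
        # whose stay runs until at least 7.5 years (90 months) after approval
        if piv_notice < timeline["nce_exclusivity_end"]:
            stay_end = max(stay_end, add_months(approval, 90))
        timeline["stay_end"] = stay_end
    return timeline
```

For example, with a hypothetical approval on 15 March 2026 and Paragraph IV notice received on 1 April 2030, the 7.5-year floor applies. The stay therefore ends on 15 September 2033 rather than 30 months after notice.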

Method of Treatment Patents and AI-Identified Indications

AI platforms that identify novel therapeutic uses for approved drugs (repurposing) generate method of treatment patent opportunities. These claims cover the use of a known compound for a new indication and do not require a new composition of matter. Like other utility patents, they run 20 years from filing. They can therefore extend commercial protection on a molecule whose composition of matter patent has expired.

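Method of treatment patents are of limited use against generic manufacturers in the United States. A generic can carve the patented indication out of its label through a section viii statement. Generics are also generally not liable when physicians prescribe the product off-label. Method-of-use patents still carry market positioning value: they are listed in the Orange Book, and a generic that files a Paragraph IV certification against them, rather than carving out the use, can face a 30-month stay. Separately, the new indication can earn regulatory exclusivities that operate independently of patents. These include three years of new clinical investigation exclusivity and seven years of orphan drug exclusivity if the indication qualifies. An additional six months of pediatric exclusivity may also be available. New Chemical Entity exclusivity is not available, because the active moiety has already been approved.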

Key Takeaways: Section 9

The patent strategy for AI-discovered drugs requires the same rigorous prosecution approach as any pharmaceutical IP program, with three additional requirements: documented human inventive contribution at each claim-covered decision point, freedom-to-operate analysis against the generative model’s training data and outputs, and jurisdiction-specific inventorship compliance. Organizations that integrate IP strategy into the AI discovery workflow from the first computational run will have fewer prosecution problems than those that treat IP as a post-discovery task.

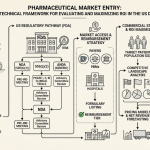

10. Regulatory Strategy: How FDA and EMA Are Treating AI-Derived Evidence

FDA’s Framework for AI in Drug Development

The FDA published its Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD) Action Plan in 2021. It has since extended its guidance to AI in drug discovery and development through a series of discussion papers and draft guidance documents, culminating in the 2024 ‘Artificial Intelligence in Drug Development’ framework. Key principles from that framework:

The FDA expects sponsors to characterize the purpose, training data, validation methodology, and intended applicability domain of any AI model used to generate data submitted in an IND, NDA, or BLA. This is documented through a model card or model risk management file, analogous to the process validation documentation required for manufacturing.
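The sketch below shows one minimal version of the documentation described above. It assumes a fingerprint-similarity definition of applicability domain. The hypothetical `ModelCard` records purpose, training data, and validation methodology. Its `in_domain` check flags query molecules whose nearest training-set neighbor falls below a Tanimoto similarity threshold. Fingerprints are represented as sets of "on" bit indices, so the example needs no cheminformatics library; in practice they would come from a toolkit such as RDKit. The 0.4 threshold is illustrative, not a regulatory value. Nearest-neighbor similarity is only one of several common applicability-domain definitions.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List

Fingerprint = FrozenSet[int]  # indices of "on" bits in a binary molecular fingerprint


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) similarity of two bit sets; two empty sets count as identical."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


@dataclass
class ModelCard:
    purpose: str          # the decision the model supports
    training_data: str    # provenance of the training set
    validation: str       # validation methodology, e.g. split strategy and metrics
    training_fingerprints: List[Fingerprint] = field(default_factory=list)
    min_similarity: float = 0.4  # illustrative applicability-domain threshold

    def in_domain(self, query: Fingerprint) -> bool:
        """True if the query's nearest training neighbor meets the similarity threshold."""
        if not self.training_fingerprints:
            return False
        nearest = max(tanimoto(query, fp) for fp in self.training_fingerprints)
        return nearest >= self.min_similarity
```

Predictions for out-of-domain molecules are not necessarily wrong. Flagging them lets the submission state which predictions fall outside the characterized domain and were therefore backed by additional experimental data.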

Sponsors should prespecify how AI-derived predictions were used in decision-making, whether prospectively (the AI result was the primary basis for a go/no-go decision before the experiment) or post-hoc (the AI result was generated after experimental data confirmed the outcome). Post-hoc AI analysis is treated more skeptically, similar to post-hoc statistical analyses in clinical trials.
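The prospective/post-hoc distinction turns on ordering: was the prediction frozen before the experimental result existed? The following minimal sketch assumes each use of a model is logged with two timestamps; the record type and field names are hypothetical. It classifies each use and tallies the results for a submission summary. Timestamps alone do not show that the prediction actually drove a go/no-go decision. That still needs the documented decision record.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, Iterable


@dataclass(frozen=True)
class PredictionUse:
    model_version: str               # hypothetical identifier of the model release
    prediction_locked_at: datetime   # when the AI output was frozen in the decision record
    experiment_readout_at: datetime  # when the relevant experimental result became available


def classify_use(use: PredictionUse) -> str:
    """Prospective only if the prediction was locked before the experimental data existed."""
    if use.prediction_locked_at < use.experiment_readout_at:
        return "prospective"
    return "post-hoc"


def summarize(uses: Iterable[PredictionUse]) -> Dict[str, int]:
    """Count prospective vs post-hoc uses across a program."""
    return dict(Counter(classify_use(u) for u in uses))
```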

The FDA’s Office of Pharmaceutical Quality has separately issued guidance on AI/ML in pharmaceutical manufacturing and quality control, covering real-time release testing, continuous process verification, and AI-based process analytical technology (PAT). This is a distinct regulatory framework from the drug discovery application and is not covered here.

EMA’s Position

The EMA’s reflection paper on AI in medicines development, published in draft in 2023, takes a broadly similar approach to the FDA’s but places greater emphasis on model transparency and human oversight. The EMA has been more explicit that regulators are unlikely to accept black-box predictions without interpretable outputs unless extensive supplementary experimental validation accompanies them.

The EMA’s scientific advice process, available to sponsors developing novel medicines, offers an opportunity to align AI data packages with the CHMP’s expectations before submitting a marketing authorisation application (MAA). Organizations developing first-in-class AI-discovered molecules should seek scientific advice early. The CHMP’s view on what experimental data must supplement AI predictions will vary by target, mechanism, and therapeutic area.

ICH Harmonization

The International Council for Harmonisation (ICH) is developing AI-specific technical guidelines, expected to be finalized in 2025 and 2026, that will harmonize AI data requirements across the U.S., EU, Japan, Canada, and China. Until those guidelines are finalized, sponsors operating globally face jurisdiction-specific requirements that may conflict. The practical approach is to design the AI data package to the most stringent requirements across target markets, then document jurisdiction-specific variations.

Key Takeaways: Section 10

Regulatory acceptance of AI-derived data is real but conditional. The conditions are documentation-intensive and require investment in model governance infrastructure before IND filing. Organizations that treat AI model validation as a regulatory requirement equivalent to analytical method validation will avoid the questions that slow down submissions.

11. Investment Strategy for Analysts

Valuing AI Drug Discovery Companies

Standard DCF models for drug discovery companies assign probability-weighted NPVs to pipeline assets at each clinical stage. AI drug discovery companies require three modifications to this framework.

First, the early clinical probability of success (POS) for AI-designed molecules appears higher than the industry average, based on available data. BCG’s 2023 analysis of AI-discovered molecules in clinical development found a Phase I POS of approximately 80 to 90%, compared to the industry-wide Phase I POS of roughly 63%. If this holds in larger samples, AI programs entering the clinic warrant a smaller risk discount than conventional assets at the same stage. The sample of AI-derived molecules with Phase II and Phase III data is still small, so caution is warranted in extrapolating the early-stage signal.

Second, platform value is genuinely separable from pipeline value in AI-driven companies, unlike most conventional biotech. A platform that generates two to four IND-enabling candidates per year has value independent of any specific compound’s clinical outcome. Platform value should be assessed on throughput (candidates per year), cycle time (months from target hypothesis to IND-enabling study completion), and capital efficiency (spend per candidate). Compare these metrics across companies and over time within a company to assess whether the platform is improving.

Third, partnership revenue should be read as a validation signal. A milestone payment from a top-20 pharma company following internal validation of an AI platform is strong evidence that the platform works. Look at the structure of the deal. Discovery-stage payments (pre-IND) are worth less than development milestones (post-IND), which in turn are worth less than regulatory milestones (NDA filing, approval). A deal structured primarily around discovery milestones suggests the partner is buying optionality, not paying for demonstrated results.
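The first modification can be illustrated with a risk-adjusted NPV (rNPV) calculation, the probability-weighted form of the DCF models described above. In this sketch, each stage’s cost is weighted by the probability of reaching that stage. The launch value is weighted by the probability of passing every stage. The Phase II and Phase III success rates (31% and 58%) are the ones cited in Section 1. The two Phase I rates are 63% for conventional programs and 85%, the midpoint of the 80 to 90% AI figure. The stage costs, durations, launch value, and 10% discount rate are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Stage:
    name: str
    cost: float   # $M, assumed to be spent at the start of the stage
    years: float  # stage duration
    pos: float    # probability of passing this stage


def rnpv(stages: List[Stage], launch_value: float, rate: float) -> float:
    """Risk-adjusted NPV in $M: costs weighted by P(reaching the stage),
    launch value weighted by P(passing all stages), everything discounted to today."""
    value, p_reach, t = 0.0, 1.0, 0.0
    for stage in stages:
        value -= p_reach * stage.cost / (1 + rate) ** t
        p_reach *= stage.pos
        t += stage.years
    return value + p_reach * launch_value / (1 + rate) ** t


def clinical_stages(phase1_pos: float) -> List[Stage]:
    # Hypothetical costs and durations; Phase II/III POS from this document
    return [
        Stage("Phase I", cost=25, years=1.5, pos=phase1_pos),
        Stage("Phase II", cost=60, years=2.5, pos=0.31),
        Stage("Phase III", cost=250, years=3.0, pos=0.58),
    ]


conventional = rnpv(clinical_stages(0.63), launch_value=3000, rate=0.10)
ai_designed = rnpv(clinical_stages(0.85), launch_value=3000, rate=0.10)
```

Every downstream cost and the launch value are scaled by the Phase I pass rate. Raising that rate therefore increases rNPV whenever the value remaining after Phase I is positive, which is the mechanism behind a smaller risk discount.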

Portfolio Construction

A portfolio approach to AI drug discovery exposure should balance pure-play AI biotech (higher risk, higher leverage to sector validation events like Insilico’s IPF readout) against big pharma with AI-enhanced pipelines (lower risk, lower AI-specific upside, but protection from individual program failure). NVIDIA and cloud infrastructure providers (Microsoft Azure, Amazon Web Services, Google Cloud) offer indirect exposure to the compute demand that scales with AI drug discovery adoption.

For the pure-play category, the companies with the strongest fundamental positions are those where the AI platform has generated a candidate currently in Phase II or later. That clinical data provides the first real-world validation of whether AI-designed molecules work in humans at scale.

Key Takeaways: Section 11

The single most important near-term catalyst for the AI drug discovery investment thesis is Phase II efficacy data from AI-designed molecules. ISM001-055 (Insilico/IPF), Recursion’s oncology candidates, and similar programs represent binary readout events that will reshape valuations across the sector regardless of which compound reports first.

12. The Autonomous Discovery Roadmap: 2025 to 2032

Near Term: 2025 to 2027

The primary developments in this window will be clinical validation. Multiple AI-designed molecules will report Phase II data, providing the first statistically meaningful signal on whether AI-derived POS improvements hold in later-stage clinical development. The regulatory frameworks for AI data packages will be finalized through ICH guidelines, reducing uncertainty for global submissions.

In the technology stack, multimodal foundation models that integrate genomics, proteomics, imaging, and clinical data in a single latent representation will become standard tools for target identification. AlphaFold3’s extension to full proteome-scale interaction mapping will enable systematic identification of cryptic binding sites across the entire druggable proteome, opening new target classes.

Medium Term: 2027 to 2030

Closed-loop autonomous discovery laboratories, where AI-directed robotic platforms design, synthesize, test, and analyze compounds in continuous cycles without human intervention except for safety oversight, will move from proof-of-concept to routine operation at leading companies. The DMTA cycle, currently running four to six weeks with significant human coordination overhead, will compress to days in these systems.
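The closed-loop cycle described above can be sketched as an active-learning loop. This toy version rests on loud simplifying assumptions. Each candidate is reduced to a single hypothetical numeric descriptor. The "assay" is a caller-supplied function standing in for robotic synthesis and testing. The surrogate model is a nearest-neighbor estimate plus a small exploration bonus for candidates far from anything already tested. Real systems use far richer models and acquisition functions.

```python
import random
from typing import Callable, Dict, List, Tuple


def predict(results: Dict[float, float], x: float, explore: float) -> float:
    """Analyze: estimate activity from the nearest tested candidate, plus a distance bonus."""
    nearest = min(results, key=lambda t: abs(t - x))
    return results[nearest] + explore * abs(nearest - x)


def closed_loop_dmta(
    candidates: List[float],
    assay: Callable[[float], float],
    cycles: int,
    batch: int,
    explore: float = 0.1,
    seed: int = 0,
) -> Tuple[Dict[float, float], List[float]]:
    rng = random.Random(seed)
    # Seed round: assay a random batch so the surrogate has data to learn from
    results = {x: assay(x) for x in rng.sample(candidates, batch)}
    best_per_cycle = [max(results.values())]
    for _ in range(cycles):
        untested = [x for x in candidates if x not in results]
        if not untested:
            break
        # Design: rank untested candidates by surrogate score and keep the top batch
        chosen = sorted(untested, key=lambda x: predict(results, x, explore), reverse=True)[:batch]
        # Make + Test: the simulated assay stands in for synthesis and screening
        for x in chosen:
            results[x] = assay(x)
        best_per_cycle.append(max(results.values()))
    return results, best_per_cycle
```

For example, `closed_loop_dmta([float(i) for i in range(100)], lambda x: -(x - 73.0) ** 2, cycles=5, batch=4)` runs a seed round plus five design-test cycles of four compounds each. Each pass through the loop is one DMTA iteration, so the compression the text anticipates is the wall-clock time per iteration falling from weeks to days.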

Large language models trained on clinical trial data, electronic health records, and genomic patient databases will begin generating clinical trial designs optimized for enriched patient populations, increasing Phase II and Phase III POS for AI-discovered compounds with companion diagnostic selection strategies.

Long Term: 2030 to 2032

Quantum computing offers the largest potential acceleration for molecular simulation, particularly for accurate modeling of enzyme catalysis and ligand binding thermodynamics. These are problems where current classical methods provide approximations rather than exact solutions. Quantum hardware able to simulate drug-sized molecules accurately is not expected before 2030 at the earliest, whether it runs variational algorithms such as the variational quantum eigensolver (VQE) or fault-tolerant methods. More realistic estimates put meaningful pharmaceutical quantum advantage closer to 2035. The current quantum computing landscape in pharma is pre-commercial: useful for benchmarking and algorithm development, not for production drug design.

Digital twin models of individual patients, populated with multi-omic data, longitudinal EHR data, and real-world evidence, will enable in silico clinical trial simulations that can predict safety signals before first-in-human dosing. This represents the long-term convergence of AI in drug discovery and AI in clinical development into a single integrated pipeline.

Key Takeaways: Section 12

The 2025 to 2027 window is primarily a clinical validation phase for the drug discovery AI thesis. Organizations should be investing now in the data infrastructure and regulatory documentation practices that will allow them to move AI-designed molecules through later clinical stages efficiently. The companies that have Phase II data by 2027 will have a substantial competitive advantage in attracting partnerships, capital, and regulatory familiarity.

13. Key Takeaways by Segment

Across the full scope of AI in drug discovery, the following points represent the highest-confidence conclusions supported by published evidence and market data as of mid-2025.

The structural failure rate of traditional drug discovery is a mathematical problem, not a talent problem. The drug-like chemical search space is intractably large for human-led screening, and AI’s primary value is probabilistic navigation of that space at scales no human team can match.

The algorithm is increasingly commoditized; the training data is not. Organizations investing in proprietary data generation, whether through high-content imaging platforms, automated synthesis, or curated clinical databases, are building IP moats that will compound over time regardless of which generative architecture is dominant.

Inventorship documentation is a first-order IP risk for AI-discovered drugs. The USPTO’s ‘significant contribution’ standard requires process redesign, not just legal review. Research organizations need to capture human judgment at every selection point in their AI workflows as the decisions are made, well before patent filing and IND submission, rather than reconstructing it afterward.

Phase II data from AI-designed molecules is the most important near-term validation event for the sector. The sample size of AI-designed molecules with Phase II and Phase III readouts is currently too small to draw statistically robust conclusions about POS improvements. That will change between 2025 and 2027.

Big pharma’s AI partnership deals are R&D productivity bets, not direct IP acquisitions. Analysts should evaluate them by the structure of milestone payments (later-stage milestones signal greater partner confidence) and by whether the partnerships give the AI company access to proprietary clinical data that improves its platform.

Regulatory acceptance of AI-derived data is real and growing, but conditional on documentation quality. Model governance infrastructure, including training data provenance, validation methodology, and applicability domain characterization, needs to be built before IND filing.

14. Frequently Asked Questions

Q: How quickly can AI actually reduce drug development timelines?

The clearest demonstrated examples show pre-clinical timelines compressed by roughly two and a half to five times. Insilico’s ISM001-055 went from AI-generated target hypothesis to IND filing in approximately 30 months. The conventional industry average for the same pre-clinical stages is six to eight years (72 to 96 months), so this is a compression of roughly 2.4 to 3.2 times. Exscientia’s DSP-1181 reached Phase I in roughly 12 months from project start. These are the fastest examples. The average AI-assisted program is faster than the conventional average, but not by these extreme multiples.

Q: What clinical success rates do AI-designed drugs show?

BCG’s 2023 analysis found that AI-derived molecules had Phase I success rates of 80 to 90%, compared to the industry-wide average of approximately 63%. This is a meaningful improvement if it holds at scale. Phase II and Phase III data is still limited, as most AI-designed molecules are in early clinical development. The full-pipeline POS improvement is not yet quantifiable from the available data.

Q: Are big pharmaceutical companies actually deploying AI internally, or just buying partnerships?

Both. Major companies including Pfizer, AstraZeneca, Roche, and Amgen have substantial internal AI teams and platforms. Pfizer used AI in the nirmatrelvir program. AstraZeneca developed REINVENT, a generative molecular design platform, in-house and has released it as open source. At the same time, the Isomorphic Labs deals with Lilly and Novartis show that even companies with large internal AI teams purchase external platform access for specific capabilities.

Q: What are the main IP risks specific to AI-discovered drugs?

There are four primary risks. First, inventorship challenges if documentation of human contribution to the claimed invention is inadequate. Second, enablement challenges if a patent application claims a broad genus of AI-generated compounds but discloses only a handful of specific examples. Third, prior art challenges if the AI’s generative model was trained on data that itself anticipated the claimed compound. Fourth, trade secret misappropriation risk if training data included third-party proprietary information without appropriate licensing.

Q: How should an R&D lead evaluate an AI drug discovery vendor?

Five criteria matter most. First, what proprietary data does the vendor generate internally versus relying on public databases? Second, what is the vendor’s track record in the specific target class relevant to your program? Third, what is the cycle time for a full DMTA iteration? Fourth, what is the vendor’s regulatory track record for data packages submitted in INDs? Fifth, what are the IP ownership terms for any compounds generated using the vendor’s platform?

Q: How does AI affect the biosimilar competitive landscape?

AI can accelerate biosimilar development by predicting critical quality attributes (CQAs) of reference biologics from published characterization data. This helps prioritize the analytical characterization work needed to demonstrate biosimilarity. AI can also improve cell line engineering for biologic manufacturing and predict immunogenicity risk from sequence data. For innovators, AI-driven structure-based design can generate next-generation biologic variants with superior efficacy or reduced immunogenicity. Those variants support new composition of matter patents that extend market protection beyond the reference product’s exclusivity period.

Sources: Boston Consulting Group, ‘AI in Biopharma R&D: Delivering on the Promise’ (2023); FDA, ‘Artificial Intelligence in Drug Development’ (2024); Insilico Medicine clinical and corporate disclosures (2022-2025); Nature Reviews Drug Discovery, AI in drug discovery review (2023); MIT News, halicin discovery paper (2020); Exscientia press releases (2020-2024); McKinsey & Company, ‘Scaling AI in Pharmaceutical Industry’ (2024); BenevolentAI investor reports (2024); Recursion Pharmaceuticals corporate disclosures (2024); Lantern Pharma RADR platform documentation (2024); DeepMind/Isomorphic Labs press releases (2024); Open Targets genetic evidence analysis (2023); USPTO AI inventorship guidance (February 2024); EMA reflection paper on AI in medicines development (2023).