I. Why Patent Landscaping Is a Core IP Asset, Not a Research Function

Patent landscaping has a credibility problem in many pharma organizations. It gets treated as a library exercise, something a junior IP associate runs before a filing or an acquisition. That framing is expensive. The companies that have operationalized landscaping as a continuous intelligence function, not a project-based deliverable, consistently demonstrate shorter time-to-insight on competitor R&D pivots, faster Freedom-to-Operate (FTO) clearance cycles, and stronger Paragraph IV defense records.

The distinction matters financially. A well-maintained patent landscape on a lead program does three things a standard patent search cannot. First, it maps the technological ‘white space’ around your compound class, revealing differentiation opportunities before competitors file. Second, it benchmarks your portfolio density against peer companies in the same technology cluster, giving IP committees an objective basis for patent prosecution investment decisions. Third, it surfaces prior art signals early enough to inform claim construction rather than respond to examiner rejections.

In pharma specifically, that third function carries the highest dollar value. A composition-of-matter patent on a blockbuster new molecular entity (NME) can represent anywhere from $2 billion to $15 billion in net present value (NPV) depending on the indication, competitive set, and loss-of-exclusivity (LOE) trajectory. If a landscaping gap lets a Paragraph IV filer challenge that patent on grounds that prior art existed and was missed, the cost of that miss dwarfs any investment in landscaping infrastructure.

The global patent analytics market reflects this shift in priority. Valued at $1.13 billion in 2024, it is projected to reach $3.03 billion by 2032 at a 13.3% CAGR, driven primarily by pharmaceutical and healthcare patent activity. That growth rate is not the result of curiosity about AI tools. It is the result of organizations waking up to the financial risk of operating with inadequate IP intelligence.

Key Takeaways: Framing

- Patent landscaping generates direct IP asset value, not just research support.

- The NPV protected by rigorous landscaping on a single blockbuster drug can exceed $5 billion over the patent lifecycle.

- The patent analytics market is growing at 13.3% CAGR, with pharma as the primary demand driver.

- Organizations that treat landscaping as a continuous intelligence function outperform those running it as a project-based service.

II. The Scale Problem: Why Manual Methods Collapsed

Over 150 million patent documents exist globally. Annual filing volumes grow 3-5% per year, with pharma and biotech accounting for a disproportionate share of that growth. The USPTO received over 650,000 applications in fiscal 2023. The EPO processed more than 190,000. CNIPA, China’s patent office, now receives more applications annually than the USPTO and EPO combined.

A senior patent analyst working full-time can meaningfully review perhaps 500-800 patent documents per week with the depth required for strategic landscaping. That includes reading the abstract, claims, and specification; assessing IPC/CPC classification accuracy; mapping assignee relationships; and tagging for technology cluster assignment. At that throughput, covering a technology space with 50,000 relevant patents takes a single analyst roughly 60 to 100 weeks, or up to two years. By the time the landscape is complete, the competitive picture has shifted.
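The throughput arithmetic behind that estimate is easy to check. The snippet below is a minimal sketch: the function name and the 52-week working year are assumptions made for illustration, while the corpus size and weekly rates come from the paragraph above.

```python
def weeks_to_review(corpus_size: int, docs_per_week: int) -> float:
    """Weeks one analyst needs to review a corpus at a steady weekly rate."""
    if docs_per_week <= 0:
        raise ValueError("docs_per_week must be positive")
    return corpus_size / docs_per_week


CORPUS_SIZE = 50_000  # relevant patents in the technology space (figure from the text)

for rate in (500, 800):  # the weekly throughput range quoted above
    weeks = weeks_to_review(CORPUS_SIZE, rate)
    # A 52-week working year is an assumption; real calendars have fewer.
    print(f"{rate} docs/week -> {weeks:.1f} weeks (~{weeks / 52:.1f} years)")
```

At 500 documents per week the review takes 100 weeks; at 800 it takes 62.5 weeks. Adding analysts shortens the calendar time but not the total effort.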

The manual approach also suffers from classification drift. IPC codes are assigned by patent office examiners who apply jurisdiction-specific norms and personal judgment. The same compound class, say a PCSK9 inhibitor delivered by a specific antibody format, may receive substantially different IPC designations at the USPTO versus the EPO versus CNIPA. A manual search relying on classification codes alone routinely misses 20-30% of relevant prior art in cross-jurisdictional landscapes.

The legal exposure from incomplete landscapes is well-documented in Abbreviated New Drug Application (ANDA) litigation. A recurring pattern in Paragraph IV challenges is that the challenger’s landscape work was more comprehensive than the originator’s, allowing the generic filer to identify prior art the originator had either missed or failed to proactively distinguish. The Orange Book-listed patents survive or fall partly on the quality of the IP intelligence work that preceded the filing.

Key Takeaways: The Scale Problem

- Human-only review throughput cannot cover active technology spaces within actionable timeframes.

- Cross-jurisdictional IPC drift causes manual, code-based searches to miss 20-30% of relevant prior art.

- Paragraph IV challengers frequently out-landscape originators on their own compound classes.

- Global filing volume growth makes the scale problem structural, not cyclical.

III. What Technology Clusters Actually Are (and Where Automated Tools Fail)

A technology cluster in patent analytics is a set of patent families grouped by shared technical domain, established through algorithmic analysis of titles, abstracts, IPC codes, CPC codes, and increasingly, full-text embeddings. The mechanics matter because different clustering methods produce fundamentally different strategic pictures.

PatentSight, Derwent Innovation, and Patsnap each use proprietary clustering engines. Their outputs diverge in ways that matter for decision-making. PatentSight’s classification platform, for instance, applies separate algorithms for clustering and for cluster naming specifically to prevent the naming function from biasing group formation. That is good practice. If the algorithm names a cluster first and then pulls patents toward that label, you get an artifact, not a technology map.

Current automated systems typically cap cluster outputs at sixteen distinct groups. Patent families that do not fit the top sixteen get assigned to a ‘miscellaneous’ bucket. In a technology space as heterogeneous as CRISPR therapeutics, that ceiling is analytically useless. CRISPR applications span base editing, prime editing, epigenetic editing, in vivo delivery, ex vivo cell engineering, guide RNA optimization, and anti-CRISPR defense mechanisms. A sixteen-cluster cap collapses that landscape into categories that cannot support serious IP strategy.
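The effect of a hard cluster cap can be shown on a toy example. This is a sketch only: `cap_clusters`, the patent IDs, and the cap of two are hypothetical, and commercial tools do not necessarily implement their cap this way.

```python
from collections import Counter


def cap_clusters(assignments: dict[str, str], max_clusters: int) -> dict[str, str]:
    """Keep the max_clusters largest clusters; relabel everything else 'miscellaneous'."""
    sizes = Counter(assignments.values())
    keep = {name for name, _ in sizes.most_common(max_clusters)}
    return {pid: (c if c in keep else "miscellaneous") for pid, c in assignments.items()}


# Hypothetical patent IDs mapped to the cluster a finer-grained analysis would assign.
ASSIGNMENTS = {
    "P1": "base editing", "P2": "base editing", "P3": "base editing",
    "P4": "in vivo delivery", "P5": "in vivo delivery",
    "P6": "prime editing",
    "P7": "epigenetic editing",
}

capped = cap_clusters(ASSIGNMENTS, max_clusters=2)
print(capped)
```

With a cap of two, the prime editing and epigenetic editing patents both land in the miscellaneous bucket, even though they are distinct technologies. The same thing happens at a cap of sixteen in a space with more than sixteen real groupings.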

The weighting problem compounds this. Classification codes are more useful for small-molecule chemistry than for biologics or software-adjacent drug delivery systems. An automated tool applying uniform weights across IPC codes will systematically mis-cluster computational protein design patents (which often receive A61K or C12N codes interchangeably depending on the examiner) and platform technology patents that span multiple therapeutic modalities.

None of this means automated clustering is worthless. It means automated clustering produces a first draft, not a finished landscape. The question is what process converts that draft into reliable strategic intelligence. That is where the Human-in-the-Loop framework earns its value.

Key Takeaways: Technology Clusters

- Automated clustering tools typically max out at sixteen groups, which is inadequate for multi-modal technology spaces like CRISPR or mRNA.

- Naming and clustering algorithms should run separately to prevent label-to-group bias.

- IPC/CPC code weighting fails systematically for biologics and platform technologies.

- All automated cluster outputs require expert review before they support strategic decisions.

IV. AI Capabilities That Changed the Game: NLP, LLMs, and Semantic Search

Keyword-based patent search has a ceiling that most IP professionals hit within their first year. The terminology problem is fundamental: two patents describing functionally identical inventions can share zero keywords if one uses ‘adeno-associated viral vector’ and the other uses ‘recombinant parvoviral construct.’ A search built on Medical Subject Headings or IPC codes catches some of this, but not all.

Natural Language Processing (NLP) changed the geometry of the problem. Embedding-based semantic search converts patent text into high-dimensional vectors and identifies conceptual proximity regardless of shared vocabulary. The practical result is that a search for prior art on a novel lipid nanoparticle (LNP) formulation now surfaces patents using terminology from polymer science, cosmetic emulsification, and nucleic acid delivery that a keyword search would miss entirely. In mRNA therapeutic development, this cross-domain reach matters enormously because many foundational LNP patents originated in the siRNA and antisense oligonucleotide literature, not the mRNA literature.
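The difference between keyword overlap and vector proximity can be illustrated in a few lines. This is a sketch under a strong assumption: the three-dimensional vectors below are hand-made stand-ins for embeddings that a trained language model would produce, and the function names are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_overlap(a: str, b: str) -> set:
    """Words the two phrases share, which is what a keyword search relies on."""
    return set(a.lower().split()) & set(b.lower().split())


# Hand-made vectors standing in for model embeddings (hypothetical values).
EMBEDDINGS = {
    "adeno-associated viral vector": [0.90, 0.10, 0.00],
    "recombinant parvoviral construct": [0.85, 0.20, 0.05],
    "extended-release tablet coating": [0.05, 0.10, 0.95],
}

query = "adeno-associated viral vector"
candidates = [text for text in EMBEDDINGS if text != query]
ranked = sorted(candidates, key=lambda t: cosine(EMBEDDINGS[query], EMBEDDINGS[t]), reverse=True)

print(ranked[0])                          # closest phrase by vector similarity
print(keyword_overlap(query, ranked[0]))  # shared keywords between the two phrases
```

The two viral-vector phrases share no keywords, yet they sit close together in the vector space, so the semantic ranking surfaces the match a keyword query would miss.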

Large Language Models (LLMs) extended NLP capabilities into structured analysis. An LLM trained on patent corpora can interpret the functional relationship between claim elements, distinguish independent from dependent claim contributions, and generate claim charts comparing a target patent to a set of prior art references. Patlytics, Solve Intelligence, and IPRally have each built LLM-native workflows around these tasks. The speed differential versus human-only analysis is not marginal: tasks that required four to six weeks of associate time can now produce a first-pass output in hours.

Generative AI adds a documentation layer on top of the analytical layer. When a search identifies a relevant prior art cluster, generative AI can produce a structured comparison memo: claim mapping, assignee history, prosecution history summary, and preliminary non-obviousness analysis. Patent counsel still reviews and validates every output, but the raw analytical work that supports that review arrives pre-structured.

Machine learning classification compounds these gains over time. Every human correction to a misclassified patent, every cluster reassignment made by a domain expert, feeds back into the model’s training data. A classification system that starts with 70% accuracy on a specialized technology space can reach 90%+ accuracy after six months of active human feedback. That learning trajectory is the core economic case for human-supervised AI versus static databases.

Key Takeaways: AI Capabilities

- Semantic/embedding-based search eliminates the cross-domain terminology gap that defeats keyword search.

- LLMs can generate preliminary claim charts and prosecution history summaries in hours rather than weeks.

- Active learning means the system improves with every human correction, creating compounding accuracy gains.

- The speed advantage over human-only analysis is structural: first-pass outputs in hours, not weeks.

V. The Hard Limits of Purely Algorithmic Patent Analysis

Every major AI patent analytics vendor qualifies its tools’ outputs. The qualifications are not marketing disclaimers. They reflect genuine architectural limits that matter for the specific tasks pharma IP teams need AI to perform.

Claim construction cannot be automated. Claim scope in US patent law turns on the prosecution history, the specification’s definition of claim terms, the doctrine of equivalents, and jurisdiction-specific eligibility rules. The USPTO’s Alice/Mayo framework for method claims, the EPO’s technical effect requirement, and the distinctions between product-by-process and functional claims across jurisdictions each require legal judgment that no current AI system can apply reliably. An AI tool can tell you that two patents share conceptual domain. It cannot tell you whether one reads on the other under the correct claim construction standard.

The black box problem undermines accountability. When a patent landscape is used to support an FTO opinion, a Board of Directors presentation on pipeline risk, or a Paragraph IV challenge strategy, the conclusions must be defensible. Defensible means the analyst can explain not just what the data shows but why the AI grouped certain patents together, what signals drove cluster assignment, and where the model’s confidence was low. Deep learning models with millions of parameters cannot provide that explanation. Explainability is not an optional feature for IP analysis in regulated industries: it is a professional responsibility requirement.

Training data quality determines output quality. AI models trained on patent data inherit the biases and gaps of their training corpora. If the corpus skews toward US and EP patents (the most commercially available in machine-readable form), the model will systematically underweight CNIPA, KIPO, and JPO filings. For companies competing in oncology or infectious disease where Chinese and Korean originator filings matter, that gap creates material analytical risk. No current commercial platform fully resolves this. Responsible use of AI patent tools requires knowing where each tool’s coverage ends.

Weak signal detection requires human pattern recognition. The most strategically valuable intelligence in a patent landscape is often the faintest signal: a competitor filing a single provisional in an adjacent technology space, a series of continuation-in-part (CIP) filings around a formulation approach, or an academic institution’s patent activity correlating with a publicly known industry collaboration. These patterns require a domain expert who knows what is happening in the field scientifically, commercially, and competitively. An AI model trained on historical patterns cannot identify significance in a signal it has not seen before.

Key Takeaways: AI Limits

- Claim construction requires legal judgment that current AI cannot apply under jurisdiction-specific standards.

- Black box outputs are not defensible in FTO opinions, litigation support, or Board-level risk presentations.

- Geographic training data gaps systematically underweight CNIPA and other non-US/EP filings.

- Weak signal detection in patent data is a human cognitive task, not a pattern-matching problem.

VI. Human-in-the-Loop (HITL): Architecture, Workflows, and Active Learning

Human-in-the-Loop (HITL) machine learning is a specific architectural choice: human expertise is embedded in the model training cycle, not layered on top as a post-hoc review. The distinction determines what kind of improvement is possible.

In a post-hoc review model, a human analyst reviews AI outputs, corrects errors, and delivers a final product. The AI does not learn from those corrections. The next time it encounters a similar patent, it makes the same error. In a HITL architecture, human corrections are structured as training data and fed back into the model. Each correction makes the next prediction more accurate. The system is designed to get better, not just to get reviewed.

Active Learning for Patent Classification

Active learning is the most efficient form of HITL for patent classification at scale. The standard workflow runs as follows.

The AI model runs an initial classification pass over the full patent corpus, assigning each document to a candidate technology cluster and generating a confidence score for each assignment. High-confidence assignments are accepted provisionally. Low-confidence assignments, typically those near cluster boundaries or involving cross-domain technologies, are flagged for human review. Domain experts review only the flagged subset, perhaps 5-10% of the full corpus, rather than the entire dataset. Their corrections are labeled and used to retrain the model. The retrained model reclassifies the full corpus, generates a new set of flagged documents, and the cycle repeats.

This process achieves two things simultaneously: it concentrates expert time where it has the highest marginal impact, and it produces training data that is specifically calibrated to the edge cases where the model struggles. IPRally’s active learning classifier, for instance, can be trained to a company’s proprietary taxonomy rather than the standard IPC/CPC schema. A specialty pharma company with a portfolio spanning small molecules, peptide conjugates, and targeted delivery systems can build a classifier that reflects its own technical categories rather than forcing its IP into general-purpose classification bins.
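The classify, flag, review, retrain cycle described above can be sketched with a toy nearest-centroid classifier. Everything here is an illustrative assumption rather than any vendor's implementation: the two-dimensional feature vectors, the confidence formula, the 0.7 threshold, and the `EXPERT_LABELS` lookup standing in for a human reviewer.

```python
import math
from collections import defaultdict


def centroids(labeled):
    """Mean feature vector per cluster, from {patent_id: (vector, cluster)}."""
    groups = defaultdict(list)
    for vec, cluster in labeled.values():
        groups[cluster].append(vec)
    return {c: tuple(sum(col) / len(vecs) for col in zip(*vecs)) for c, vecs in groups.items()}


def predict(vec, cents):
    """Return (cluster, confidence). Needs at least two clusters.

    Confidence is 0.5 on a cluster boundary and 1.0 exactly on a centroid.
    """
    ranked = sorted((math.dist(vec, c), name) for name, c in cents.items())
    (d1, best), (d2, _) = ranked[0], ranked[1]
    confidence = 1.0 if d1 + d2 == 0 else d2 / (d1 + d2)
    return best, confidence


def active_learning_round(corpus, labeled, ask_expert, threshold=0.7):
    """Retrain on current labels, flag low-confidence documents, collect expert labels."""
    cents = centroids(labeled)  # "retraining" in this toy model
    flagged = []
    for pid, vec in corpus.items():
        if pid in labeled:
            continue
        _, confidence = predict(vec, cents)
        if confidence < threshold:
            flagged.append(pid)
    for pid in flagged:
        labeled[pid] = (corpus[pid], ask_expert(pid))  # correction becomes training data
    return flagged


# Hypothetical corpus: patent ID -> 2-d feature vector.
CORPUS = {
    "P1": (0.0, 0.0), "P2": (0.1, 0.2), "P3": (1.0, 1.0),
    "P4": (0.9, 1.1), "P5": (0.5, 0.6), "P6": (0.45, 0.5),
}
labeled = {
    "P1": (CORPUS["P1"], "small molecule"),
    "P3": (CORPUS["P3"], "peptide conjugate"),
}
EXPERT_LABELS = {"P5": "peptide conjugate", "P6": "small molecule"}  # stand-in for a reviewer

first_round = active_learning_round(CORPUS, labeled, EXPERT_LABELS.__getitem__)
second_round = active_learning_round(CORPUS, labeled, EXPERT_LABELS.__getitem__)
print(first_round, second_round)
```

In the first round only the two boundary documents are routed to the expert; after their labels are folded in, the second round flags nothing. A production system would swap the centroid model for a real classifier, but the loop structure is the same.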

Expert Cluster Refinement

The active learning loop handles classification. Cluster refinement is a separate, higher-order task. After initial clustering, domain experts review cluster compositions for four common failure modes.

The first is spurious splitting, where a single coherent technology (e.g., all patents on PD-1/PD-L1 checkpoint inhibitors) gets divided into multiple clusters because different assignees use different vocabulary. The expert merges these back to a single cluster and labels it with terminology that reflects actual technical usage.

The second is inappropriate merging, where patents from distinct technical approaches (e.g., small-molecule KRAS G12C inhibitors and allosteric KRAS degraders) land in the same cluster because they share classification codes. The expert splits these and creates distinct cluster entries.

The third is ‘miscellaneous bucket’ rescue. As noted, automated tools typically cap cluster counts. The miscellaneous bucket contains a mix of patents that did not meet the similarity threshold for any of the top clusters. Expert review frequently reveals that the miscellaneous bucket contains a coherent emerging technology cluster, one that is too new or too cross-domain to have generated enough volume for automated detection. Identifying that cluster early is often the highest-value output of the entire landscaping exercise.

The fourth is terminology alignment. AI-generated cluster names rely on text frequency, not technical judgment. The most frequent terms in a cluster’s patent text may be process terms rather than inventive terms. An expert renames clusters to reflect the core technical contribution, not the most common words.
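The first two corrections, merging a spurious split and splitting an inappropriate merge, are simple set operations once clusters are represented as sets of patent IDs. The sketch below assumes that representation; the function names, cluster labels, and IDs are hypothetical.

```python
def merge_clusters(clusters, names, new_name):
    """Fix a spurious split: union several clusters under one expert-chosen label."""
    merged = set().union(*(clusters[n] for n in names))
    result = {k: v for k, v in clusters.items() if k not in names}
    result[new_name] = merged
    return result


def split_cluster(clusters, name, parts):
    """Fix an inappropriate merge: parts maps new label -> IDs and must partition the original."""
    original = clusters[name]
    combined = set().union(*parts.values())
    if combined != original or sum(len(p) for p in parts.values()) != len(original):
        raise ValueError("parts must partition the original cluster exactly")
    result = {k: v for k, v in clusters.items() if k != name}
    result.update({label: set(ids) for label, ids in parts.items()})
    return result


# Hypothetical automated output with one spurious split and one bad merge.
clusters = {
    "PD-1 antibody": {"P1", "P2"},
    "programmed death-1 binder": {"P3"},
    "KRAS": {"P4", "P5"},
}

refined = merge_clusters(
    clusters, ["PD-1 antibody", "programmed death-1 binder"], "PD-1/PD-L1 checkpoint inhibitors"
)
refined = split_cluster(
    refined, "KRAS", {"KRAS G12C inhibitors": {"P4"}, "KRAS degraders": {"P5"}}
)
print(sorted(refined))
```

The partition check in `split_cluster` is a deliberate design choice: it prevents an expert edit from silently dropping or double-counting a patent. Renaming a cluster is the degenerate case of merging a single cluster under a new label.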

Workflow Integration

HITL processes need to be embedded in IP management workflows, not run as standalone analytical projects. In practice, this means the patent landscaping system connects to the organization’s docketing software, flags new competitor filings in monitored technology clusters on a weekly basis, and routes those flags to the appropriate domain expert or technology-watcher for review. The expert’s classification decision, accept the assignment or reassign to a different cluster, feeds directly into the model’s training data without requiring a separate data entry step.
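A minimal sketch of that routing and capture step follows. It does not model any real docketing system; the `Flag` record, the `WATCHERS` mapping, the fallback queue name, and the training-log format are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flag:
    patent_id: str
    proposed_cluster: str  # cluster the model assigned to the new filing


# Hypothetical mapping from monitored cluster to the responsible technology-watcher.
WATCHERS = {"LNP delivery": "expert_a", "base editing": "expert_b"}


def route(flags, watchers, fallback="ip_triage"):
    """Group flagged filings into one review queue per responsible expert."""
    queues = {}
    for flag in flags:
        queues.setdefault(watchers.get(flag.proposed_cluster, fallback), []).append(flag)
    return queues


def record_decision(flag, final_cluster, training_log):
    """Store the expert's accept-or-reassign decision as a labeled training example."""
    training_log.append({
        "patent_id": flag.patent_id,
        "label": final_cluster,
        "was_corrected": final_cluster != flag.proposed_cluster,
    })


weekly_flags = [
    Flag("P101", "LNP delivery"),
    Flag("P102", "base editing"),
    Flag("P103", "unmonitored cluster"),
]
queues = route(weekly_flags, WATCHERS)

training_log = []
record_decision(weekly_flags[0], "LNP delivery", training_log)   # expert accepts
record_decision(weekly_flags[1], "prime editing", training_log)  # expert reassigns
print(queues.keys(), training_log)
```

Because the decision is written to the training log in the same step as the review, accepted assignments and corrections both become labeled data without a separate entry task.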

Parexel has published data on HITL implementation in pharmacovigilance case processing that demonstrates the model: AI processes 85-90% of cases automatically; humans handle the 10-15% flagged for complexity or ambiguity; expert decisions are captured as labeled data and used for continuous model improvement. The same architecture transfers directly to patent classification workflows.

Key Takeaways: HITL Architecture

- HITL is an architectural choice, not a review process. Human corrections must feed model retraining to generate compounding accuracy gains.

- Active learning concentrates expert time on the 5-10% of patents where human judgment adds the most value.

- Cluster refinement catches four systematic failure modes: spurious splits, inappropriate merges, miscellaneous bucket rescue, and terminology misalignment.

- HITL workflows should integrate with docketing software to automate competitive monitoring as a continuous function.

VII. Technology Roadmap: Biologics, mRNA, and CRISPR Patent Cluster Mapping

Three therapeutic technology classes illustrate how human-supervised AI patent landscaping generates different strategic outputs depending on the IP architecture of the field. Each has a distinct patent topology that requires different clustering and analytical approaches.

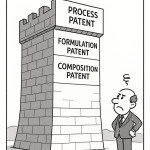

Biologics: The Biosimilar Interchangeability Battleground

The biologic patent landscape is layered by design. A typical biologics portfolio consists of a composition-of-matter patent covering the molecule itself, which carries a nominal 20-year term from filing but usually a shorter effective term after approval; a set of method-of-treatment patents covering approved indications; formulation patents covering approved concentrations, excipients, and delivery devices; and process patents covering cell line, fermentation, purification, and fill-finish methods.

Each layer is a separate patent cluster in the landscape. Human-supervised AI earns its value here in two specific ways. First, it can correctly separate these layers from each other and from competitor filings in adjacent spaces, something automated clustering handles poorly because the same compound can appear across all four cluster types with different claim language. Second, it can identify the ‘thinness’ of coverage in any given layer, revealing whether a biosimilar developer can design around the formulation cluster without touching the molecule cluster.

The regulatory intersection matters for cluster valuation. Under the BPCIA’s 12-year exclusivity provision and the FDA’s interchangeability designation pathway, different layers of the biologic patent stack carry different commercial value. A landscape that maps these layers accurately gives an originator’s IP team a realistic picture of when effective market exclusivity (EME) ends for each indication, not just when the molecule patent expires. For AbbVie’s adalimumab (Humira) portfolio, for example, the composition-of-matter patent expired in 2016, but a dense thicket of formulation and device patents sustained effective exclusivity in the US market until 2023. Understanding that architecture requires cluster-level analysis, not patent-count analysis.

IP valuation framework for biologics: The core valuation input from a landscape is the EME trajectory. Analysts model EME by mapping cluster coverage dates across jurisdictions, identifying the sequence in which biosimilar entry becomes legally and technically feasible in each market, and discounting cash flows accordingly. A landscape that correctly maps the formulation cluster separately from the process cluster, and correctly identifies which formulation patents appear in the FDA’s Purple Book patent lists (the biologics counterpart to Orange Book listing) versus those that do not, produces a materially different EME model than one that treats all cluster layers as equivalent.
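The layer-by-layer mapping can be reduced to a small calculation. The sketch below uses a hypothetical product with invented expiry years, and it makes a simplifying assumption that every patent blocks entry until it expires, which ignores design-arounds, invalidation, and settlements.

```python
def layer_expiry(layers):
    """Latest expiry year within each cluster layer."""
    return {layer: max(years) for layer, years in layers.items()}


def eme_end(layers):
    """Year effective exclusivity ends if every listed patent blocks entry until expiry."""
    return max(layer_expiry(layers).values())


# Hypothetical product: expiry years per cluster layer in one jurisdiction.
LAYERS = {
    "molecule": [2030],
    "method_of_treatment": [2033, 2035],
    "formulation": [2036, 2038],
    "process": [2034],
}

nominal_end = layer_expiry(LAYERS)["molecule"]
effective_end = eme_end(LAYERS)
gap_years = effective_end - nominal_end
print(nominal_end, effective_end, gap_years)
```

Here the molecule patent expires in 2030 but the formulation layer runs to 2038, an eight-year gap that a patent-count view or a single nominal expiry date would not reveal. A fuller model would repeat the calculation per jurisdiction and per indication.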

mRNA Therapeutics: The Foundational Patent Dispute as a Landscape Lesson

The mRNA therapeutics field contains one of the most consequential patent landscape disputes of the past decade. The foundational lipid nanoparticle technology that makes mRNA delivery viable, commercialized in Moderna’s mRNA-1273 and Pfizer-BioNTech’s BNT162b2 COVID-19 vaccines, rests on a contested patent cluster that spans Moderna, Alnylam Pharmaceuticals, Arbutus Biopharma, and Genevant Sciences. The cluster dispute centers on whether Moderna’s ionizable lipid formulations infringe Arbutus’s foundational LNP patents.

An automated clustering tool would group all of these patents together in a single ‘LNP delivery’ cluster. A human-supervised analysis would correctly identify at least five distinct sub-clusters: ionizable lipid chemistry, lipid molar ratio optimization, polyethylene glycol (PEG) lipid configuration, particle size distribution control, and nucleic acid encapsulation efficiency. Each sub-cluster represents a separately contestable IP position. Moderna’s litigation defense strategy, and Arbutus’s licensing leverage, both depend on correctly characterizing which sub-clusters are covered by which company’s priority dates.

For R&D teams building in the mRNA space, a cluster analysis at this granularity reveals which technical approaches remain available, which require licensing, and which are subject to active dispute. The investment thesis for any company entering mRNA therapeutics post-2020 depends on that analysis.

mRNA technology roadmap, patent cluster perspective: LNP sub-clusters will be the central IP battleground through 2030. New entrants should build landscape analyses at sub-cluster granularity before initiating formulation work. Route-of-administration patents (inhaled, intradermal, intratumoral) are underpopulated relative to IV delivery and may represent available white space. mRNA sequence optimization and codon-optimization algorithm patents are a separate cluster with a different assignee profile, dominated by academic institutions such as MIT and Penn alongside BioNTech’s preclinical research arm.

CRISPR: The Multi-Cluster Licensing Architecture

CRISPR patent landscaping is the most analytically demanding exercise in current biotech IP. The core dispute between the Broad Institute and UC Berkeley over CRISPR-Cas9 inventorship ran for nearly a decade. That dispute was resolved, but it left behind a licensing architecture where any developer working in eukaryotic CRISPR editing must account for both estates. The Broad controls key eukaryotic application patents; Berkeley controls key foundational mechanistic patents. The licenses are separate, the royalty stacks are additive, and the sub-licensing terms vary by application.

A human-supervised AI landscape of CRISPR technologies would correctly identify at minimum the following distinct clusters: Cas9 variants (SpCas9, SaCas9, Cas9-NG), Cas12a/Cpf1 and Cas13 applications, base editing (BE3, BE4, ABE systems), prime editing, guide RNA design and delivery, anti-CRISPR regulatory mechanisms, in vivo delivery (AAV-CRISPR, LNP-CRISPR, ribonucleoprotein particle delivery), and ex vivo cell engineering applications. Each cluster has a different assignee profile, different licensing terms, and different exposure to inter partes review (IPR) challenges.

An automated tool groups these into four or five buckets. A HITL-supervised analysis resolves them into fourteen or more defensible sub-clusters, each with its own IP valuation and strategic implications. For a gene editing company in diligence, the difference between these two outputs is not merely one of analytical precision: it is a meaningful difference in deal valuation and IP risk disclosure.

Key Takeaways: Technology Roadmaps

- Biologic patent landscapes must map all four IP layers (molecule, method, formulation, process) separately to generate an accurate EME trajectory.

- mRNA LNP analysis should resolve at least five distinct sub-clusters before any formulation development decision.

- CRISPR landscape work should generate at minimum fourteen sub-clusters to support licensing strategy and deal due diligence.

- All three technology classes require human expert intervention to reach the sub-cluster granularity that supports investment-grade decisions.

VIII. IP Valuation as a Strategic Asset: Integrating Landscape Data into Financials

Patent portfolios appear on balance sheets as intangible assets under GAAP and IFRS, but the accounting treatment captures very little of their economic significance. Book value for internally developed IP is typically zero under GAAP (development costs are expensed as incurred unless strict criteria are met). Fair value is estimated in business combinations. Neither method reflects the forward-looking revenue protection function that makes a patent portfolio strategically valuable.

Patent landscape data provides inputs that the accounting treatment does not. The three most decision-relevant inputs for financial analysis are effective market exclusivity (EME) trajectory, technology cluster density relative to peers, and Paragraph IV exposure.

EME trajectory converts the patent landscape into a revenue timeline. By mapping every patent in each technology cluster, with expiration dates by jurisdiction and accounting for Patent Term Adjustment (PTA), Patent Term Extension (PTE), and any pending supplementary protection certificates (SPCs) in EU markets, the landscape produces a jurisdiction-by-jurisdiction window of exclusivity for each indication. That window feeds directly into net present value models. A 12-month extension in EME for a drug generating $3 billion in annual US revenue is worth approximately $2-2.5 billion in NPV at standard pharma discount rates, depending on how far in the future the extra year falls. The landscape work that secures or anticipates that extension is not an IP administration cost: it is a value-creation function.
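The NPV range quoted above can be reproduced with a one-line present-value formula. This is a sketch under stated assumptions: a 10% discount rate, the full $3 billion of revenue treated as the cash flow (no margin, tax, or price-erosion adjustment), and the extra year arriving two to four years from the valuation date. None of those parameters come from the text.

```python
def present_value(amount: float, rate: float, years_out: float) -> float:
    """Discount a single future cash flow back to today."""
    return amount / (1 + rate) ** years_out


ANNUAL_REVENUE_BN = 3.0  # $ billions of US revenue protected by the extra year (from the text)
DISCOUNT_RATE = 0.10     # assumed; actual rates vary by company and program

for years_out in (2, 4):  # assumed distance to the extra year of exclusivity
    value = present_value(ANNUAL_REVENUE_BN, DISCOUNT_RATE, years_out)
    print(f"extra year {years_out} years out: ${value:.2f}B")
```

Under these assumptions the extra year is worth about $2.48 billion if it falls two years out and about $2.05 billion at four years, which brackets the range in the text. A lower margin or a more distant extension pulls the figure down.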

Technology cluster density measures how defensible a portfolio position is relative to what it would take for a competitor to design around it. A molecule with a dense, internally consistent cluster across composition-of-matter, multiple method-of-treatment, and formulation patents is harder to design around than one with a thin composition-of-matter patent and no downstream protection. Density analysis quantifies this qualitative observation: counting patents per cluster layer, assessing the geographic coverage of each layer, and comparing the coverage map to the competitive set’s filing history in the same clusters.
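The counting step of a density analysis is straightforward to sketch. The record format, layer names, and sample portfolio below are hypothetical, and a real analysis would count patent families rather than individual documents and weight by claim scope.

```python
from collections import defaultdict


def cluster_density(patents):
    """Per cluster layer: number of patents and number of distinct jurisdictions covered."""
    counts = defaultdict(int)
    jurisdictions = defaultdict(set)
    for patent in patents:
        counts[patent["layer"]] += 1
        jurisdictions[patent["layer"]].add(patent["jurisdiction"])
    return {
        layer: {"patents": counts[layer], "jurisdictions": len(jurisdictions[layer])}
        for layer in counts
    }


# Hypothetical portfolio records.
PORTFOLIO = [
    {"layer": "composition_of_matter", "jurisdiction": "US"},
    {"layer": "composition_of_matter", "jurisdiction": "EP"},
    {"layer": "formulation", "jurisdiction": "US"},
    {"layer": "formulation", "jurisdiction": "US"},
    {"layer": "formulation", "jurisdiction": "JP"},
]

density = cluster_density(PORTFOLIO)
print(density)
```

Running the same function over a peer's filings in the same clusters gives the side-by-side comparison the paragraph describes. A layer that is missing entirely from the output, such as method-of-treatment here, is itself a finding.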

Paragraph IV exposure assessment uses the landscape to model which issued patents are most vulnerable to challenge. The relevant variables are priority date strength, claim breadth, prosecution history estoppel, and the volume of prior art citations in each technology cluster. A landscape-based Paragraph IV model identifies which Orange Book-listed patents are most likely to be challenged, which are most likely to survive, and which warrant design-around investment or reformulation work before the ANDAs arrive.

For biotech companies raising capital or preparing for M&A, landscape-derived IP valuation analysis has become a diligence standard. Sophisticated acquirers commission independent landscape analyses specifically to compare the target’s internal IP claims against the actual patent cluster picture. Discrepancies between management’s IP narrative and the landscape data are deal-risk indicators. Companies that maintain current, HITL-supervised landscapes are in a substantially stronger negotiating position because they can verify their own claims before a buyer does.

Key Takeaways: IP Valuation

- EME trajectory built from patent cluster analysis is the most accurate input for revenue-protection NPV modeling.

- Cluster density analysis quantifies IP defensibility in terms comparable across a competitive set.

- Paragraph IV exposure modeling from landscape data identifies which Orange Book patents are vulnerable before ANDAs are filed.

- Buyers in pharma M&A routinely commission independent landscapes to test management’s IP narrative, creating a diligence standard that favors companies with current HITL-supervised landscapes.

Investment Strategy: IP Valuation

Portfolio managers assessing pharma companies should request landscape-derived EME models rather than accepting patent-count metrics or nominal expiration dates. The gap between nominal patent expiration and effective market exclusivity can run to many years: Humira’s US exclusivity outlasted its composition-of-matter patent by roughly seven years. Companies unable to produce a cluster-level EME analysis for their lead programs are likely operating with incomplete IP intelligence, which is a risk disclosure issue.

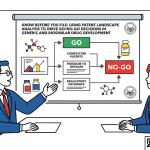

IX. Evergreening, Paragraph IV Filings, and the Lifecycle Architecture of Pharma Patents

Evergreening is the accumulation of follow-on patents around a commercial drug molecule to extend effective market exclusivity beyond the primary composition-of-matter patent’s expiration. The tactics are well-characterized, and generic drug developers spend considerable resources landscaping them. Originators spend equally considerable resources executing them.

The core evergreening techniques, and their patent cluster signatures, include the following.

Formulation patents cover improved delivery mechanisms, changed excipient profiles, extended-release or controlled-release formulations, and fixed-dose combinations. These generate a distinct formulation sub-cluster in the landscape. A generic entrant must either design around the formulation patents, accept a license, or challenge them via Paragraph IV. Because many formulation patents are Orange Book-listed (FDA lists patents that claim the approved drug, its formulation, or its method of use), they can trigger a 30-month stay of ANDA approval when the patent holder sues within 45 days of receiving a Paragraph IV notice, creating independent commercial value separate from their technical merit.

Method-of-treatment patents cover new dosing regimens, new indications, new patient populations (pediatric, geriatric, renally impaired), and new combination therapies. These are typically harder to design around than formulation patents because a generic label must avoid the patented method. They generate a method-of-treatment sub-cluster in the landscape that is often more dense than the original composition-of-matter cluster by year 10 of a drug’s commercial life.

Polymorph and salt-form patents cover specific crystalline forms and salts of the active pharmaceutical ingredient (API). These are the most contested category in Paragraph IV litigation because the prior art question (whether the claimed polymorph was obvious from the base compound) is technically complex and jurisdiction-specific. Section 3(d) of India’s Patents Act, which bars patents on new forms of known substances absent enhanced efficacy, was enacted specifically to prevent this kind of evergreening from extending effective exclusivity beyond the core compound’s protection.

Metabolite patents claim active metabolites discovered after the parent compound’s commercial launch. These are legally distinct from the parent compound and can carry independent composition-of-matter protection. The landscape analysis must correctly classify metabolite patents as a separate sub-cluster from the parent compound to avoid misrepresenting the LOE timeline.

A HITL-supervised landscape maps all of these clusters simultaneously and generates an integrated LOE model that accounts for each layer’s expiration schedule, Orange Book listing status, and estimated Paragraph IV vulnerability. That model is the correct analytical input for both originator lifecycle strategy and generic entry timing decisions.
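
A minimal sketch of how such an integrated LOE model might be computed follows. It assumes each patent layer survives challenge independently with an estimated probability, and that exclusivity runs to the latest-expiring layer that survives. The layer names, years, probabilities, and the `floor_year` parameter are hypothetical illustrations, not data for any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatentLayer:
    name: str          # cluster label, e.g. "formulation"
    expiry_year: int   # latest expiry within the cluster
    p_survives: float  # assumed probability the layer withstands challenge


def nominal_loe_year(layers):
    """Latest expiry on paper, ignoring vulnerability."""
    return max(layer.expiry_year for layer in layers)


def expected_loe_year(layers, floor_year):
    """Probability-weighted LOE year.

    Exclusivity is assumed to last until the latest-expiring layer that
    survives; if every layer falls, entry happens at `floor_year`.
    Layers are treated as independent, which is a simplification.
    """
    expected = 0.0
    p_later_layers_failed = 1.0
    for layer in sorted(layers, key=lambda l: l.expiry_year, reverse=True):
        expected += p_later_layers_failed * layer.p_survives * layer.expiry_year
        p_later_layers_failed *= 1.0 - layer.p_survives
    return expected + p_later_layers_failed * floor_year


# Hypothetical three-layer portfolio.
layers = [
    PatentLayer("composition", 2026, 0.95),
    PatentLayer("formulation", 2031, 0.50),
    PatentLayer("method-of-treatment", 2034, 0.30),
]
print(nominal_loe_year(layers))                    # 2034
print(round(expected_loe_year(layers, 2025), 1))   # 2030.1
```

In this toy portfolio the paper expiry is 2034, but the probability-weighted date is roughly four years earlier. That gap is the quantity a cluster-level model is meant to expose.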

Paragraph IV strategy is where landscape analysis connects most directly to litigation outcomes. A generic developer uses the landscape to identify which patents are most vulnerable to invalidity or non-infringement arguments, which challenges are most likely to generate a declaratory judgment rather than a 30-month stay, and which originator patents, if successfully challenged, would create the most commercially valuable first-filer exclusivity window under Hatch-Waxman.

The originator uses the same landscape in reverse: identifying which patents are most likely to be challenged, stress-testing those patents’ claim construction and prosecution history for vulnerabilities, and deciding where to invest in claim differentiation, continuation filings, or pre-emptive design-around work.

Key Takeaways: Evergreening and Paragraph IV

- Evergreening generates at minimum five distinct patent cluster types (composition, formulation, method, polymorph, metabolite), each with separate LOE implications.

- Orange Book-listed formulation and method patents carry independent commercial value from their 30-month ANDA stay trigger.

- Paragraph IV strategy for both originators and generics requires cluster-level landscape analysis, not patent-count analysis.

- HITL supervision is required to correctly classify polymorph and metabolite patents separately from the parent compound cluster.

X. Freedom-to-Operate Analysis in the HITL Era

Freedom-to-Operate (FTO) analysis determines whether a product can be made, used, or sold in a given jurisdiction without infringing a valid, enforceable patent. The standard of care for FTO analysis has risen significantly as AI tools have made it possible to search broader prior art sets more quickly. Courts and IP committees now expect FTO opinions to demonstrate comprehensive search methodology, not just thorough legal analysis of the patents identified.

HITL-supervised AI improves FTO analysis in four specific ways.

Semantic search across jurisdictions finds relevant patents that keyword and classification searches miss, particularly in the cross-domain areas (delivery technology, formulation chemistry, device design) where relevant prior art frequently originates outside the primary technology cluster. An FTO search for a novel injectable biologic that relies only on A61K classification codes can miss relevant blocking patents classified under polymer chemistry (C08) or general physical and chemical processes (B01).

Automated monitoring maintains the FTO analysis on a rolling basis. Applications in monitored technology clusters trigger review workflows as they publish, which generally happens 18 months after the earliest priority date and often well before grant, so the IP team can identify potentially blocking applications while they are still pending. That window is typically enough time to design around a problematic claim if the issue is identified early, which is rarely true once the patent issues and a product is in Phase 3.

Confidence-ranked output from AI search tools tells the reviewer which identified patents require the deepest analysis. Rather than treating every hit in a search results list equally, the AI ranks by relevance score, by claim-to-product mapping probability, and by citation network centrality (highly-cited patents in the cluster are more likely to reflect the core IP landscape). Human reviewers allocate their time according to these rankings, concentrating depth of analysis on the highest-risk patents.
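
The triage logic described above can be sketched as a weighted composite score. The three inputs mirror the ranking signals named in the text; the weights, the `Hit` structure, and the document identifiers are hypothetical and would need calibration against expert review outcomes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    publication: str
    relevance: float       # semantic relevance score, 0..1
    claim_map_prob: float  # estimated claim-to-product mapping probability, 0..1
    centrality: float      # normalized citation network centrality, 0..1


# Hypothetical weights; not taken from any specific tool.
WEIGHTS = {"relevance": 0.3, "claim_map_prob": 0.5, "centrality": 0.2}


def risk_score(hit):
    """Weighted sum of the three ranking signals."""
    return (
        WEIGHTS["relevance"] * hit.relevance
        + WEIGHTS["claim_map_prob"] * hit.claim_map_prob
        + WEIGHTS["centrality"] * hit.centrality
    )


def triage(hits, deep_review_slots):
    """Split hits into a deep-review queue and a lighter-touch remainder."""
    ranked = sorted(hits, key=risk_score, reverse=True)
    return ranked[:deep_review_slots], ranked[deep_review_slots:]


hits = [
    Hit("DOC-A", relevance=0.9, claim_map_prob=0.2, centrality=0.1),
    Hit("DOC-B", relevance=0.6, claim_map_prob=0.8, centrality=0.5),
    Hit("DOC-C", relevance=0.4, claim_map_prob=0.5, centrality=0.9),
]
deep, rest = triage(hits, deep_review_slots=2)
print([h.publication for h in deep])  # ['DOC-B', 'DOC-C']
```

Note that DOC-A has the highest raw relevance but lands last, because its claim-to-product mapping probability is low. Whether that weighting is right is a judgment the human reviewer still owns.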

Gap analysis in FTO coverage identifies jurisdictions where the search was limited by database coverage. A responsible FTO opinion in 2025 should document not just what was found but what was searched, at what database coverage, and where the search methodology’s limits create residual uncertainty. HITL workflows can automate the documentation of these coverage gaps, generating a standard ‘search scope’ section of the FTO opinion that satisfies current best-practice requirements.

Key Takeaways: FTO Analysis

- Semantic search catches cross-domain blocking patents that keyword and IPC/CPC classification searches miss.

- Automated cluster monitoring creates an early-warning window between publication (generally 18 months after priority) and grant of problematic applications.

- Confidence-ranked AI output allows human reviewers to concentrate depth of analysis on the highest-risk patent families.

- Coverage gap documentation is a professional responsibility requirement that HITL systems can automate.

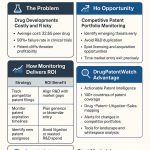

XI. Competitive Intelligence Workflows for Portfolio Managers

For portfolio managers, patent landscape data has four applications that go beyond what company disclosures typically provide.

First, filing velocity analysis. Changes in a competitor’s patent filing rate in a specific technology cluster are a leading indicator of R&D investment shifts. A biotech that files three to five patents per year in a given cluster over several years, then files fifteen in a single year, has almost certainly received meaningful preclinical data in that area. This is observable via patent monitoring months or years before the company discusses the program in investor calls or conference presentations. The limitation is that most applications are not published until 18 months after their earliest priority date (provisional applications themselves are never published), creating a lag. HITL-supervised monitoring of published applications, PCT filings, and foreign prosecution events narrows this lag to the extent current databases allow.
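
A filing velocity check of this kind might look like the sketch below. The three-year baseline and the 2.5x threshold are hypothetical parameters, and the counts are invented to mirror the three-to-five-then-fifteen pattern described above.

```python
from statistics import mean


def velocity_spikes(filings_by_year, baseline_years=3, ratio=2.5):
    """Flag years whose filing count is at least `ratio` times the mean
    of the preceding `baseline_years` years.

    Assumes `filings_by_year` has an entry for every consecutive year.
    """
    years = sorted(filings_by_year)
    flagged = []
    for i in range(baseline_years, len(years)):
        window = [filings_by_year[y] for y in years[i - baseline_years:i]]
        baseline = mean(window)
        if baseline > 0 and filings_by_year[years[i]] >= ratio * baseline:
            flagged.append(years[i])
    return flagged


# Hypothetical published-application counts for one assignee in one cluster.
counts = {2019: 3, 2020: 5, 2021: 4, 2022: 4, 2023: 15, 2024: 6}
print(velocity_spikes(counts))  # [2023]
```

Because the counts are keyed by publication year, a flagged year reflects filing decisions made roughly 18 months earlier.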

Second, white space mapping. A cluster-level landscape identifies technology areas where no company has filed more than a handful of patents despite clear scientific rationale for activity. These white spaces can indicate either that the science is genuinely underdeveloped or that companies are relying on trade secrets rather than patents (common in process chemistry). For a portfolio manager evaluating an early-stage biotech, white space positioning in a meaningful cluster is a positive signal about differentiation potential.

Third, in-licensing and partnership signal detection. Co-assignment analysis in patent clusters reveals when two companies are co-developing in a technology area before any formal partnership announcement. Academic institution filings with pharma company co-inventors are similarly predictive. These signals typically precede collaboration announcements by 12-24 months.
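
Co-assignment analysis reduces to counting how often pairs of assignees appear on the same patent family. The sketch below assumes assignee names have already been normalized; the organizations are hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_assignment_counts(families):
    """Count assignee pairs that share at least one patent family.

    `families` is a list of assignee-name sets, one set per family.
    """
    pairs = Counter()
    for assignees in families:
        # Sort so each pair is counted under one canonical ordering.
        for a, b in combinations(sorted(assignees), 2):
            pairs[(a, b)] += 1
    return pairs


families = [
    {"AlphaBio", "University X"},
    {"AlphaBio", "BetaPharma"},
    {"AlphaBio", "BetaPharma"},
    {"BetaPharma"},
]
print(co_assignment_counts(families).most_common(1))
# [(('AlphaBio', 'BetaPharma'), 2)]
```

In practice the hard part is upstream: subsidiaries, name variants, and reassignments have to be resolved before pair counts mean anything.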

Fourth, patent cliff calibration. The standard patent cliff model uses Orange Book expiration dates as the LOE trigger. The correct model uses EME, as discussed in Section VIII. For any drug where the difference between the nominal expiration date and the real EME date exceeds two years, the standard model misprices the LOE risk, either overstating it (if the company has meaningful follow-on protection) or understating it (if the follow-on cluster is weaker than it appears). Landscape-derived EME models are the basis for correct patent cliff timing.

Investment Strategy: Competitive Intelligence

Analysts covering a pharma company should cross-reference the company’s disclosed patent expiration schedule against a HITL-supervised cluster analysis of their portfolio. Discrepancies indicate either that management is correctly presenting a robust LOE defense the market has not fully priced, or that they are presenting nominal expiration dates while the actual cluster is thin. Both situations are pricing signals. For generic drug developers, the same analysis identifies which Paragraph IV opportunities have the most favorable risk/reward: dense prior art in the challenger cluster, thin formulation coverage in the Orange Book listing, and a large revenue base.

XII. Building a Human-Supervised AI Patent Program: Implementation Guide

Organizations implementing HITL patent landscaping for the first time face predictable challenges. The following roadmap reflects what deployment looks like in practice across pharma and biotech IP teams.

Phase 1: Data Infrastructure (Months 1-3). Establish access to at least two major patent databases with machine-readable full text, covering USPTO, EPO, CNIPA, KIPO, and JPO at minimum. Evaluate database coverage for your specific technology clusters by running test searches and manually auditing recall against known patents. Establish a standard data schema for patent metadata that maps across database formats. Many organizations underestimate this step. Inconsistent metadata fields between databases create downstream merging errors that corrupt cluster analyses.
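
A standard schema with per-source field mappings is one way to avoid those merging errors. In the sketch below, `vendor_a`, `vendor_b`, their field names, and their date formats are all hypothetical; real database exports will differ.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class PatentRecord:
    publication_number: str
    family_id: str
    assignee: str
    filing_date: date
    cpc_codes: tuple


# Hypothetical export layouts for two databases.
FIELD_MAPS = {
    "vendor_a": {"publication_number": "pub_no", "family_id": "fam",
                 "assignee": "applicant", "filing_date": "filed", "cpc_codes": "cpc"},
    "vendor_b": {"publication_number": "PublicationNumber", "family_id": "FamilyID",
                 "assignee": "Assignee", "filing_date": "FilingDate", "cpc_codes": "CPC"},
}
DATE_FORMATS = {"vendor_a": "%Y-%m-%d", "vendor_b": "%d.%m.%Y"}


def normalize(raw, source):
    """Map one raw export row onto the shared schema."""
    fields = FIELD_MAPS[source]
    cpc = raw[fields["cpc_codes"]]
    if isinstance(cpc, str):  # some exports pack codes into one delimited string
        cpc = [code.strip() for code in cpc.split(";") if code.strip()]
    return PatentRecord(
        publication_number=raw[fields["publication_number"]].replace(" ", "").upper(),
        family_id=str(raw[fields["family_id"]]),
        assignee=" ".join(raw[fields["assignee"]].split()).upper(),
        filing_date=datetime.strptime(raw[fields["filing_date"]],
                                      DATE_FORMATS[source]).date(),
        cpc_codes=tuple(sorted(cpc)),
    )


row_a = {"pub_no": "xx 0000001 a1", "fam": 42, "applicant": "Alpha  Bio Inc",
         "filed": "2020-03-01", "cpc": ["A61K 9/127", "C08G 63/00"]}
row_b = {"PublicationNumber": "XX0000001A1", "FamilyID": "42",
         "Assignee": "ALPHA BIO INC", "FilingDate": "01.03.2020",
         "CPC": "C08G 63/00; A61K 9/127"}
print(normalize(row_a, "vendor_a") == normalize(row_b, "vendor_b"))  # True
```

Once both sources normalize to equal records, de-duplication and family-level merging become simple equality checks rather than fuzzy matching.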

Phase 2: Baseline Landscape and Taxonomy Development (Months 2-5). Run an initial AI-generated cluster analysis on your priority technology spaces. Convene domain expert review sessions to apply the four-failure-mode cluster refinement described in Section VI. Develop an internal taxonomy that maps AI-generated clusters to your organization’s technology categories, drug program names, and competitive set definitions. This taxonomy becomes the training schema for your active learning classifier.

Phase 3: Classifier Training and Validation (Months 4-8). Use the HITL active learning cycle to train a custom classifier on your taxonomy. The target accuracy for pharmaceutical technology classification should be 85%+ at the sub-cluster level before you rely on the classifier for strategic decisions. Validate by running the trained classifier against a held-out set of patents that human experts have already classified independently. Document accuracy metrics by technology cluster.
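
The held-out validation step can be sketched as a per-cluster comparison of expert labels against classifier output. The metric below is the share of expert-labeled patents in each sub-cluster that the classifier placed correctly (per-cluster recall), which is one reasonable reading of "accuracy by technology cluster"; the cluster names are hypothetical.

```python
from collections import defaultdict


def recall_by_cluster(expert_labels, predicted_labels):
    """Fraction of each expert-assigned cluster that the classifier matched."""
    if len(expert_labels) != len(predicted_labels):
        raise ValueError("label lists must be the same length")
    totals = defaultdict(int)
    correct = defaultdict(int)
    for gold, predicted in zip(expert_labels, predicted_labels):
        totals[gold] += 1
        if gold == predicted:
            correct[gold] += 1
    return {cluster: correct[cluster] / totals[cluster] for cluster in totals}


def clusters_below_target(scores, target=0.85):
    """Clusters that miss the target and need more labeled examples."""
    return sorted(c for c, score in scores.items() if score < target)


expert = ["lipid"] * 4 + ["polymer"] * 4
model = ["lipid"] * 4 + ["polymer", "polymer", "lipid", "lipid"]
scores = recall_by_cluster(expert, model)
print(scores)                         # {'lipid': 1.0, 'polymer': 0.5}
print(clusters_below_target(scores))  # ['polymer']
```

Overall accuracy here is 75%, but reporting by cluster shows the shortfall sits entirely in one sub-cluster, which is where the next round of expert labeling should go.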

Phase 4: Workflow Integration (Months 6-12). Connect the classification system to your patent monitoring feed. Configure automated alerts for new filings in priority clusters, assignee-specific monitoring for your key competitive set, and Paragraph IV filing alerts for all Orange Book-listed patents in your portfolio. Route alerts to technology watchers with clear escalation criteria: what triggers an immediate IP committee notification versus a weekly digest.

Phase 5: Continuous Improvement (Ongoing). Establish a regular cadence of classifier retraining on accumulated human feedback data, at minimum quarterly. Run annual full landscape refreshes on priority technology spaces. Track classification accuracy metrics over time to verify the learning trajectory. The compounding accuracy gains that make HITL economically compelling only materialize if the retraining cadence is maintained.

Talent requirements: the program requires IP professionals with domain expertise in your technology areas, sufficient AI literacy to evaluate and correct model outputs, and enough process discipline to maintain the labeling quality that the active learning cycle depends on. Labeling inconsistency is the primary cause of HITL program failure. If different experts classify the same patent into different sub-clusters, the model learns noise rather than signal. Establishing clear classification criteria, with worked examples for every sub-cluster boundary case, is not optional overhead: it is the prerequisite for a functional program.
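
Labeling consistency can be measured before it damages the model. One common statistic is Cohen's kappa, which corrects raw agreement between two reviewers for the agreement expected by chance. Its use here is an illustration, not something the workflow above prescribes, and the labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two reviewers on the same patents."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each reviewer labeled at random with their own rates.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:  # both reviewers used a single identical label
        return 1.0
    return (observed - expected) / (1.0 - expected)


reviewer_1 = ["salt", "salt", "polymorph", "polymorph"]
reviewer_2 = ["salt", "polymorph", "polymorph", "polymorph"]
print(cohens_kappa(reviewer_1, reviewer_2))  # 0.5
```

The two reviewers agree on three of four patents, yet kappa is only 0.5 because half of that agreement would be expected by chance. Tracking this per sub-cluster boundary shows where the written classification criteria need more worked examples.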

Key Takeaways: Implementation

- Data infrastructure (multi-database, standardized metadata) is the most commonly underinvested phase.

- Target 85%+ sub-cluster classification accuracy before using the classifier for strategic decisions.

- Annual full landscape refreshes plus continuous monitoring is the correct operational cadence.

- Labeling inconsistency between experts is the primary failure mode. Classification criteria documentation and training are non-negotiable.

XIII. Explainable AI (XAI): The Trust Architecture That Makes HITL Defensible

Explainable AI (XAI) is the set of methods and technical approaches that make an AI system’s decision-making process interpretable to human reviewers. In patent analytics, XAI is the answer to the black box accountability problem described in Section V.

Two XAI approaches are most directly applicable to patent classification and clustering. The first is SHAP (Shapley Additive Explanations), a method that assigns each input feature (in patent classification, these are typically n-gram features from the text, IPC codes, and citation network metrics) a contribution score for a specific prediction. When the classifier assigns a patent to the ‘ionizable lipid LNP’ sub-cluster, a SHAP-equipped system can report which features drove that assignment: which claim terms contributed most, which IPC codes were most predictive, which co-citation relationships were most influential. A human reviewer can then confirm or correct the assignment based on that explanation, and the correction carries more training signal than a binary right/wrong label.
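
To show what a feature contribution score is, the sketch below computes exact Shapley values for a toy scoring function by enumerating every feature coalition. This is the definition that SHAP approximates, not the SHAP library itself, and brute-force enumeration is only feasible for a handful of features. The scoring function, its weights, and the three features are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(features, value):
    """Exact Shapley values: weighted average marginal contribution of each
    feature over all coalitions of the other features."""
    n = len(features)
    contributions = {}
    for feature in features:
        others = [f for f in features if f != feature]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = frozenset(subset)
                total += weight * (value(coalition | {feature}) - value(coalition))
        contributions[feature] = total
    return contributions


LIPID = "claim term: ionizable lipid"
CPC = "CPC: A61K 9/127"
CITE = "cites core LNP family"


def lnp_score(present):
    """Toy stand-in for a classifier's score for the LNP sub-cluster."""
    score = 0.10
    if LIPID in present:
        score += 0.50
    if CPC in present:
        score += 0.20
    if LIPID in present and CITE in present:  # interaction between two features
        score += 0.10
    return score


for name, phi in shapley_values([LIPID, CPC, CITE], lnp_score).items():
    print(f"{name}: {phi:+.2f}")
```

The three contributions sum to 0.80, the difference between the score with all features present (0.90) and with none (0.10), and the interaction term is split evenly between the two features that produce it. That even split is exactly the kind of behavior a reviewer needs to understand before reading a contribution score as causal.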

The second is attention visualization in transformer-based models. Transformer architectures, which underlie most current LLM-based patent analysis tools, generate attention weights that indicate which tokens in the input text the model weighted most heavily for its output. A patent professional can inspect these attention maps to verify that the model was attending to technically meaningful claim language rather than boilerplate preamble.

Neither approach solves the interpretability problem entirely. SHAP values can be misleading when input features are correlated (which they frequently are in patent text, where technical terms appear together in consistent grammatical structures). Attention maps are imperfect proxies for what the model ‘knows’ about the relationship between concepts. Responsible deployment of XAI in patent analytics means documenting these limitations alongside the explanations.

For IP teams preparing FTO opinions, landscape reports used in Board presentations, or patent validity analyses for litigation support, XAI outputs are not just analytically useful. They are documentation. The explanation of how the AI reached a conclusion, and the human expert’s assessment of whether that explanation is technically sound, constitutes part of the professional work product. That documentation standard is already developing in jurisdictions where AI-assisted legal work product is subject to disclosure rules.

Key Takeaways: XAI

- SHAP-equipped classifiers generate feature contribution scores that make individual patent assignments reviewable and correctable.

- Attention visualization in transformer models allows patent professionals to verify that classifications are driven by technically meaningful claim language.

- XAI outputs function as documentation in FTO opinions and litigation support work product.

- Current XAI methods have limitations, particularly with correlated input features; responsible use requires documenting those limits alongside the explanations.

XIV. Investment Strategy: How Patent Cluster Data Drives Deal Sourcing and Due Diligence

Patent landscape analysis is a standard component of pharmaceutical M&A due diligence. The following discussion is aimed at institutional investors and deal teams who commission or interpret this analysis.

Deal sourcing from white space analysis. Technology clusters with significant scientific activity but thin patent coverage represent potential early-stage acquisition targets. A cluster where academic institutions hold the foundational patents and no commercial company has filed more than a handful of applications is a potential in-licensing opportunity. A cluster where a single small biotech holds disproportionate coverage relative to the commercial landscape is a potential acquisition target before the technology is widely recognized. Both patterns are visible in landscape data months to years before they appear in analyst coverage.

Valuation adjustments from EME modeling. Standard pharma company valuations use nominal patent expiration dates as the revenue cliff trigger. As discussed in Section VIII, nominal expiration and EME can diverge significantly. In the 2023 US biosimilar adalimumab launch, multiple biosimilar entrants had been technically ready to enter the market years before effective entry was feasible. The cost of that delay was measurable in hundreds of millions in foregone revenues for biosimilar manufacturers. On the originator side, AbbVie’s ability to maintain US pricing power through 2023 on a molecule with a composition-of-matter patent expiring in 2016 represents extraordinary IP lifecycle management. Deal models that miss this dynamic misvalue assets in both directions.

IP risk in clinical-stage acquisitions. The most common IP diligence error in clinical-stage acquisitions is treating a clean FTO opinion as sufficient validation of IP position. FTO confirms the ability to operate; it does not confirm the ability to exclude. A target company might have clear FTO but hold only a thin or vulnerable composition-of-matter patent, meaning that upon achieving market approval, a well-funded generic developer could mount a successful Paragraph IV challenge and enter the market within 30 months. Landscape-based cluster density analysis quantifies this exclusion risk independently of FTO status.

Market entry timing for generics and biosimilars investors. For investors in generic and biosimilar developers, the most valuable output of a patent landscape analysis is a jurisdiction-by-jurisdiction market entry timeline for a specific target. This requires mapping every patent in the originator’s cluster by jurisdiction and expiration date, identifying which patents are litigation-viable versus design-aroundable, and modeling the sequence of events that determines actual first entry: Paragraph IV filings and 30-month stays for small-molecule generics, the BPCIA patent exchange for biosimilars, and IPR proceedings and potential settlements in both cases. This analysis is routinely commissioned by well-capitalized generic and biosimilar developers and, increasingly, by their investors.

Key Takeaways: Investment Strategy

- White space cluster analysis surfaces acquisition and in-licensing targets before commercial recognition.

- EME-based valuation models produce materially different outputs than nominal expiration date models, particularly for biologics with multi-layer portfolio protection.

- FTO clearance is necessary but not sufficient: cluster density analysis is required to assess exclusion risk separately from infringement risk.

- Biosimilar entry timing analysis requires a full cluster-level landscape, not just a review of listed patent expiration dates.

XV. Key Takeaways by Segment

For IP Teams and Patent Counsel

Patent landscaping at cluster-level granularity is the correct unit of analysis for FTO, lifecycle strategy, and Paragraph IV defense. Automated tools generate first drafts; HITL refinement generates the actionable intelligence. The 16-cluster ceiling in most automated tools is inadequate for complex technology spaces. Build a custom taxonomy using active learning against your organization’s internal classification criteria. Maintain classifier retraining on a quarterly cadence.

For R&D Leads and Portfolio Managers

Technology cluster analysis provides earlier competitive signals than any other publicly available data source. Filing velocity changes in a competitor’s priority clusters are observable months before conference disclosures. White space identification in cluster maps reveals genuine differentiation opportunity, not just freedom to operate. Invest in HITL landscape infrastructure before a program reaches Phase 2: the cost of correcting IP position at late clinical stage is an order of magnitude higher than the cost of getting it right in discovery.

For Institutional Investors and Deal Teams

EME-derived revenue models are materially more accurate than nominal expiration date models. Commission HITL-supervised landscape analyses as a standard diligence component, not an optional add-on. Understand that a company’s disclosed IP narrative and the actual cluster-level picture can diverge significantly. Cluster density analysis quantifies exclusion risk independently of FTO status, which is the correct measure of IP defensibility for investment purposes.

For Business Development and Licensing Teams

Patent cluster analysis identifies licensing opportunities from three directions simultaneously: gaps in your own portfolio where in-licensing would add coverage, white spaces where competitors have not filed but the technology exists, and co-assignment patterns that signal undisclosed partnerships before they are announced. Landscape-derived IP valuation analysis, particularly EME modeling, is the appropriate input for royalty rate and milestone structure negotiations.

XVI. Frequently Asked Questions

What is the difference between a patent landscape and a patent search?

A patent search retrieves documents matching specific parameters. A patent landscape analyzes a set of retrieved documents to identify technology clusters, competitive positioning, filing trends, white spaces, and IP coverage gaps. The landscape is an analytical output built on top of search results, not the search itself. A search answers ‘what exists’; a landscape answers ‘what it means.’

How many technology clusters should a pharmaceutical patent landscape contain?

The correct number is determined by the technology space, not by tool limitations. A landscape of cardiovascular small molecule drugs might require eight to twelve clusters. A landscape of CRISPR therapeutic applications might require fourteen to twenty. The 16-cluster ceiling in automated tools is an artifact of computational constraint, not analytical design. HITL refinement should produce as many clusters as the technology architecture requires.

What does Human-in-the-Loop mean in practice for patent analysis?

In HITL patent analysis, human domain experts are embedded in the model training cycle, not just the output review process. Their corrections to AI-generated patent classifications and cluster assignments are used as labeled training data to retrain the model. This distinguishes HITL from post-hoc quality review, in which human corrections improve the current output but do not improve the model’s future performance.
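
One way the training cycle decides which patents to put in front of an expert is margin sampling: route the documents where the classifier's top two cluster probabilities are closest. This selection rule is an assumption for illustration, since HITL programs can use other criteria, and the patent IDs and probabilities are hypothetical.

```python
def select_for_review(probabilities, budget):
    """Return the `budget` patent IDs with the smallest gap between the
    classifier's two most likely clusters (margin sampling)."""

    def margin(distribution):
        top = sorted(distribution.values(), reverse=True)
        second = top[1] if len(top) > 1 else 0.0
        return top[0] - second

    ranked = sorted(probabilities, key=lambda pid: margin(probabilities[pid]))
    return ranked[:budget]


predicted = {
    "P1": {"lipid": 0.90, "polymer": 0.10},  # confident, low review value
    "P2": {"lipid": 0.55, "polymer": 0.45},  # near the cluster boundary
    "P3": {"lipid": 0.60, "polymer": 0.40},
}
print(select_for_review(predicted, budget=2))  # ['P2', 'P3']
```

The expert's corrected labels for the selected patents then go back into the training set, which is the step that separates HITL from post-hoc review.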

How does AI patent analysis handle non-English patents?

Major platforms support machine translation for Chinese, Japanese, Korean, German, French, and other major filing languages. The quality of semantic analysis on translated text is lower than on English text because translation errors propagate through the embedding layer. Platforms that perform embedding on the source language before translation and then align cross-lingual embeddings produce more accurate cross-jurisdictional cluster analyses. This is a meaningful differentiator between platforms for organizations with significant exposure to CNIPA and KIPO filings.

What is the correct unit of analysis: patent documents or patent families?

Patent families. A patent family groups all documents claiming priority to the same initial filing across jurisdictions. Analyzing at the document level overcounts coverage (one invention filed in 15 countries counts as 15 separate patents) and distorts competitive landscape analysis by inflating the apparent portfolio size of companies with aggressive international filing strategies. Cluster analysis should be performed at the family level, with jurisdiction coverage assessed as a secondary metric.
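
The overcounting effect is easy to demonstrate. The sketch below groups documents by family and reports both counts per assignee; the assignees, family IDs, and jurisdictions are hypothetical.

```python
from collections import defaultdict


def portfolio_summary(documents):
    """Summarize (assignee, family_id, jurisdiction) rows per assignee."""
    doc_counts = defaultdict(int)
    families = defaultdict(lambda: defaultdict(set))
    for assignee, family_id, jurisdiction in documents:
        doc_counts[assignee] += 1
        families[assignee][family_id].add(jurisdiction)
    return {
        assignee: {
            "documents": doc_counts[assignee],
            "families": len(fams),
            # Secondary metric: average jurisdictions covered per family.
            "avg_jurisdictions": sum(len(j) for j in fams.values()) / len(fams),
        }
        for assignee, fams in families.items()
    }


docs = [
    ("AlphaBio", "F1", "US"), ("AlphaBio", "F1", "EP"), ("AlphaBio", "F1", "JP"),
    ("BetaPharma", "F2", "US"), ("BetaPharma", "F3", "US"),
]
summary = portfolio_summary(docs)
print(summary["AlphaBio"])    # {'documents': 3, 'families': 1, 'avg_jurisdictions': 3.0}
print(summary["BetaPharma"])  # {'documents': 2, 'families': 2, 'avg_jurisdictions': 1.0}
```

By document count AlphaBio looks larger; by family count BetaPharma holds twice as many distinct inventions.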

What is Paragraph IV and why does it matter for patent landscaping?

A Paragraph IV certification is a declaration by an ANDA filer that a listed Orange Book patent is invalid, unenforceable, or will not be infringed by the generic product. Under Hatch-Waxman, submitting an ANDA with a Paragraph IV certification is treated as an act of patent infringement, and a 30-month stay of ANDA approval follows if the originator sues within 45 days of receiving notice of the certification. Patent landscapes directly inform Paragraph IV strategy: for generic developers, by identifying the most vulnerable listed patents; for originators, by identifying which listed patents need the most vigorous defense or continued investment in claim differentiation.
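
The two timing figures above translate into simple date arithmetic. This sketch assumes both periods run from the date the originator receives notice of the certification, and it ignores court-ordered changes to the stay and any interaction with regulatory exclusivities. The function names and the example date are hypothetical, and the output is a planning aid, not a legal calculation.

```python
import calendar
from datetime import date, timedelta


def add_months(start, months):
    """Add calendar months, clamping to the last day of a shorter month."""
    index = start.month - 1 + months
    year = start.year + index // 12
    month = index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def paragraph_iv_dates(notice_received):
    """Key dates measured from receipt of the Paragraph IV notice (assumed)."""
    return {
        "suit_deadline": notice_received + timedelta(days=45),
        "stay_expiry_if_sued": add_months(notice_received, 30),
    }


dates = paragraph_iv_dates(date(2025, 1, 15))
print(dates["suit_deadline"])        # 2025-03-01
print(dates["stay_expiry_if_sued"])  # 2027-07-15
```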

DrugPatentWatch provides pharmaceutical patent intelligence, ANDA filing tracking, and Orange Book data for IP teams, portfolio managers, and R&D organizations.